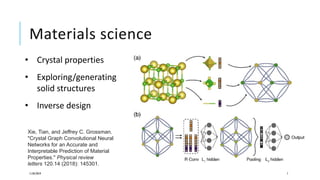

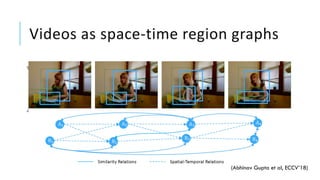

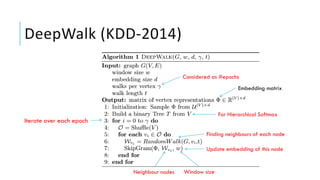

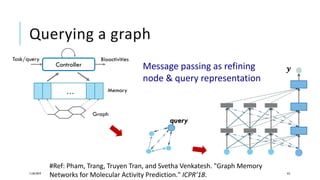

This document covers representation learning on graphs. It first explains why the topic matters: graphs are pervasive across scientific disciplines. It then surveys techniques for graph representation learning, including graph embedding methods such as DeepWalk and Node2Vec, message passing neural networks, and graph generation methods such as variational autoencoders. It concludes with the challenges of graph reasoning, that is, deducing knowledge from a graph in response to a query.
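
As a rough illustration of the embedding methods named above, the following is a minimal sketch of the walk-generation step that DeepWalk-style methods rely on: uniform truncated random walks are sampled from every node, and the resulting node sequences are then treated like sentences for a skip-gram model (not shown here). The function name `random_walks`, its parameters, and the toy graph are assumptions made for this example and are not taken from the slides.

```python
import random


def random_walks(adjacency, walk_length, walks_per_node, seed=0):
    """Sample uniform truncated random walks from every node.

    `adjacency` maps each node to a list of its neighbors (hypothetical
    input format chosen for this sketch).
    """
    rng = random.Random(seed)  # local generator so results are reproducible
    nodes = list(adjacency)
    walks = []
    for _ in range(walks_per_node):
        rng.shuffle(nodes)  # visit start nodes in a fresh random order each pass
        for start in nodes:
            walk = [start]
            while len(walk) < walk_length:
                neighbors = adjacency[walk[-1]]
                if not neighbors:  # dead end: stop this walk early
                    break
                walk.append(rng.choice(neighbors))  # uniform next step
            walks.append(walk)
    return walks


# Toy undirected graph, written as symmetric adjacency lists.
graph = {
    "a": ["b", "c"],
    "b": ["a", "c"],
    "c": ["a", "b", "d"],
    "d": ["c"],
}

walks = random_walks(graph, walk_length=5, walks_per_node=2)
for walk in walks:
    print(" -> ".join(walk))
```

Node2Vec differs mainly in how the next step is chosen: it biases the walk with return and in-out parameters instead of sampling neighbors uniformly, so the `rng.choice` line is where such a variant would change.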