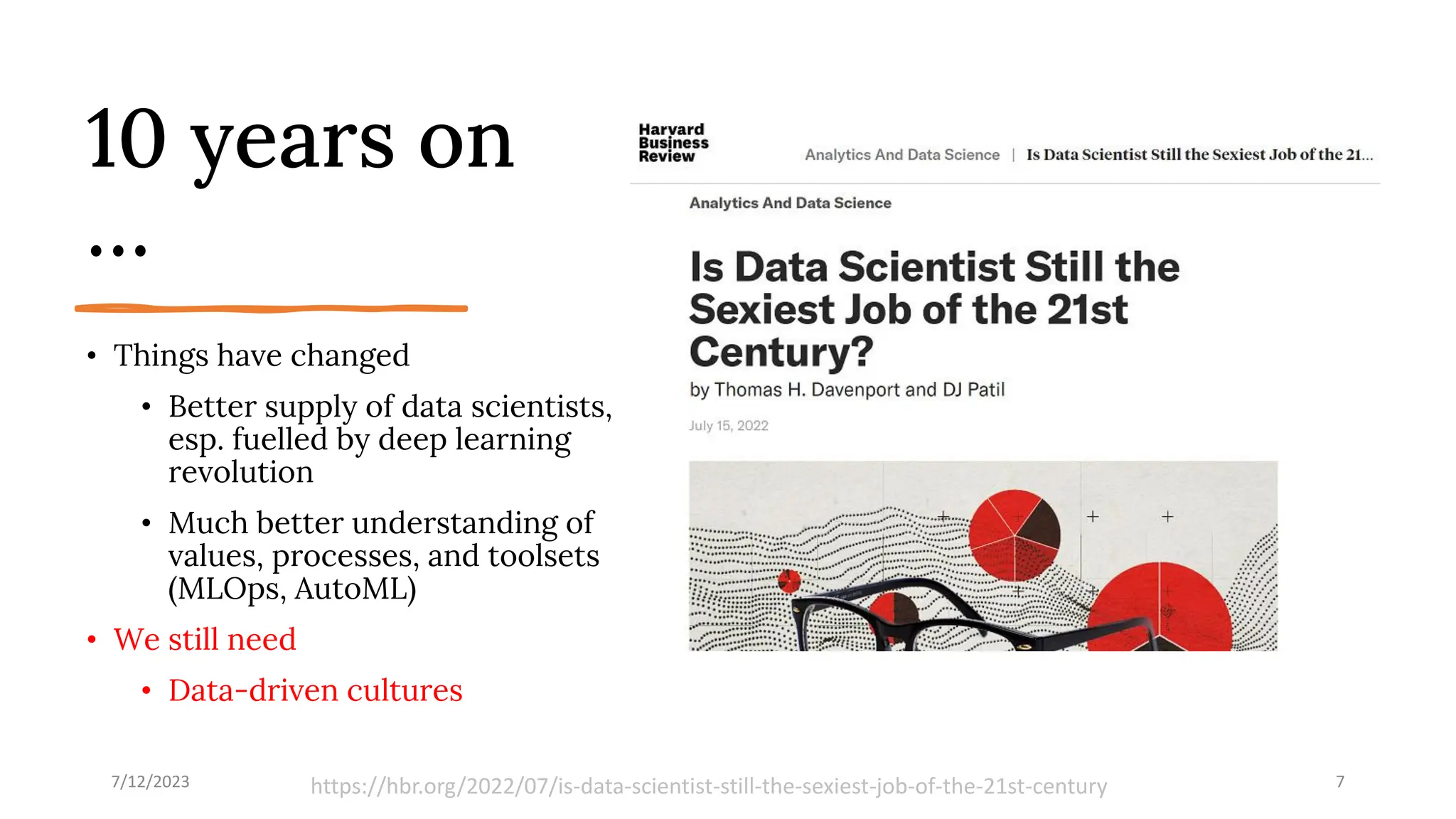

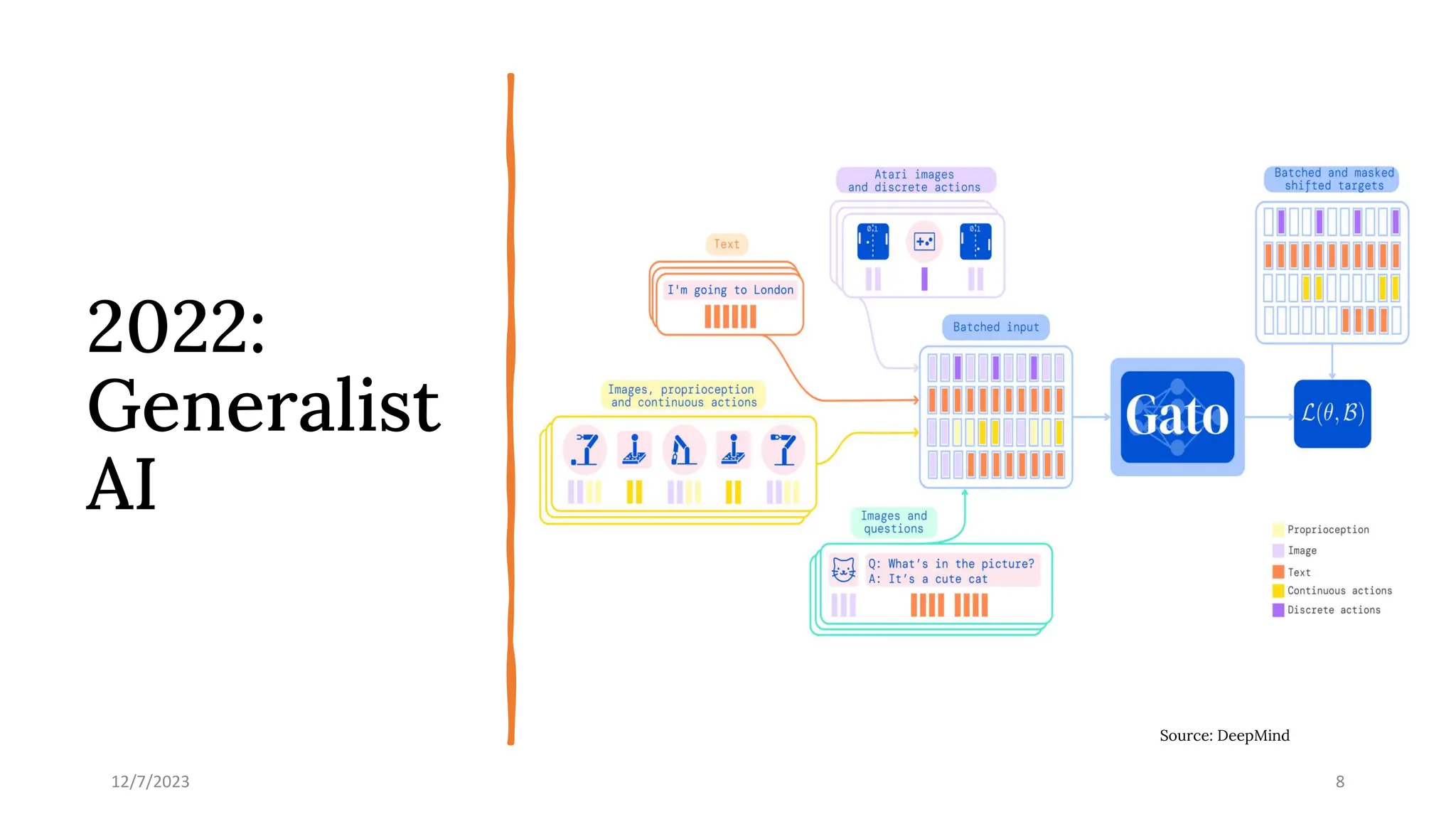

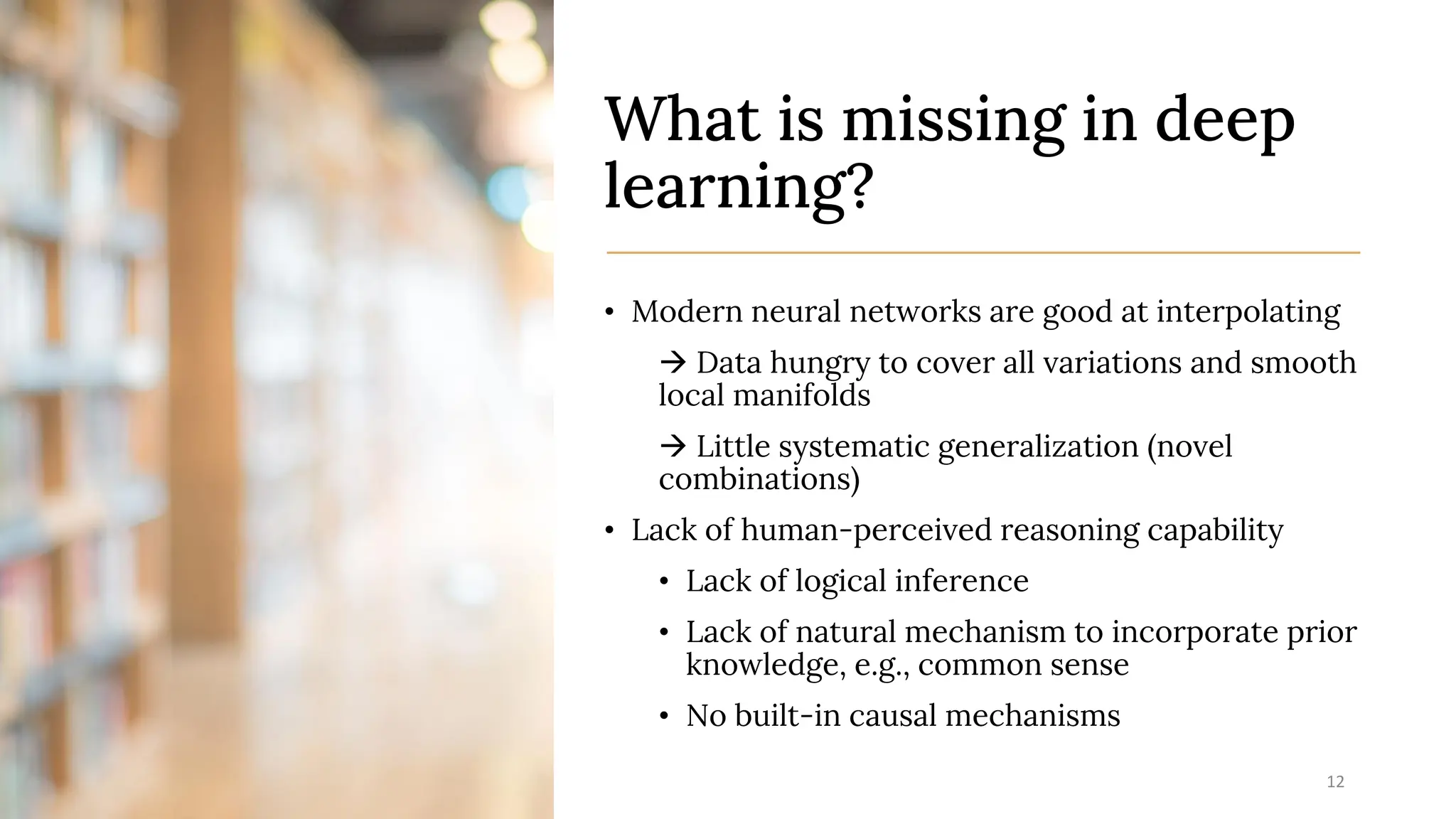

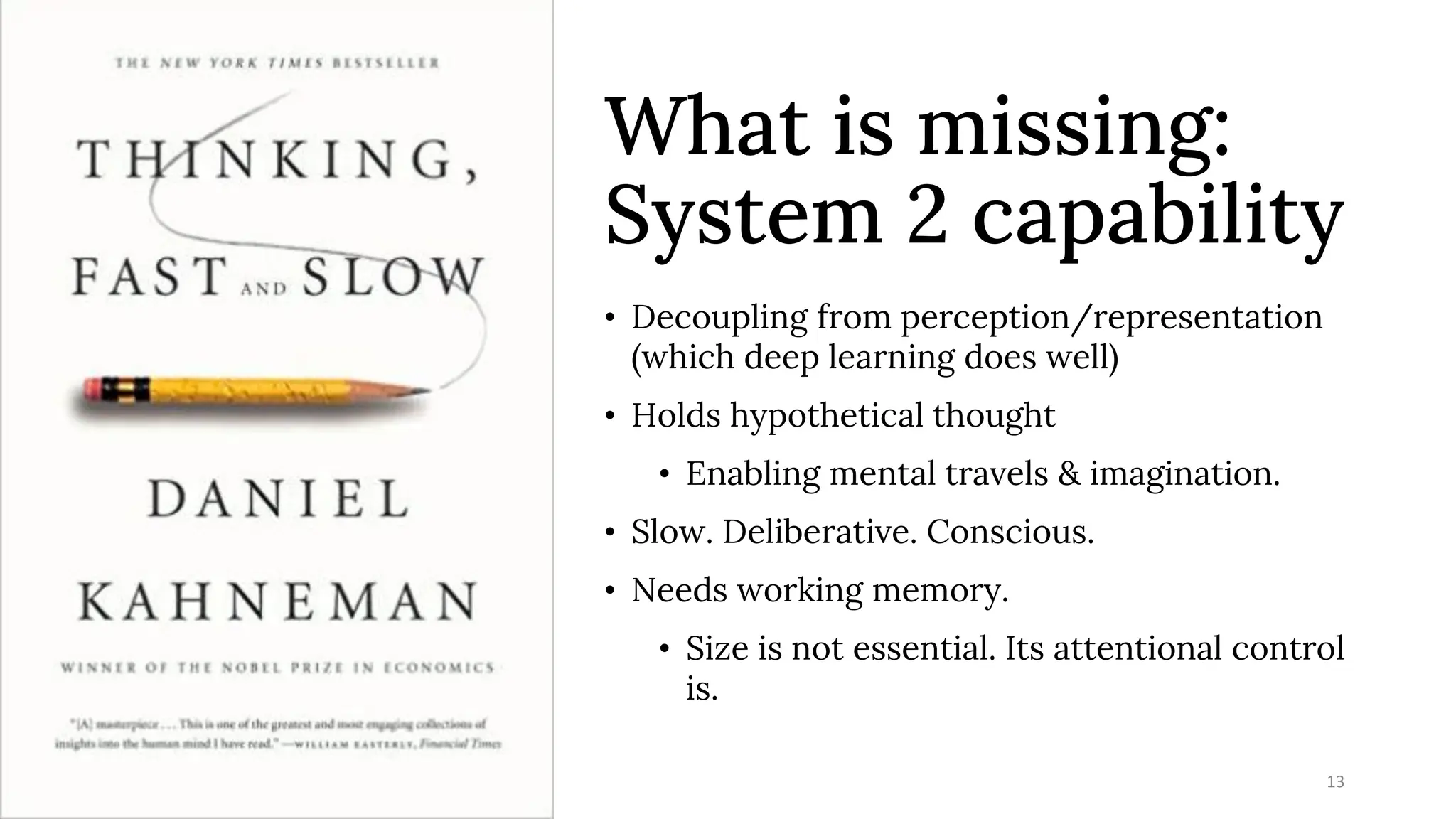

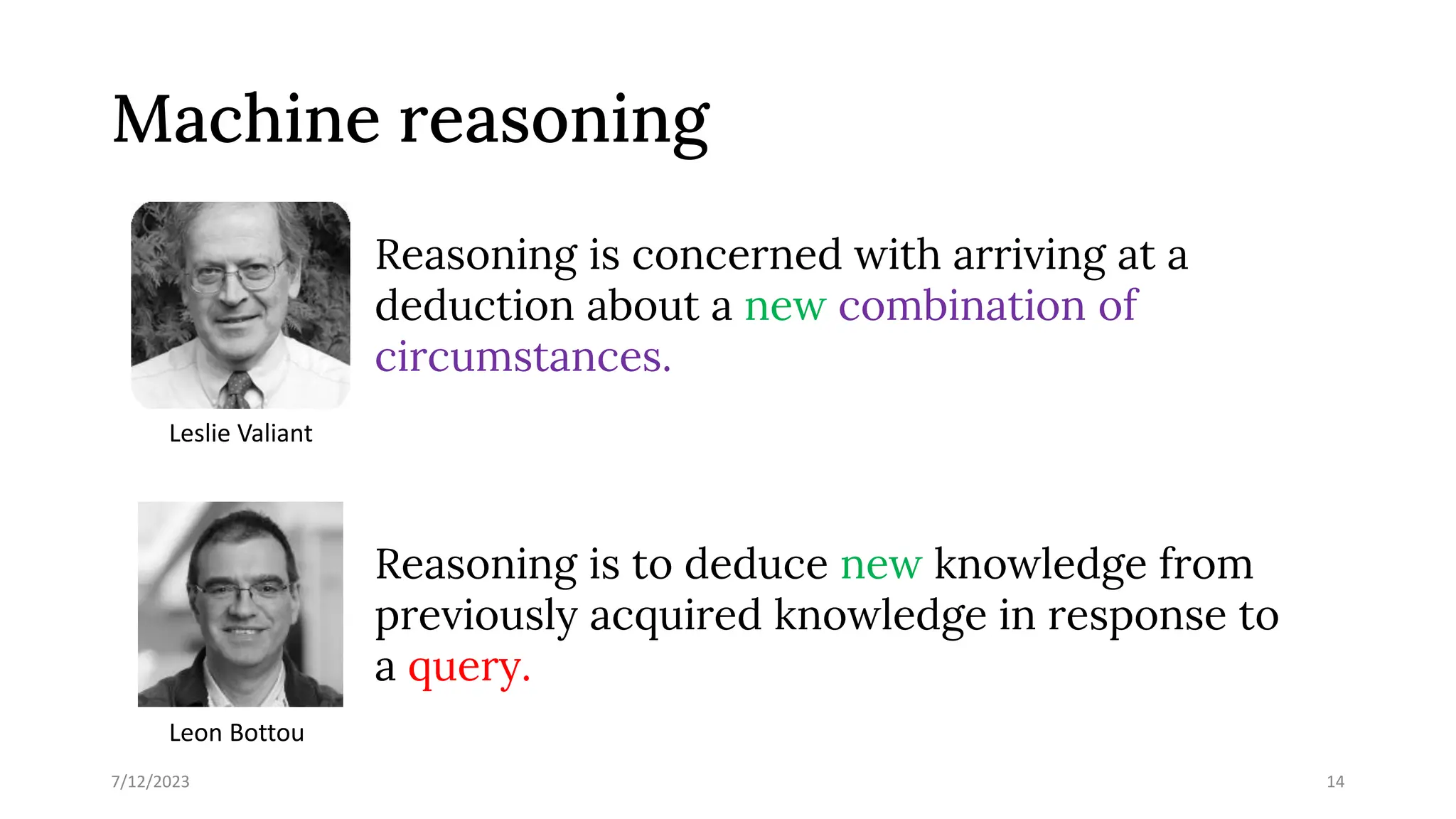

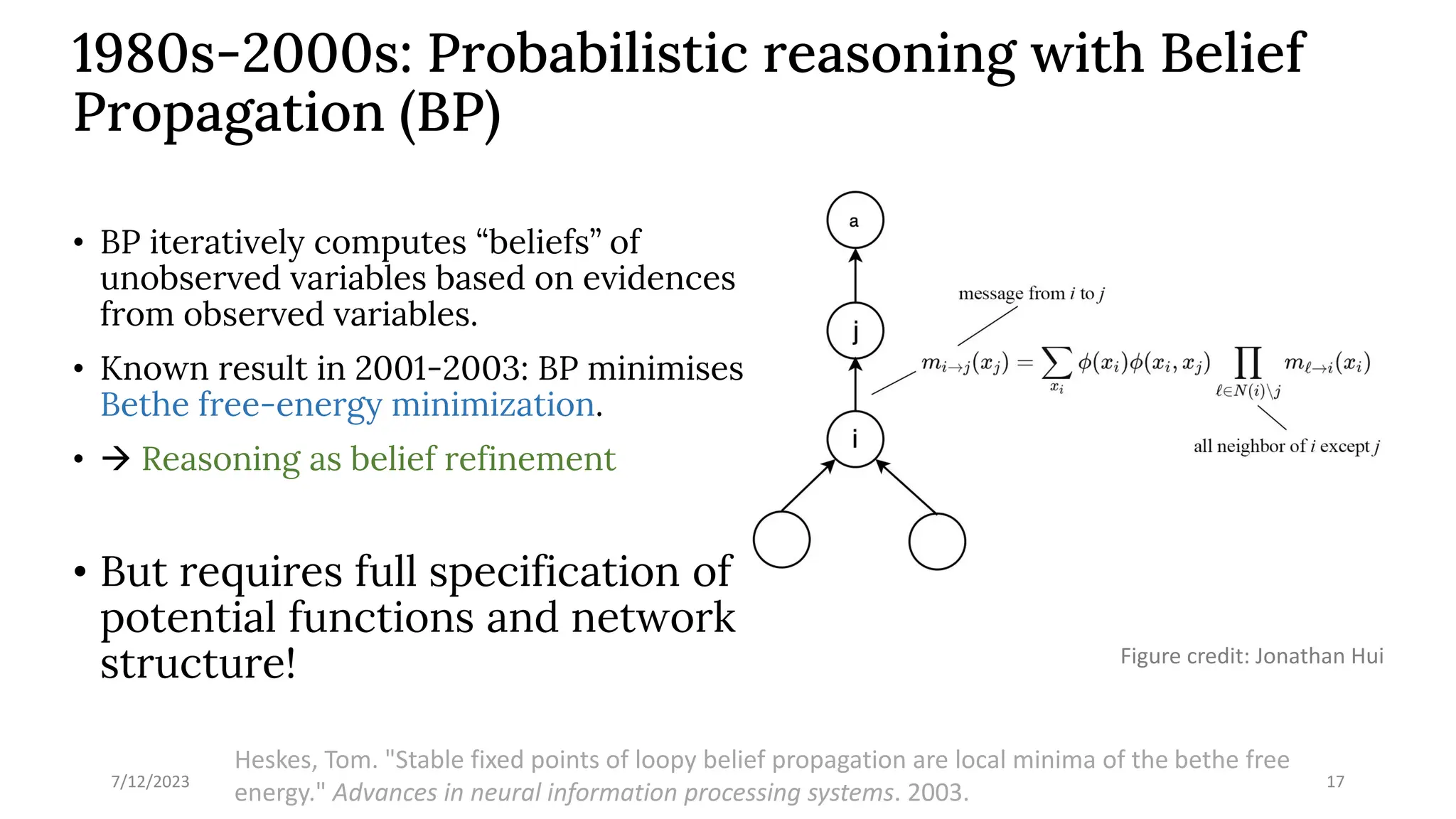

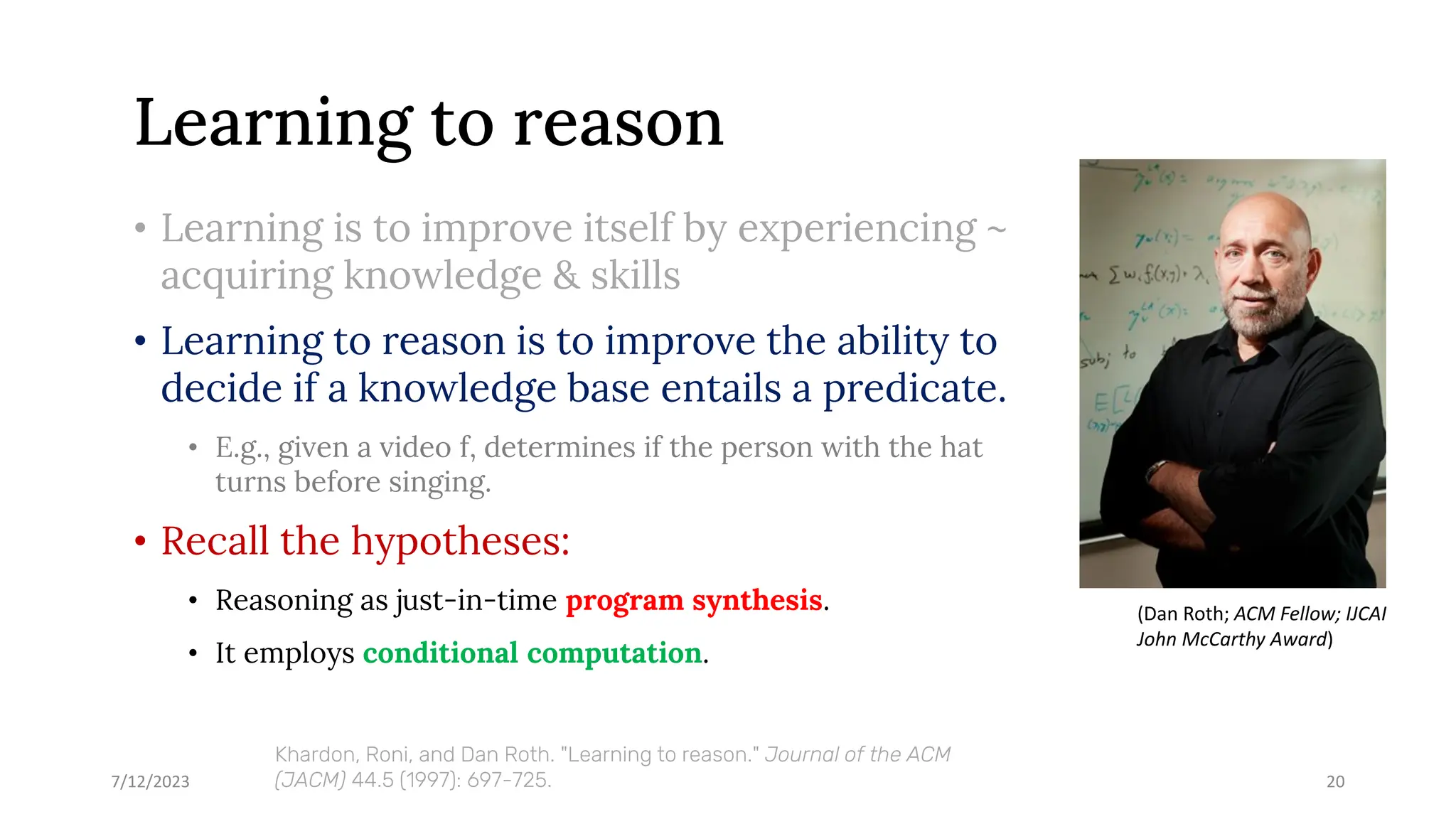

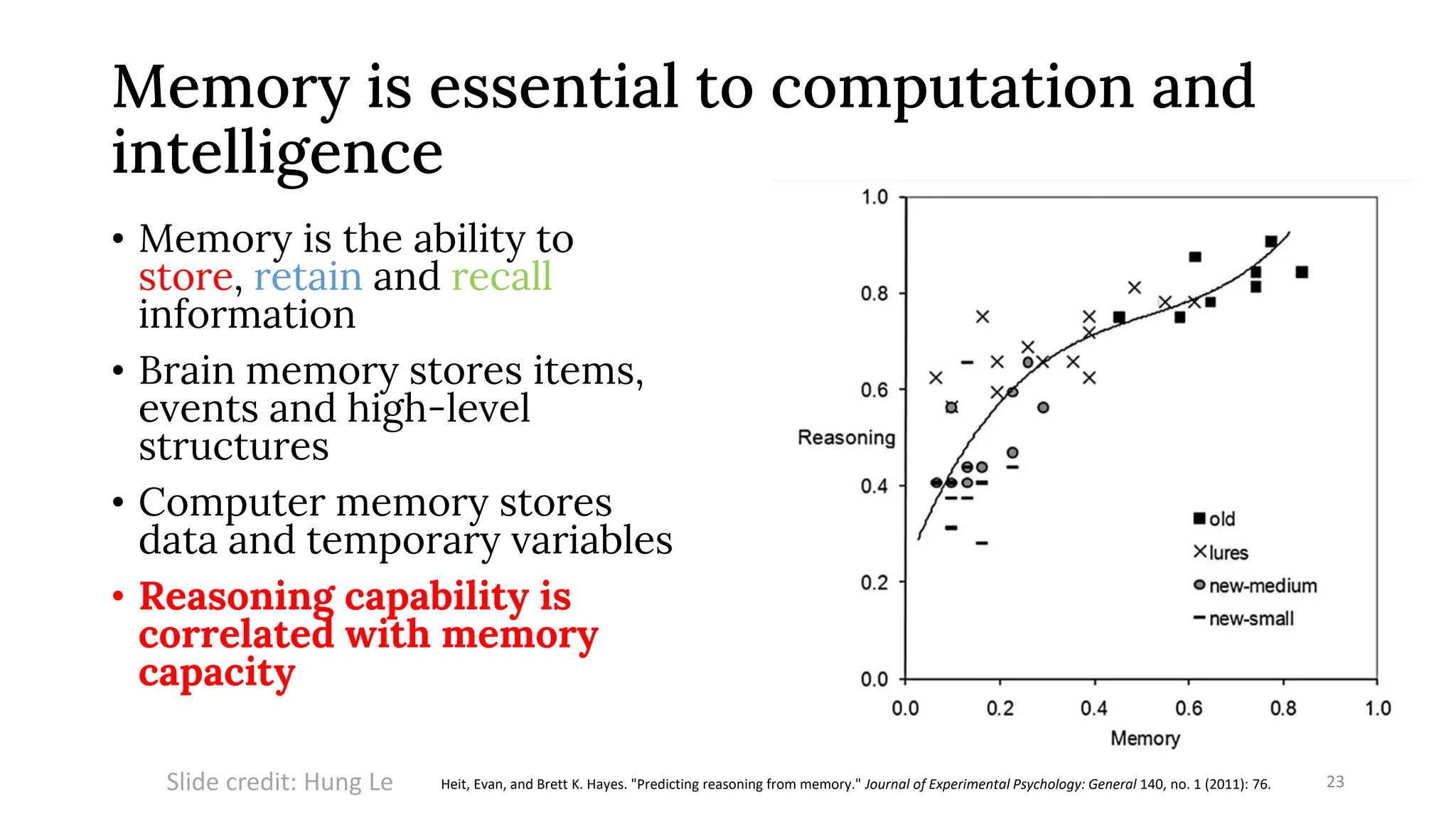

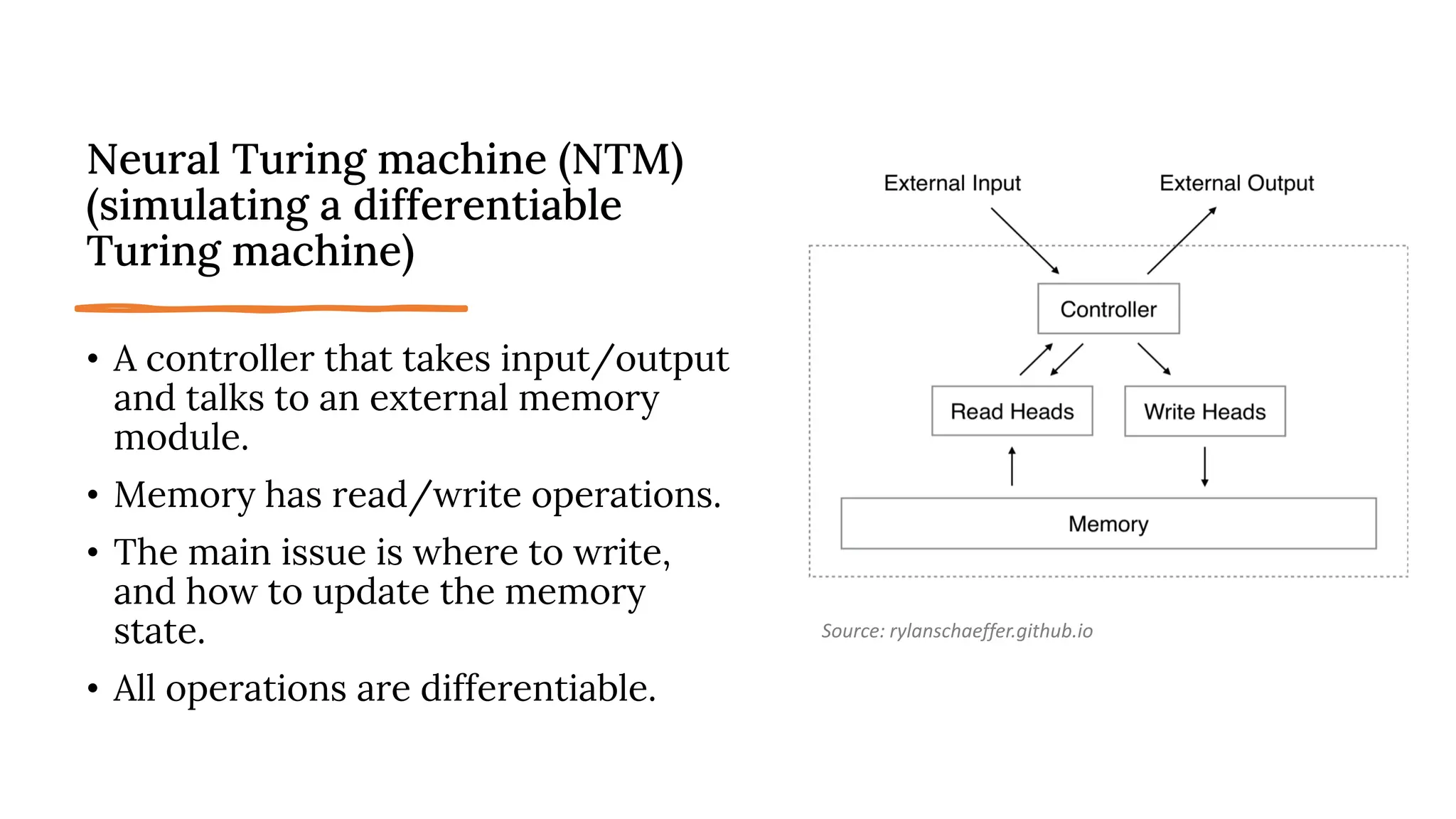

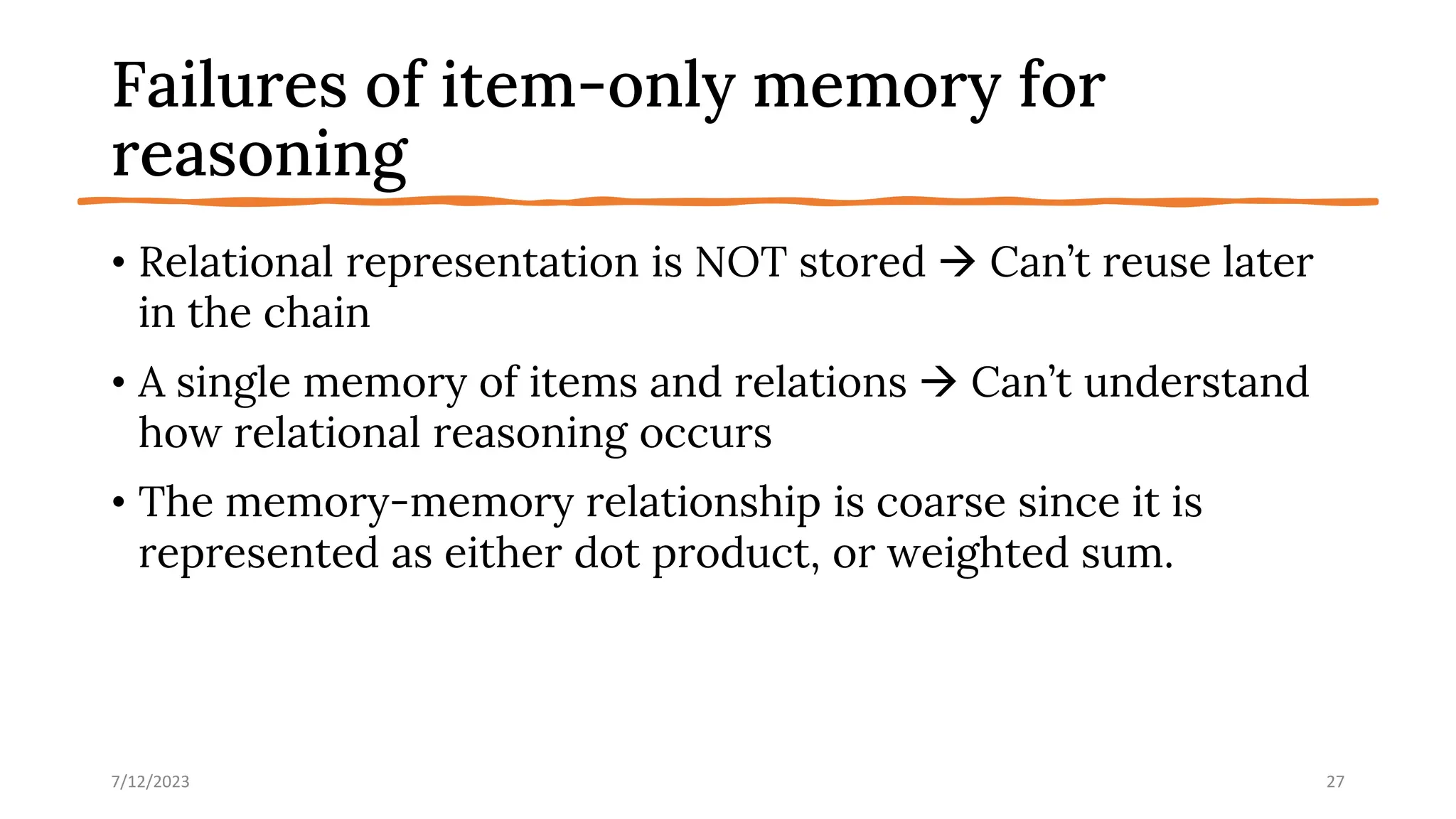

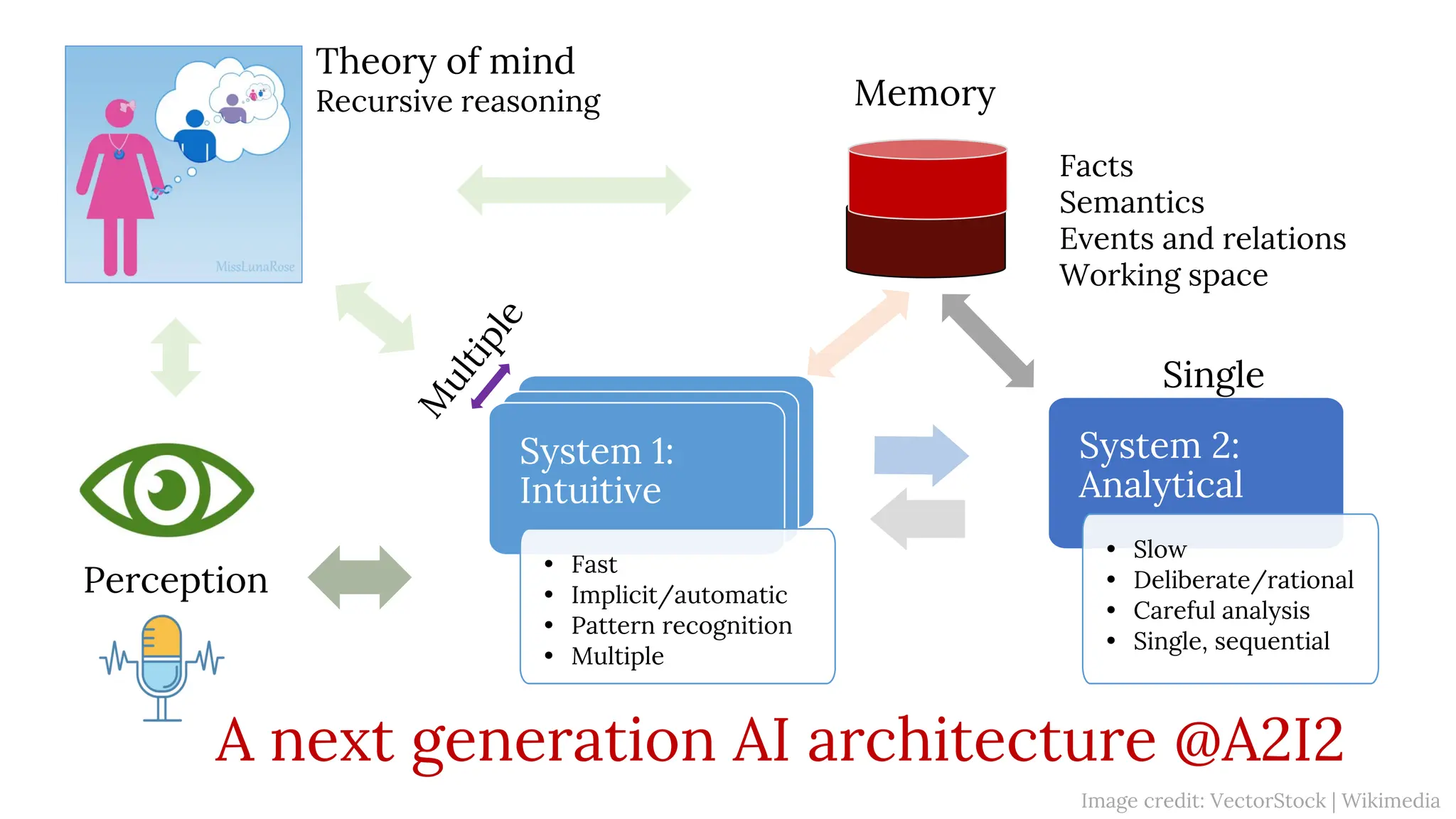

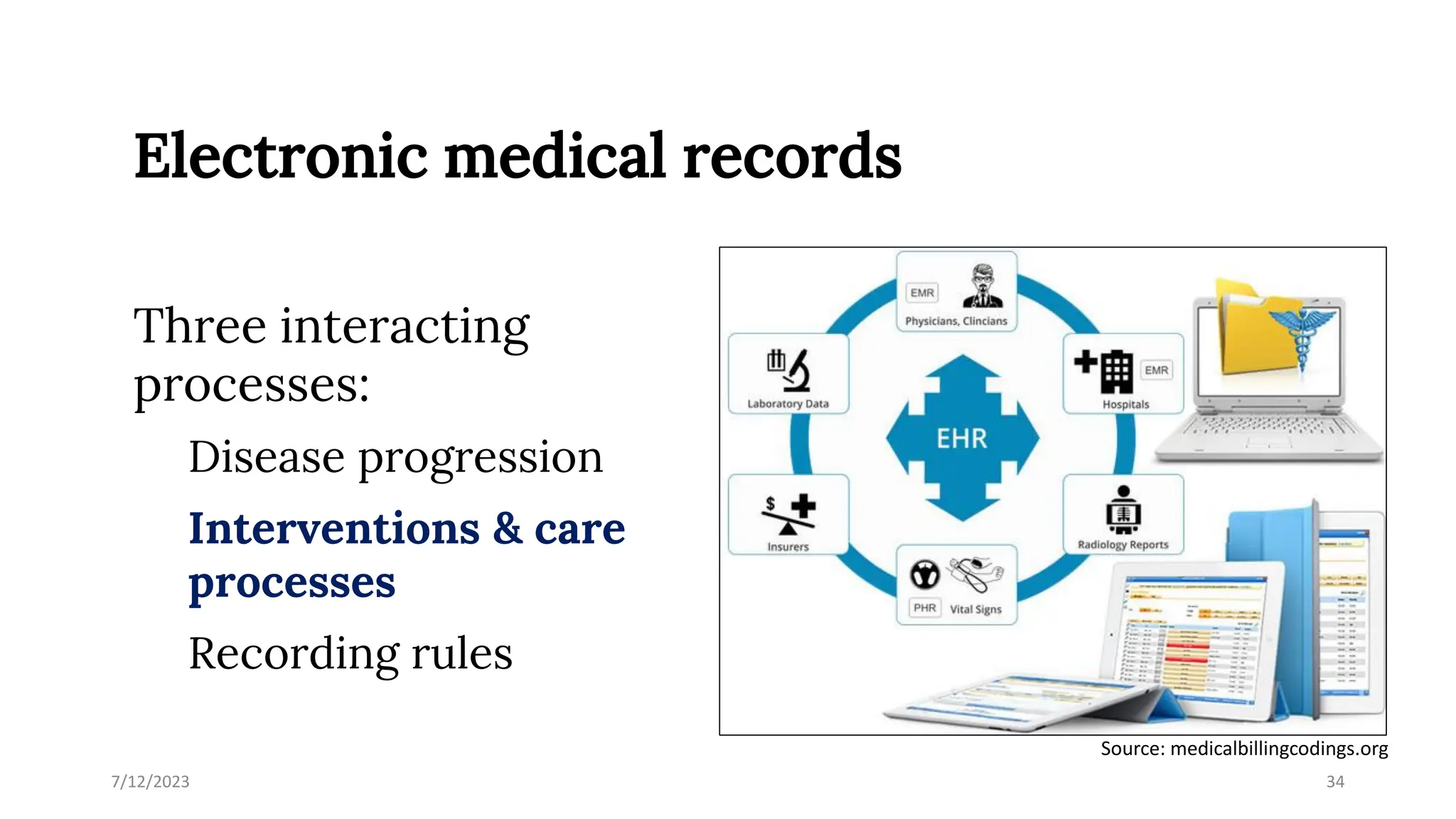

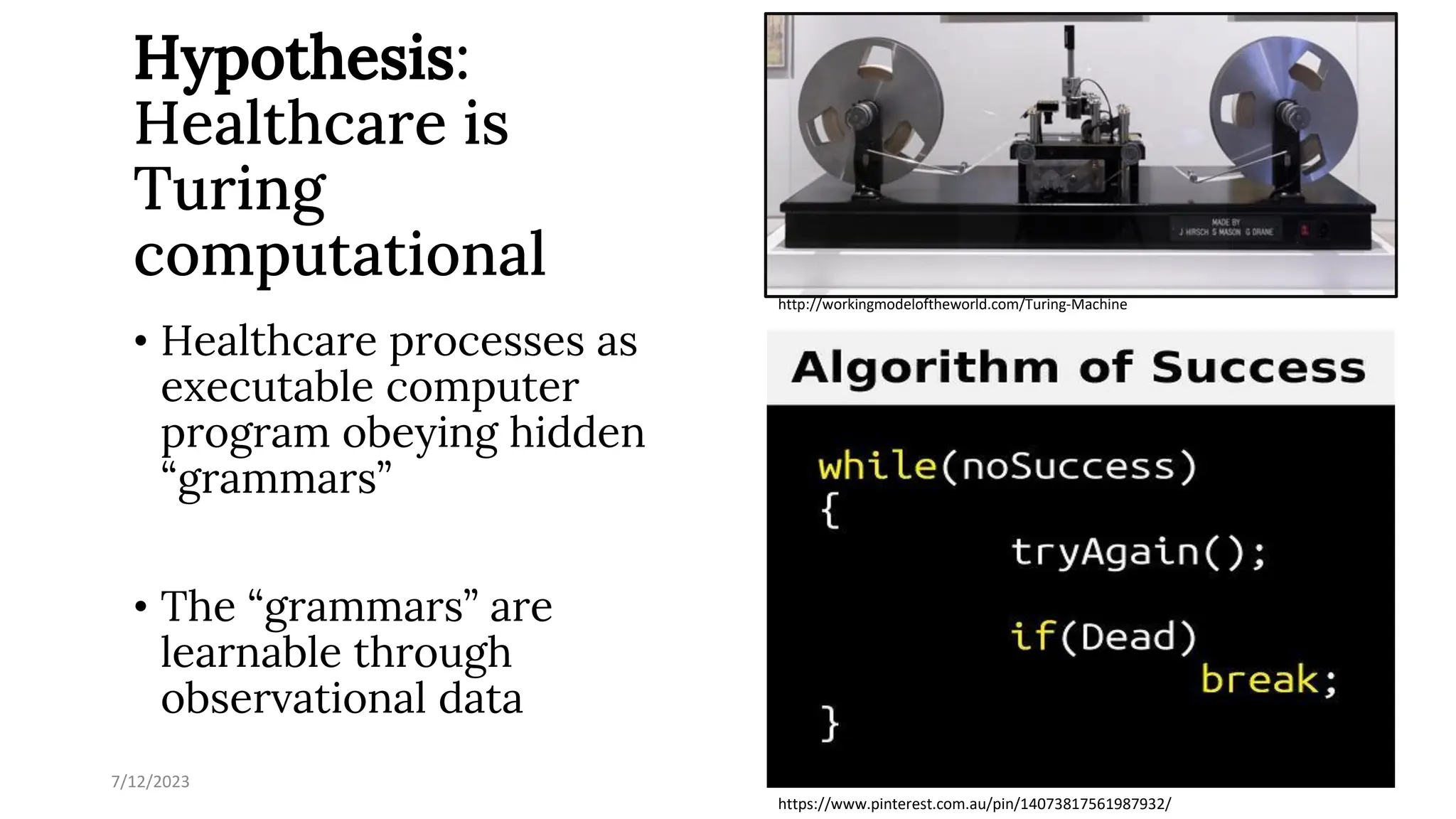

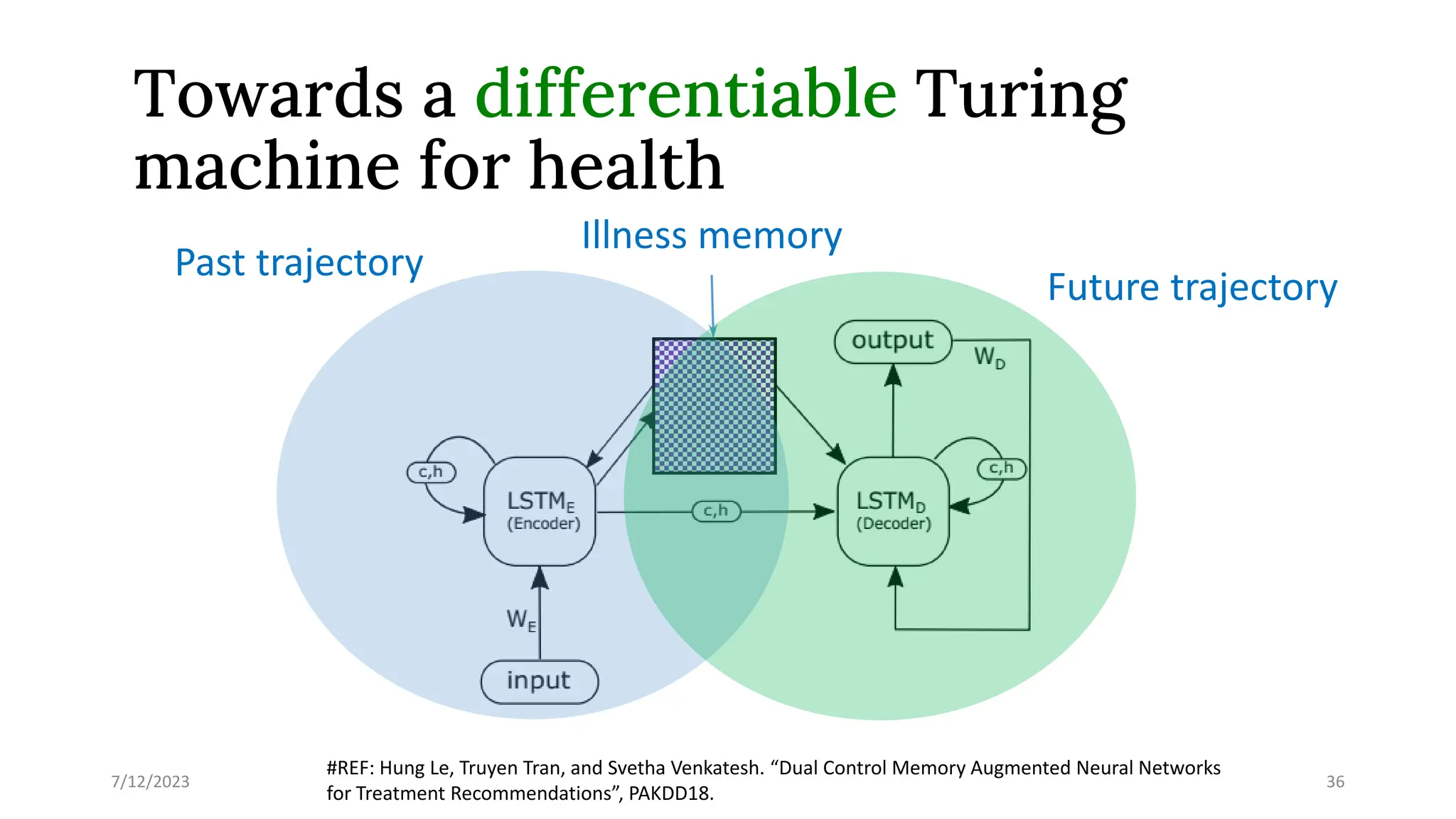

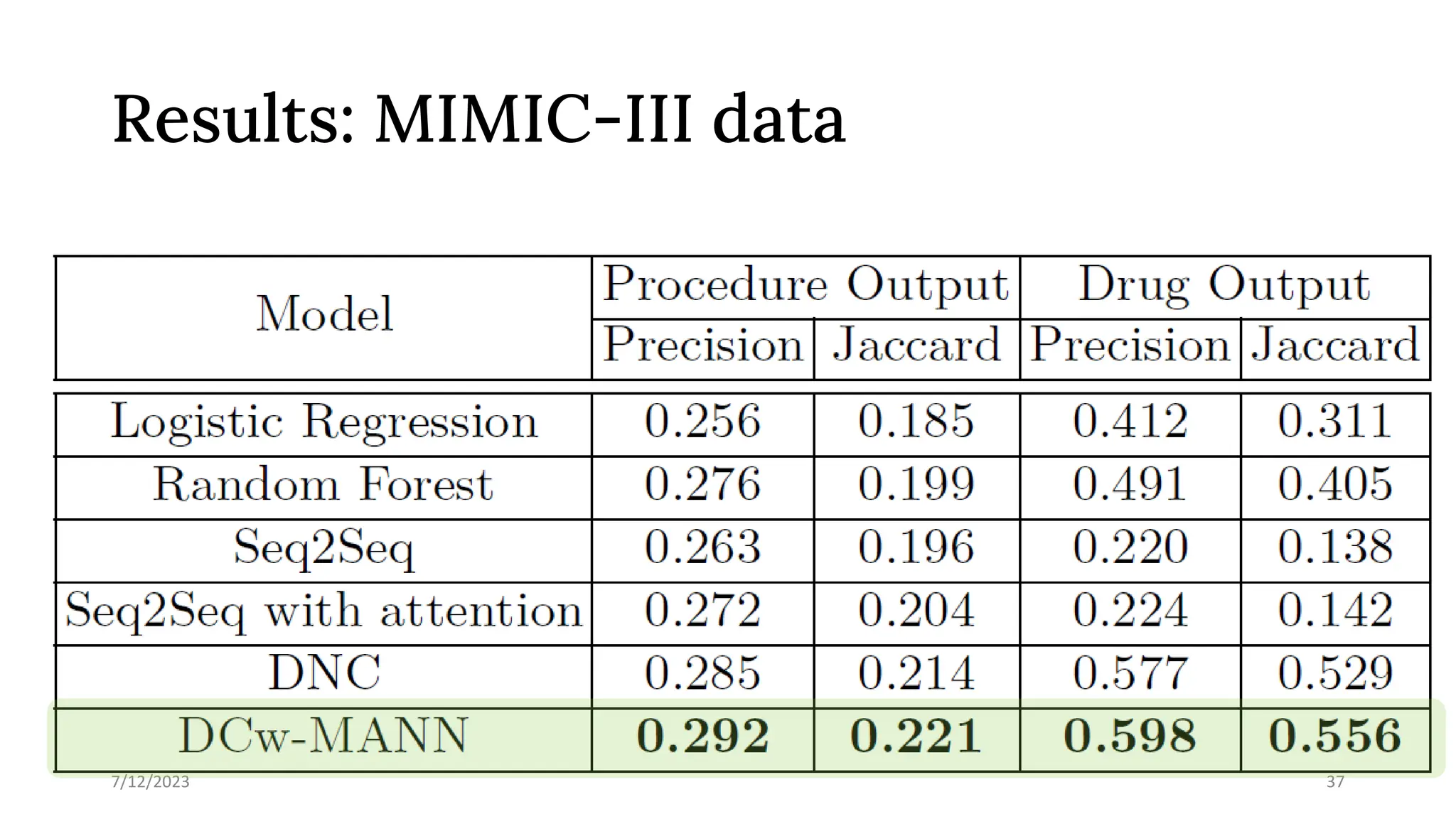

The document discusses advancements in machine learning and AI, highlighting the limitations of current deep learning methods, particularly in reasoning capabilities and generalization. It outlines various approaches to enhancing machine reasoning, including implicit and explicit program synthesis, and emphasizes the importance of integrating memory processes with reasoning. The document also explores applications in healthcare and drug discovery, proposing a Turing machine model for healthcare processes.

![Slide 9 (7/12/2023). Cartoonist Zach Weinersmith, Science: Abridged Beyond the Point of Usefulness, 2017. Deep learning: […] Now DL works on everything, except for small data, shifted data, noisy data, artificially twisted data, deep stuffs, exact stuffs, abstract stuffs, causal stuffs, symbolic stuffs, thinking stuffs, and stuffs that no one knows how they work like consciousness.](https://image.slidesharecdn.com/deep-analytics-v2-231207055151-d4f94efd/75/Deep-analytics-via-learning-to-reason-9-2048.jpg)

![Rodney Brooks’ prediction (1/2018): [In 2023, there is an] emergence of the generally agreed upon "next big thing" in AI beyond deep learning. As of 11/2022, there is no news yet! Source: http://rodneybrooks.com/my-dated-predictions/](https://image.slidesharecdn.com/deep-analytics-v2-231207055151-d4f94efd/75/Deep-analytics-via-learning-to-reason-10-2048.jpg)

![Slide 18. Why (not) neural reasoning? Reasoning is not necessarily achieved by making logical inferences. There is a continuity between [algebraically rich inference] and [connecting together trainable learning systems]. Central to reasoning is composition rules to guide the combinations of modules to address new tasks. → Neural networks are a plausible candidate! “When we observe a visual scene, when we hear a complex sentence, we are able to explain in formal terms the relation of the objects in the scene, or the precise meaning of the sentence components. However, there is no evidence that such a formal analysis necessarily takes place: we see a scene, we hear a sentence, and we just know what they mean. This suggests the existence of a middle layer, already a form of reasoning, but not yet formal or logical.” Bottou, Léon. "From machine learning to machine reasoning." Machine learning 94.2 (2014): 133-149.](https://image.slidesharecdn.com/deep-analytics-v2-231207055151-d4f94efd/75/Deep-analytics-via-learning-to-reason-18-2048.jpg)

![Slide 22. Learning to remember: “[…] one cannot hope to understand reasoning without understanding the memory processes […]” (Thompson and Feeney, 2014)](https://image.slidesharecdn.com/deep-analytics-v2-231207055151-d4f94efd/75/Deep-analytics-via-learning-to-reason-22-2048.jpg)

![Slide 38. Noisy, dynamic data: Problem. Diagram labels: Unified multimodal representation; [Events/features streams]; [Sensory streams]; Cross-channel deliberative reasoning; [Downstream tasks]: Anomaly detection, Events detection, Prediction/forecast, Generation; Dist. events assoc.; Decoder; [Memory]; ?](https://image.slidesharecdn.com/deep-analytics-v2-231207055151-d4f94efd/75/Deep-analytics-via-learning-to-reason-38-2048.jpg)

![Slide 39. Noisy, dynamic data: System. Diagram labels: Unified multimodal representation; [Events/features streams]; [Sensory streams]; Cross-channel deliberative reasoning; [Downstream tasks]: Anomaly detection, Events detection, Prediction/forecast, Generation; Dist. events assoc.; Decoder; [Memory]](https://image.slidesharecdn.com/deep-analytics-v2-231207055151-d4f94efd/75/Deep-analytics-via-learning-to-reason-39-2048.jpg)