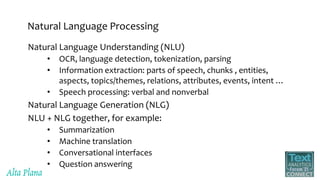

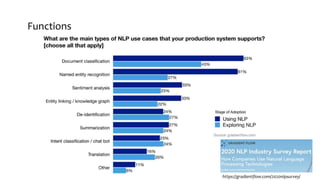

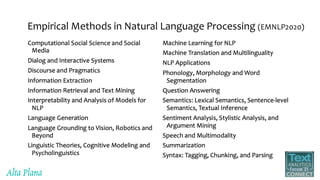

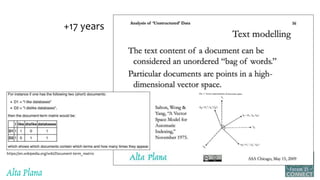

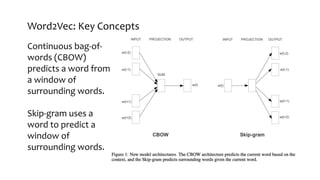

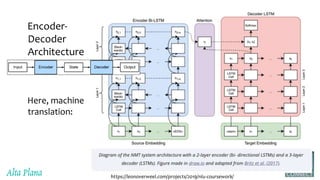

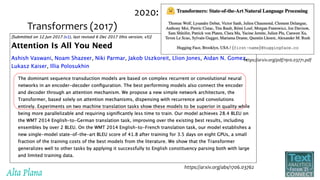

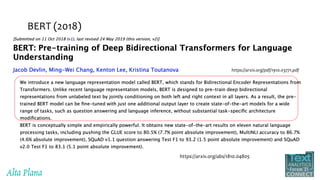

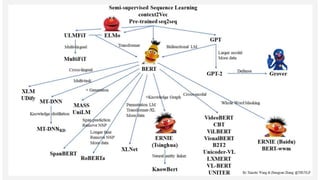

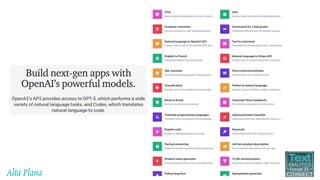

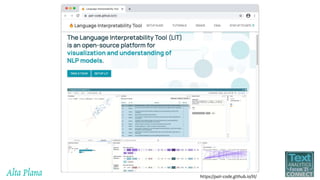

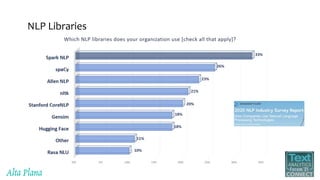

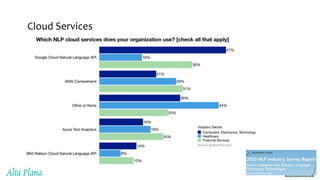

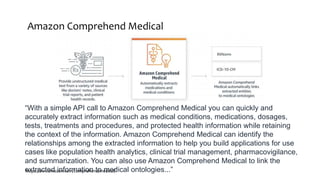

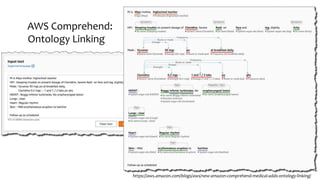

This document discusses recent advances in natural language processing (NLP), focusing on natural language understanding (NLU) and natural language generation (NLG). It covers techniques such as static word embeddings (for example, word2vec) and contextual language models such as BERT. It also covers applications including machine translation, summarization, and conversational interfaces. Finally, it references NLP libraries and cloud services and their role in extracting meaningful information from data.