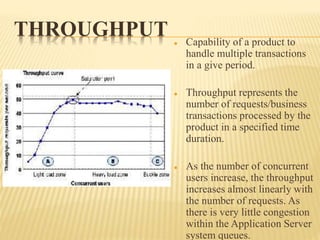

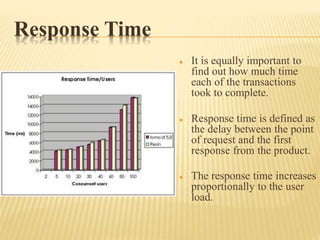

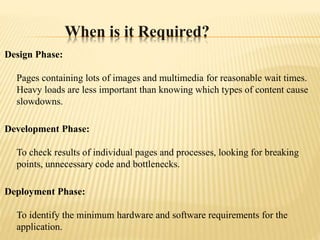

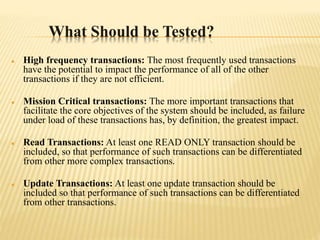

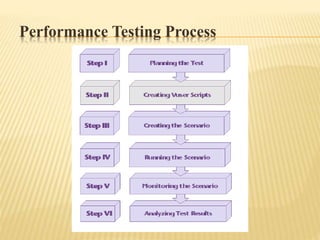

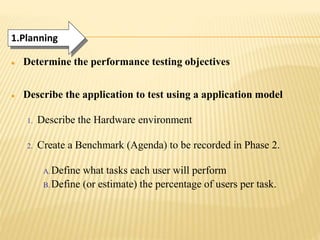

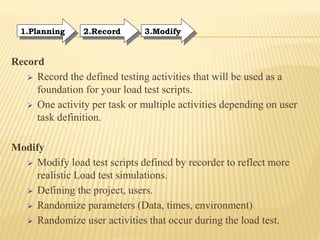

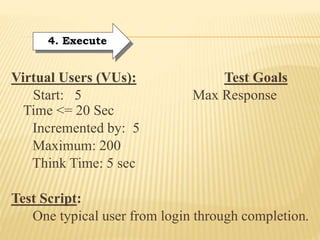

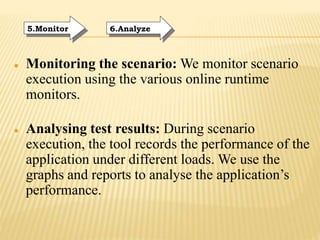

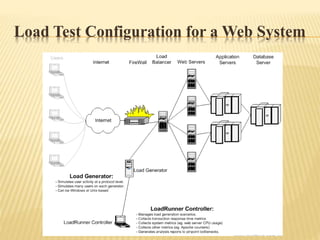

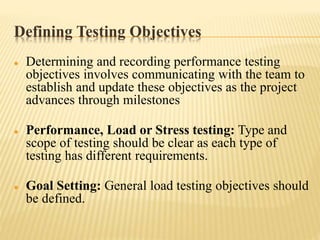

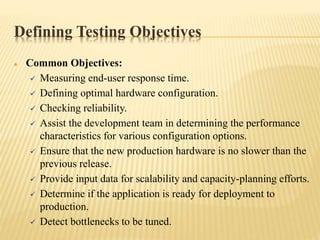

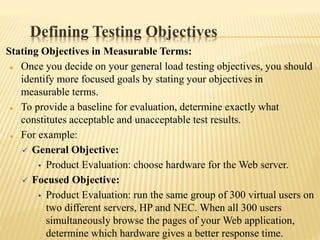

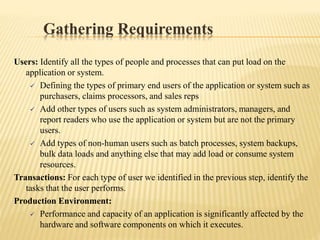

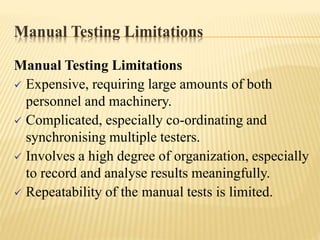

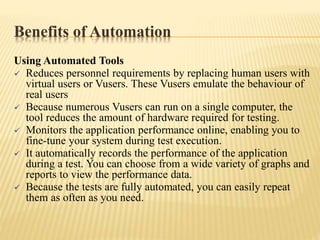

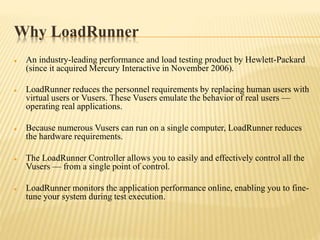

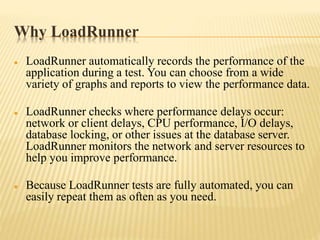

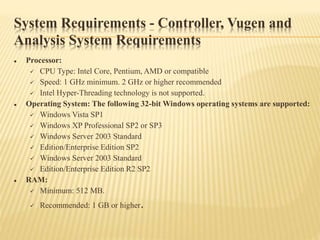

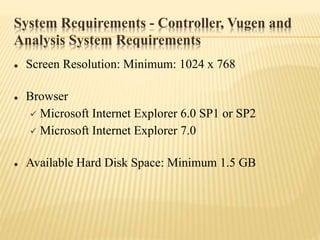

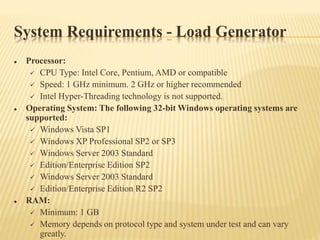

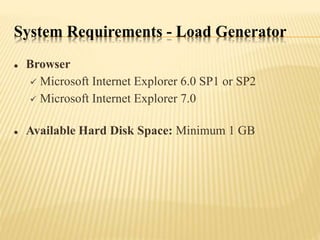

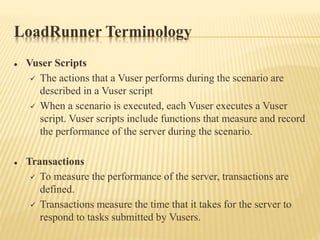

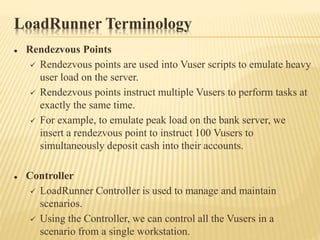

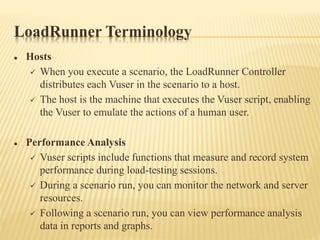

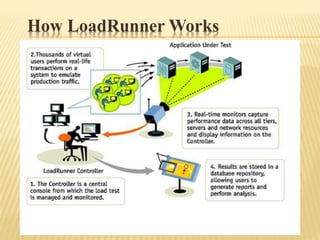

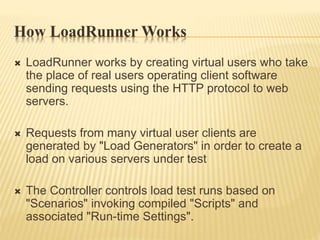

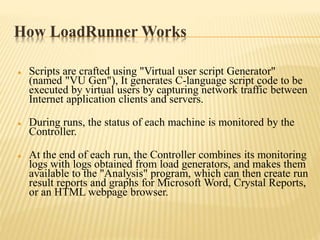

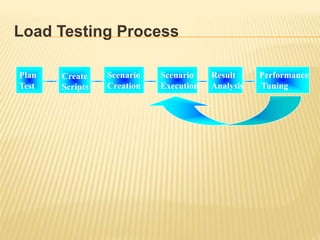

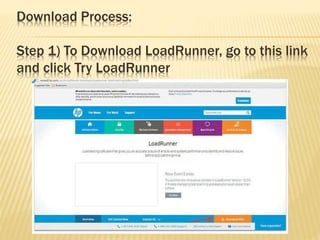

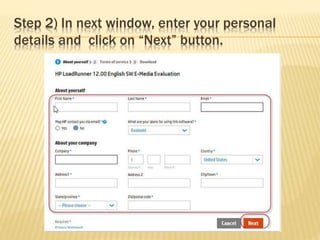

This document gives an overview of HP LoadRunner. It covers the fundamentals of performance and load testing, the differences between performance, load, and stress testing, and why performance testing matters in software development. It explains key concepts such as throughput, response time, and tuning, and outlines the performance testing process, its objectives, and its requirements. It also details the technical specifications and operational guidelines for using LoadRunner effectively.