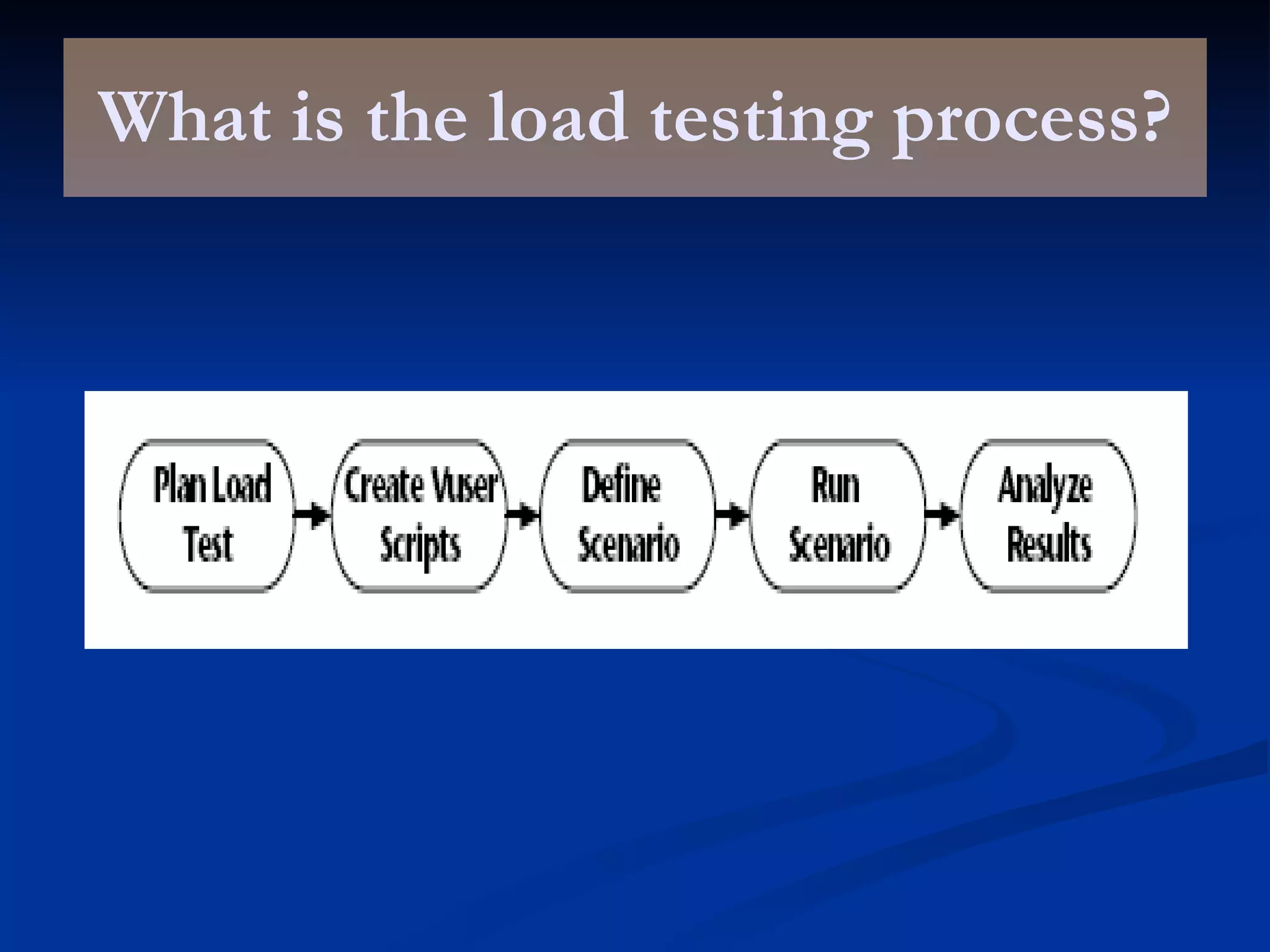

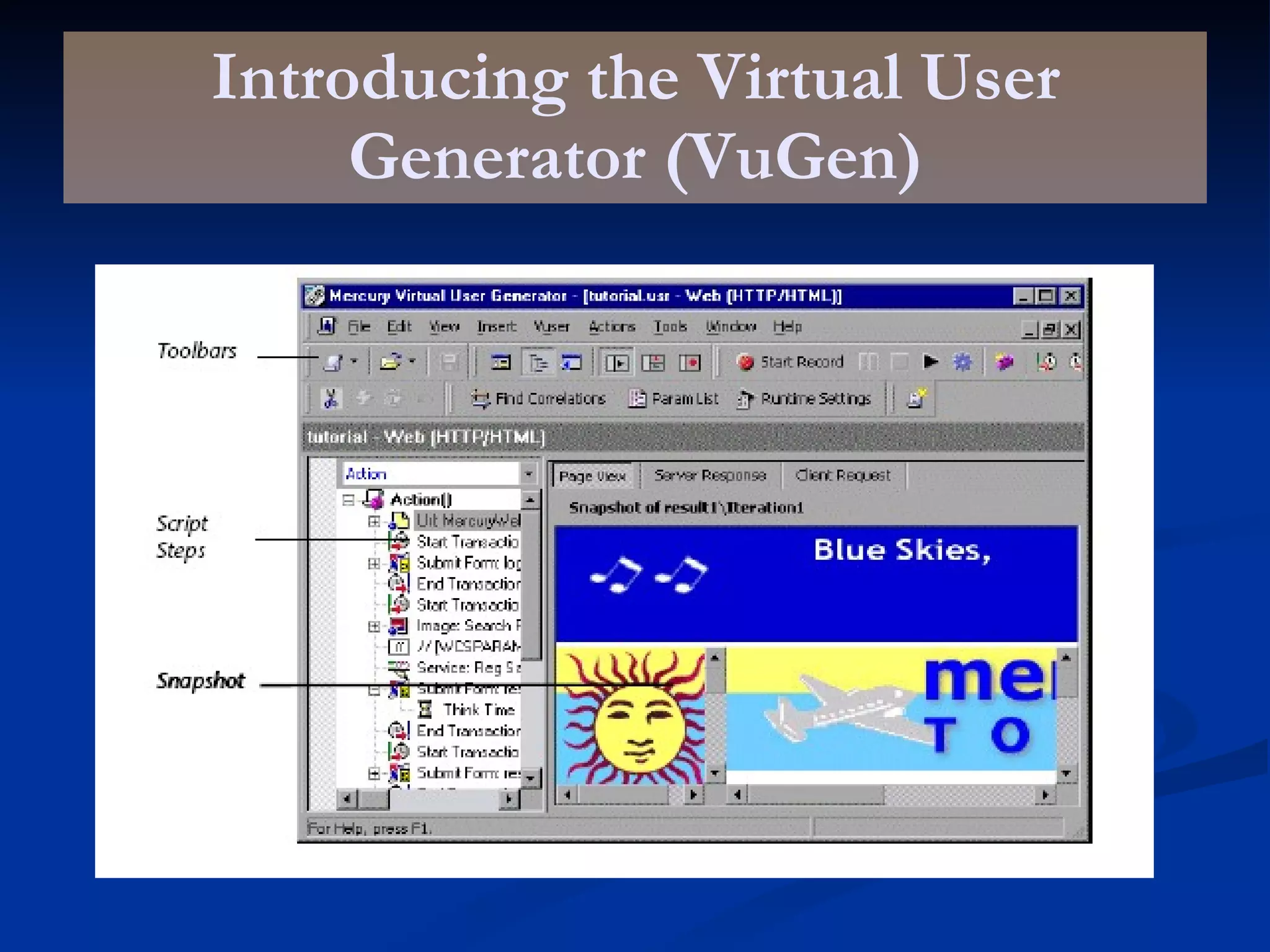

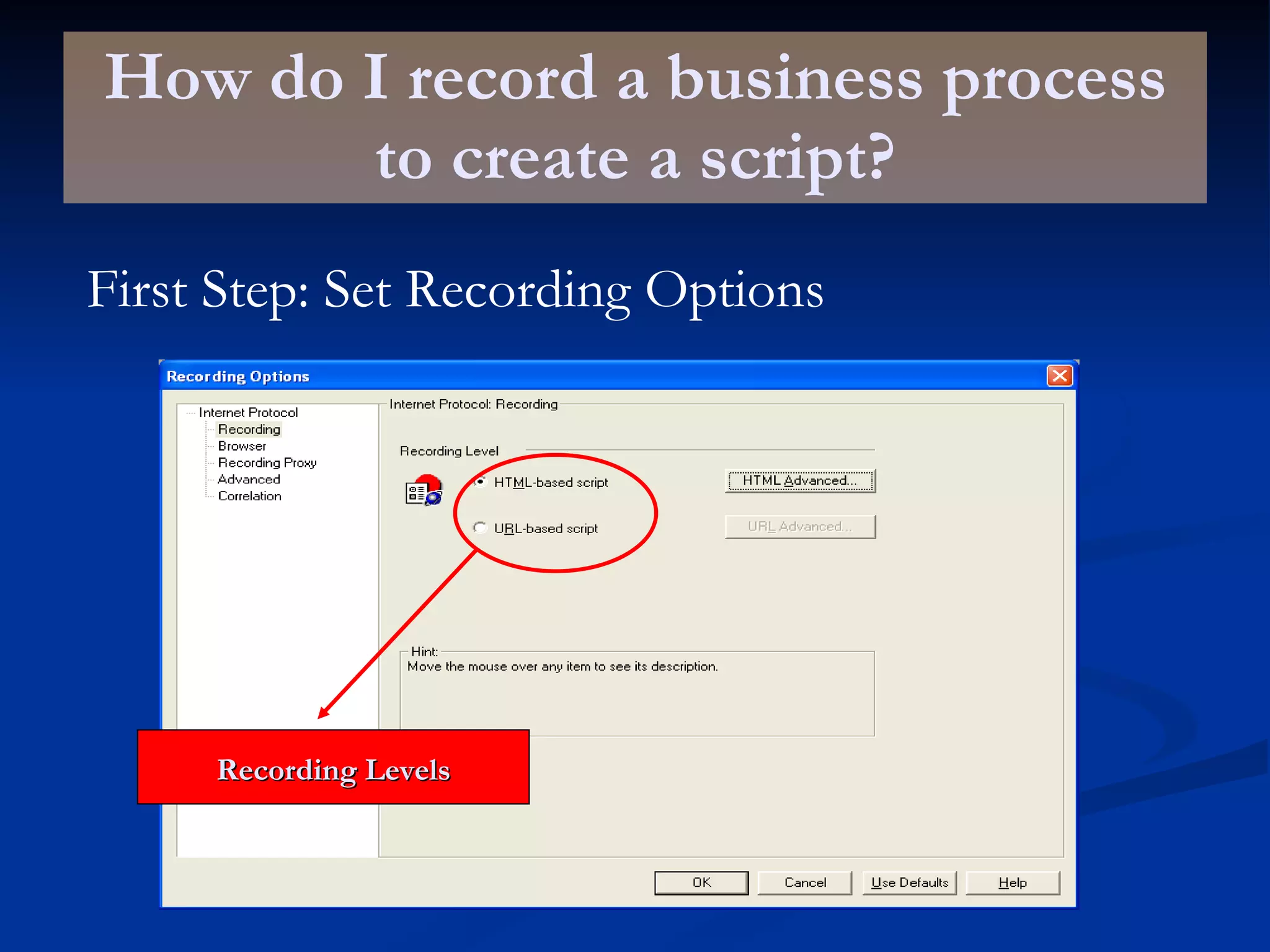

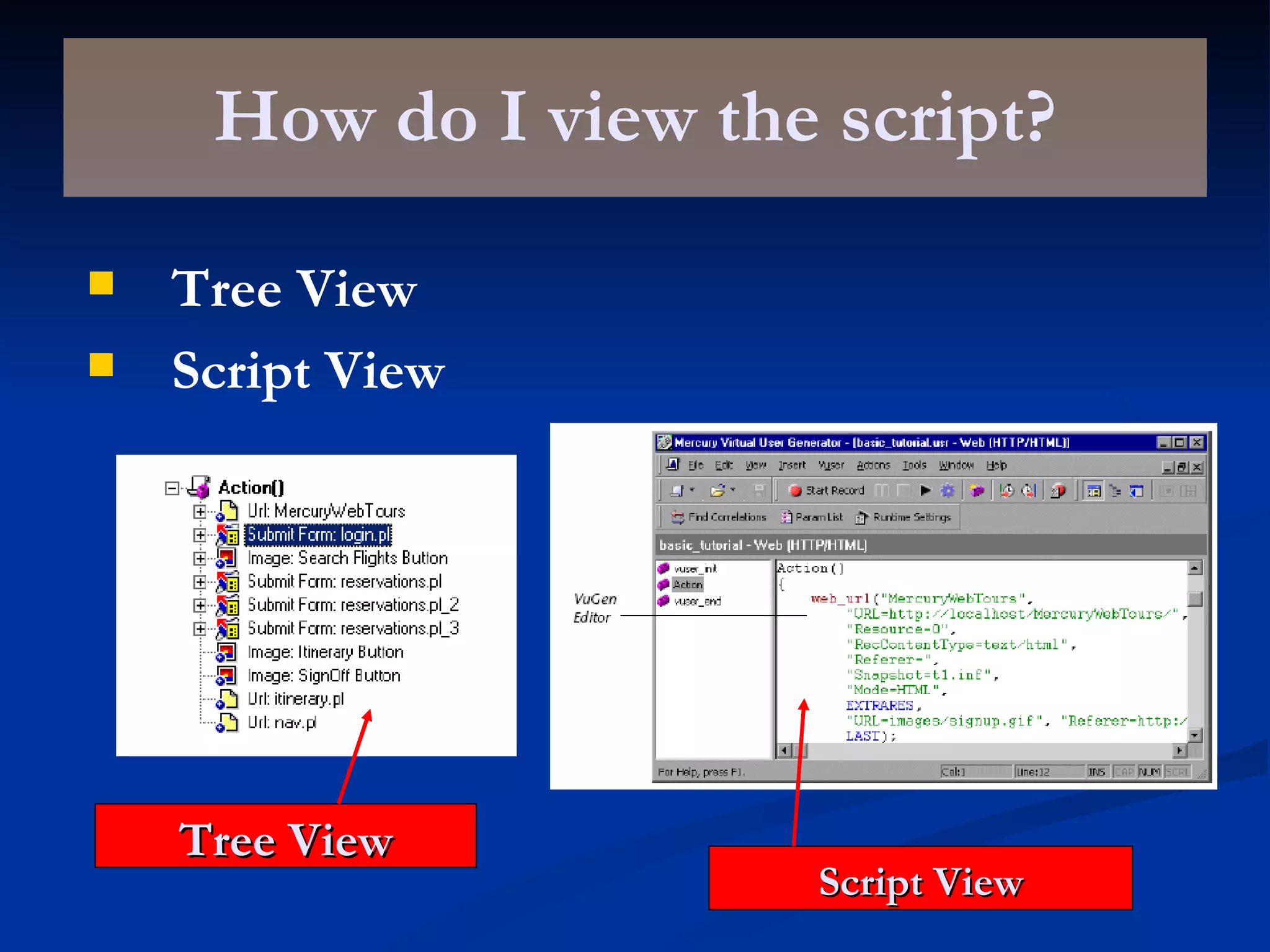

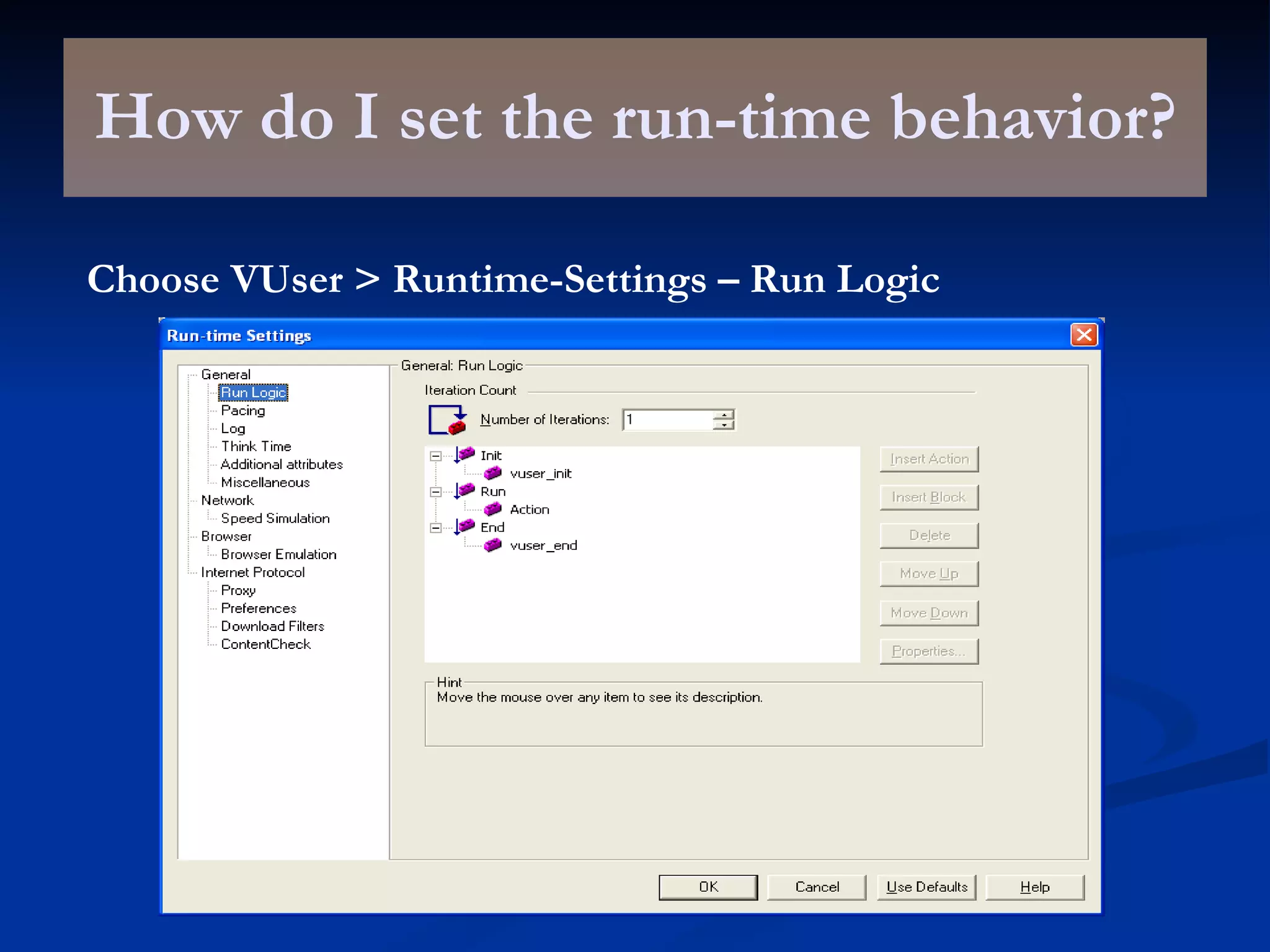

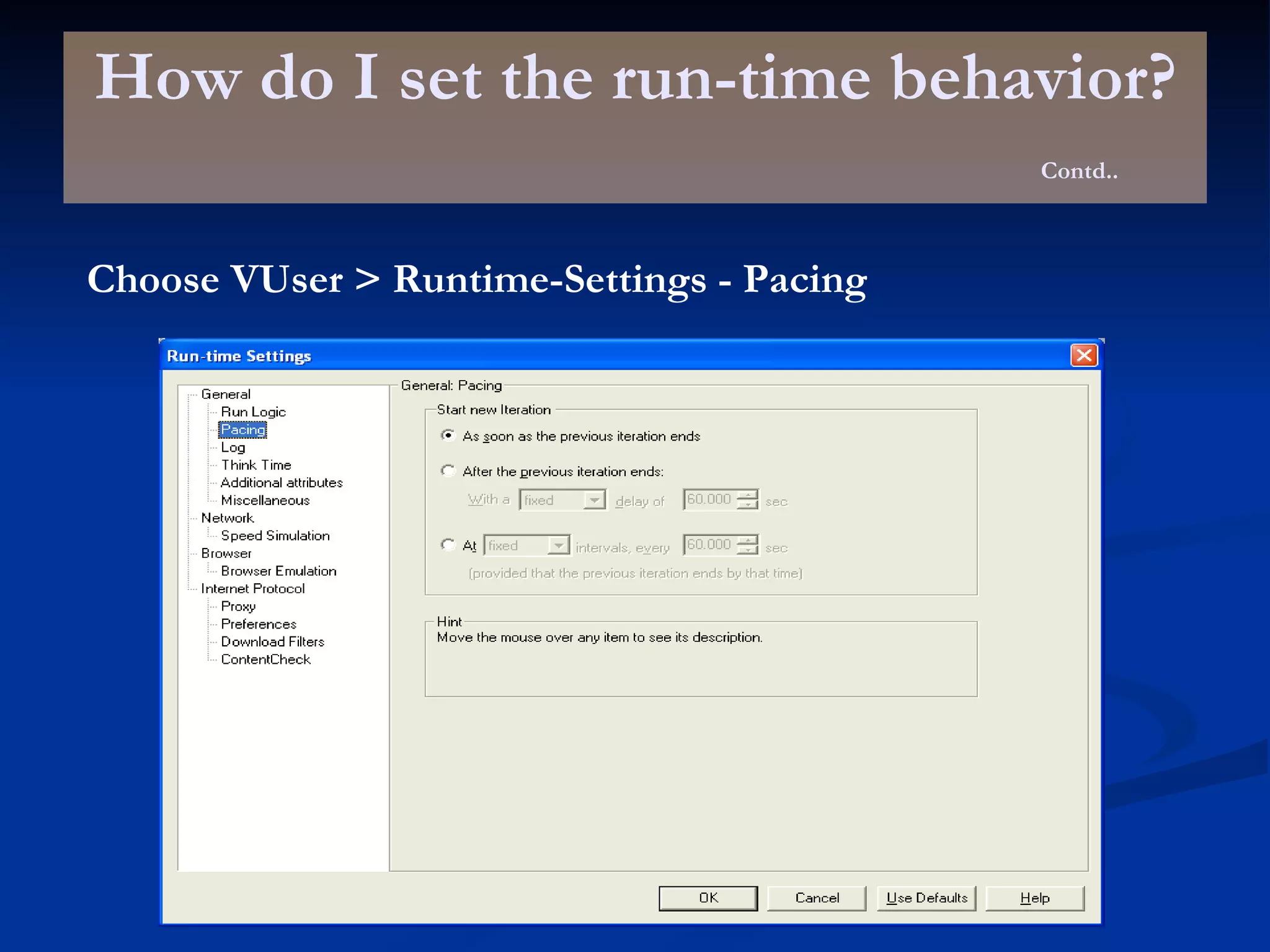

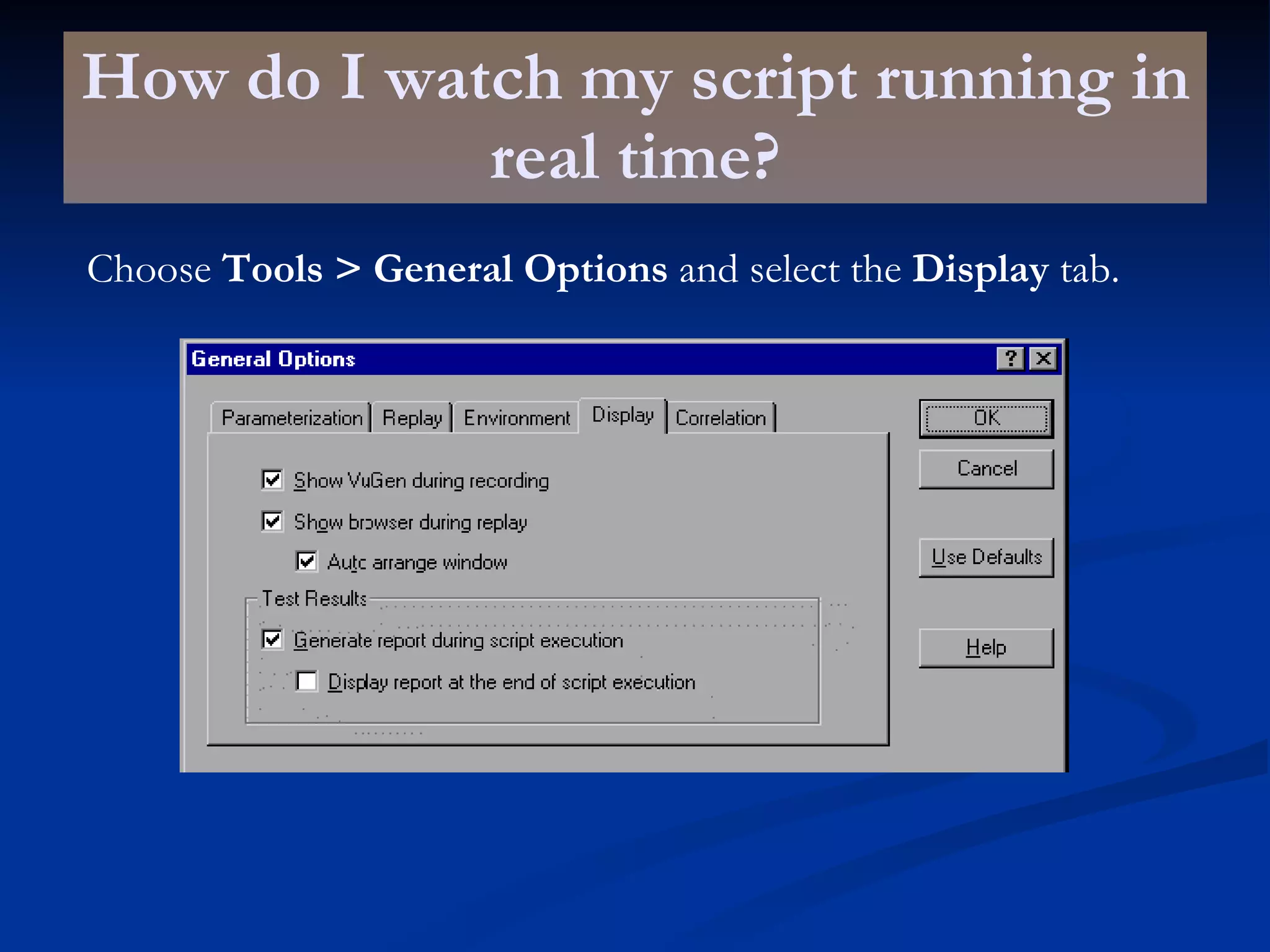

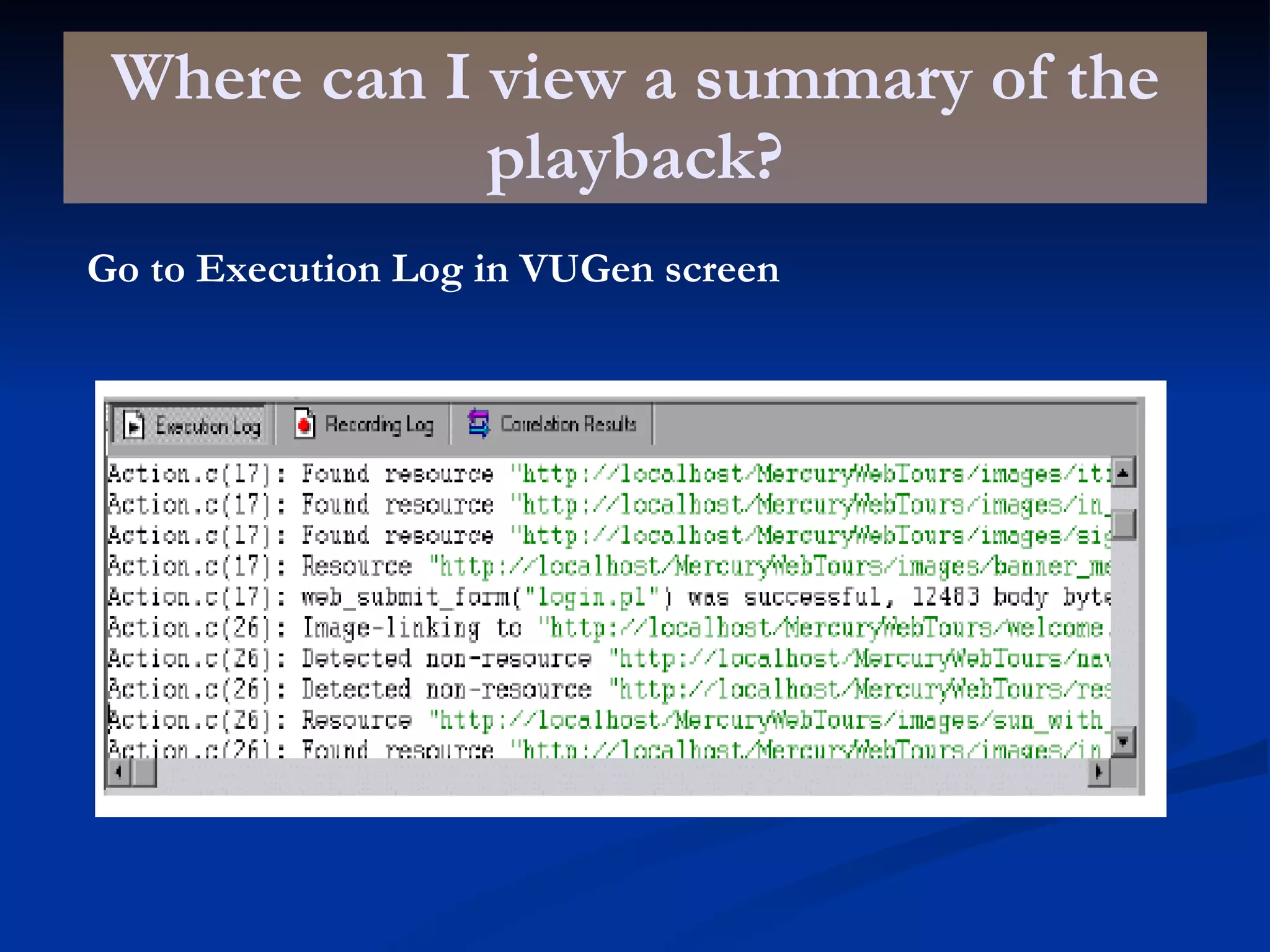

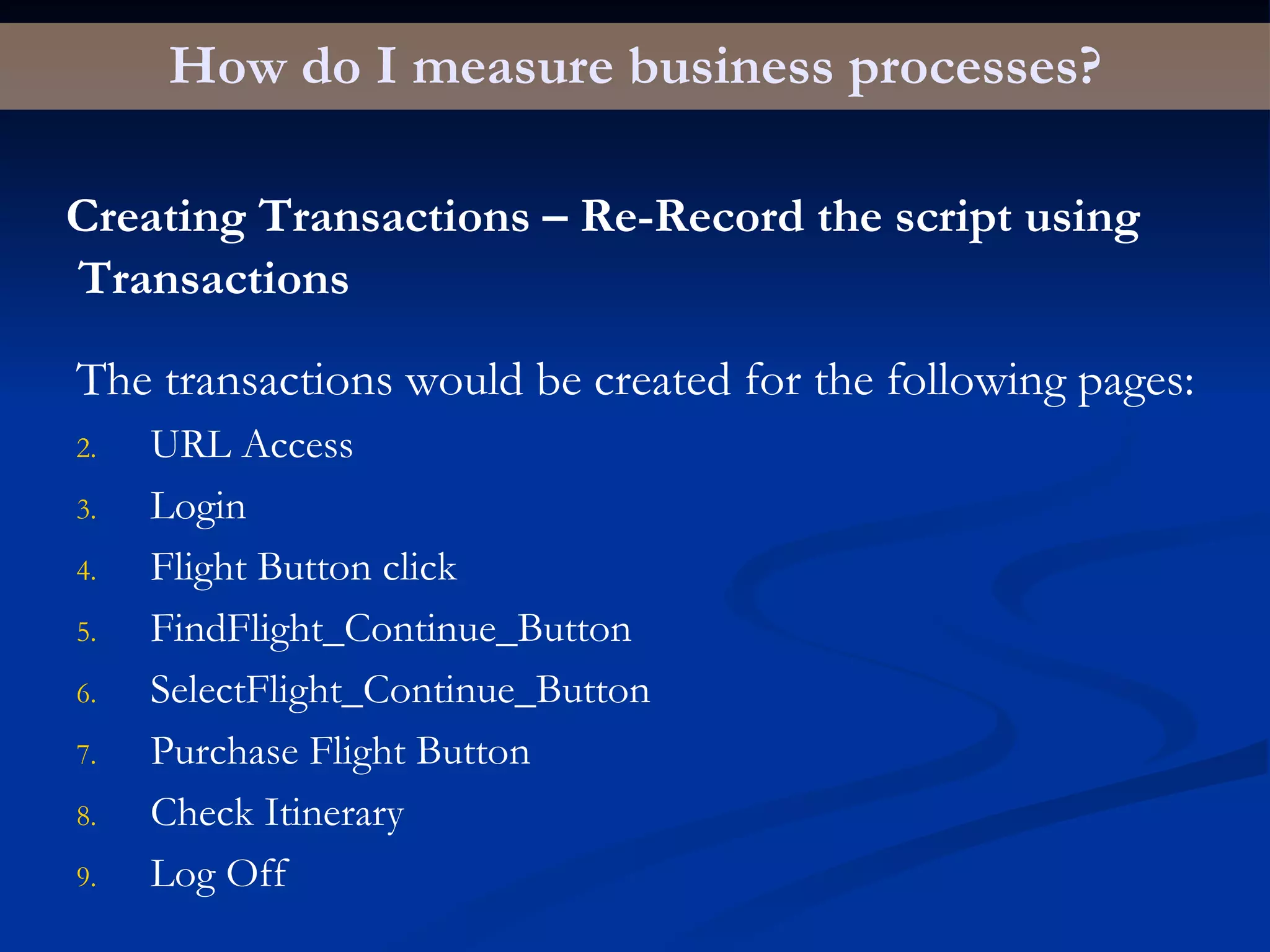

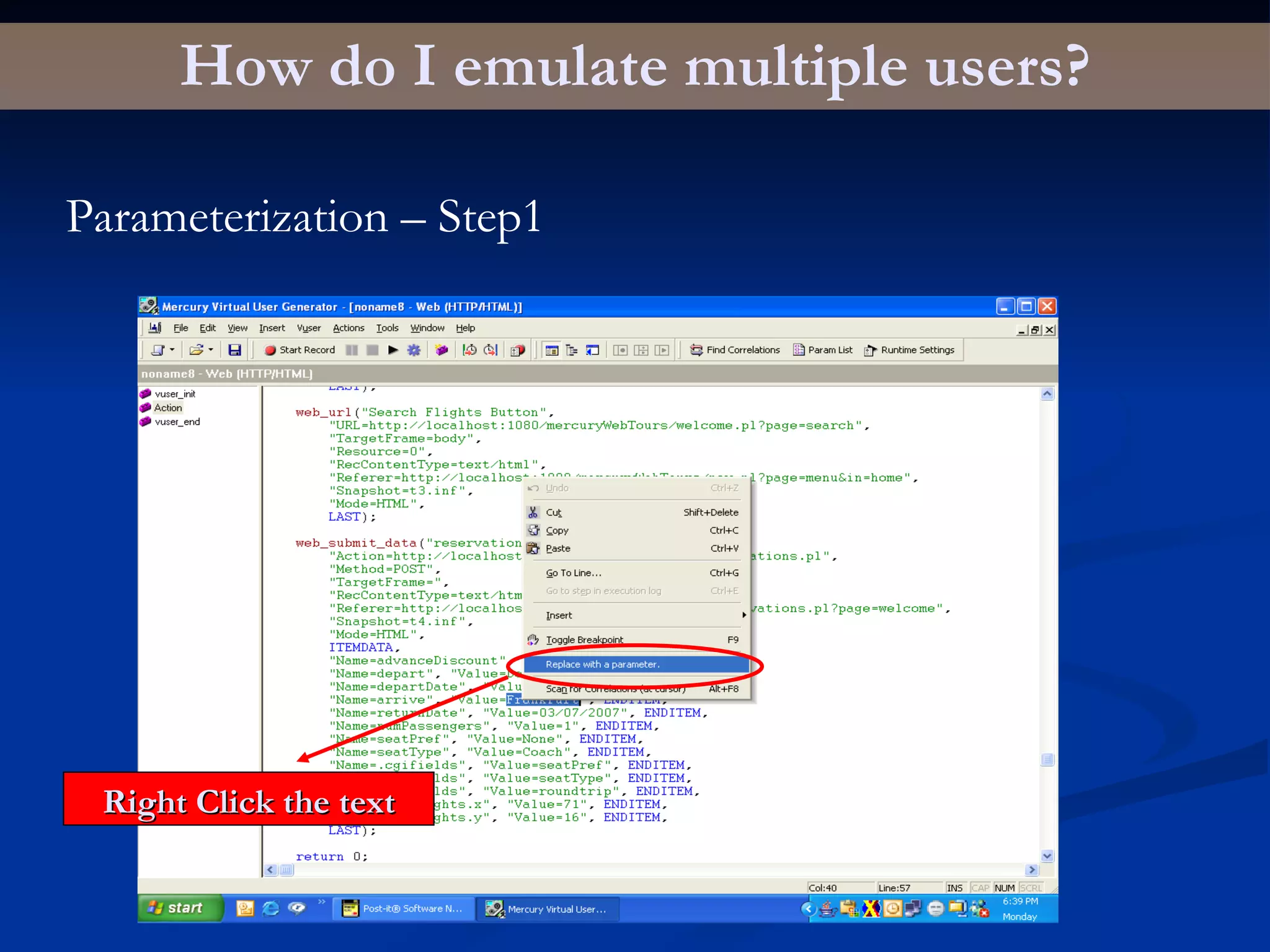

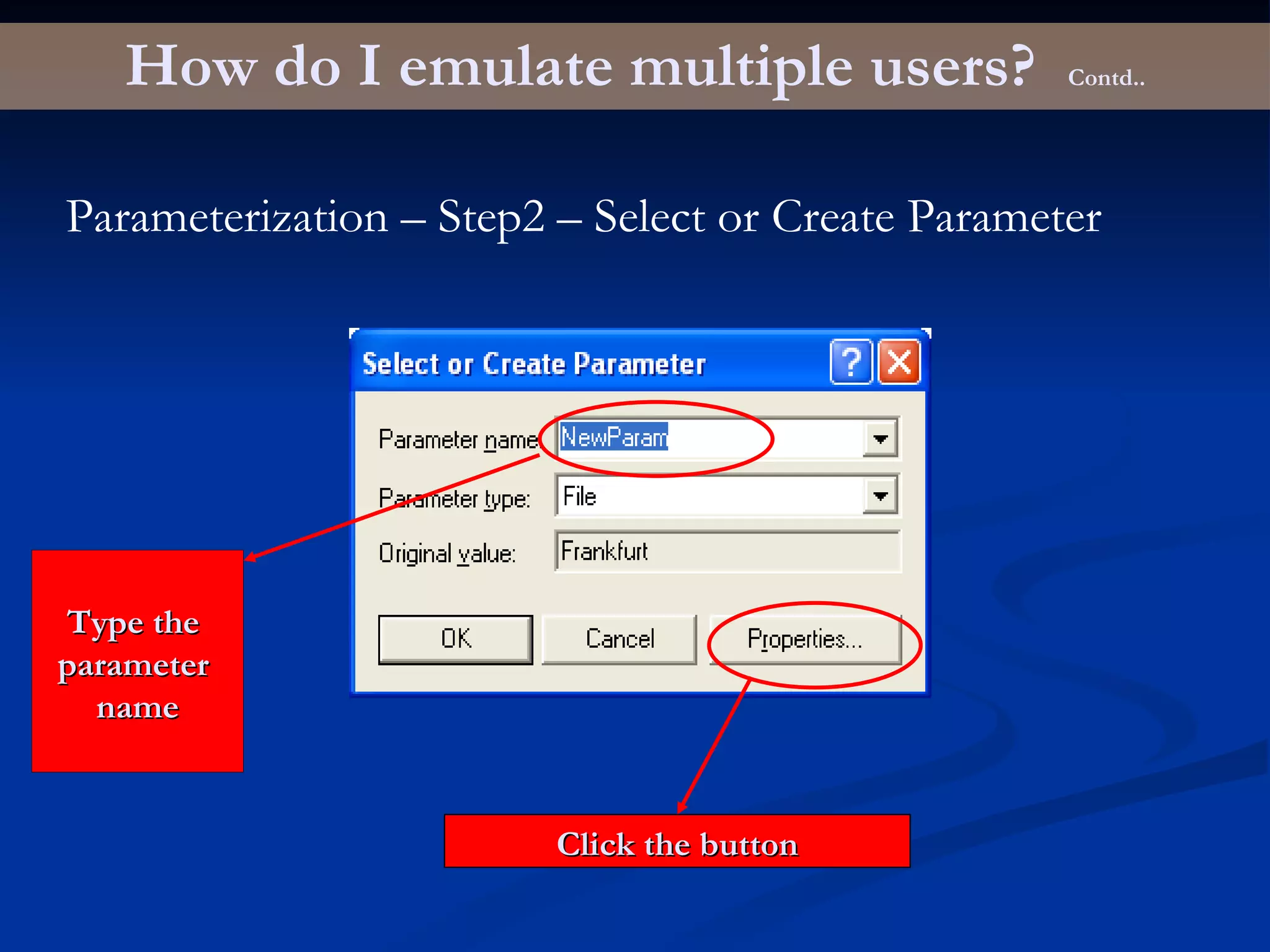

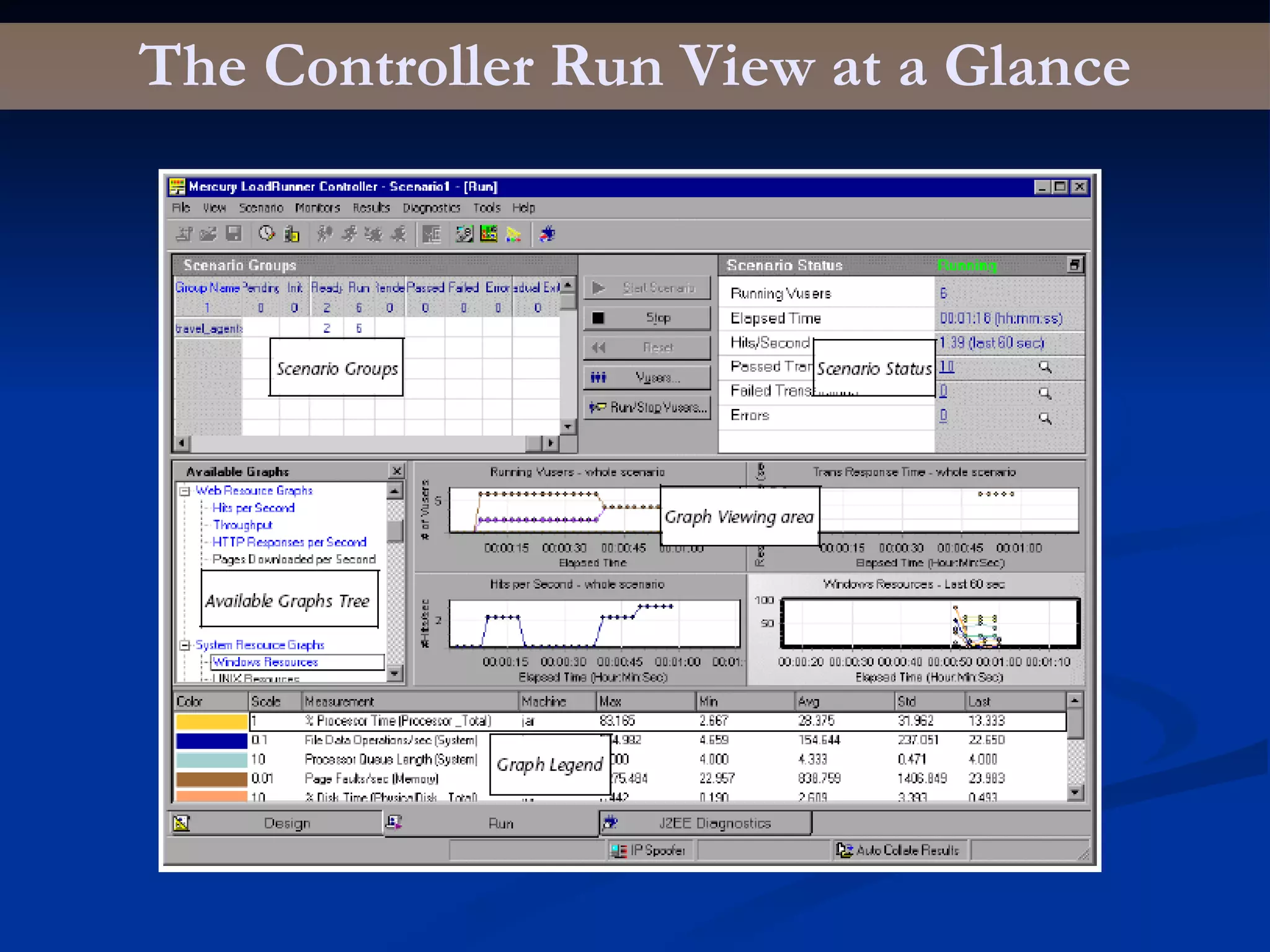

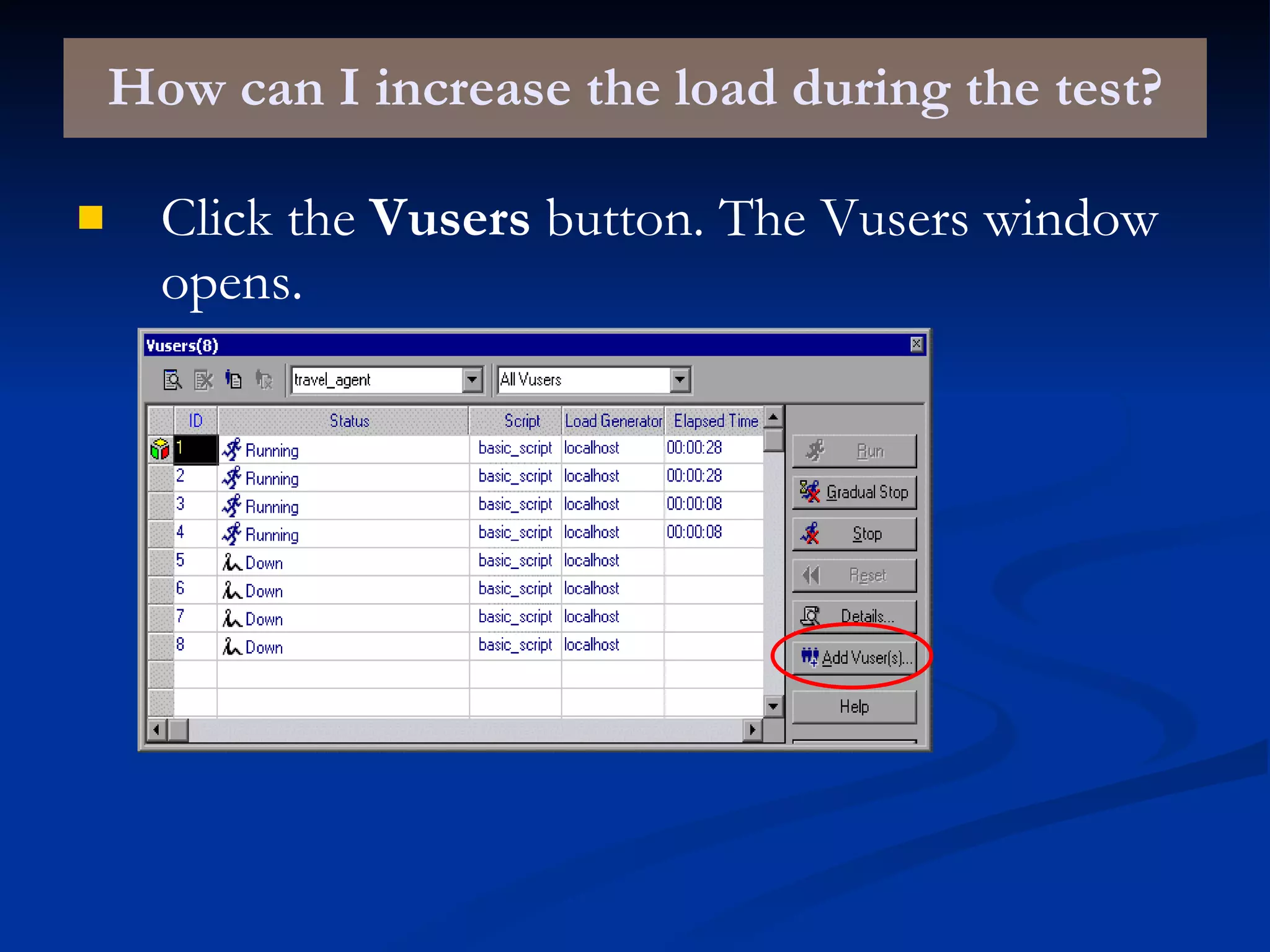

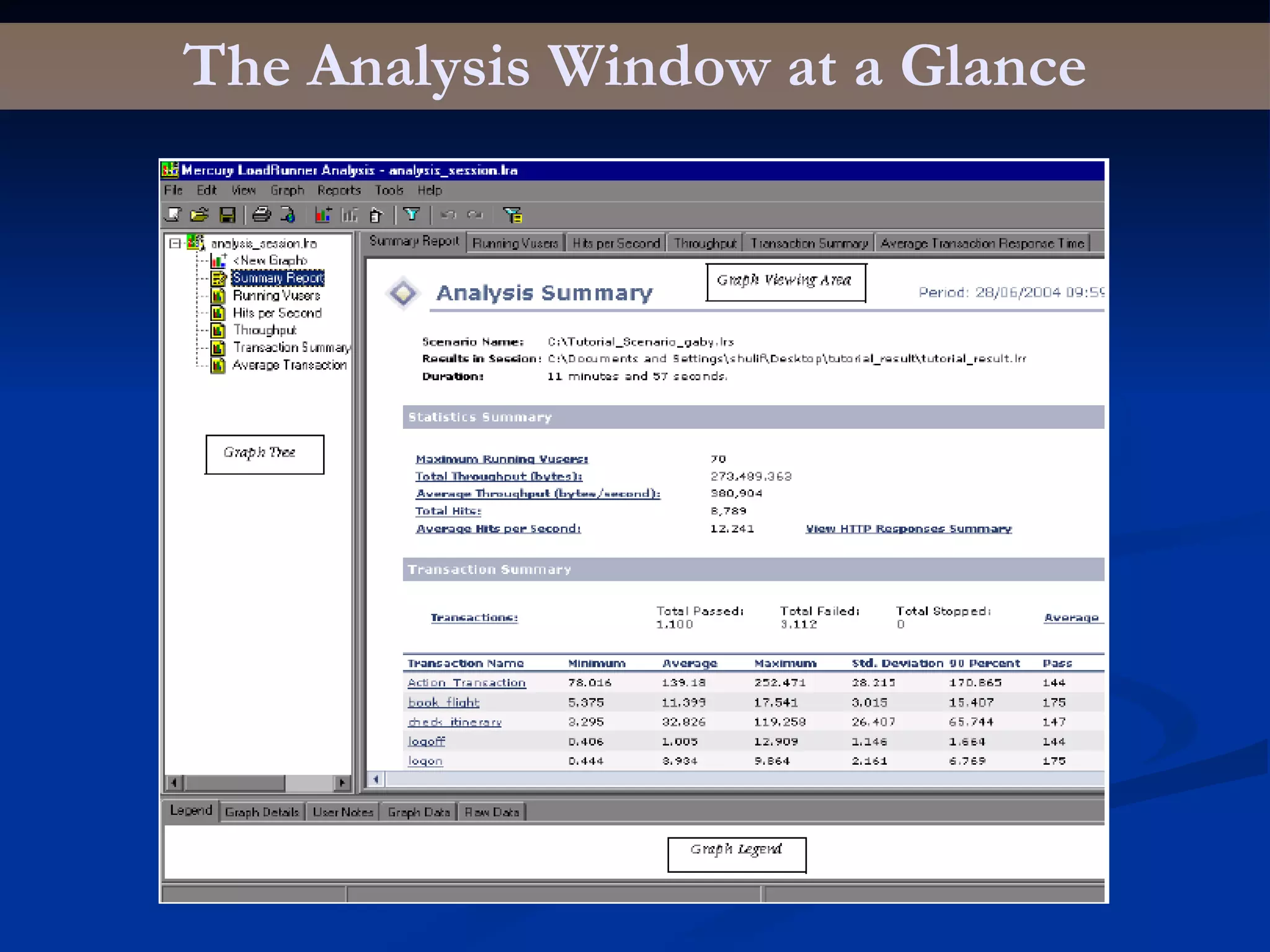

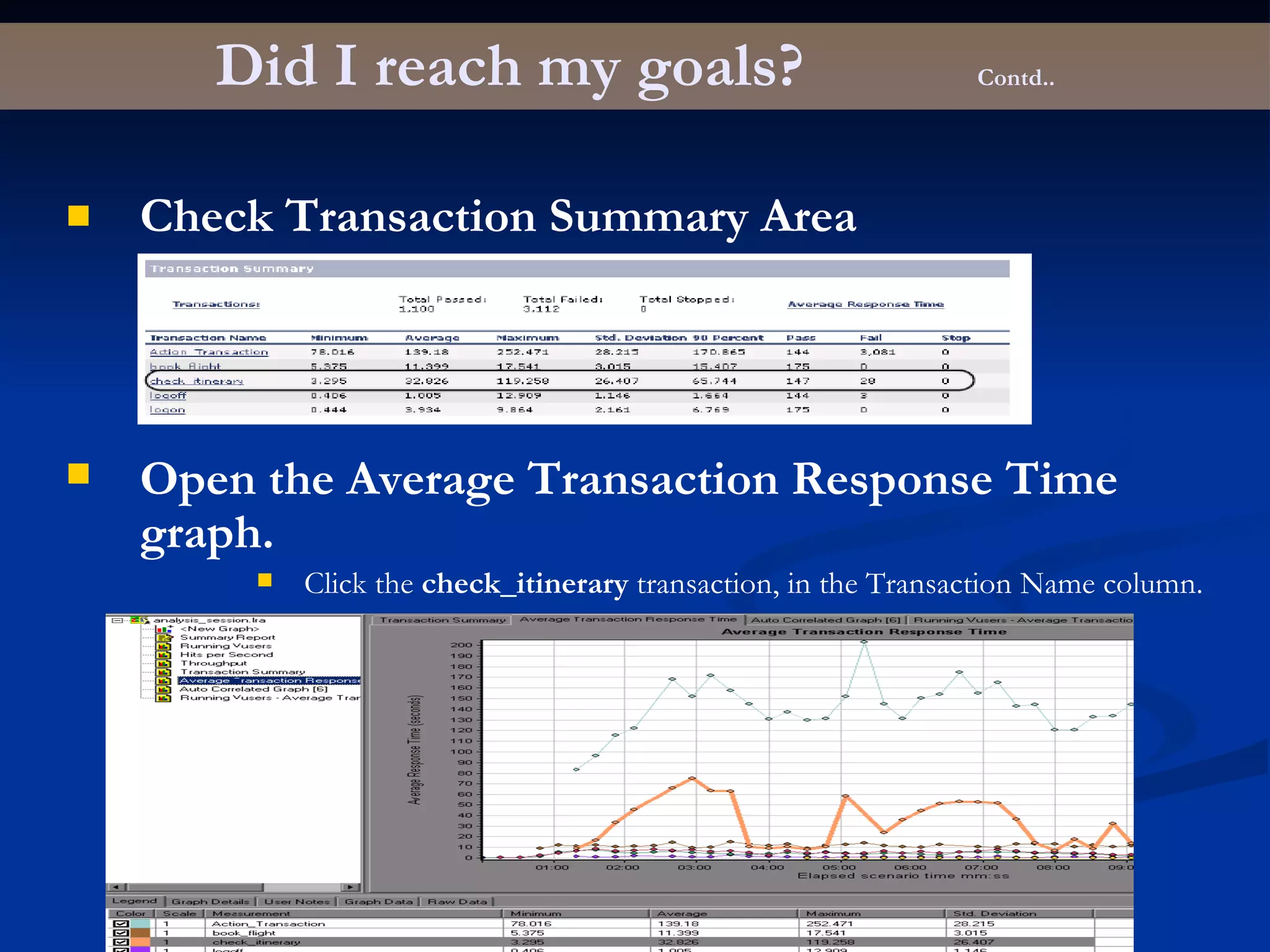

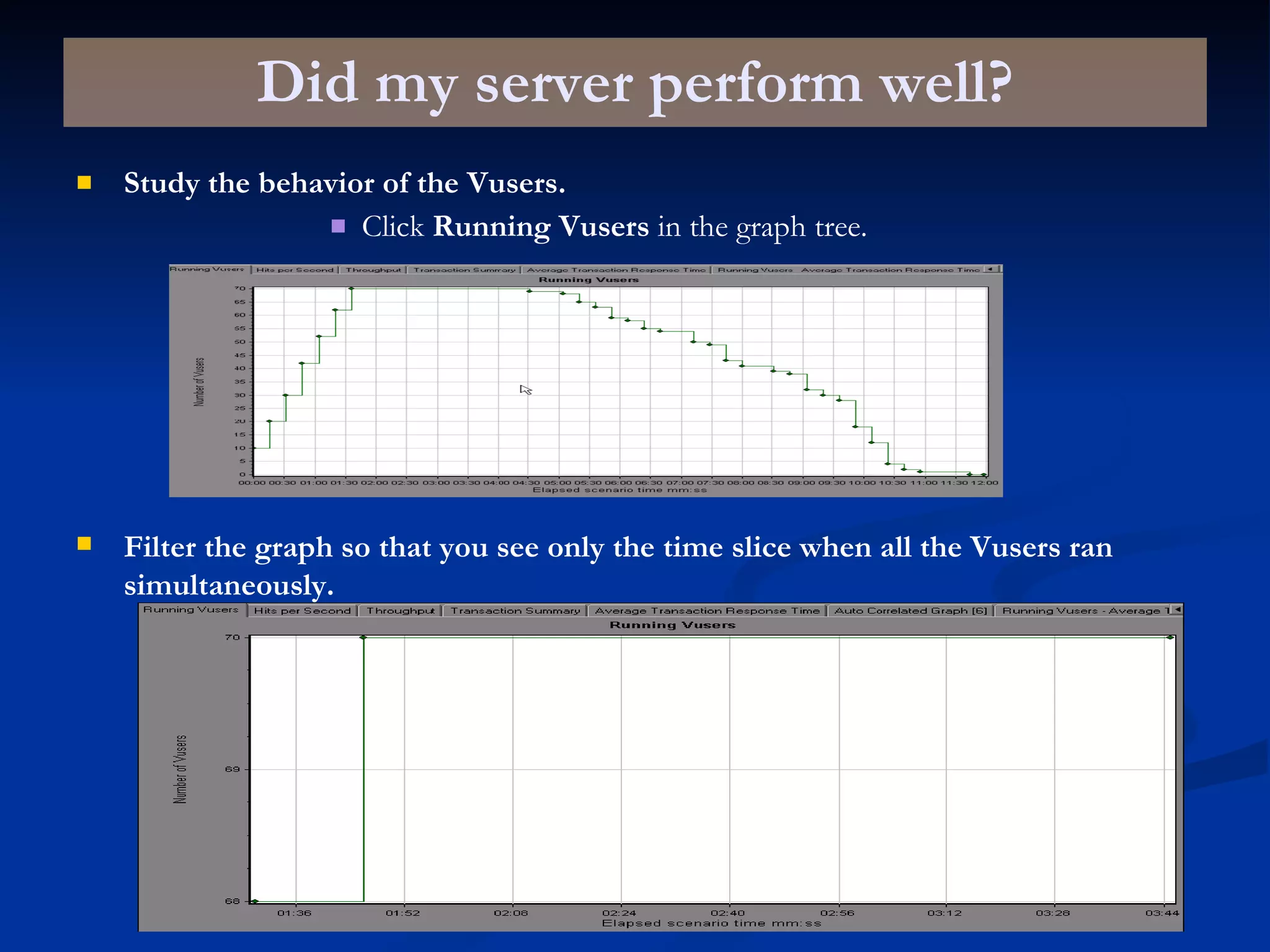

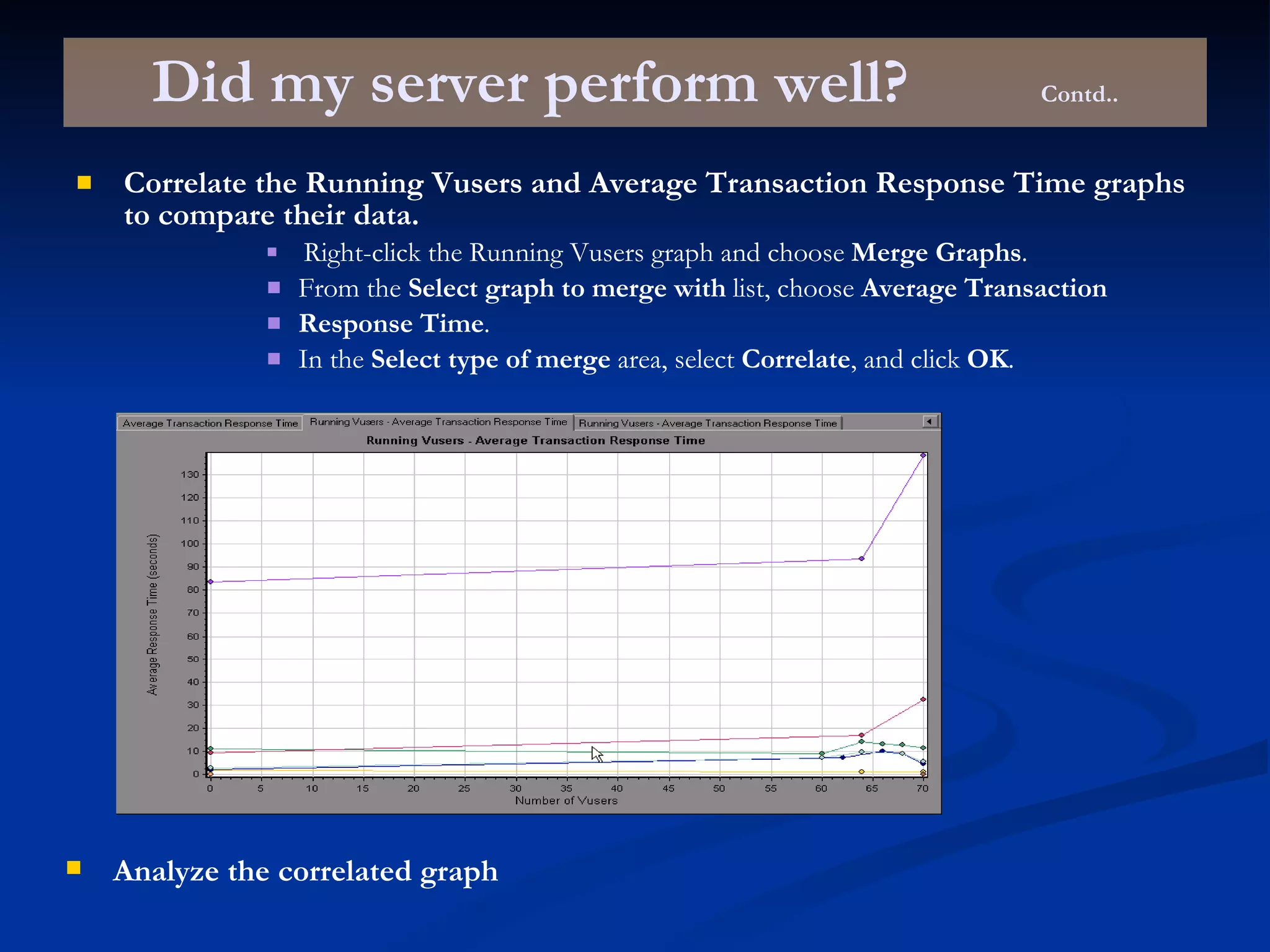

This document provides an overview of using LoadRunner for load and performance testing. It covers:

- why performance testing is important
- definitions of the different types of testing
- benchmark design
- LoadRunner components
- the load testing process
- building scripts with the Virtual User Generator (VuGen)
- playing back scripts
- solving common issues
- preparing scripts for load testing
- creating load testing scenarios in the LoadRunner Controller
- running load tests
- analyzing results