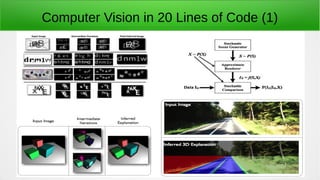

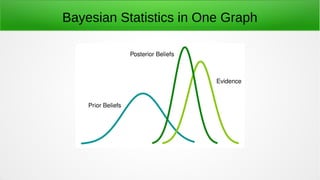

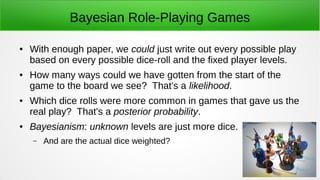

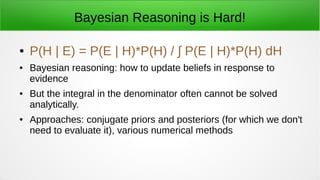

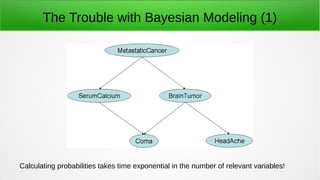

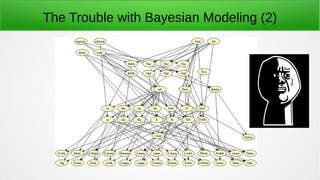

The document gives an overview of probabilistic programming. It covers Bayesian statistics and how generative models express uncertainty, the challenges and methods of inference, the limitations of traditional Bayesian modeling, and the benefits of probabilistic programming languages. Applications range from computer vision to artificial intelligence, with emphasis on the potential for machines to learn from experience and on the need for improved inference algorithms.

![The Elegance of Probabilistic Programming](https://image.slidesharecdn.com/probabilisticprogramming-141231015626-conversion-gate01/85/Probabilistic-programming-12-320.jpg)

● Programs = Distributions.

– Running a program = sampling an outcome from the distribution

– Monadic semantics: every function maps input values to output distributions.

● Queries are just functions; language runtime performs inference.

● '[H]allucinate possible worlds', and reason about them.
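The points on this slide can be illustrated with a minimal sketch in plain Python. It is not taken from the slides and does not use any probabilistic programming language. The names `flip`, `model`, and `infer`, the rain/sprinkler scenario, and its probabilities are all hypothetical, and rejection sampling stands in here for whatever inference a real language runtime would perform.

```python
import random


def flip(rng, p=0.5):
    """Return True with probability p (a primitive random choice)."""
    return rng.random() < p


def model(rng):
    """A generative program: each run samples one 'possible world'."""
    rain = flip(rng, 0.2)        # assumed prior, for illustration only
    sprinkler = flip(rng, 0.1)   # assumed prior, for illustration only
    wet = rain or sprinkler      # the grass is wet if either happened
    return {"rain": rain, "sprinkler": sprinkler, "wet": wet}


def infer(model, condition, query, n=100_000, seed=0):
    """Estimate P(query | condition) by rejection sampling.

    The 'runtime' runs the program n times, keeps only the worlds
    that satisfy the condition, and averages the query over them.
    """
    rng = random.Random(seed)
    accepted = 0
    hits = 0
    for _ in range(n):
        world = model(rng)
        if condition(world):
            accepted += 1
            hits += query(world)
    if accepted == 0:
        raise ValueError("no sampled world satisfied the condition")
    return hits / accepted


# The condition and the query are ordinary functions of a world.
p_rain_given_wet = infer(
    model,
    condition=lambda w: w["wet"],
    query=lambda w: w["rain"],
)
print(p_rain_given_wet)
```

Under these assumed priors the exact answer is 0.2 / (1 - 0.8 × 0.9) = 0.2 / 0.28 ≈ 0.714, so the printed estimate should land close to that. Rejection sampling is the simplest way to show the idea, but it wastes every run that fails the condition, which is one reason better inference algorithms matter.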