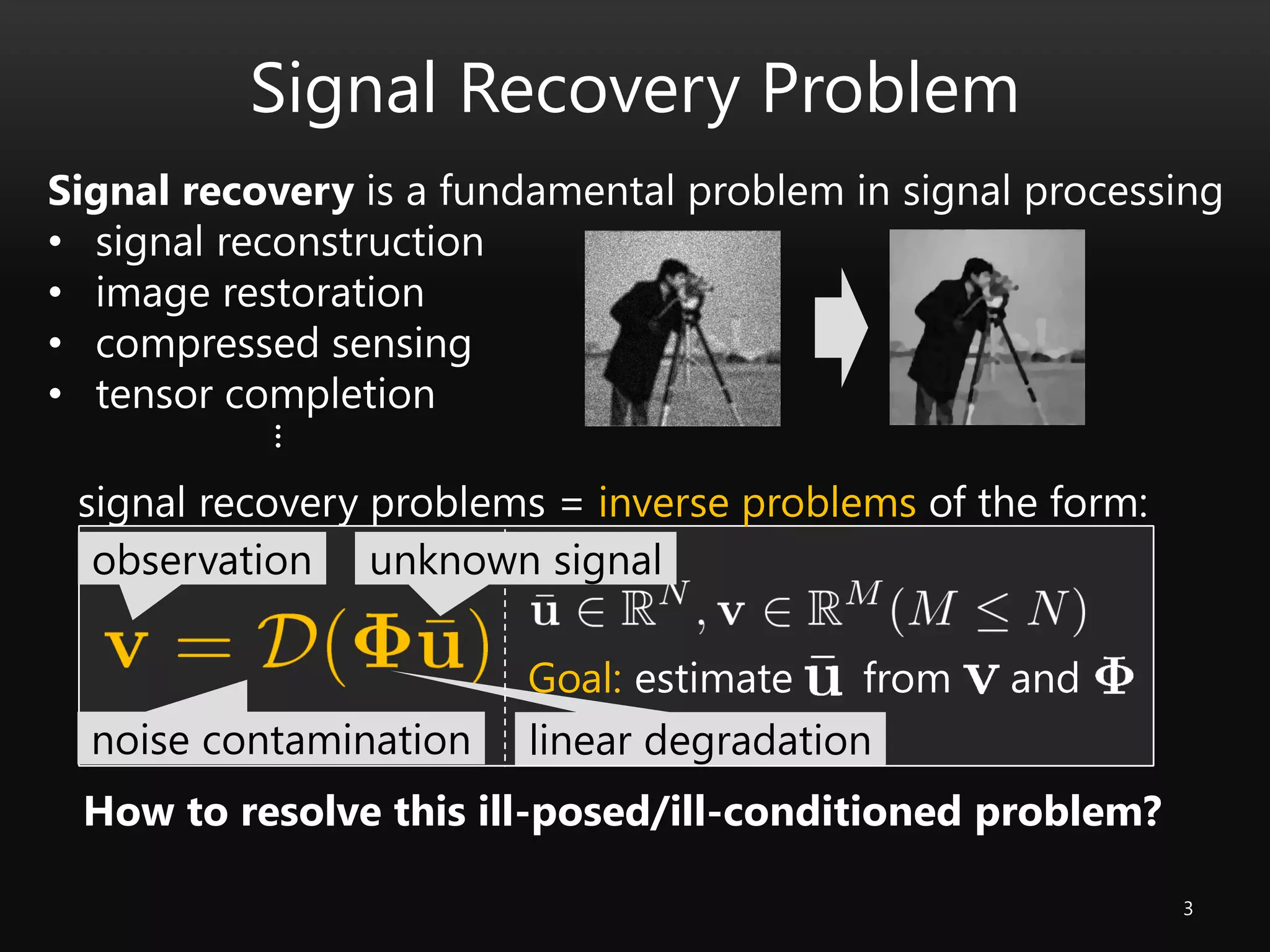

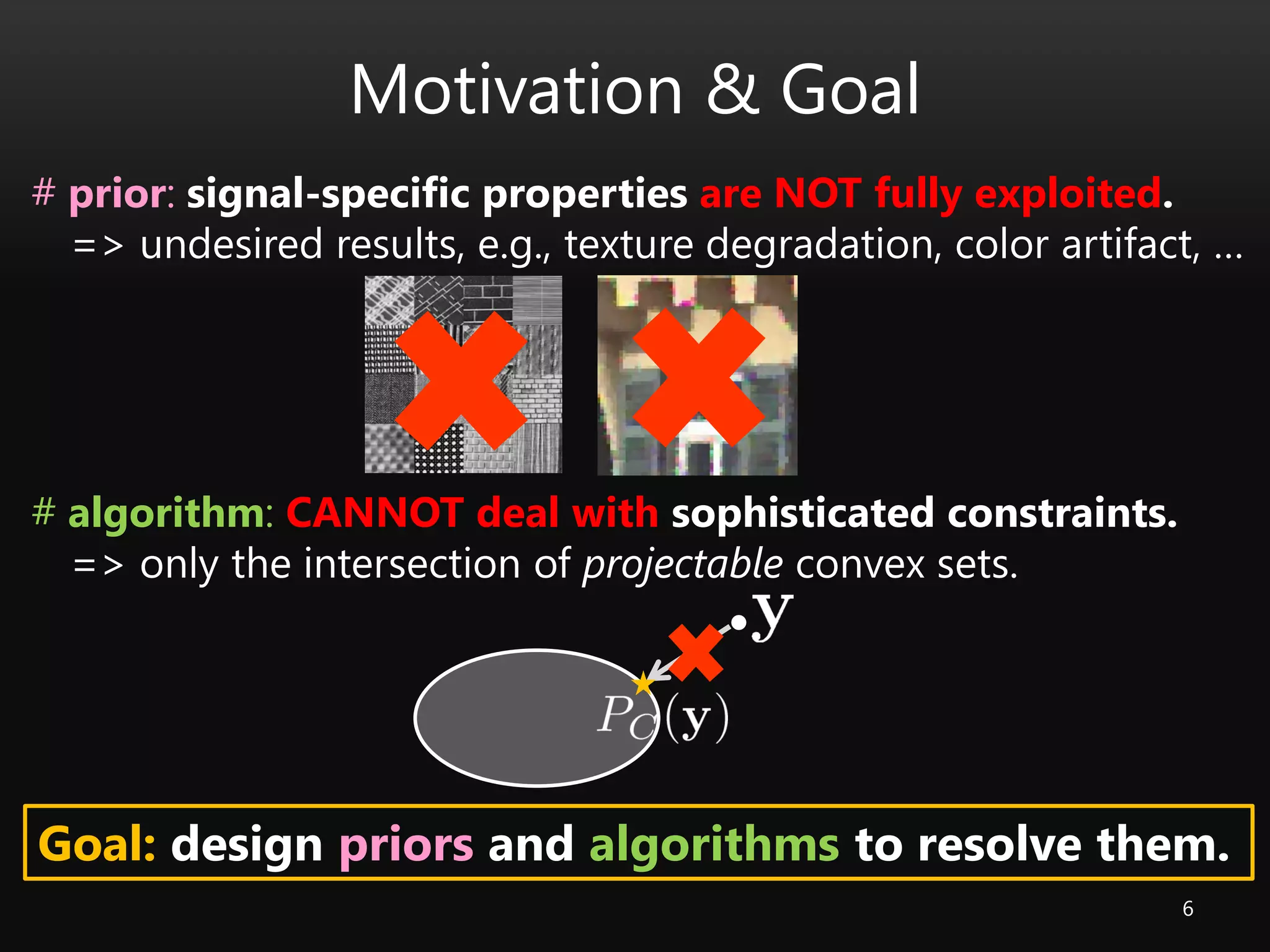

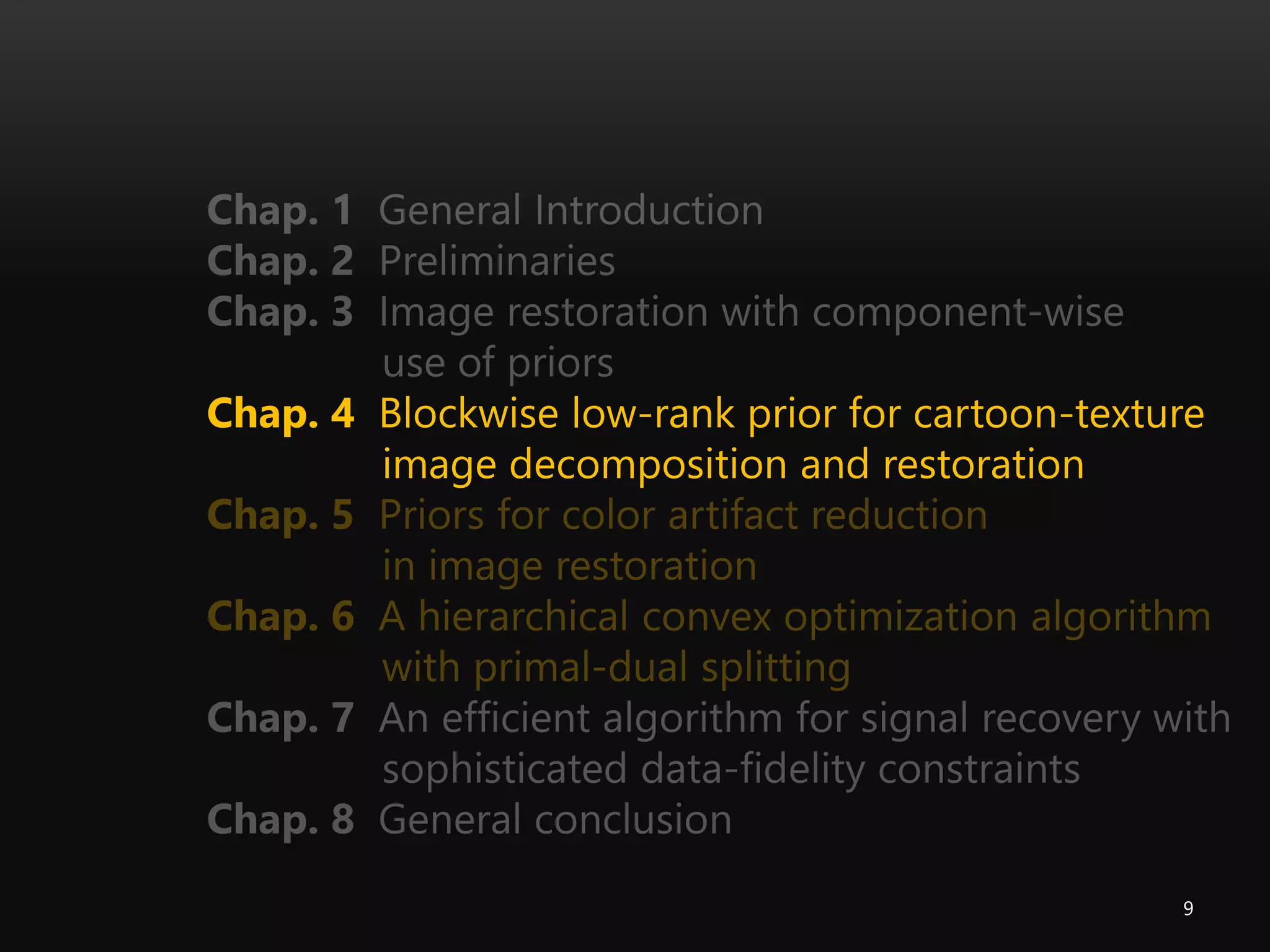

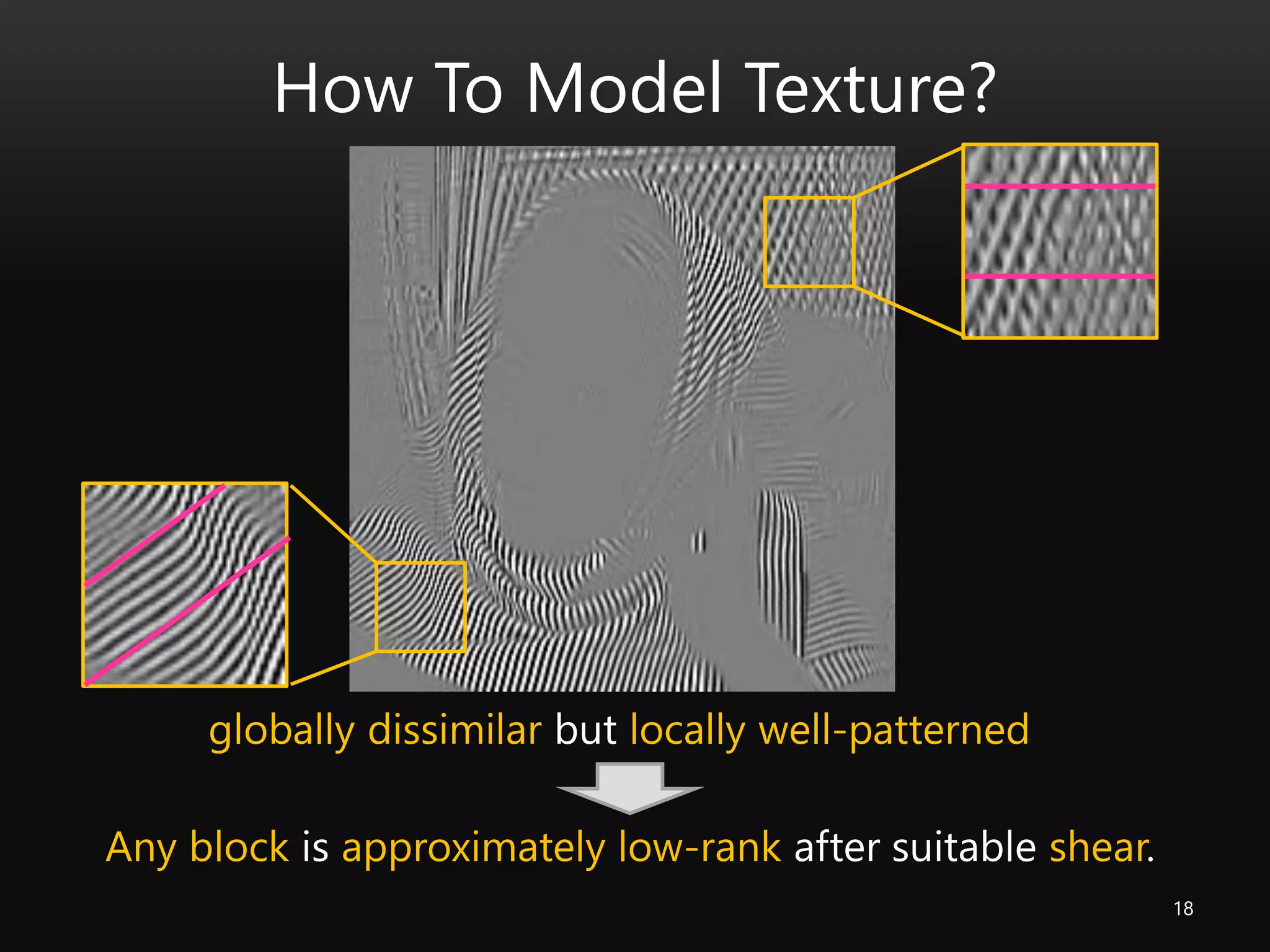

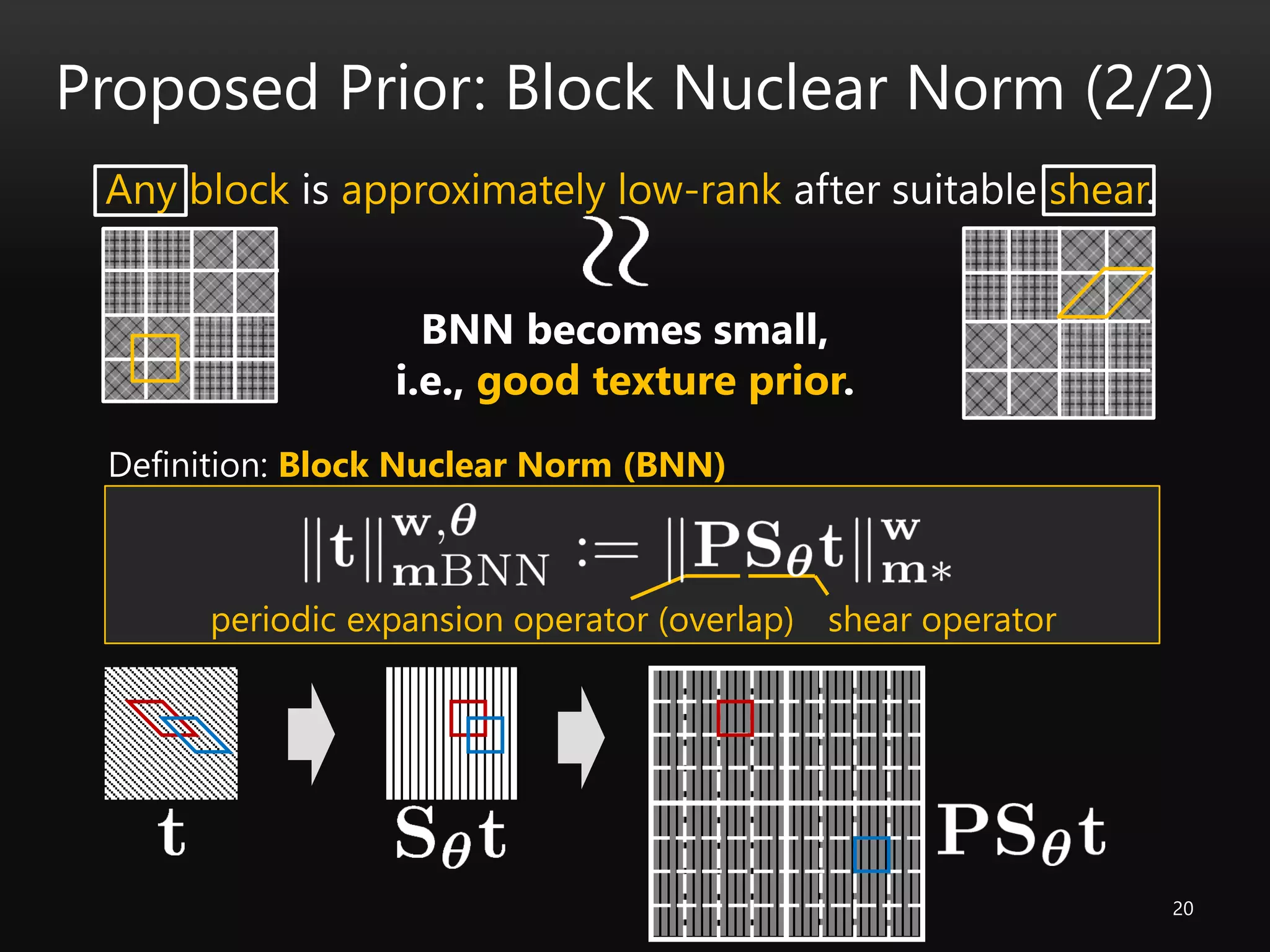

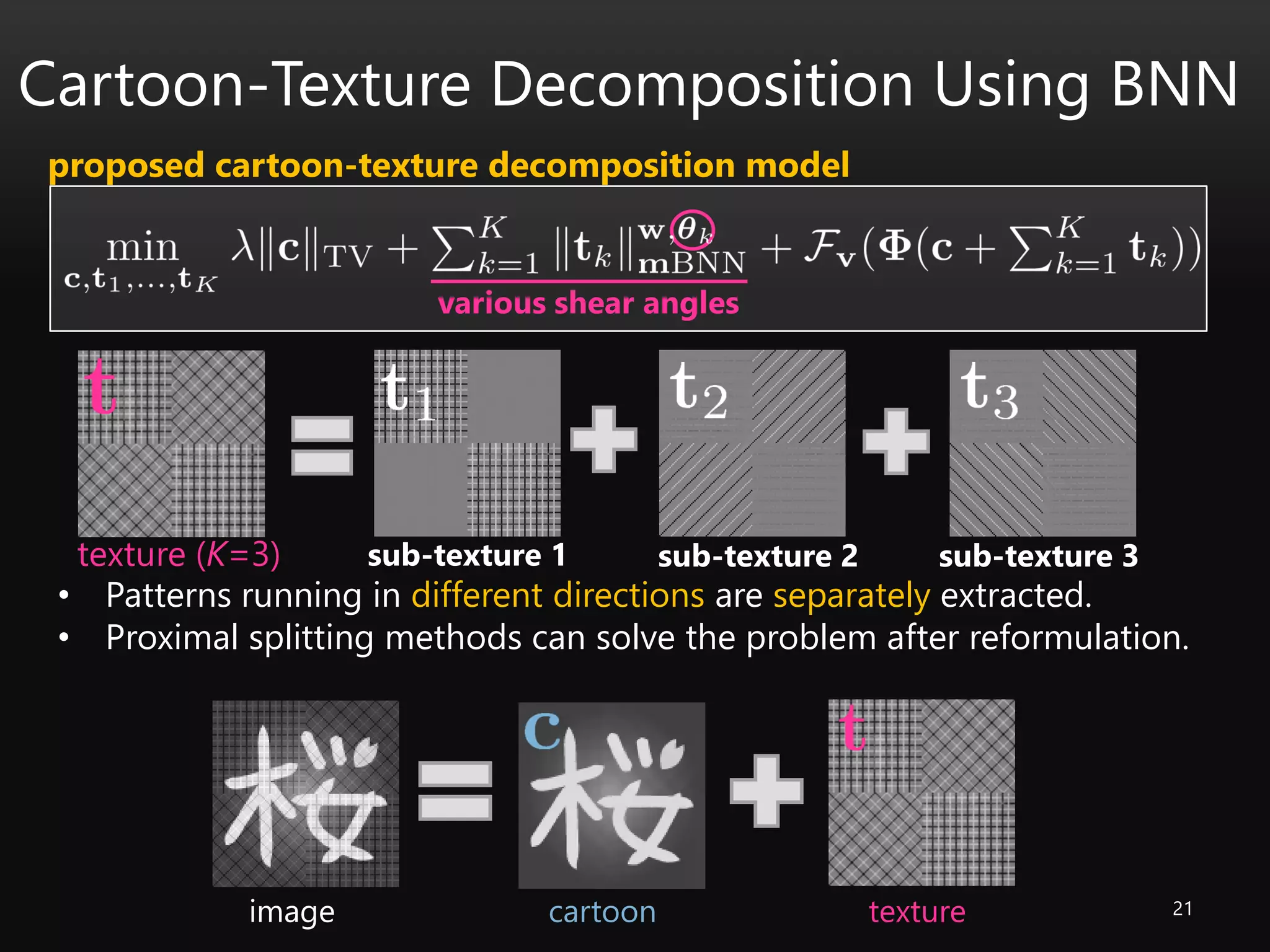

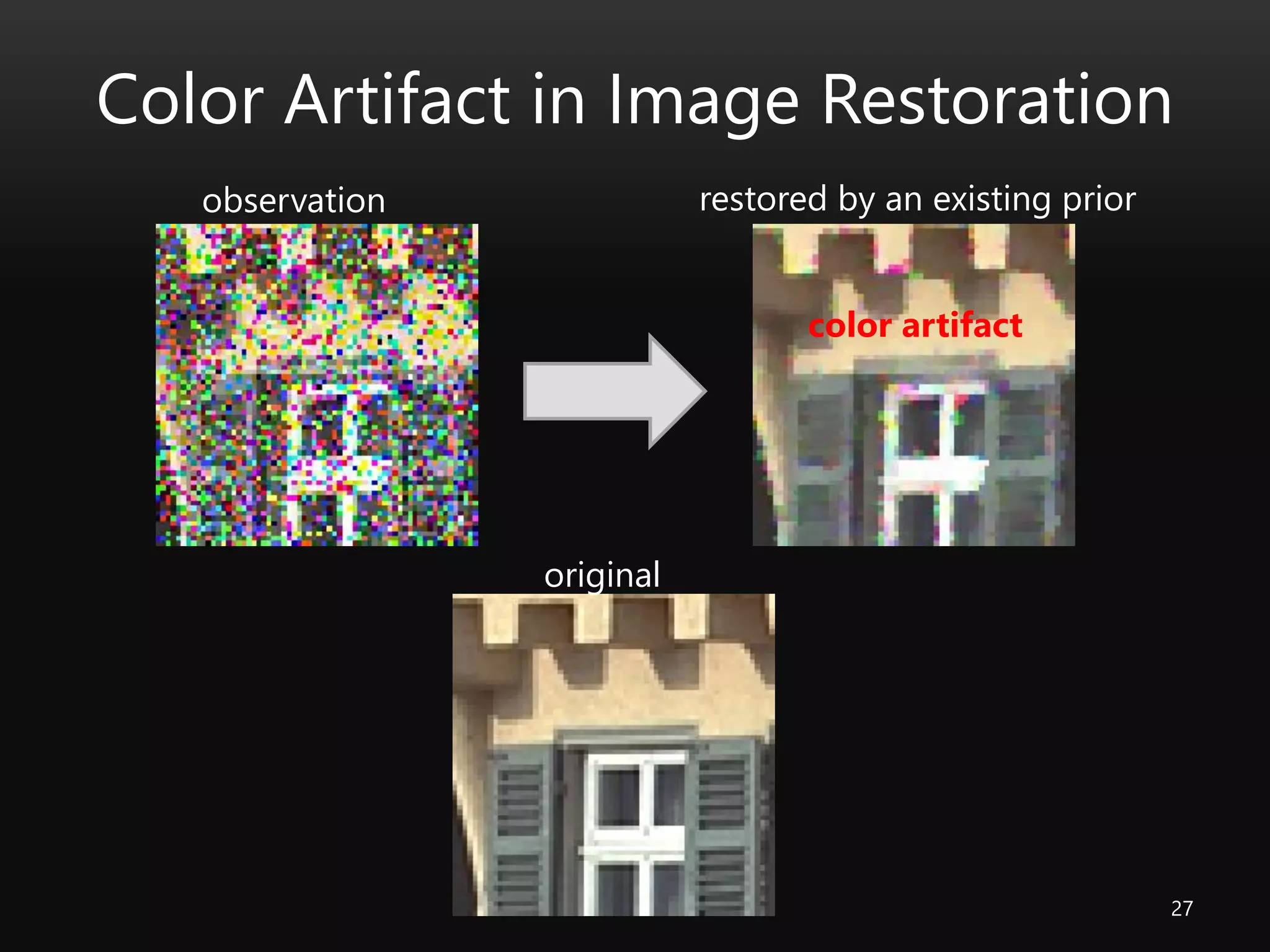

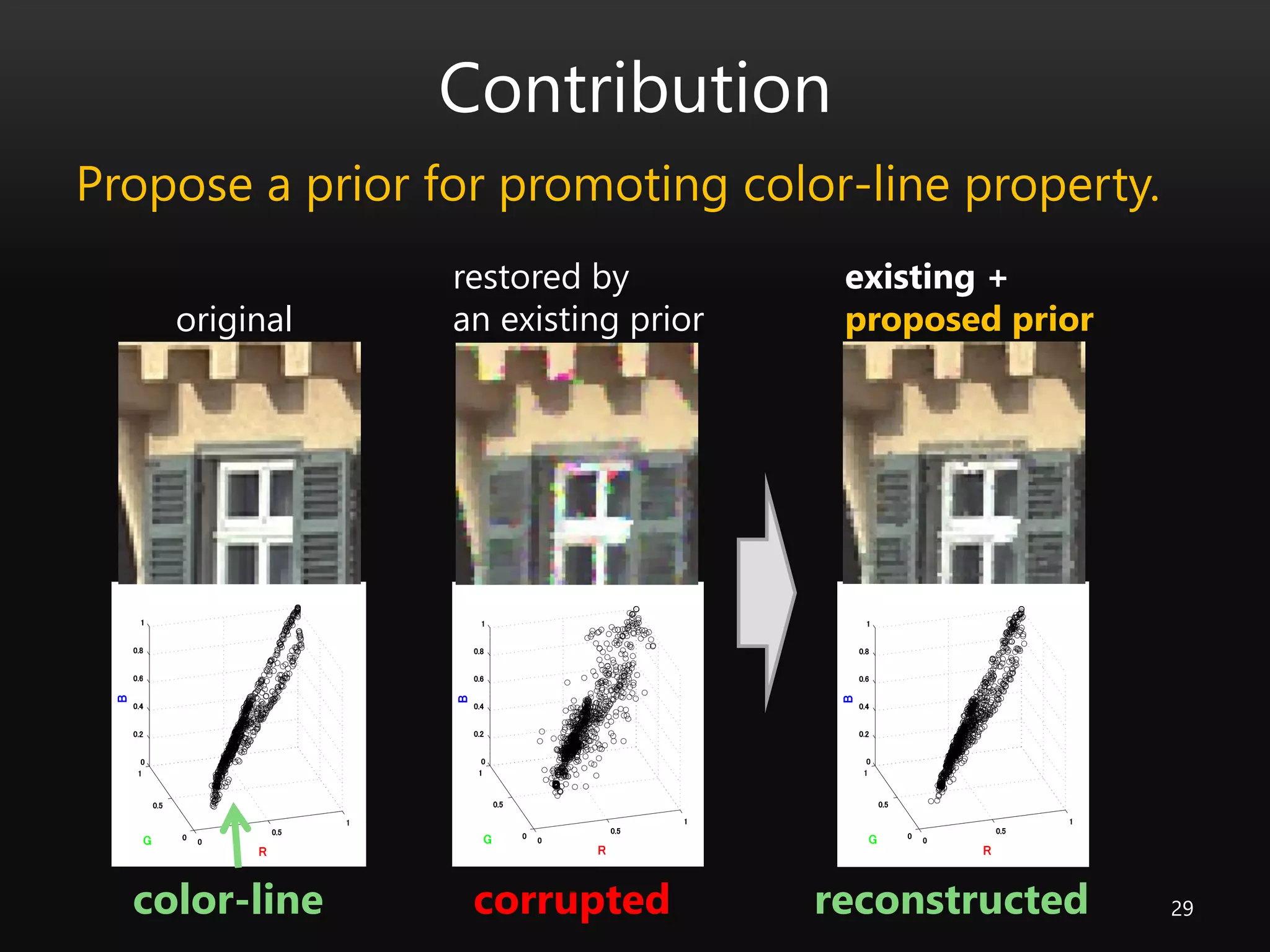

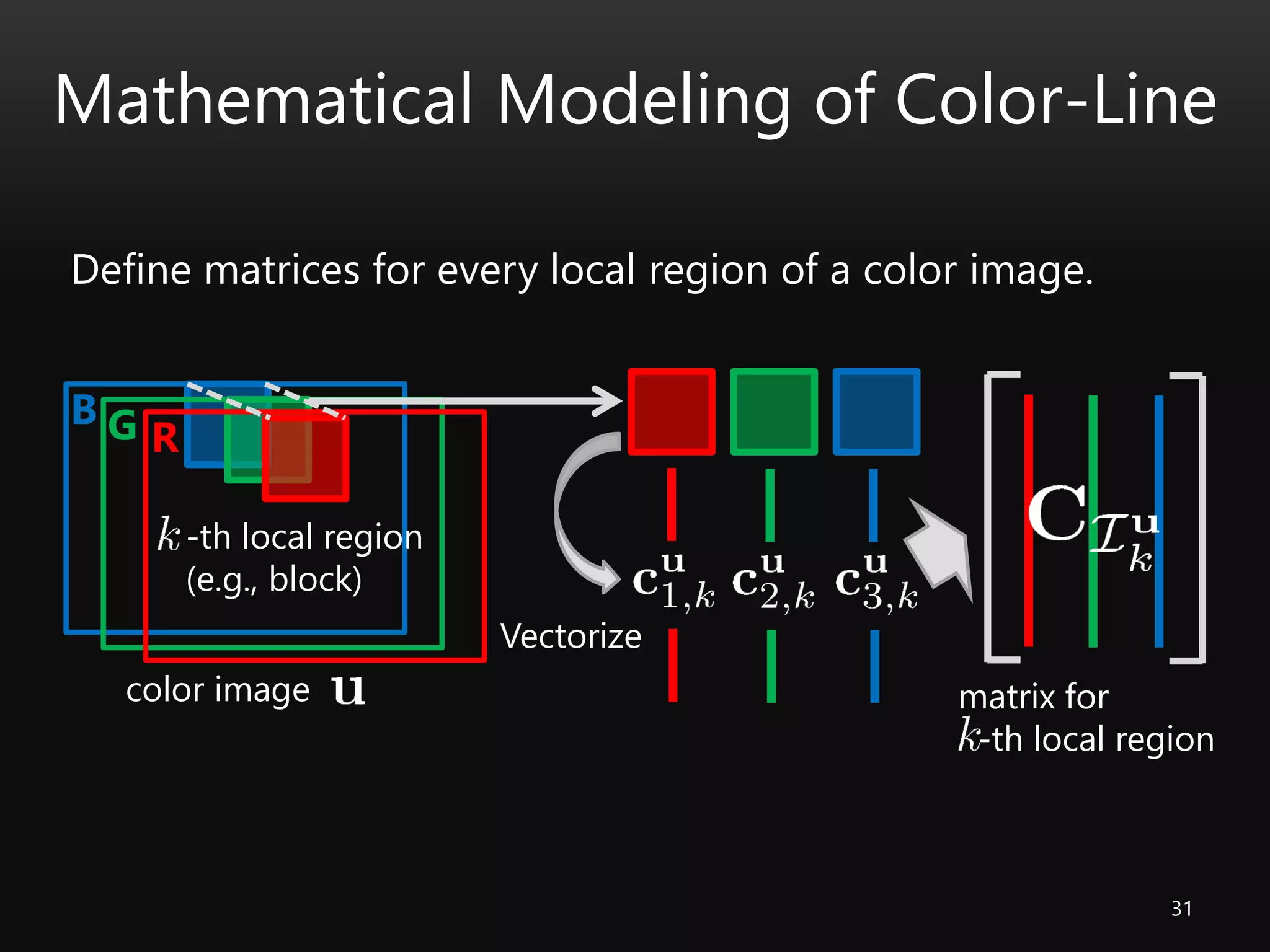

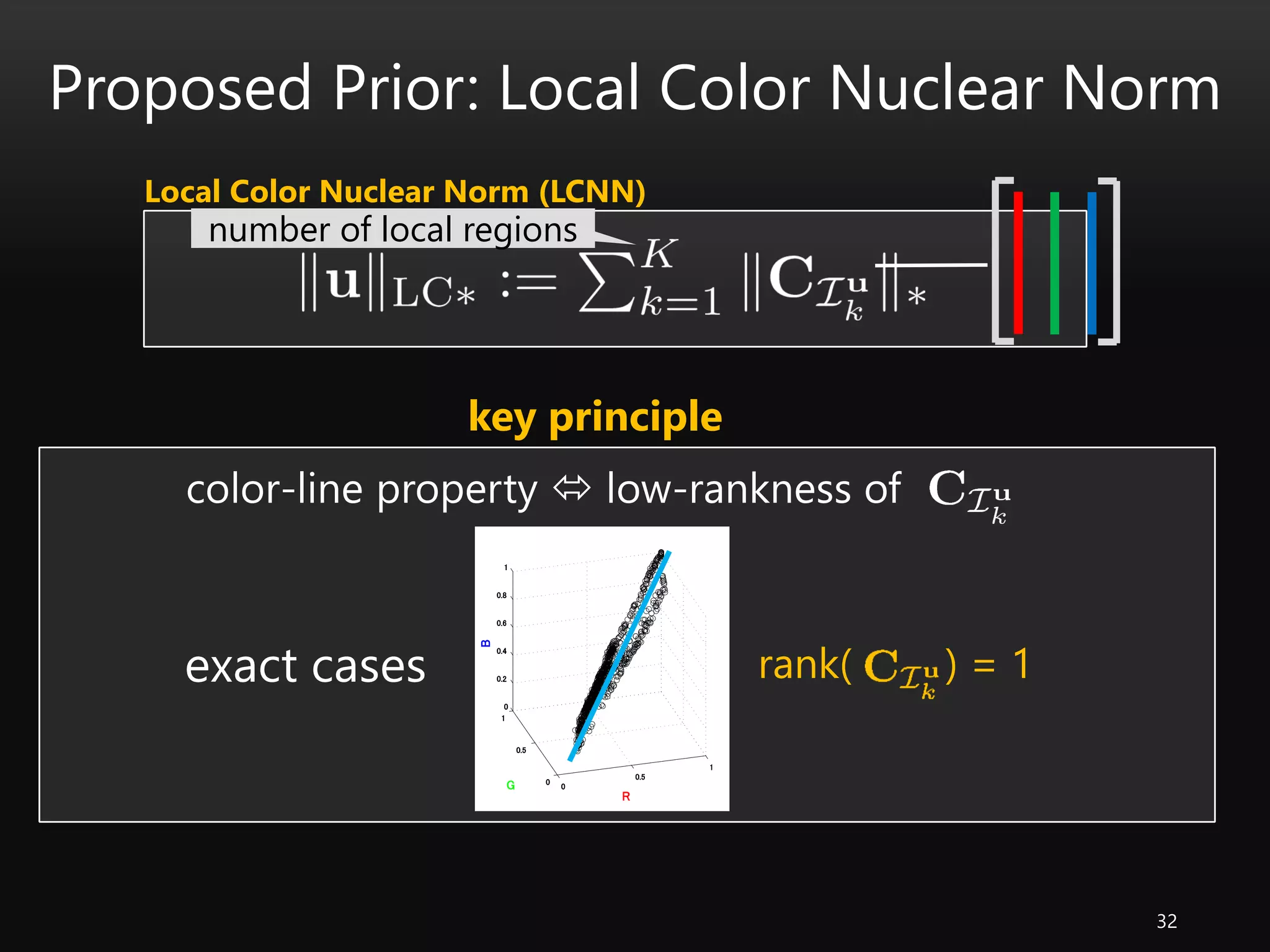

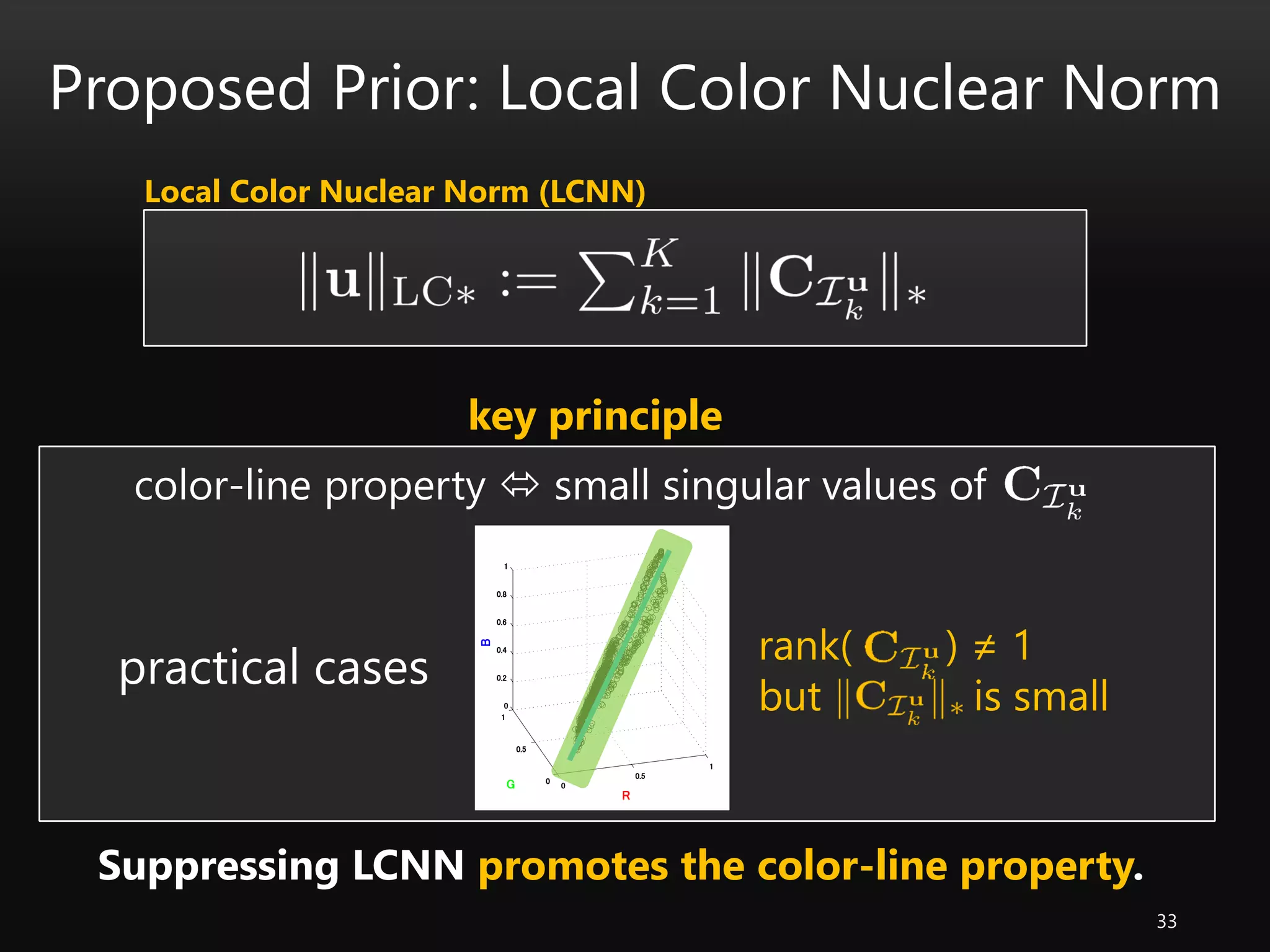

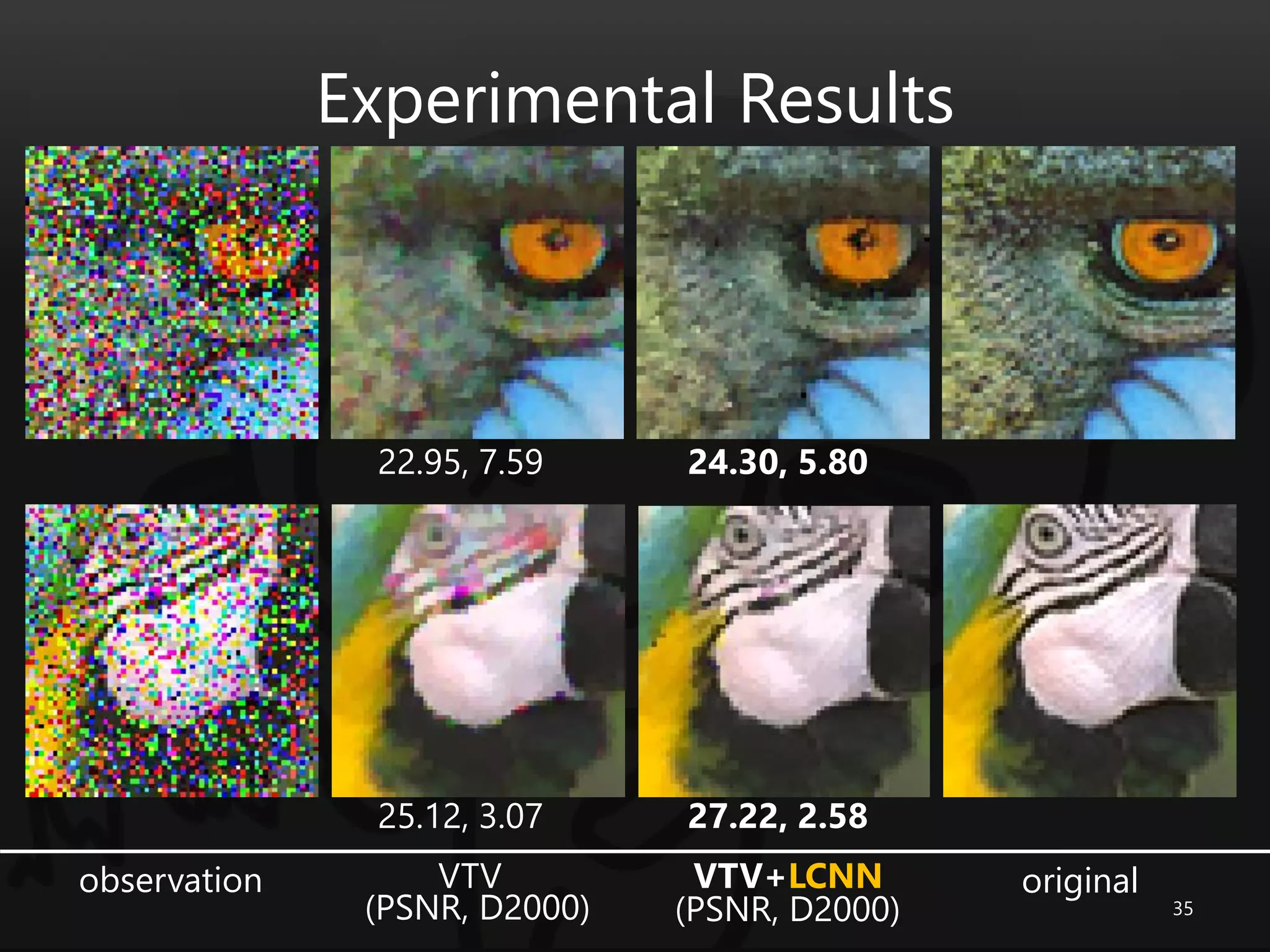

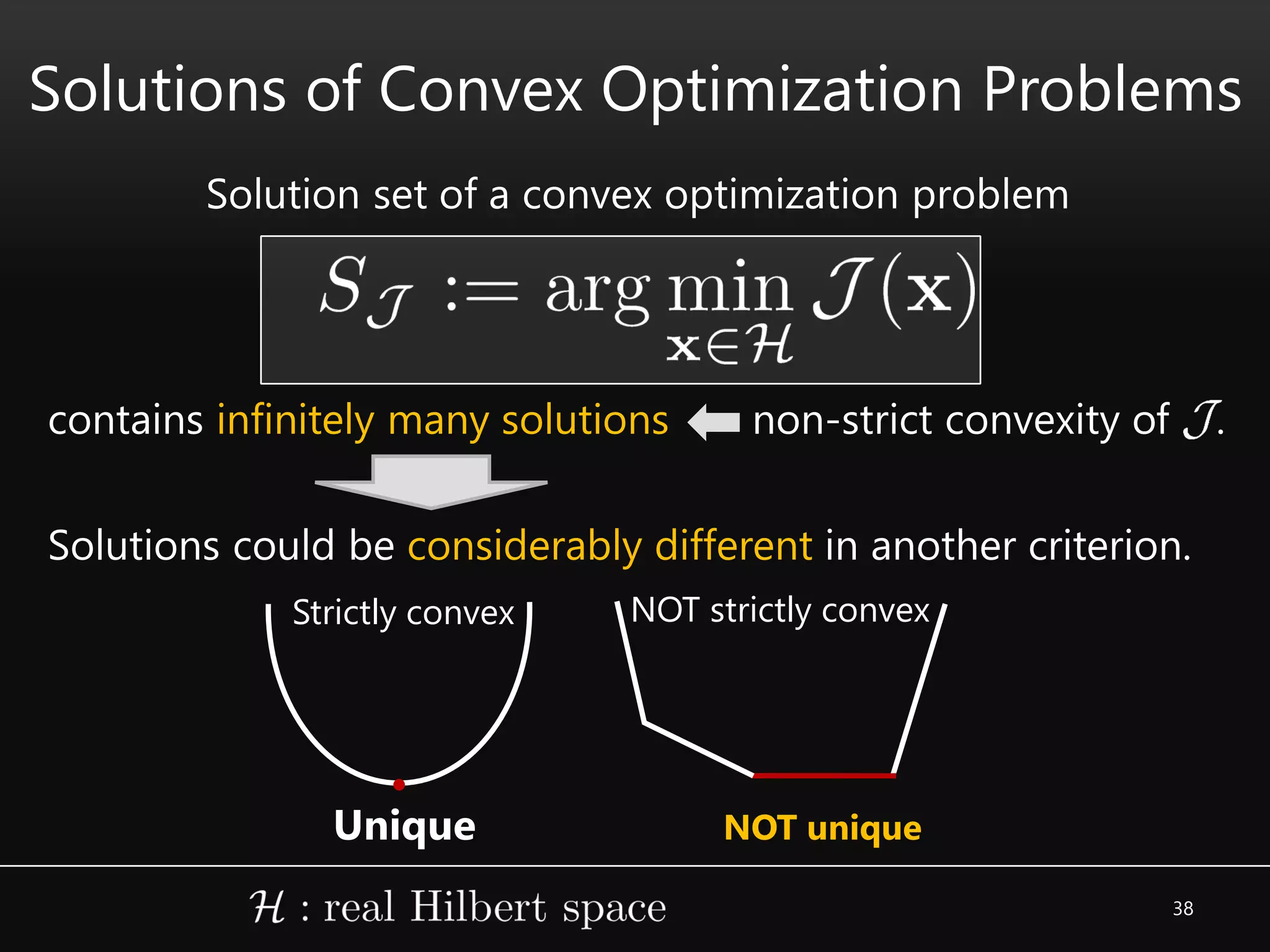

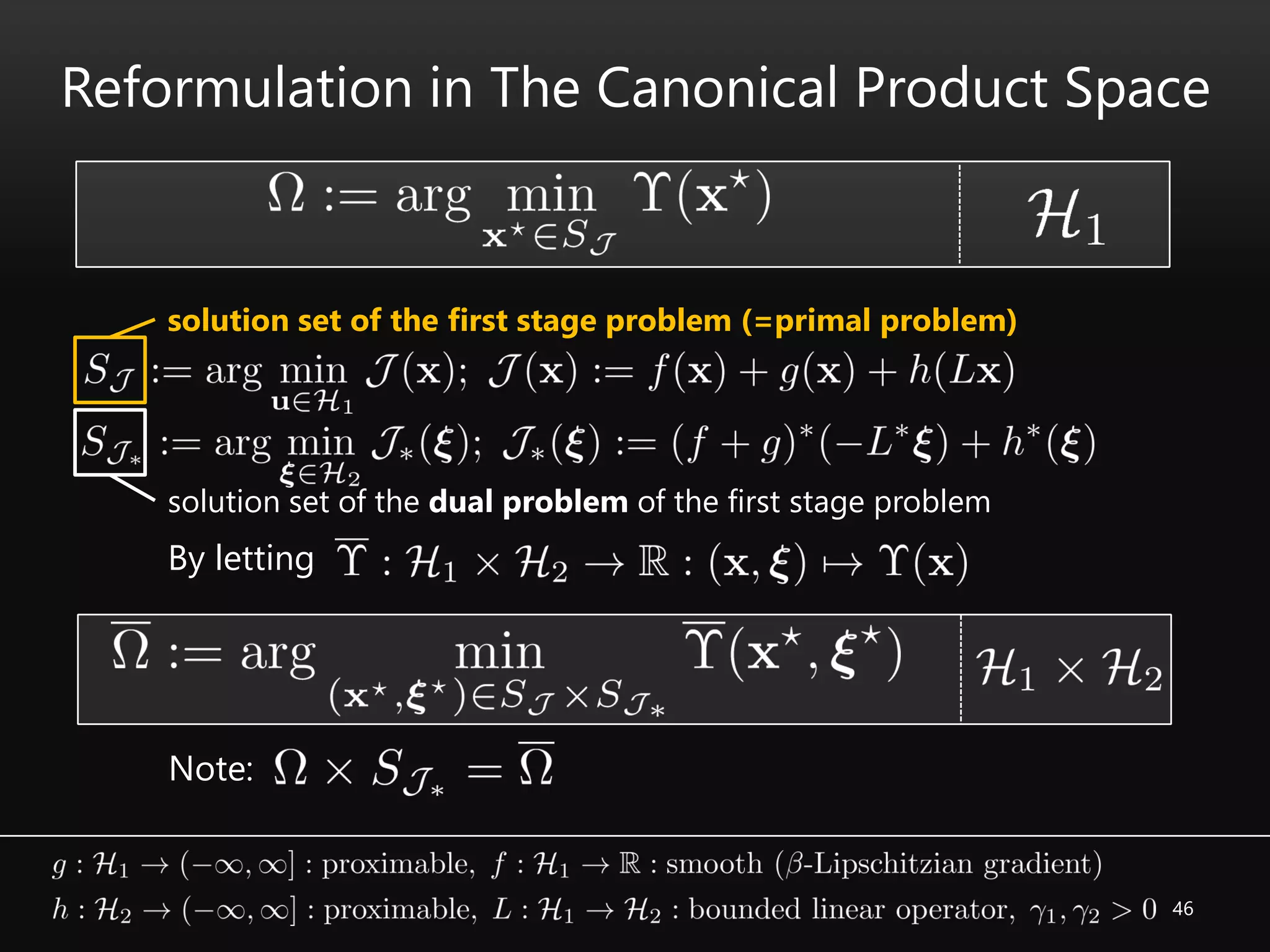

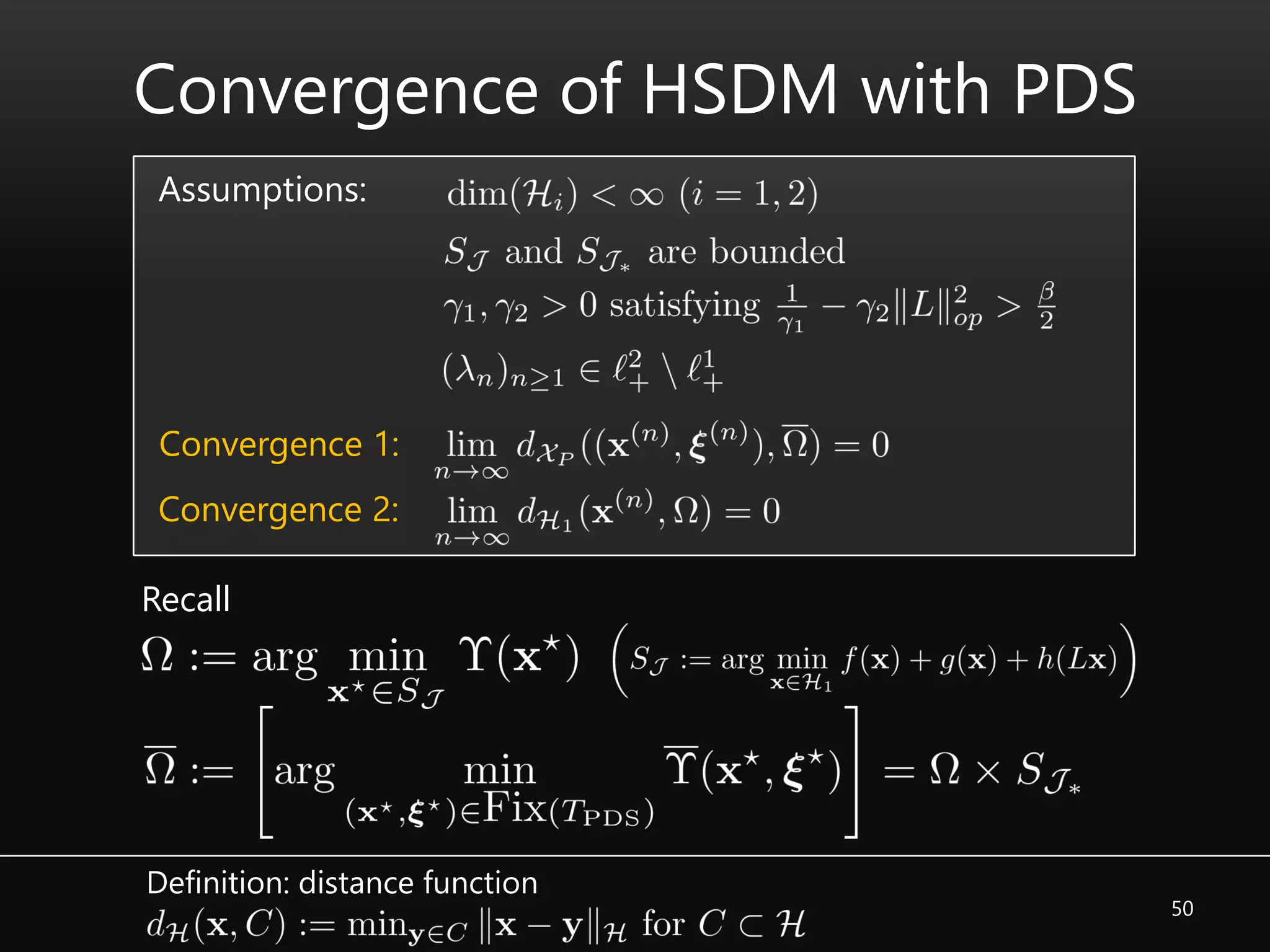

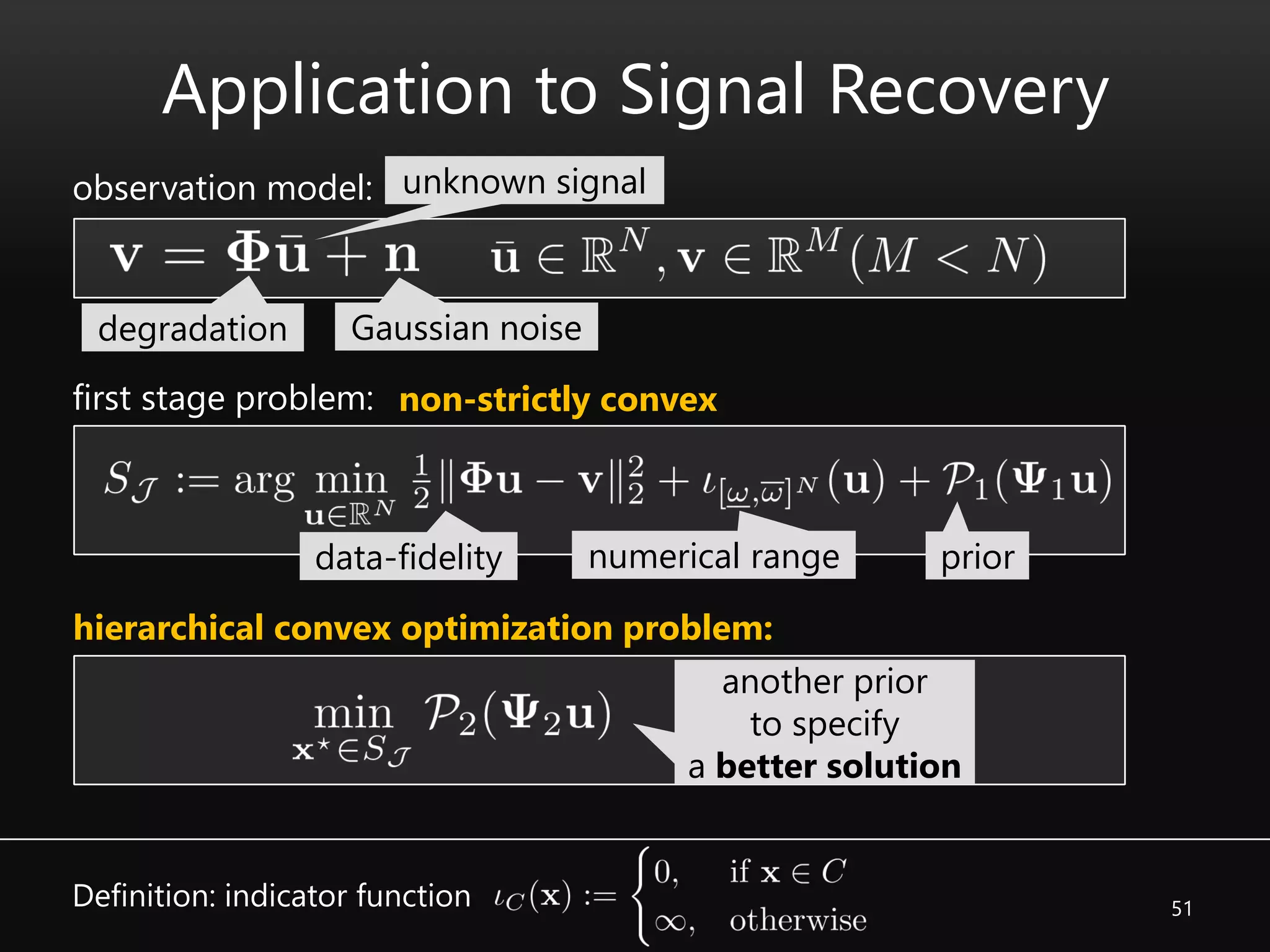

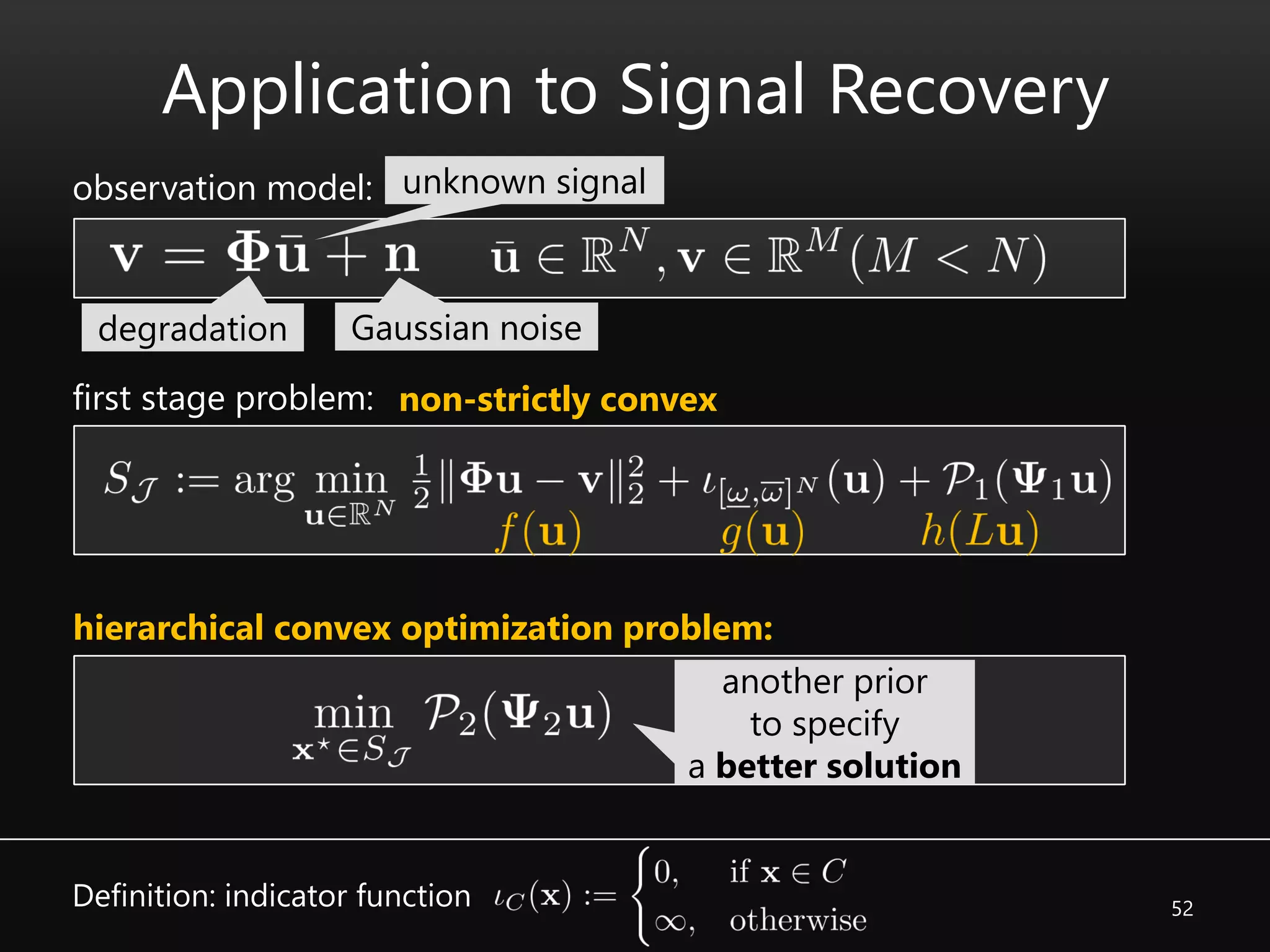

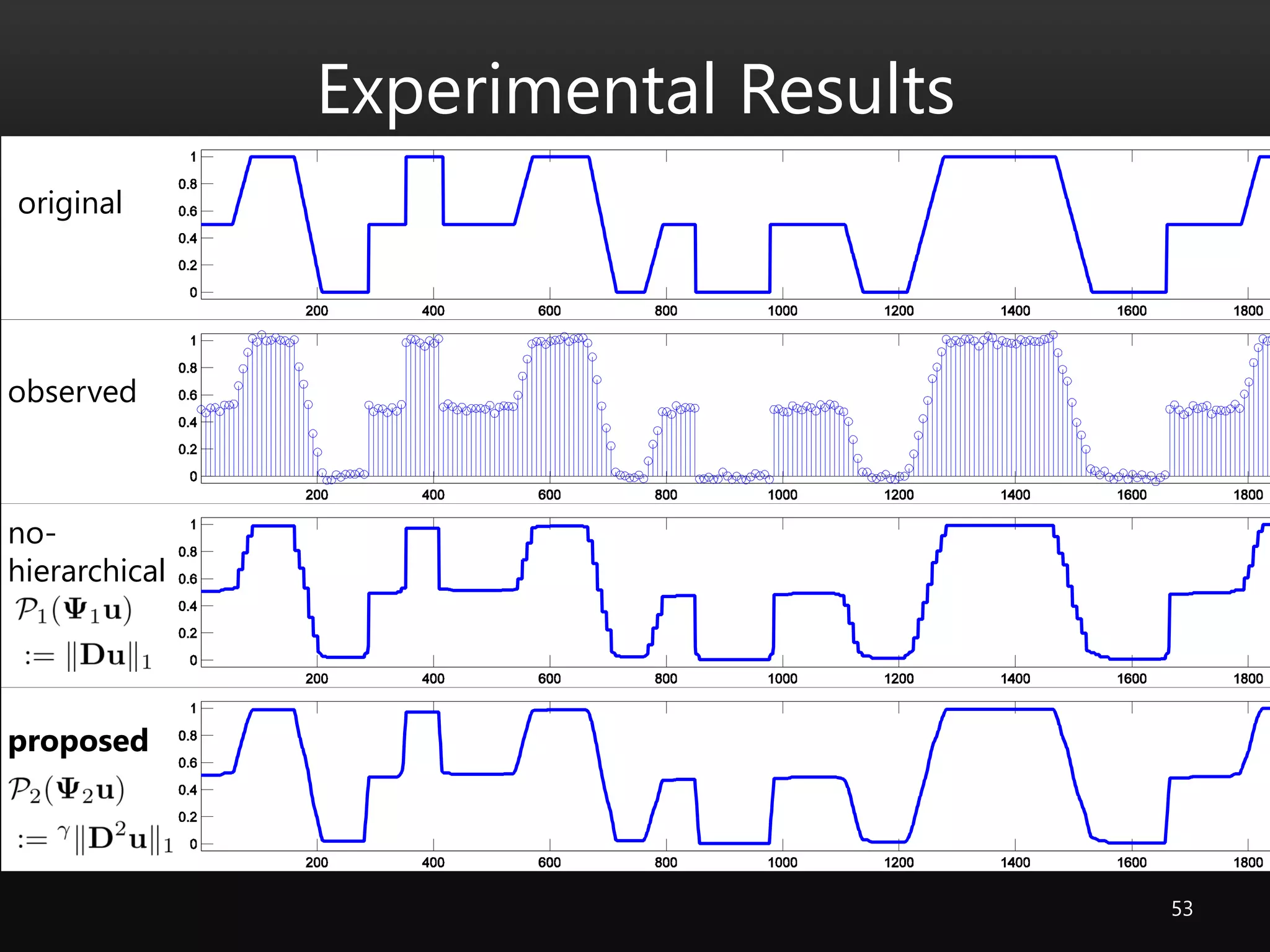

This document summarizes a dissertation on developing new priors and algorithms for signal recovery problems solved via convex optimization. Chapter 4 proposes a blockwise low-rank prior called the Block Nuclear Norm (BNN) to better model texture patterns in images. BNN represents textures as locally low-rank blocks under different shears. Chapter 5 introduces the Local Color Nuclear Norm (LCNN) prior to promote the color-line property and reduce color artifacts in restored images. Chapter 6 develops a hierarchical convex optimization algorithm using primal-dual splitting to solve problems with non-unique solutions and non-strictly convex objectives.

![4

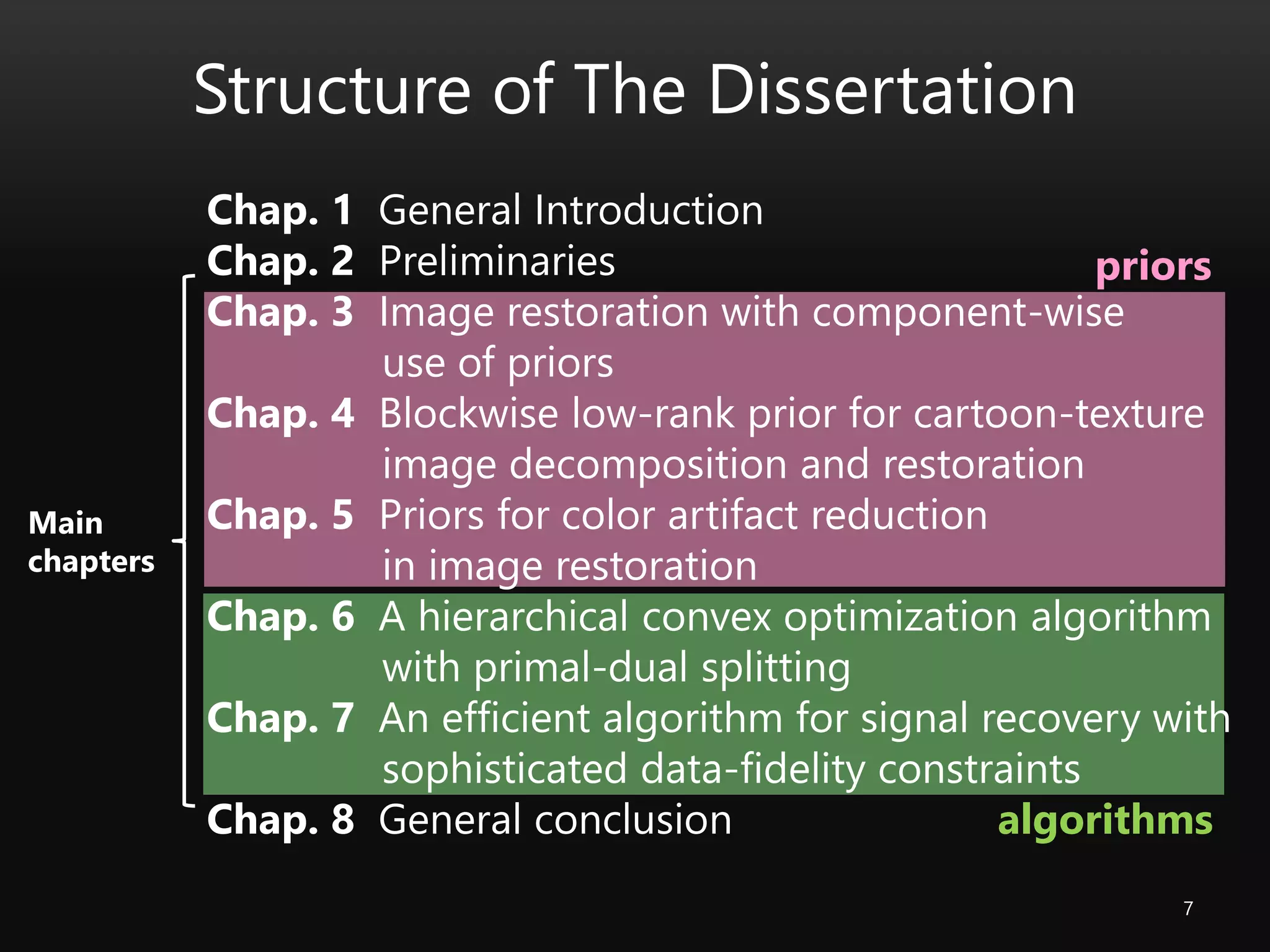

Prior and Convex Optimization

Some a priori information on the signal of interest, e.g.,

• sparsity

• smoothness

• low-rankness

should be taken into consideration.

desired signal

convex function

convex set

1. D. P. Palomar and Y. C. Eldar, Eds., Convex Optimization in Signal Processing and Communications, Cambridge University Press, 2009.

2. J.-L. Starck et al., Sparse Image and Signal Processing: Wavelets, Curvelets, Morphological Diversity, Cambridge University Press, 2010.

3. H. H. Bauschke et al., Eds., Fixed-Point Algorithms for Inverse Problems in Science and Engineering, Springer-Verlag, 2011.

a priori information → convex function =: prior

• l1-norm [Donoho+ ‘03; Candes+ ‘06]

• total variation (TV) [Rudin+ ‘92; Chambolle ‘04]

• nuclear norm [Fazel ‘02; Recht et al. ‘11]

advantages: 1. local optimum = global optimum

2. flexible framework

A powerful approach: convex optimization [see, e.g., 1-3]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-4-2048.jpg)
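The formula on this slide did not survive extraction. As a minimal sketch of the generic problem the labels describe, assume $x$ denotes the candidate signal, $f$ a convex function encoding the prior, and $C$ a closed convex set encoding, e.g., data fidelity. These symbols are illustrative, not taken from the slide. The three priors listed above are written out in their standard forms ($D$ is a discrete gradient operator, $\sigma_i$ are singular values):

```latex
\[
  \text{find } x^\star \in \operatorname*{arg\,min}_{x \in C} f(x),
\]
\[
  \|x\|_1 = \sum_i |x_i|, \qquad
  \|x\|_{\mathrm{TV}} = \sum_i \bigl\| (Dx)_i \bigr\|_2, \qquad
  \|X\|_* = \sum_i \sigma_i(X).
\]
```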

![5

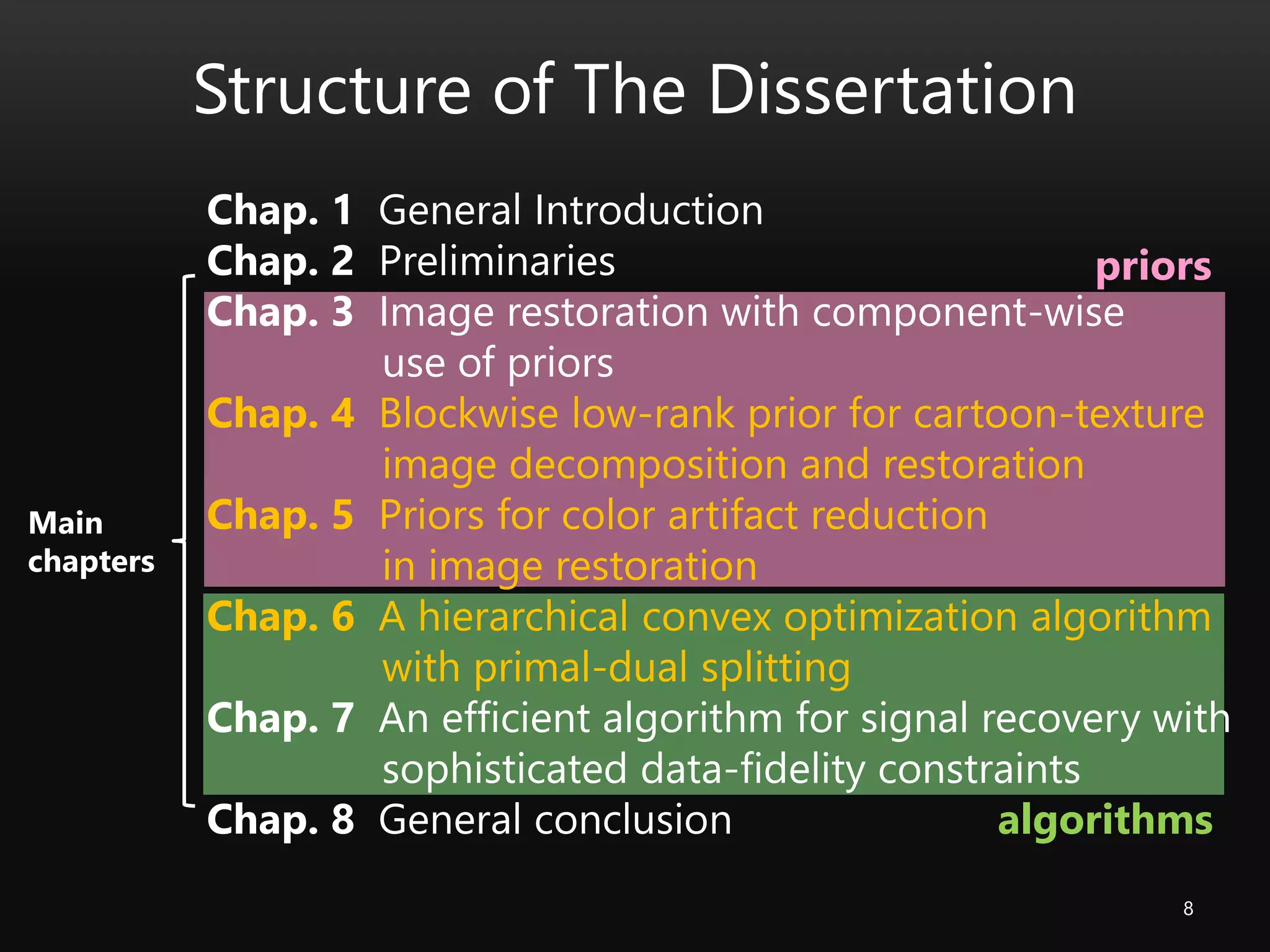

Optimization Algorithms for Signal Recovery

Optimization algorithms for signal recovery must deal with

• useful priors = nonsmooth convex function

• problem scale = often more than 10^4

proximal splitting methods [e.g., Gabay+ ‘76; Lions+ ‘79; Combettes+ ‘05; Condat ‘13]

• first-order (without Hessian)

• nonsmooth functions

• multiple constraints

Why do we need more?

Useful priors

Efficient

algorithms](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-5-2048.jpg)
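The building block of proximal splitting methods is the proximity operator, which handles nonsmooth terms without gradients or Hessians. A minimal sketch for the l1-norm, whose proximity operator is the well-known soft-thresholding rule; the function names and the test vector are illustrative, not from the slides:

```python
import numpy as np

def prox_l1(x, gamma):
    """Proximity operator of gamma * ||.||_1, i.e. soft-thresholding."""
    x = np.asarray(x, dtype=float)
    return np.sign(x) * np.maximum(np.abs(x) - gamma, 0.0)

def prox_objective(y, x, gamma):
    """Objective that prox_l1(x, gamma) minimizes over y."""
    return gamma * np.sum(np.abs(y)) + 0.5 * np.sum((x - y) ** 2)

x = np.array([3.0, -0.2, 0.5, -1.5])
p = prox_l1(x, gamma=0.5)  # entries with magnitude <= 0.5 are set to zero
```

Each entry is handled independently, so the cost is linear in the signal length, which matters at the problem scales mentioned above.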

![Cartoon-Texture Decomposition Model

11

image cartoon texture

Assumption: image = sum of two components

optimization problem [Meyer ‘01; Vese+ ‘03; Aujol+ ’05; ~ Schaeffer+ ‘13 ]

advantages: 1. prior suited to each component

2. extraction of texture

priors for each component data-fidelity to](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-11-2048.jpg)
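The optimization problem on this slide is not preserved in the transcript. One common way to write a decomposition model of this kind is sketched below. The symbols are assumptions made for illustration: $f$ is the observed image, $u$ the cartoon, $v$ the texture, $\Phi_{\mathrm{c}}$ and $\Phi_{\mathrm{t}}$ the component-wise priors, $F_f$ a data-fidelity term, and $\lambda, \mu > 0$ balancing weights.

```latex
\[
  \min_{u,\,v} \; \Phi_{\mathrm{c}}(u) + \lambda\,\Phi_{\mathrm{t}}(v) + \mu\,F_f(u + v)
\]
```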

![Cartoon-Texture Decomposition Model

12

image

optimization problem [Meyer ‘01; Vese+ ‘03; Aujol+ ‘05; ~ Schaeffer+ ‘13 ]

total variation (TV) [Rudin+ ‘92]: suitable for cartoon

cartoon texture](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-12-2048.jpg)

![Cartoon-Texture Decomposition Model

13

image

optimization problem [Meyer ‘01; Vese+ ‘03; Aujol+ ‘05; ~ Schaeffer+ ‘13 ]

Texture is rather difficult to model…

cartoon texture](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-13-2048.jpg)

![Existing Texture Priors

14

G norm frame

2001 ~ 2005 ~ 2013

noise

fine pattern

local adaptivity

capability

prior

->

[Schaeffer+ ‘13][Meyer ‘01]

[Aujol+ ‘05]

[Ng+ ‘13]

[Daubechies+ ‘05]

[Elad+ ’05]

[Fadili+ ‘10]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-14-2048.jpg)

![Contribution

15

Propose a prior for a better interpretation of texture.

2001 ~ 2005 ~ 2013

Noise

Fine pattern

Local adaptivity

Proposed

prior

G norm frame

[Schaeffer+ ‘13][Meyer ‘01]

[Aujol+ ‘05]

[Ng+ ‘13]

[Daubechies+ ‘05]

[Elad+ ’05]

[Fadili+ ‘10]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-15-2048.jpg)

![SVD, Rank and Nuclear Norm

16

• singular value decomposition (SVD)

nonzero singular values

• number of nonzero singular values

• nuclear norm

tightest convex relaxation of rank [Fazel ‘02]

* applications to robust PCA (sparse + low-rank)

[Gandy & Yamada ‘10; Candes+ ‘11; Gandy, Recht, Yamada ‘11]

rank

nuclear norm](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-16-2048.jpg)
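To make the relation between the two quantities concrete, here is a minimal sketch that computes both from the singular values. The helper name, the tolerance, and the rank-one test matrix are illustrative choices, not from the slides:

```python
import numpy as np

def rank_and_nuclear_norm(X, tol=1e-10):
    """Return (number of singular values above tol, sum of singular values)."""
    s = np.linalg.svd(X, compute_uv=False)
    return int(np.sum(s > tol)), float(np.sum(s))

# A rank-one matrix: outer product of two vectors.
a = np.array([1.0, 2.0, 3.0])
b = np.array([1.0, -1.0])
X = np.outer(a, b)

r, nn = rank_and_nuclear_norm(X)
```

For an outer product the only nonzero singular value is the product of the two vector norms, so here the nuclear norm equals `np.linalg.norm(a) * np.linalg.norm(b)`. Rank counts the nonzero singular values, whereas the nuclear norm sums them, which is what makes it convex.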

![Any block is approximately low-rank after suitable shear.

Proposed Prior: Block Nuclear Norm (1/2)

19

Definition: pre-Block-nuclear-norm (pre-BNN)

nuclear norm, positive weight

Important property of pre-BNN

Pre-BNN is tightest convex relaxation of

weighted blockwise rank

* Generalization of [Fazel ‘02]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-19-2048.jpg)
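The defining formula is missing from the transcript. Going only by the labels above (a positive weight per block times the nuclear norm of that block), a minimal sketch might look as follows. It assumes non-overlapping blocks and applies no shear, both of which are simplifications made here; the function name and test images are hypothetical:

```python
import numpy as np

def pre_bnn(X, block, weights=None):
    """Weighted sum of nuclear norms over non-overlapping blocks of X.

    Simplified sketch: no overlap, no shear (assumptions, not the thesis definition).
    """
    H, W = X.shape
    bh, bw = block
    if H % bh or W % bw:
        raise ValueError("image size must be a multiple of the block size")
    blocks = [X[i:i + bh, j:j + bw]
              for i in range(0, H, bh) for j in range(0, W, bw)]
    if weights is None:
        weights = np.ones(len(blocks))
    if len(weights) != len(blocks):
        raise ValueError("one weight per block is required")
    return float(sum(w * np.linalg.svd(B, compute_uv=False).sum()
                     for w, B in zip(weights, blocks)))

# Vertical stripes: every block has identical rows, hence rank one.
stripes = np.tile(np.sin(np.arange(8.0)), (8, 1))
# Gaussian noise: blocks are full rank with probability one.
noise = np.random.default_rng(0).standard_normal((8, 8))

stripes_value = pre_bnn(stripes, (4, 4))
noise_value = pre_bnn(noise, (4, 4))
```

For rank-one blocks the nuclear norm coincides with the Frobenius norm, while for full-rank blocks it is strictly larger, so a regular pattern is penalized less than noise of the same energy.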

![22

Experimental Results

CASE 1: pure decomposition

compared with a state-of-the-art decomposition [Schaeffer & Osher, 2013]

image

cartoon

texture

cartoon

texture

[Schaeffer & Osher 2013] “A low patch-rank interpretation of texture,” SIAM J. Imag. Sci.

[Schaeffer & Osher 2013] proposed](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-22-2048.jpg)

![23

Experimental Results

CASE 2: blur + 20% missing pixels

compared also with [Schaeffer & Osher 2013]

PSNR: 23.20

SSIM: 0.6613

PSNR: 23.75

SSIM: 0.6978

observation [Schaeffer & Osher 2013] proposed](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-23-2048.jpg)
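For readers unfamiliar with the metric, PSNR is commonly computed as below. The slide does not say which peak value or color handling was used, so the 8-bit peak of 255 and the averaging over all entries are assumptions:

```python
import numpy as np

def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    reference = np.asarray(reference, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0.0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / mse))

# A uniform error of 10% of the peak gives exactly 20 dB.
value = psnr(np.zeros((4, 4)), np.full((4, 4), 25.5))
```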

![Color-Line Property

28

color-line

restored by an existing prior

original

corrupted

# color-line: RGB entries are linearly distributed in local regions.

[Omer & Werman ‘04]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-28-2048.jpg)
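The color-line property can be checked numerically: if the RGB vectors of a local patch lie on a line, the matrix that stacks them has at most two nonzero singular values, and independent per-channel corruption destroys that structure. A minimal sketch with a synthetic patch; the patch size, line parameters, and noise level are illustrative assumptions:

```python
import numpy as np

def local_color_matrix(patch):
    """Stack the RGB vectors of an (h, w, 3) patch as columns of a 3 x (h*w) matrix."""
    return patch.reshape(-1, 3).T

def singular_values(patch):
    return np.linalg.svd(local_color_matrix(patch), compute_uv=False)

rng = np.random.default_rng(0)
t = rng.uniform(0.0, 1.0, size=(5, 5, 1))   # position of each pixel along the line
base = np.array([0.2, 0.3, 0.1])            # a point on the color line
direction = np.array([0.5, 0.4, 0.6])       # direction of the color line
clean = base + t * direction                # all colors lie on one line in RGB space
noisy = clean + 0.05 * rng.standard_normal(clean.shape)

s_clean = singular_values(clean)
s_noisy = singular_values(noisy)
```

The smallest singular value of the clean patch is numerically zero, while that of the noisy patch is not, which is the kind of structure a local nuclear-norm prior can promote.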

![Application to Denoising

34

color-line

Proximal splitting methods are applicable after reformulation.

smoothness [VTV]

dynamic range

data-fidelity robust to impulsive noise

: color image contaminated by impulsive noise

optimization problem

[VTV] Bresson et al. “Fast dual minimization of the vectorial total variation norm and applications to color image processing”, Inverse Probl. Img., 2008.](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-34-2048.jpg)

![39

Hierarchical Convex Optimization

ideal strategy: hierarchical convex optimization:

highly involved (≠ the intersection of projectable convex sets)

proximal splitting methods cannot solve the problem.

selector: smooth convex function

via fixed point set characterization

[e.g., Yamada ‘01; Ogura & Yamada ‘03; Yamada, Yukawa, Yamagishi ‘11]

Definition: nonexpansive mapping

computable nonexpansive mapping on a certain Hilbert space](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-39-2048.jpg)
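The formulas on this slide are lost in the transcript. A sketch of the two notions it names, in standard notation: $\Psi$ is the smooth convex selector, $\Phi$ the first-stage convex objective whose solution set $S$ may contain many points, and $T$ a mapping on a Hilbert space $\mathcal{H}$. The symbols are assumptions for illustration.

```latex
\[
  \text{minimize } \Psi(x) \quad \text{subject to} \quad
  x \in S := \operatorname*{arg\,min}_{x \in \mathcal{H}} \Phi(x),
\]
\[
  T \text{ is nonexpansive} \iff \|T(x) - T(y)\| \le \|x - y\| \quad \forall x, y \in \mathcal{H},
  \qquad \operatorname{Fix}(T) := \{\, x \in \mathcal{H} : T(x) = x \,\}.
\]
```

The fixed point set characterization then expresses $S$ through $\operatorname{Fix}(T)$ for a computable $T$, so the constraint set never has to be projected onto directly.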

![40

Hierarchical Convex Optimization

fixed point set characterized problem

Hybrid Steepest Descent Method (HSDM) [e.g., Yamada ‘01; Ogura & Yamada ‘03]

nonexpansive mapping gradient of selector

Q. What kinds of nonexpansive mappings are available?](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-40-2048.jpg)
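The HSDM update combines the two ingredients labeled on the slide: apply the nonexpansive mapping, then take a vanishing step along the gradient of the selector. A minimal sketch on a toy problem, assuming the standard form x_{n+1} = T(x_n) - λ_{n+1} ∇Ψ(T(x_n)) with λ_n = 1/(n+1). The toy mapping (projection onto a line) and the selector (squared distance to a point `c`) are illustrative choices, not from the thesis:

```python
import numpy as np

def hsdm(T, grad_psi, x0, n_iter=5000):
    """Hybrid steepest descent: minimize psi over Fix(T)."""
    x = np.asarray(x0, dtype=float)
    for n in range(1, n_iter + 1):
        step = 1.0 / (n + 1)          # step sizes vanish but are not summable
        tx = T(x)
        x = tx - step * grad_psi(tx)
    return x

# Toy setup: Fix(T) is the line {x : a.x = 1}; psi(x) = 0.5 * ||x - c||^2.
a = np.array([1.0, 1.0])
c = np.array([2.0, 0.0])

def project_line(x):
    """Projection onto the line a.x = 1 (a nonexpansive mapping)."""
    return x - (a @ x - 1.0) / (a @ a) * a

def grad_psi(x):
    return x - c

x_hat = hsdm(project_line, grad_psi, np.zeros(2))
```

In this toy case the answer is known in closed form: the point of the line closest to `c`, namely (1.5, -0.5), which the iterate approaches at a rate of roughly 1/n.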

![41

• Forward-Backward Splitting (FBS) method [Passty ’79; Combettes+ ‘05]

• Douglas-Rachford Splitting (DRS) method [Lions+ ‘79; Combettes+ ‘07]

Two characterizations underlying proximal splitting methods are given in [Yamada, Yukawa, Yamagishi ‘11].

Q. Can we deal with a more flexible formulation?

Nonexpansive Mappings for

Definition: proximity operator [Moreau ‘62]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-41-2048.jpg)
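As an illustration of how a splitting method yields a nonexpansive mapping, here is a minimal sketch of the forward-backward operator for a small l1-regularized least-squares problem. The problem data, the names, and the step size γ = 1/‖A‖² are assumptions chosen for the example; the fixed points of `T` are exactly the minimizers of 0.5‖Ax − b‖² + μ‖x‖₁.

```python
import numpy as np

def soft_threshold(x, t):
    """Proximity operator of t * ||.||_1."""
    return np.sign(x) * np.maximum(np.abs(x) - t, 0.0)

def make_fbs_operator(A, b, mu, gamma):
    """Return T(x) = prox_{gamma*mu*||.||_1}(x - gamma * A^T (A x - b))."""
    def T(x):
        grad = A.T @ (A @ x - b)      # forward (gradient) step on the smooth term
        return soft_threshold(x - gamma * grad, gamma * mu)  # backward (prox) step
    return T

A = np.array([[2.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
b = np.array([1.0, 2.0, 3.0])
mu = 0.1
lipschitz = np.linalg.norm(A, 2) ** 2   # Lipschitz constant of the gradient
T = make_fbs_operator(A, b, mu, gamma=1.0 / lipschitz)

x = np.zeros(2)
for _ in range(500):
    x = T(x)
fixed_point_residual = np.linalg.norm(T(x) - x)
```

Iterating `T` drives the residual ‖T(x) − x‖ to zero, which is the fixed point view that HSDM builds on.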

![42

• Forward-Backward Splitting (FBS) method [Passty ’79; Combettes+ ‘05]

• Douglas-Rachford Splitting (DRS) method [Lions+ ’79; Combettes+ ‘07]

• Primal-Dual Splitting (PDS) method [Condat ‘13; Vu ‘13]

Nonexpansive Mappings for

Two characterizations underlying proximal splitting methods are given in [Yamada, Yukawa, Yamagishi ‘11].](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-42-2048.jpg)
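To show what a primal-dual splitting iteration looks like in practice, here is a minimal sketch in the style of Condat's algorithm, applied to 1D total variation denoising: minimize 0.5‖x − b‖² + λ‖Dx‖₁ with D the forward difference. The example problem and the step sizes are assumptions made here (they satisfy 1/τ − σ‖D‖² > 1/2, using ‖D‖² ≤ 4); this is not the thesis's formulation.

```python
import numpy as np

def tv_objective(x, b, lam):
    return 0.5 * np.sum((x - b) ** 2) + lam * np.sum(np.abs(np.diff(x)))

def tv_denoise_pds(b, lam, tau=0.5, sigma=0.25, n_iter=2000):
    """Primal-dual splitting for 1D TV denoising (signal length >= 2)."""
    b = np.asarray(b, dtype=float)
    x = b.copy()
    y = np.zeros(b.size - 1)          # dual variable, one entry per difference
    for _ in range(n_iter):
        # Adjoint of the forward difference applied to y.
        Dt_y = np.concatenate(([-y[0]], y[:-1] - y[1:], [y[-1]]))
        # Primal step: gradient of the smooth term plus the dual coupling.
        x_new = x - tau * ((x - b) + Dt_y)
        # Dual step: the prox of the conjugate of lam*||.||_1 is a clip to [-lam, lam].
        y = np.clip(y + sigma * np.diff(2.0 * x_new - x), -lam, lam)
        x = x_new
    return x

x_two = tv_denoise_pds([0.0, 1.0], lam=0.2)
```

For the two-sample signal the minimizer is known in closed form: the jump shrinks by 2λ, giving (0.2, 0.8). Only matrix-vector products with D and its adjoint are needed, with no inner linear solve.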

![43

• Primal-Dual Splitting (PDS) method [Condat ‘13; Vu ‘13]

Contribution

• reveal convergence properties

• modify gradient computation

• extract operator-theoretic idea from [Condat ‘13]

• reformulate in a certain product space

incorporate

hierarchical convex optimization by HSDM](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-43-2048.jpg)

![45

Outline

Reformulate in the canonical product space with dual problem

Extract & incorporate fixed point set characterization from [Condat ‘13]

Install another inner product for nonexpansivity of by [Condat ‘13]

Apply HSDM with modified gradient computation w.r.t.](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-45-2048.jpg)

![47

Incorporation of PDS Characterization

Extract the PDS fixed point characterization from [Condat ‘13]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-47-2048.jpg)

![48

Activation of Nonexpansivity

is nonexpansive NOT on the canonical product space

Definition: canonical inner product of

BUT on the following space with another inner product [Condat ‘13]

where

: strongly positive bounded linear operator](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-48-2048.jpg)
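The operator itself is missing from the transcript. As a hedged illustration, assume it has the block form P = [[I/τ, −Lᵀ], [−L, I/σ]] that appears in primal-dual analyses of this kind, with τ, σ > 0 the step sizes and L the linear operator; this form and these symbols are assumptions, not read off the slide. Such a P defines an inner product ⟨z, z'⟩_P = zᵀ P z' exactly when it is positive definite, which by a Schur complement argument holds when τσ‖L‖² < 1. The sketch below checks this numerically:

```python
import numpy as np

def metric_operator(L, tau, sigma):
    """Assumed block form P = [[I/tau, -L^T], [-L, I/sigma]] (illustrative)."""
    m, n = L.shape
    return np.block([[np.eye(n) / tau, -L.T],
                     [-L, np.eye(m) / sigma]])

def inner_product_P(P, z1, z2):
    """Inner product induced by a symmetric positive definite P."""
    return float(z1 @ P @ z2)

L = np.diff(np.eye(6), axis=0)            # 5 x 6 forward-difference matrix
norm_sq = np.linalg.norm(L, 2) ** 2       # squared operator norm, below 4

# Step sizes with tau * sigma * ||L||^2 < 1: P is positive definite.
min_eig_ok = np.linalg.eigvalsh(metric_operator(L, 0.5, 0.25)).min()
# Step sizes violating the condition: P has a negative eigenvalue.
min_eig_bad = np.linalg.eigvalsh(metric_operator(L, 1.0, 1.0)).min()
```

When the step sizes violate the condition, the form zᵀ P z can be negative and no longer defines a norm, so nonexpansivity cannot even be stated in that space.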

![49

Solver via HSDM

NOTE:

We can apply HSDM [e.g., Yamada ‘01; Ogura & Yamada ‘03]](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-49-2048.jpg)

![Related Publications

55

# Journal Papers

[J1] S. Ono, T. Miyata, I. Yamada, and K. Yamaoka, "Image Recovery by Decomposition with Component-Wise Regularization," IEICE Trans. Fundamentals, vol. E95-A, no. 12, pp. 2470-2478, 2012. (Best Paper Award from IEICE)

[J2] S. Ono, T. Miyata, and I. Yamada, "Cartoon-Texture Image Decomposition Using Blockwise Low-Rank Texture Characterization," IEEE Trans. Image Process., vol. 23, no. 3, pp. 1028-1042, 2014.

[J3] S. Ono and I. Yamada, "Hierarchical Convex Optimization with Primal-Dual Splitting," submitted to IEEE Trans. Signal Process. (accepted conditionally in May 2014).

[J4] S. Ono and I. Yamada, "Signal Recovery Using Complicated Data-Fidelity Constraints," in preparation.](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-55-2048.jpg)

![Related Publications

56

# Articles in Proceedings of International Conferences (reviewed)

[C1] S. Ono, T. Miyata, and K. Yamaoka, "Total Variation-Wavelet-Curvelet Regularized Optimization for Image Restoration," IEEE ICIP 2011.

[C2] S. Ono, T. Miyata, I. Yamada, and K. Yamaoka, "Missing Region Recovery by Promoting Blockwise Low-Rankness," IEEE ICASSP 2012.

[C3] S. Ono and I. Yamada, "A Hierarchical Convex Optimization Approach for High Fidelity Solution Selection in Image Recovery," APSIPA ASC 2012 (invited).

[C4] S. Ono and I. Yamada, "Poisson Image Restoration with Likelihood Constraint via Hybrid Steepest Descent Method," IEEE ICASSP 2013.

[C5] S. Ono, M. Yamagishi, and I. Yamada, "A Sparse System Identification by Using Adaptively-Weighted Total Variation via A Primal-Dual Splitting Approach," IEEE ICASSP 2013.

[C6] S. Ono and I. Yamada, "A Convex Regularizer for Reducing Color Artifact in Color Image Recovery," IEEE Conf. CVPR 2013.

[C7] I. Yamada and S. Ono, "Signal Recovery by Minimizing The Moreau Envelope over The Fixed Point Set of Nonexpansive Mappings," EUSIPCO 2013 (invited).

[C8] S. Ono and I. Yamada, "Second-Order Total Generalized Variation Constraint," IEEE ICASSP 2014.

[C9] S. Ono and I. Yamada, "Decorrelated Vectorial Total Variation," IEEE Conf. CVPR 2014 (to appear).](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-56-2048.jpg)

![Other Publications

57

# Journal Papers

[J5] S. Ono, T. Miyata, and Y. Sakai, "Improvement of Colorization Based Coding by Using Redundancy of The Color Assignment Information and Correct Color Component," IEICE Trans. Information and Systems, vol. J93-D, no. 9, pp. 1638-1641, 2010 (in Japanese).

[J6] H. Kuroda, S. Ono, M. Yamagishi, and I. Yamada, "Exploiting Group Sparsity in Nonlinear Acoustic Echo Cancellation by Adaptive Proximal Forward-Backward Splitting," IEICE Trans. Fundamentals, vol. E96-A, no. 10, pp. 1918-1927, 2013.

[J7] T. Baba, R. Matsuoka, S. Ono, K. Shirai, and M. Okuda, "Image Composition Using A Pair of Flash/No-Flash Images by Convex Optimization," IEICE Trans. Information and Systems, 2014 (in Japanese, to appear).](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-57-2048.jpg)

![Other Publications

58

# Articles in Proceedings of International Conferences (reviewed)

[C10] S. Ono, T. Miyata, and Y. Sakai, "Colorization-Based Coding by Focusing on Characteristics of Colorization Bases," PCS 2010.

[C11] M. Yamagishi, S. Ono, and I. Yamada, "Two Variants of Alternating Direction Method of Multipliers without Inner Iterations and Their Application to Image Super-Resolution," IEEE ICASSP 2012.

[C12] S. Ono and I. Yamada, "Optimized JPEG Image Decompression with Super-Resolution Interpolation Using Multi-Order Total Variation," IEEE ICIP 2013 (top 10% of all accepted papers).

[C13] K. Toyokawa, S. Ono, M. Yamagishi, and I. Yamada, "Detecting Edges of Reflections from a Single Image via Convex Optimization," IEEE ICASSP 2014.

[C14] T. Baba, R. Matsuoka, S. Ono, K. Shirai, and M. Okuda, "Flash/No-flash Image Integration Using Convex Optimization," IEEE ICASSP 2014.

* Many other articles in proceedings of domestic conferences](https://image.slidesharecdn.com/phdpresentation-160425001723/75/Ph-D-Thesis-Presentation-A-Study-of-Priors-and-Algorithms-for-Signal-Recovery-by-Convex-Optimization-Techniques-58-2048.jpg)