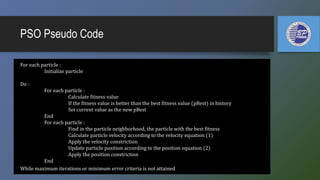

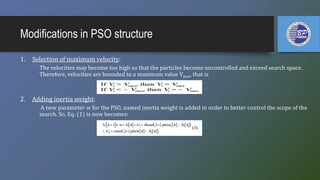

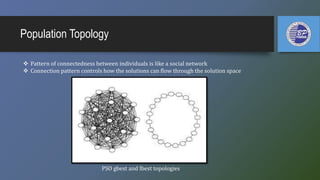

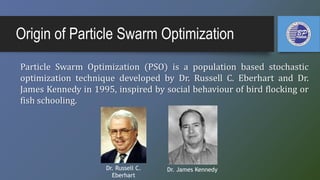

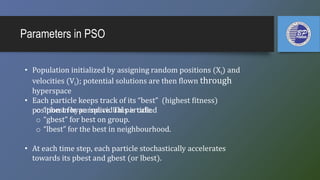

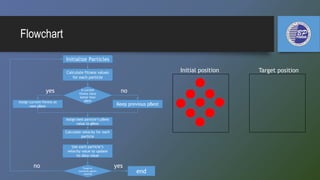

The document discusses swarm intelligence (SI) and introduces particle swarm optimization (PSO), an optimization technique inspired by the social behavior of bird flocks and fish schools. In PSO, a swarm of particles searches for an optimal solution: each particle updates its velocity and position based on its own best position so far (personal best) and the best position found by the whole swarm (global best). The document also covers modifications to the PSO structure, comparisons with other algorithms, and applications in neural network training and mobile robot path optimization.

![Mathematical Approach](https://image.slidesharecdn.com/particleswarmoptimization-150429083653-conversion-gate01/85/Particle-swarm-optimization-12-320.jpg)

Mathematical Approach

Terms of the equation:

$V_i = [v_{i1}, v_{i2}, \ldots, v_{in}]$ is the velocity of particle $i$.

$X_i = [x_{i1}, x_{i2}, \ldots, x_{in}]$ is the position of particle $i$.

Pbest is the best previous position of particle $i$ (i.e., its local-best position, or its own experience).

Gbest is the best position among all particles in the population $X = [X_1, X_2, \ldots, X_N]$ (i.e., the global-best position).

Rand(·) and rand(·) are two random variables in the range [0, 1].

$C_1$ and $C_2$ are positive numbers called acceleration coefficients that guide each particle toward the individual best and the swarm best positions, respectively.
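The terms defined above match the basic PSO update rule. The equation itself is not reproduced in the text, so the form below is an assumption: the standard update without an inertia weight, where $k$ is the iteration index.

$$V_i^{k+1} = V_i^k + C_1\,\mathrm{Rand}()\,(Pbest_i - X_i^k) + C_2\,\mathrm{rand}()\,(Gbest - X_i^k)$$

$$X_i^{k+1} = X_i^k + V_i^{k+1}$$

A minimal Python sketch of this update follows. The names `pso_step`, `minimize`, and `sphere`, the optional `v_max` velocity clamp, the default values C1 = C2 = 2.0, and drawing fresh random numbers per dimension are all illustrative assumptions, not taken from the slides.

```python
import random


def pso_step(X, V, pbest, gbest, c1=2.0, c2=2.0, v_max=None, rng=random):
    """Update every particle's velocity and position in place (one iteration)."""
    for i in range(len(X)):
        for d in range(len(X[i])):
            r1 = rng.random()  # Rand(.) in [0, 1)
            r2 = rng.random()  # rand(.) in [0, 1)
            v = (V[i][d]
                 + c1 * r1 * (pbest[i][d] - X[i][d])   # pull toward Pbest
                 + c2 * r2 * (gbest[d] - X[i][d]))     # pull toward Gbest
            if v_max is not None:
                # Assumption: clamp velocity to keep the swarm from diverging.
                v = max(-v_max, min(v_max, v))
            V[i][d] = v
            X[i][d] += v


def sphere(x):
    """Toy objective for the demo: sum of squares, minimum 0 at the origin."""
    return sum(v * v for v in x)


def minimize(f, n_particles=20, n_dims=2, n_iters=100, bound=5.0, seed=0):
    """Run the basic PSO loop and return (Gbest, f(Gbest))."""
    rng = random.Random(seed)
    X = [[rng.uniform(-bound, bound) for _ in range(n_dims)]
         for _ in range(n_particles)]
    V = [[0.0] * n_dims for _ in range(n_particles)]
    pbest = [x[:] for x in X]
    pbest_val = [f(x) for x in X]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]

    for _ in range(n_iters):
        pso_step(X, V, pbest, gbest, v_max=bound, rng=rng)
        for i in range(n_particles):
            val = f(X[i])
            if val < pbest_val[i]:          # particle improved on its own best
                pbest[i], pbest_val[i] = X[i][:], val
                if val < gbest_val:         # and on the swarm's best
                    gbest, gbest_val = X[i][:], val
    return gbest, gbest_val


if __name__ == "__main__":
    best, value = minimize(sphere)
    print("Gbest:", best, "f(Gbest):", value)
```

Because Gbest is only replaced when a strictly better position is found, the returned objective value never gets worse from one iteration to the next, even though individual particles may overshoot.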