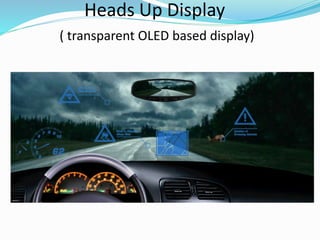

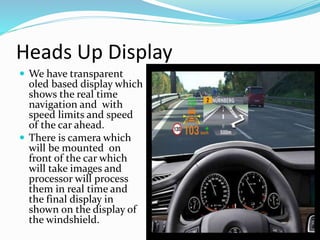

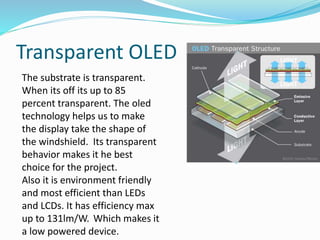

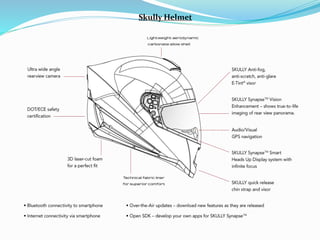

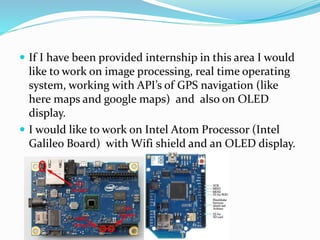

The document describes a proposed heads-up display (HUD) project that would show real-time navigation and traffic information on a car's windshield. The system would use a transparent OLED display mounted on the windshield, with a wireless connection to the internet. A camera mounted on the front of the car would capture real-time images, and a processor would analyze them so the display can show navigation directions, speed limits, and the speed of vehicles ahead, with the aim of helping to prevent accidents. According to the document, the OLED technology allows the display to take the shape of the windshield and is more efficient than LED or LCD displays.
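The camera-to-display flow described above can be illustrated with a minimal sketch. It is not taken from the document: the `FrameAnalysis` type, the `build_overlay` function, the field names, the km/h units, and the warning rules are all hypothetical, and the image analysis itself is assumed to have already produced the values.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FrameAnalysis:
    """Hypothetical result of analyzing one camera frame (not from the source)."""
    next_direction: str                      # e.g. "Turn left in 200 m"
    speed_limit_kmh: Optional[float]         # None if no limit is known
    lead_vehicle_speed_kmh: Optional[float]  # None if no vehicle is ahead


def build_overlay(analysis: FrameAnalysis, own_speed_kmh: float) -> List[str]:
    """Return the text lines a HUD might draw for one frame.

    The warning rules here are illustrative assumptions only.
    """
    lines = [analysis.next_direction]

    if analysis.speed_limit_kmh is not None:
        lines.append(f"Limit: {analysis.speed_limit_kmh:.0f} km/h")
        if own_speed_kmh > analysis.speed_limit_kmh:
            lines.append("WARNING: over speed limit")

    if analysis.lead_vehicle_speed_kmh is not None:
        lines.append(f"Vehicle ahead: {analysis.lead_vehicle_speed_kmh:.0f} km/h")
        if own_speed_kmh > analysis.lead_vehicle_speed_kmh:
            lines.append("WARNING: closing on vehicle ahead")

    return lines
```

Calling `build_overlay(FrameAnalysis("Turn left in 200 m", 50.0, 40.0), own_speed_kmh=60.0)` would yield the direction, both speed readouts, and both warnings. A real system would need to render these on the transparent display rather than return strings.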