This presentation summarizes the Hadoop Distributed File System (HDFS), a distributed file system designed to store large datasets across clusters of commodity servers. It introduces Hadoop as a framework for distributed processing of big data using a simple programming model, then explains the key components of the HDFS architecture: the NameNode and the DataNodes. It closes with examples of companies that use Hadoop and references for further reading.

Presentation on Hadoop HDFS by Sudipta Ghosh from Burdwan University.

Definition and significance of Big Data in modern computing.

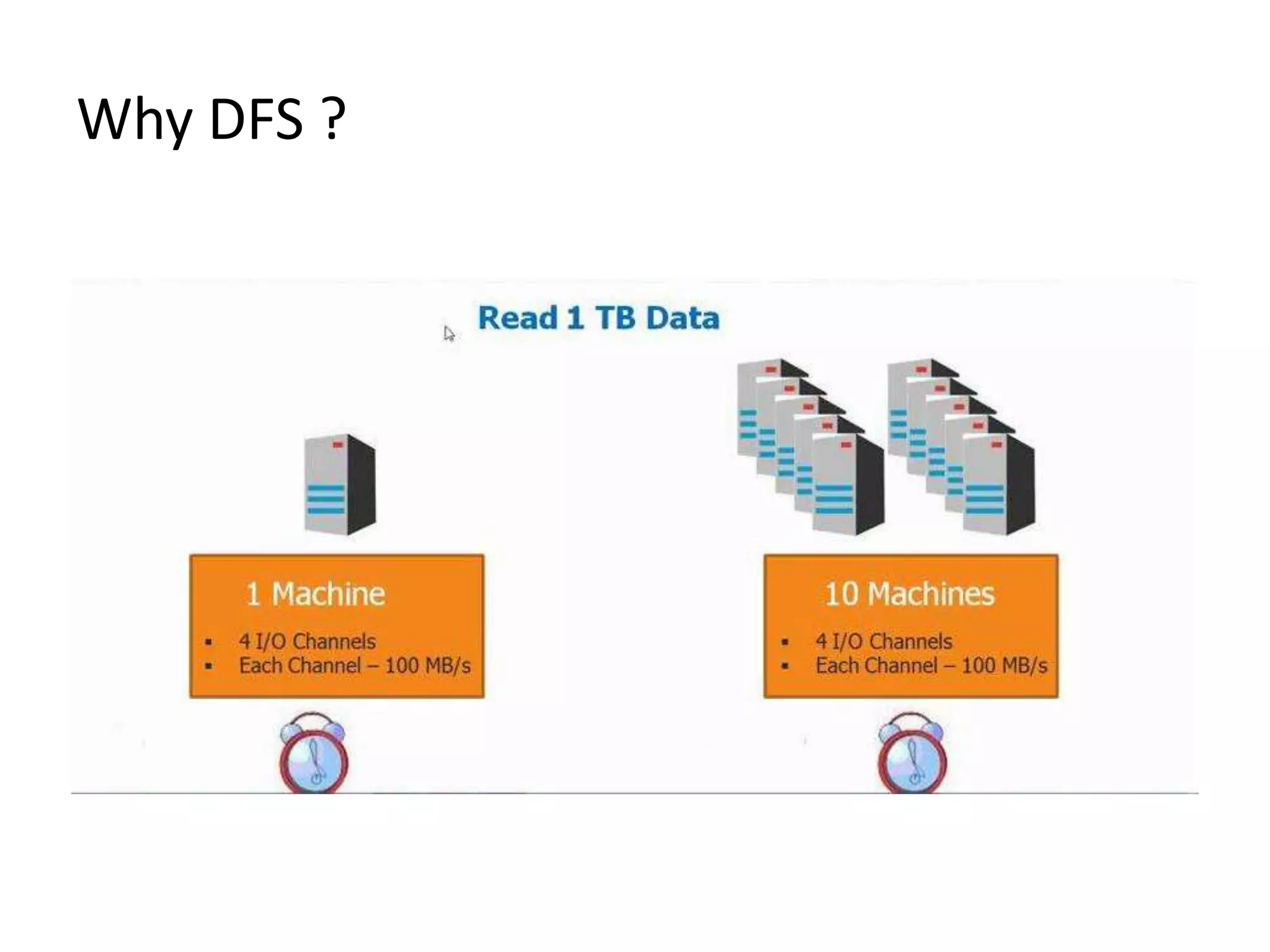

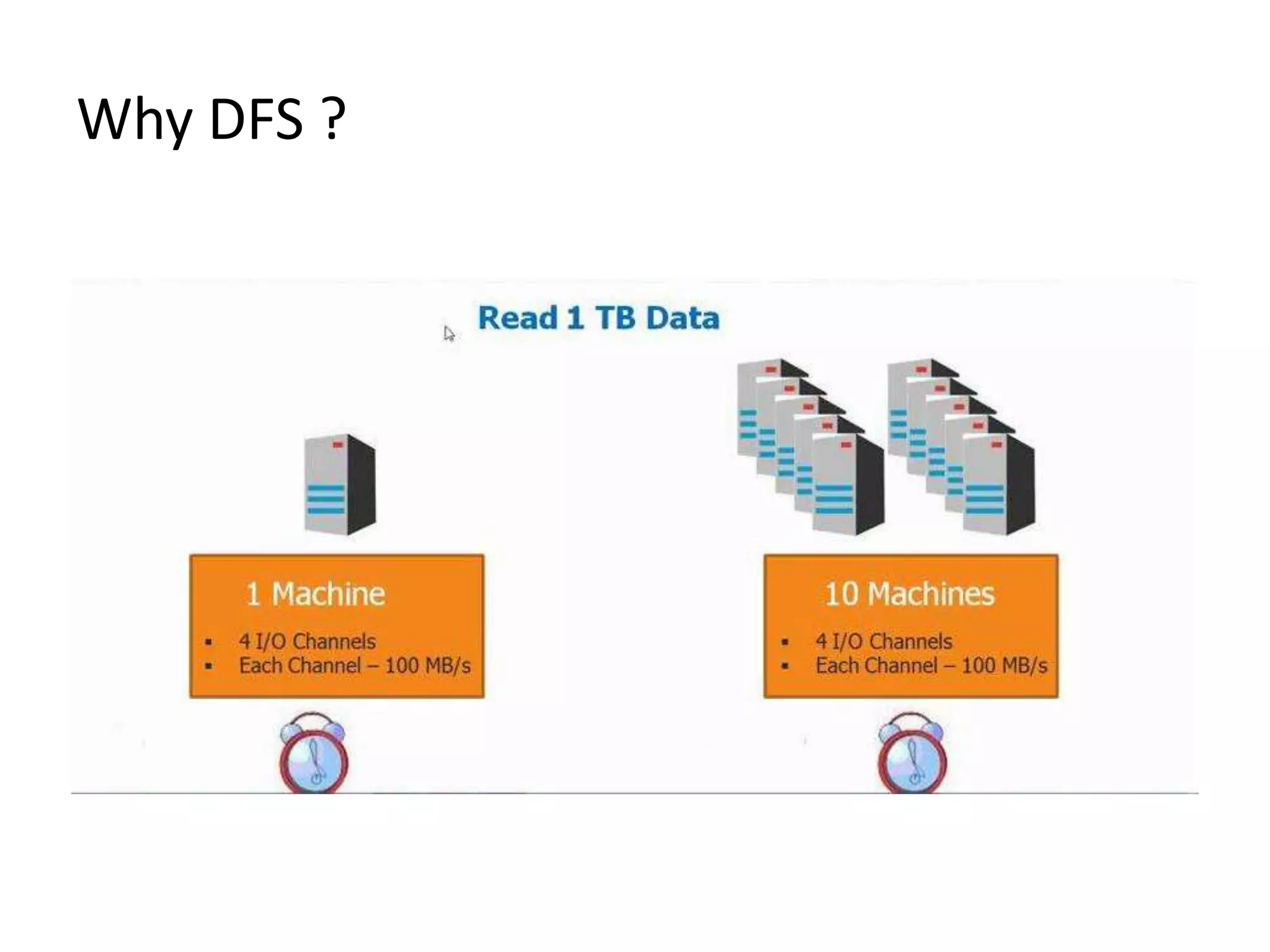

Exploration of reasons for implementing Distributed File Systems (DFS).

Hadoop is a distributed processing framework for large data sets across clustered commodity hardware.

List of major companies, such as Yahoo, Google, and Amazon, that use Hadoop technology.

Hadoop Distributed File System (HDFS) is designed to store large files with streaming data access.
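To make "large files" concrete, the sketch below works out how a file is divided into blocks and how much raw storage its replicas consume. It assumes the common defaults of a 128 MiB block size (`dfs.blocksize` in Hadoop 2.x and later) and a replication factor of 3 (`dfs.replication`); the slides do not state which values the presenter used, and the function name `block_layout` is purely illustrative.

```python
# Assumed defaults, not taken from the slides:
# 128 MiB block size and three replicas per block.
BLOCK_SIZE = 128 * 1024 * 1024
REPLICATION = 3


def block_layout(file_size_bytes, block_size=BLOCK_SIZE, replication=REPLICATION):
    """Return (number of blocks, size of the last block, raw bytes stored)."""
    if file_size_bytes == 0:
        return 0, 0, 0
    # Integer ceiling division avoids floating-point rounding on huge files.
    num_blocks = -(-file_size_bytes // block_size)
    # The final block only occupies as much space as the data it holds.
    last_block = file_size_bytes - (num_blocks - 1) * block_size
    raw_bytes = file_size_bytes * replication
    return num_blocks, last_block, raw_bytes


one_gib = 1024 ** 3
blocks, last, raw = block_layout(one_gib)
print(f"{blocks} blocks, last block {last} bytes, {raw} raw bytes across the cluster")
```

Under these assumptions a 1 GiB file occupies 8 full blocks and 3 GiB of raw disk, which is why HDFS favors a small number of large, sequentially read files.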

HDFS advantages include fault tolerance, high throughput, and suitability for large data applications.

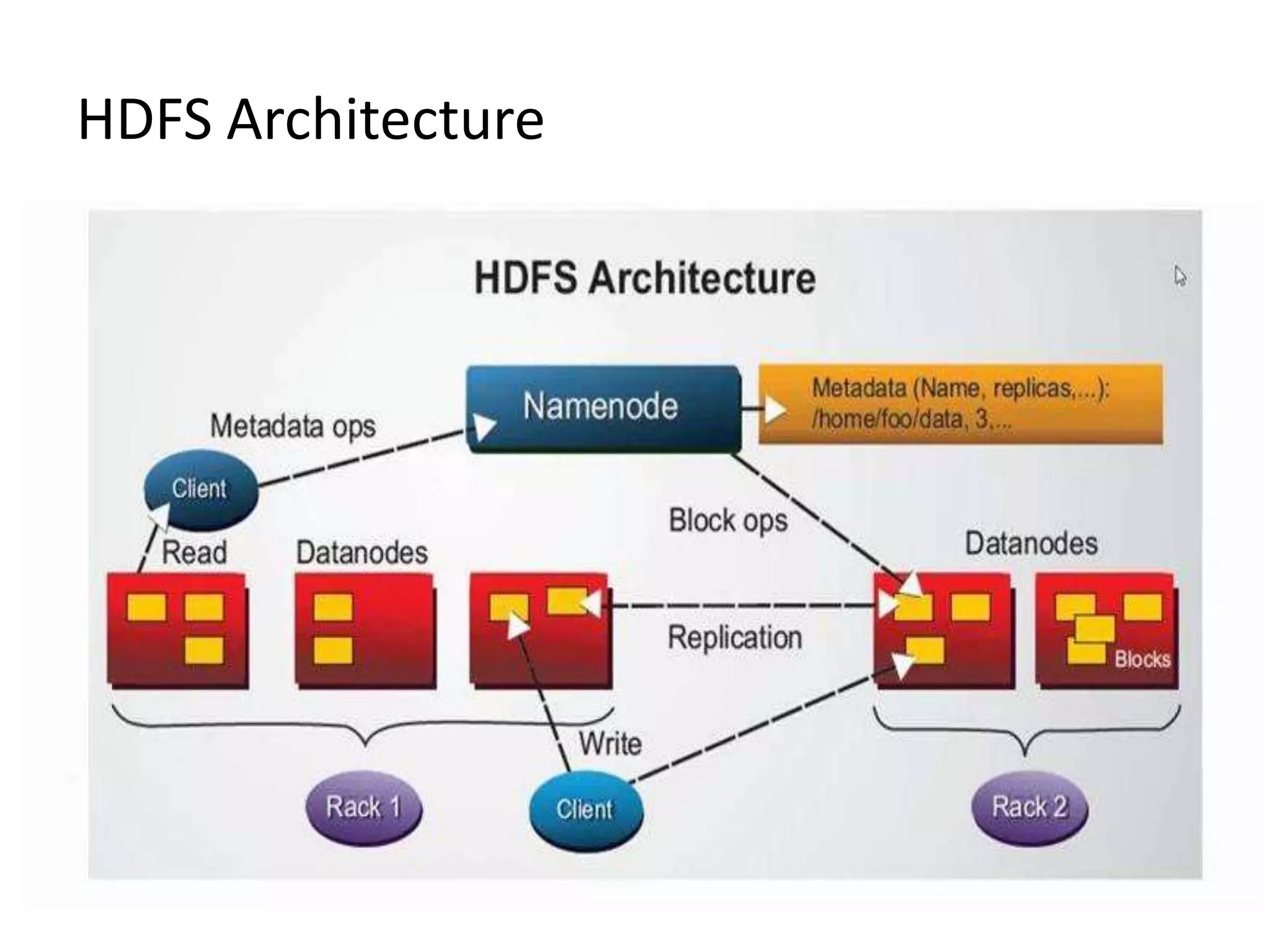

Overview of the main components: the NameNode (master) and the DataNodes (storage).
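The division of labor between the two components can be sketched with a toy model: the NameNode keeps only metadata (which blocks make up a file and which DataNodes hold each replica), while the DataNodes hold the bytes. Everything here is hypothetical and simplified, including the class name `ToyNameNode` and the round-robin placement; real HDFS uses rack-aware placement and heartbeats, which this sketch does not attempt to reproduce.

```python
class ToyNameNode:
    """Toy metadata store: file -> blocks, block -> DataNodes holding a replica."""

    def __init__(self, datanodes, replication=3):
        self.datanodes = list(datanodes)
        # Cannot place more replicas than there are DataNodes.
        self.replication = min(replication, len(self.datanodes))
        self.file_blocks = {}      # path -> list of block ids
        self.block_locations = {}  # block id -> list of DataNode names
        self._next_block = 0
        self._next_node = 0

    def add_file(self, path, num_blocks):
        """Register a file and assign each of its blocks to DataNodes."""
        block_ids = []
        for _ in range(num_blocks):
            block_id = f"blk_{self._next_block}"
            self._next_block += 1
            # Round-robin placement: a simplification, not the HDFS policy.
            targets = [
                self.datanodes[(self._next_node + i) % len(self.datanodes)]
                for i in range(self.replication)
            ]
            self._next_node = (self._next_node + 1) % len(self.datanodes)
            self.block_locations[block_id] = targets
            block_ids.append(block_id)
        self.file_blocks[path] = block_ids
        return block_ids

    def locate(self, path):
        """What a client asks the NameNode before reading from DataNodes."""
        return [(b, self.block_locations[b]) for b in self.file_blocks[path]]

    def blocks_on(self, datanode):
        """Blocks that lose a replica if this DataNode fails."""
        return sorted(b for b, nodes in self.block_locations.items() if datanode in nodes)


nn = ToyNameNode(["dn1", "dn2", "dn3", "dn4"])
nn.add_file("/logs/a.log", num_blocks=2)
print(nn.locate("/logs/a.log"))
print(nn.blocks_on("dn1"))
```

In this model, losing `dn1` leaves every affected block with two surviving replicas, which illustrates the fault tolerance the slides attribute to HDFS.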

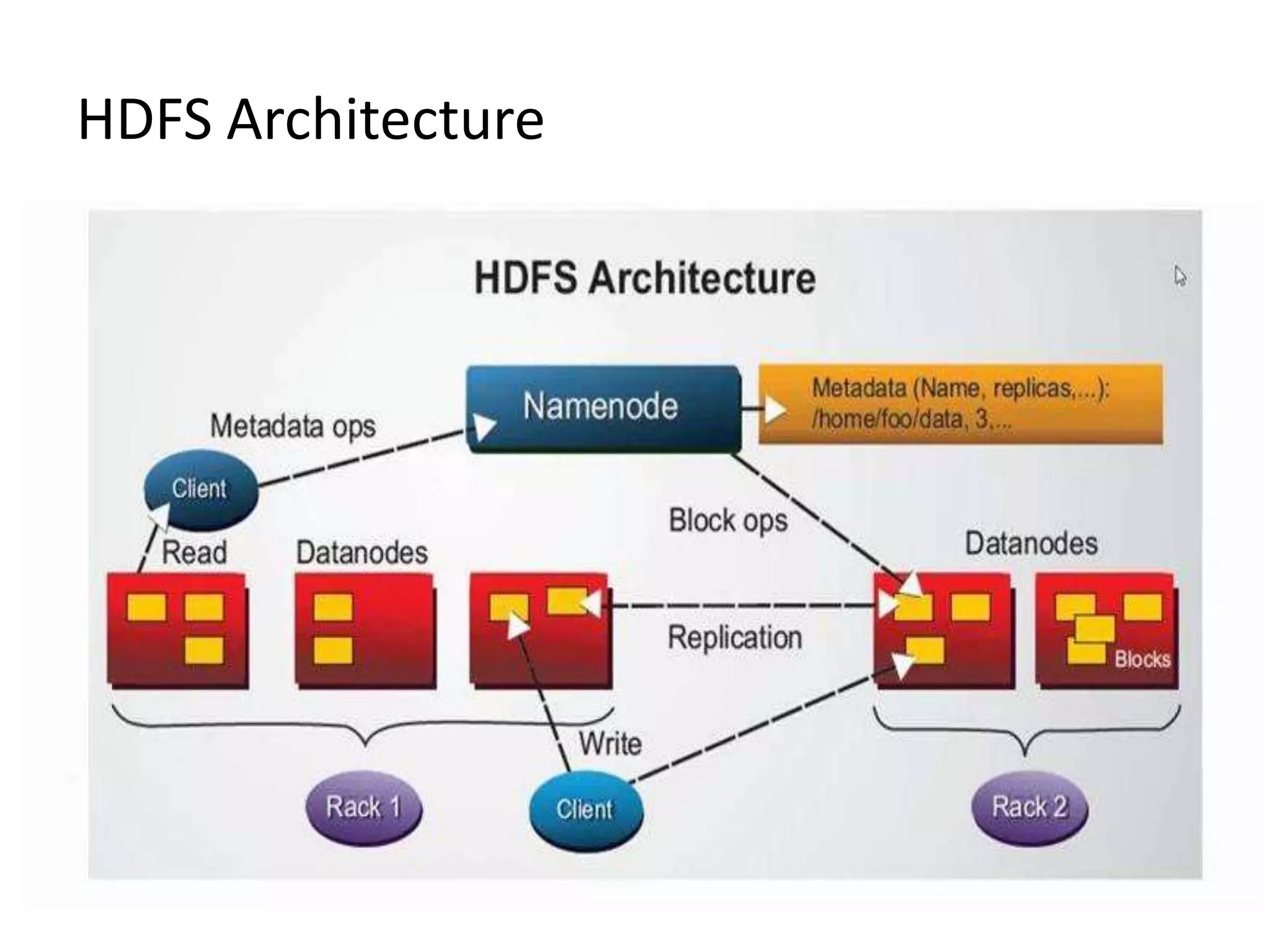

Diagrammatic representation of the architecture of HDFS.

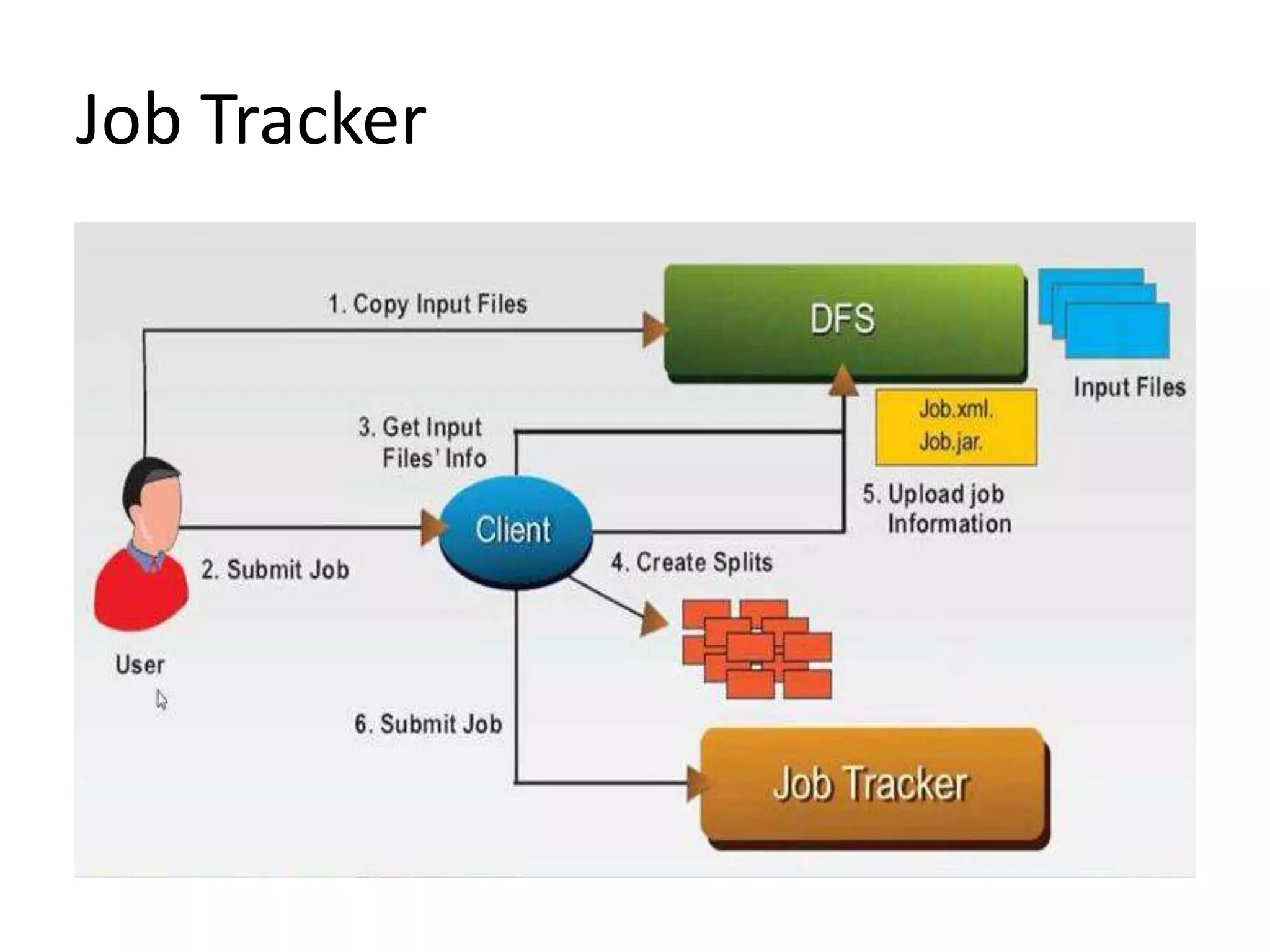

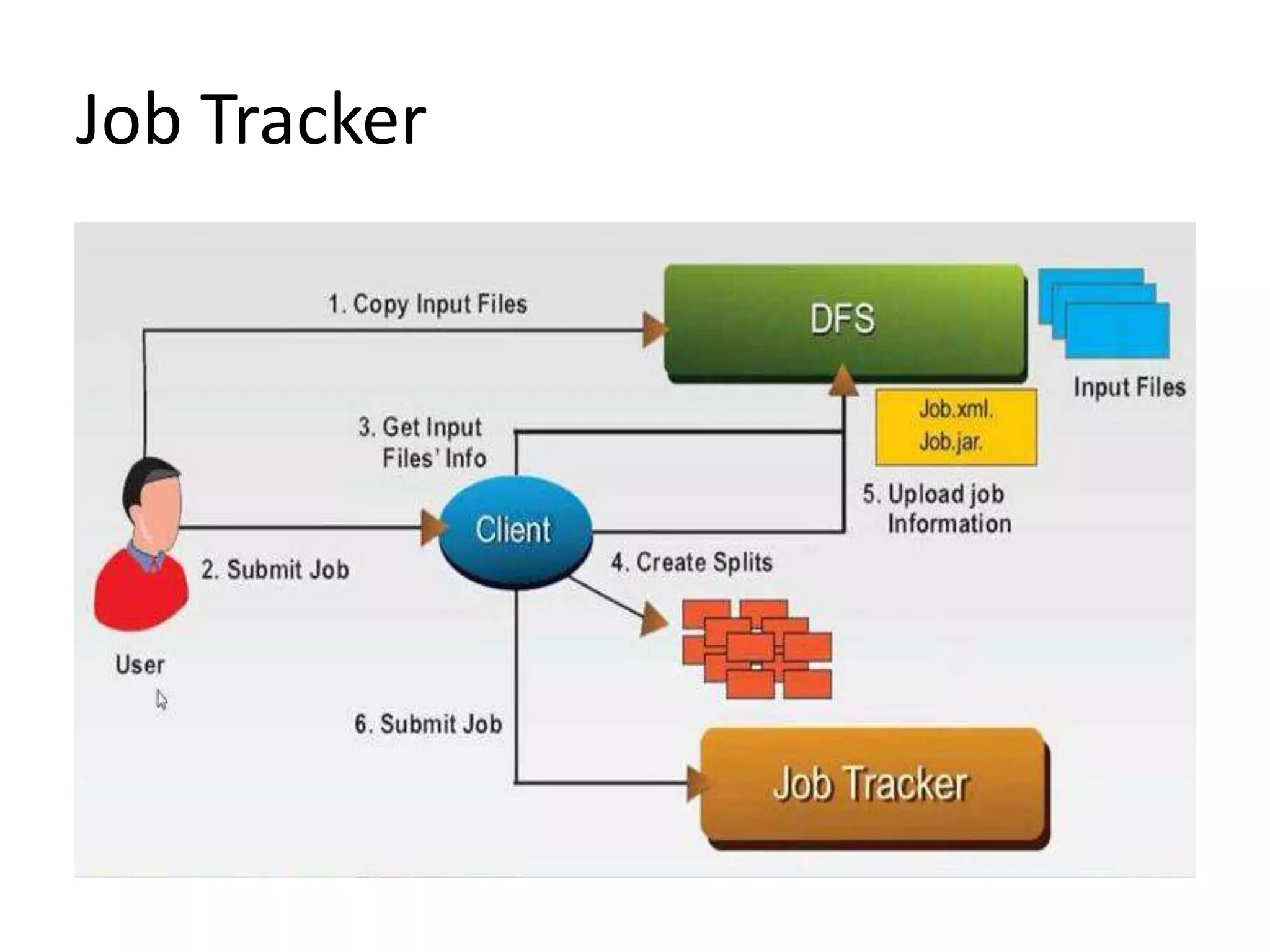

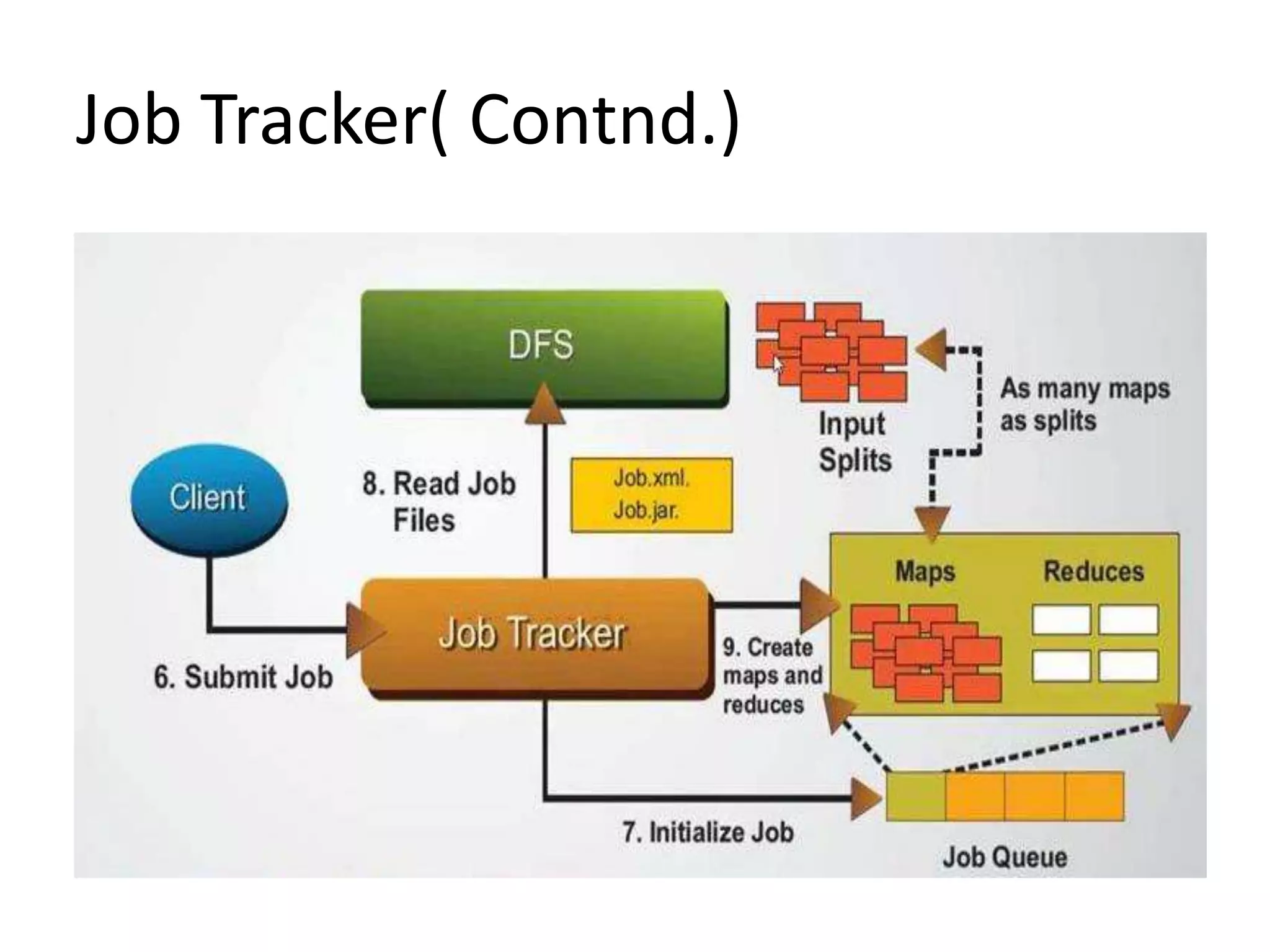

Introduction to the JobTracker: its function and significance in Hadoop operations.

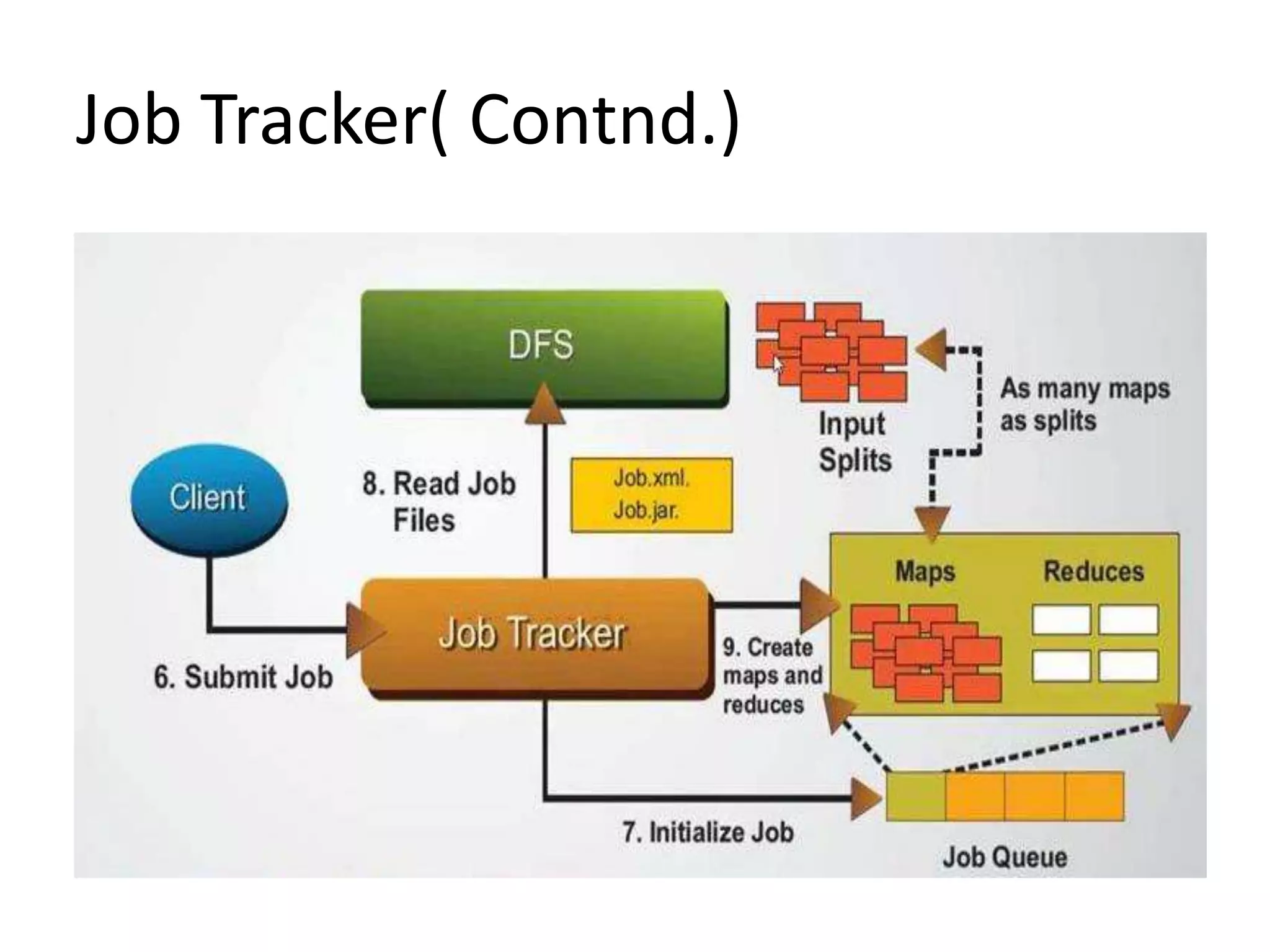

Continuation of the JobTracker explanation, focusing on its management capabilities.

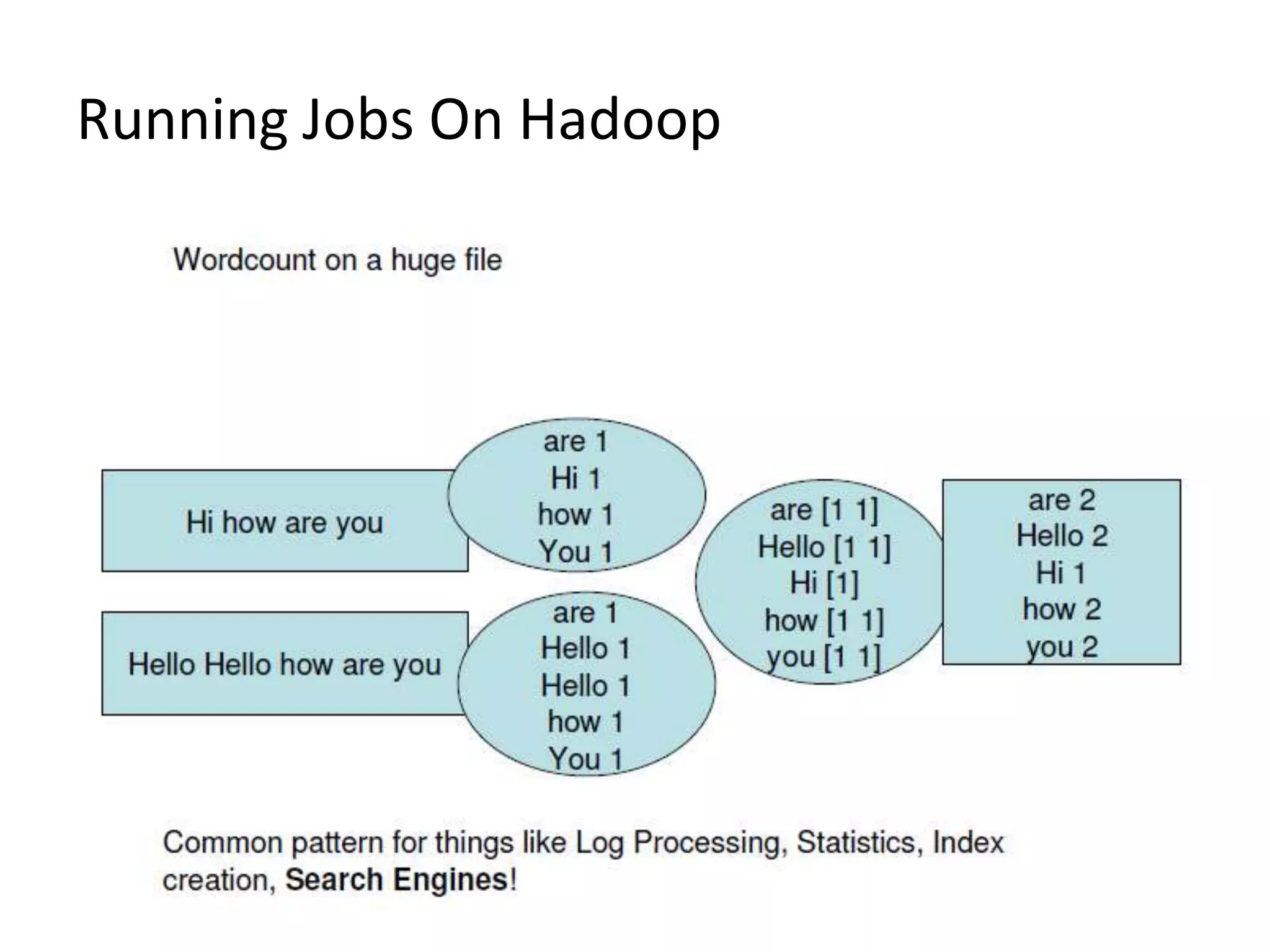

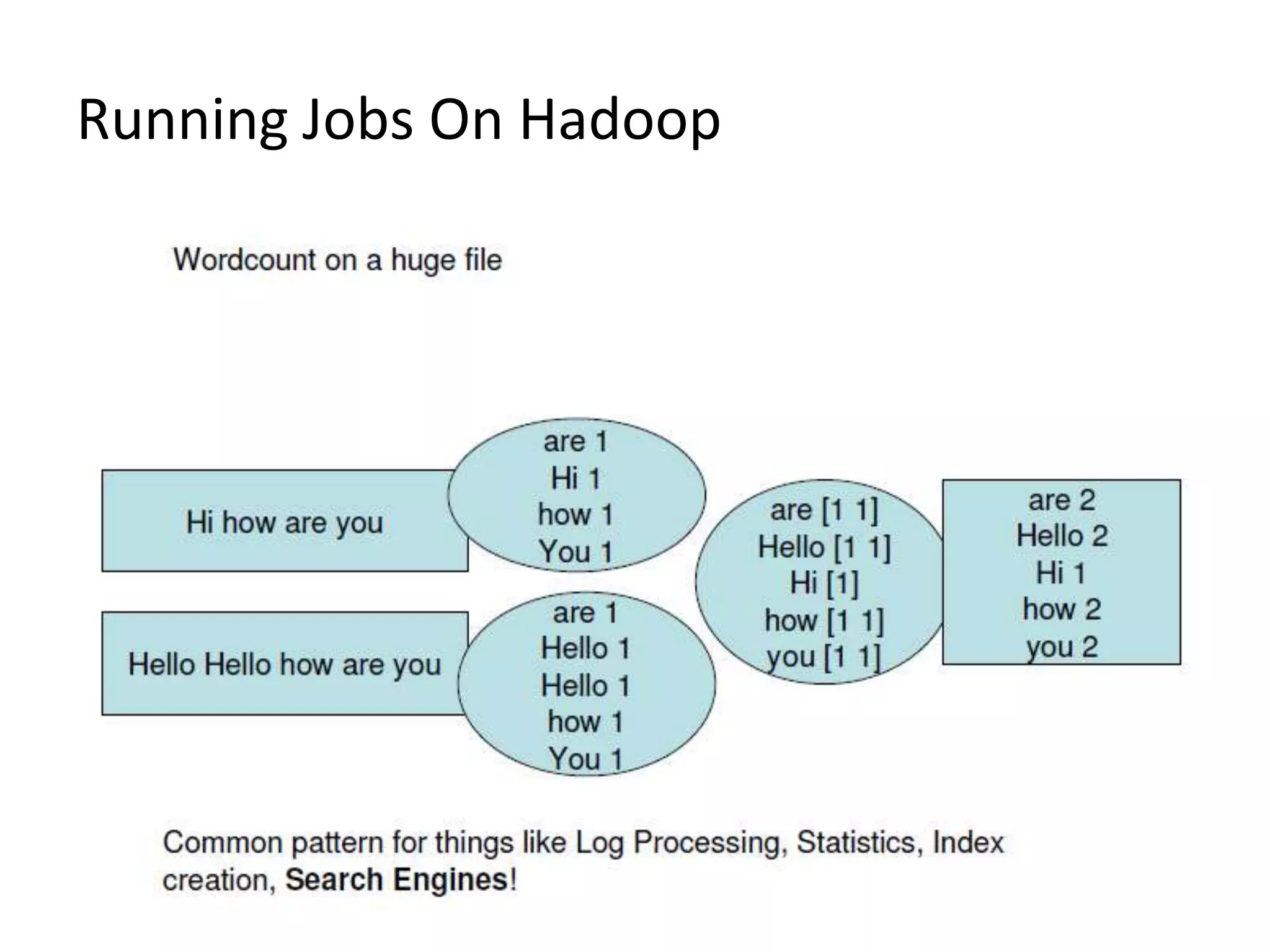

Overview of how jobs are run within the Hadoop ecosystem.
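The slide content itself is not reproduced here, but the MapReduce model that classic Hadoop jobs follow can be illustrated with a single-process word count. This is a minimal sketch in plain Python, not Hadoop's API: the function names are hypothetical, and in a real cluster the map and reduce tasks would be scheduled across many machines rather than run in a loop.

```python
from collections import defaultdict


def map_phase(split):
    """Map task: emit (word, 1) for every word in one input split."""
    for line in split:
        for word in line.lower().split():
            yield word, 1


def shuffle(pairs):
    """Group intermediate values by key, as happens between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce task: sum the counts collected for each word."""
    return {key: sum(values) for key, values in groups.items()}


def run_job(splits):
    """Run one map task per split, then shuffle and reduce the results."""
    intermediate = []
    for split in splits:
        intermediate.extend(map_phase(split))
    return reduce_phase(shuffle(intermediate))


# Two input splits stand in for two blocks of a file stored in HDFS.
splits = [["big data big cluster"], ["data node name node"]]
result = run_job(splits)
print(result)
```

Each split can be processed independently, which is what lets the framework run map tasks on the nodes that already hold the corresponding blocks.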

Areas where Hadoop is not effective: low-latency data access, large numbers of small files, and multiple concurrent writers.
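The small-files limitation follows from the NameNode keeping all file and block metadata in memory. The estimate below uses the widely quoted rule of thumb of roughly 150 bytes of NameNode heap per file or block object; that figure is an assumption for illustration and does not come from the slides, as are the 128 MiB block size and the function names.

```python
BYTES_PER_OBJECT = 150          # assumed rule-of-thumb cost per file or block object
BLOCK_SIZE = 128 * 1024 * 1024  # assumed default block size


def namenode_objects(num_files, file_size_bytes, block_size=BLOCK_SIZE):
    """Count metadata objects: one per file plus one per block of each file."""
    blocks_per_file = -(-file_size_bytes // block_size)  # ceiling division
    return num_files * (1 + blocks_per_file)


def estimated_heap_bytes(num_files, file_size_bytes, block_size=BLOCK_SIZE):
    """Rough NameNode heap needed to track the given files."""
    return namenode_objects(num_files, file_size_bytes, block_size) * BYTES_PER_OBJECT


# The same 1 GiB of data, stored two different ways.
one_large = estimated_heap_bytes(1, 1024 ** 3)
many_small = estimated_heap_bytes(1024 * 1024, 1024)
print(f"one 1 GiB file:         {one_large} bytes of metadata")
print(f"1,048,576 files of 1 KiB: {many_small} bytes of metadata")
```

Under these assumptions, the single large file costs about 1.3 KB of NameNode memory, while the same data split into one-kilobyte files costs about 300 MB.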

References including links to Hadoop documentation and educational videos.

Closing remarks and thanking the audience for their attention.