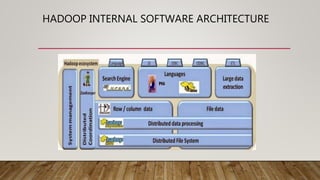

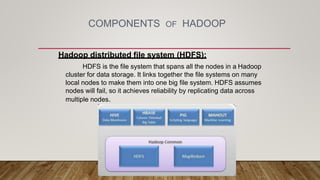

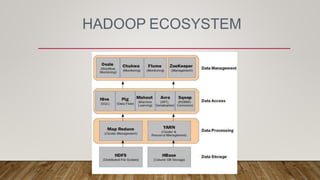

Hadoop is an open-source framework for the distributed storage and processing of large datasets. Its design was inspired by Google's MapReduce paper, and companies such as Yahoo and Facebook have used it for data management and analysis. Its core components are the Hadoop Distributed File System (HDFS), which stores data across the nodes of a cluster, and MapReduce, which processes that data in parallel. Because Hadoop scales by adding commodity machines and accepts data in many formats, it is a cost-effective and flexible choice for enterprises that want to consolidate data and strengthen their analytics capabilities.
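To make the MapReduce model mentioned above more concrete, here is a minimal single-process sketch of its three phases (map, shuffle, reduce) applied to a word count. It does not use Hadoop's actual API. The function names `map_phase`, `shuffle_phase`, `reduce_phase`, and `word_count` are hypothetical, chosen only for illustration. A real Hadoop job would run these steps across many machines over data stored in HDFS.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Group emitted values by key, mimicking the step between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine all values collected for one key into a single count."""
    return key, sum(values)


def word_count(lines):
    """Run the three phases in sequence on an in-memory list of lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle_phase(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    # Illustrative input only; not data from the original document.
    sample = [
        "Hadoop stores data in HDFS",
        "MapReduce processes data in parallel",
    ]
    print(word_count(sample))
```

In this sketch each call to `map_phase` depends only on its own input line, and each call to `reduce_phase` depends only on its own key. That independence is what would let a framework spread the work across many nodes.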