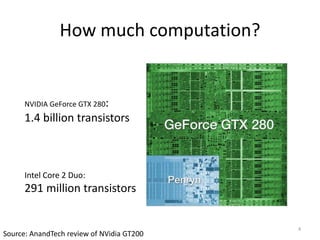

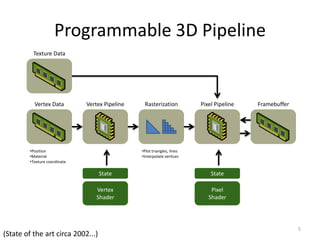

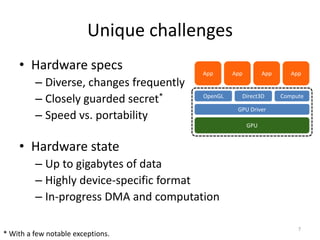

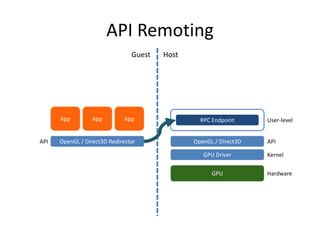

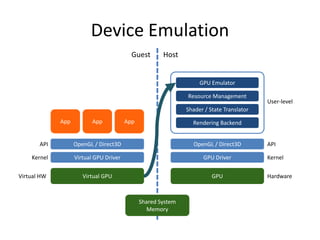

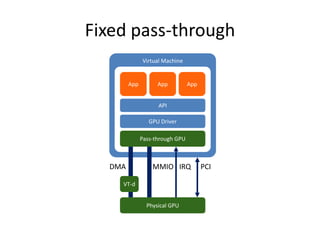

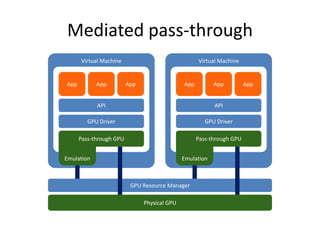

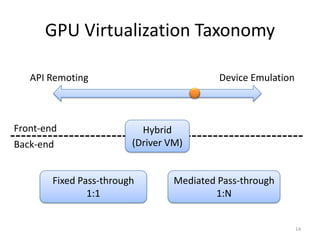

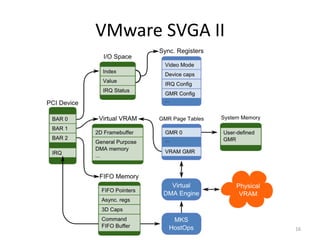

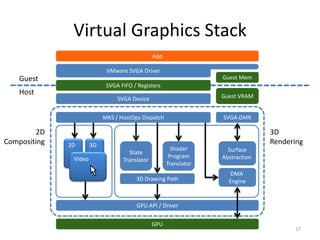

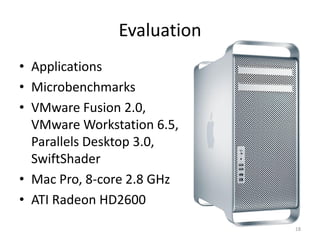

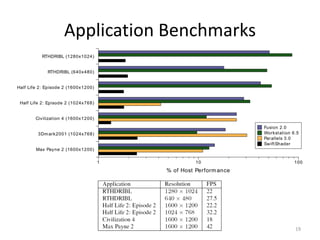

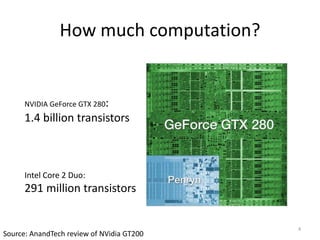

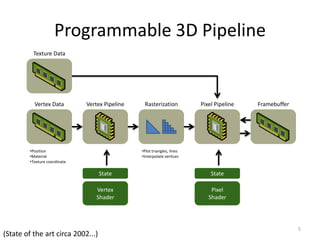

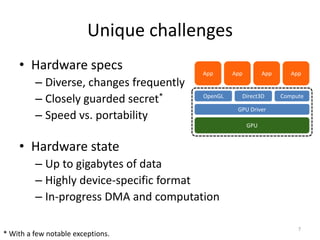

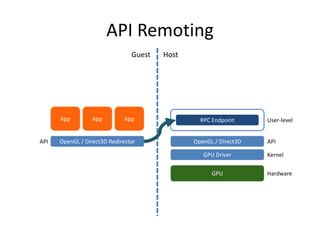

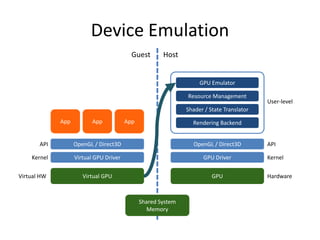

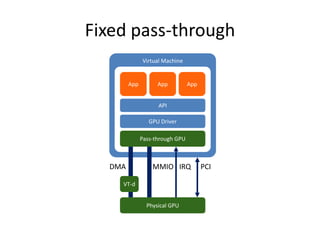

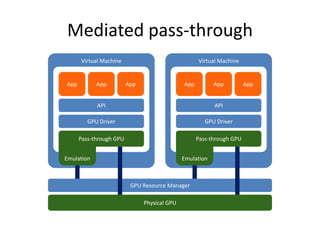

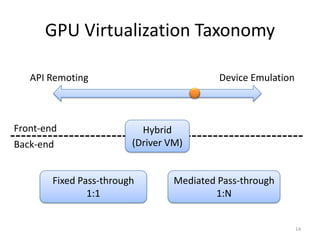

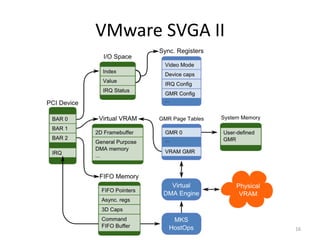

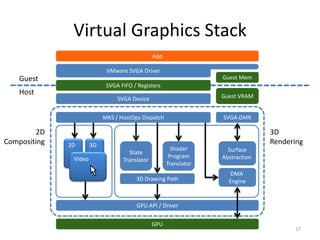

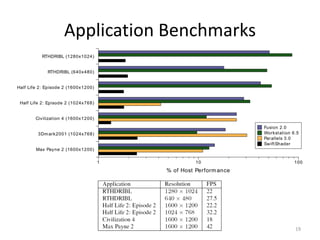

This presentation covers GPU virtualization on VMware's hosted I/O architecture, explaining why it is complex and why it matters for running interactive applications in virtual machines. It identifies challenges specific to GPUs, such as API diversity and hardware state management, and outlines VMware's virtual GPU capabilities and resource management. It concludes that performance and functionality can still improve, pointing to pass-through techniques and virtualization-aware benchmarks as future directions.