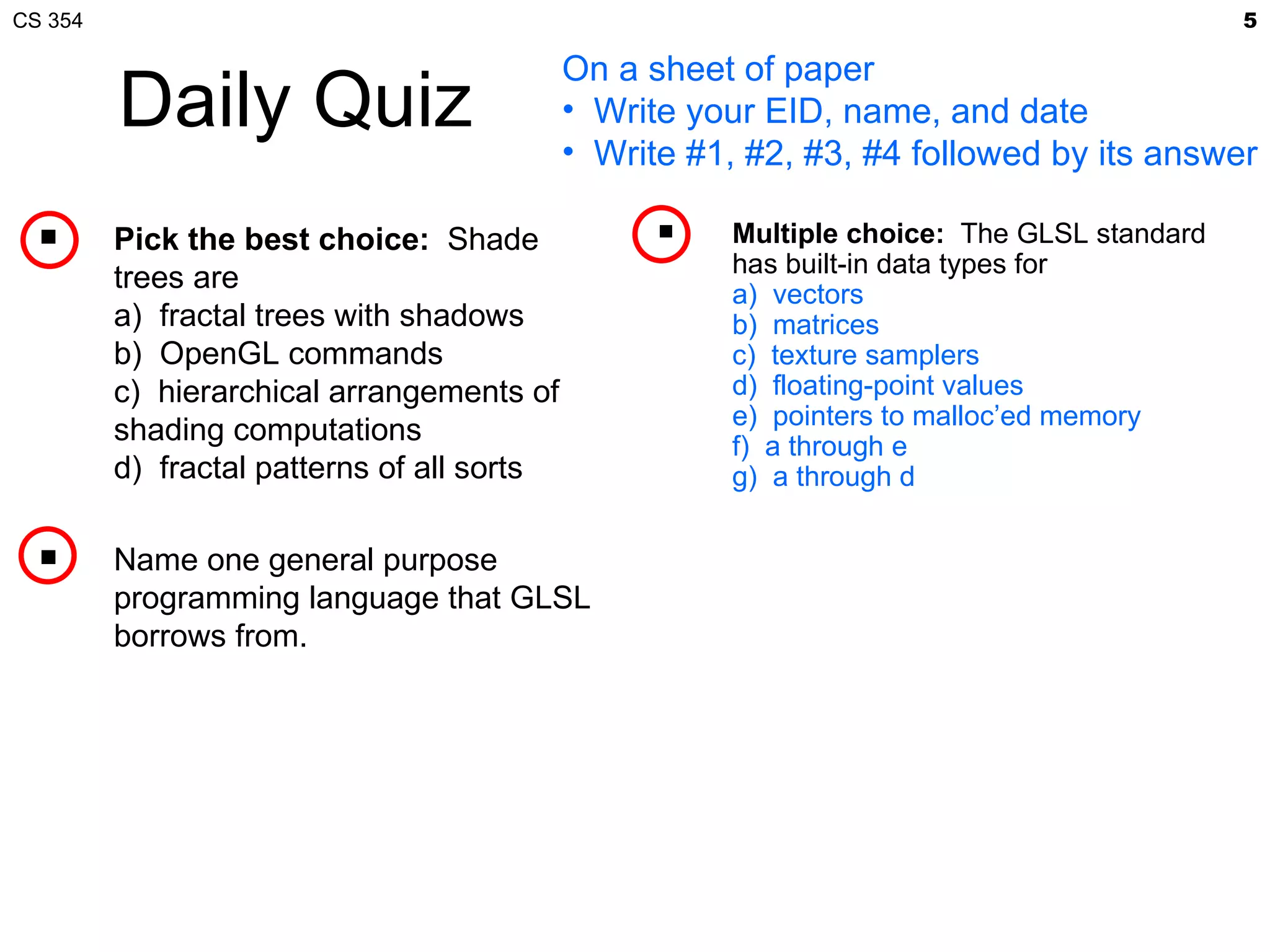

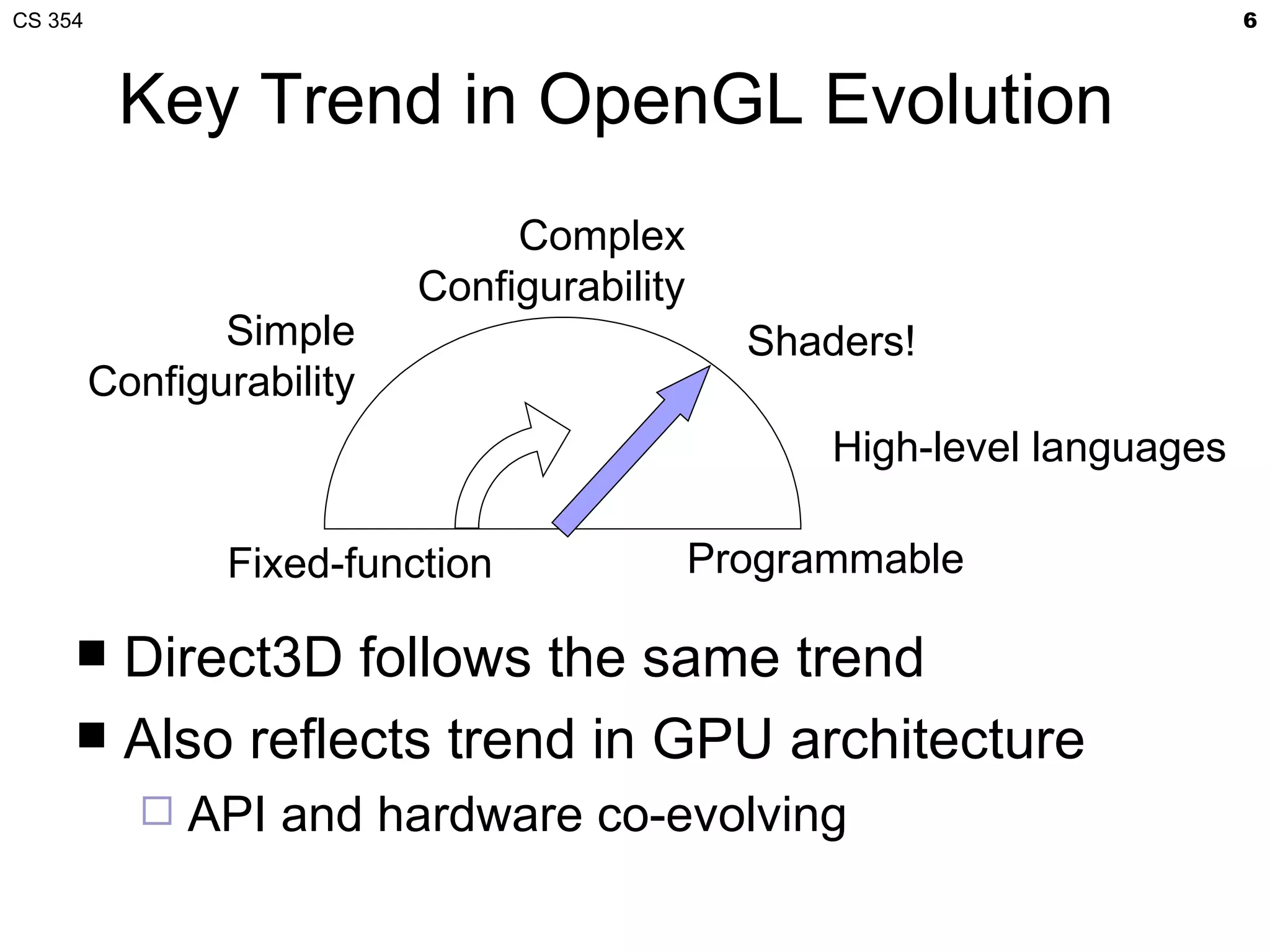

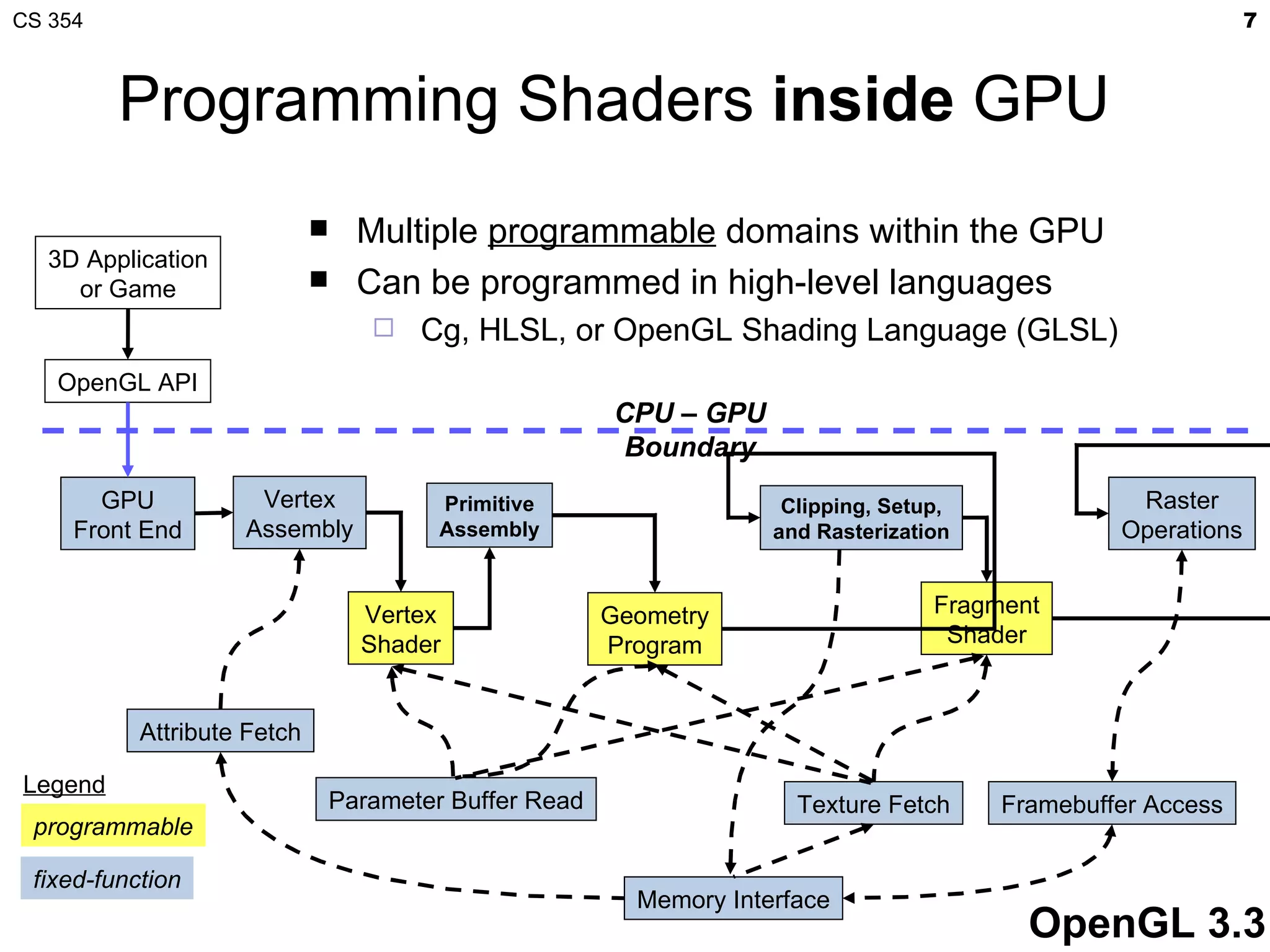

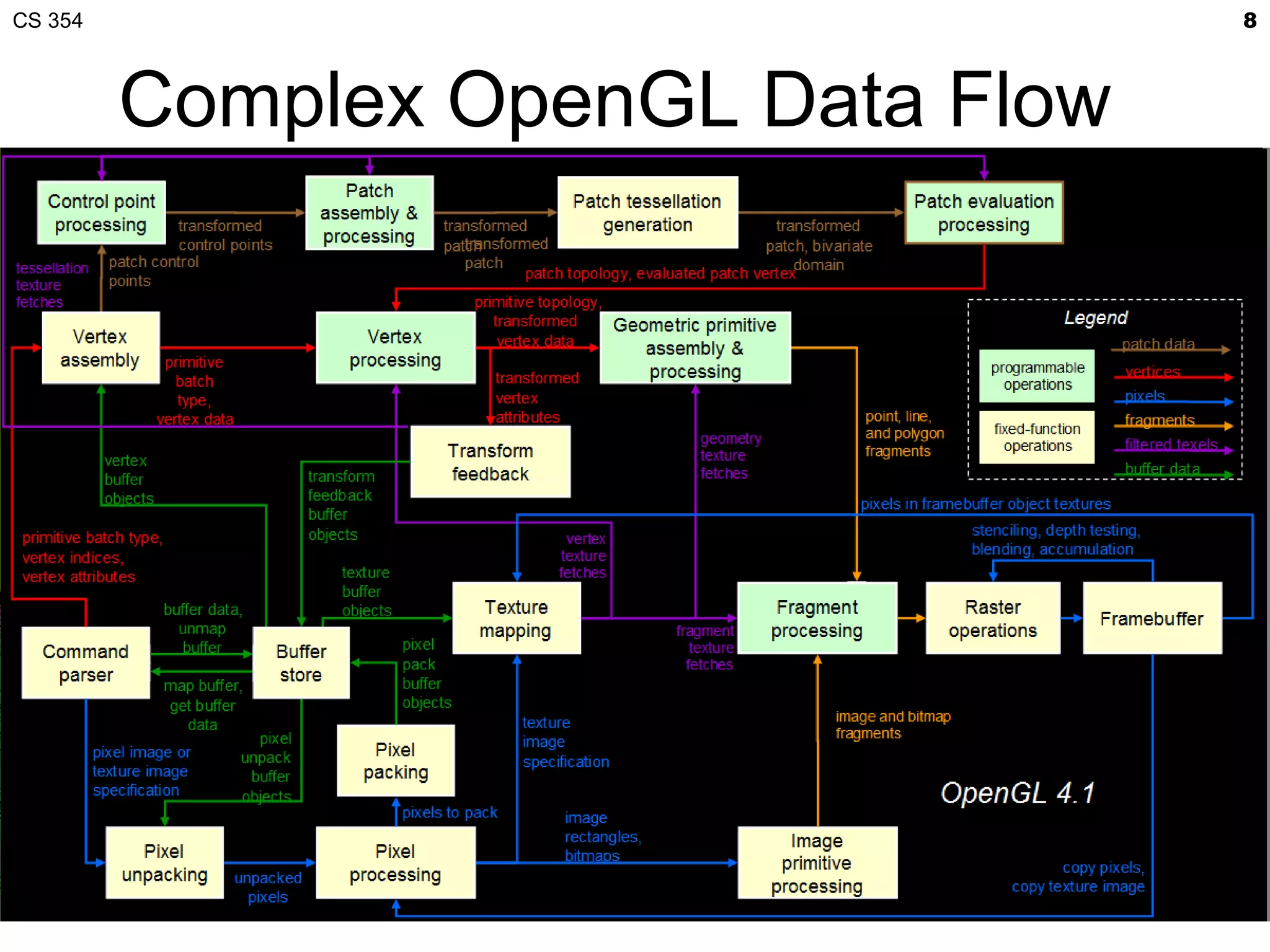

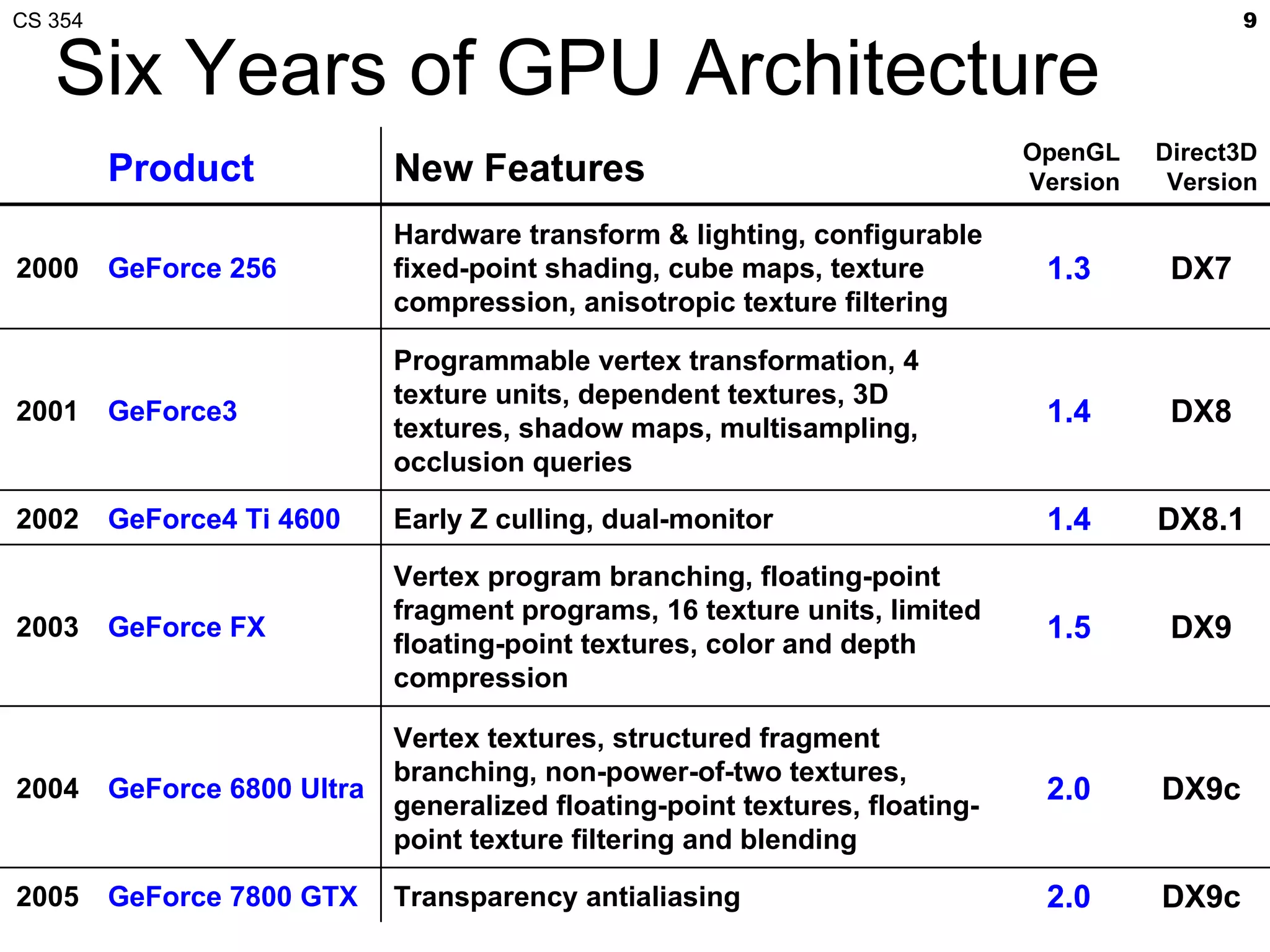

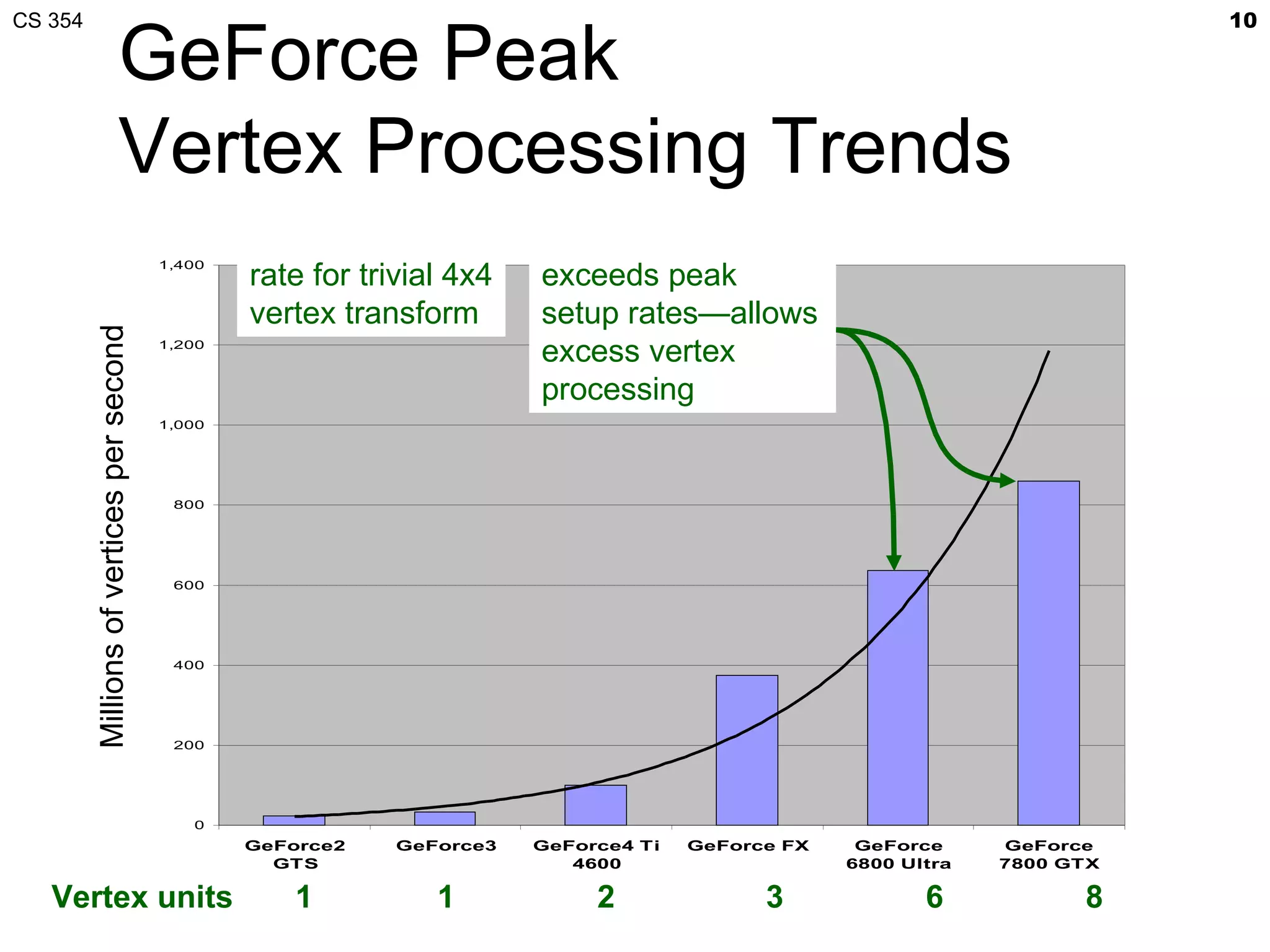

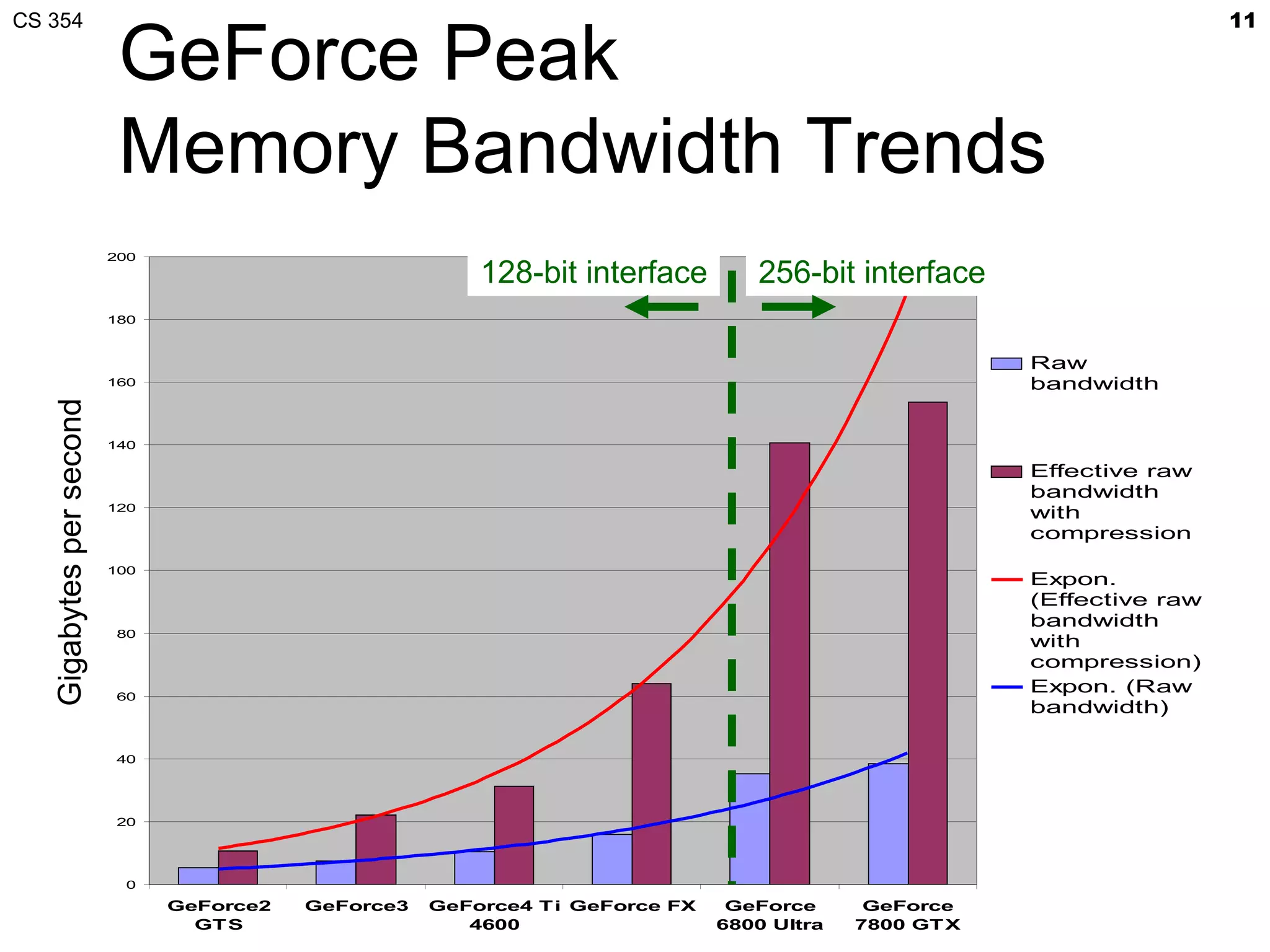

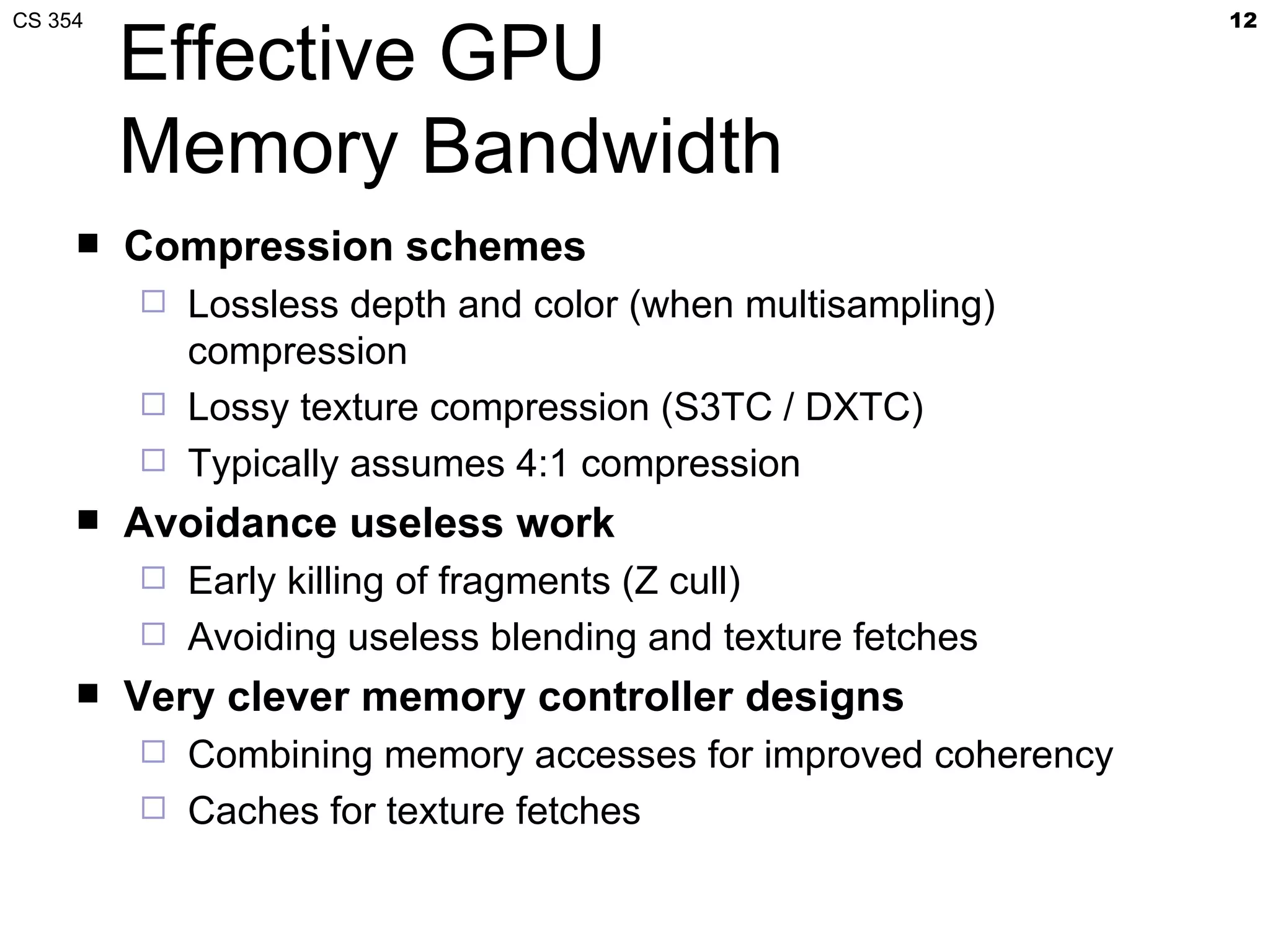

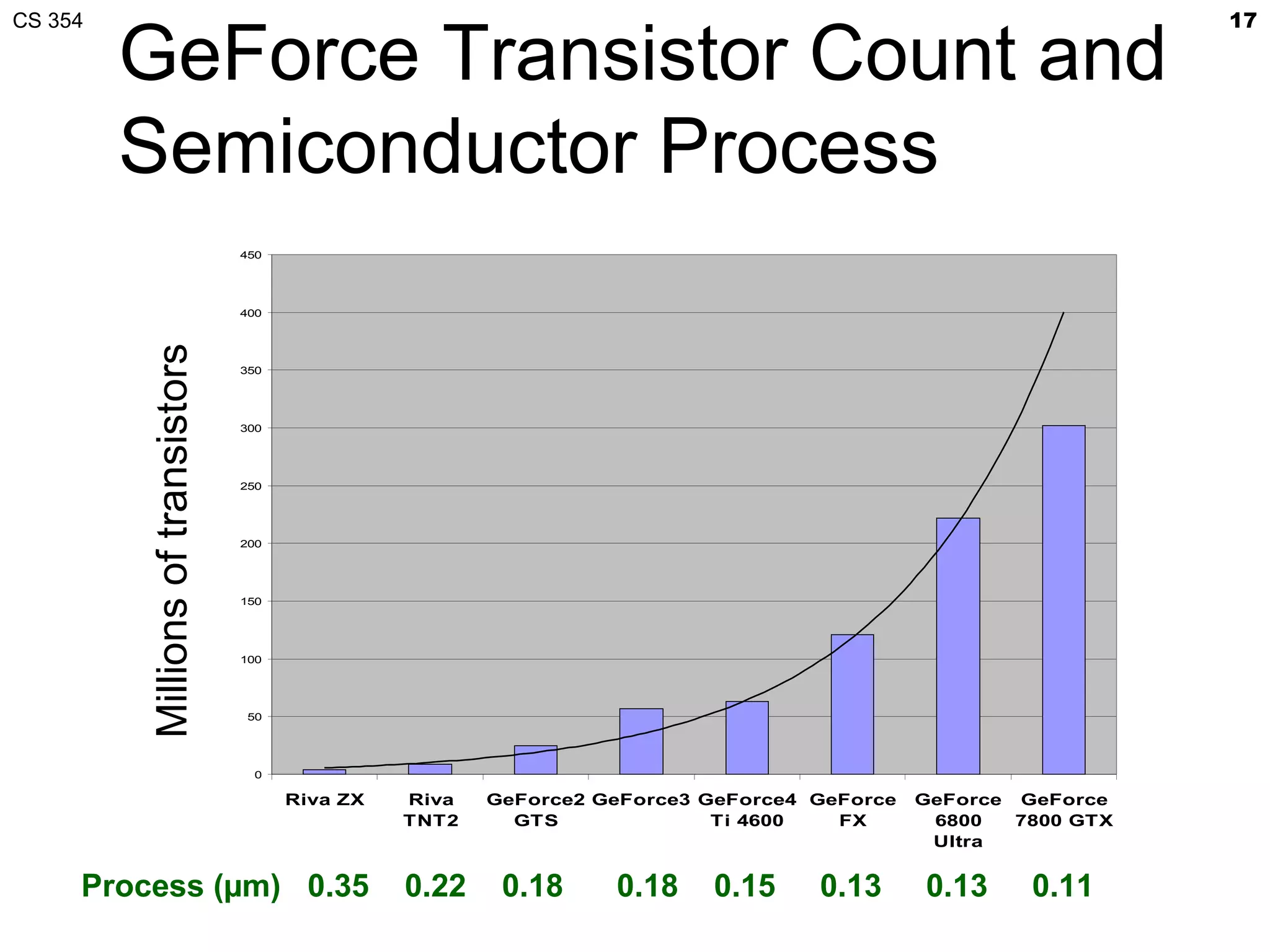

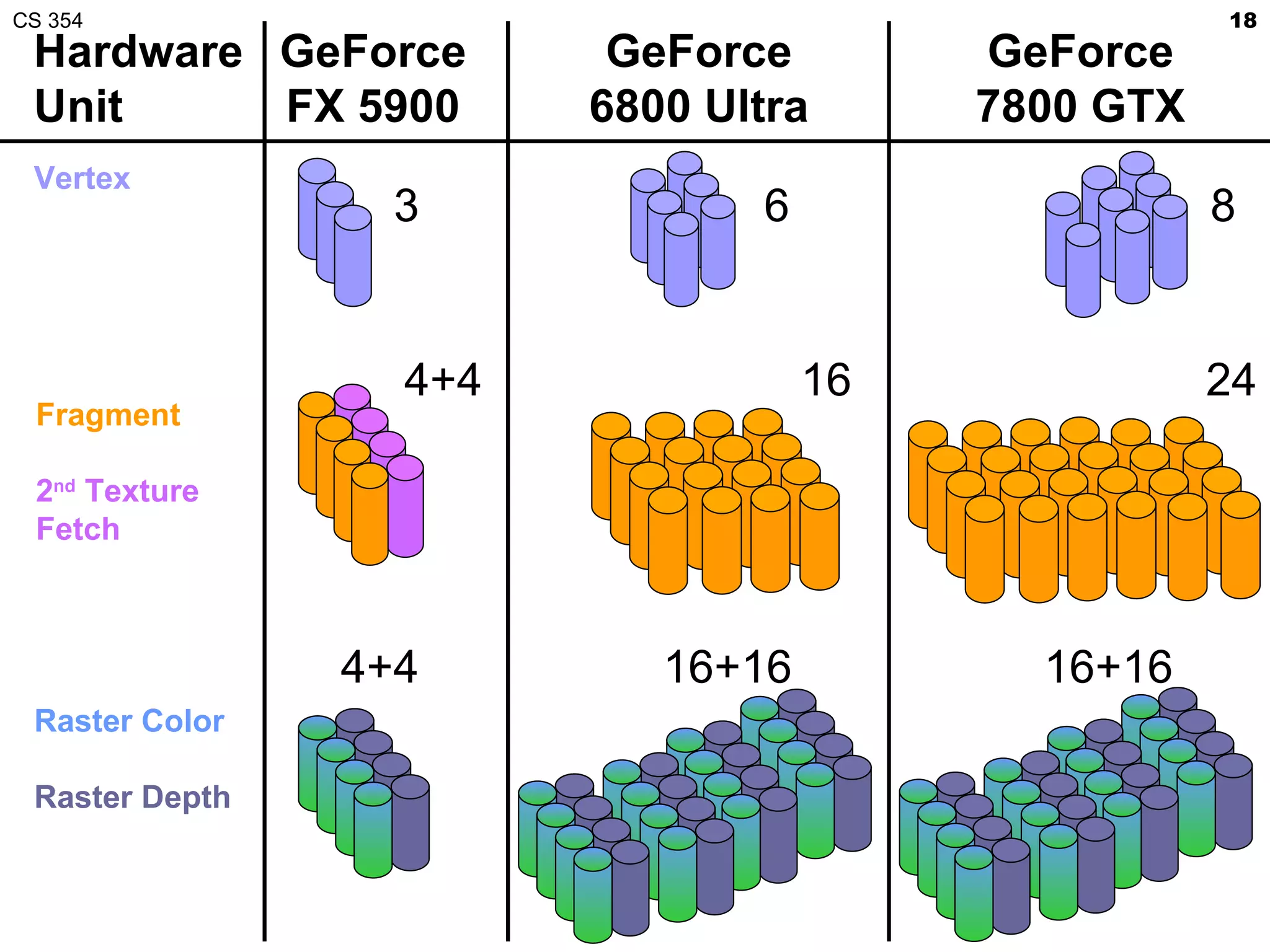

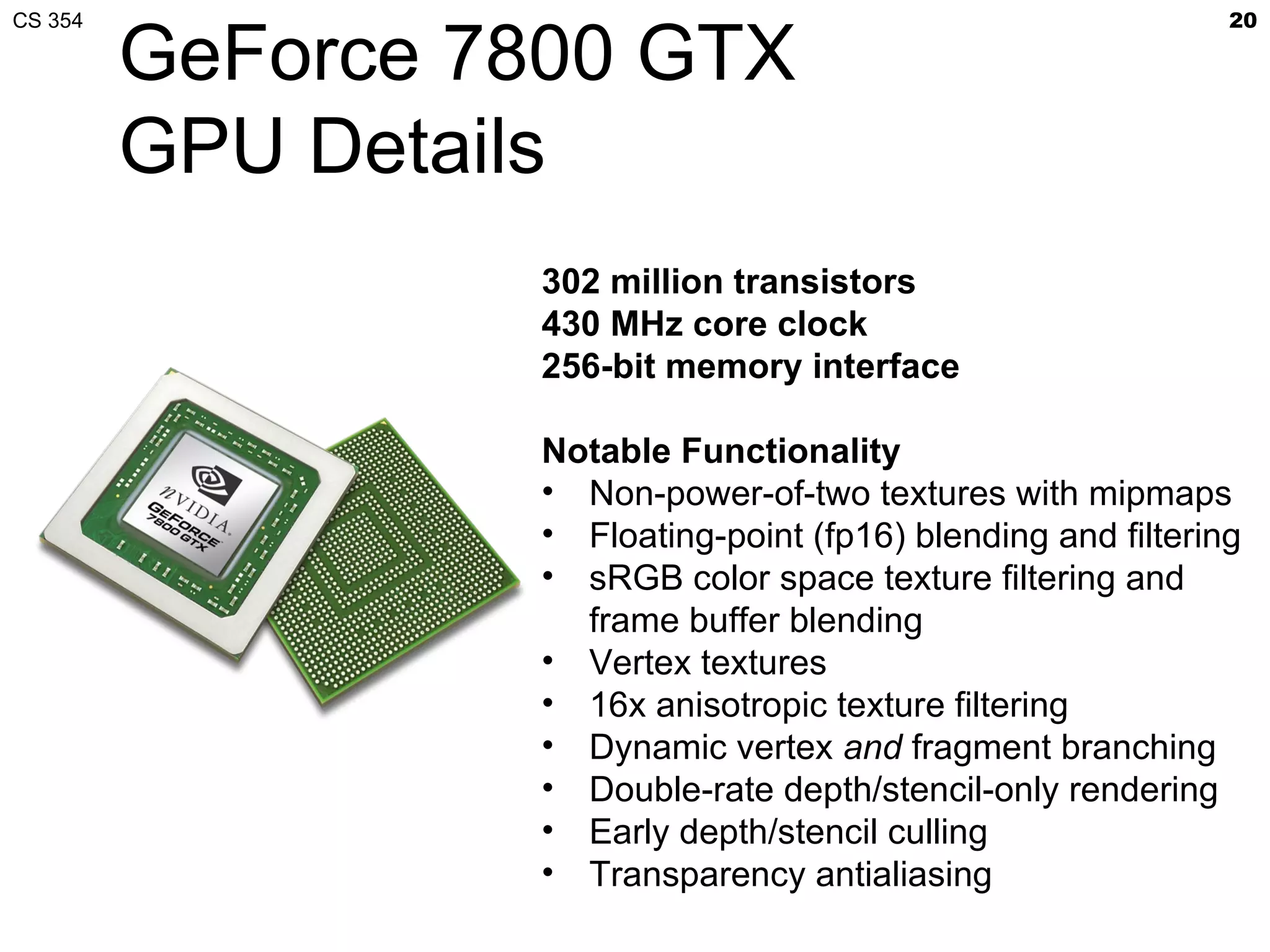

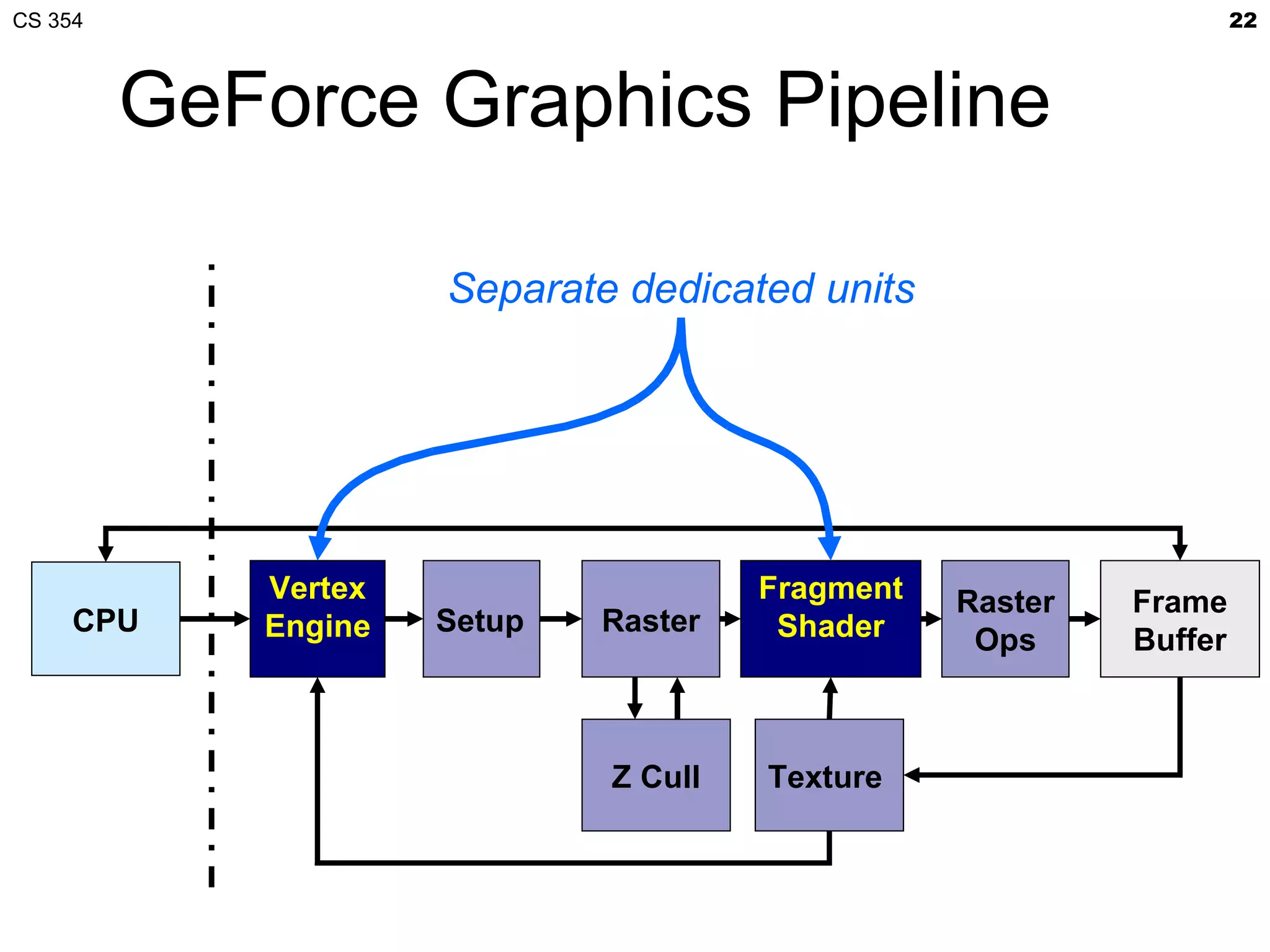

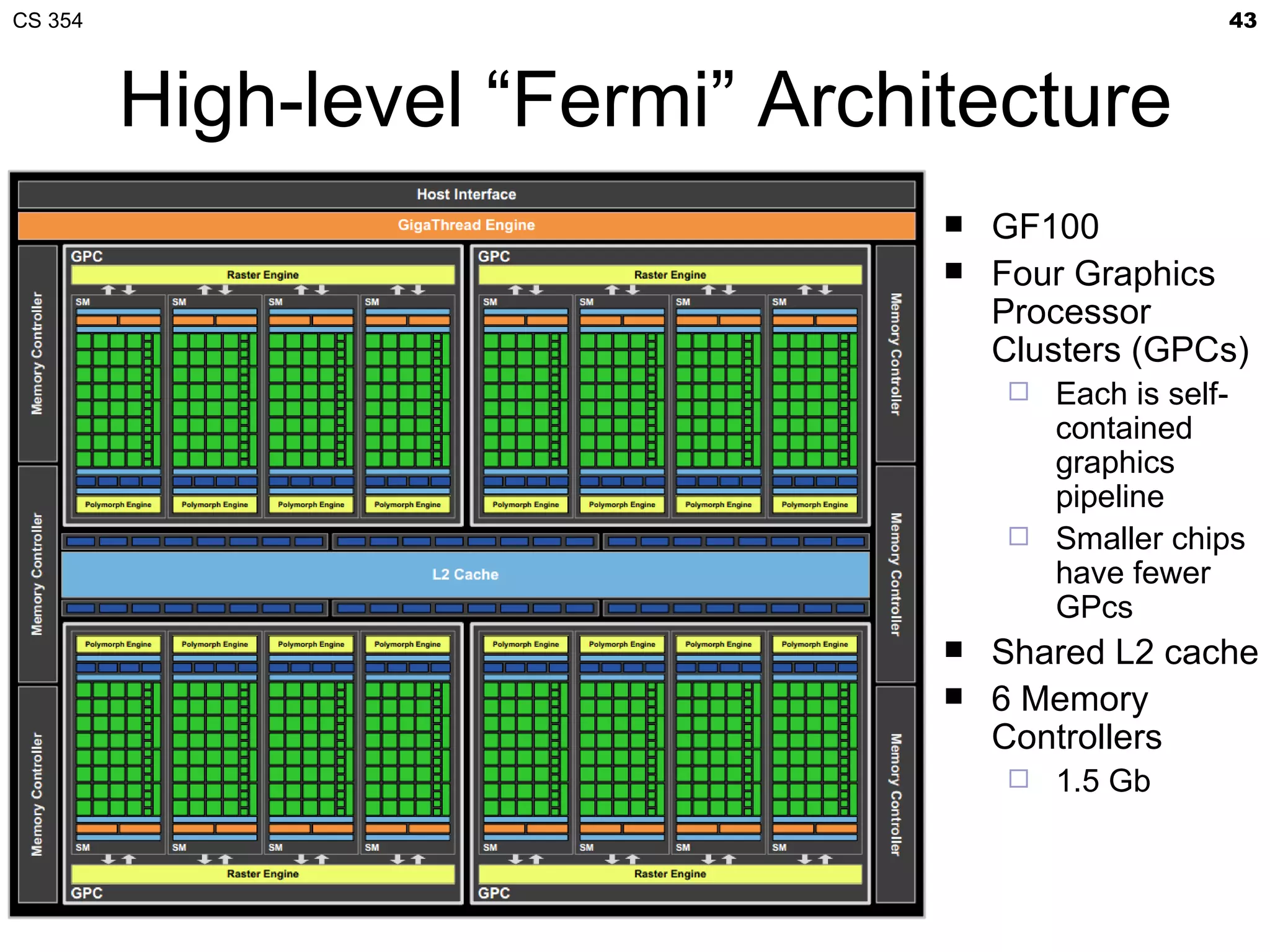

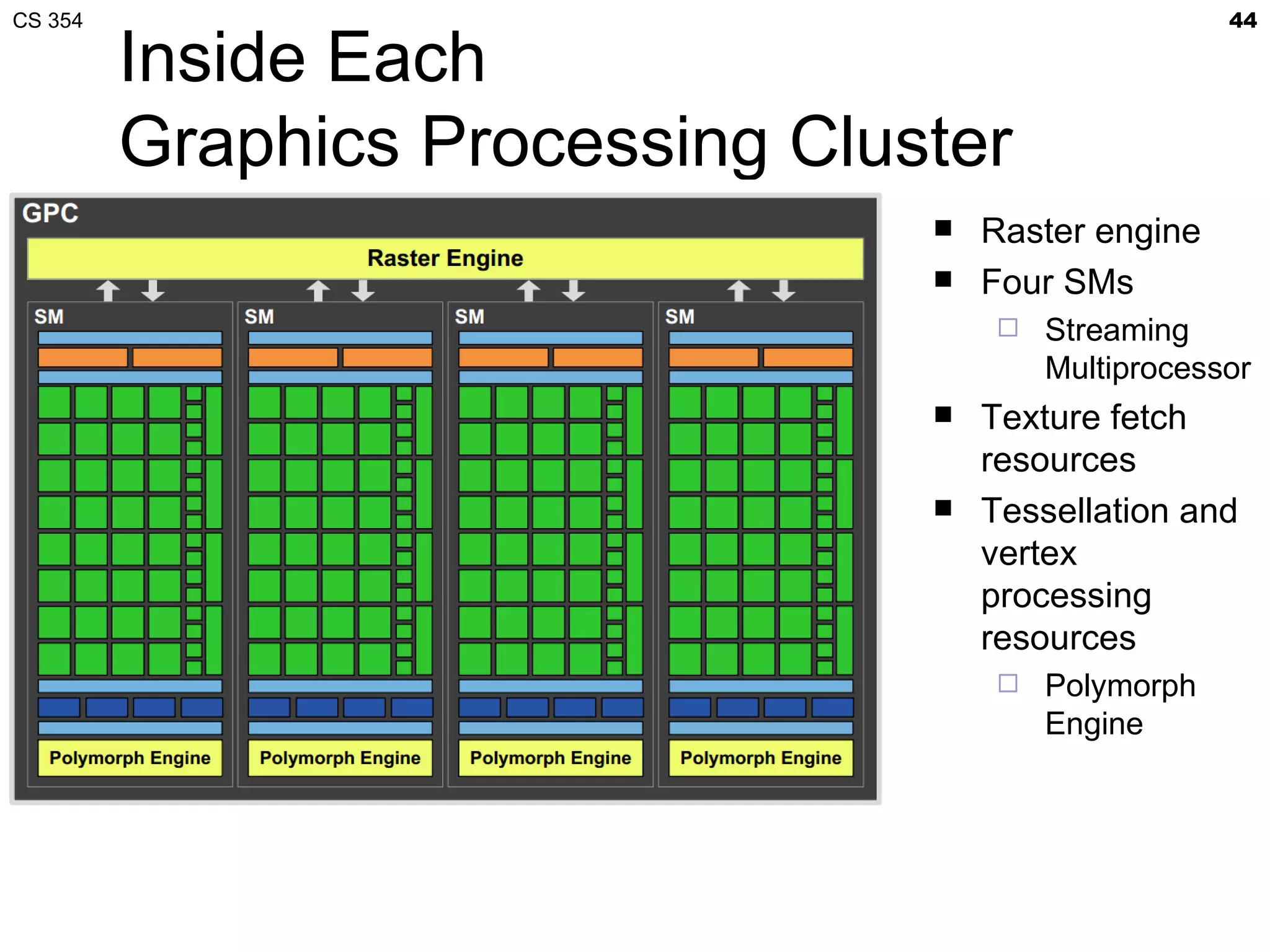

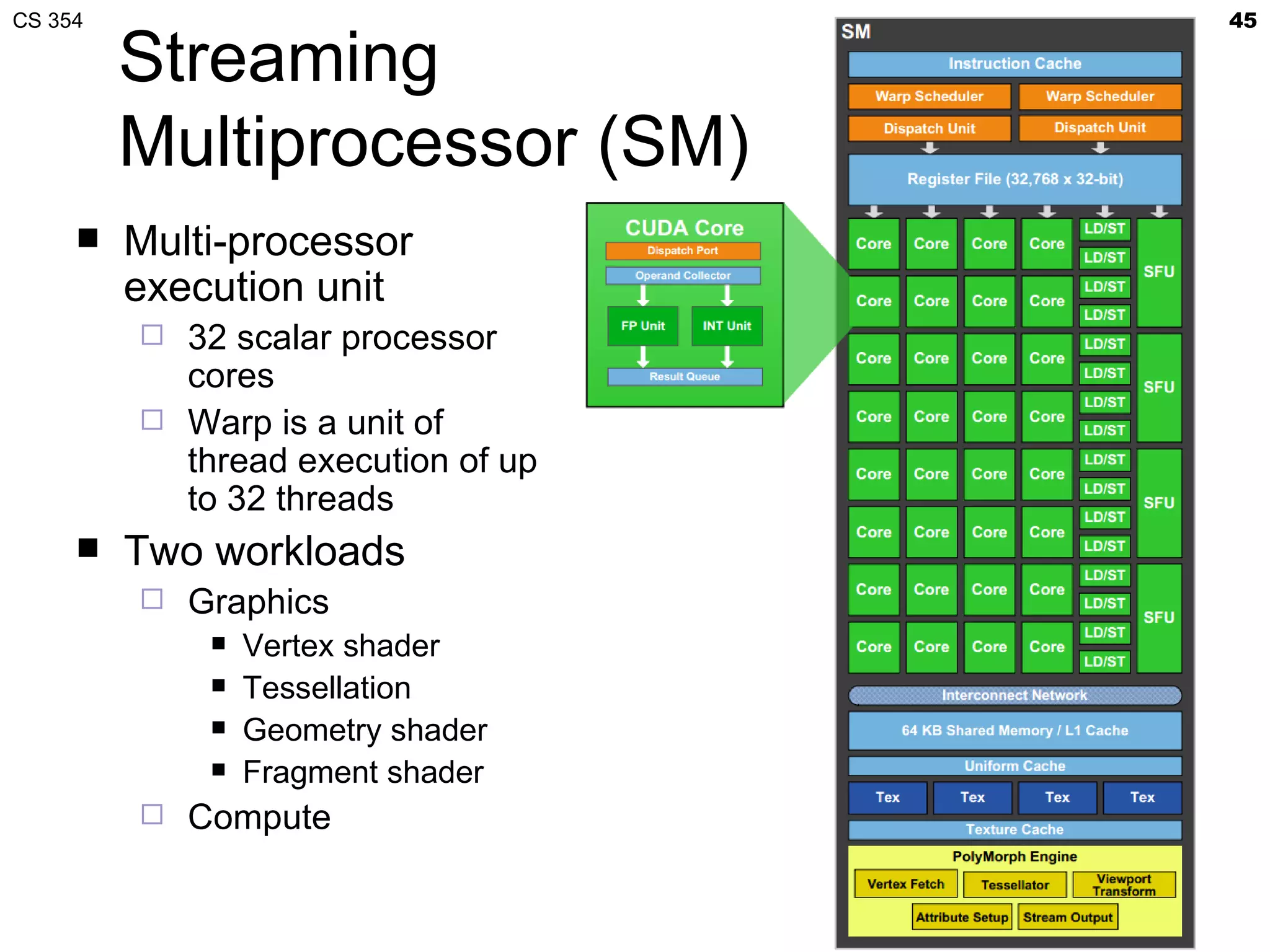

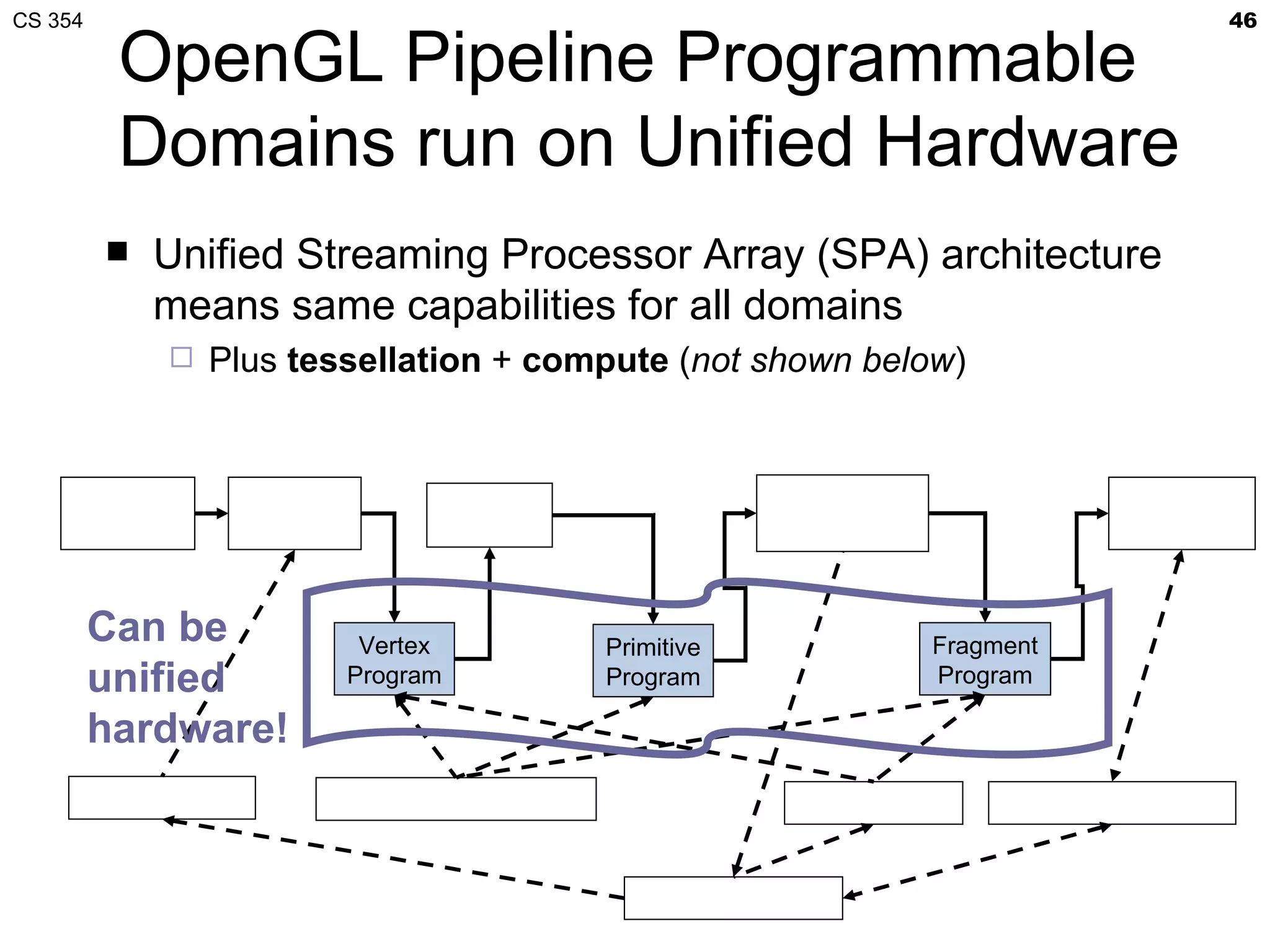

This document covers a lecture on GPU architecture given by Mark Kilgard at the University of Texas on March 6, 2012. The lecture describes the architecture of graphics processing units and how it evolved over the six years leading up to the lecture. It also includes an in-class quiz, information about homework and projects, and the professor's office hours.