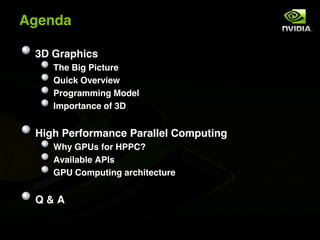

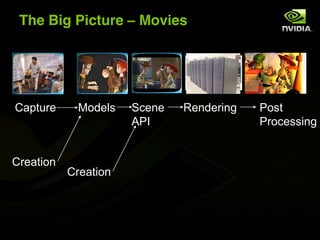

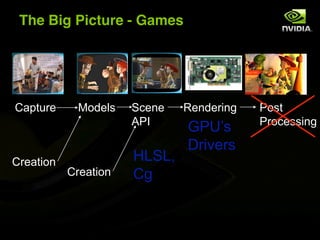

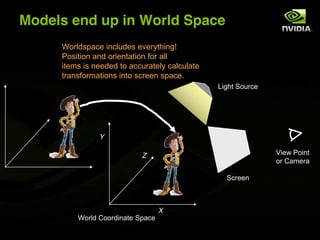

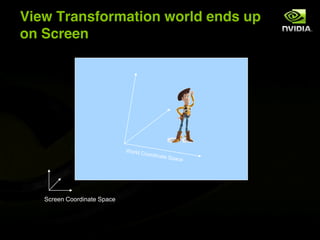

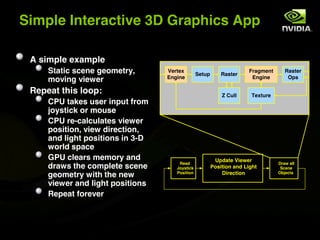

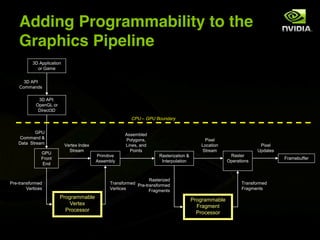

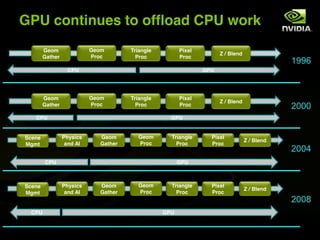

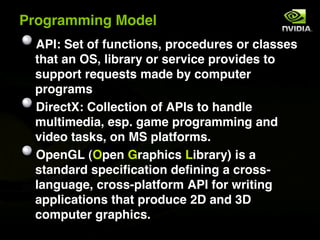

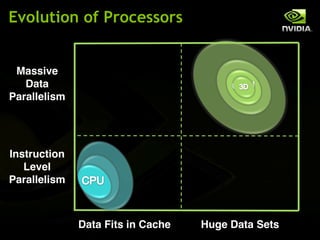

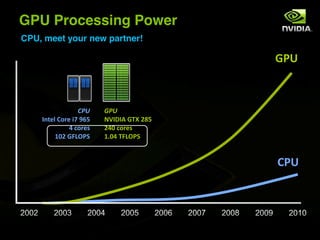

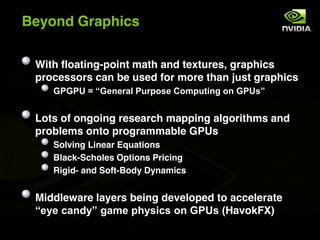

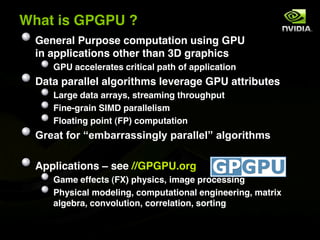

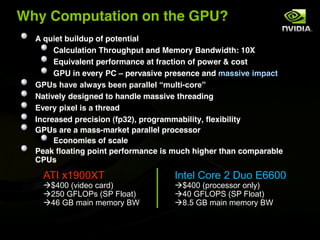

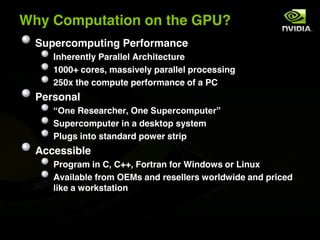

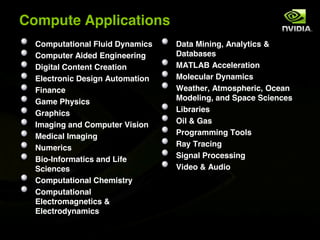

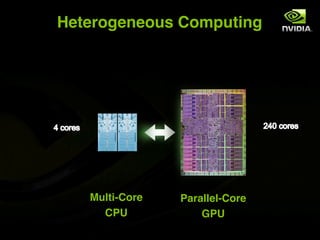

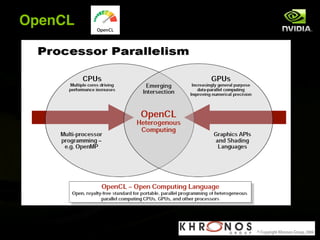

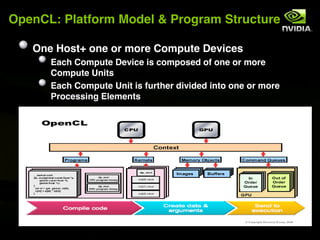

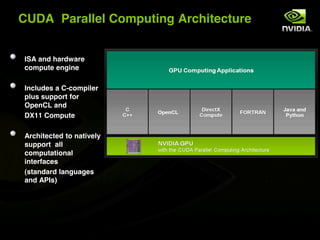

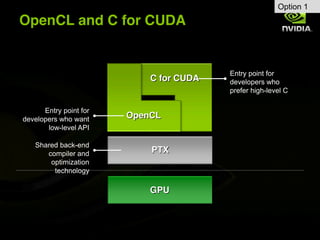

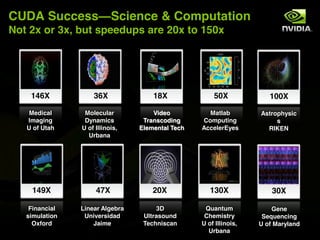

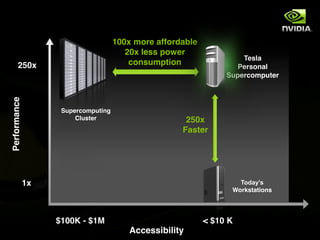

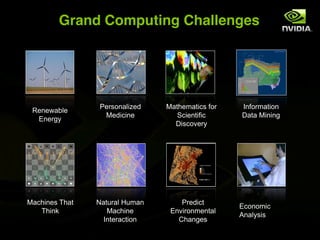

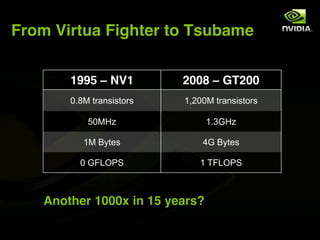

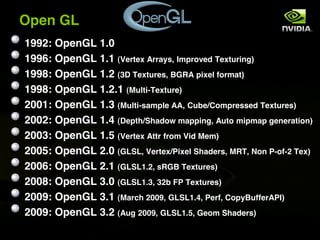

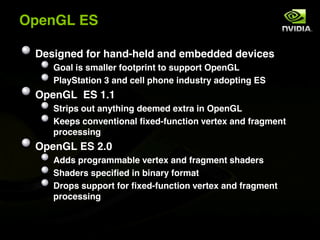

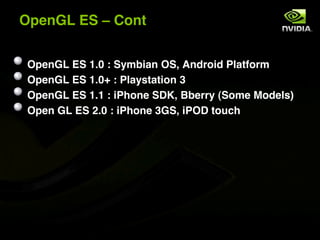

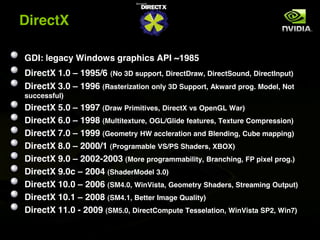

The document traces the history and evolution of 3D graphics APIs, including OpenGL and DirectX, and gives an overview of GPU programming models and architectures. It then explores how GPUs are increasingly used for general-purpose computing beyond graphics through technologies such as CUDA and OpenCL, and highlights that GPUs can deliver significant performance gains over CPUs for parallel applications.