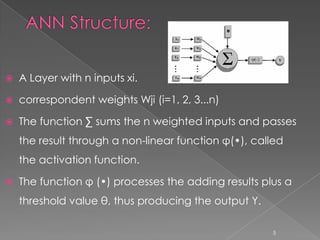

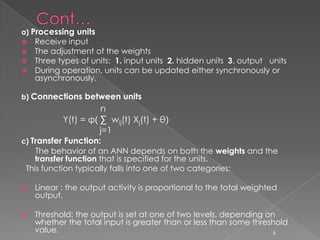

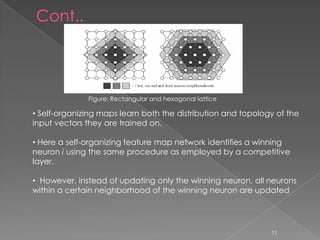

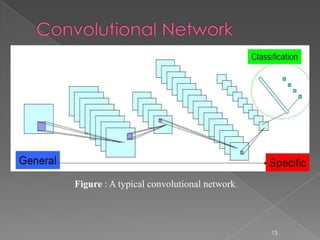

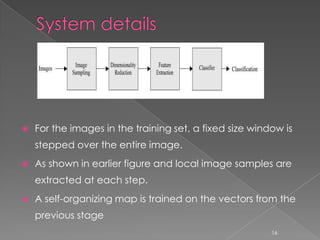

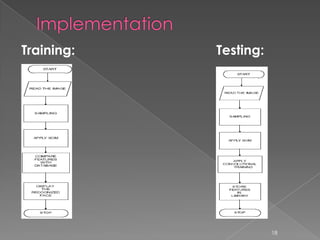

This document gives an overview of a face recognition system built on artificial neural networks. It describes the structure and processing of artificial neural networks, including convolutional networks, and explains how the system works, covering local image sampling, the self-organizing map, and the convolutional network. It then details the implementation and applications of the system for face recognition and concludes with the benefits of the system.
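To make the first step mentioned above more concrete, here is a minimal sketch of local image sampling. It assumes that the term means stepping a fixed-size window over the image and flattening each window into a vector. The overview does not give a window size or step, so the function name `sample_local_windows`, the 5x5 window, the step of 4, and the toy 9x9 image are illustrative assumptions, not details of the described system.

```python
def sample_local_windows(image, window=5, step=4):
    """Slide a window x window patch over a 2-D image (a list of rows)
    and return each patch flattened into a single vector.

    The window and step defaults are illustrative assumptions only.
    """
    height, width = len(image), len(image[0])
    vectors = []
    for top in range(0, height - window + 1, step):
        for left in range(0, width - window + 1, step):
            # Flatten the patch row by row into one list of pixel values.
            patch = [image[top + r][left + c]
                     for r in range(window)
                     for c in range(window)]
            vectors.append(patch)
    return vectors


# Toy 9x9 "image" whose pixel value encodes its position (row * 9 + col).
image = [[row * 9 + col for col in range(9)] for row in range(9)]
vectors = sample_local_windows(image, window=5, step=4)
print(len(vectors), len(vectors[0]))
```

In a pipeline like the one summarized here, vectors of this kind would presumably be handed to the self-organizing map before reaching the convolutional network; this sketch covers only the sampling step.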