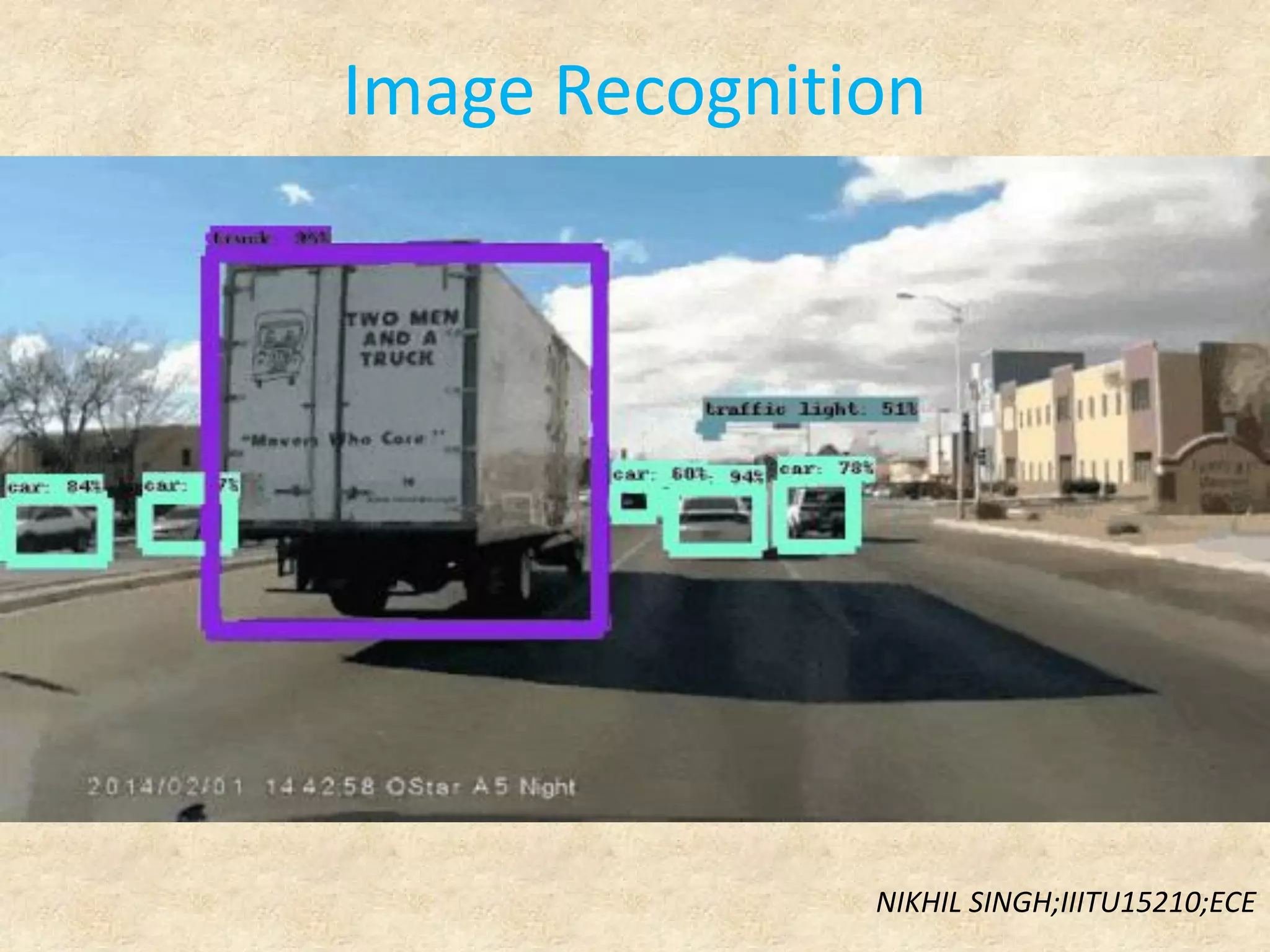

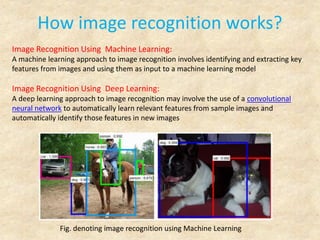

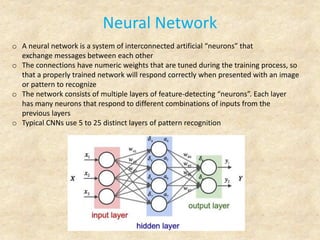

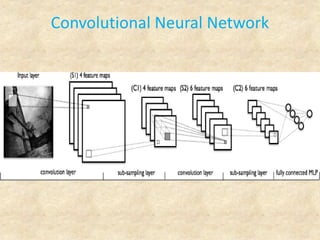

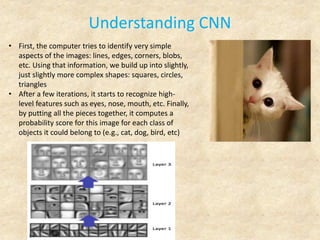

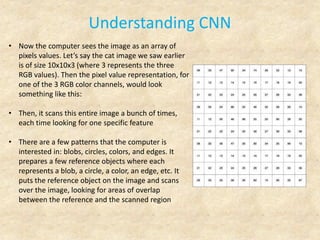

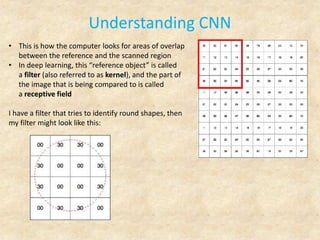

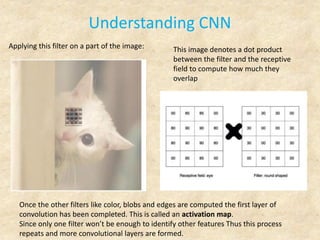

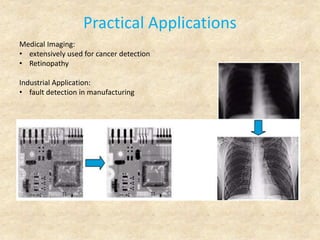

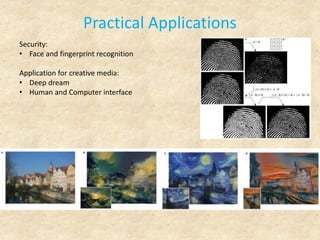

Image recognition is a technology that identifies and detects objects in images and videos. It plays an important role in fields such as robotics, security, and medical imaging. It uses machine learning and deep learning techniques, including convolutional neural networks, to extract features from visual data and make decisions based on them. Its applications range from cancer detection to social media marketing insights, and it has significant potential for further adoption across industries.
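
To make the feature-extraction idea concrete, here is a minimal sketch of the core operation in a convolutional layer: sliding a small kernel over an image to produce a feature map. The toy image, the hand-picked vertical-edge kernel, and the helper names `conv2d` and `relu` are illustrative assumptions, not taken from the slides. A real convolutional neural network learns its kernel values from training data rather than using fixed ones.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation a convolutional layer applies."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the image patch under the kernel
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]


# Toy 4x6 grayscale image (assumed for illustration): dark left half, bright right half
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-picked kernel that responds to dark-to-bright vertical edges
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = relu(conv2d(image, kernel))
for row in feature_map:
    print(row)
```

The feature map is nonzero only where the kernel overlaps the boundary between the dark and bright regions, which is the sense in which a convolution "extracts" an edge feature. Later layers in a network combine many such maps to reach a decision, such as a class label.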