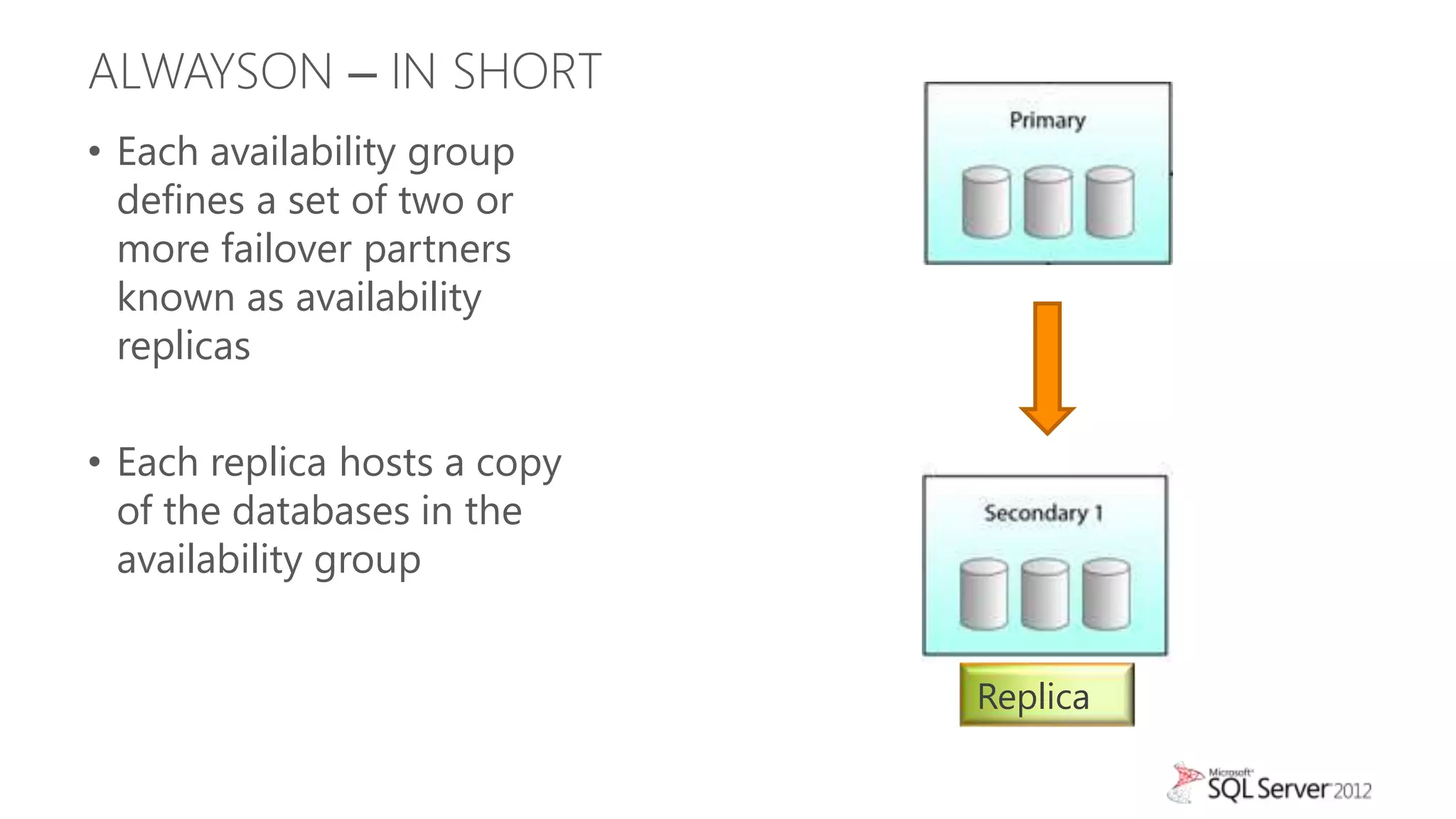

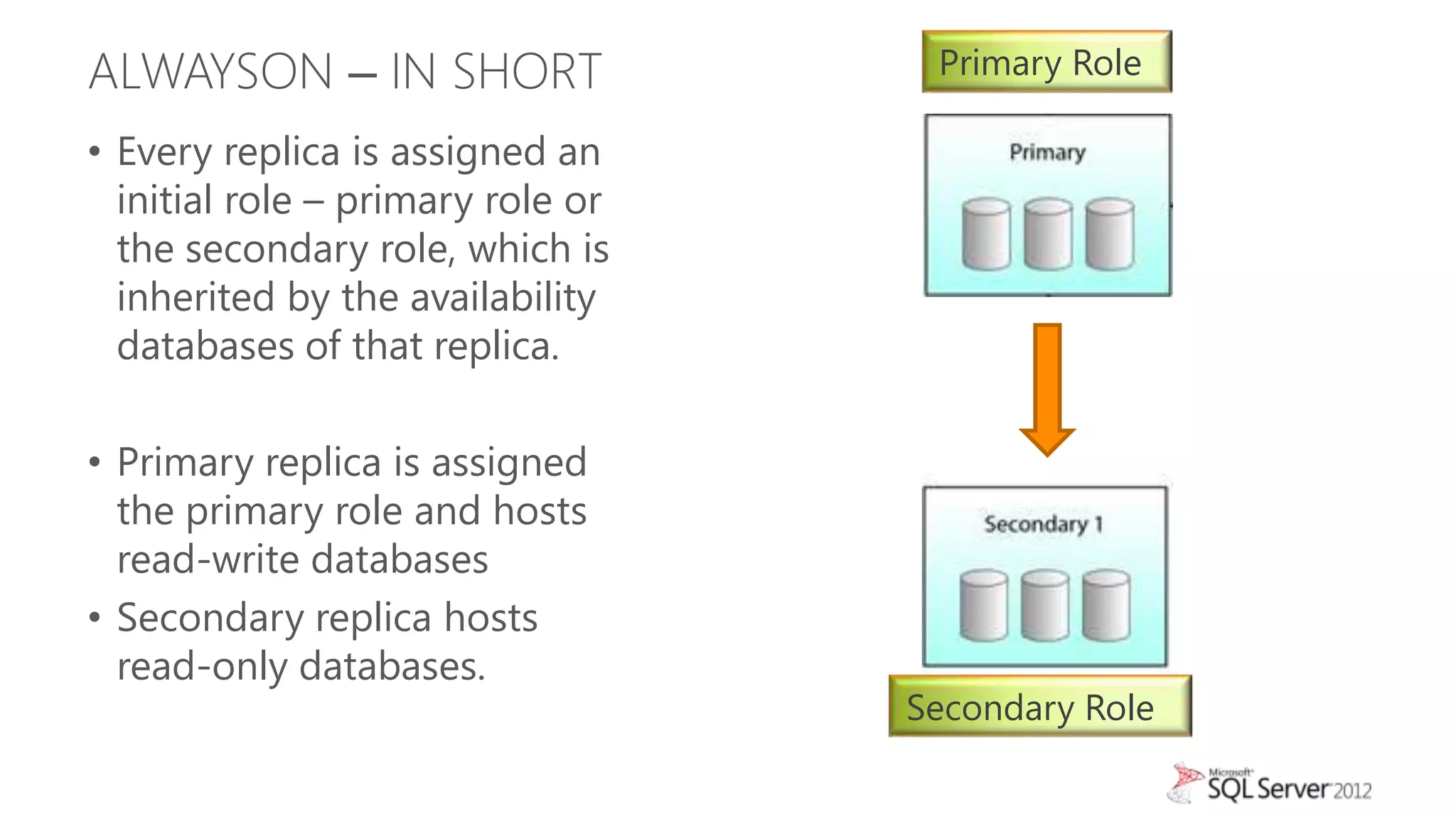

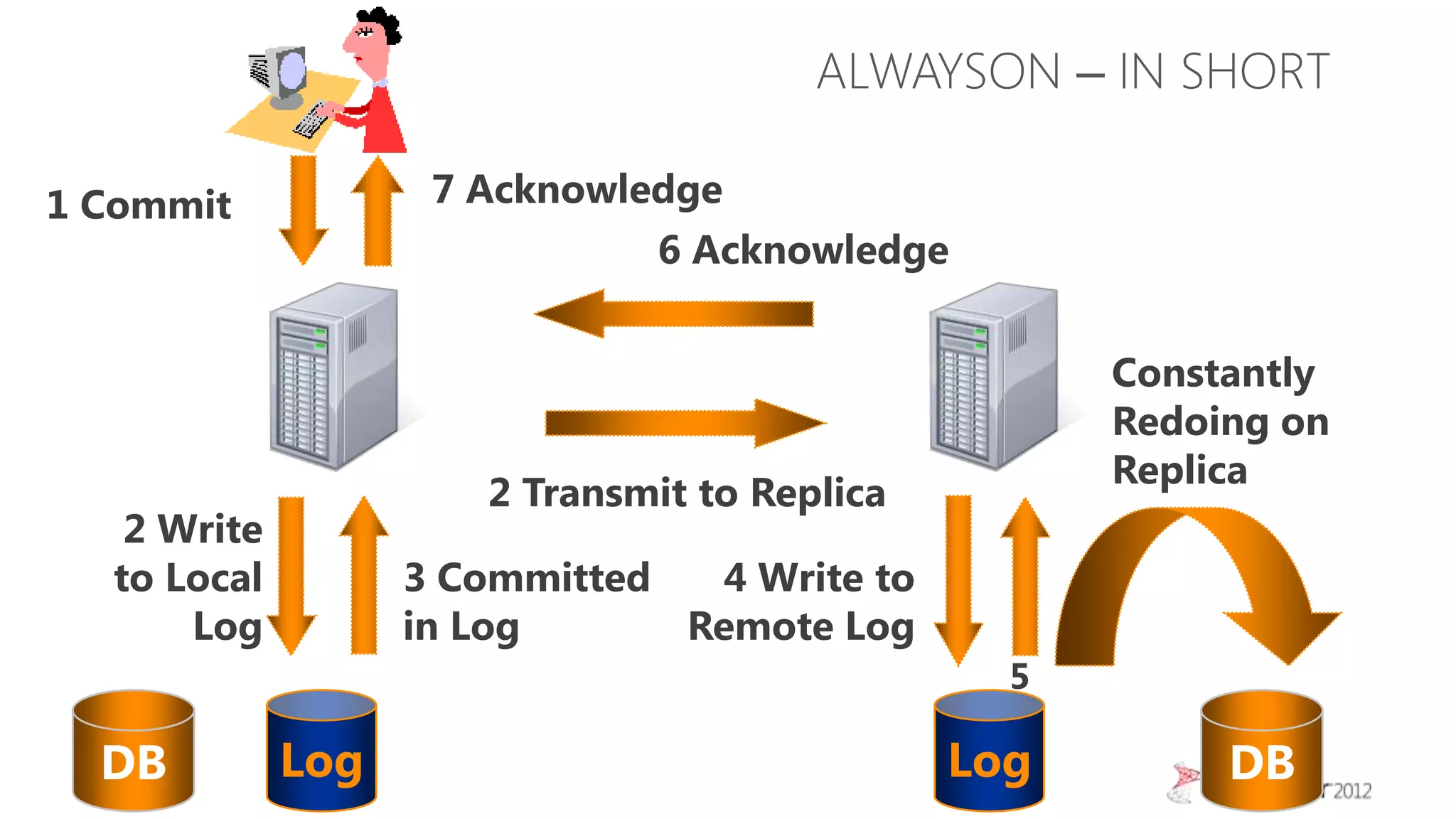

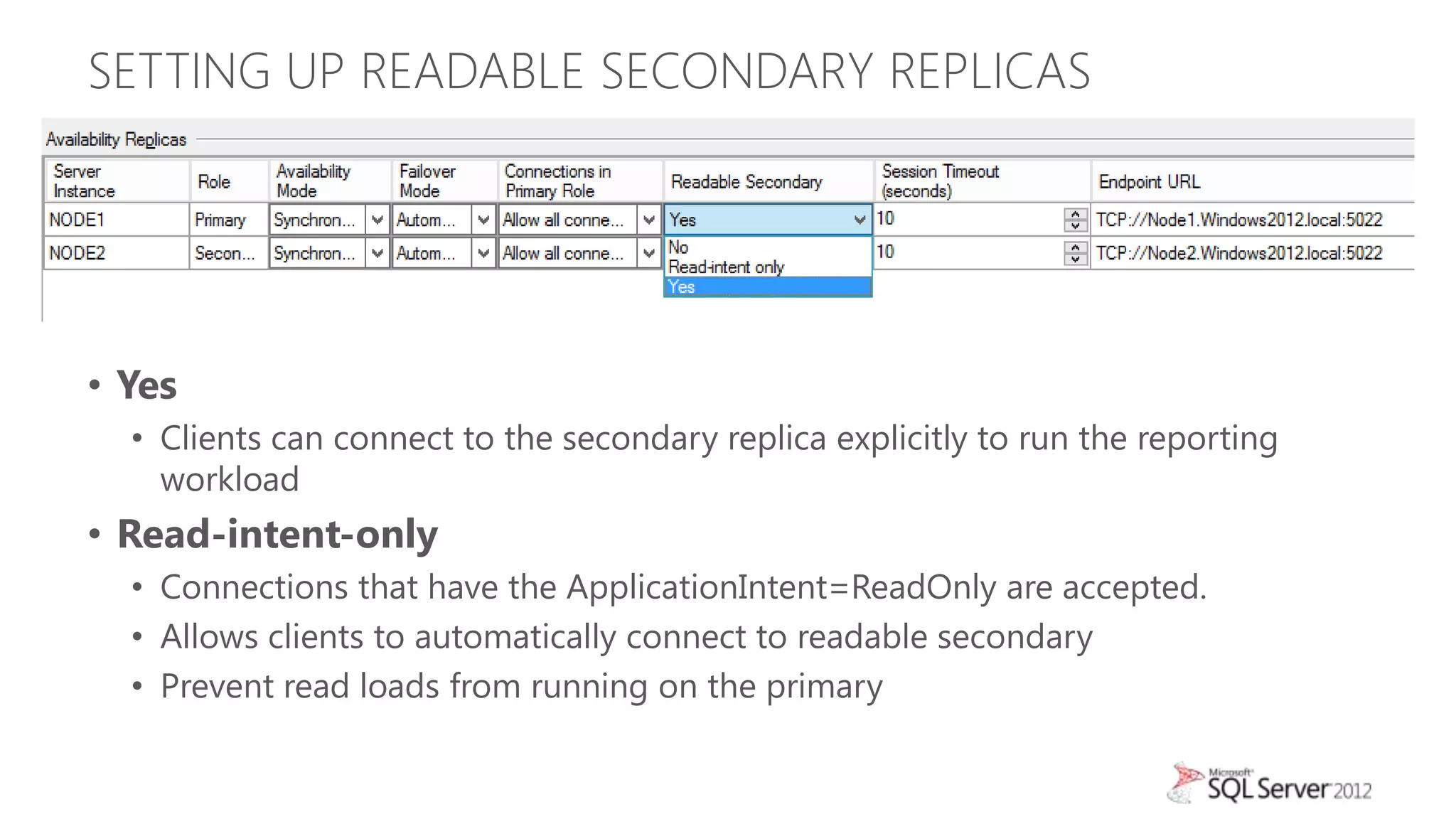

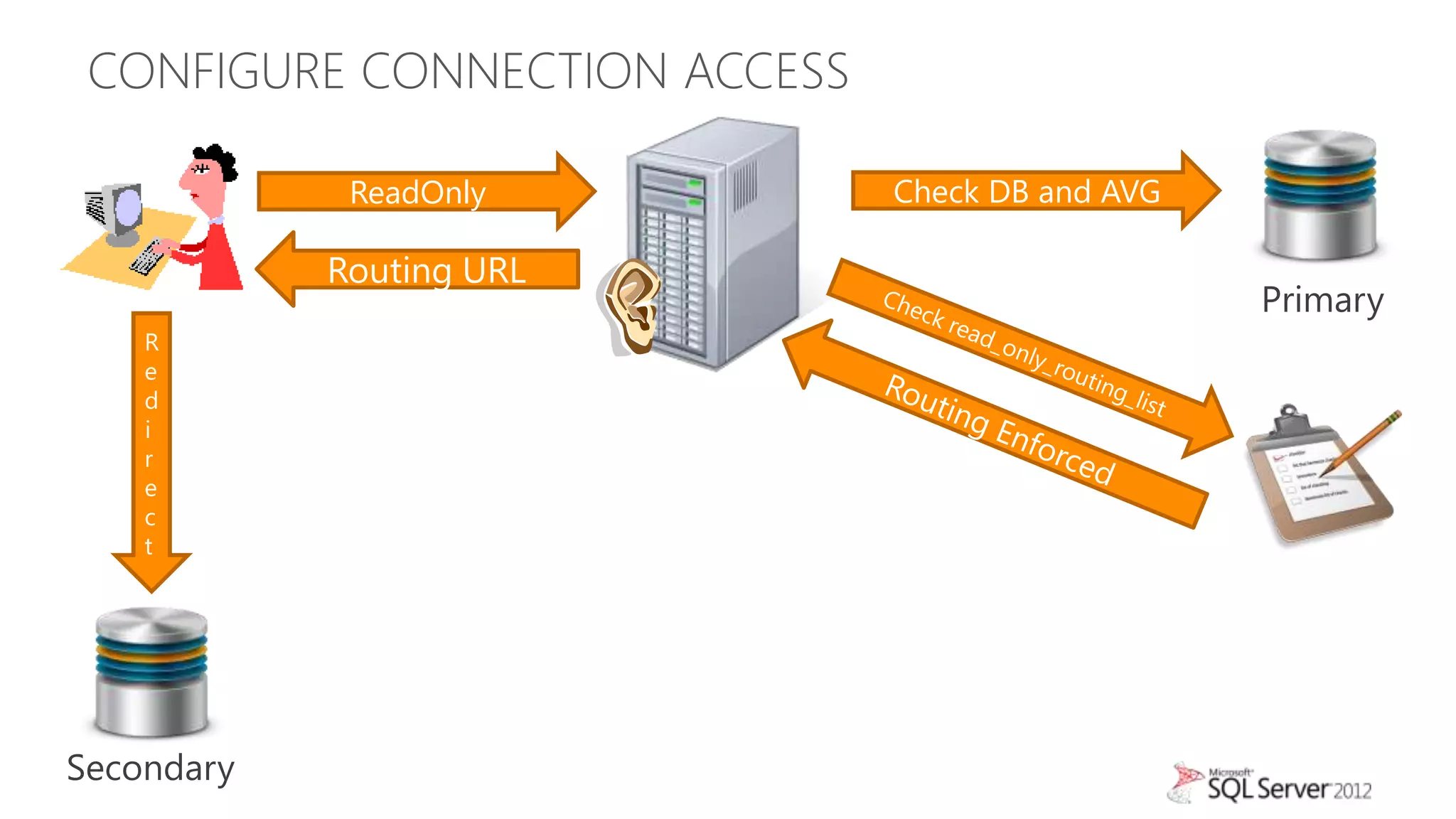

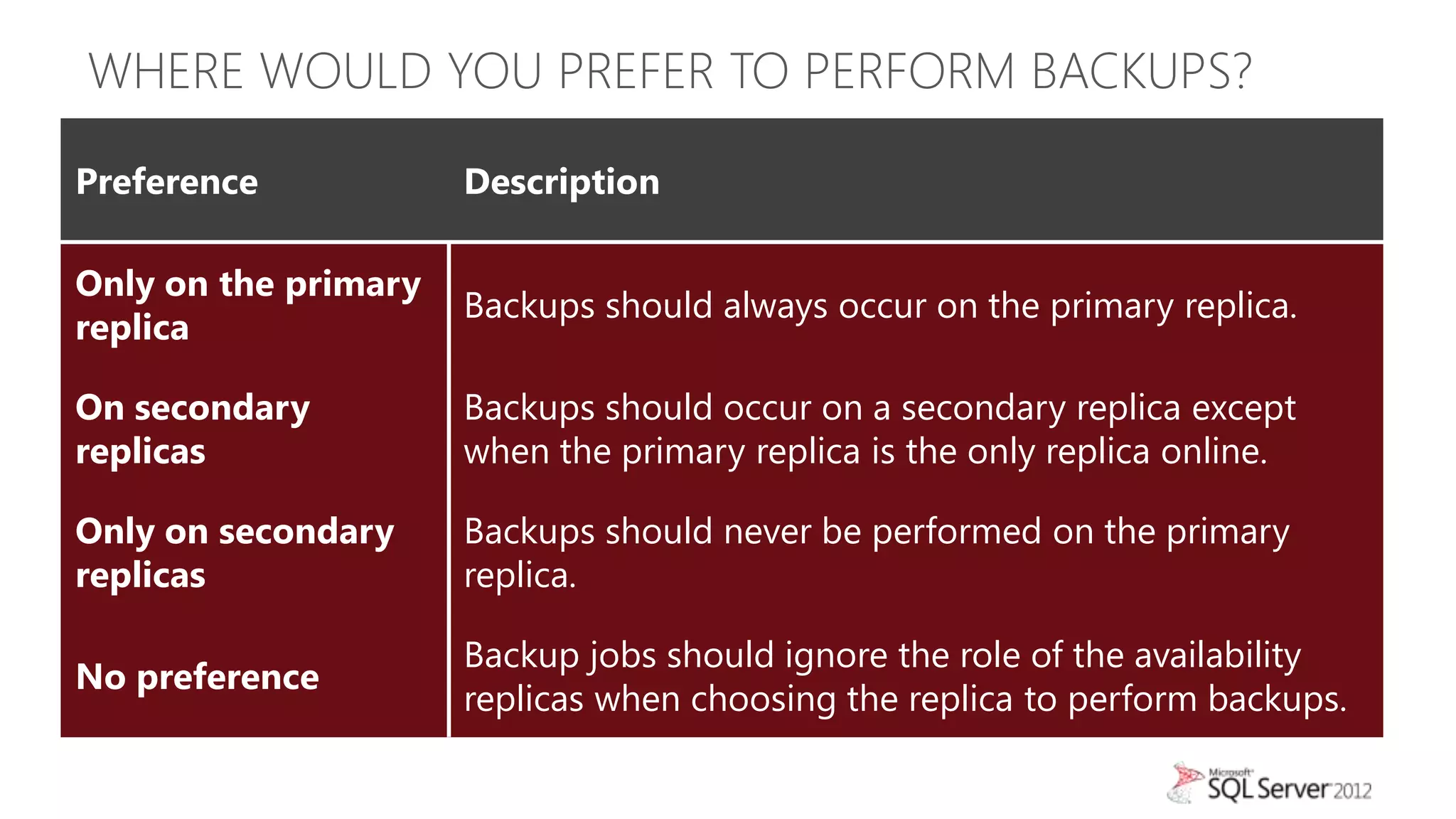

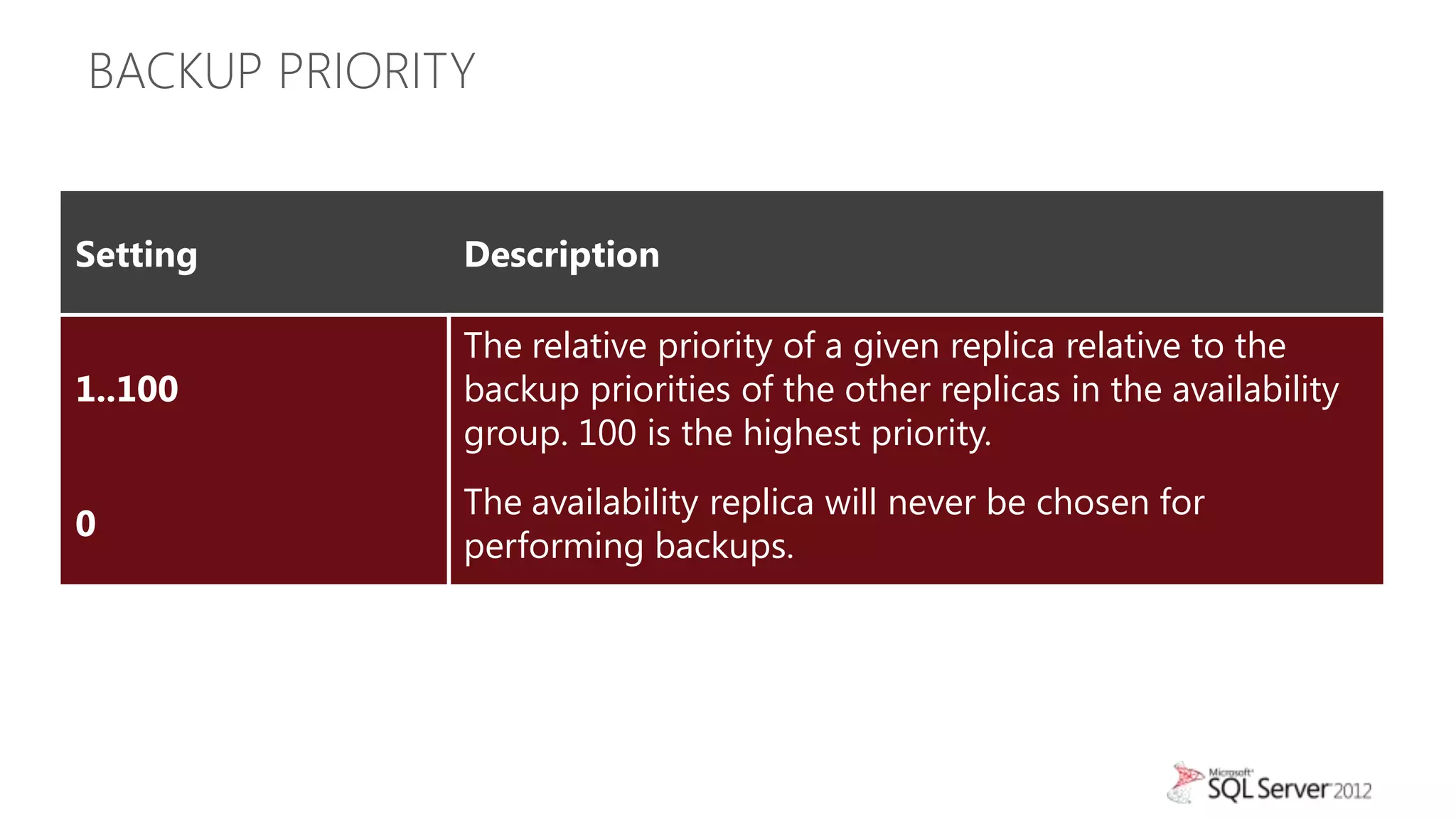

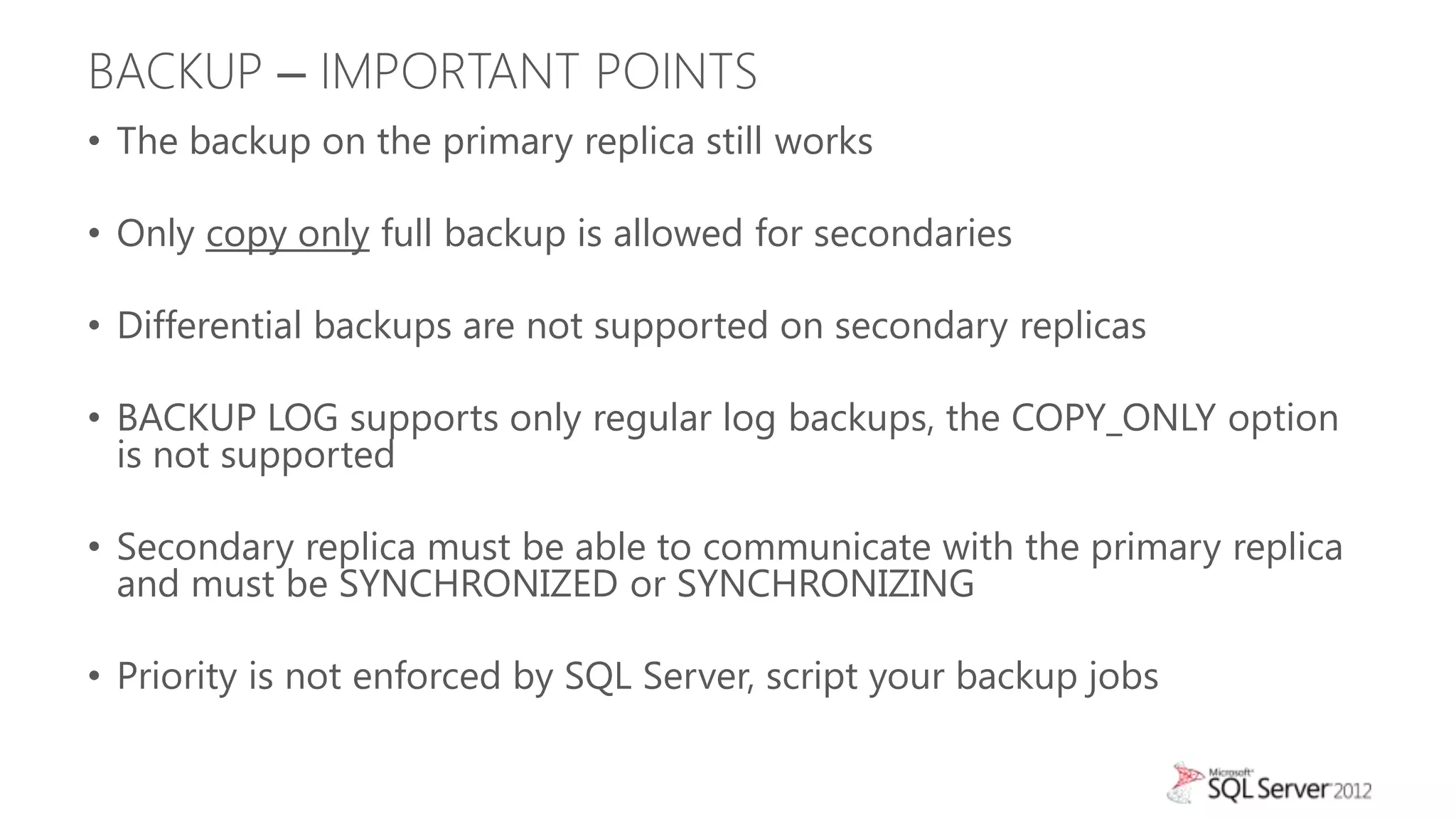

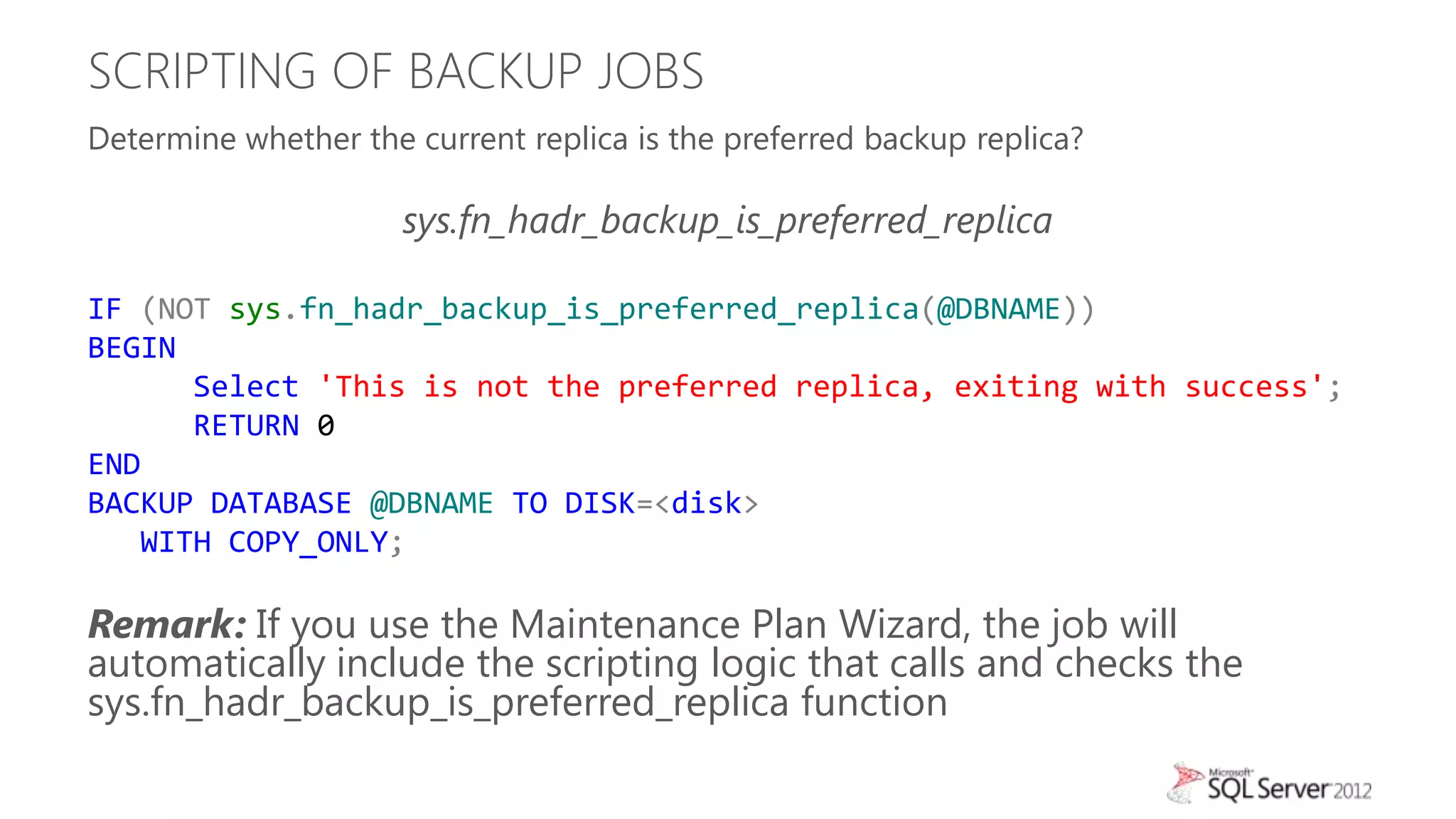

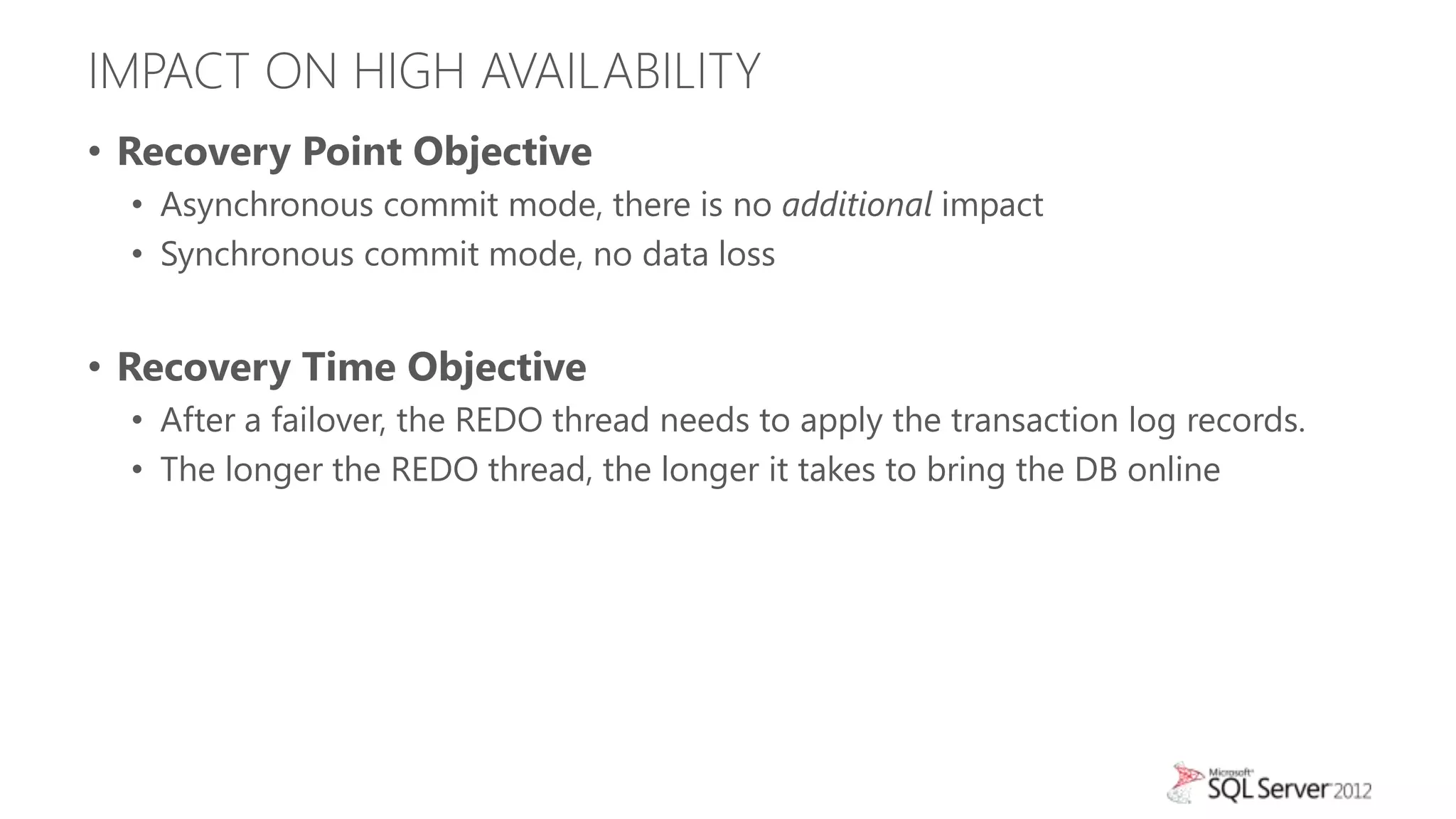

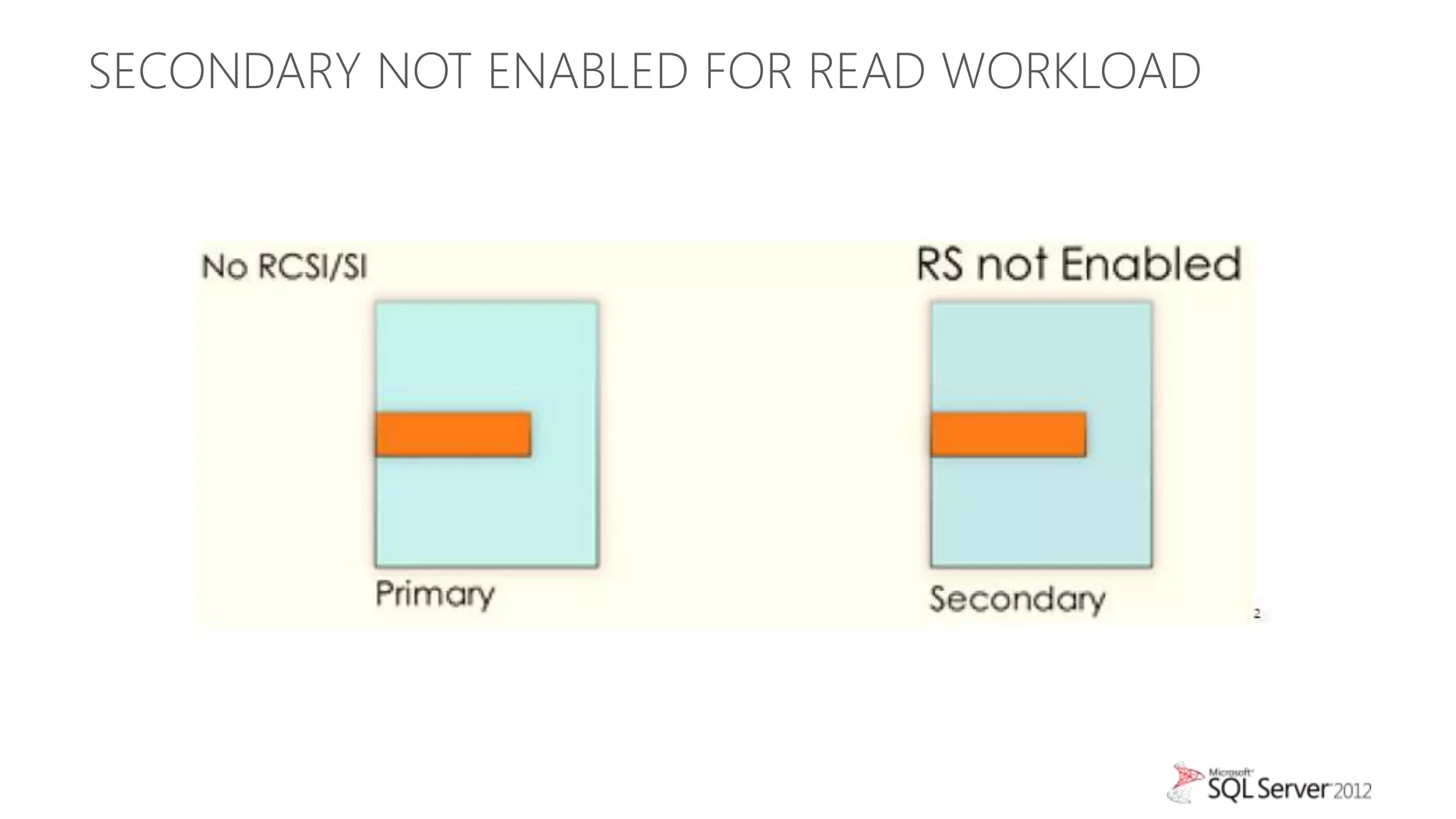

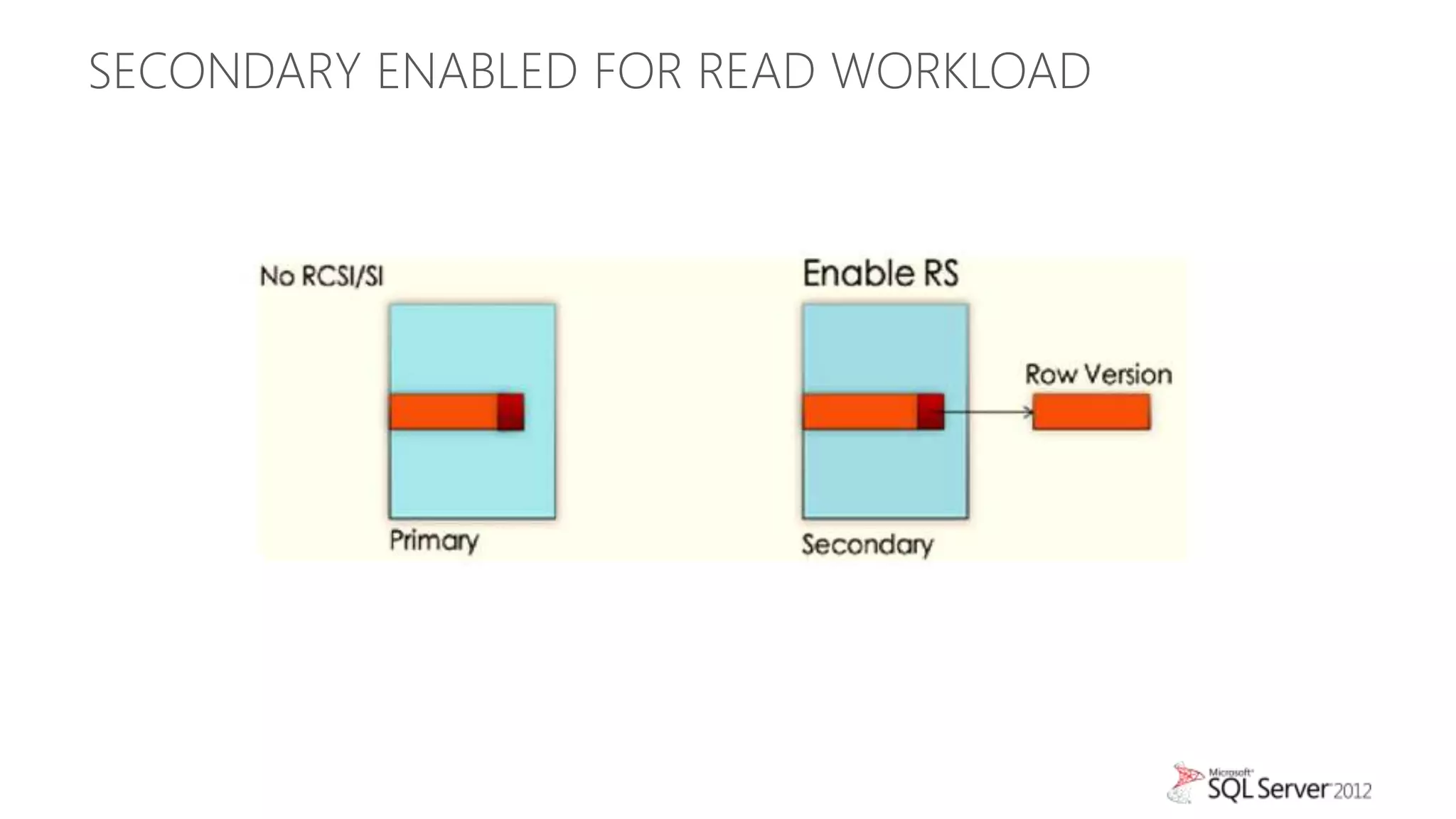

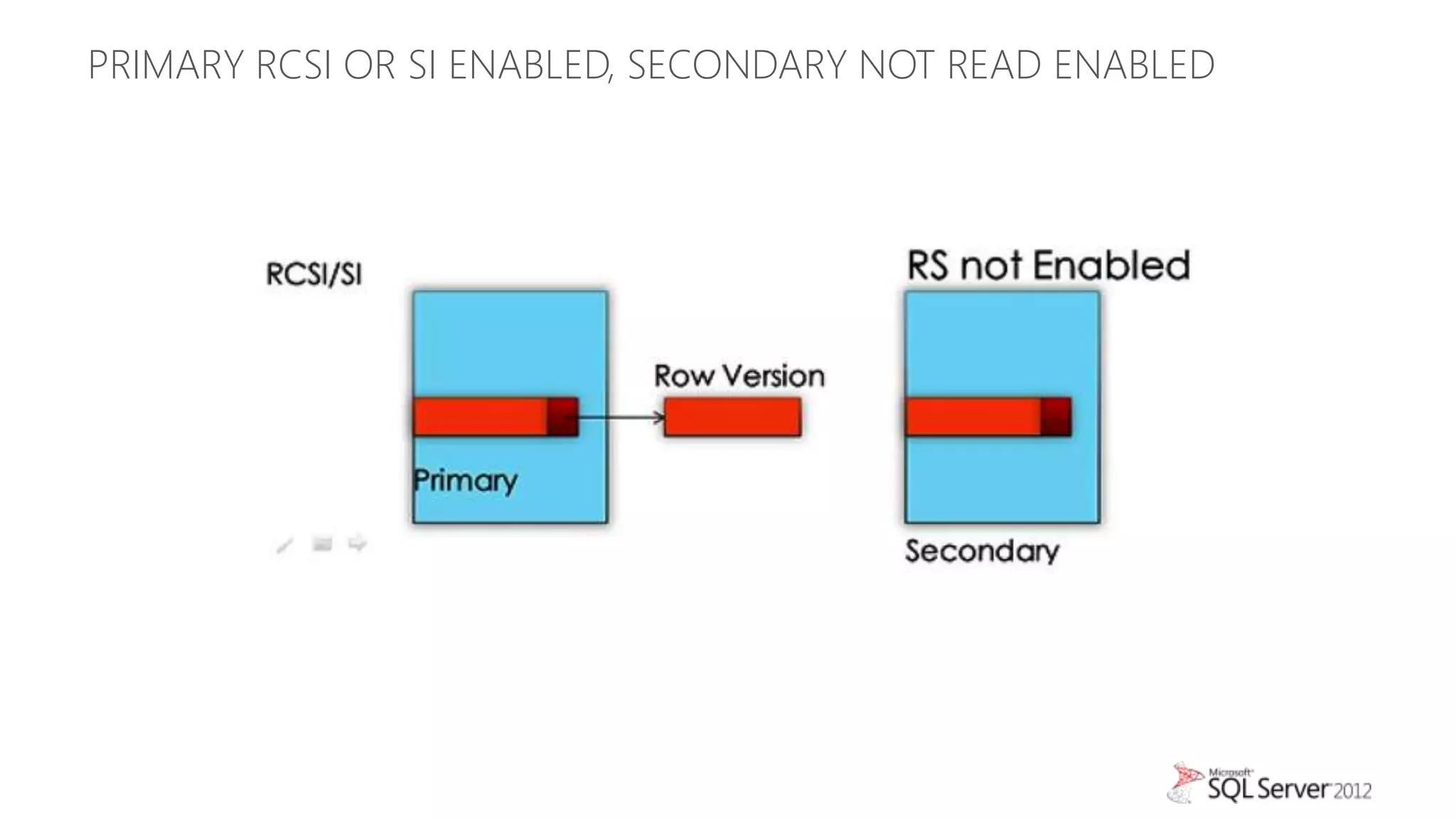

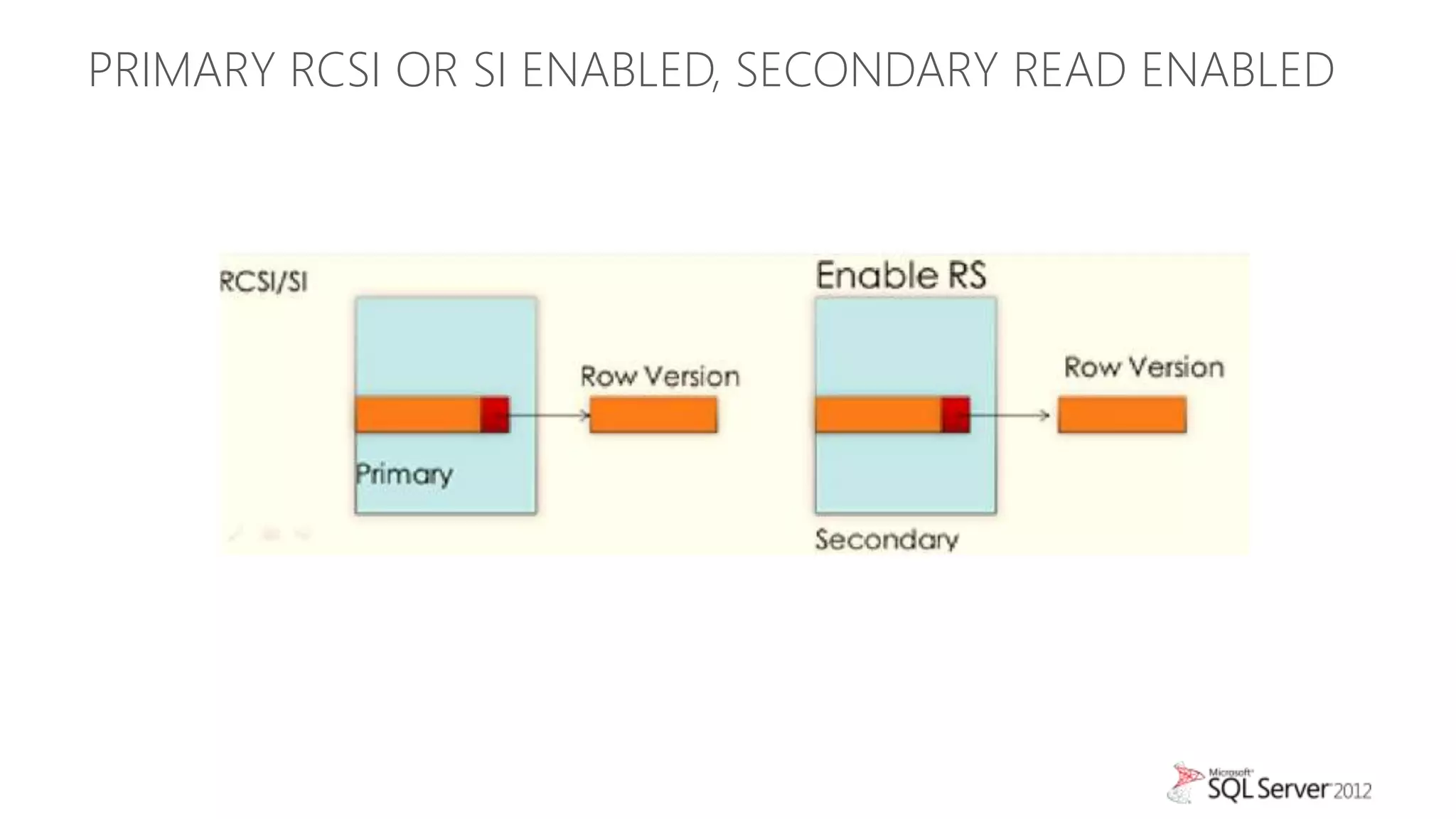

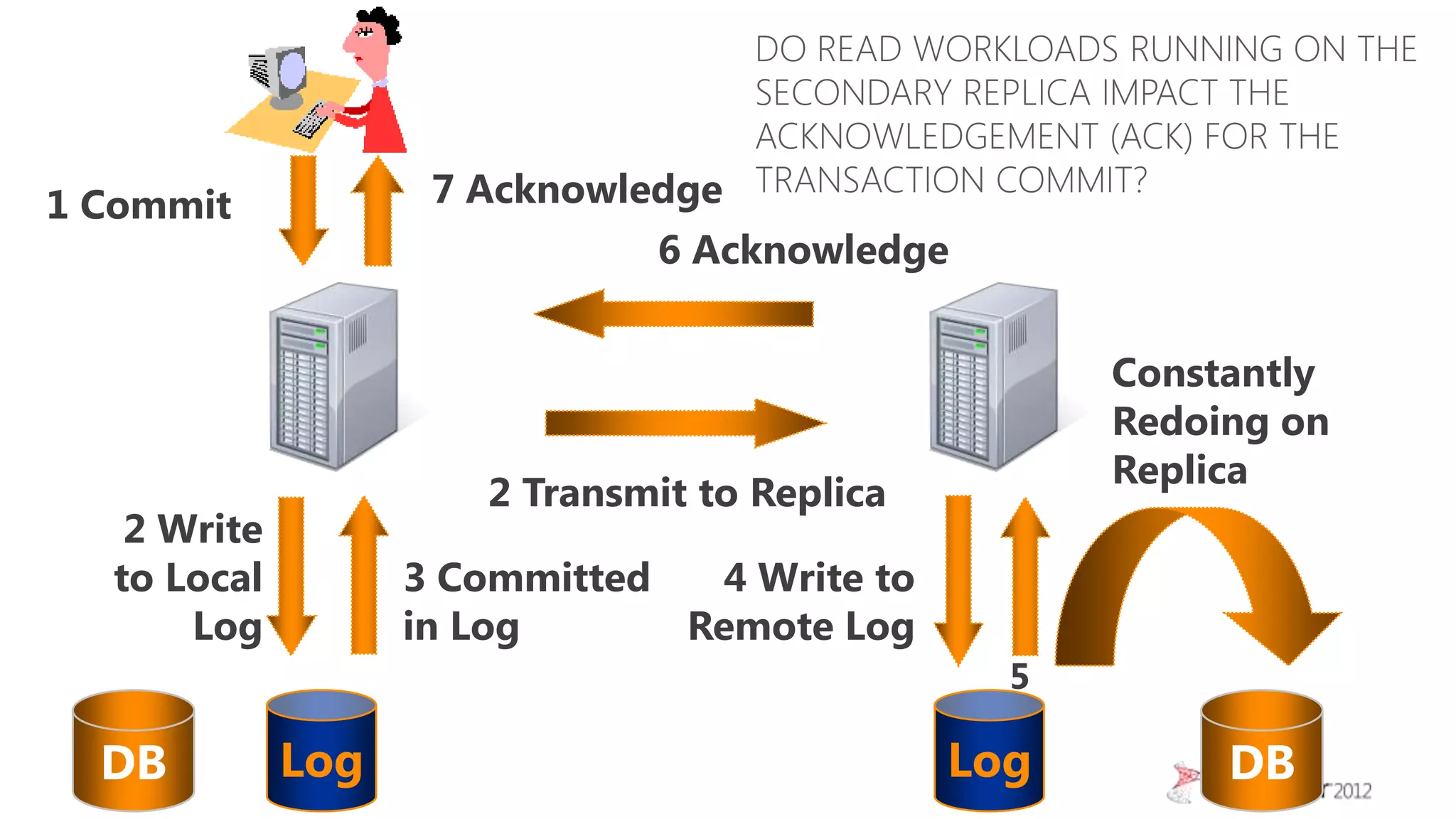

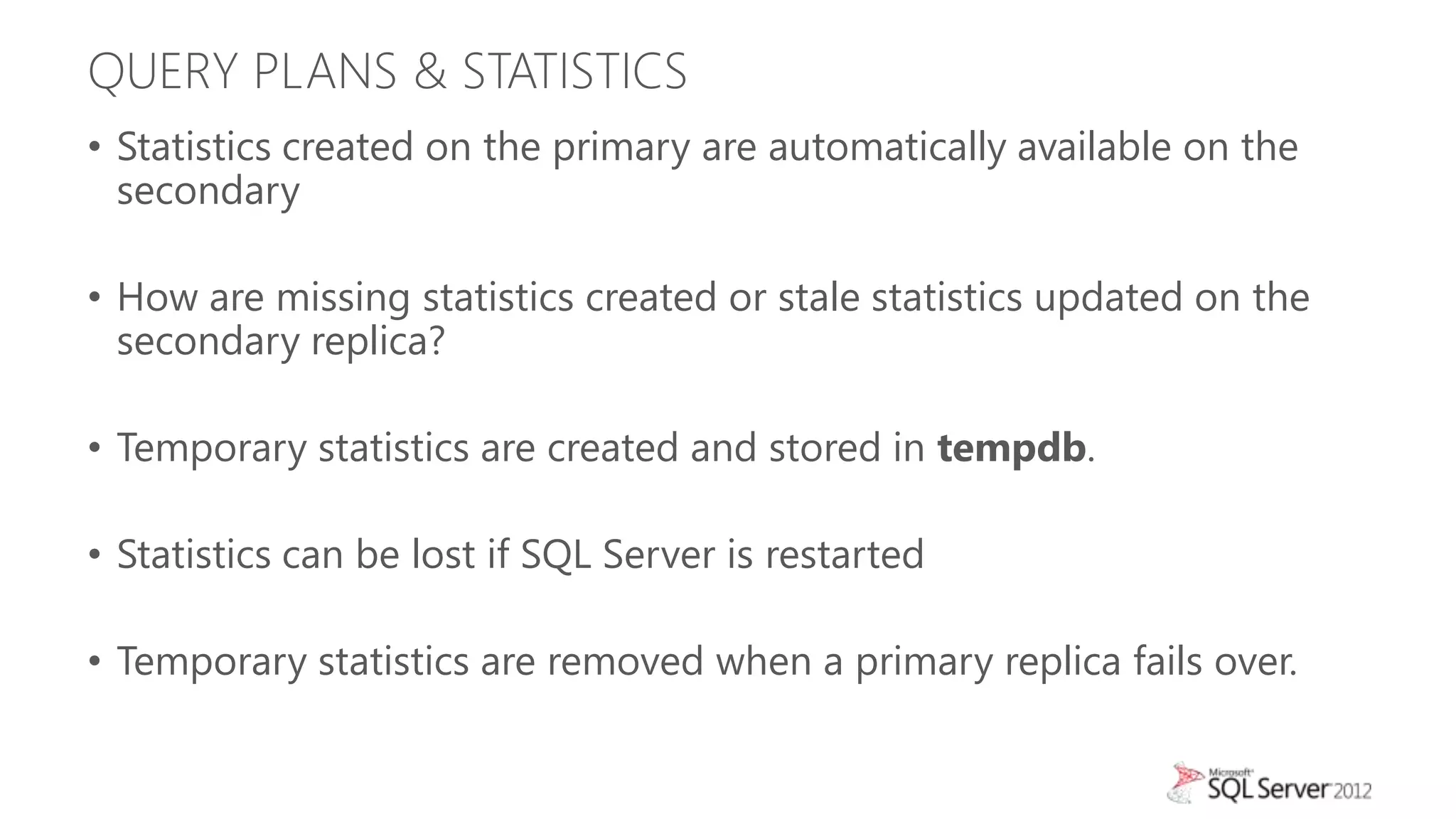

This document summarizes the agenda for a TechNet Live meeting about using SQL Server AlwaysOn Availability Groups to offload reporting workloads from production servers. The agenda covers an introduction to AlwaysOn, setting up readable secondary replicas, configuring connection access, performing backups on secondary replicas, and the impact of the offloaded workloads on high availability, primary servers, and query plans.