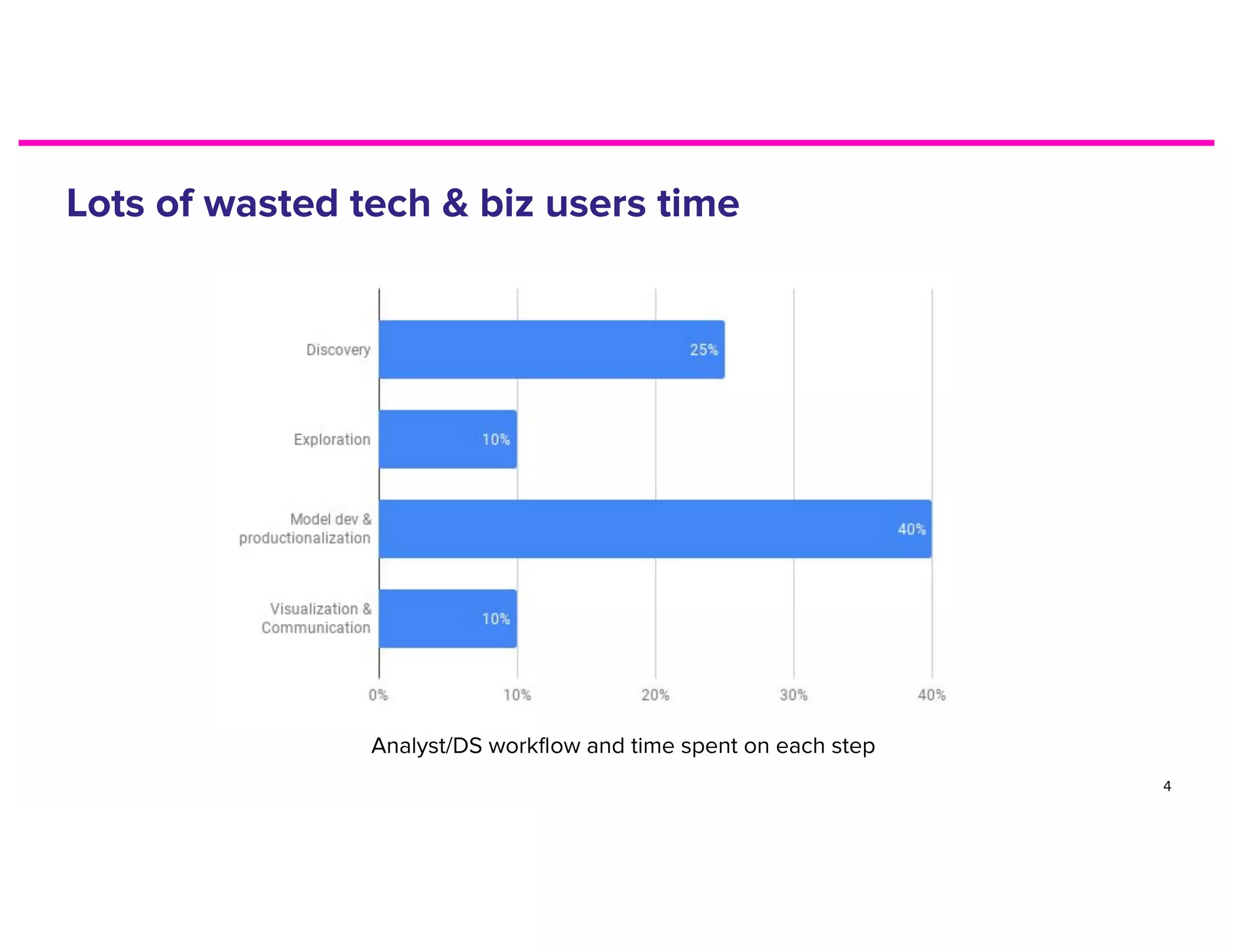

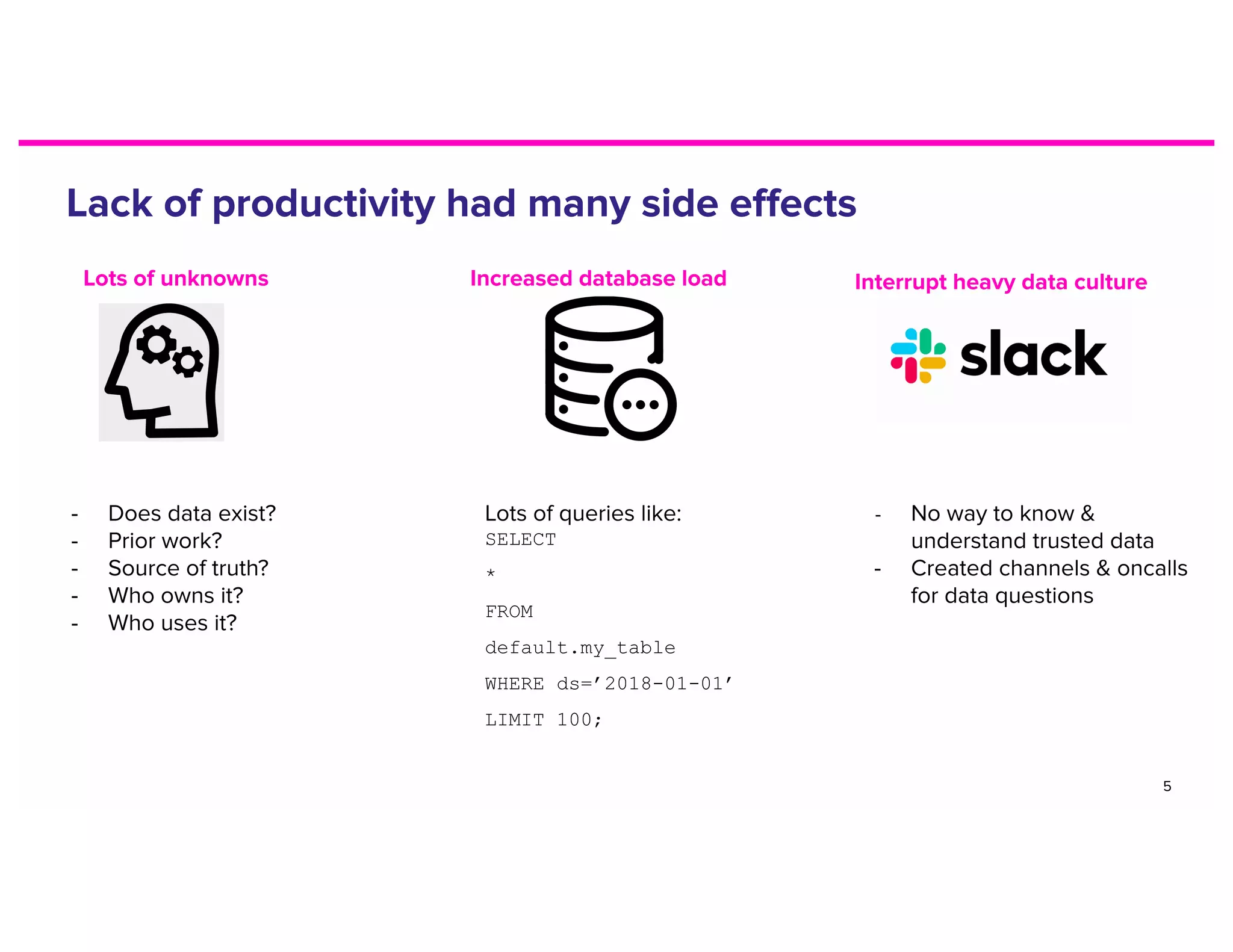

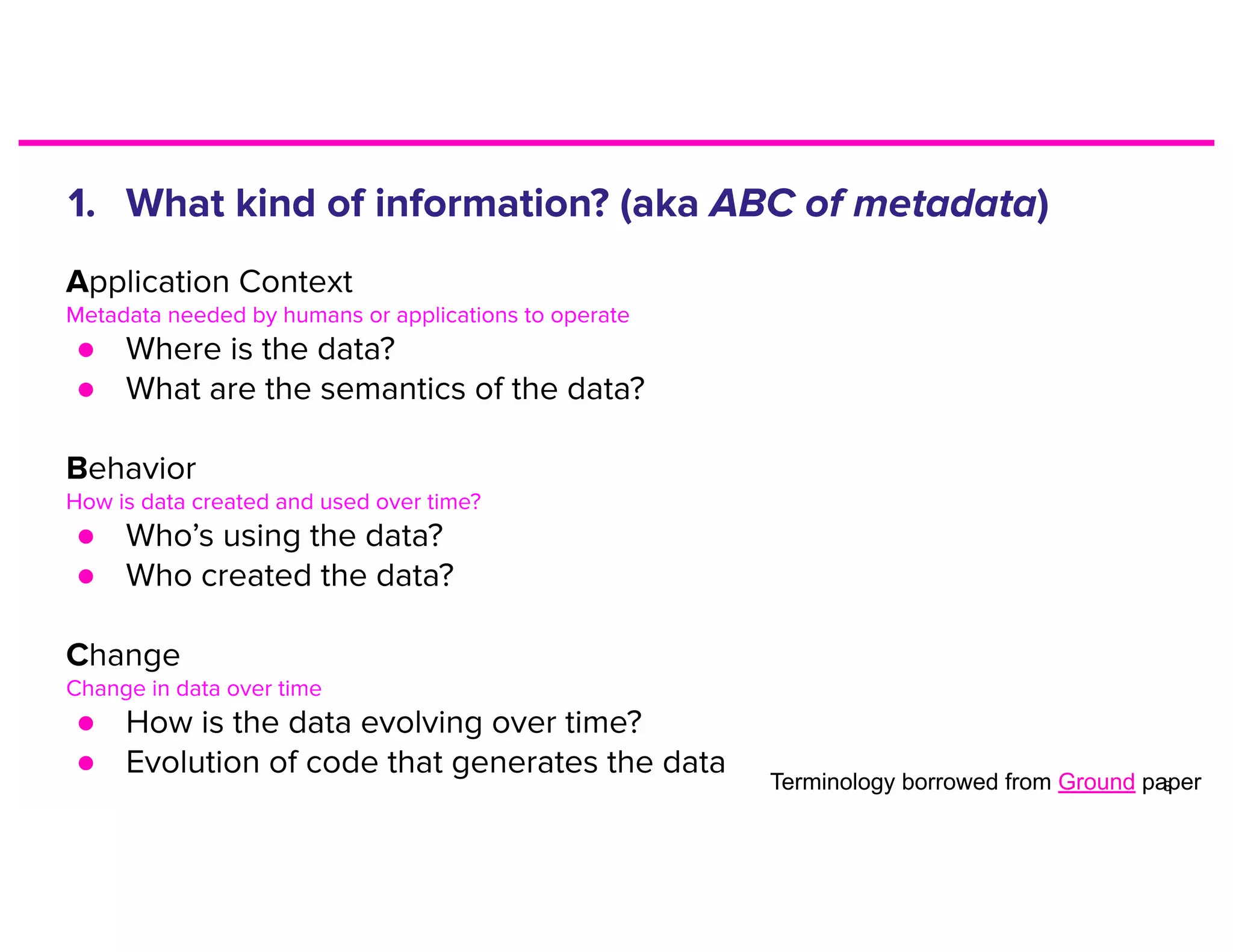

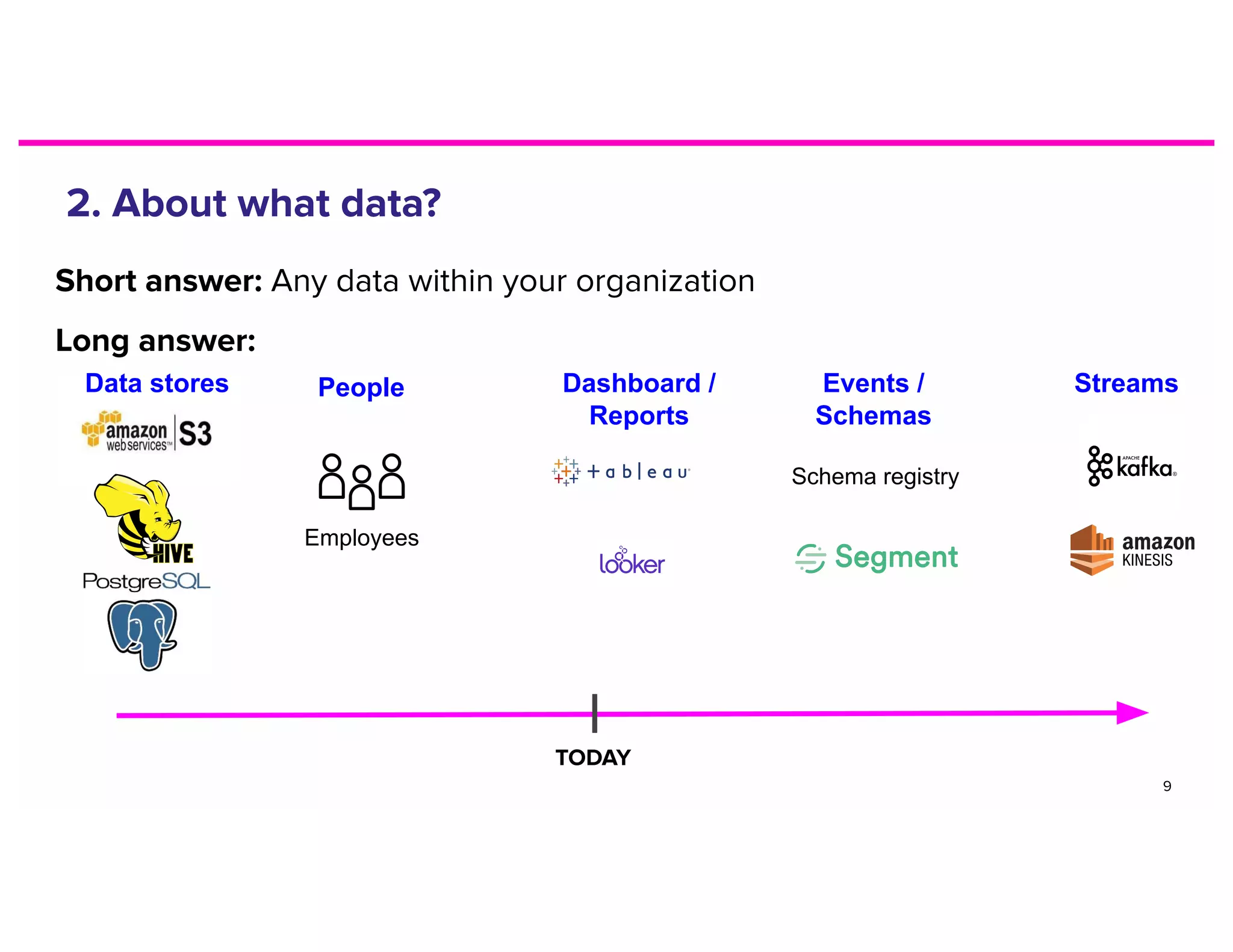

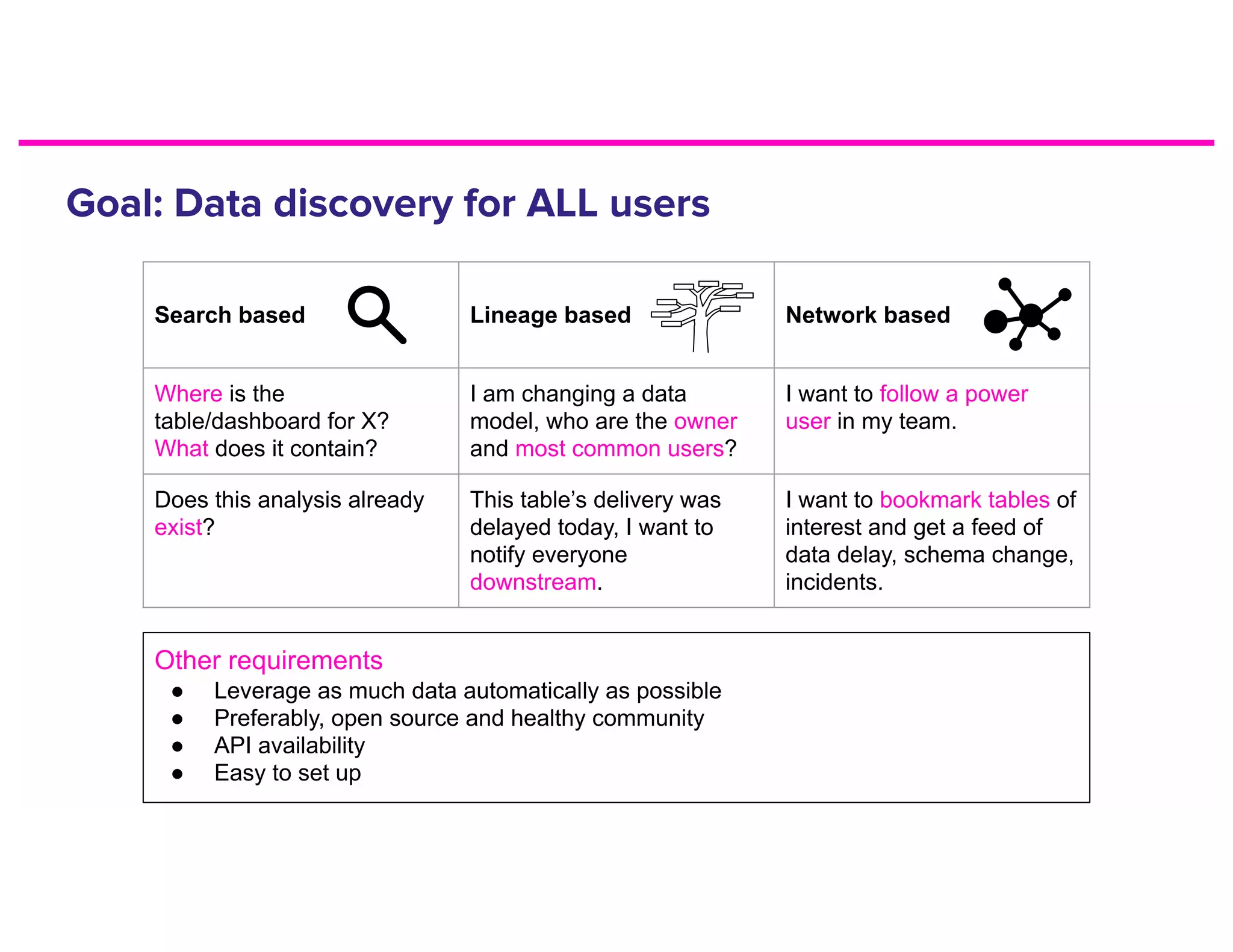

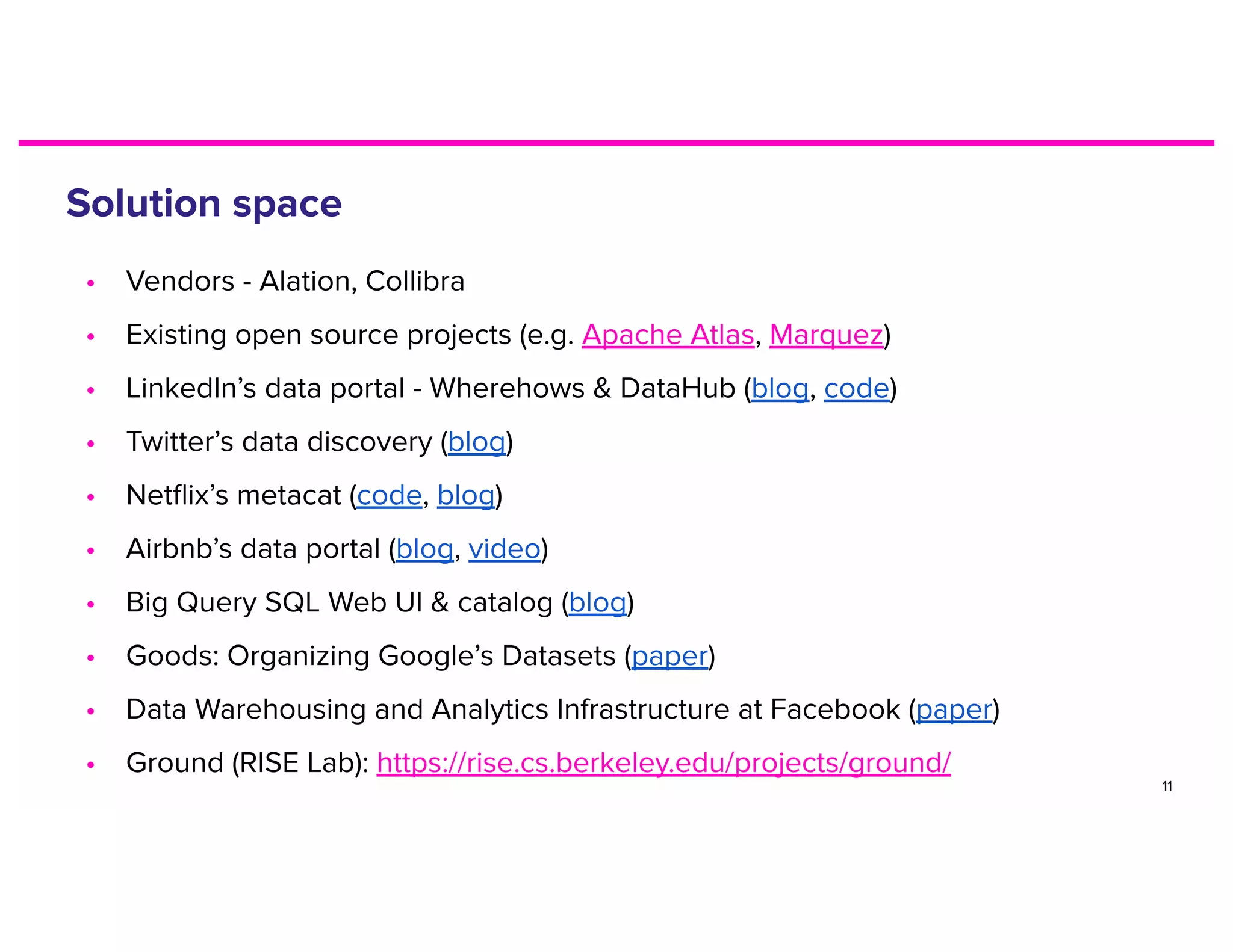

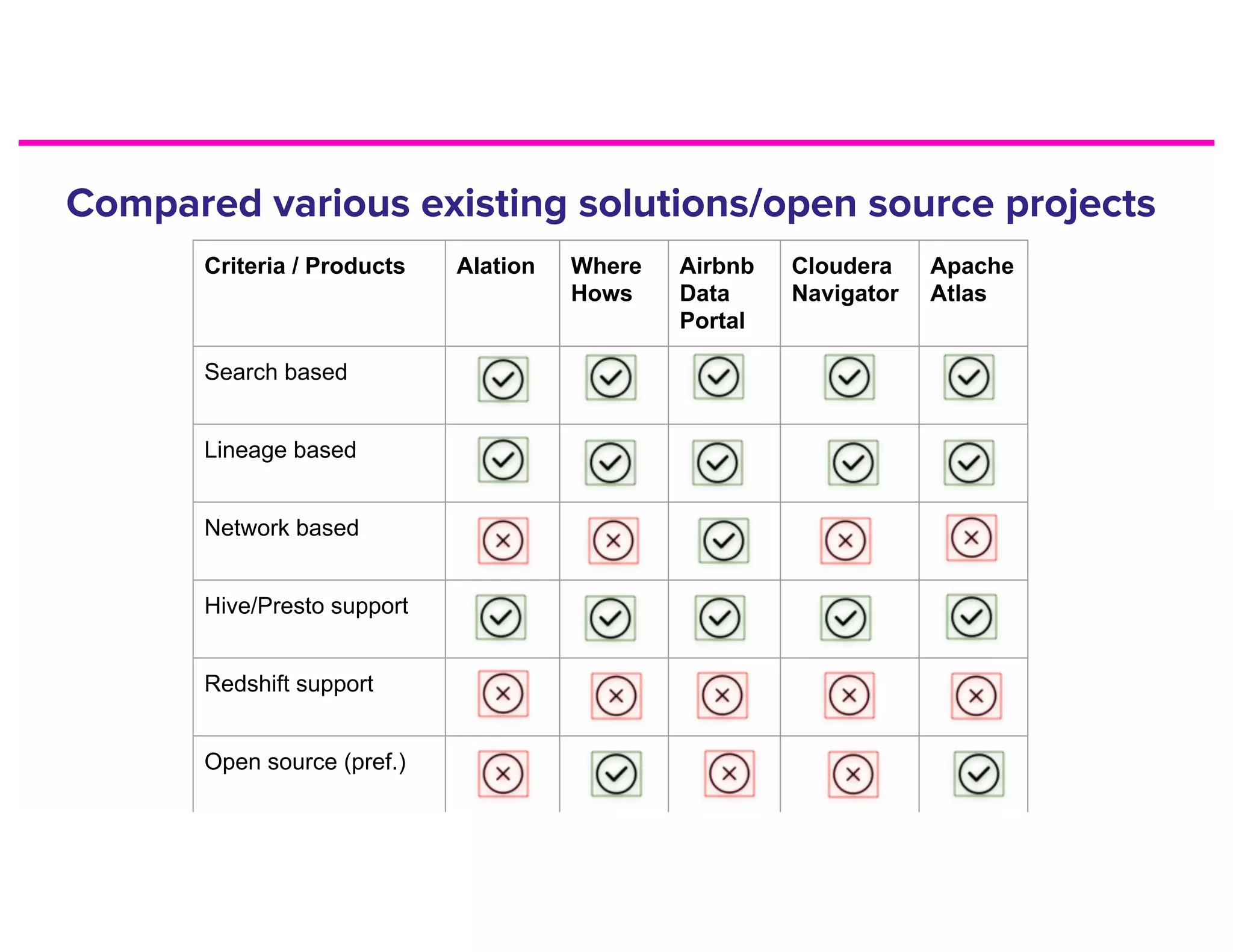

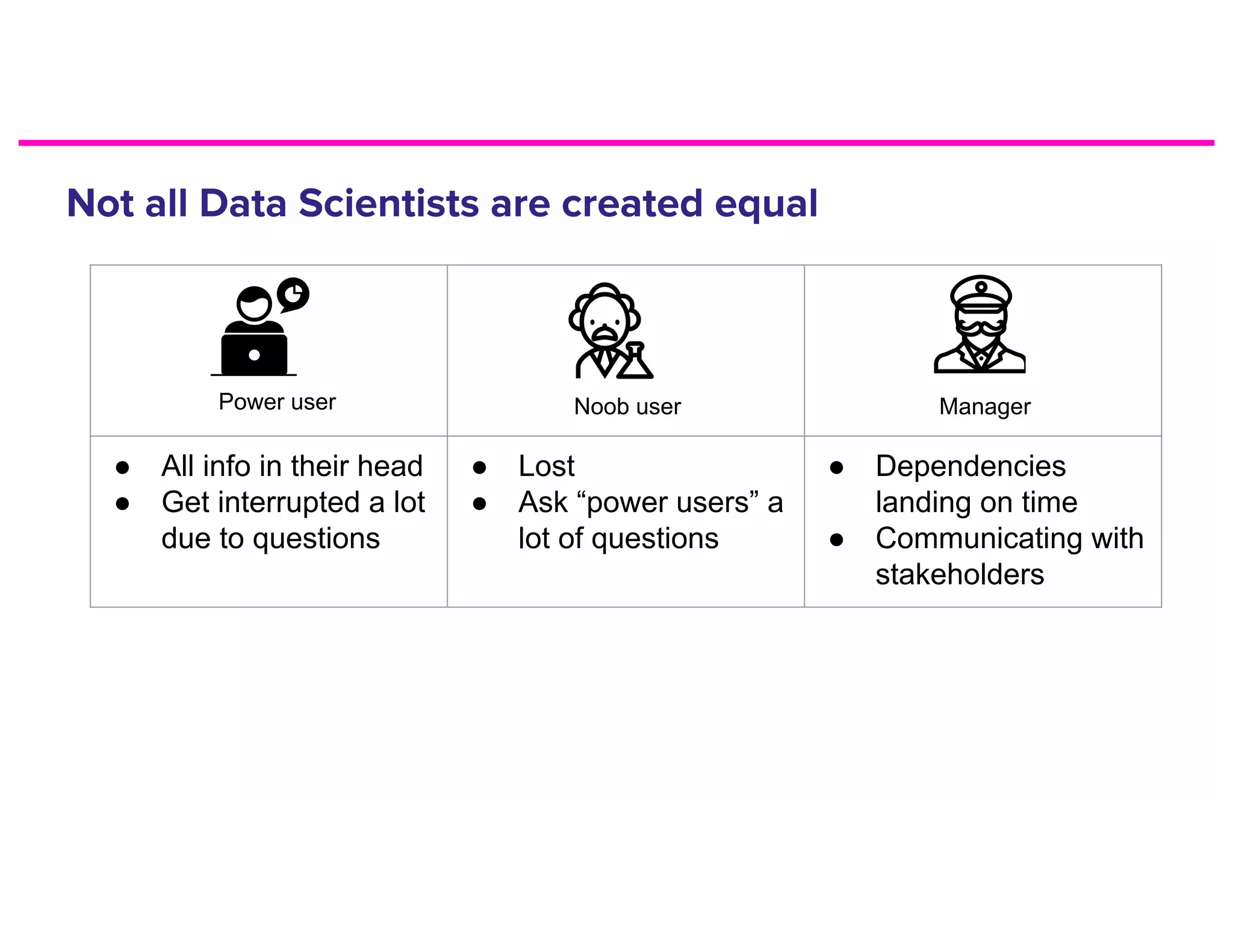

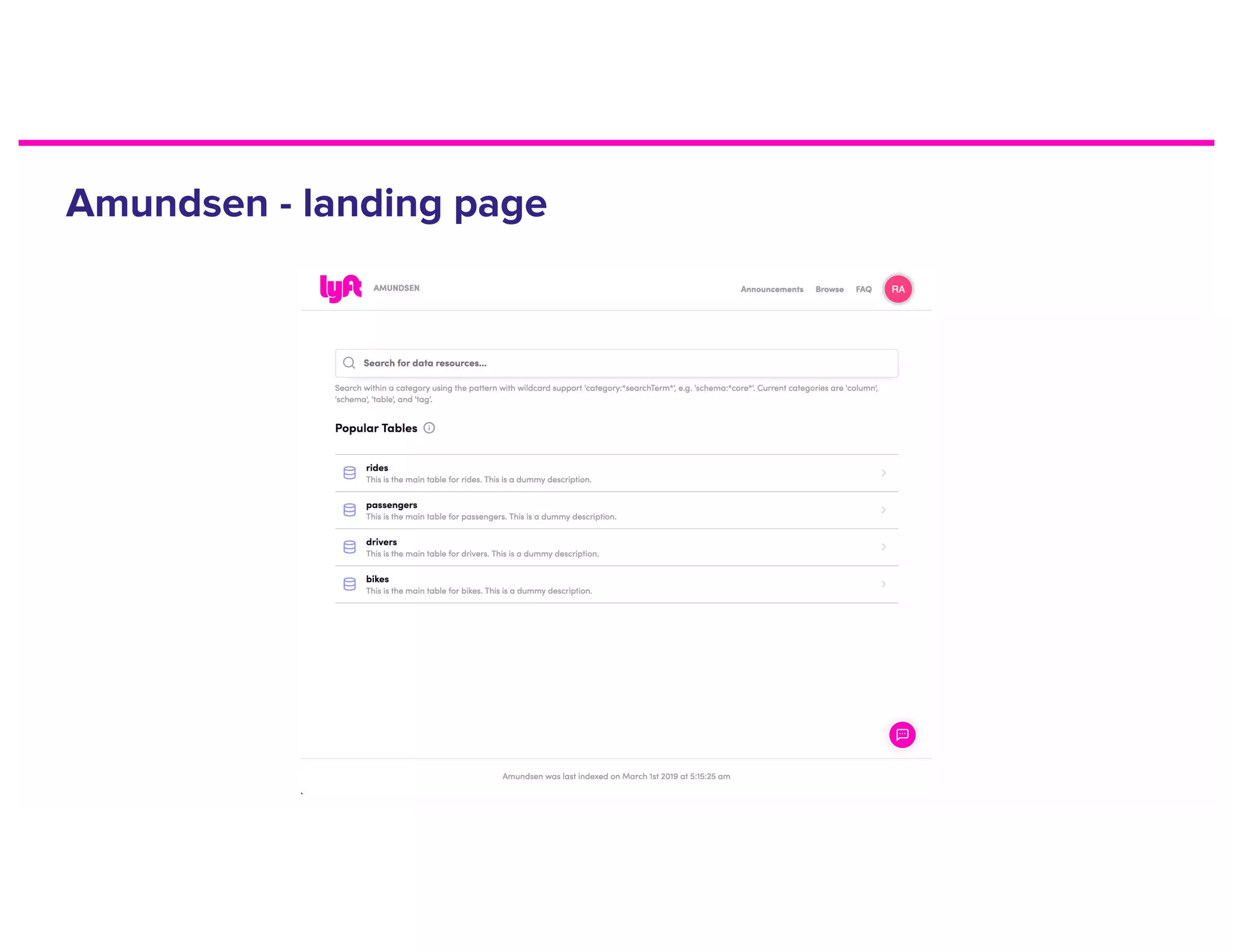

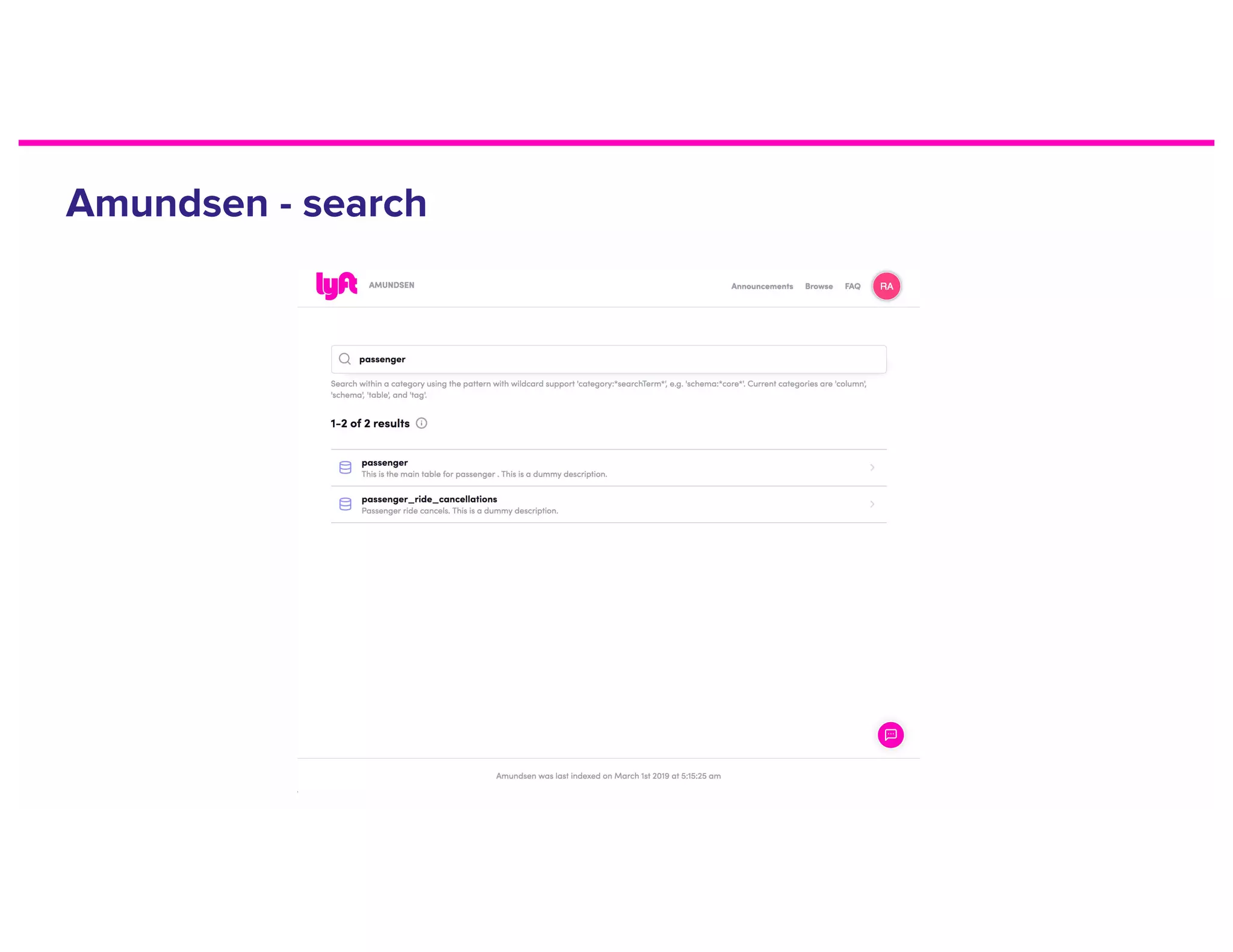

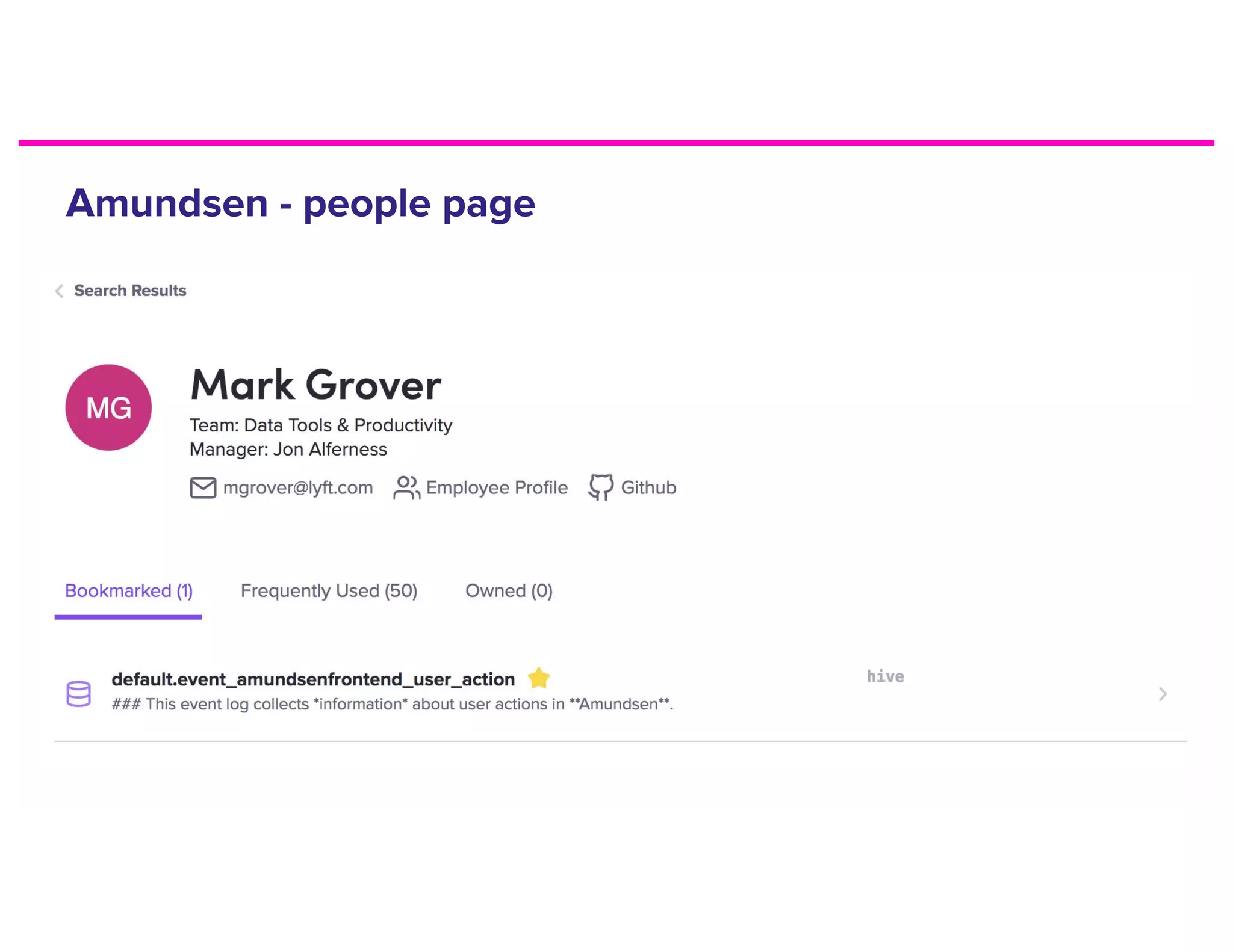

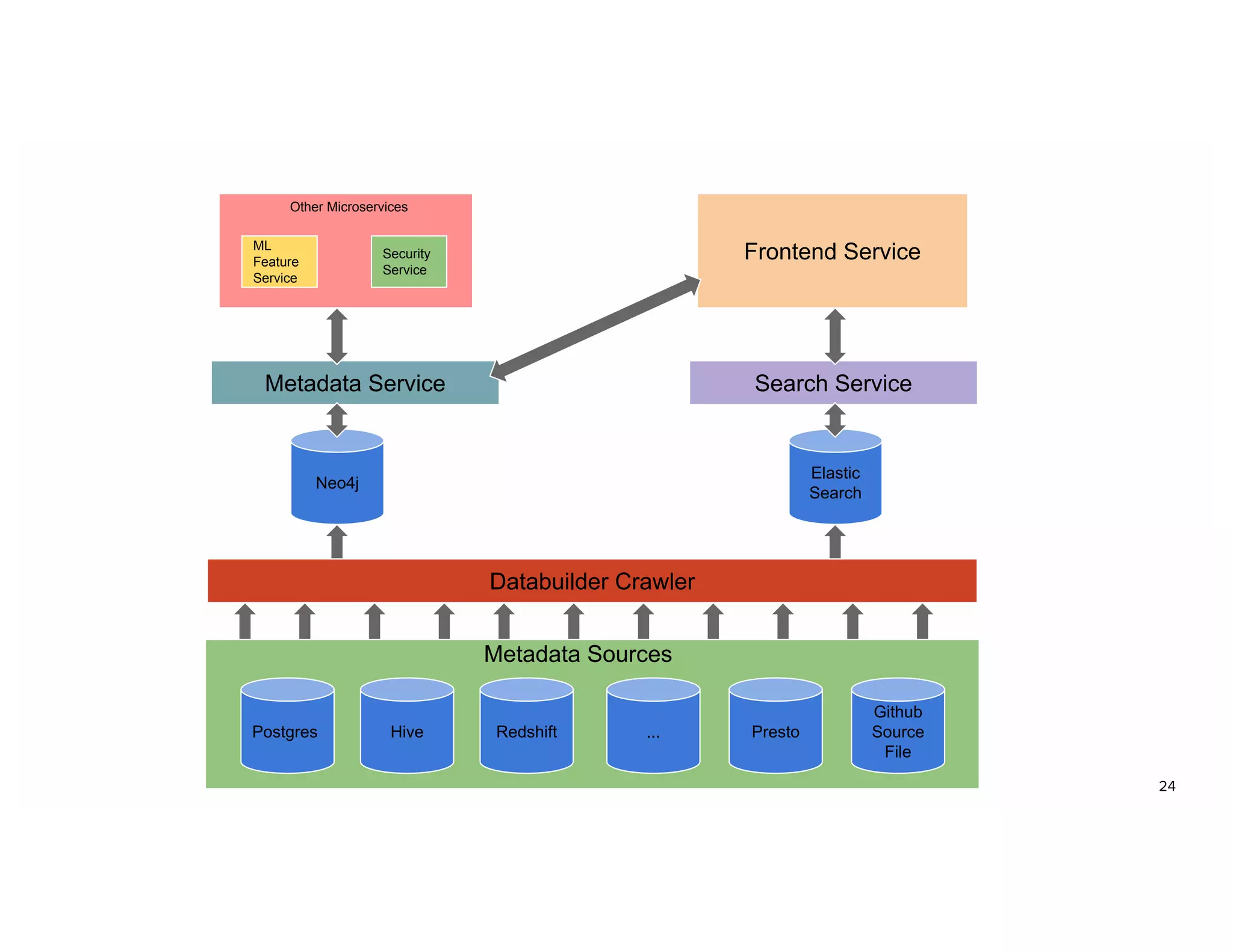

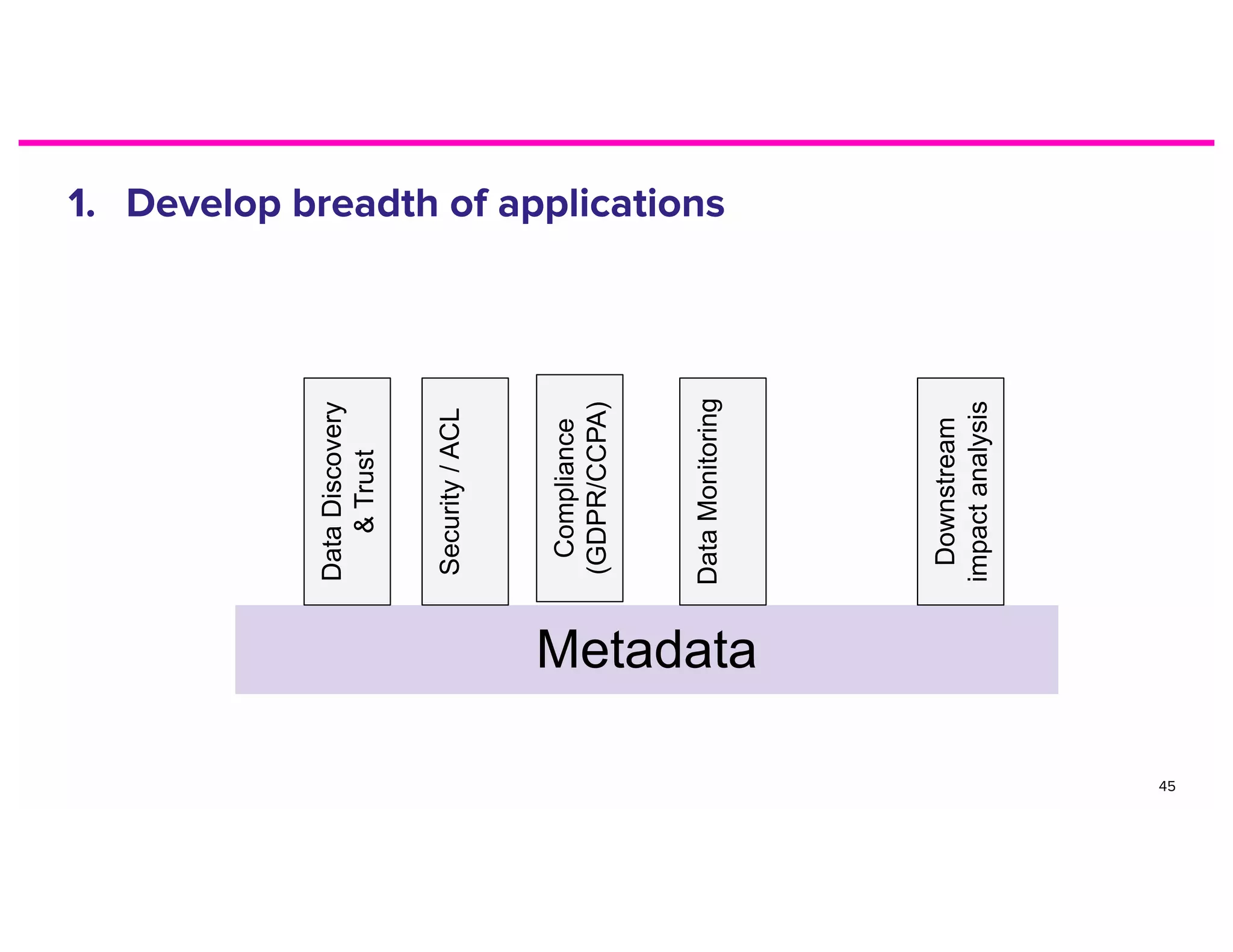

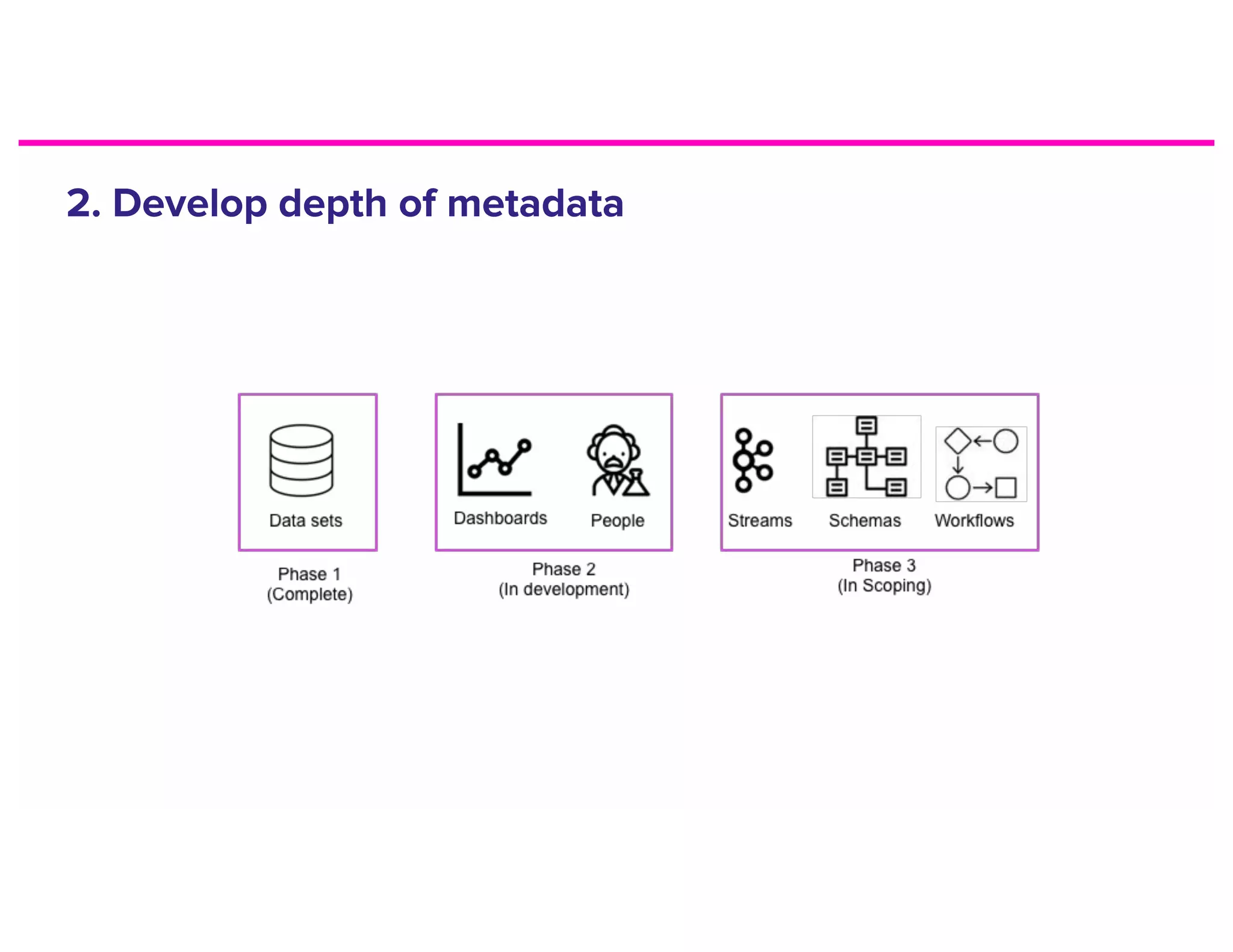

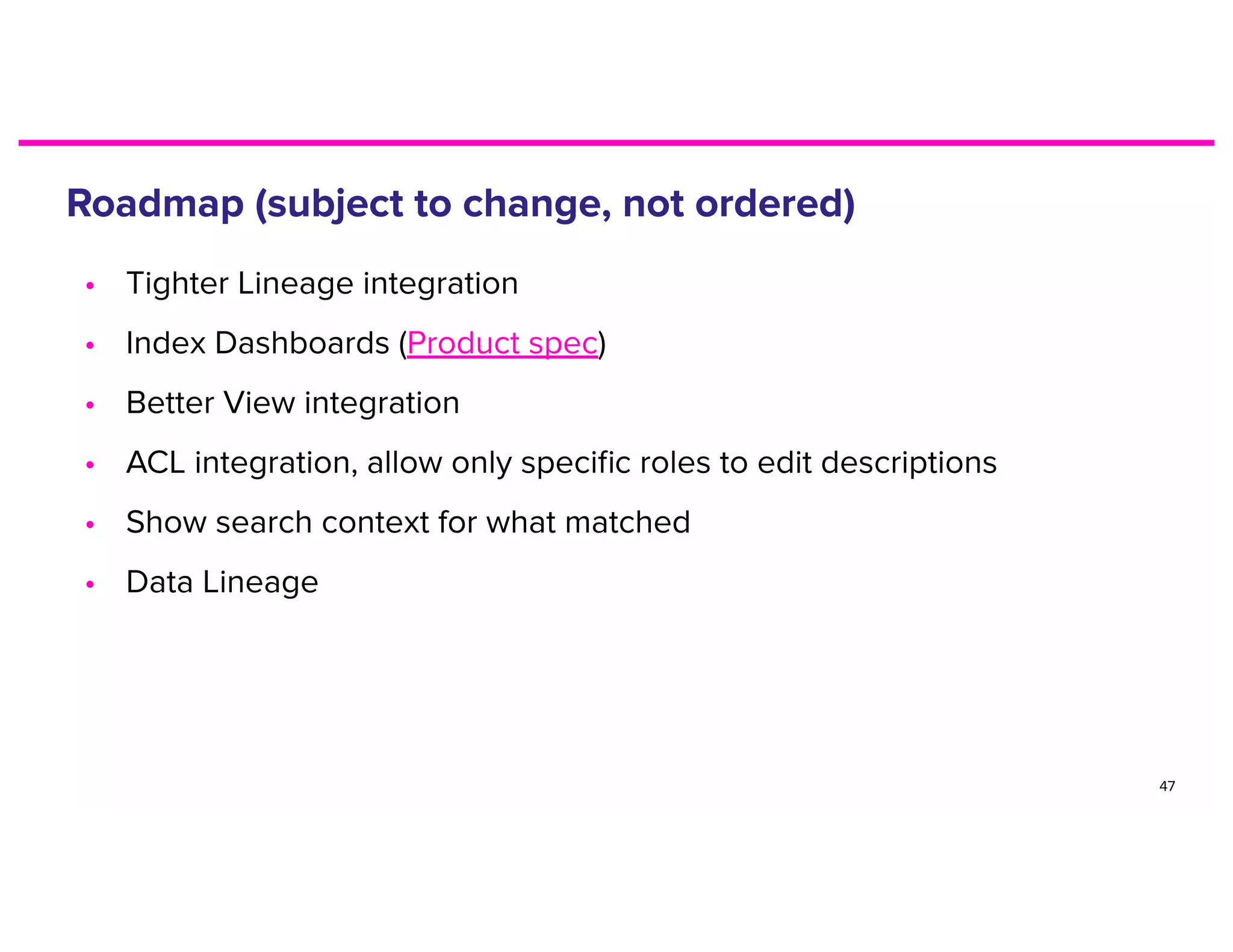

The document discusses metadata and the need for a metadata discovery tool. It gives an overview of metadata and describes the different types of users and what they need in order to find and understand data. It also evaluates architectural approaches for a metadata graph and covers security considerations, guidelines, and other challenges in building such a tool.