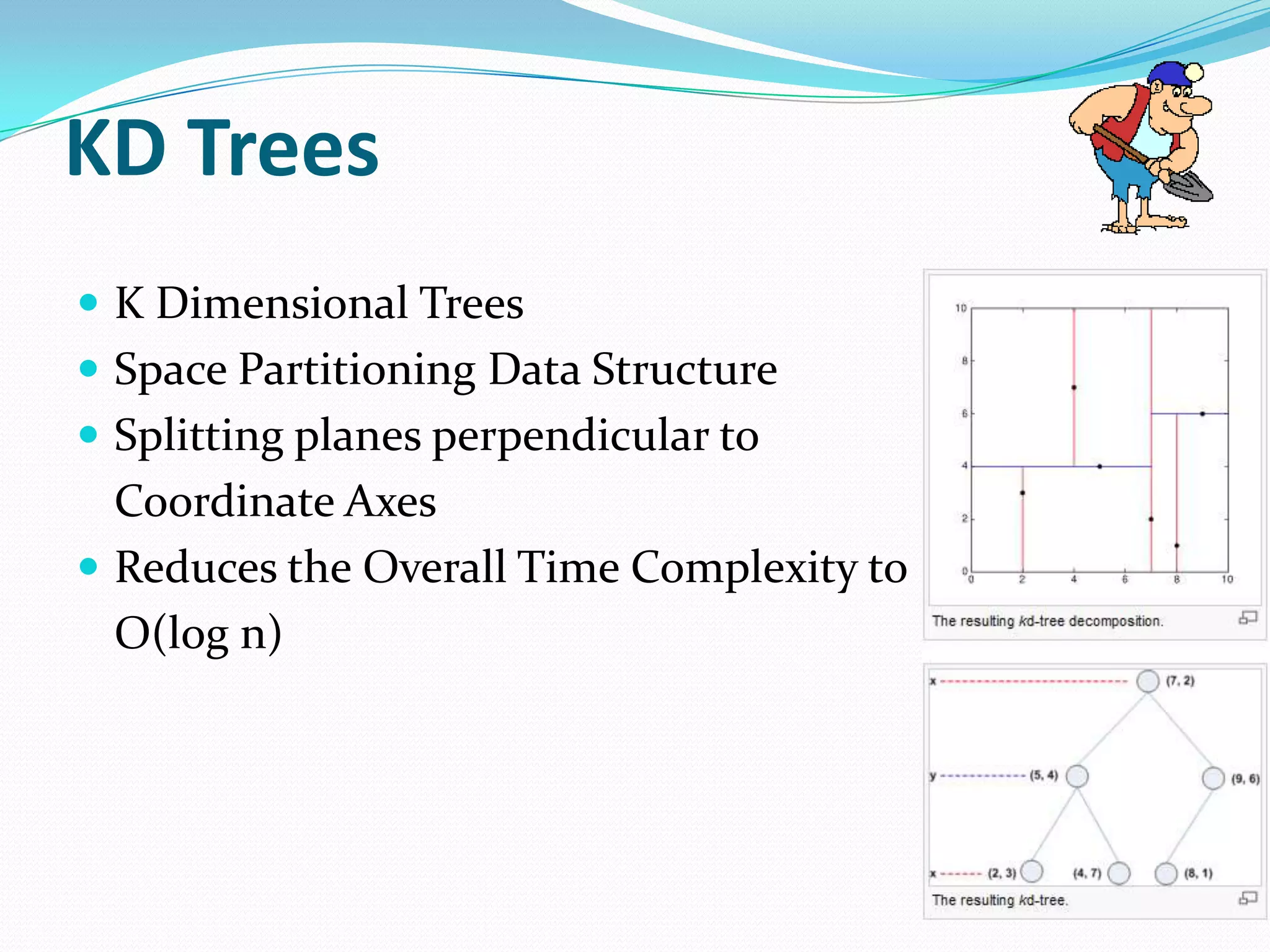

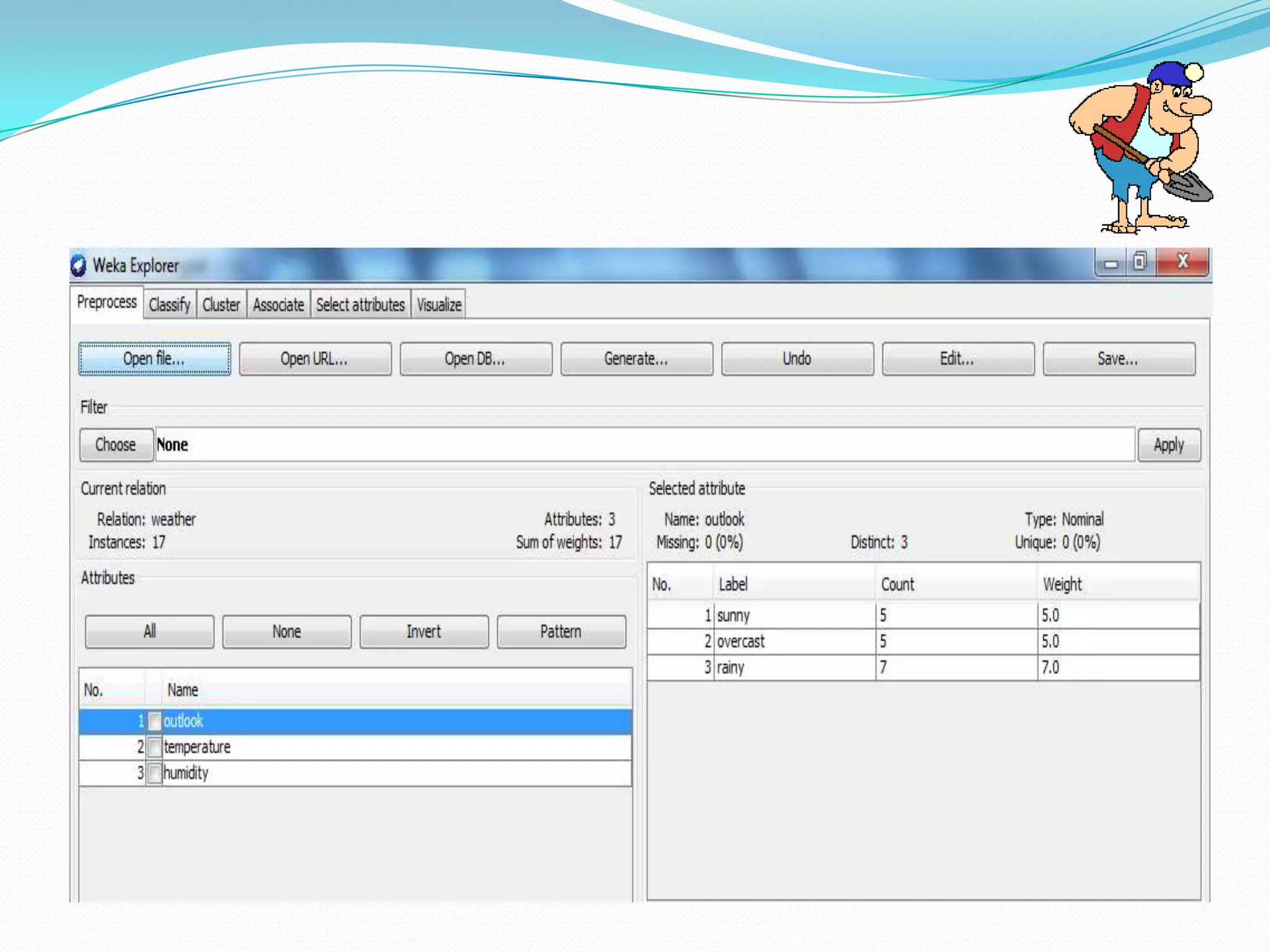

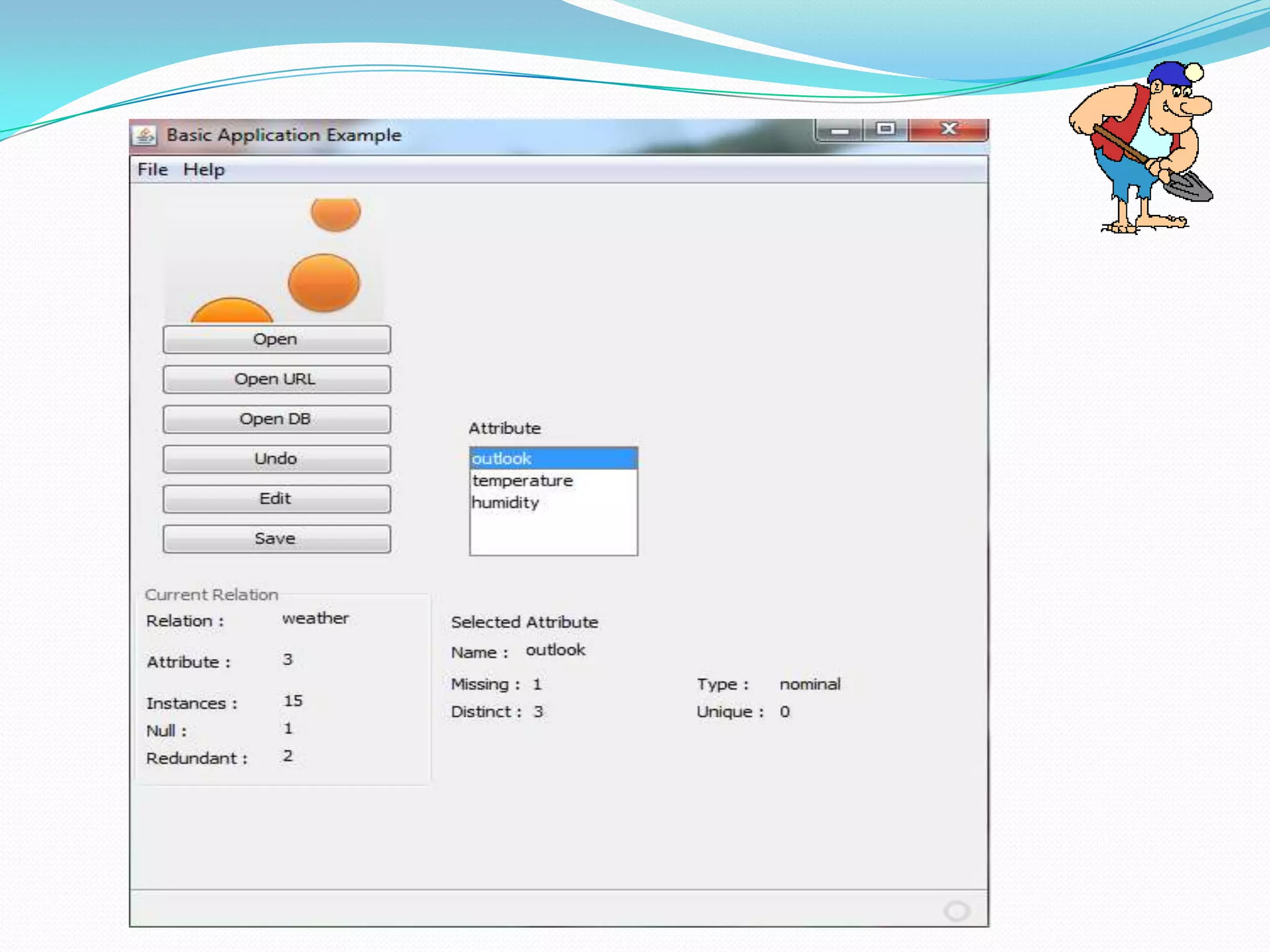

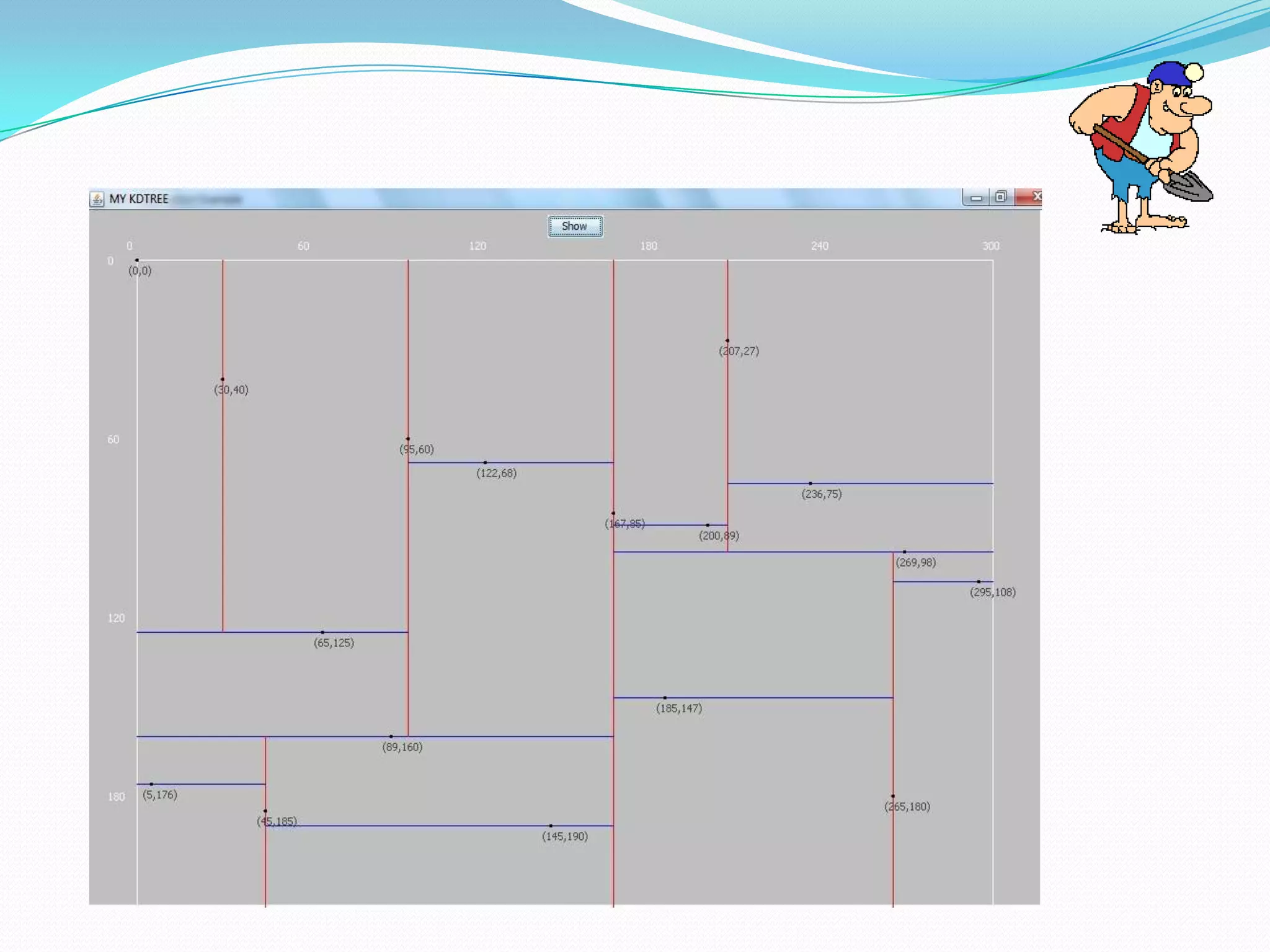

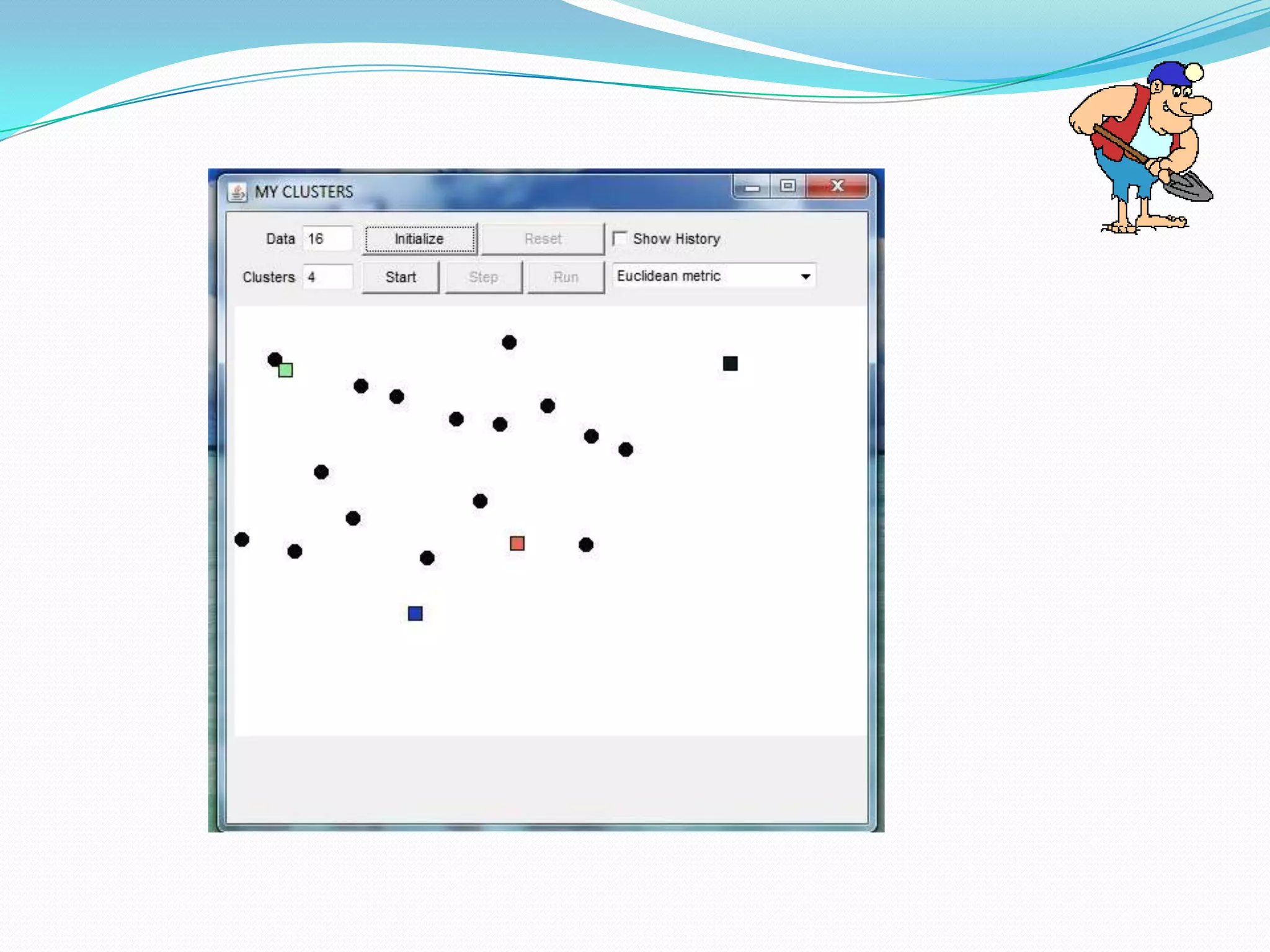

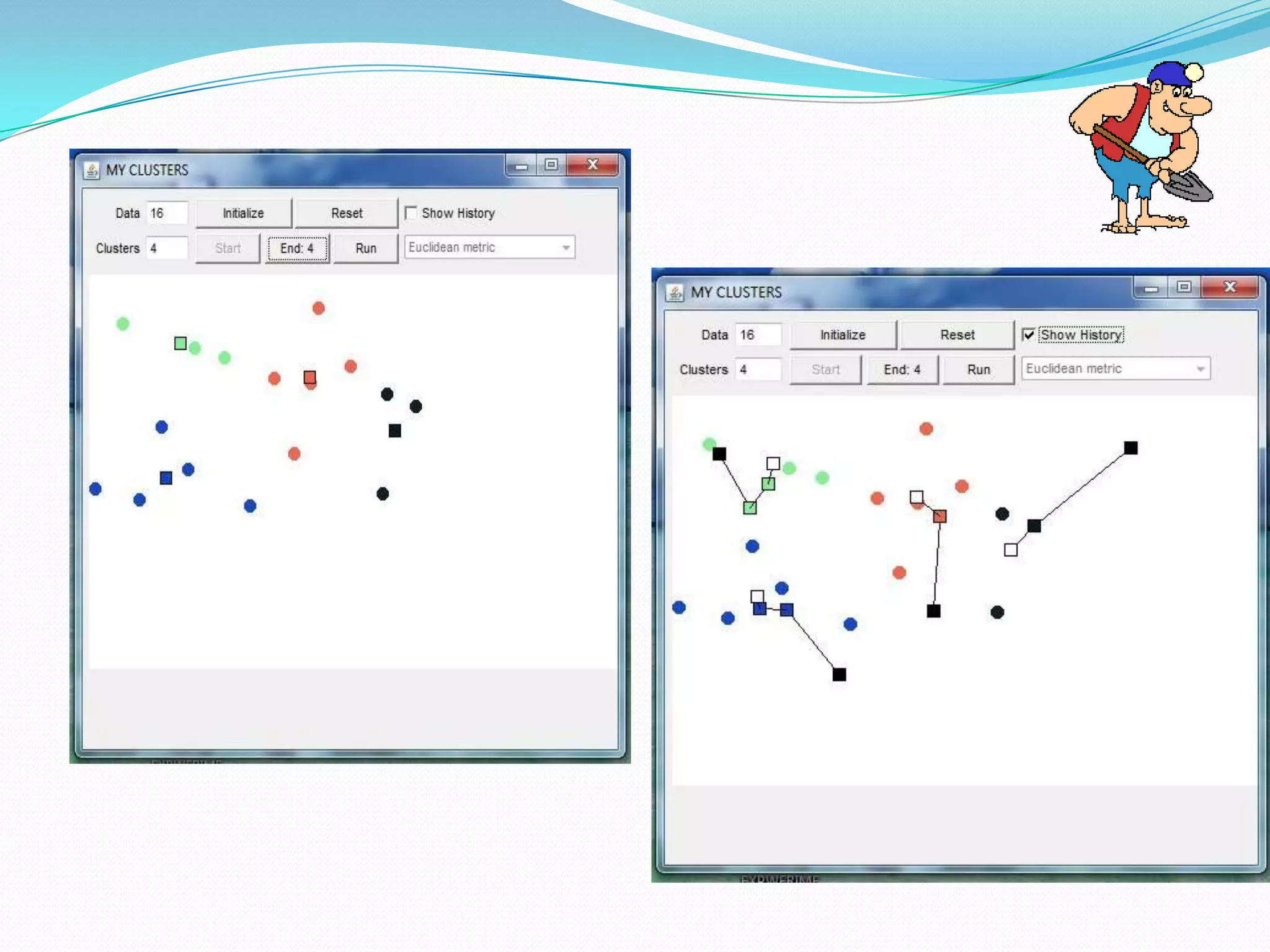

This document describes a new clustering tool for data mining called RAPID MINER. It discusses why clustering is needed in applications such as customer segmentation. The project aims to develop a new clustering algorithm that first preprocesses the data by removing null values and redundant records. The algorithm then partitions the data into clusters so that association (similarity) is strong within each cluster and weak between clusters. The document compares the new tool with Weka and explains how it uses KD trees to make K-means clustering more efficient. It concludes that the new algorithm chooses better starting clusters and filters the data faster by using KD trees.
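The pipeline summarized above can be sketched in Python. This is a minimal illustration under stated assumptions. It is not the tool's actual implementation, which the summary does not describe, and every function name here (`preprocess`, `build`, `filter_node`, `kmeans_step`, `kmeanspp_init`, `kmeans`) is hypothetical.

Preprocessing drops any row that contains a null value, then drops exact duplicate rows. The KD-tree part follows the well-known "filtering" approach to K-means. Each tree cell stores its bounding box, point count and coordinate sum. Candidate centers that cannot be nearest to any point in a cell are pruned, so a cell with a single surviving candidate is assigned in one step. The summary does not say how the better starting clusters are chosen, so k-means++ seeding is swapped in here as one standard option.

```python
import math
import random
from dataclasses import dataclass, field
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, ...]


def preprocess(rows: Sequence[Sequence[Optional[float]]]) -> List[Point]:
    """Drop rows with a null (None or NaN) value, then drop exact duplicate rows."""
    def is_null(v: Optional[float]) -> bool:
        return v is None or (isinstance(v, float) and math.isnan(v))
    complete = [tuple(float(v) for v in r) for r in rows if not any(map(is_null, r))]
    return list(dict.fromkeys(complete))  # removes redundant rows, keeps first-seen order


def dist2(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


@dataclass
class Node:
    lo: List[float]      # lower corner of the cell's bounding box
    hi: List[float]      # upper corner of the cell's bounding box
    count: int           # number of points in the cell
    total: List[float]   # coordinate-wise sum of the cell's points
    points: List[Point] = field(default_factory=list)  # only filled at leaves
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def build(points: List[Point], leaf_size: int = 4) -> Node:
    dim = len(points[0])
    lo = [min(p[d] for p in points) for d in range(dim)]
    hi = [max(p[d] for p in points) for d in range(dim)]
    node = Node(lo, hi, len(points), [sum(p[d] for p in points) for d in range(dim)])
    axis = max(range(dim), key=lambda d: hi[d] - lo[d])  # split the widest dimension
    if len(points) <= leaf_size or hi[axis] == lo[axis]:
        node.points = points
        return node
    pts = sorted(points, key=lambda p: p[axis])
    mid = len(pts) // 2  # median split keeps the tree balanced
    node.left, node.right = build(pts[:mid], leaf_size), build(pts[mid:], leaf_size)
    return node


def is_farther(z: Point, zstar: Point, node: Node) -> bool:
    """True if every point in the cell is at least as close to zstar as to z."""
    v = [h if a > b else l for a, b, l, h in zip(z, zstar, node.lo, node.hi)]  # extreme corner
    return dist2(z, v) >= dist2(zstar, v)


def filter_node(node: Node, cands: List[int], centers: List[Point],
                sums: List[List[float]], counts: List[int]) -> None:
    if node.left is None:  # leaf: assign each point to its nearest candidate
        for p in node.points:
            j = min(cands, key=lambda c: dist2(p, centers[c]))
            counts[j] += 1
            sums[j] = [s + x for s, x in zip(sums[j], p)]
        return
    mid = [(l + h) / 2 for l, h in zip(node.lo, node.hi)]
    best = min(cands, key=lambda c: dist2(mid, centers[c]))
    kept = [c for c in cands
            if c == best or not is_farther(centers[c], centers[best], node)]
    if len(kept) == 1:  # whole cell belongs to `best`: add its summary in one step
        counts[best] += node.count
        sums[best] = [s + x for s, x in zip(sums[best], node.total)]
        return
    for child in (node.left, node.right):
        filter_node(child, kept, centers, sums, counts)


def kmeans_step(tree: Node, centers: List[Point]) -> List[Point]:
    k, dim = len(centers), len(centers[0])
    sums, counts = [[0.0] * dim for _ in range(k)], [0] * k
    filter_node(tree, list(range(k)), centers, sums, counts)
    # New center = mean of its points; an empty cluster keeps its old center.
    return [tuple(s / n for s in sm) if n else c for sm, n, c in zip(sums, counts, centers)]


def kmeanspp_init(points: List[Point], k: int, rng: random.Random) -> List[Point]:
    # k-means++ seeding: an assumed stand-in for the tool's unstated seeding method.
    centers = [rng.choice(points)]
    while len(centers) < k:
        d2 = [min(dist2(p, c) for c in centers) for p in points]
        centers.append(rng.choices(points, weights=d2, k=1)[0])
    return centers


def kmeans(points: List[Point], k: int, iters: int = 50, seed: int = 0) -> List[Point]:
    tree = build(points)
    centers = kmeanspp_init(points, k, random.Random(seed))
    for _ in range(iters):
        new = kmeans_step(tree, centers)
        if new == centers:  # means stopped moving
            break
        centers = new
    return centers
```

For example, `kmeans(preprocess(rows), k=3)` returns three cluster centers. Apart from ties and floating-point rounding, each `kmeans_step` call gives the same result as a brute-force K-means (Lloyd) iteration. The saving comes from not visiting the individual points of a cell once a single center owns it.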