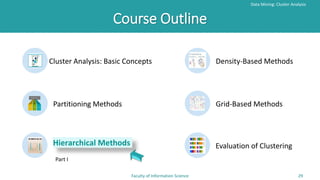

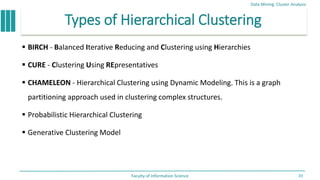

This document provides an overview of hierarchical clustering methods. It covers agglomerative methods such as AGNES, which start with each object in its own cluster and repeatedly merge the closest clusters into larger ones, and divisive methods such as DIANA, which start with all objects in a single cluster and repeatedly split it into smaller ones. A dendrogram visualizes the nested clusterings formed at each level of the hierarchy. The document also discusses the measures used to compute the distance between clusters during merging or splitting.
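The bottom-up merging described above can be illustrated with a minimal sketch. It is not the AGNES implementation from any particular library. The function names `cluster_distance` and `agglomerate`, the three linkage options (single, complete, average), the Euclidean metric, and the sample points are all assumptions chosen for illustration, since the summary does not name the specific distance measures the document covers.

```python
from itertools import combinations
from math import dist  # Euclidean distance, Python 3.8+


def cluster_distance(a, b, linkage="single"):
    """Distance between two clusters (lists of points) under a linkage rule."""
    pairwise = [dist(p, q) for p in a for q in b]
    if linkage == "single":      # distance of the closest pair
        return min(pairwise)
    if linkage == "complete":    # distance of the farthest pair
        return max(pairwise)
    if linkage == "average":     # mean distance over all pairs
        return sum(pairwise) / len(pairwise)
    raise ValueError(f"unknown linkage: {linkage}")


def agglomerate(points, linkage="single"):
    """Bottom-up clustering; returns the merge history a dendrogram would draw."""
    clusters = [[p] for p in points]  # every object starts as its own cluster
    merges = []
    while len(clusters) > 1:
        # Pick the pair of clusters with the smallest inter-cluster distance.
        i, j = min(
            combinations(range(len(clusters)), 2),
            key=lambda ij: cluster_distance(clusters[ij[0]], clusters[ij[1]], linkage),
        )
        d = cluster_distance(clusters[i], clusters[j], linkage)
        merges.append((clusters[i], clusters[j], d))
        merged = clusters[i] + clusters[j]
        # Replace the two merged clusters with their union.
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
    return merges


# Hypothetical sample data: two tight pairs that are far from each other.
points = [(0.0, 0.0), (0.0, 1.0), (5.0, 0.0), (5.0, 1.0)]
history = agglomerate(points, linkage="single")
for left, right, height in history:
    print(left, "+", right, "at distance", height)
```

Each entry in `history` records one merge and the distance at which it happened, which is the information a dendrogram plots as the height of each join. Cutting the hierarchy at a chosen height yields a flat clustering. This brute-force search recomputes distances on every pass, so it is only suitable for small inputs.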