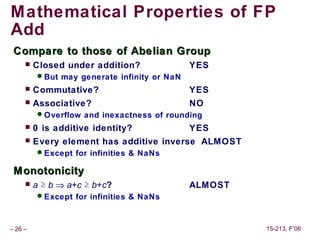

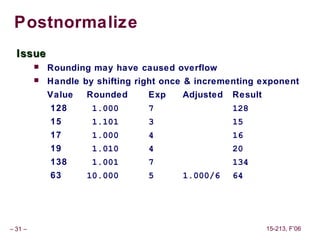

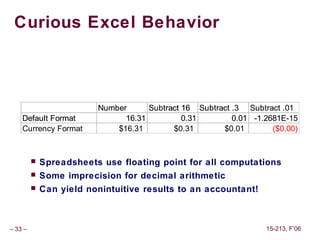

This document provides an overview of floating-point representation and arithmetic based on the IEEE 754 standard. It covers normalized and denormalized values and special values such as infinity and NaN, and it uses tiny 8-bit floating-point formats to illustrate dynamic range and value distribution. The goal is to explain how computers approximate real numbers using a finite number of bits.

![Representable Numbers (15-213, F’06, slide 6)](https://image.slidesharecdn.com/class03-130302232754-phpapp02/85/Class03-6-320.jpg)

Representable Numbers

Limitation: only numbers of the form $x/2^k$ can be represented exactly. Other numbers have repeating bit representations.

| Value | Representation |
|-------|----------------|
| 1/3 | $0.0101010101[01]\ldots_2$ |
| 1/5 | $0.001100110011[0011]\ldots_2$ |
| 1/10 | $0.0001100110011[0011]\ldots_2$ |
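
The repeating expansions on this slide can be reproduced with exact rational arithmetic. The following is a minimal sketch, not part of the original slides: the helper names `binary_expansion` and `is_dyadic` are hypothetical, and it uses Python's standard `fractions` module so that no rounding occurs while the bits are generated.

```python
from fractions import Fraction


def binary_expansion(value: Fraction, bits: int) -> str:
    """Return the first `bits` binary digits of a fraction in [0, 1)."""
    digits = []
    for _ in range(bits):
        value *= 2                 # shift the next binary digit into the units place
        bit = int(value >= 1)      # that digit is 1 if we reached or passed 1
        digits.append(str(bit))
        value -= bit               # keep only the fractional part
    return "0." + "".join(digits)


def is_dyadic(value: Fraction) -> bool:
    """True when the denominator is a power of two, i.e. value = x / 2**k."""
    d = value.denominator
    return d & (d - 1) == 0


if __name__ == "__main__":
    for frac in (Fraction(1, 3), Fraction(1, 5), Fraction(1, 10), Fraction(3, 8)):
        print(frac, binary_expansion(frac, 16), is_dyadic(frac))

    # Fraction(0.1) is the exact value of the double nearest to 0.1.
    # It has a power-of-two denominator, so it cannot equal 1/10.
    stored = Fraction(0.1)
    print(stored == Fraction(1, 10), is_dyadic(stored))
```

Of the four sample values, only 3/8 has a power-of-two denominator, so only its expansion terminates. The last two lines illustrate the practical consequence: the value stored for the literal `0.1` is a nearby number of the form x/2^k rather than 1/10 itself.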