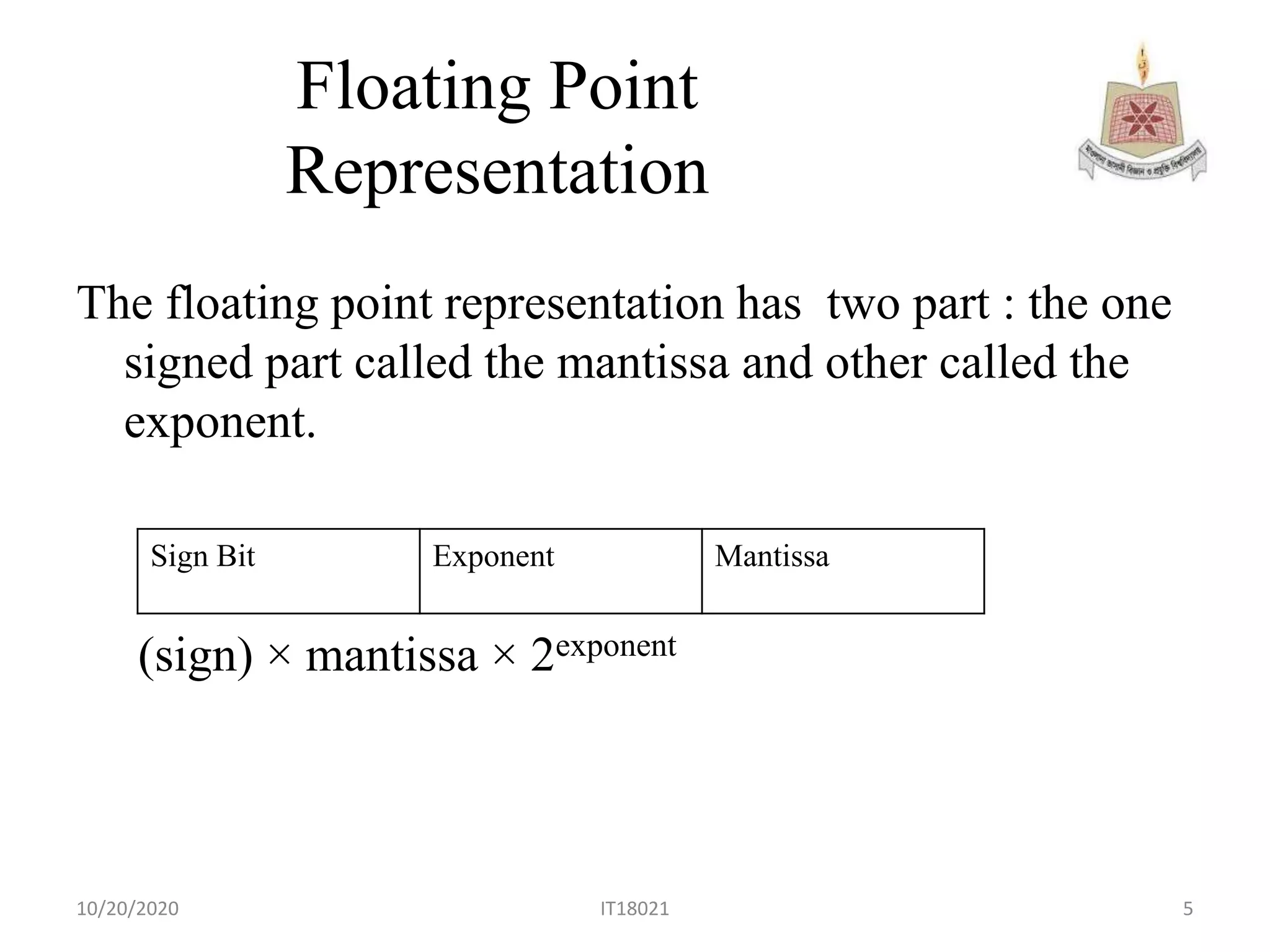

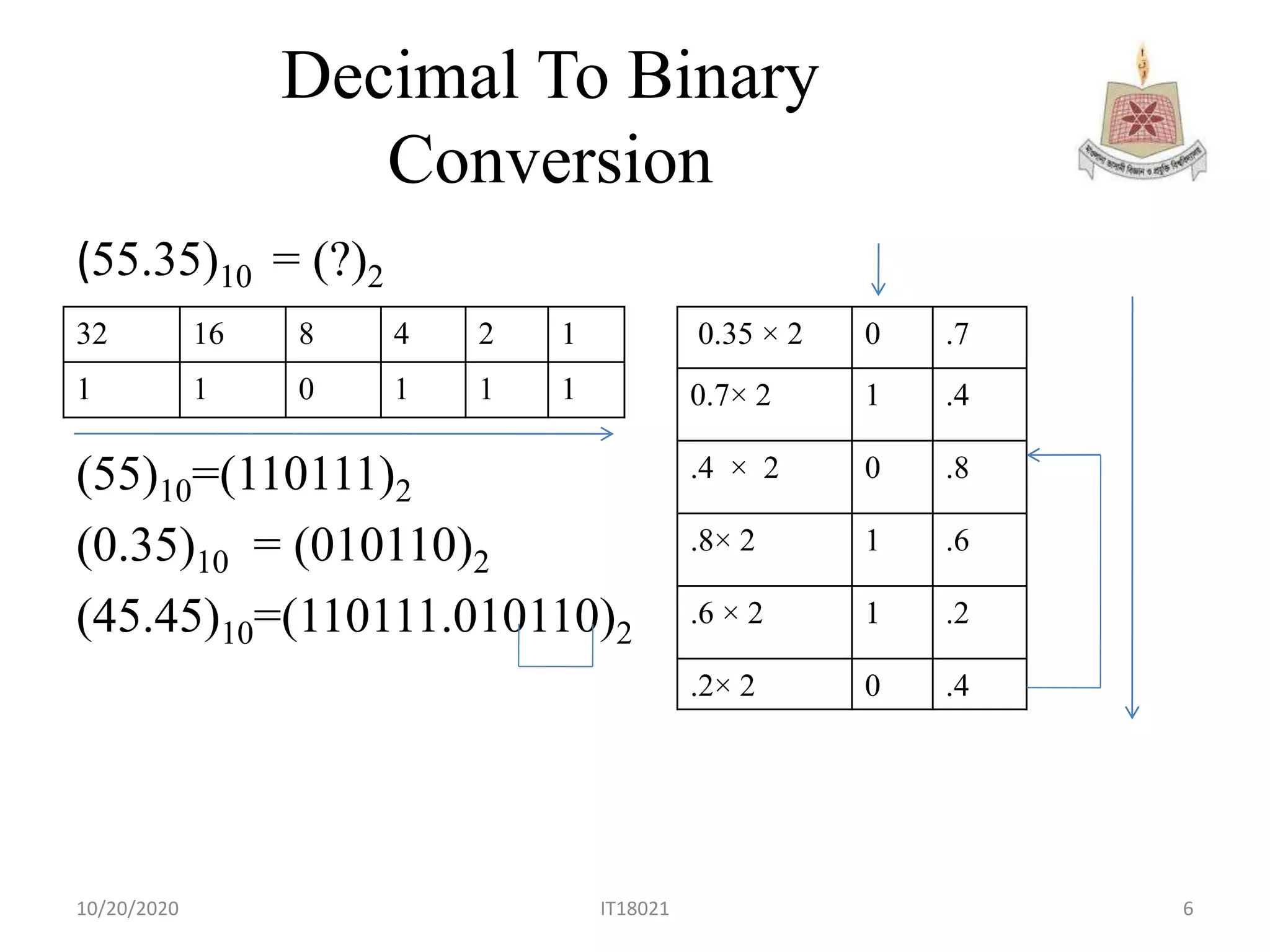

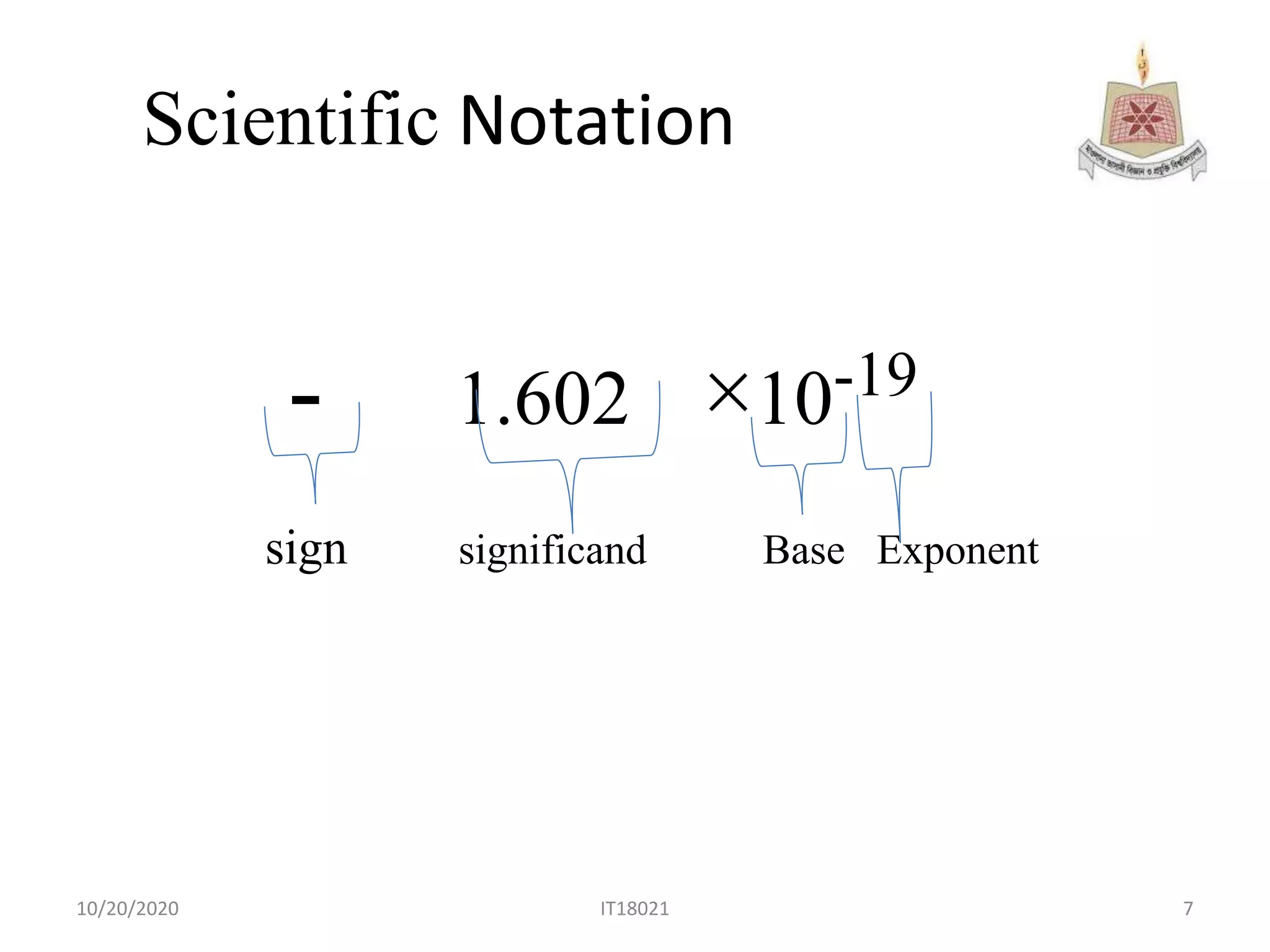

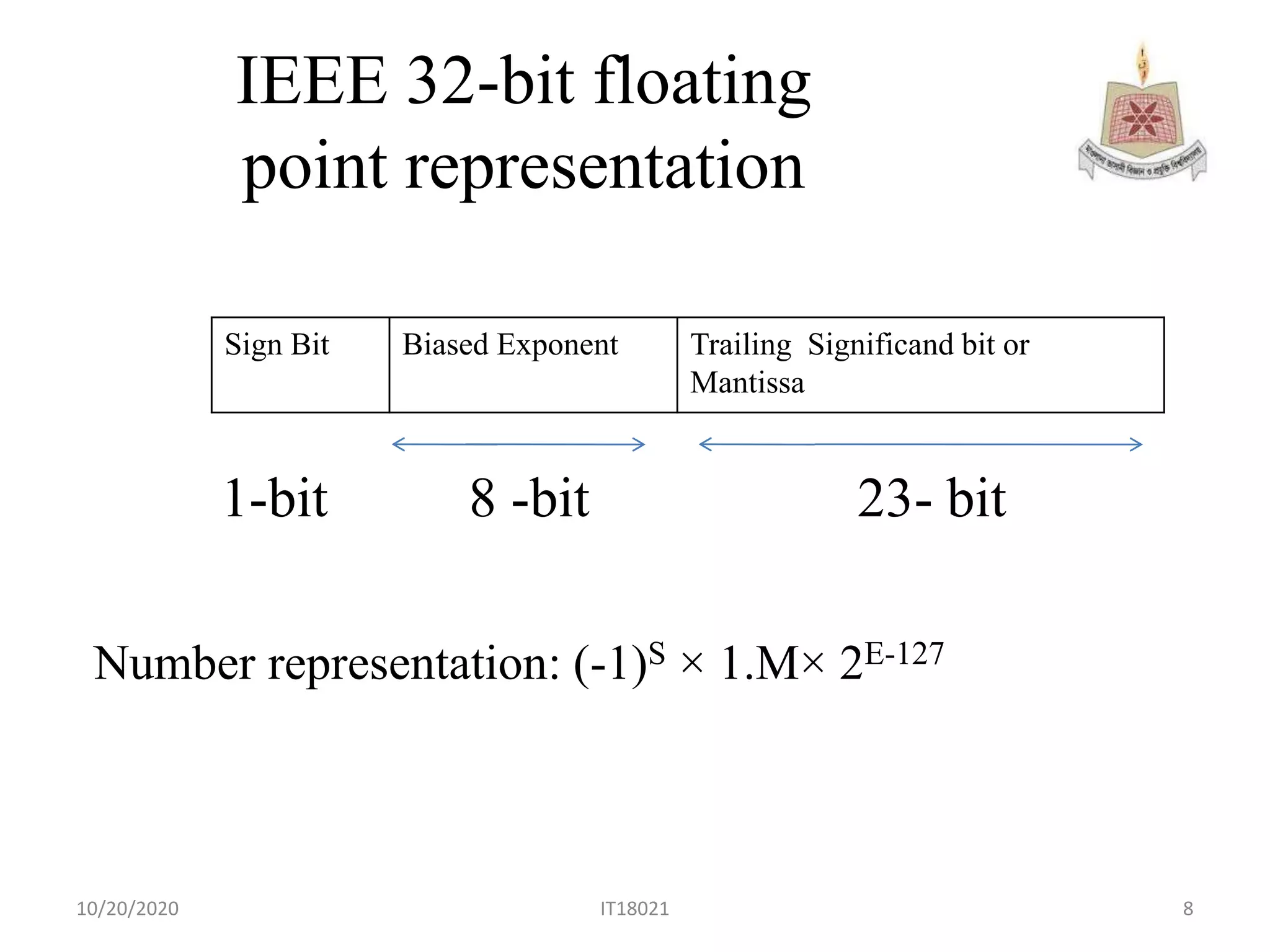

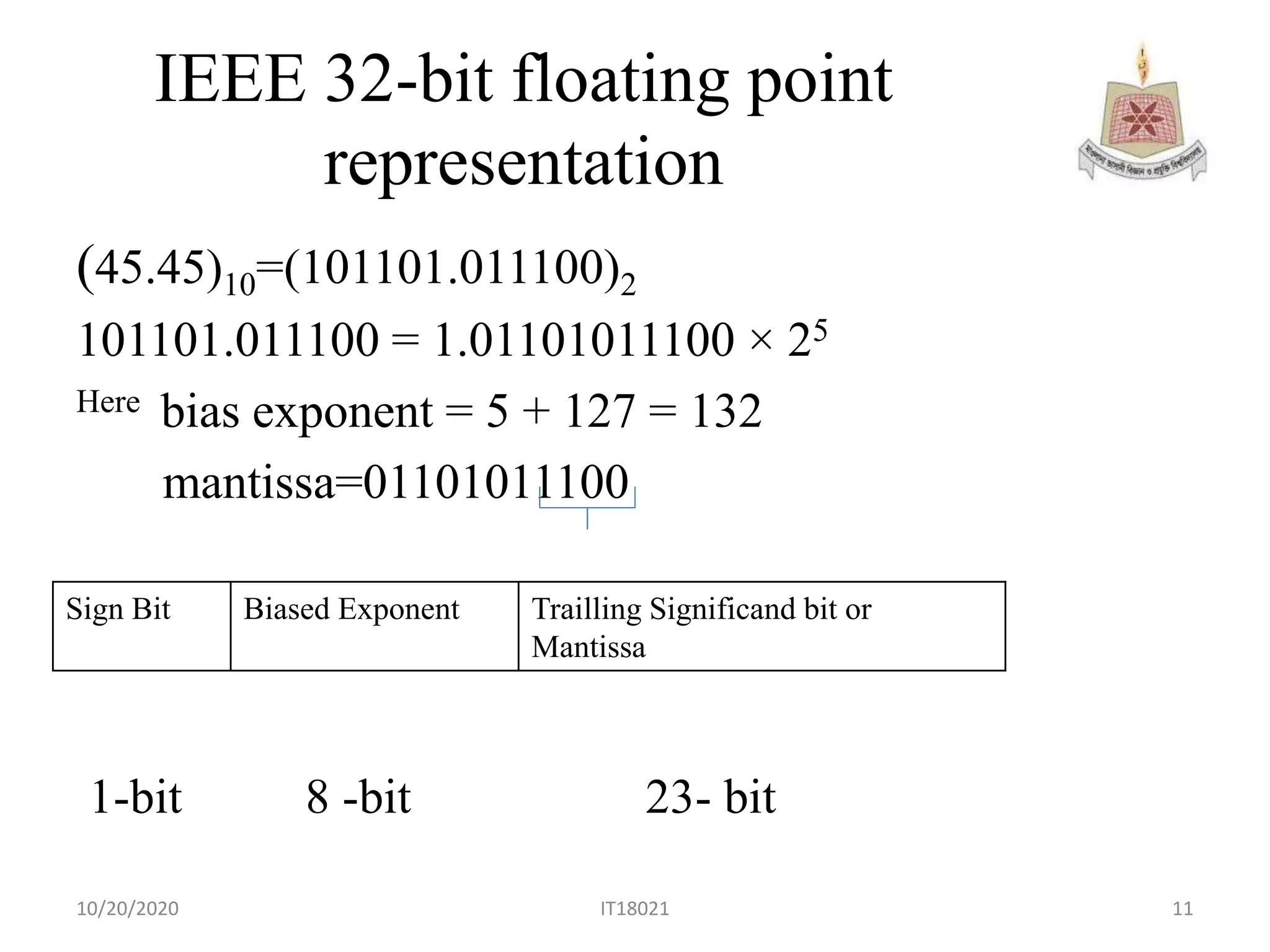

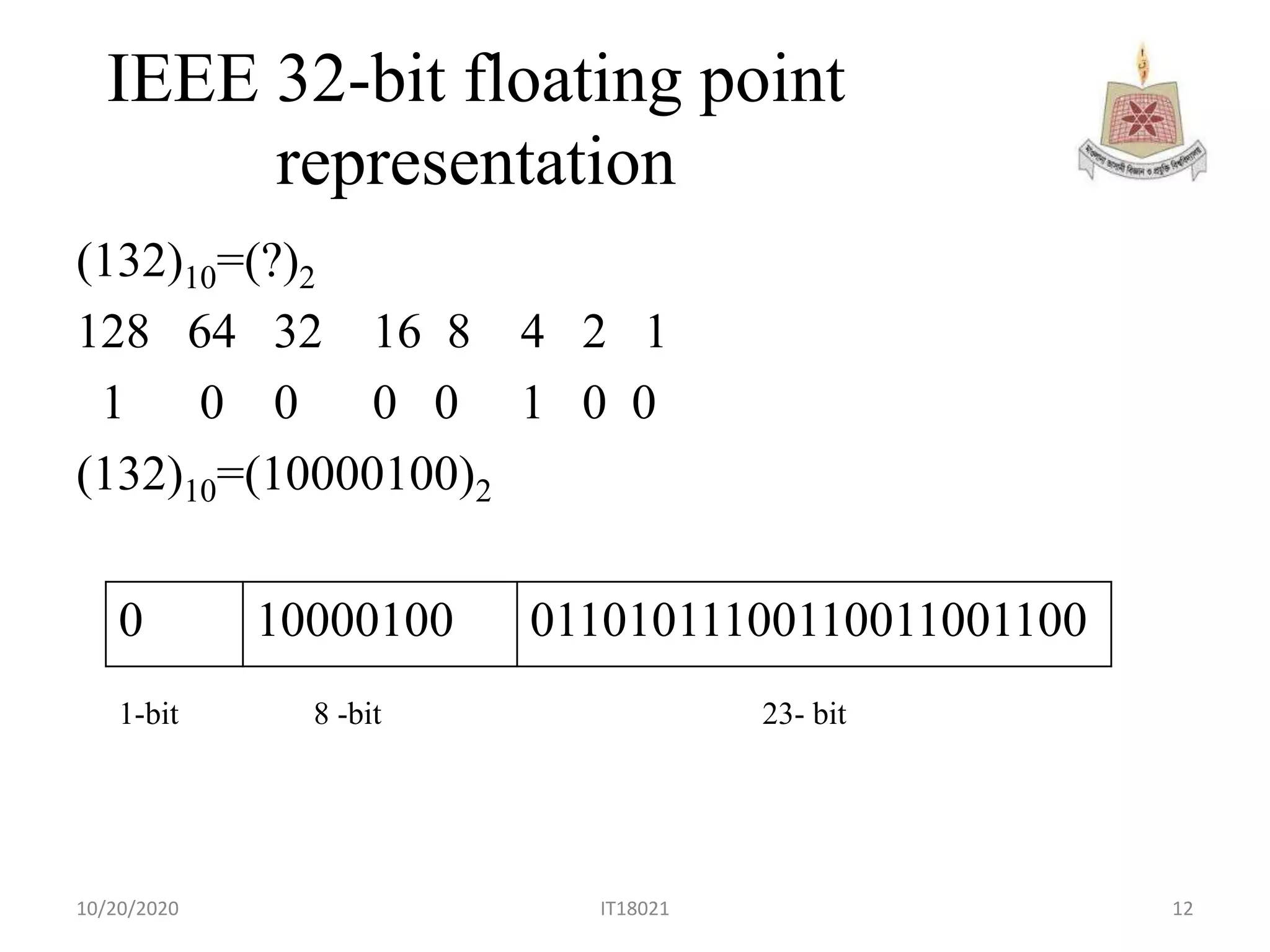

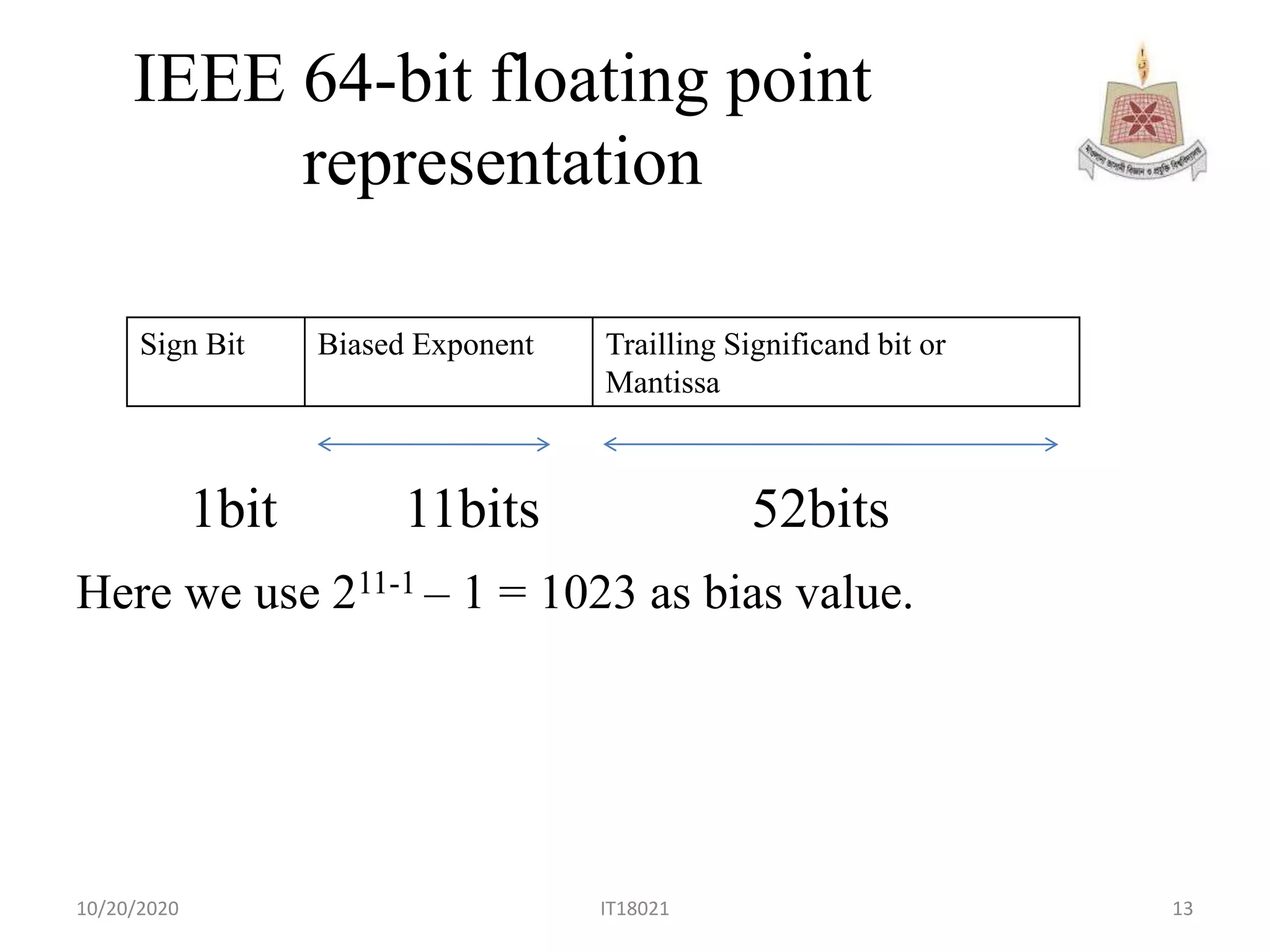

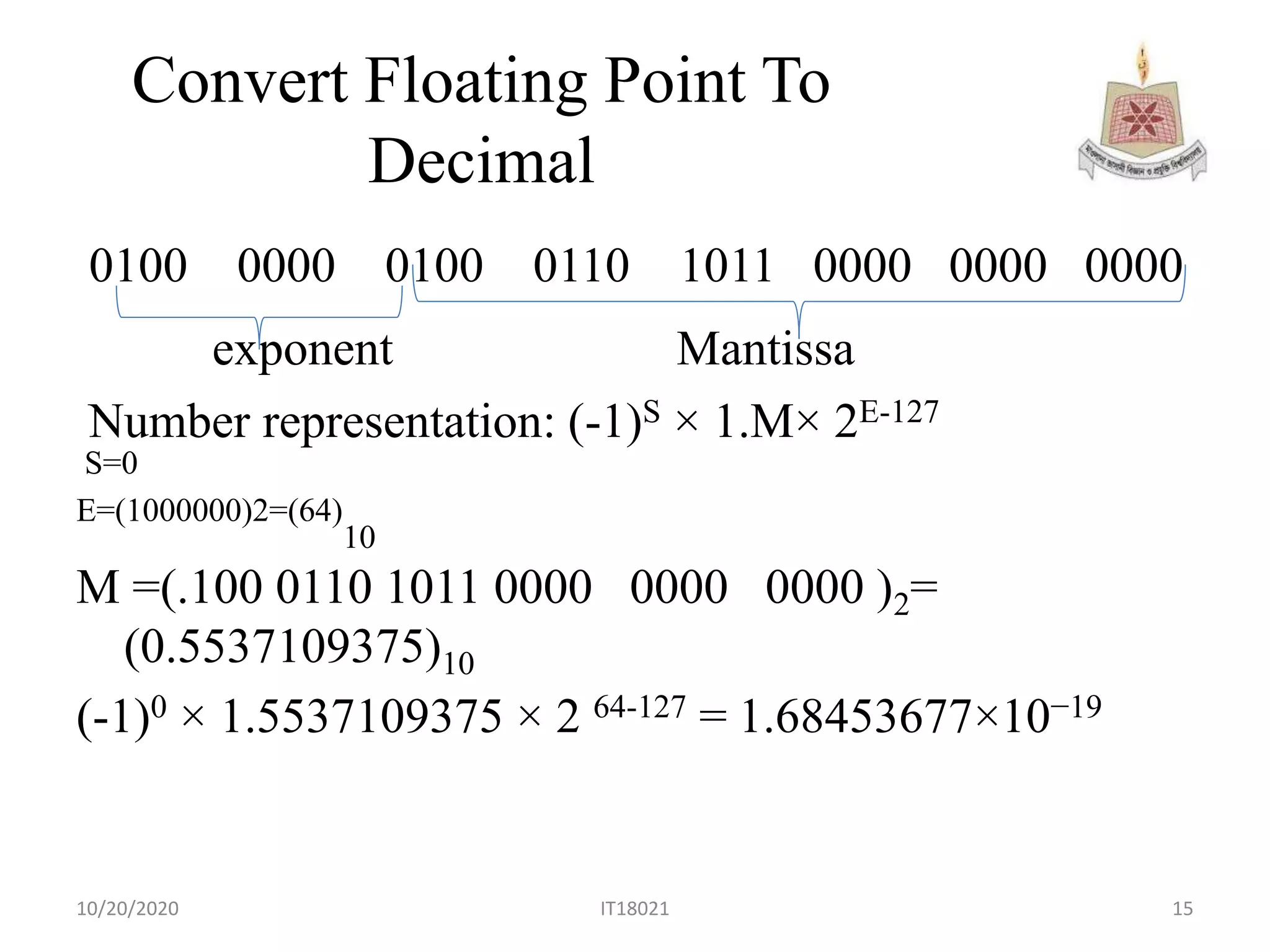

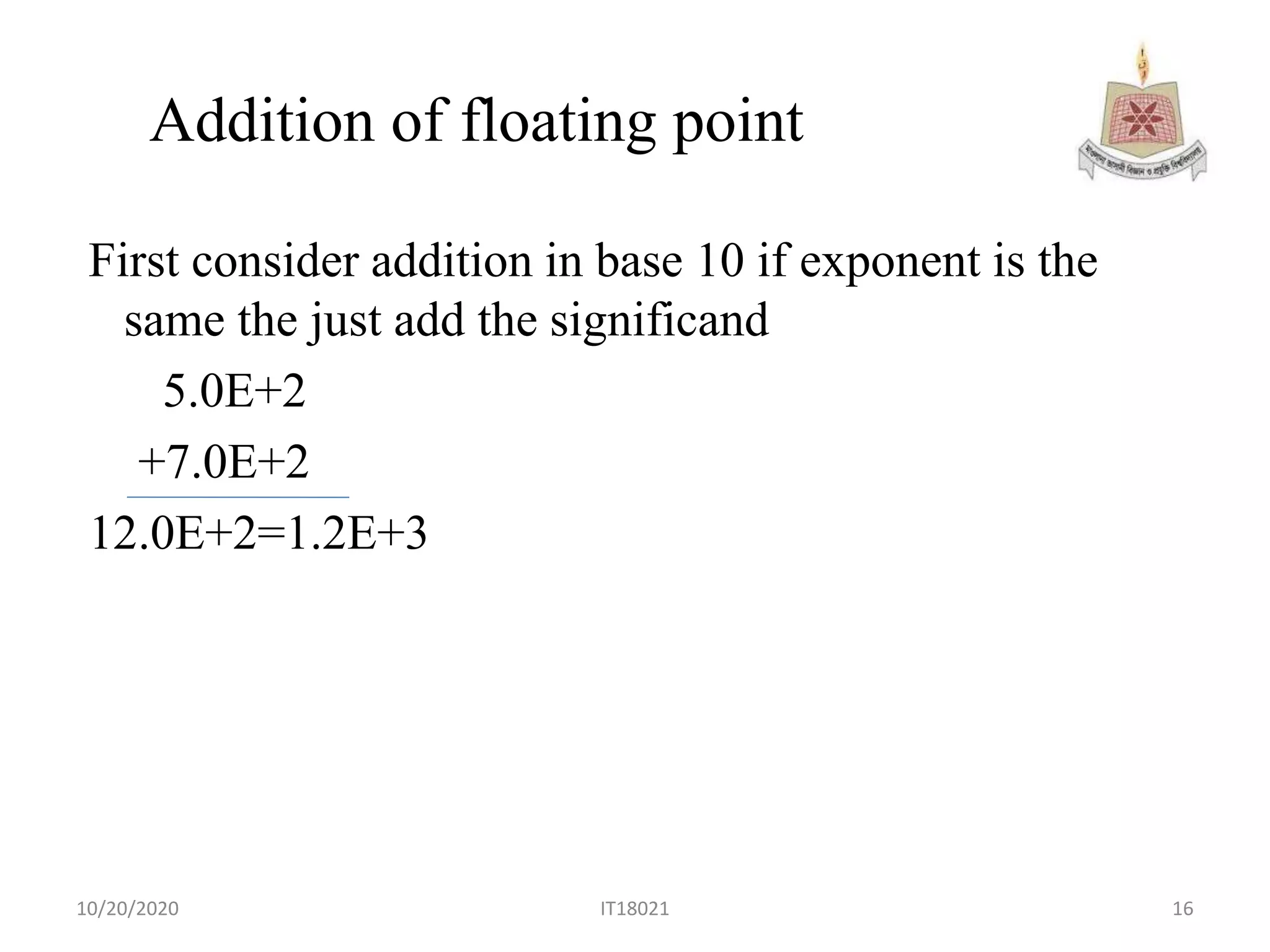

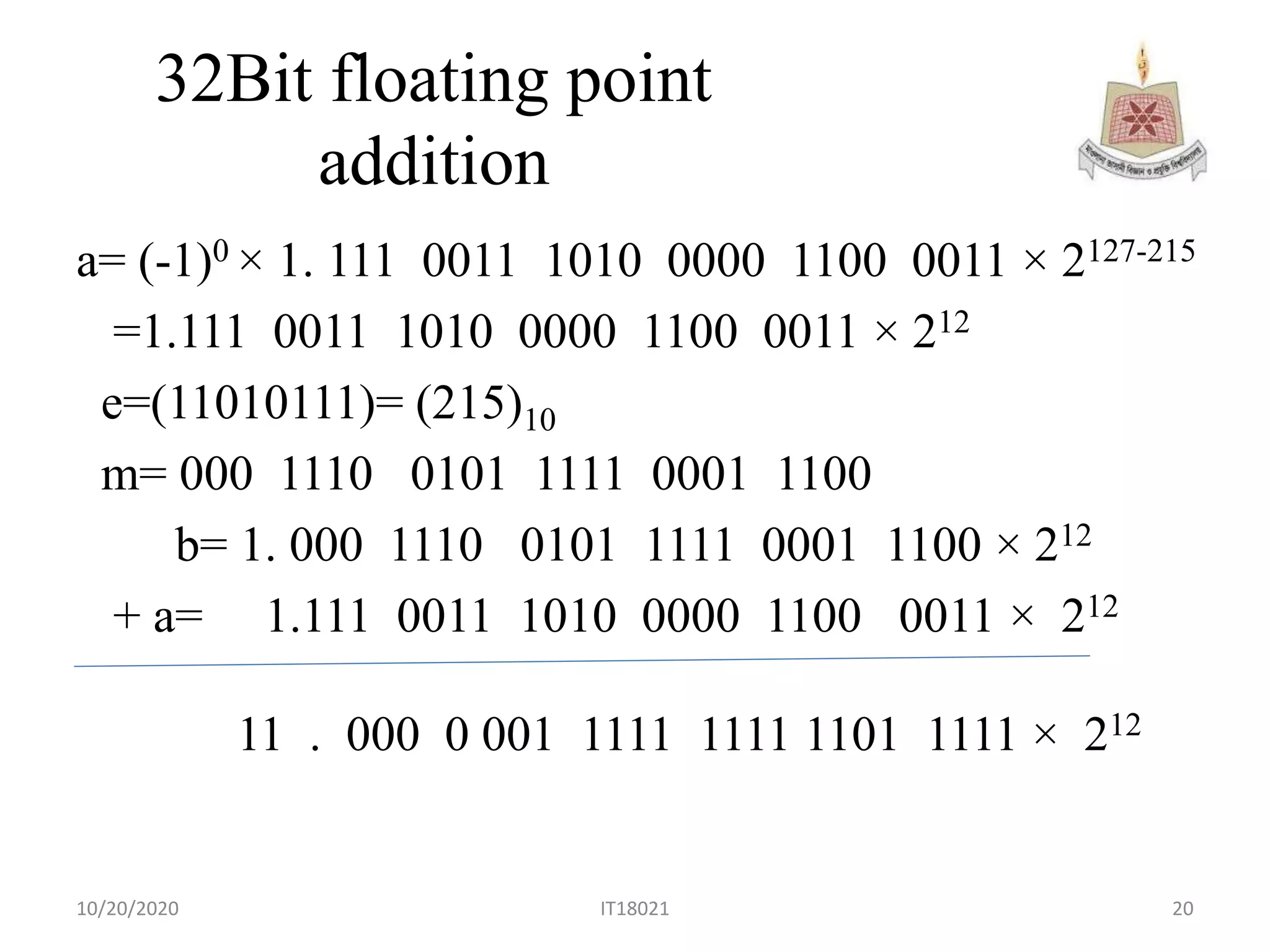

The document gives an overview of floating-point representation in computer organization and architecture, centered on the IEEE 754 standard. It covers the half, single, double, and extended precision formats and the bit layout of each. It also explains the steps for converting a decimal number to binary and the procedure for floating-point addition.
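As a rough illustration of the bit layouts and the addition procedure summarized above, the following Python sketch splits a value into its sign, exponent, and fraction fields and then adds two single-precision numbers by hand. The field widths used (half 1/5/10, single 1/8/23, double 1/11/52) are the standard IEEE 754 binary formats; the extended format is left out because its layout varies by platform. The names `FORMATS`, `fields`, and `add_single` are hypothetical, and the adder is deliberately simplified: it assumes positive normal operands, truncates instead of rounding, and does not handle overflow.

```python
import struct

# name -> (struct format, total bits, exponent bits, fraction bits)
FORMATS = {
    "half":   (">e", 16, 5, 10),
    "single": (">f", 32, 8, 23),
    "double": (">d", 64, 11, 52),
}

def fields(value, precision="single"):
    """Return (sign, biased exponent, fraction) of value in the given format."""
    fmt, total, exp_bits, frac_bits = FORMATS[precision]
    raw = int.from_bytes(struct.pack(fmt, value), "big")
    sign = raw >> (total - 1)
    exponent = (raw >> frac_bits) & ((1 << exp_bits) - 1)
    fraction = raw & ((1 << frac_bits) - 1)
    return sign, exponent, fraction

def add_single(a, b):
    """Simplified single-precision addition.

    Assumptions: both operands are positive normal numbers, the result
    does not overflow, and shifted-out bits are truncated (no rounding).
    """
    _, ea, fa = fields(a)
    _, eb, fb = fields(b)
    # Restore the implicit leading 1 to get 24-bit significands.
    ma = fa | (1 << 23)
    mb = fb | (1 << 23)
    # Make (ea, ma) the operand with the larger exponent.
    if ea < eb:
        ea, eb, ma, mb = eb, ea, mb, ma
    # Step 1: align the smaller operand to the larger exponent.
    mb >>= (ea - eb)
    # Step 2: add the significands.
    m = ma + mb
    e = ea
    # Step 3: normalize if the sum carried into a 25th bit.
    if m >> 24:
        m >>= 1
        e += 1
    # Step 4: repack, dropping the implicit leading 1.
    raw = (e << 23) | (m & ((1 << 23) - 1))
    return struct.unpack(">f", raw.to_bytes(4, "big"))[0]
```

For example, `fields(-6.5)` splits -6.5 = -1.101 (binary) x 2^2 into a sign bit of 1, a biased exponent of 127 + 2 = 129, and a fraction whose leading bits are 101. A production adder would also need signs, rounding modes, subnormals, infinities, and NaNs, none of which this sketch attempts.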