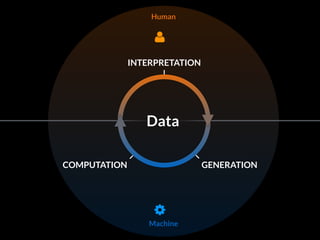

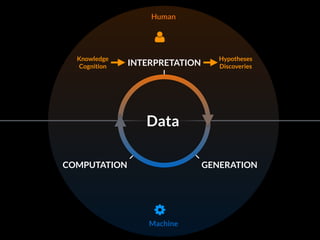

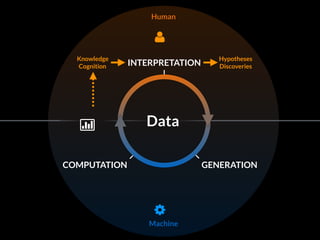

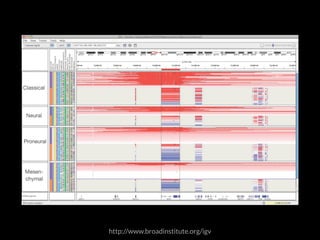

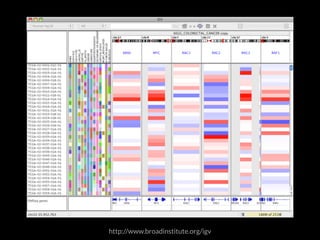

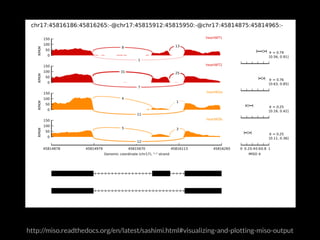

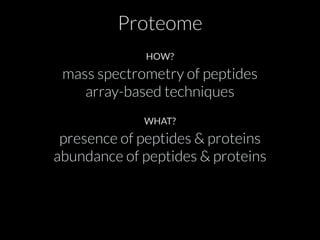

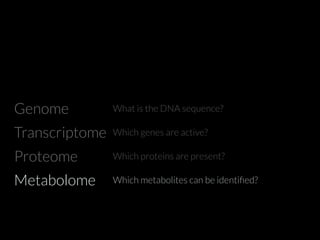

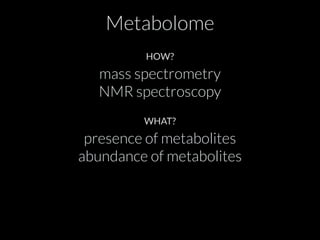

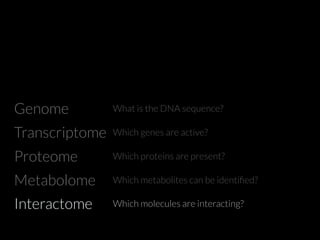

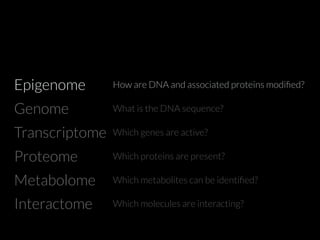

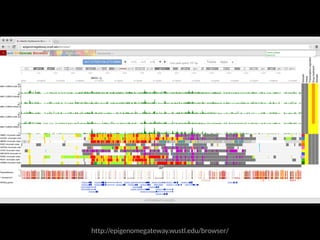

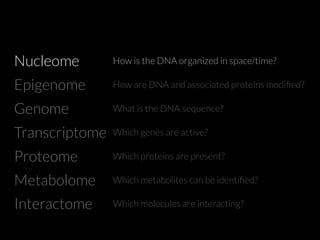

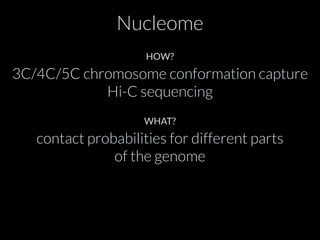

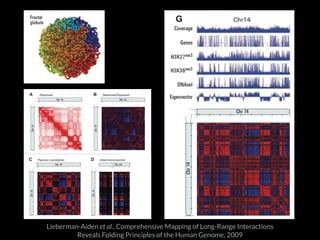

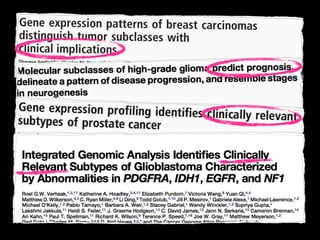

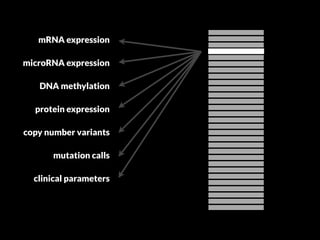

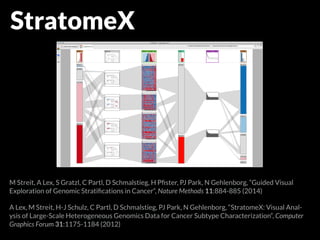

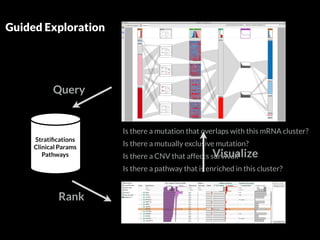

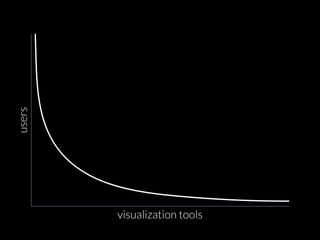

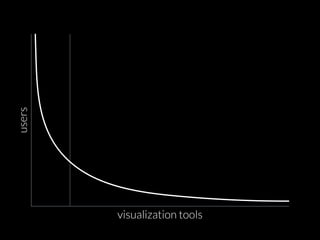

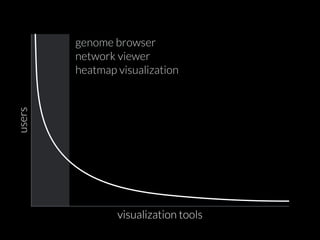

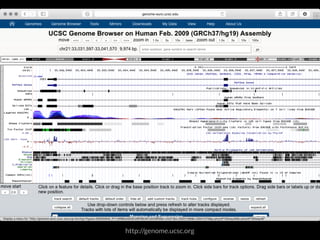

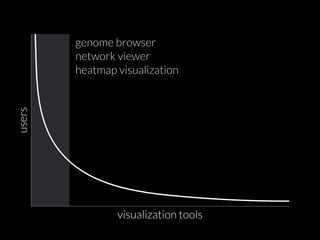

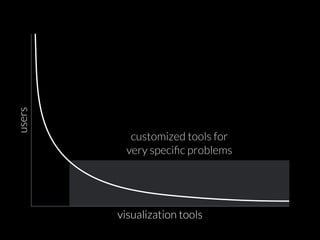

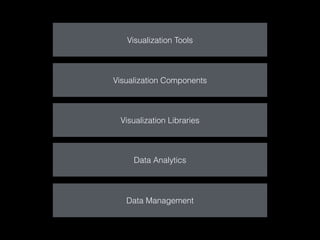

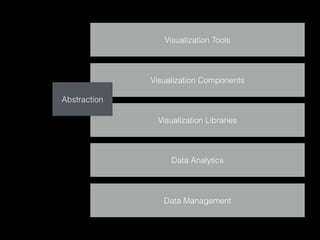

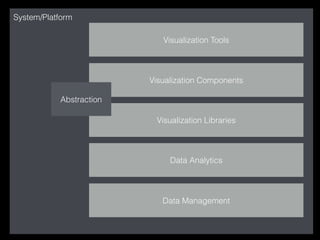

The document discusses visualization approaches for analyzing biomedical omics data, covering the genome, transcriptome, proteome, metabolome, and interactome. It emphasizes that integrating these data types gives better insight into biological processes and their clinical implications. It also highlights the need to build infrastructure and tools for effective data visualization and management in biomedical research.