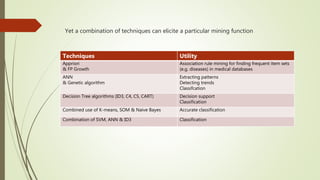

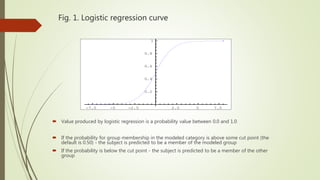

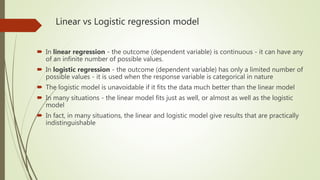

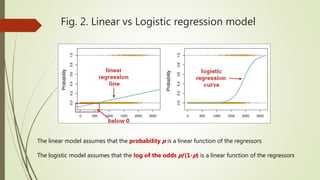

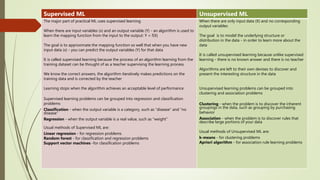

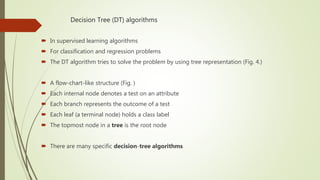

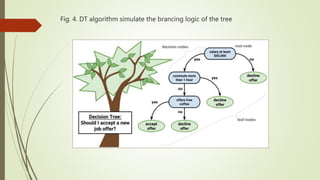

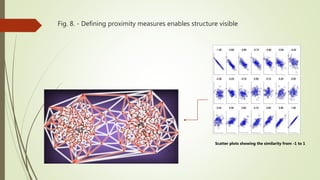

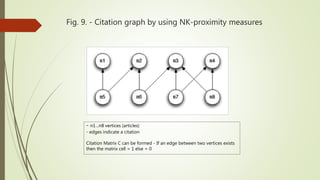

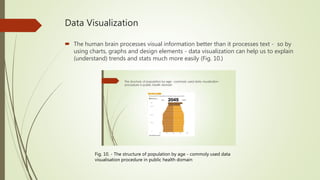

This document surveys data mining methods commonly used in health science and biomedical informatics, including logistic regression, support vector machines, association rule mining, decision trees, and neural networks. It compares linear regression with logistic regression, describes how support vector machines work, and explains the Apriori algorithm for association rule mining as well as decision tree algorithms. Examples and figures illustrate the key concepts.