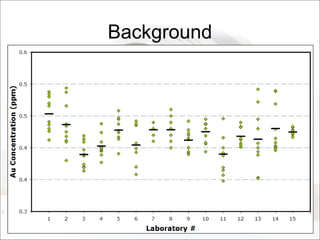

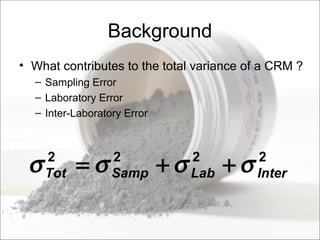

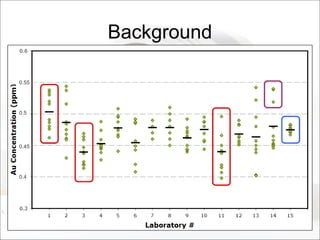

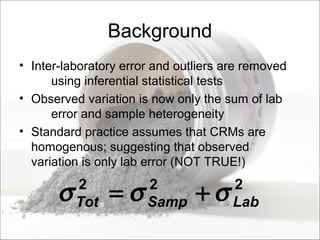

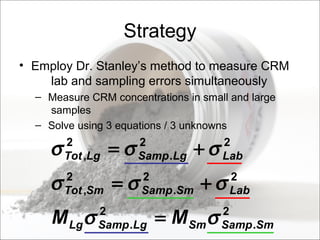

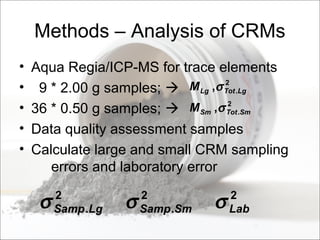

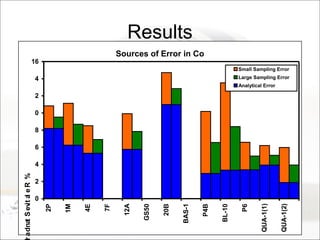

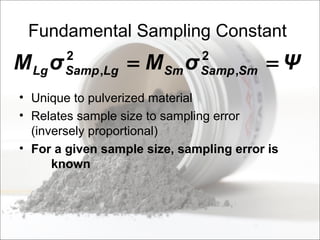

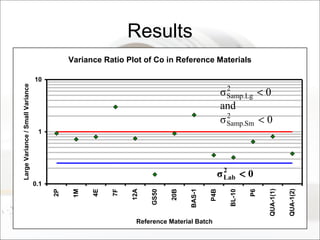

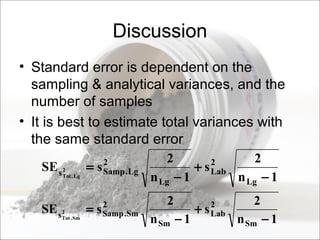

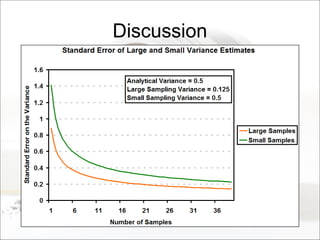

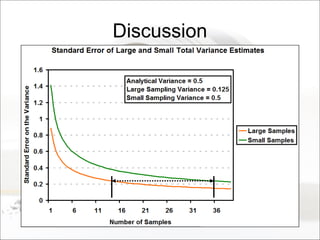

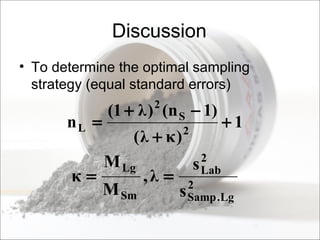

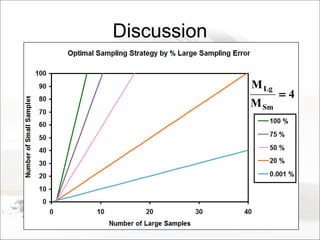

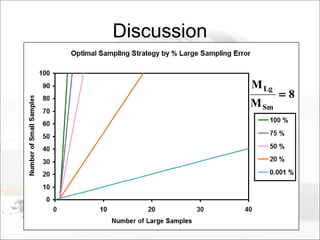

This document summarizes a study of the heterogeneity of certified reference materials (CRMs). The researchers analyzed multiple small and large samples of various CRMs so that sampling error and laboratory error could be measured separately. They found that CRMs exhibit both kinds of error, which contradicts the standard assumption that CRMs are homogeneous. To separate the two, they developed a method that uses the variance of the small-sample results and the variance of the large-sample results to calculate sampling error and laboratory error simultaneously. They conclude that CRM manufacturers should report sampling error measurements and that analytical procedures should aim to optimize precision.
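The summary does not state the formula the researchers used, so the following is only a minimal sketch of how two variances could be split into a sampling part and a laboratory part. It assumes a commonly used model in which sampling variance is inversely proportional to sample mass and laboratory variance does not depend on mass, so that total variance = K / mass + laboratory variance. The function name `split_variance`, its parameters, and the sampling constant `K` are hypothetical and are not taken from the study.

```python
from statistics import variance


def split_variance(small_results, large_results, small_mass, large_mass):
    """Estimate a sampling constant K and a laboratory variance.

    Assumed model (not confirmed by the source):
        total_variance(mass) = K / mass + lab_variance
    Two sample sizes give two equations in the two unknowns.
    """
    if small_mass >= large_mass:
        raise ValueError("small_mass must be less than large_mass")

    # Sample variances of the replicate results at each sample mass.
    var_small = variance(small_results)
    var_large = variance(large_results)

    # Subtracting the two model equations eliminates lab_variance.
    k = (var_small - var_large) / (1.0 / small_mass - 1.0 / large_mass)

    # Substitute K back into the large-sample equation.
    lab_variance = var_large - k / large_mass
    return k, lab_variance


# Illustrative, made-up replicate results (not data from the study).
k, lab_var = split_variance([7, 10, 13], [8, 10, 12], small_mass=1.0, large_mass=5.0)
print(k, lab_var)
```

Under this assumed model, the sampling variance at any mass is `k / mass`. With few replicates the estimates are noisy, and a negative value for either quantity suggests that the data do not support the model rather than a real negative variance.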