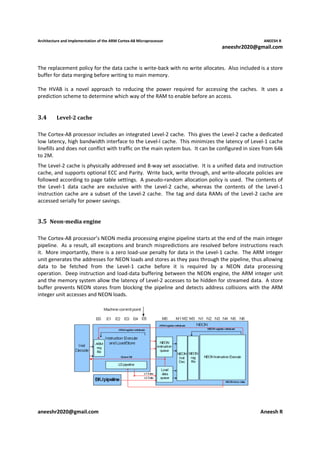

The document discusses the architecture and implementation of the ARM Cortex-A8 microprocessor. It introduces the Cortex-A8 as the first applications microprocessor in ARM's Cortex family, designed to deliver both high performance and power efficiency for mobile and consumer applications. Key features include the Thumb-2 instruction set, NEON media processing, TrustZone security, and an integrated L2 cache. The Cortex-A8 gains further performance from a dual-issue pipeline that is deeper than those of prior ARM processors. Its implementation combines synthesized, structured, and custom techniques to meet aggressive power, performance, and area targets.