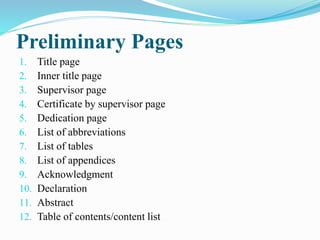

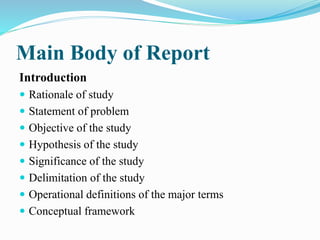

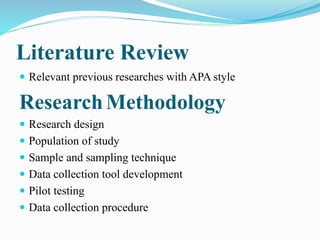

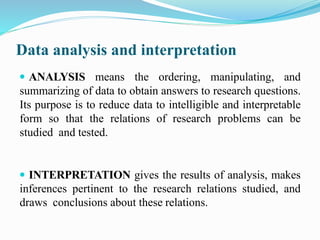

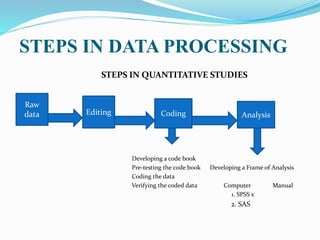

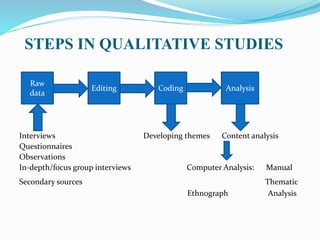

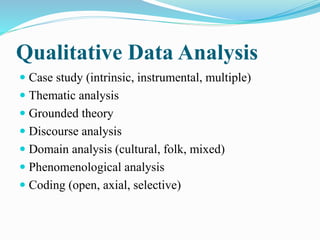

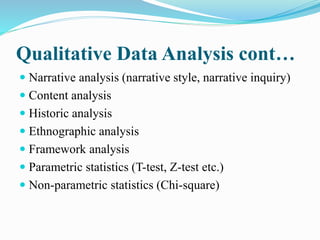

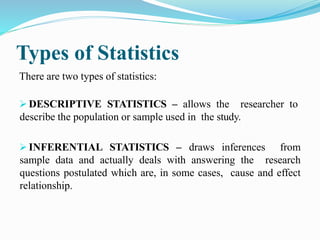

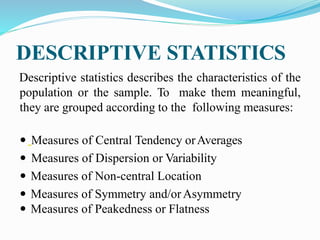

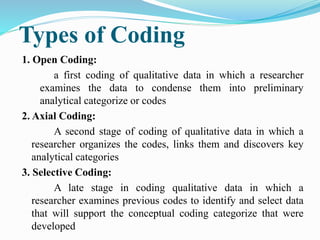

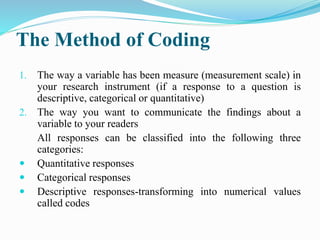

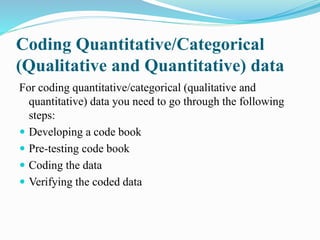

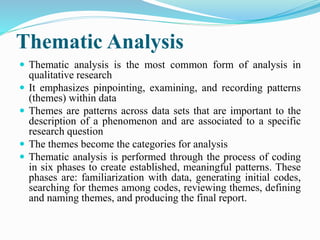

The document outlines how to write a research report, covering both qualitative and quantitative research methods. It includes detailed sections on report organization, data analysis techniques, coding, and thematic analysis, and covers statistical methods alongside qualitative analysis frameworks. It discusses the types of coding, data collection procedures, and statistical tests, both parametric and non-parametric, and emphasizes the importance of sound methodology in research.