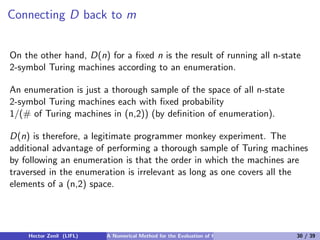

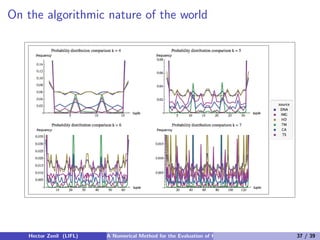

This document discusses a numerical method for evaluating Kolmogorov complexity, addressing its uncomputability and dependency on the choice of universal Turing machine. The work emphasizes the challenges associated with short strings and proposes the concept of algorithmic probability as an alternative measure for assessing complexity. It also highlights the relationship between the complexity and probability measures, underscoring the trade-offs involved in their computational evaluations.

![Algorithmic Complexity (cont.)

Definition

[Kolmogorov(1965), Chaitin(1966)]

K (s) = min{|p|, U(p) = s}

The algorithmic complexity K (s) of a string s is the length of the shortest

program p that produces s running on a universal Turing machine U.

The formula conveys the following idea: a string with low algorithmic

complexity is highly compressible, as the information that it contains can

be encoded in a program much shorter in length than the length of the

string itself.

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 5 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-5-320.jpg)

![Example of an evaluation of K

The string 01010101...01 can be produced by the following program:

Program A:

1: n:= 0

2: Print n

3: n:= n+1 mod 2

4: Goto 2

The length of A (in bits) is an upper bound of K (010101...01).

Connections to predictability: The program A trivially allows a shortcut to

the value of an arbitrary digit through the following function f(n):

if n = 2m then f (n) = 1, f (n) = 0 otherwise.

Predictability characterization (Shnorr) [Downey(2010)]

simple ⇐⇒ predictable

random ⇐⇒ unpredictable

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 7 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-7-320.jpg)

![Algorithmic Probability

There is a measure that describes the expected output of a random

program running on a universal Turing machine.

Definition

[Levin(1977)]

m(s) = Σp:U(p)=s 1/2|p| i.e. the sum over all the programs for which U (a

prefix free universal Turing machine) with p outputs the string s and halts.

m is traditionally called Levin’s semi-measure, Solomonof-Levin’s

semi-measure or the Universal distribution [Kirchherr and Li(1997)].

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 14 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-14-320.jpg)

![The motivation for Solomonoff-Levin’s m(s) (cont.)

But if instead, the monkey is placed on a computer, the chances of

producing a program generating the digits of π are of only 1/50158

because it would take the monkey only 158 characters to produce the first

2400 digits of π using, for example, this C language code:

int a = 10000, b, c = 8400, d, e, f[8401], g; main(){for(; b-c; )

f[b + +] = a/5; for(; d = 0, g = c ∗ 2; c- = 14, printf(“%.4d”, e + d/a),

e = d%a)for(b = c; d+ = f[b] ∗ a, f[b] = d%–g, d/ = g–, –b; d∗ = b);

Implementations in any programming language, of any of the many known

formulae of π are shorter than the expansions of π and have therefore

greater chances to be produced by chance than producing the digits of π

one by one.

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 16 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-16-320.jpg)

![Towards a semi-measure (cont.)

So for m(s) to be a probability measure, the universal Turing machine U

has to be a prefix-free Turing machine, that is a machine that does not

accept as a valid program one that has another valid program in its

beginning, e.g. program 2 starts with program 1, so if program 1 is a valid

program then program 2 cannot be a valid one.

The set of valid programs is said to form a prefix-free set, that is no

element is a prefix of any other, a property necessary to keep

0 < m(s) < 1. For more details see (Kraft’s inequality [Calude(2002)]).

However, some programs halt or some others don’t (actually, most do not

halt), so one can only run U and see what programs produce s

contributing to the sum. It is said then, that m(s) is semi-computable

from below, and therefore is considered a probability semi-measure (as

opposed to a full measure).

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 19 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-19-320.jpg)

![The coding theorem

The greatest contributor in the summation of programs ΣU(p)=s 1/2|p| is

the shortest program p, given that it is when the denominator 2|p| reaches

its smallest value and therefore 1/2|p| its greatest value. The shortest

program p producing s is nothing but K (s), the algorithmic complexity of

s. The coding theorem [Levin(1977), Calude(2002)] describes this

connection between m(s) and K (s):

Theorem

K (s) = −log2 (m(s)) + c

Notice that the coding theorem reintroduces an additive constant! One

may not get rid of it, but the choices related to m(s) are much less

arbitrary than picking a universal Turing machine directly for K (s).

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 21 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-21-320.jpg)

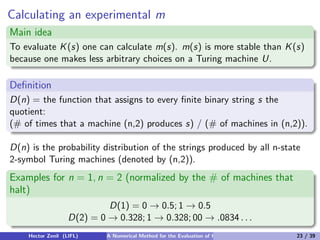

![Calculating an experimental m (cont.)

Definition

[T. Rad´(1962)]

o

A busy beaver is a n-state, 2-color Turing machine which writes a

maximum number of 1s before halting or performs a maximum number of

steps when started on an initially blank tape before halting.

Given that the Busy Beaver function values are known for n-state 2-symbol

Turing machines for n = 2, 3, 4 we could compute D(n) for n = 2, 3, 4.

We ran all 22 039 921 152 two-way tape Turing machines starting with a

tape filled with 0s and 1s in order to calculate D(4)2

Theorem

D(n) is noncomputable (by reduction to Rado’s Busy Beaver problem).

2

A 9-day calculation on a single 2.26 Core Duo Intel CPU.

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 24 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-24-320.jpg)

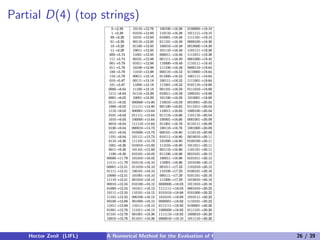

![Complexity Tables

Table: The 22 bit-strings in D(2) from 6 088 (2,2)-Turing machines that halt.

[Delahaye and Zenil(2011)]

0 → .328 010 → .00065

1 → .328 101 → .00065

00 → .0834 111 → .00065

01 → .0834 0000 → .00032

10 → .0834 0010 → .00032

11 → .0834 0100 → .00032

001 → .00098 0110 → .00032

011 → .00098 1001 → .00032

100 → .00098 1011 → .00032

110 → .00098 1101 → .00032

000 → .00065 1111 → .00032

Solving degenerate cases

“0” is the simplest string (together with “1”) according to D.

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 25 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-25-320.jpg)

![Conclusions

Our method aimed to show that reasonable choices of formalisms for

evaluating the complexity of short strings through m(s) give consistent

measures of algorithmic complexity.

[Greg Chaitin (w.r.t our method)] ...the dreaded theoretical hole

in the foundations of algorithmic complexity turns out, in

practice, not to be as serious as was previously assumed.

Our method also seems notable in that it is an experimental approach that

comes into the rescue of the apparent holes left by the theory.

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 38 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-38-320.jpg)

![Bibliography

C.S. Calude, Information and Randomness: An Algorithmic

Perspective (Texts in Theoretical Computer Science. An EATCS

Series), Springer, 2nd. edition, 2002.

G. J. Chaitin. On the length of programs for computing finite binary

sequences. Journal of the ACM, 13(4):547–569, 1966.

G. Chaitin, Meta Math!, Pantheon, 2005.

R.G. Downey and D. Hirschfeldt, Algorithmic Randomness and

Complexity, Springer Verlag, to appear, 2010.

J.P. Delahaye and H. Zenil, On the Kolmogorov-Chaitin complexity for

short sequences, in Cristian Calude (eds) Complexity and Randomness:

From Leibniz to Chaitin. World Scientific, 2007.

J.P. Delahaye and H. Zenil, Numerical Evaluation of Algorithmic

Complexity for Short Strings: A Glance into the Innermost Structure

of Randomness, arXiv:1101.4795v4 [cs.IT].

Hector Zenil (LIFL) A Numerical Method for the Evaluation of Kolmogorov Complexity 39 / 39](https://image.slidesharecdn.com/presentationaronline-110703141459-phpapp02/85/A-Numerical-Method-for-the-Evaluation-of-Kolmogorov-Complexity-An-alternative-to-lossless-compression-algorithms-39-320.jpg)