The document is a comprehensive guide on research data management, focusing on data protection, organization, and sharing practices. It emphasizes the importance of systematic file naming, version control, data classification, and proper folder organization to enhance data accessibility and reusability. Good data management practices are crucial for maintaining data integrity and ensuring seamless collaboration among researchers.

![File-naming #1

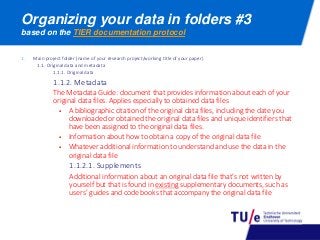

be consistent and aim for concise but informative names

Good file names are consistent (use file-naming

conventions), unique (distinguishes a file from files with

similar subjects as well as different versions of the file)

and meaningful (use descriptive names).

File-naming conventions help you find your data, help

others to find your data and help track which version of

a file is most current

Avoid using special characters in a file name: / : * ? < >

| [ ] & $

Use underscores instead of periods or spaces to

separate logical elements in a file name

Avoid very long names: usually 25 characters is sufficient

length

Names should include all necessary descriptive

information independent of where it is stored

Include dates and a version number on files

Add a readme.txt to each folder in which the file naming

and its meaning is explained

Source: File naming conventions](https://image.slidesharecdn.com/proofrdm2016part2versie2016-03-01-160302150535/85/A-basic-course-on-Reseach-data-management-part-2-protecting-and-organizing-your-data-4-320.jpg)