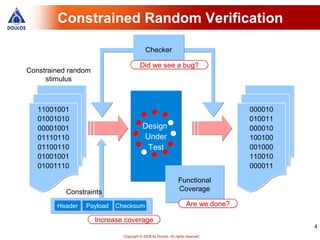

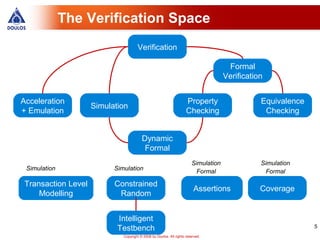

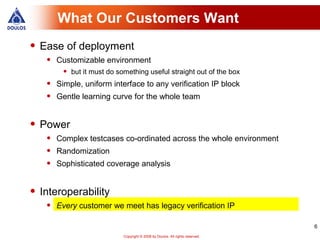

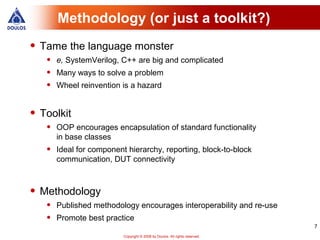

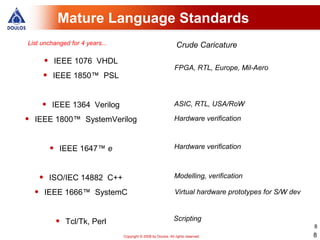

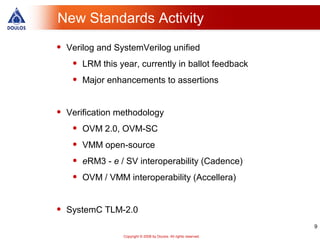

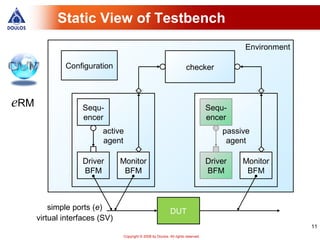

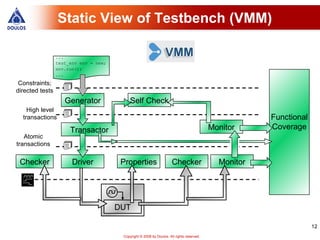

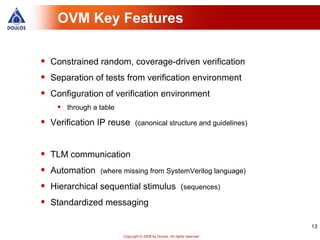

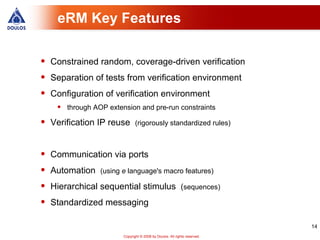

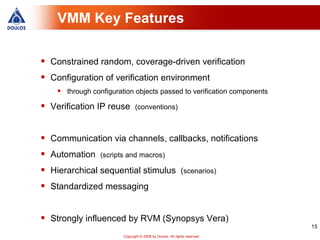

This document surveys the hardware verification methodology landscape: the languages, methodologies, tools, and standards in use, including OVM, VMM, and eRM. It also covers interoperability between methodologies and the convergence of their approaches.

![Scenario Generator (VMM)

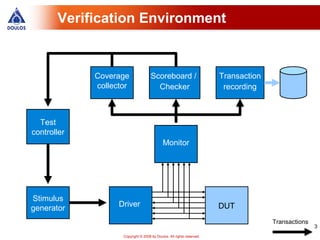

Verification environment

Scenario generator

scenario_set burst

items

[0] atomic

select_scenario

[1] burst

select

[2] RMW

copies of items

generator's output channel

Downstream transactor

21

Copyright © 2008 by Doulos. All rights reserved.](https://image.slidesharecdn.com/jonathanbromleydoulos-100716224102-phpapp02/85/Jonathan-bromley-doulos-21-320.jpg)
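
The data flow in the scenario generator slide can be sketched as a small conceptual model. This is not VMM code (VMM is a SystemVerilog library) and it does not reproduce the VMM API: every class and method here (`Item`, `Scenario`, `ScenarioGenerator`, `randomize`, `run`) is a hypothetical name chosen for illustration, with only `scenario_set`, `select_scenario`, `select`, and `items` borrowed from the slide's labels. It assumes a fixed burst length of 4 and a uniformly random choice among scenarios.

```python
import copy
import random
from collections import deque
from dataclasses import dataclass


@dataclass
class Item:
    """One transaction (hypothetical fields, not taken from the slide)."""
    kind: str  # "read" or "write"
    addr: int


class Scenario:
    """Base class: a scenario owns a list of items that it re-randomizes."""

    def __init__(self):
        self.items = []

    def randomize(self, rng):
        raise NotImplementedError


class AtomicScenario(Scenario):
    """A single independent item."""

    def randomize(self, rng):
        self.items = [Item(rng.choice(["read", "write"]), rng.randrange(256))]


class BurstScenario(Scenario):
    """Several items of one kind at consecutive addresses (length assumed)."""
    LENGTH = 4

    def randomize(self, rng):
        base = rng.randrange(256)
        kind = rng.choice(["read", "write"])
        self.items = [Item(kind, base + i) for i in range(self.LENGTH)]


class RmwScenario(Scenario):
    """Read-modify-write: a read followed by a write to the same address."""

    def randomize(self, rng):
        addr = rng.randrange(256)
        self.items = [Item("read", addr), Item("write", addr)]


class ScenarioGenerator:
    def __init__(self, scenario_set, out_channel, seed=0):
        self.scenario_set = scenario_set  # e.g. [atomic, burst, RMW]
        self.out_channel = out_channel    # queue read by the downstream transactor
        self.rng = random.Random(seed)

    def select_scenario(self):
        """Return the index ("select") of the next scenario to run."""
        return self.rng.randrange(len(self.scenario_set))

    def run(self, n_scenarios):
        for _ in range(n_scenarios):
            select = self.select_scenario()
            scenario = self.scenario_set[select]
            scenario.randomize(self.rng)
            # Send copies, so later re-randomization of the scenario cannot
            # alter items the downstream transactor is still holding.
            for item in scenario.items:
                self.out_channel.append(copy.copy(item))
```

A downstream transactor in this model would simply call `out_channel.popleft()` on the shared `deque`. Overriding `select_scenario` in a subclass is one way to bias or sequence the choice of scenario.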