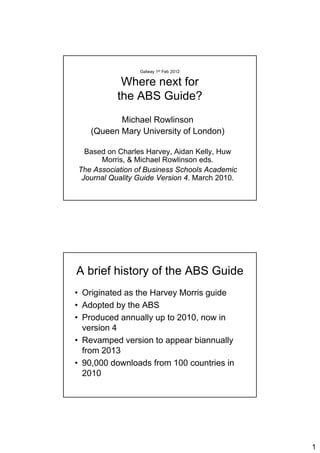

2012.02.01 Where Next for the ABS Guide

Professor Michael Rowlinson, Queen Mary, University of London, UK presented this seminar "Where Next for the ABS Guide" as part of the Whitaker Institute Seminar Series at the Whitaker Institute on 1st February 2012.

Recommended

Impact Factor: An Index of Research Journal

Please subscribe to our YouTube channel OpenKnowledge, or see https://youtu.be/nPLnJqLEknY

Research indices are indicators of the credibility and recognition of a researcher, a journal, an article, and/or an institute. They include the Impact Factor, the Immediacy Index, the h-index, and others. Researchers and students should understand these indices to gain better recognition in academia and research. This first part of the series discusses the Impact Factor as a vital research index. The Impact Factor (IF) is the most important basis on which researchers and readers select journals, and it is a measure of a journal's reputation. The IF measures the frequency with which the "average article" in a journal has been cited in a particular year. This OER covers how the IF is calculated, who provides it, which factors it depends on, its importance for academic recognition, and how to find a journal's IF.
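A minimal sketch of the two-year calculation in Python, using made-up citation counts for a hypothetical journal. The function name and data layout are illustrative assumptions, not any provider's implementation. In Journal Citation Reports, the numerator counts citations to any item the journal published in the two previous years, while the denominator counts only citable items (articles and reviews).

```python
from typing import Mapping


def journal_impact_factor(
    year: int,
    citations_by_pub_year: Mapping[int, int],
    citable_items_by_year: Mapping[int, int],
) -> float:
    """Two-year impact factor of a journal for `year`.

    citations_by_pub_year: citations received during `year`, keyed by the
        publication year of the cited item.
    citable_items_by_year: number of citable items published in each year.
    """
    window = (year - 1, year - 2)  # the two preceding publication years
    cites = sum(citations_by_pub_year.get(y, 0) for y in window)
    items = sum(citable_items_by_year.get(y, 0) for y in window)
    if items == 0:
        raise ValueError("no citable items in the two-year window")
    return cites / items


# Hypothetical journal: citations received in 2018, by year of the cited item.
cites_2018 = {2017: 210, 2016: 150, 2015: 90}  # 2015 falls outside the window
items = {2017: 140, 2016: 100}
print(journal_impact_factor(2018, cites_2018, items))  # 360 / 240 = 1.5
```

Citations to 2015 items are ignored because only the two years before 2018 fall inside the window.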

Understanding the Basics of Journal Metrics

By Leena Shah

Managing Editor, Ambassador for DOAJ

5th Annual Conference of Asian Council of Science Editors [ACSE]

Dubai, 21-22 March 2018

From RAE to REF

This document summarizes a presentation on research assessment in the UK. It outlines the Research Assessment Exercise (RAE) process and its impact on researcher behavior. It then discusses the transition to the new Research Excellence Framework (REF), which will place greater emphasis on citations, impact, and environment. The presentation notes that the RAE and REF influence what researchers study and how they disseminate their work, and that behaviors will continue adjusting in response to assessment changes.

Research metrics Apr2013

This document provides an overview of various bibliometric tools and metrics for measuring scientific output and impact. It discusses journal ranking metrics like impact factor, eigenfactor, SNIP, and SJR. It also covers article-level metrics including F1000 factors and citation analysis tools from Google Scholar, Web of Science, and Scopus. Additionally, it introduces author-level metrics such as the h-index and its variants that can be calculated using various databases and tools. Finally, the document briefly discusses altmetrics and ways to track scholarly impact on social media and the open web.
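The h-index is simple enough to compute directly from a list of per-paper citation counts. Below is a minimal Python sketch, with the g-index shown as one example of the variants mentioned above. The citation counts are hypothetical, and real values depend on which database (Google Scholar, Web of Science, or Scopus) supplies the counts.

```python
from typing import Sequence


def h_index(citations: Sequence[int]) -> int:
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break  # counts only decrease from here, so no larger h is possible
    return h


def g_index(citations: Sequence[int]) -> int:
    """Largest g such that the top g papers have at least g**2 citations in total.

    This version caps g at the number of papers; some definitions pad the
    list with zero-citation papers, which lets g exceed it.
    """
    ranked = sorted(citations, reverse=True)
    g, running_total = 0, 0
    for rank, cites in enumerate(ranked, start=1):
        running_total += cites
        if running_total >= rank * rank:
            g = rank
    return g


papers = [10, 8, 5, 4, 3]  # hypothetical citation counts for one author
print(h_index(papers), g_index(papers))  # 4 5
```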

Research workshop presentation unisa

The document discusses the use of bibliometric data and citation metrics to evaluate research performance and support decision making. It notes the increasing importance of demonstrating research impact and return on investment. Thomson Reuters products like the Journal Citation Reports and Web of Science are positioned as providing objective citation and bibliometric data to help with research assessment and evaluation exercises. The document also provides examples of how this data can be used to analyze the research performance of institutions and individuals.

Altmetrics 101 - Altmetrics in Libraries

LITA’s Altmetrics and Digital Analytics Interest Group is proud to present Heather Coates, Richard Naples, and Lauren Collister in our second free webinar of the season. Heather will introduce the concept of altmetrics with a quick "Altmetrics 101," Richard will discuss the Smithsonian's implementation of Altmetric, and Lauren will share the University of Pittsburgh's experience with Plum Analytics.

Citation & altmetrics - a comparison

Comparison of metrics for scholarly products, created in 2012 by Kellie Kaneshiro & Heather Coates and updated in 2014.

Open Access - Current Themes

Presentation from our AGM and afternoon of talks on the theme of Open.

https://www.eventbrite.co.uk/e/mmit-2016-agm-and-free-talks-on-open-libraries-research-and-education-tickets-28552110130#

Stephen Pinfield - Professor of Information Services Management at University of Sheffield - @StephenPinfield

Introducing SciVal

Using SciVal can help you to evaluate the research performance of individuals and institutions worldwide.

Supporting the ref5

1. The document discusses preparing researchers for the next Research Excellence Framework (REF) assessment in the UK. It covers open access policies, bibliometrics, altmetrics, and ORCID identifiers.

2. Under the open access requirements for REF submissions, journal articles and conference papers must be made publicly available in an institutional repository within 3 months of acceptance.

3. Bibliometrics like citation counts and journal impact factors may play a larger role in REF assessments in the future, though peer review will still be primary. Concerns about gaming the system and disciplinary biases remain.
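A deadline such as "within 3 months of acceptance" is easy to check mechanically. The sketch below assumes that "3 months" means calendar months, with the day clamped to the end of shorter months. The function names are hypothetical, and the actual REF policy includes exceptions and details that are not modelled here.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Add calendar months to `start`, clamping the day to the target month's length."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def deposited_in_time(accepted: date, deposited: date, window_months: int = 3) -> bool:
    """True if the repository deposit falls within `window_months` of acceptance."""
    return accepted <= deposited <= add_months(accepted, window_months)


print(add_months(date(2018, 11, 30), 3))                        # 2019-02-28
print(deposited_in_time(date(2018, 11, 30), date(2019, 3, 1)))  # False
```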

Vincent Larivière - Journal Impact Factors: history, limitations and adverse ...

SciELO - Scientific Electronic Library Online

This panel addresses the use of bibliometric and scientometric methods in assessing journals and the research they publish.

The measurement of the impact of scientific journals through citations originated in the activities of documentalists in the late nineteenth century, who sought to organize the publications of specific fields. These efforts soon took a quantitative turn aimed at understanding trends. This made it possible, for example, to identify the core journals and authors in each field, which became an important input for historians and sociologists of science.

In the treatment of scientific information, the attempts of the first half of the twentieth century materialized in a system offering a new, diachronic form of information retrieval, one that traces the connections the literature establishes after an article is published. This relationship, which expresses the repercussion of new knowledge in the literature, soon came to be associated with the idea of scientific impact, expressed through citations. The citation index then revolutionized access to the literature in the second half of that century. At the same time, it became a unique source of impact indicators, which from then on would represent world science in evaluation processes around the world.

At the turn of the twenty-first century, several factors gave rise to initiatives aimed at broader sources of information that also facilitate free access to scientific information. These factors included subscription costs, the underrepresentation of scientific literature from non-English-speaking countries, and differing practices of scientific communication across fields of knowledge. Beyond the access issue, however, the established need for impact measurement could not be ignored if the consolidated processes for evaluating scientific output were to be given more adequate indicators.

The new information sources, drawing on methodologies proposed by the community specializing in quantitative methods of science evaluation, should therefore contribute indicators that make the assessment of national (Brazilian) scientific output better suited to the national context. In doing so, the group's discussions are expected to highlight the best of what has been produced locally. They should also allow nationally edited scientific journals, particularly those of the SciELO Network, to have their impact recognized, fostering the circulation of global and inclusive scientific knowledge.

Syllabus

Information sources for generating impact indicators; the specific culture of scientific communication in different fields, especially the Human and Social Sciences; the limitations of the Impact Factor and mainstream journal-based indicators; methodologies for…

Bibliometrics in the library

This document discusses the use of bibliometrics and citation analysis at Wageningen University. It provides context about the university's evaluation cycle and criteria. It then describes the current research information system (Metis) and institutional repository (Wageningen Yield) that provide publication data. The document discusses challenges in comparing citation metrics across fields and researchers. It also considers sources of citation data and developing altmetric tools. Finally, it argues that the university library is well-positioned to conduct bibliometric analyses using these local data systems.

2018 Journal Impact Factor

The document provides information about how Journal Impact Factors are calculated. It defines Journal Impact Factor as the average number of times articles from a journal published in the last two years were cited in the current year. It then explains the formula used to calculate Journal Impact Factors and visualizes the calculation process. The document also addresses common questions about what is included in the numerator and denominator and how title changes, supplements, and self-citations are handled in the calculation.
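Self-citations are one of the cases the document addresses. As a hedged illustration, using hypothetical data and a hypothetical function name, the sketch below computes a two-year impact factor from individual citation records. It reports the result both with and without journal self-citations, meaning citations that come from the journal itself.

```python
from typing import Mapping, Sequence, Tuple

# Each record: (citing journal, publication year of the cited item).
CitationRecord = Tuple[str, int]


def impact_factor_with_and_without_self_cites(
    journal: str,
    year: int,
    citations: Sequence[CitationRecord],
    citable_items_by_year: Mapping[int, int],
) -> Tuple[float, float]:
    """Return (JIF, JIF excluding self-citations) for `journal` in `year`.

    `citations` are the citations the journal received during `year`.
    """
    window = {year - 1, year - 2}
    in_window = [citing for citing, cited_year in citations if cited_year in window]
    external = [citing for citing in in_window if citing != journal]
    items = sum(citable_items_by_year.get(y, 0) for y in window)
    if items == 0:
        raise ValueError("no citable items in the two-year window")
    return len(in_window) / items, len(external) / items


# Hypothetical citations received by "Journal A" in 2018.
records = (
    [("Journal A", 2017)] * 20      # self-citations
    + [("Journal B", 2017)] * 100
    + [("Journal C", 2016)] * 80
    + [("Journal B", 2015)] * 50    # outside the 2016-2017 window
)
print(impact_factor_with_and_without_self_cites("Journal A", 2018, records, {2016: 100, 2017: 100}))
# (1.0, 0.9)
```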

Snowball recipe book

This document provides an introduction to Snowball Metrics, which aims to establish standard metrics that research universities can use to understand their strengths and weaknesses. The Snowball Metrics approach is a bottom-up collaboration between research universities and Elsevier. It takes a pragmatic approach, agreeing on core metrics first and reusing existing standards where possible. The goal is for Snowball Metrics to become a globally recognized standard to inform evidence-based institutional decision-making, rather than ranking. Universities own the process of defining the metrics, while Elsevier provides project management support and technical expertise.

SciVal

This document provides an overview and demonstration of the SciVal and Snowball Metrics tools. SciVal is a set of integrated modules that enables institutions to make evidence-based strategic decisions about research performance, benchmarking, and collaboration opportunities. It contains data on over 220 nations and 4,600 research institutions. The demonstration shows how to access and use the Overview, Benchmarking, and Collaboration modules to analyze the research performance of the University of Cape Town across metrics like publications, citations, collaborators, and more. It also provides guidance on selecting appropriate metrics and defining custom research areas for analysis in SciVal.

Altmetrics for Team Science

Academics must provide evidence to demonstrate the impact and outcomes of their scholarly work. This webinar, presented by librarians, will help faculty explore various forms of documentary evidence to support their case for excellence. Sponsored by the IUPUI Office of Academic Affairs.

Note: The webinar included demonstrations of Web of Science & Scopus, which the slides do not reflect.

Bibliometric Tools

This document discusses bibliometric tools that can be used to analyze scholarly literature and research impact. It explains that bibliometrics involves the quantitative analysis of bibliographic items like citations, authors, and keywords. Individual researchers and institutions can use bibliometric tools to evaluate research impact, identify collaborators, analyze journal metrics, and inform hiring and funding decisions. It provides examples of bibliometric databases like Web of Science, Scopus, and Google Scholar that contain citation data and metrics. Finally, it notes some limitations of bibliometric indicators and the need to consider citation behaviors and contexts across disciplines.

Researcher profiles and metrics that matter

This document discusses implementing ORCID identifiers at Northumbria University. It describes Northumbria as a research-rich university with over 1,300 academic staff across four faculties. The Scholarly Publications team provides support for research activities including the institutional repository and research data management. ORCID was first promoted in 2013 and is now integrated into the postgraduate researcher workflow and upcoming staff publishing workflows. ORCID helps with accurate attribution of authors in research metrics reporting and identifying collaborations. Maintaining central support and emphasizing the benefits to individuals have helped adoption.
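One practical reason ORCID helps with attribution is that each iD carries its own check character, so a mistyped identifier can be caught before it reaches a metrics report. ORCID documents this check character as ISO 7064 MOD 11-2. The sketch below implements that scheme; the function names are hypothetical, and 0000-0002-1825-0097 is an example iD used in ORCID's own documentation.

```python
def orcid_check_character(base_digits: str) -> str:
    """ISO 7064 MOD 11-2 check character for the first 15 digits of an ORCID iD."""
    total = 0
    for ch in base_digits:
        total = (total + int(ch)) * 2
    result = (12 - total % 11) % 11
    return "X" if result == 10 else str(result)


def is_valid_orcid(orcid: str) -> bool:
    """Check length, digits, and check character of an iD like 0000-0002-1825-0097."""
    compact = orcid.replace("-", "")
    if len(compact) != 16 or any(ch not in "0123456789" for ch in compact[:15]):
        return False
    return orcid_check_character(compact[:15]) == compact[15].upper()


print(is_valid_orcid("0000-0002-1825-0097"))  # True
print(is_valid_orcid("0000-0002-1825-0098"))  # False (wrong check character)
```

A value of 10 is written as "X", so a valid iD can end in a letter, for example 0000-0002-1694-233X.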

Publishing your Work in a Rapidly Changing Scholarly Communications Environment

This document discusses the rapidly changing scholarly communications environment and issues surrounding publishing research. It notes debates around making federally funded research openly accessible and proposed legislation. It also covers tools for tracking citations and measuring impact, such as the Journal Impact Factor, Eigenfactor, Article Influence Score, and Hirsch index. Various publishing models and players in the field, including open access options, are outlined. Evaluation criteria like the CRAAP test for assessing information sources are presented.

ROI and Beyond - King

The document discusses various metrics that can be used to evaluate research performance and impact. It describes metrics for productivity, influence, efficiency, relative impact compared to norms, specialization within or across disciplines, emerging areas, and indirect impact. Examples of calculations and uses for different metrics are provided.
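Relative impact compared to norms is usually expressed as a ratio of actual to expected citations. The expected value is the average for comparable publications (same field, year, and document type), so 1.0 means the work is cited exactly at the norm. This is a minimal sketch with hypothetical baseline values and a hypothetical function name; real tools differ in how they define fields and build the baselines.

```python
from typing import Sequence, Tuple

# Each paper: (citations received, expected citations for its field/year/type).
Paper = Tuple[int, float]


def relative_citation_impact(papers: Sequence[Paper]) -> float:
    """Mean of per-paper actual/expected citation ratios (1.0 = at the norm)."""
    if not papers:
        raise ValueError("no papers")
    ratios = []
    for actual, expected in papers:
        if expected <= 0:
            raise ValueError("expected citations must be positive")
        ratios.append(actual / expected)
    return sum(ratios) / len(ratios)


# Hypothetical: two papers in a field averaging 6 citations, one in a field averaging 2.
portfolio = [(12, 6.0), (3, 6.0), (4, 2.0)]
print(relative_citation_impact(portfolio))  # (2.0 + 0.5 + 2.0) / 3 = 1.5
```

Averaging per-paper ratios gives each paper equal weight. The other common convention divides total citations by total expected citations, which weights papers from high-citation fields more heavily.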

The State of SA Journals Project - Johann Mouton

Presentation at the National Scholarly Editors' Forum

Johannesburg

9 September 2015

Speaker: Johann Mouton

Journal Citation Reports - Finding Journal impact factors

How to find journal impact factors in Journal Citation Reports to help you decide where to publish your research

Gathering Evidence to Demonstrate Impact

IUPUI Office of Academic Affairs & University Library workshop (2012) on gathering evidence for promotion and tenure dossiers.

Impact Factor Journals as per JCR, SNIP, SJR, IPP, CiteScore

Journal-level metrics

Metrics have become a fact of life in many, if not all, fields of research and scholarship. In an age of information abundance (often termed ‘information overload’), shorthand signals for where to focus our limited attention in the ocean of published literature have become increasingly important.

Research metrics are sometimes controversial, especially when in popular usage they become proxies for multidimensional concepts such as research quality or impact. Each metric may offer a different emphasis based on its underlying data source, method of calculation, or context of use. For this reason, Elsevier promotes the responsible use of research metrics through two “golden rules”: always use both qualitative and quantitative input for decisions (i.e. expert opinion alongside metrics), and always use more than one research metric as the quantitative input. The second rule acknowledges that performance cannot be expressed by any single metric and that every metric has specific strengths and weaknesses. Using multiple complementary metrics therefore helps to provide a more complete picture and to reflect different aspects of research productivity and impact in the final assessment. (Elsevier)

Finding Journal Impact Factor using Journal Citation Reports

Journal Citation Reports - InCites provides journal impact factors and rankings across various disciplines. It collects citation data and calculates metrics such as the impact factor, immediacy index, and half-life for journals indexed in Web of Science. The impact factor measures how frequently the average article in a journal is cited in a given year. It is calculated by dividing the number of times articles published in the previous two years were cited in the current year by the total number of citable items published in those two years. The document provides instructions on how to access Journal Citation Reports through the library database to search for journals, compare metrics between journals, and view trends in journal-level indicators over time.
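The immediacy index mentioned above measures how quickly a journal's articles are cited. It divides the citations received in a year by items published in that same year by the number of citable items published that year. Below is a minimal sketch with hypothetical data and a hypothetical function name.

```python
from typing import Mapping, Sequence


def immediacy_index(
    year: int,
    cited_pub_years: Sequence[int],
    citable_items_by_year: Mapping[int, int],
) -> float:
    """Citations in `year` to same-year items, per citable item published in `year`.

    cited_pub_years: publication year of the cited item, one entry per citation
        the journal received during `year`.
    """
    items = citable_items_by_year.get(year, 0)
    if items == 0:
        raise ValueError("no citable items published in this year")
    same_year = sum(1 for y in cited_pub_years if y == year)
    return same_year / items


# Hypothetical: 45 of the journal's 2018 citations went to its 2018 items.
received_2018 = [2018] * 45 + [2017] * 80 + [2016] * 60
print(immediacy_index(2018, received_2018, {2018: 150}))  # 45 / 150 = 0.3
```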

Impact factor (using impact factor to assess the impact of a journal)

The impact factor (IF) is a measure of the frequency with which the average article in a journal has been cited in a particular year. It is used to gauge the importance or rank of a journal based on how often its articles are cited.

Impact factors are useful, but they should not be the only consideration when judging quality. Not all journals are tracked in the JCR database, and those that are not have no impact factor. New journals must build a record of citations before they can even be considered for inclusion. The scientific worth of an individual article has nothing to do with the impact factor of the journal it appears in.
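Because the impact factor is an average, a few highly cited papers can pull it well above what a typical article in the journal receives. The sketch below compares an impact-factor-style mean with the median for ten articles. The citation counts and the function name are made up for illustration.

```python
from statistics import median
from typing import Dict, Sequence


def citation_summary(citations: Sequence[int]) -> Dict[str, float]:
    """Mean, median, and share of articles cited less often than the mean."""
    if not citations:
        raise ValueError("no articles")
    mean = sum(citations) / len(citations)
    below = sum(1 for c in citations if c < mean)
    return {
        "mean": mean,
        "median": float(median(citations)),
        "share_below_mean": below / len(citations),
    }


# Hypothetical citation counts for ten articles in one journal.
counts = [0, 0, 1, 1, 2, 2, 3, 4, 6, 41]
print(citation_summary(counts))
# {'mean': 6.0, 'median': 2.0, 'share_below_mean': 0.8}
```

Here the average is 6.0 citations, yet eight of the ten articles are cited less often than that. This is one reason a journal-level average says little about any individual article.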

Freely Available Scholarly Metrics

This document provides an overview of bibliometric metrics for evaluating scholarly work, including both freely available and paid subscription metrics. It discusses journal-level metrics like impact factor, acceptance rates, and Scimago rankings. Article-level metrics mentioned include citations, downloads, and Altmetric scores. Author-level metrics like the h-index are also covered. Paid bibliometrics from Web of Science and Scopus are noted. Freely available options highlighted are Google Scholar for citations, rankings, and profiles, as well as Scimago for journal rankings. Caveats about the limitations of metrics are also summarized.

Judging research quality bibliometrics and beyond

This document summarizes and discusses various methods for judging research quality, including bibliometrics and alternative approaches. It discusses bibliometrics such as impact factors and how they are calculated. However, it notes that bibliometrics only measure one dimension of quality and do not reflect the broader societal impacts of research. The document advocates considering additional factors beyond citation counts, such as qualitative evaluations and altmetrics, to more fully capture research quality.

2013.11.14 Big Data Workshop Michael Browne

Michael Browne from the Irish Centre for High End Computing presented this overview of Big Data and Computer Architecture during the Big Data Workshop hosted by the Social Sciences Computing Hub at the Whitaker Institute on the 14th November 2013

2012.06.13 Open Innovation: The Legal Implications part 2

Patricia McGovern, Head of the Intellectual Property Department, DFMG Solicitors, presented "Open Innovation: The Legal Implications" at the IntertradeIreland All-Island Innovation Programme annual conference 2012, Exploiting Industry and University Research, Development and Innovation: Why it Matters held at National University of Ireland, Galway, 12 - 13 June 2012. Part 2

More Related Content

What's hot

Introducing SciVal

Using SciVal can help you to evaluate the research performance of individuals and institutions worldwide.

Supporting the ref5

1. The document discusses preparing researchers for the next Research Excellence Framework (REF) assessment in the UK. It covers open access policies, bibliometrics, altmetrics, and ORCID identifiers.

2. Open access requirements for REF submissions are that journal articles and conference papers be made publicly available within 3 months of acceptance in an institutional repository.

3. Bibliometrics like citation counts and journal impact factors may play a larger role in REF assessments in the future, though peer review will still be primary. Concerns about gaming the system and disciplinary biases remain.

Vincent Larivière - Journal Impact Factors: history, limitations and adverse ...

Vincent Larivière - Journal Impact Factors: history, limitations and adverse ...SciELO - Scientific Electronic Library Online

This panel addresses the application of bibliometric and scientometric methods applied in the assessment of journals and the research they publish.

The measurement of impact of scientific journals through citations has its origin in the documentalists activities at the late nineteenth century to organize the publications of specific areas. The unfolding of these efforts soon undertook quantitative approaches aiming at understanding trends which allowed us to establish, for example, the nucleus of journals and authors in the various areas, making it an important input for science historians and sociologists.

Regarding the treatment of scientific information, the essays of the first half of the twentieth century were materialized into a system that would offer a new form of information retrieval – in the diachronic sense – allowing to identify the relation that literature establishes from the publishing of an article. This relationship, which expresses the repercussion of a new knowledge in the literature, did not take much time to attribute the idea of scientific impact, whose expression occurs through citations. The citation index then revolutionizes the way of accessing literature in the second half of that century, at the same time as it becomes a unique source for impact indicators, which from there would represent the world science in evaluative processes around of the world.

At the turn of the twenty first century, many factors – such as subscription costs, the underrepresentation of the scientific literature of non-English-speaking countries, as well as the different practices of scientific communication among areas of knowledge – have given rise to initiatives aimed at broader sources of information, while at the same time facilitating free access to scientific information. However, besides the access issue, the already established need for impact measurement could not be ignored in order to provide the consolidated processes of evaluation of scientific output with more adequate indicators.

In this sense, it is necessary that the new information sources, taking advantage of the new methodologies proposed by the community specialized in quantitative methods of science evaluation, may contribute with indicators that make the assessment of national (Brazilian) scientific output more adequate to the national scenario. In doing so, it is hoped that the group’s discussions will contribute not only to evidence the best that has been produced locally, but also to allow scientific journals edited nationally, particularly those of the SciELO Network, to have their impact recognized, allowing circulation of global and inclusive scientific knowledge.

Syllabus

Information sources for generating impact indicators; specificity of the culture of scientific communication in the different areas, especially the Human and Social Sciences; the limitations of the Impact Factor and mainstream journal-based indicators; methodologies for…Bibliometrics in the library

This document discusses the use of bibliometrics and citation analysis at Wageningen University. It provides context about the university's evaluation cycle and criteria. It then describes the current research information system (Metis) and institutional repository (Wageningen Yield) that provide publication data. The document discusses challenges in comparing citation metrics across fields and researchers. It also considers sources of citation data and developing altmetric tools. Finally, it argues that the university library is well-positioned to conduct bibliometric analyses using these local data systems.

2018 Journal Impact Factor

The document provides information about how Journal Impact Factors are calculated. It defines Journal Impact Factor as the average number of times articles from a journal published in the last two years were cited in the current year. It then explains the formula used to calculate Journal Impact Factors and visualizes the calculation process. The document also addresses common questions about what is included in the numerator and denominator and how title changes, supplements, and self-citations are handled in the calculation.

Snowball recipe book

This document provides an introduction to Snowball Metrics, which aims to establish standard metrics that research universities can use to understand their strengths and weaknesses. The Snowball Metrics approach is a bottom-up collaboration between research universities and Elsevier. It takes a pragmatic approach, agreeing on core metrics first and reusing existing standards where possible. The goal is for Snowball Metrics to become a globally recognized standard to inform evidence-based institutional decision-making, rather than ranking. Universities own the process of defining the metrics, while Elsevier provides project management support and technical expertise.

SciVal

This document provides an overview and demonstration of the SciVal and Snowball Metrics tools. SciVal is a set of integrated modules that enables institutions to make evidence-based strategic decisions about research performance, benchmarking, and collaboration opportunities. It contains data on over 220 nations and 4,600 research institutions. The demonstration shows how to access and use the Overview, Benchmarking, and Collaboration modules to analyze the research performance of the University of Cape Town across metrics like publications, citations, collaborators, and more. It also provides guidance on selecting appropriate metrics and defining custom research areas for analysis in SciVal.

Altmetrics for Team Science

Academics must provide evidence to demonstrate the impact and outcomes of their scholarly work. This webinar, presented by librarians, will help faculty explore various forms of documentary evidence to support their case for excellence. Sponsored by the IUPUI Office of Academic Affairs.

Note: The webinar included demonstrations of Web of Science & Scopus, which the slides do not reflect.

Bibliometric Tools

This document discusses bibliometric tools that can be used to analyze scholarly literature and research impact. It explains that bibliometrics involves the quantitative analysis of bibliographic items like citations, authors, and keywords. Individual researchers and institutions can use bibliometric tools to evaluate research impact, identify collaborators, analyze journal metrics, and inform hiring and funding decisions. It provides examples of bibliometric databases like Web of Science, Scopus, and Google Scholar that contain citation data and metrics. Finally, it notes some limitations of bibliometric indicators and the need to consider citation behaviors and contexts across disciplines.

Researcher profiles and metrics that matter

This document discusses implementing ORCID identifiers at Northumbria University. It describes Northumbria as a research-rich university with over 1,300 academic staff across four faculties. The Scholarly Publications team provides support for research activities including the institutional repository and research data management. ORCID was first promoted in 2013 and is now integrated into the postgraduate researcher workflow and upcoming staff publishing workflows. ORCID helps with accurate attribution of authors in research metrics reporting and identifying collaborations. Maintaining central support and emphasizing the benefits to individuals have helped adoption.

Publishing your Work in a Rapidly Changing Scholarly Communications Environment

This document discusses the rapidly changing scholarly communications environment and issues surrounding publishing research. It notes debates around making federally funded research openly accessible and proposed legislation. It also covers tools for tracking citations and measuring impact, such as the Journal Impact Factor, Eigenfactor, Article Influence Score, and Hirsch index. Various publishing models and players in the field, including open access options, are outlined. Evaluation criteria like the CRAAP test for assessing information sources are presented.

ROI and Beyond - King

The document discusses various metrics that can be used to evaluate research performance and impact. It describes metrics for productivity, influence, efficiency, relative impact compared to norms, specialization within or across disciplines, emerging areas, and indirect impact. Examples of calculations and uses for different metrics are provided.

The State of SA Journals Project - Johann Mouton

Presentation at the National Scholarly Editors' Forum

Johannesburg

9 September 2015

Speaker: Johann Mouton

Journal Citation Reports - Finding Journal impact factors

How to find journal impact factors in Journal Citation Reports to help you decide where to publish your research.

Gathering Evidence to Demonstrate Impact

IUPUI Office of Academic Affairs & University Library workshop (2012) on gathering evidence for promotion and tenure dossiers.

Impact Factor Journals as per JCR, SNIP, SJR, IPP, CiteScore

Journal-level metrics

Metrics have become a fact of life in many, if not all, fields of research and scholarship. In an age of information abundance (often termed ‘information overload’), shorthand signals for where to focus our limited attention in the ocean of published literature have become increasingly important.

Research metrics are sometimes controversial, especially when, in popular usage, they become proxies for multidimensional concepts such as research quality or impact. Each metric may offer a different emphasis depending on its underlying data source, method of calculation, or context of use. For this reason, Elsevier promotes the responsible use of research metrics through two “golden rules”: always use both qualitative and quantitative input for decisions (i.e. expert opinion alongside metrics), and always use more than one research metric as the quantitative input. The second rule acknowledges both that performance cannot be expressed by any single metric and that every metric has specific strengths and weaknesses. Using multiple complementary metrics therefore helps to provide a more complete picture and to reflect different aspects of research productivity and impact in the final assessment. (Elsevier)

Finding Journal Impact Factor using Journal Citation Reports

Journal Citation Reports (InCites) provides journal impact factors and rankings across various disciplines. It collects citation data and calculates metrics such as the impact factor, immediacy index, and half-life for journals indexed in Web of Science. The impact factor measures how frequently the average article in a journal is cited in a given year. It is calculated by dividing the number of citations received in that year by items the journal published in the previous two years by the total number of citable items it published in those two years. The document provides instructions on accessing Journal Citation Reports through the library database to search for journals, compare metrics between journals, and view trends in journal-level indicators over time.
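The two-year calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not Clarivate's implementation: the function name `two_year_impact_factor` and all journal figures are hypothetical, and the sketch assumes the numerator counts every citation received in the JCR year to items from the two prior years, while the denominator counts only citable items (for example, articles and reviews).

```python
from typing import Mapping


def two_year_impact_factor(
    year: int,
    citations_in_year: Mapping[int, int],
    citable_items: Mapping[int, int],
) -> float:
    """Two-year impact factor for `year` (hypothetical helper).

    citations_in_year: publication year -> citations received during `year`
        by items published in that publication year.
    citable_items: publication year -> number of citable items published
        in that year.
    """
    window = (year - 1, year - 2)  # the two preceding publication years
    numerator = sum(citations_in_year.get(y, 0) for y in window)
    denominator = sum(citable_items.get(y, 0) for y in window)
    if denominator == 0:
        raise ValueError("no citable items in the two-year window")
    return numerator / denominator


# Hypothetical journal data (illustrative, not real figures).
cites_2023 = {2021: 150, 2022: 225}  # citations made in 2023
items = {2021: 120, 2022: 130}       # citable items published per year
jif_2023 = two_year_impact_factor(2023, cites_2023, items)
print(f"{jif_2023:.3f}")  # 1.500  (375 citations / 250 items)
```

Keying both counts by publication year keeps the two-year window explicit, so the same helper works for any JCR year once the matching counts are supplied.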

Impact factor (using impact factor to assess the impact of a journal)

The impact factor (IF) is a measure of the frequency with which the average article in a journal has been cited in a particular year. It is used to gauge the importance or rank of a journal based on how often its articles are cited.

Impact factors are useful, but they should not be the only consideration when judging quality. Not all journals are tracked in the JCR database, and those that are not have no impact factor. New journals must build a citation record before they can even be considered for inclusion. Finally, a journal's impact factor says nothing about the scientific worth of any individual article it publishes.

Freely Available Scholarly Metrics

This document provides an overview of bibliometric metrics for evaluating scholarly work, including both freely available and paid subscription metrics. It discusses journal-level metrics like impact factor, acceptance rates, and Scimago rankings. Article-level metrics mentioned include citations, downloads, and Altmetric scores. Author-level metrics like the h-index are also covered. Paid bibliometrics from Web of Science and Scopus are noted. Freely available options highlighted are Google Scholar for citations, rankings, and profiles, as well as Scimago for journal rankings. Caveats about the limitations of metrics are also summarized.

Judging research quality bibliometrics and beyond

This document summarizes and discusses various methods for judging research quality, including bibliometrics and alternative approaches. It discusses bibliometrics such as impact factors and how they are calculated. However, it notes that bibliometrics only measure one dimension of quality and do not reflect the broader societal impacts of research. The document advocates considering additional factors beyond citation counts, such as qualitative evaluations and altmetrics, to more fully capture research quality.

What's hot

Vincent Larivière - Journal Impact Factors: history, limitations and adverse ...

Viewers also liked

2013.11.14 Big Data Workshop Michael Browne

Michael Browne from the Irish Centre for High End Computing presented this overview of Big Data and Computer Architecture during the Big Data Workshop hosted by the Social Sciences Computing Hub at the Whitaker Institute on the 14th November 2013.

2012.06.13 Open Innovation: The Legal Implications part 2

Patricia McGovern, Head of the Intellectual Property Department, DFMG Solicitors, presented "Open Innovation: The Legal Implications" at the IntertradeIreland All-Island Innovation Programme annual conference 2012, Exploiting Industry and University Research, Development and Innovation: Why it Matters held at National University of Ireland, Galway, 12 - 13 June 2012. Part 2

2011.04.15 bring knowledge to life

Dr Jimmy Huang, Warwick Business School, University of Warwick, UK presented this seminar "Bring Knowledge to Life: A Case Study of National Palace Museum, Taipei" at the Whitaker Institute on 15th April 2011.

2012.07.02 The Story from St. James'

Dr. Jeanne Moriarty, St. James' Hospital Dublin, presented "The Story from St. James'" at Simulation in Irish Medical Education: Where Are We, and Where Are We Going? held at NUI Galway on the 2nd July 2012.

2013.11.14 Big Data Workshop Adam Ralph - 2nd set of slides

Adam Ralph from the Irish Centre for High End Computing presented this Introduction to Basic R during the Big Data Workshop hosted by the Social Sciences Computing Hub at the Whitaker Institute on the 14th November 2013.

2012.06.15 Marie Curie Programme FP7 Information Session

Dr. Jennifer Brennan, National Contact Point for Marie Curie, Irish Universities Association presented this seminar "FP7 Information Session: Marie Curie Programme" at the Whitaker Institute on 15th June 2012.

2012.06.12 Research on Academic Entrepreneurship: Lessons Learnt Part 2

This document summarizes a presentation on academic entrepreneurship research in the U.S. and Europe. It discusses key findings from quantitative and qualitative studies on university technology transfer. Some findings include that organizational factors help explain differences in university performance, and that incentives for faculty involvement are important. It also outlines remaining questions and an agenda for additional research on the institutions and agents involved in academic entrepreneurship.

2012.08.23 Scandinavian Insights on International Entrepreneurship and Innova...

Professor Svante Andersson, Halmstad University, Sweden presented this seminar as part of a session on Scandinavian Insights on International Entrepreneurship and Innovation at the Whitaker Institute on the 23rd August 2012.

2012.07.02 the story from the asset centre

Dr. John McAdoo, ASSET Centre, UCC, presented "The Story from the ASSET Centre" at Simulation in Irish Medical Education: Where Are We, and Where Are We Going? held at NUI Galway on the 2nd July 2012.

2016.05.05 collective intelligence – an innovative research approach to promo...

Dr. Patricia McHugh, Dr. Veronica McCauley & Dr. Kevin Davison presented this seminar on Collective Intelligence - An Innovative Research Approach to Promoting Ocean Literacies in Ireland under the Sea Change project. They spoke on behalf of the Social Innovation, Participation and Policy Cluster (SIPPs) as part of the Whitaker Institute's Ideas Forum on 5th May 2016.

2013.11.14 Big Data Workshop Bruno Voisin

Bruno Voisin from the Irish Centre for High End Computing presented this Introduction to Data Analytics Techniques and their Implementation in R during the Big Data Workshop hosted by the Social Sciences Computing Hub at the Whitaker Institute on the 14th November 2013.

2011.02.03 Publishing in Top Journals

Professor Martin Kilduff, Judge Business School, University of Cambridge, UK presented this seminar "Publishing in Top Journals: A guide for the perplexed" at the Whitaker Institute on 3rd February 2011.

2011.11.28 Collective Intelligence - Problems and Possibilities

Dr Michael Hogan, School of Psychology, NUI Galway presented this seminar "Collective Intelligence - Problems and Possibilities" as part of the Break the Barrier Seminar Series at the Whitaker Institute on 28th November 2011.

2012.02.08 An Insider's Guide to Getting Published in International Journals

Professor Thomas Garavan, Kemmy Business School, University of Limerick presented this seminar "An Insider's Guide to Getting Published in International Journals" as part of the Whitaker Institute Seminar Series at the Whitaker Institute on 8th February 2012.

2012.06.13 Economic Growth and Academic Entrepreneurship: Lessons and Implica...

Professor Donald Siegel, University at Albany, State University of New York, presented the second keynote address "Economic Growth and Academic Entrepreneurship: Lessons and Implications for Industry, Academia and Policymakers" at the IntertradeIreland All-Island Innovation Programme annual conference 2012, Exploiting Industry and University Research, Development and Innovation: Why it Matters held at National University of Ireland, Galway, 12 - 13 June 2012. Part 1

2012.06.20 International and Collaborative Research

Professor Chris Brewster, Henley Business School, University of Reading, UK presented this seminar "International and Collaborative Research" at the Whitaker Institute on 20th June 2012.

2012.08.23 An ethnography of innovation processes in the food industry

Dr. Thomas Hoholm, BI Norwegian Business School presented this seminar, "The Contrary Forces of Innovation: An ethnography of innovation processes in the food industry", as part of a session on Scandinavian Insights on International Entrepreneurship and Innovation at the Whitaker Institute on the 23rd August 2012.

2012.09.05 The Management of Human Resources and the Governance of Employment

This document discusses the governance of employment and human resource management. It argues that while HRM focuses on managing people within organizations, governance looks at the wider social context and the actors involved in governing work. Governance recognizes the complexity, distributed nature, dynamism and contingency of the social, economic and political factors influencing work. The governance of employment involves many actors, such as government, employers, unions and training providers, operating through hierarchies, markets and networks to shape labor markets and vocational education and training policy. The document examines reforms to vocational training policy and institutions over time in the UK and Ireland as an example of the challenges of establishing stable governance arrangements.

2012.02.18 Reducing Human Error in Healthcare - Getting Doctors to Swallow th...

Dr Paul O'Connor, Whitaker Institute, NUI Galway presented this seminar "Reducing Human Error in Healthcare - Getting Doctors to Swallow the Blue Pill" as part of the NUI Galway Research Office Lunchtime Seminar Series on 18th January 2012.

2011.02.04 The Awestruck Effect: Transformational Leadership and Followers’ E...

Professor Martin Kilduff, Judge Business School, University of Cambridge, UK presented this seminar "The Awestruck Effect: Transformational Leadership and Followers’ Emotion Suppression" at the Whitaker Institute on 4th February 2011.

Similar to 2012.02.01 Where Next for the ABS Guide

Responsible metrics in research assessment

Researcher KnowHow session presented on 19-11-2020 by Zuzana Oriou, Open Research Team, University of Liverpool Library.

Cardiff bibliometrics repository ref_july 2014

This document discusses bibliometrics and their use at Cardiff University. It begins with an introduction to bibliometric measures like citations, impact factors, and altmetrics. It then discusses how bibliometric data is presented in Cardiff's institutional repository and how it was used to provide context for research evaluations in the UK's REF2014 assessment exercise. The document concludes by outlining Cardiff's trial of the SciVal analytics tool and plans for a new research information system to better integrate bibliometric and altmetric data.

Scopus Journals

This document provides guidelines for selecting the appropriate journal to submit research for publication. It discusses exploring a journal's aims and scope, checking if similar articles have been published, considering restrictions and impact factor. Online tools are presented to help identify journals. Common reasons for manuscript rejection are outlined. The importance of thoroughly responding to reviewer comments is emphasized.

Quality Assurance for Journal Guidance

Definitions

What is the need for quality assurance in journals?

Type of journals

Bibliometric indicators

How to identify credible journals?

Predatory/cloned journals

Dsc 5530 lecture 2

This document discusses literature on torrefaction economics and technologies. It summarizes 34 peer-reviewed and non-peer-reviewed studies on mass/energy balances, plant sizes, capital and operating costs, ROIs, and feedstock costs for torrefaction systems. It also compares the properties of wood, torrefied wood, and other fuels. Finally, it outlines various torrefaction reactor technologies and the companies involved in each.

A Review Of Reasons For Rejection Of Manuscripts

This document summarizes reasons for the rejection of manuscripts submitted to academic journals. It notes that rejection is common: 85-90% of prominent authors have experienced it. Technical reasons for rejection include incomplete data, poor analysis, and inappropriate methodology. Editorial reasons include the manuscript being out of scope or lacking proper structure and formatting. Characteristics of successful manuscripts include an informative title and abstract, a strong literature review, a clearly described methodology, and conclusions that discuss the work's significance. The peer review process evaluates a manuscript's originality, validity, and potential contribution to help editors decide whether to accept or reject it.

Wenner gren citation-non_sense_reedijk

This document summarizes Jan Reedijk's presentation on the value and accuracy of citation metrics in scientific evaluations. The presentation discusses how impact factors, h-indices, and other bibliometric indicators are often misused or inaccurately applied to evaluate scientists, research groups, and journals. It provides examples of errors in large citation databases, variations in h-index values depending on the data source, and strategies employed by journals to artificially inflate their impact factors. The document recommends that research assessment not rely solely on bibliometric indicators and calls for greater transparency and accuracy in bibliometric analysis.

Faux

This document provides guidance on preparing and publishing academic papers in journals. It discusses best practices for each section of a paper from the title page to conclusions. It also covers the peer review process and strategies for revising papers based on reviewer feedback. Additionally, it examines debates around measuring the impact and quality of academic research, journals, and institutions. Metrics discussed include journal rankings, citation counts, the H-index, and holistic approaches that consider impact on knowledge, teaching, practice, policy, the economy, and society. The document aims to help authors navigate the publishing process and issues relating to research assessment.

Feedback on the draft summary report

The document provides feedback on a draft summary report for research evaluation methodology in the Czech Republic. It covers many topics, and opinions are divided on several issues. Some view the reports as well-written and justified, while others see them as too general. There is contrasting feedback on topics such as self-assessment, treatment of PhD students and temporary workers, and assessment of the research environment. The document also notes a few incorrect statements in the draft report and provides counterpoints on issues such as applied research outputs and dividing duties between teaching and research. It advocates beginning a learning process in applying the new methodology.

Bibliometrics: journals, articles, authors (v2)

Presented to members of the Psychology department as part of the New Tricks Seminar series (February 2016)

• journal metrics using WoS and Scopus

• article level metrics in WoS, Scopus and Google Scholar, and from publishers and the differences in each. Touch on altmetrics.

• author metrics in the above. Touch on Publish or Perish

Tanya Williamson, Academic Liaison Librarian

Author Seminar NUI Galway July 2019

This document discusses how to write a good paper and get published in a high-quality journal. It provides information on identifying the right journal, how publishers add value, writing the different sections of a paper, addressing authorship and references correctly, and tips for increasing the impact of published research. Key metrics for evaluating journals like impact factor, CiteScore, and submissions/acceptances over time are presented for a selection of Elsevier journals. The presentation aims to help researchers improve their chances of successful publication.

Improving the quality of my journal and increase its impact factor

Presentation given by Danilo Collalto Paulo at the IV Ciclo de Debates Periódicos UFSC (4th UFSC Journals Debate Series), held on 5 May 2015 at the UFSC Central Library (Biblioteca Central da UFSC).

Abstracting and indexing_Dr. Guenther Eichhorn

This document provides an overview of various abstracting and indexing services including Thomson Reuters (ISI), PubMed/Medline, Google Scholar, Microsoft Academic Search, and Scopus/EI Compendex. It describes the application and evaluation process for each service, what databases and indexes they contain, and how journals can improve metrics like the Impact Factor. Guidelines are provided for optimizing discoverability in these services to increase citations and rankings.

Standards libraries higher education

The document provides standards for libraries in higher education. It outlines 9 principles that academic libraries should adhere to, including institutional effectiveness, professional values, educational role, discovery, collections, space, management/administration, personnel, and external relations. For each principle, the document lists performance indicators that further define how the principle can be achieved. The standards are designed to guide libraries and help them advance their role in supporting education and their institution's mission through assessment and continuous improvement.

Preparing and publishing a scientific manuscript

This document provides guidance on preparing and publishing a scientific manuscript. It discusses important steps like choosing an appropriate journal, writing each section of the manuscript such as the introduction, materials and methods, and results. Key details that should be included in each section are outlined, such as stating the study aims clearly in the introduction and providing enough methodological details to allow other researchers to replicate the study. Following guidelines for reporting clinical trials, observational studies, and systematic reviews can improve manuscript clarity and completeness. The overall goal is to help researchers overcome barriers and successfully publish their work.

Research Webinar.pptx

This document provides an overview of a webinar on getting published and increasing the chances of success. The webinar will include a presentation on choosing publishing venues, preparing manuscripts, and submitting papers for peer review. It will also feature an open Q&A session. Presenters will discuss challenges facing researchers from developing countries and how to identify predatory journals. The webinar aims to provide guidance to researchers throughout the research cycle.

JCR_Training_for_Researchers.pdf

The document provides information about the Journal Citation Reports (JCR) from Clarivate. It discusses what the JCR is, how it can be used by publishers, librarians, researchers and data scientists, and some of the metrics it includes like impact factor, immediacy index, and cited half-life. It also summarizes some strategies for publishing, including aiming for high ranked journals, journals that are cited for a long time or quickly, and internationally recognized or government accredited journals. Key points are that context is important when using metrics, and the JCR can help evaluate journals and find related publications.

Where to Publish: evaluating journals

This document from La Trobe University discusses factors to consider when selecting journals to publish research in, such as choosing high impact journals relevant to one's discipline. It identifies the main issues like publishing in quality journals, selecting those relevant to one's focus area, and where other experts publish. It recommends evaluating journals using criteria like impact factors, indexing, relevance, rankings, and peer review process. It also provides resources one can use to check journals, such as Journal Citation Reports, Eigenfactor, Scopus Journal Analyzer, and university research support services.

DORA and the reinvention of research assessment

This document discusses problems with current research assessment approaches and the recommendations DORA makes to address them. It outlines eLife's motivation to serve science through a swift, fair review process and by exploiting digital media. Current metrics such as the Journal Impact Factor are journal-based, proprietary and incomplete. DORA recommends evaluating research on its own merits using multiple indicators rather than journal names. Funders, institutions, publishers and metric providers are encouraged to consider article-level metrics and outcomes over journal reputation. Progress is being made as funders and institutions explore narrative assessments and consider a wider range of research outputs and impacts.

More from NUI Galway

Vincenzo MacCarrone, Explaining the trajectory of collective bargaining in Ir...

Vincenzo MacCarrone, UCD, Explaining the trajectory of collective bargaining in Ireland: 2000-2017 presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Tom Turner, Tipping the scales for labour in Ireland?

Dr Tom Turner, University of Limerick, Tipping the scales for labour in Ireland? Collective bargaining and the Industrial Relations (Amendment) Act 2015, presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Tom McDonnell, Medium-term trends in the Irish labour market and possibilitie...

The document summarizes medium-term trends in Ireland's labor market from 1998-2017. It finds that while employment doubled over this period, the employment rate remains below other Northern European countries. There was a shift away from industry and agriculture towards healthcare and education. Female labor force participation lags the EU average, and regional employment growth has not significantly favored Dublin. Wage and productivity growth in Ireland has also been comparatively weak. Key barriers to employment include the high cost of childcare and lack of an industrial policy following industry declines. Volatility in employment may be difficult to avoid in small open economies.

Stephen Byrne, A non-employment index for Ireland

Stephen Byrne, Central Bank of Ireland, A non-employment index for Ireland presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Sorcha Foster, The risk of automation of work in Ireland

Sorcha Foster, Oxford University, The risk of automation of work in Ireland – both sides of the border presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Sinead Pembroke, Living with uncertainty: The social implications of precario...

Dr Sinéad Pembroke, TASC, Living with uncertainty: The social implications of precarious work presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Paul MacFlynn, A low skills equilibrium in Northern Ireland

Paul Mac Flynn, NERI, A low skills equilibrium in Northern Ireland presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Nuala Whelan, The role of labour market activation in building a healthy work...

Dr Nuala Whelan, Maynooth University & Ballymun Job Club, The role of labour market activation in building a healthy workforce: Enhancing well-being for the long-term unemployed through positive psychological interventions presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Michéal Collins, and Dr Michelle Maher, Auto enrolment

Dr Michéal Collins, UCD and Dr Michelle Maher, Maynooth University, Auto enrolment: into what, for whom and how much? presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Michael Taft, A new enterprise model

Michael Taft, SIPTU, A new enterprise model: The long march through the market economy presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Luke Rehill, Patterns of firm-level productivity in Ireland

The document summarizes results from an analysis of firm-level productivity in Ireland between 2006-2014 using a multi-factor productivity model. Key findings include: productivity growth has declined since the 1990s both in Ireland and globally; a small number of large firms account for most value added and employment; foreign-owned firms have significantly higher productivity and wages than domestic firms; and productivity dispersion between the most and least productive firms has widened over time. The analysis finds potential for improving efficiency of resource allocation across firms.

Lucy Pyne, Evidence from the Social Inclusion and Community Activation Programme

Ms Lucy Pyne, Pobal, Evidence from the Social Inclusion and Community Activation Programme (SICAP) presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Lisa Wilson, The gendered nature of job quality and job insecurity

Dr Lisa Wilson, NERI, The gendered nature of job quality and job insecurity presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Karina Doorley, Taxation, labour force participation and gender equality in Ir...

The document summarizes a presentation on taxation, work and gender equality in Ireland. It finds that Ireland's partial individualization of the income tax system in 2000 increased the employment rate of married women by 5-6 percentage points and their weekly work hours by 2 hours on average. It also reduced the weekly hours of unpaid childcare performed by married women with children by 3 hours. The policy achieved its goal of increasing incentives for spouses, especially women, to join the labor force. Further individualization may be considered but must account for distributional impacts and ways to address fixed costs of work.

Jason Loughrey, Household income volatility in Ireland

Dr Jason Loughrey, Teagasc, Household income volatility in Ireland presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Ivan Privalko, What do Workers get from Mobility?

Voluntary job mobility, such as quits and promotions, is assumed to lead to improved wages and working conditions. However, studies have found mixed and inconsistent results regarding the effects of different types of voluntary mobility on objective and subjective work outcomes. This document analyzes data from the British Household Panel Survey to compare the effects of internal voluntary mobility (promotions), external voluntary mobility (quits), and involuntary mobility (demotions, layoffs) on subjective satisfaction and objective pay. It finds that external voluntary mobility most increases subjective satisfaction, while internal voluntary mobility provides the largest objective pay benefits. Voluntary mobility within versus between employers leads to different work rewards.

Helen Johnston, Labour market transitions: barriers and enablers

Dr Helen Johnston, NESC, Labour market transitions: barriers and enablers presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Gail Irvine, Fulfilling work in Ireland

Gail Irvine, Carnegie UK Trust, Fulfilling work in Ireland presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Frank Walsh, Assessing competing explanations for the decline in trade union ...

Dr Frank Walsh, UCD, Assessing competing explanations for the decline in trade union density in Ireland presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

Eamon Murphy, An overview of labour market participation in Ireland over the ...

Eamon Murphy, Social Justice Ireland, An overview of labour market participation in Ireland over the last two decades presented at the 6th Annual NERI Labour Market Conference in association with the Whitaker Institute, NUI Galway, 22nd May, 2018.

More from NUI Galway

Vincenzo MacCarrone, Explaining the trajectory of collective bargaining in Ir...

Tom Turner, Tipping the scales for labour in Ireland?

Tom McDonnell, Medium-term trends in the Irish labour market and possibilitie...

Sorcha Foster, The risk of automation of work in Ireland

Sinead Pembroke, Living with uncertainty: The social implications of precario...

Paul MacFlynn, A low skills equilibrium in Northern Ireland

Nuala Whelan, The role of labour market activation in building a healthy work...

Michéal Collins, and Dr Michelle Maher, Auto enrolment

Recently uploaded

Community pharmacy- Social and preventive pharmacy UNIT 5

Covers the community pharmacy topic of the subject Social and Preventive Pharmacy for Diploma and Bachelor of Pharmacy students.

Bangladesh Economic Review 2024 UJS App.pdf

Bangladesh Economic Review 2024 [Bangladesh Economic Review 2024 Bangla.pdf]: a complete Bengali e-book or PDF, with versions for computer, tablet, and smartphone, including a table of contents, a bookmark menu, and a hyperlink menu.

A very important book for all of us. It is an essential subject for BCS, bank, and university admission exams, and for any competitive examination. You will also find recent data and information about Bangladesh in this book.

So, as a citizen, you need to know this information.

It will be useful for BCS and bank written examinations, as well as for secondary and higher-secondary students.

South African Journal of Science: Writing with integrity workshop (2024)

Academy of Science of South Africa

A workshop hosted by the South African Journal of Science aimed at postgraduate students and early-career researchers with little or no experience in writing and publishing journal articles.

ISO/IEC 27001, ISO/IEC 42001, and GDPR: Best Practices for Implementation and...

Denis is a dynamic and results-driven Chief Information Officer (CIO) with a distinguished career spanning information systems analysis and technical project management. With a proven track record of spearheading the design and delivery of cutting-edge Information Management solutions, he has consistently elevated business operations, streamlined reporting functions, and maximized process efficiency.

Certified as an ISO/IEC 27001: Information Security Management Systems (ISMS) Lead Implementer, Data Protection Officer, and Cyber Risks Analyst, Denis brings a heightened focus on data security, privacy, and cyber resilience to every endeavor.

His expertise extends across a diverse spectrum of reporting, database, and web development applications, underpinned by an exceptional grasp of data storage and virtualization technologies. His proficiency in application testing, database administration, and data cleansing ensures seamless execution of complex projects.

What sets Denis apart is his comprehensive understanding of Business and Systems Analysis technologies, honed through involvement in all phases of the Software Development Lifecycle (SDLC). From meticulous requirements gathering to precise analysis, innovative design, rigorous development, thorough testing, and successful implementation, he has consistently delivered exceptional results.

Throughout his career, he has taken on multifaceted roles, from leading technical project management teams to owning solutions that drive operational excellence. His conscientious and proactive approach is unwavering, whether he is working independently or collaboratively within a team. His ability to connect with colleagues on a personal level underscores his commitment to fostering a harmonious and productive workplace environment.

Date: May 29, 2024

Tags: Information Security, ISO/IEC 27001, ISO/IEC 42001, Artificial Intelligence, GDPR

-------------------------------------------------------------------------------

Find out more about ISO training and certification services

Training: ISO/IEC 27001 Information Security Management System - EN | PECB

ISO/IEC 42001 Artificial Intelligence Management System - EN | PECB

General Data Protection Regulation (GDPR) - Training Courses - EN | PECB

Webinars: https://pecb.com/webinars

Article: https://pecb.com/article

-------------------------------------------------------------------------------

For more information about PECB:

Website: https://pecb.com/

LinkedIn: https://www.linkedin.com/company/pecb/

Facebook: https://www.facebook.com/PECBInternational/

Slideshare: http://www.slideshare.net/PECBCERTIFICATION

How to Add Chatter in the Odoo 17 ERP Module

In Odoo, the chatter is a messaging panel attached to a record that helps you collaborate on it. You can leave notes and track changes, making it easier to communicate with your team and partners. The chatter displays the record's full communication history, activities, and tracked changes.

Digital Artifact 1 - 10VCD Environments Unit

Digital Artifact 1 - 10VCD Environments Unit - NGV Pavilion Concept Design

Walmart Business+ and Spark Good for Nonprofits.pdf

"Learn about all the ways Walmart supports nonprofit organizations.

You will hear from Liz Willett, the Head of Nonprofits, about what Walmart is doing to help nonprofits, including Walmart Business and Spark Good. Walmart Business+ is a new offer for nonprofits that provides discounts and streamlines nonprofits' order and expense tracking, saving time and money.

The webinar may also give some examples of how nonprofits can best leverage Walmart Business+.

The event will cover the following:

Walmart Business+ (https://business.walmart.com/plus) is a new shopping experience for nonprofits, schools, and local business customers that connects an exclusive online shopping experience to stores. Benefits include free delivery and shipping, a “Spend Analytics” feature, special discounts, deals, and tax-exempt shopping.

Special TechSoup offer: a free 180-day membership and up to $150 in discounts on eligible orders.

Spark Good (walmart.com/sparkgood) is a charitable platform that enables nonprofits to receive donations directly from customers and associates.

Answers about how you can do more with Walmart!"

Exploiting Artificial Intelligence for Empowering Researchers and Faculty, In...

Dr. Vinod Kumar Kanvaria

Exploiting Artificial Intelligence for Empowering Researchers and Faculty,

International FDP on Fundamentals of Research in Social Sciences

at Integral University, Lucknow, 06.06.2024

By Dr. Vinod Kumar Kanvaria

RPMS TEMPLATE FOR SCHOOL YEAR 2023-2024 FOR TEACHER 1 TO TEACHER 3

RPMS Template 2023-2024 by: Irene S. Rueco

The simplified electron and muon model, Oscillating Spacetime: The Foundation...

The Simplified Electron and Muon Model: A New Wave-Based Approach to Understanding Particles presents a theory in which electrons and muons are rotating soliton waves within oscillating spacetime. Geared towards students, researchers, and science enthusiasts, this book breaks complex ideas down into simple explanations. It covers topics such as electron waves, temporal dynamics, and the implications of this model for particle physics. With clear illustrations and easy-to-follow explanations, it offers readers a new outlook on the fundamental nature of the universe.

Main Java[All of the Base Concepts}.docx

This is part 1 of my Java learning journey. It covers custom methods, classes, constructors, packages, multithreading, try-catch blocks, finally blocks, and more.

How to Make a Field Mandatory in Odoo 17

In Odoo, making a field required can be done through both Python code and XML views. When you set the required attribute to True in Python code, it makes the field required across all views where it's used. Conversely, when you set the required attribute in XML views, it makes the field required only in the context of that particular view.

Drugs and their classification

Any substance (other than food) that is used to prevent, diagnose, treat, or relieve symptoms of a disease or abnormal condition.

BBR 2024 Summer Sessions Interview Training

Qualitative research interview training by Professor Katrina Pritchard and Dr Helen Williams

Natural birth techniques - Mrs. Akanksha Trivedi, Rama University

Akanksha Trivedi, Rama Nursing College, Kanpur.

Natural birth techniques are of various types, such as water birth, the Alexander method, hypnosis, the Bradley method, and the Lamaze method.

Film vocab for eal 3 students: Australia the movie