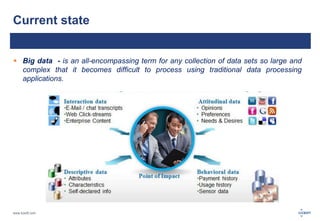

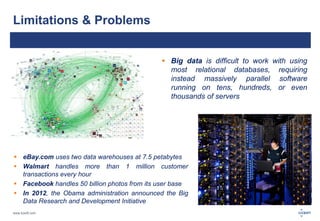

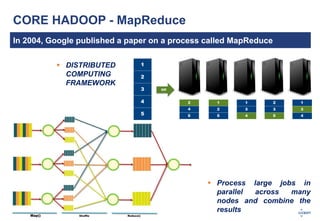

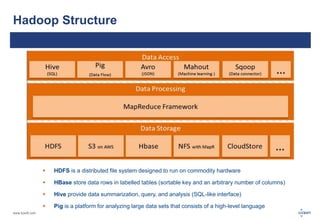

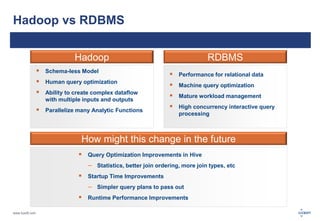

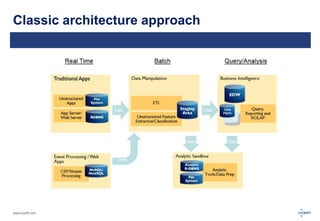

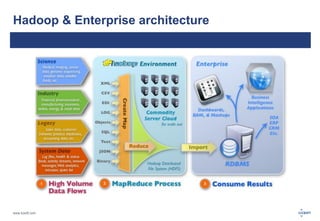

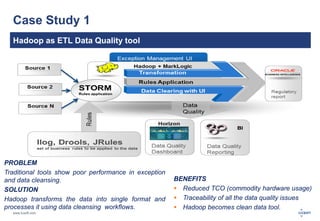

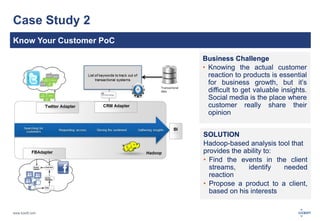

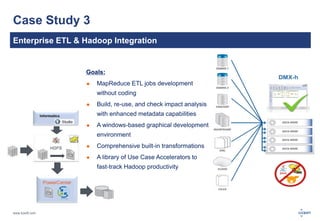

The document discusses big data, its challenges, and the advantages of Hadoop over traditional relational database management systems (RDBMS). It highlights the limitations of RDBMS for processing large datasets and describes the structure and components of Hadoop that make it suitable for handling big data. It also presents case studies demonstrating Hadoop's effectiveness in extract, transform, load (ETL) processes and customer analytics.