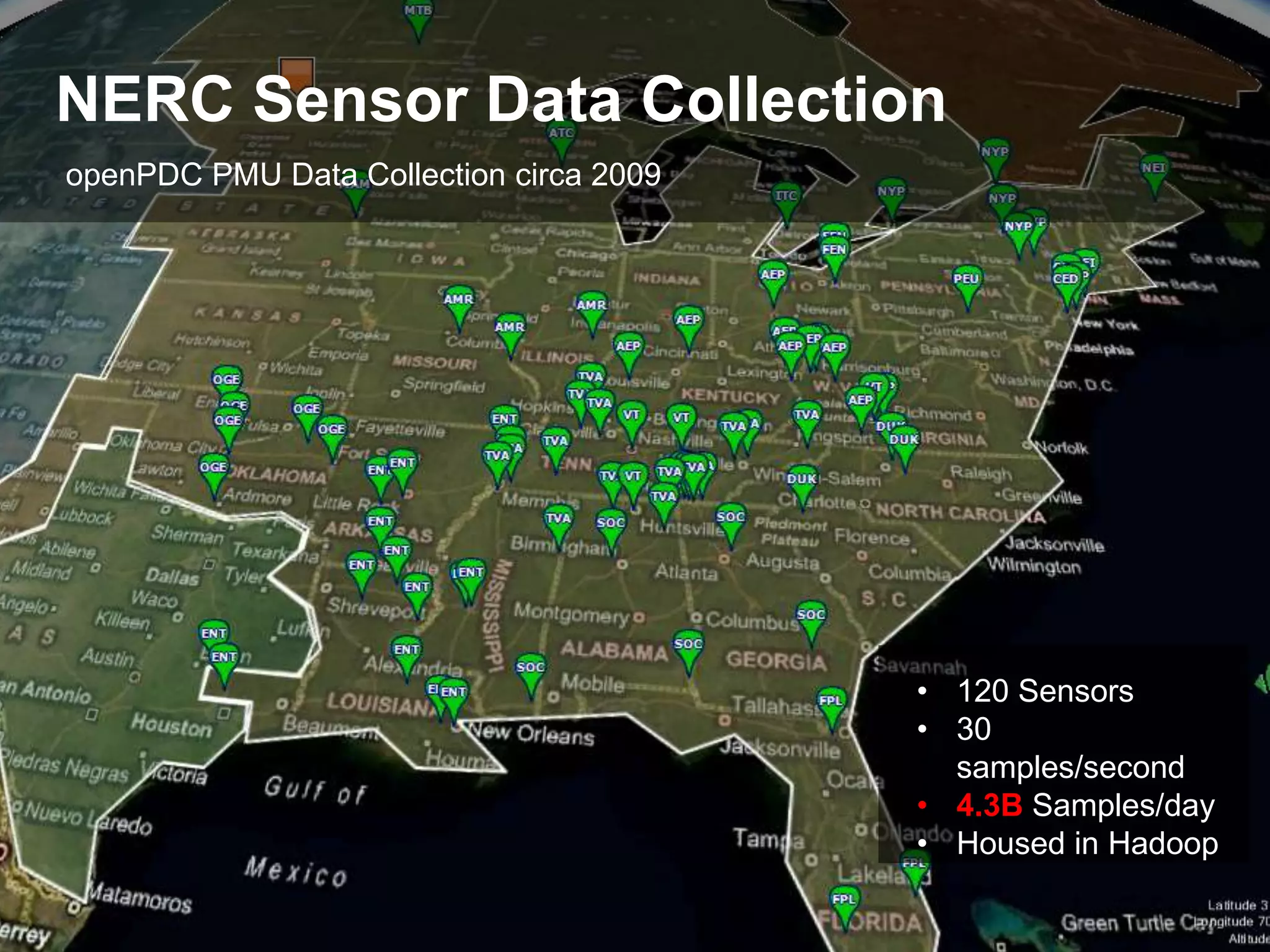

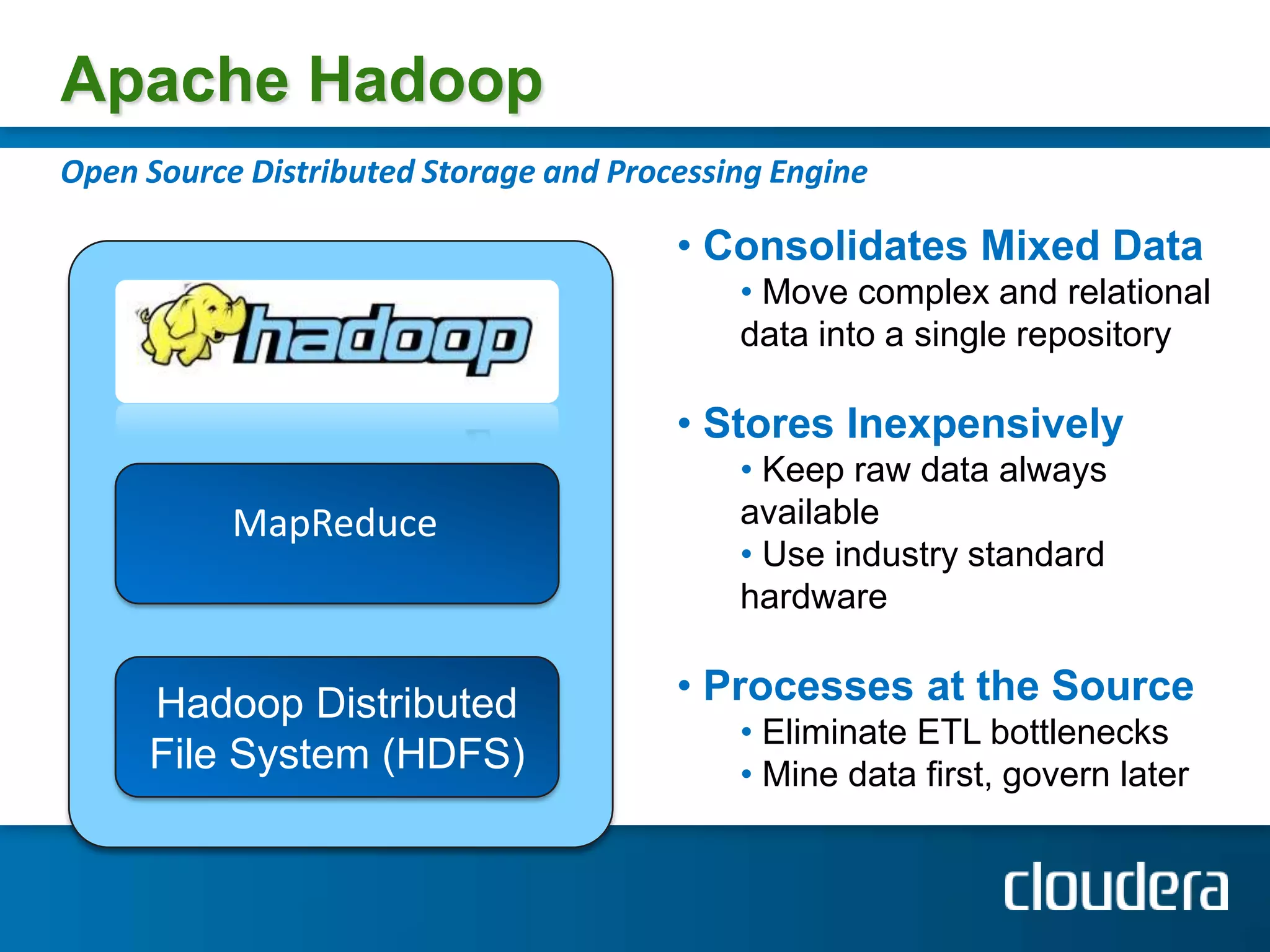

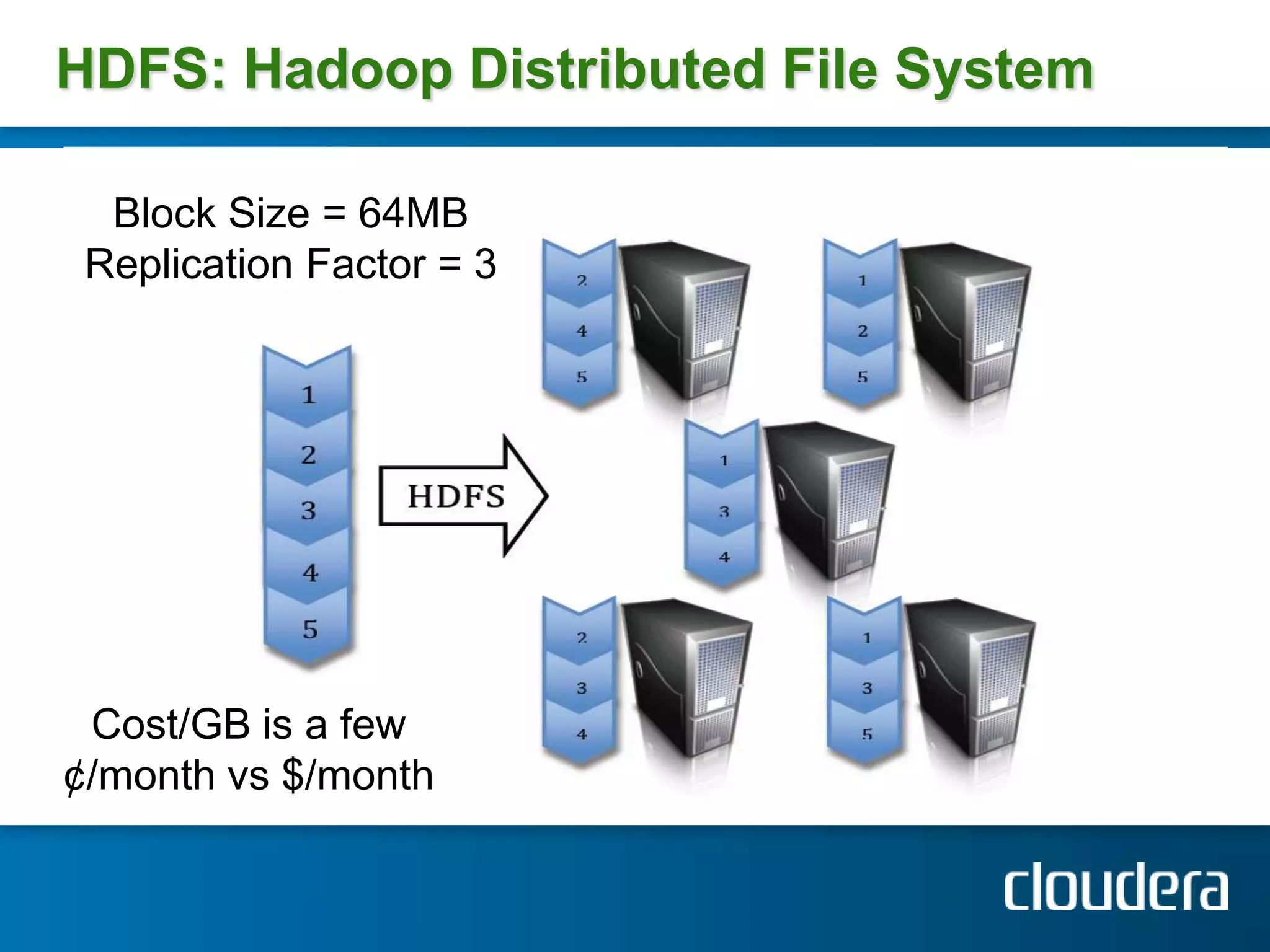

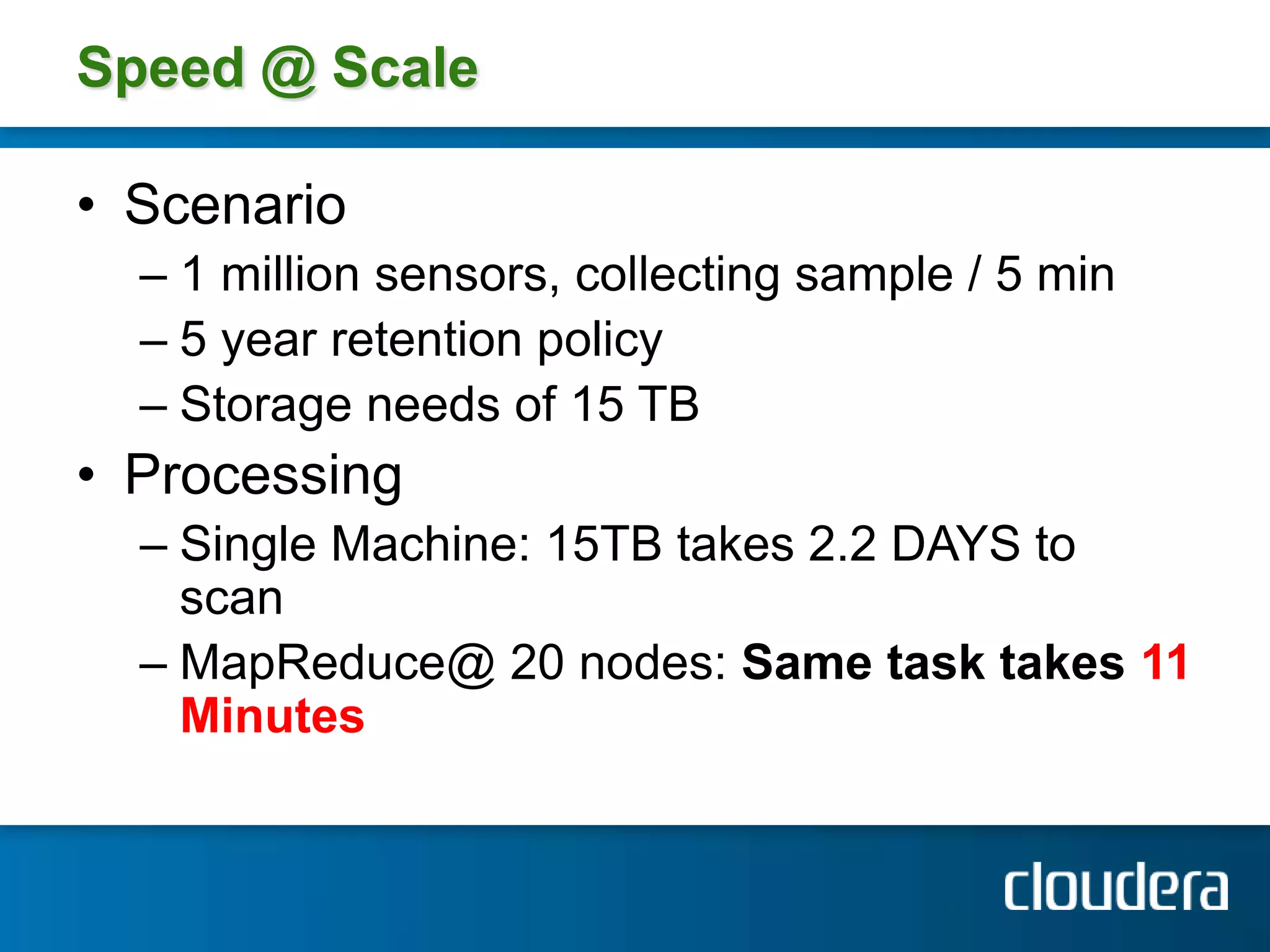

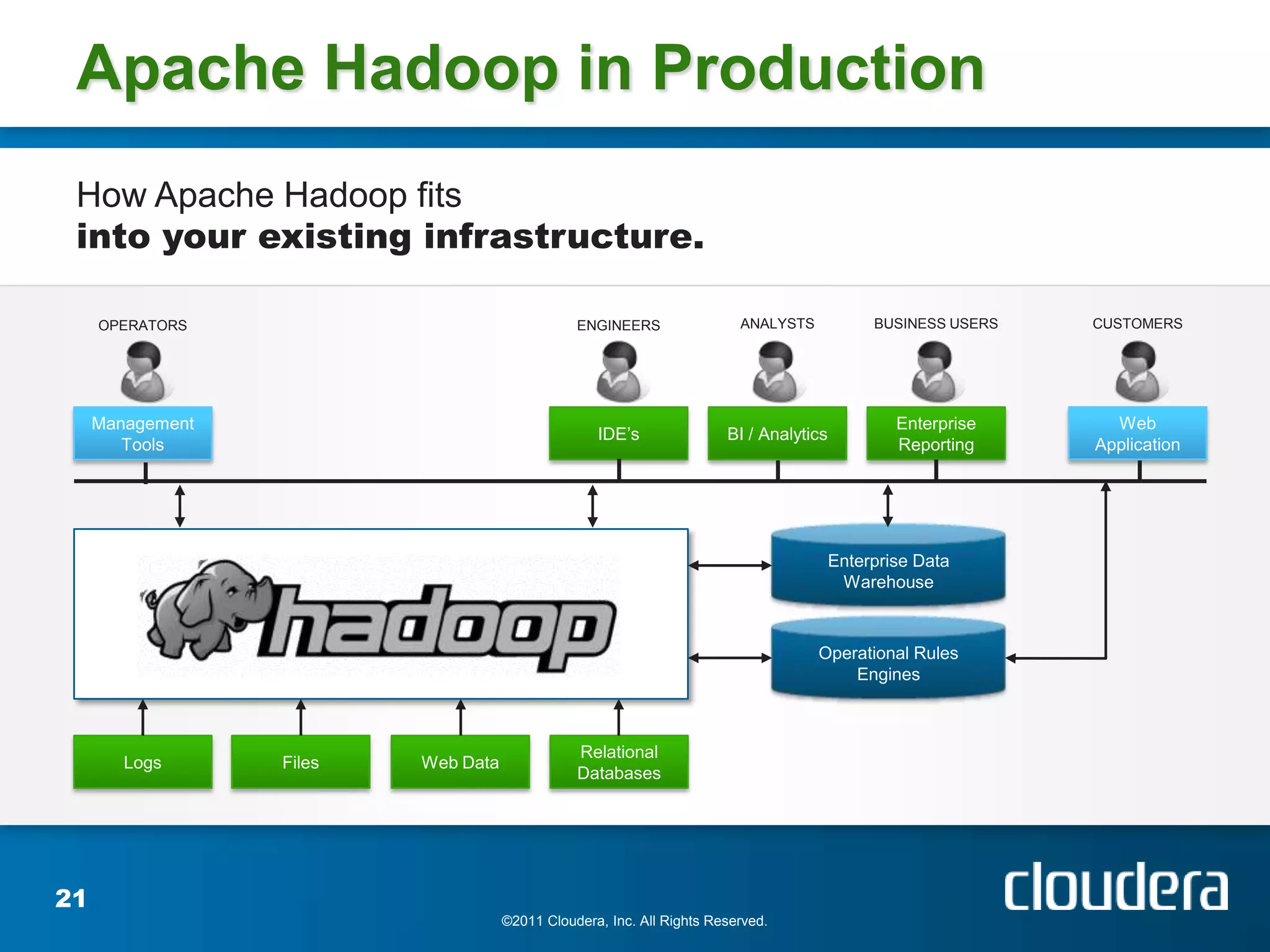

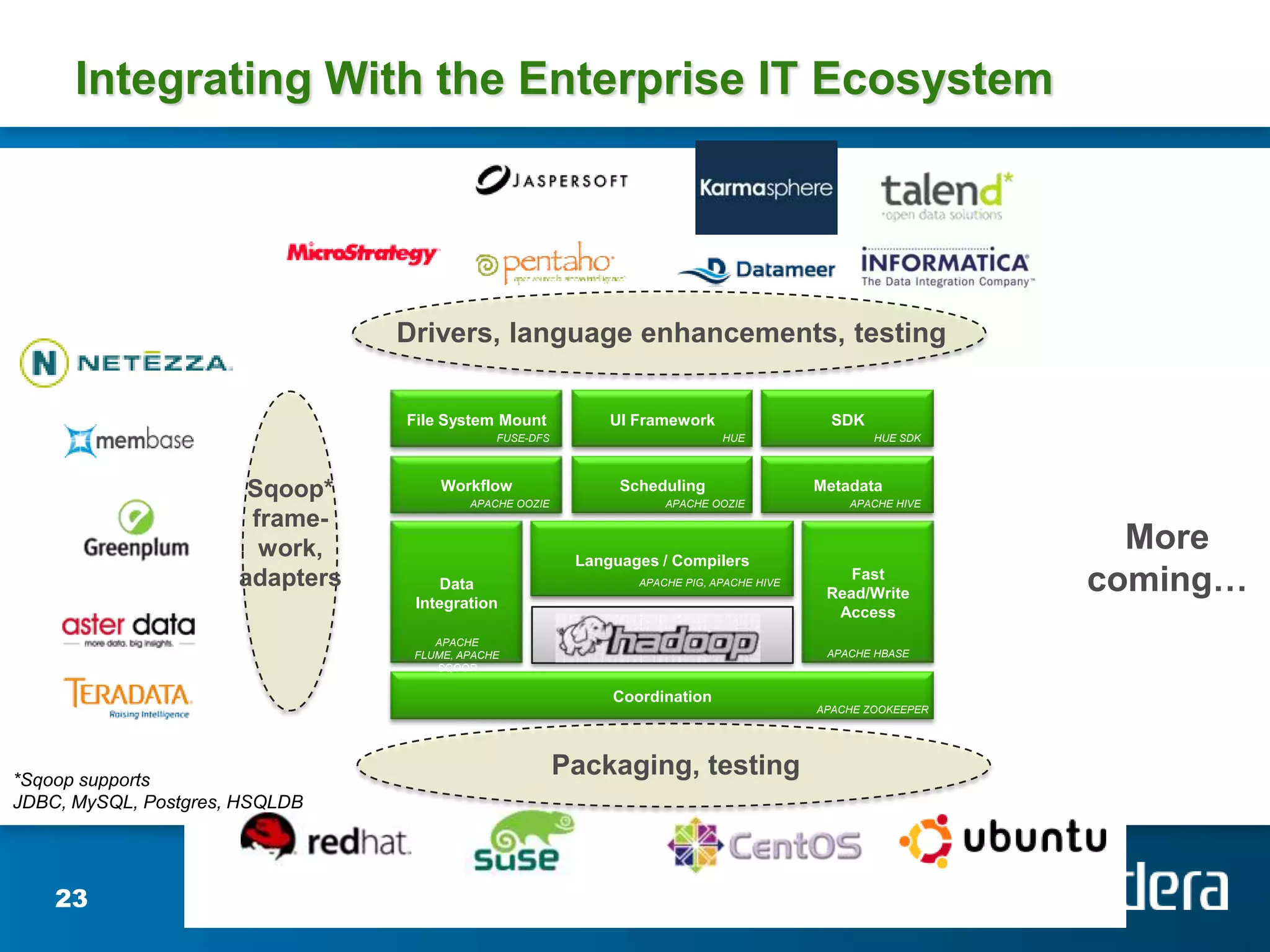

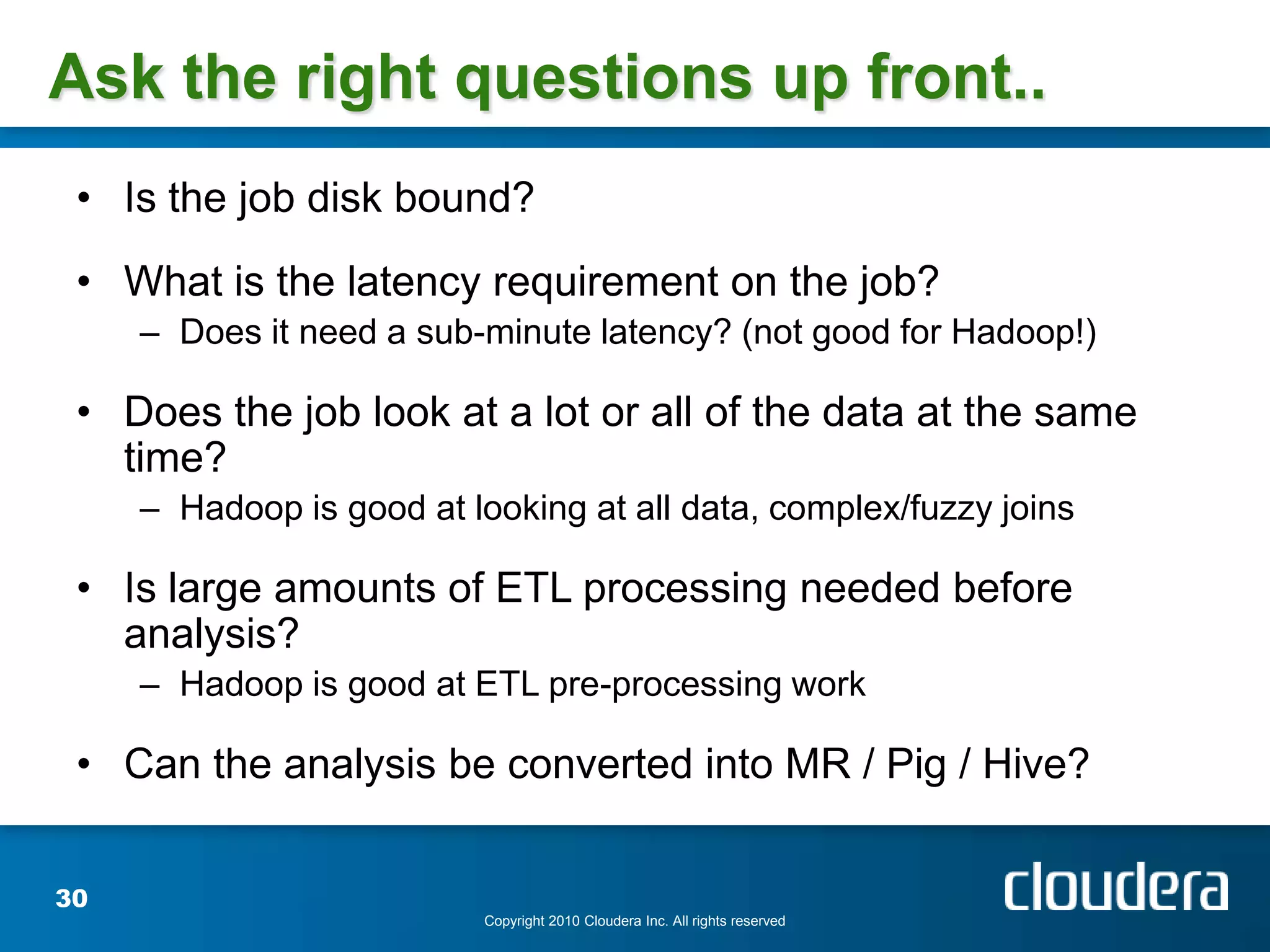

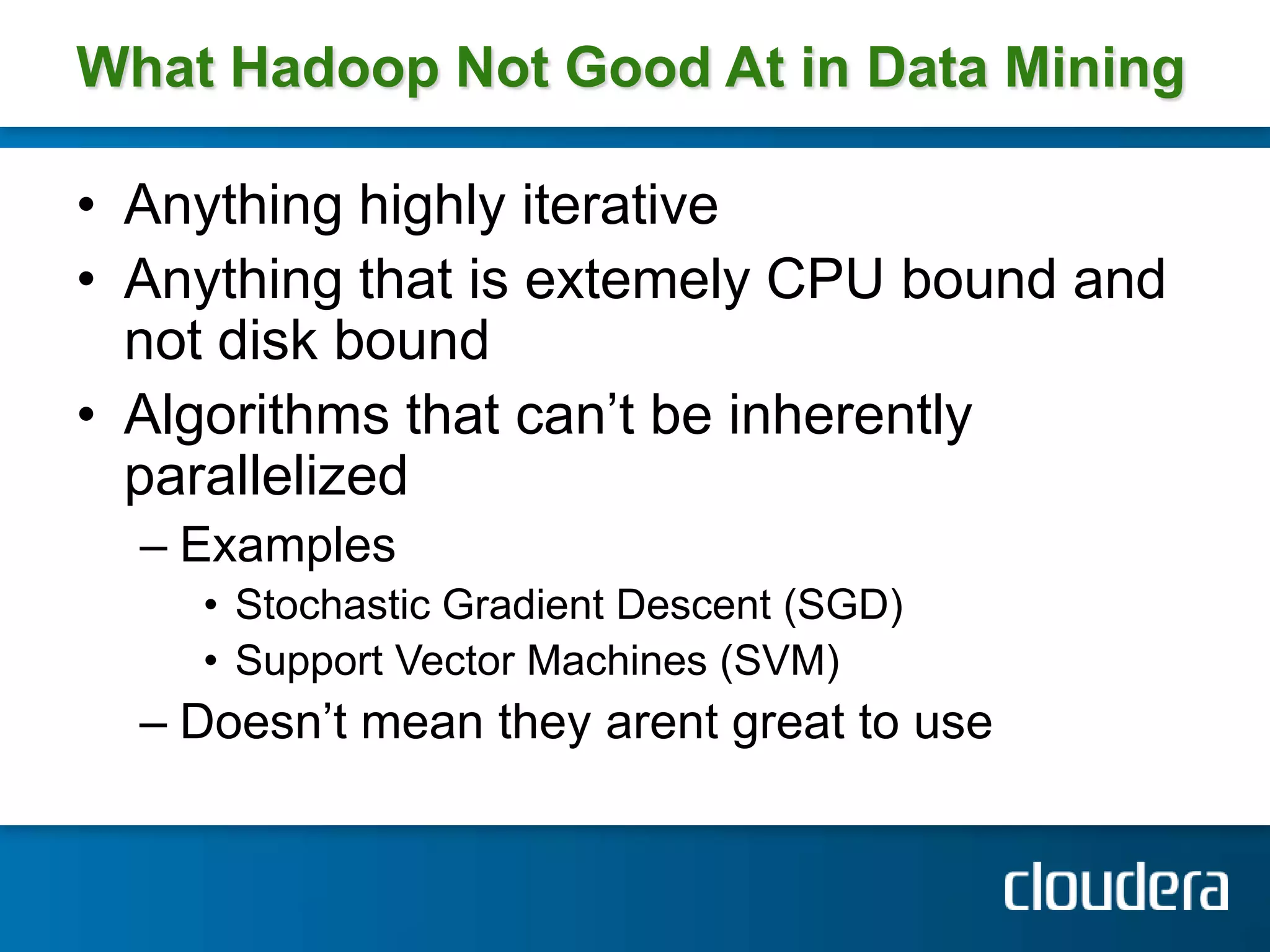

Josh Patterson gave a presentation on Hadoop and how it has been used in practice. He described his background on Hadoop projects, including work for the Tennessee Valley Authority, then outlined what Hadoop is, how it works, and several example use cases. One of these was the openPDC project, where Hadoop was used to store and analyze large volumes of smart grid sensor data. He also discussed integrating Hadoop with existing enterprise systems, and covered tools for working with Hadoop such as Pig and Hive.