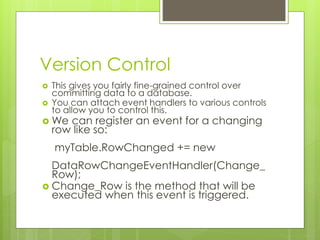

This document discusses data validation techniques in ADO.NET. It compares data readers and datasets, explaining that data readers provide real-time querying while datasets allow for disconnected and typed data access. The document then covers using events and exceptions to validate data and reject invalid changes, ensuring data integrity when committing updates to the database.

![Reading From A Reader

We use the read method of a data

reader to have it read the next record in

the query.

This returns a true or a false to indicate success.

myReader.read();

Once we’ve read in a record, we access

it in the reader directly:

txtId.Text =

myReader[“customer id”];](https://image.slidesharecdn.com/08-strongtypinganddatavalidation-140618172649-phpapp01/85/PATTERNS08-Strong-Typing-and-Data-Validation-in-NET-8-320.jpg)

![Reading From A Reader

If we need to read all records, we put it in

a loop:

while(myReader.Read())

{

cmbItems.Items.Add

(myReader["CustomerName"]);

}](https://image.slidesharecdn.com/08-strongtypinganddatavalidation-140618172649-phpapp01/85/PATTERNS08-Strong-Typing-and-Data-Validation-in-NET-9-320.jpg)

![Untyped Data Sets

This is perfectly valid in an untyped Data Set:

myData.Tables[1].Rows[6]

[“Customer Name”]

What if there’s only one table?

What if there are only five rows?

What if there’s no customer name

field?](https://image.slidesharecdn.com/08-strongtypinganddatavalidation-140618172649-phpapp01/85/PATTERNS08-Strong-Typing-and-Data-Validation-in-NET-13-320.jpg)

![Using a Typed Data Set

Now, your data set will contain an

enumeration representing the table:

myData.Customer[0];

And each element of that enumeration

will have a property reflecting a field

name:

myData.Customer[0].CustomerName;](https://image.slidesharecdn.com/08-strongtypinganddatavalidation-140618172649-phpapp01/85/PATTERNS08-Strong-Typing-and-Data-Validation-in-NET-17-320.jpg)

![Typed Data Set

Typed data sets also expose a range of

other useful methods.

Specifically, each field gets an ‘is null’ method:

for (int i = 0; i < myData.Customer.Count - 1; i++)

{

if (myData.Customer[0].IsCustomerNameNull()

== false)

{

cmbItems.Items.Add

(myData.Customer[i].CustomerName);

}

}](https://image.slidesharecdn.com/08-strongtypinganddatavalidation-140618172649-phpapp01/85/PATTERNS08-Strong-Typing-and-Data-Validation-in-NET-18-320.jpg)

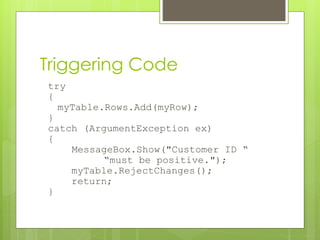

![Event Handler

private static void Change_Row(object sender,

DataRowChangeEventArgs e) {

if ((int)e.Row["CustomerId"] < 0)

{

throw new ArgumentException("Customer ID must “ +

“be positive");

}

}

Why do this?

Why not let the database itself handle it?

This way we can provide meaningful user

feedback.

And take advantage of strong typing to provide

context and convenience.](https://image.slidesharecdn.com/08-strongtypinganddatavalidation-140618172649-phpapp01/85/PATTERNS08-Strong-Typing-and-Data-Validation-in-NET-31-320.jpg)