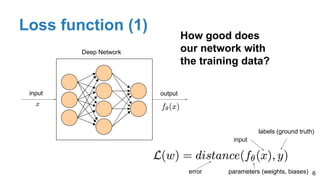

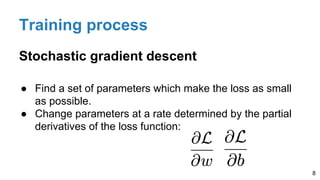

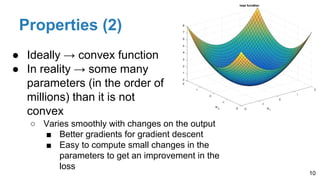

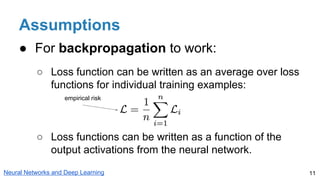

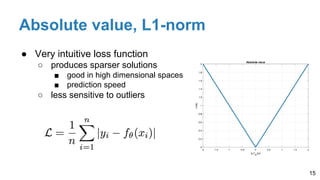

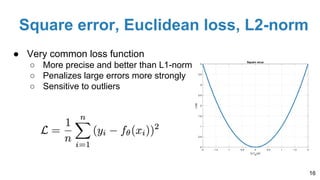

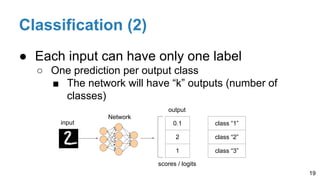

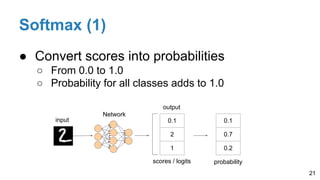

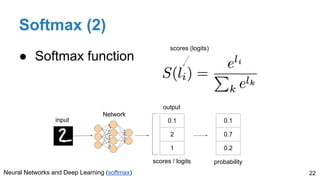

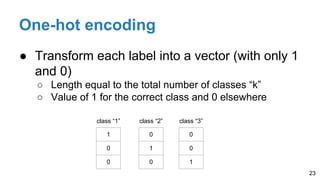

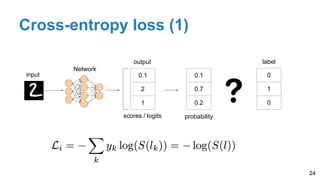

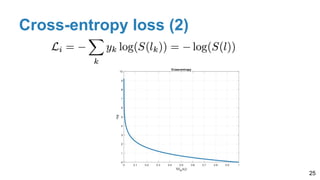

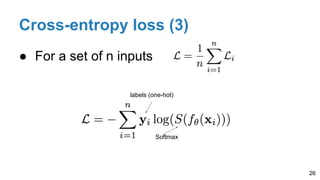

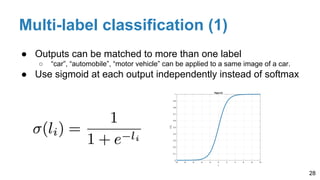

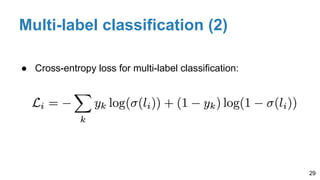

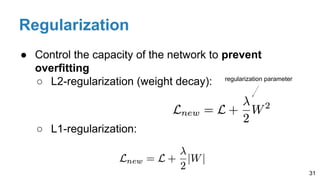

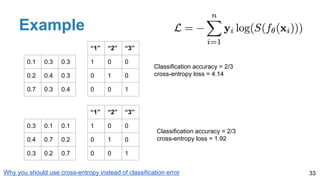

The document discusses the role and properties of loss functions in supervised deep learning, for both regression and classification tasks. It explains how the loss function guides the training process, covers common choices such as cross-entropy and squared error, and describes regularization as a way to prevent overfitting. It also covers the softmax function and one-hot encoding, which are used in classification.
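
The quantities named in this summary can be illustrated with a minimal sketch in plain Python. It is not taken from the slides: the function names (`one_hot`, `softmax`, `cross_entropy`, `squared_error`, `l2_penalty`), the example logits, and the choice of an L2 penalty as the regularizer are all assumptions made for illustration.

```python
import math

def one_hot(label, num_classes):
    # Encode an integer class label as a vector with a single 1.0 entry.
    return [1.0 if i == label else 0.0 for i in range(num_classes)]

def softmax(logits):
    # Subtract the maximum logit before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(target, probs, eps=1e-12):
    # Cross-entropy between a one-hot target and predicted probabilities.
    # eps guards against log(0); its value is an arbitrary illustrative choice.
    return -sum(t * math.log(p + eps) for t, p in zip(target, probs))

def squared_error(target, pred):
    # Mean squared error, a common loss for regression outputs.
    return sum((t - p) ** 2 for t, p in zip(target, pred)) / len(target)

def l2_penalty(weights, lam):
    # One possible regularization term (assumed here): lam times the sum of squared weights.
    return lam * sum(w * w for w in weights)

# Hypothetical network outputs for a three-class problem whose true class is 0.
logits = [2.0, 1.0, 0.1]
probs = softmax(logits)
target = one_hot(0, 3)
loss = cross_entropy(target, probs)
```

With a one-hot target, the cross-entropy reduces to the negative log of the probability assigned to the true class, so the loss shrinks as the softmax output for that class approaches 1. A regularized objective would add a term such as `l2_penalty(weights, lam)` to this loss.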

![Loss functions. Javier Ruiz Hidalgo (javier.ruiz@upc.edu), Associate Professor, Universitat Politecnica de Catalunya (Technical University of Catalonia). [course site] #DLUPC](https://image.slidesharecdn.com/dlai2017d4l2lossfunctions-171101161756/75/Loss-functions-DLAI-D4L2-2017-UPC-Deep-Learning-for-Artificial-Intelligence-1-2048.jpg)