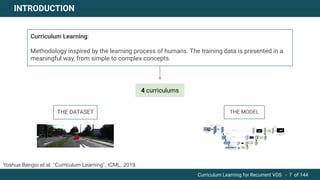

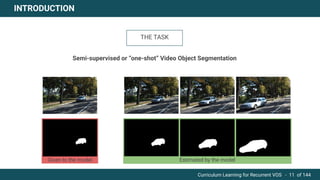

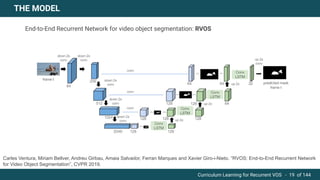

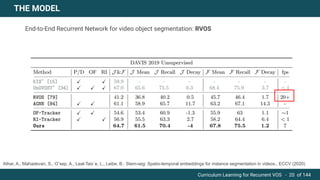

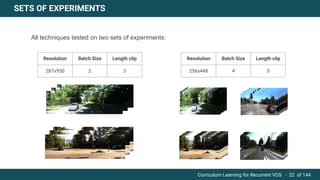

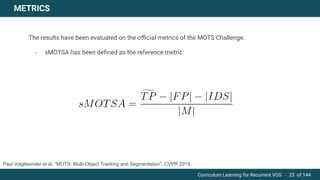

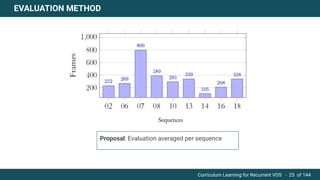

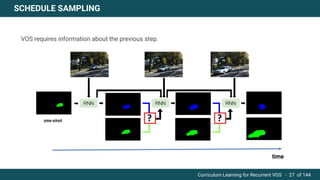

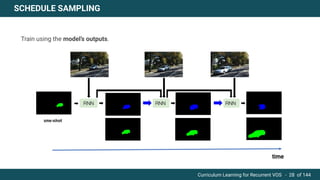

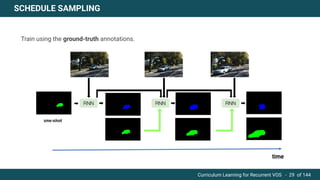

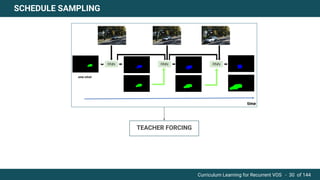

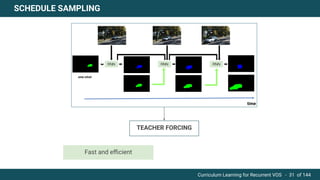

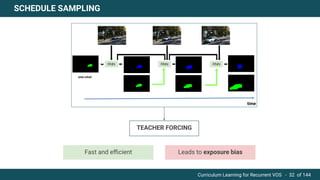

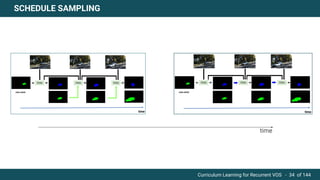

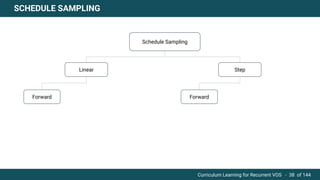

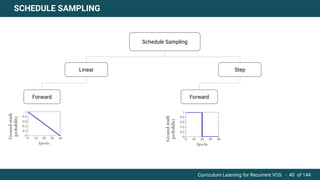

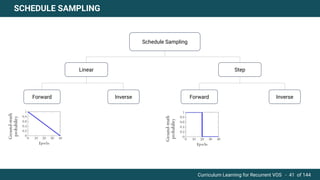

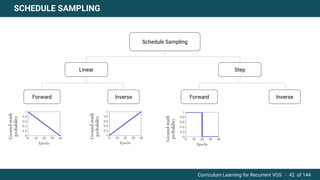

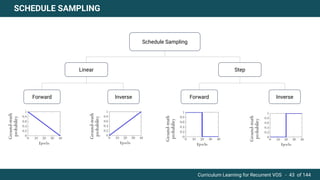

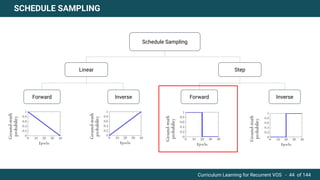

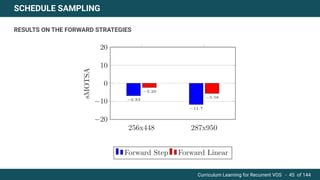

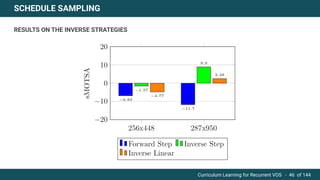

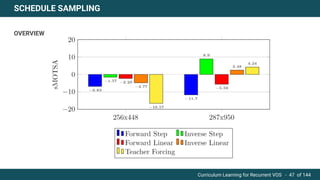

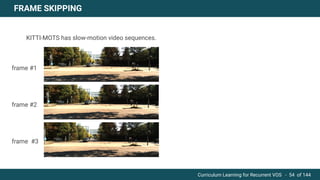

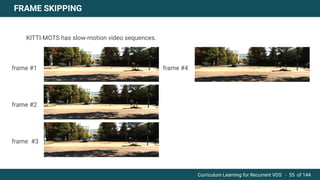

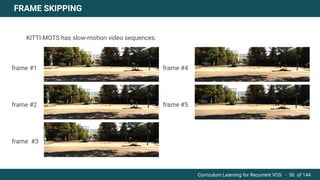

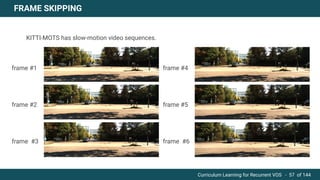

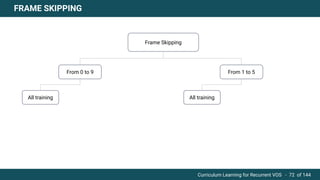

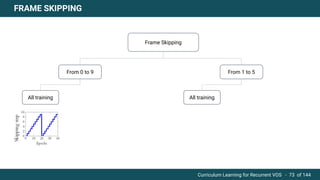

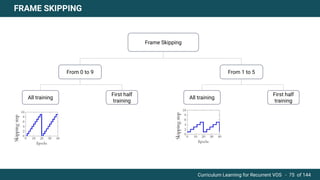

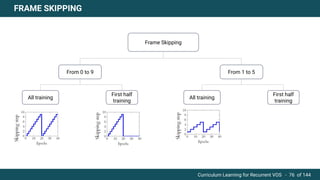

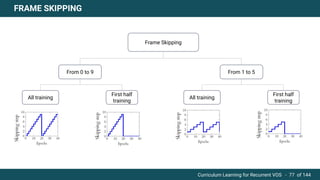

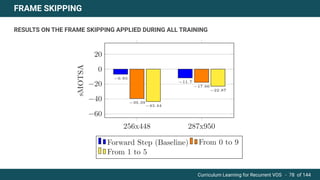

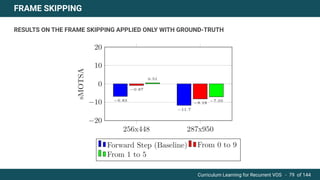

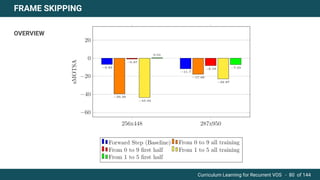

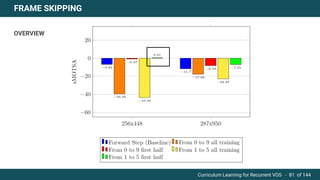

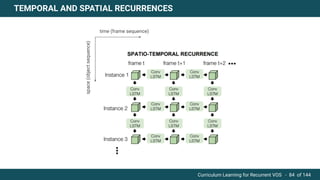

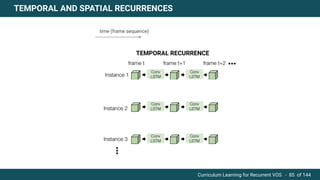

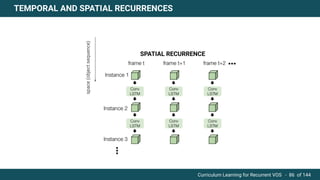

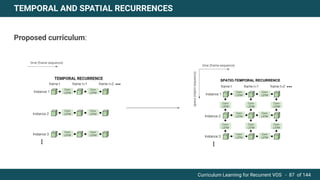

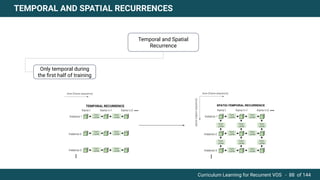

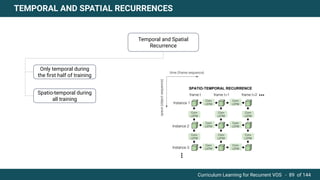

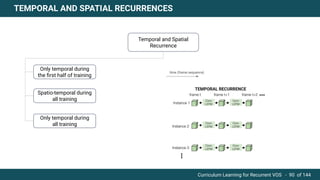

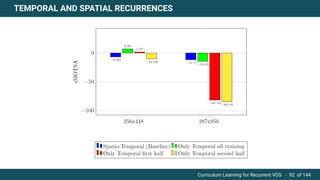

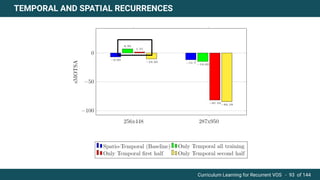

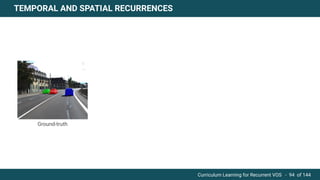

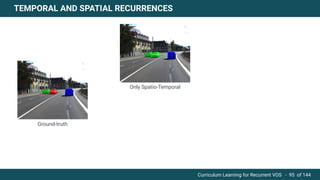

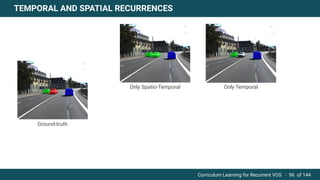

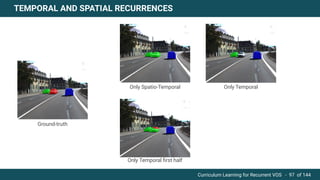

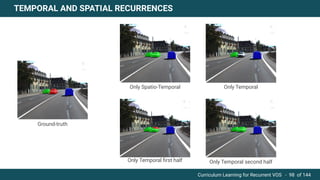

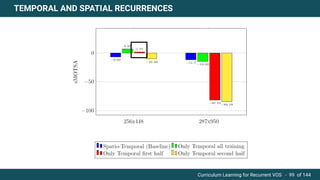

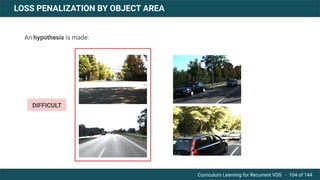

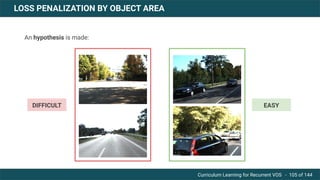

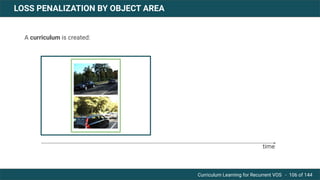

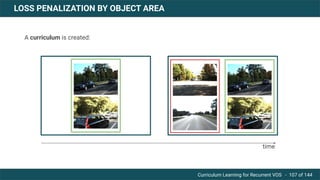

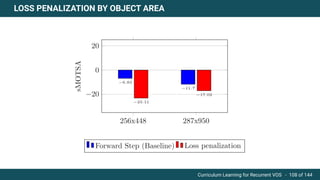

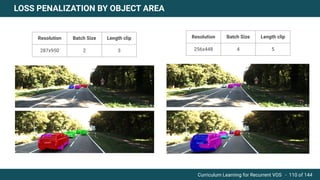

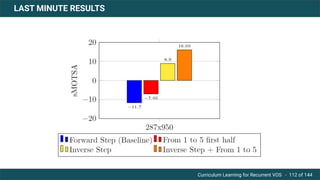

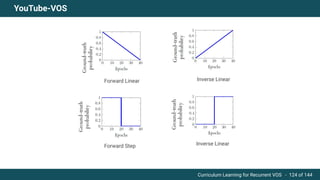

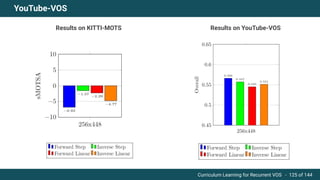

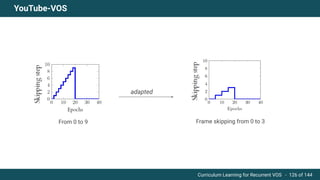

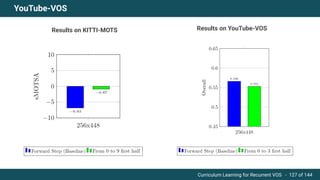

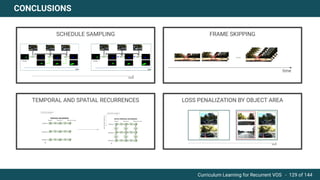

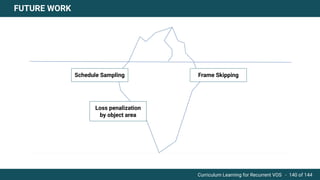

The document discusses curriculum learning applied to recurrent video object segmentation. In this training methodology, examples are introduced progressively, from simple to complex. It describes the KITTI MOTS dataset and an end-to-end recurrent network for video object segmentation, along with the experimental setups and evaluation metrics used. The conclusions stress the importance of understanding the dataset and suggest directions for future work, including further adjustments to the training techniques.
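The simple-to-complex schedule can be illustrated with a minimal Python sketch. This is not the method used in the document. It assumes each training clip can be given a scalar difficulty score. The score used here, the number of objects in a clip, is a hypothetical choice made only for illustration. The function `curriculum_pool` and its parameters `num_stages` and `epochs_per_stage` are also hypothetical. The function sorts clips from easiest to hardest and enlarges the training pool by one stage at a time until it covers the whole set.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def curriculum_pool(
    samples: Sequence[T],
    difficulty: Callable[[T], float],
    epoch: int,
    num_stages: int,
    epochs_per_stage: int,
) -> List[T]:
    """Return the subset of samples available for training at `epoch`.

    Hypothetical helper: samples are ordered from easiest to hardest
    according to `difficulty`, and the pool grows by one stage every
    `epochs_per_stage` epochs until it contains every sample.
    """
    if num_stages < 1 or epochs_per_stage < 1:
        raise ValueError("num_stages and epochs_per_stage must be >= 1")
    # Stable sort: clips with equal difficulty keep their original order.
    ordered = sorted(samples, key=difficulty)
    # Stage index is 1-based and capped at the final stage.
    stage = min(epoch // epochs_per_stage + 1, num_stages)
    # Integer ceiling division, so the final stage includes every sample.
    cutoff = (len(ordered) * stage + num_stages - 1) // num_stages
    return ordered[:cutoff]


if __name__ == "__main__":
    # Toy clips; "num_objects" is an assumed difficulty proxy, not a real field.
    clips = [
        {"id": "a", "num_objects": 5},
        {"id": "b", "num_objects": 1},
        {"id": "c", "num_objects": 3},
        {"id": "d", "num_objects": 2},
    ]
    for epoch in (0, 3):
        pool = curriculum_pool(clips, lambda c: c["num_objects"], epoch, 2, 3)
        print(epoch, [c["id"] for c in pool])
```

With two stages of three epochs each, epochs 0 to 2 train only on the two easiest clips (`b` and `d`), and epoch 3 onward trains on all four. Other pacing functions are equally valid choices, such as linear growth per epoch or a difficulty threshold instead of a fraction of the data.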