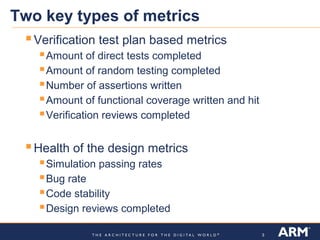

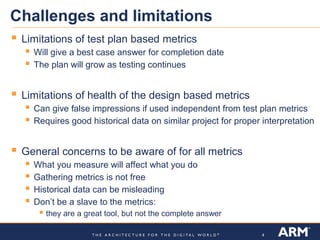

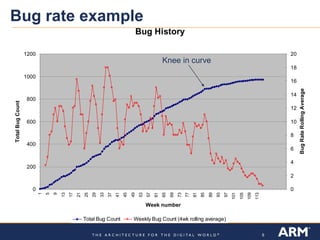

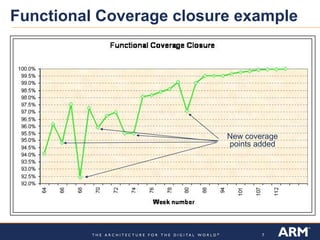

The document discusses verification metrics for CPU design projects. It distinguishes two kinds of metrics: (1) verification test-plan metrics, such as tests completed and assertions written, which track progress against the plan; and (2) design-health metrics, such as bug rates and simulation pass rates. Metrics provide useful information, but they have limitations; for example, historical data can be misleading. The examples given include a bug-rate graph showing a "knee in the curve" and a functional coverage closure chart.
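
The summary does not say how the "knee in the curve" is identified on the bug-rate graph. As a rough illustration only, the sketch below uses one common heuristic: it takes the cumulative bug count per week and picks the point that lies farthest above the straight line joining the first and last points. The function names (`cumulative_sum`, `find_knee`) and the weekly counts are hypothetical and are not taken from the original slides.

```python
def cumulative_sum(weekly):
    """Turn per-week bug counts into a running total."""
    total = 0
    running = []
    for count in weekly:
        total += count
        running.append(total)
    return running


def find_knee(cumulative):
    """Return the index of the point farthest above the chord
    joining the first and last points of the curve.

    This is a simple max-gap-from-chord heuristic, assumed here
    for illustration; it is not the method used in the source.
    """
    n = len(cumulative)
    if n < 3:
        raise ValueError("need at least three points to locate a knee")
    y_first, y_last = cumulative[0], cumulative[-1]
    slope = (y_last - y_first) / (n - 1)

    best_index, best_gap = 0, 0.0
    for i, y in enumerate(cumulative):
        chord_y = y_first + slope * i  # height of the chord at week i
        gap = y - chord_y              # how far the curve sits above it
        if gap > best_gap:
            best_index, best_gap = i, gap
    return best_index


# Made-up weekly bug counts, for illustration only.
weekly_bugs = [12, 15, 14, 13, 11, 4, 3, 2, 1, 1]
cumulative = cumulative_sum(weekly_bugs)
knee_week = find_knee(cumulative)
print(f"knee at week index {knee_week}, cumulative bugs = {cumulative[knee_week]}")
```

A knee found this way marks only where the discovery rate slows. In keeping with the caution about misleading historical data, it is best read alongside other signals, such as coverage closure, and not as proof that the design is clean.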