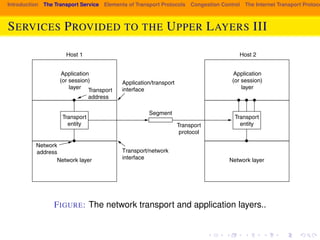

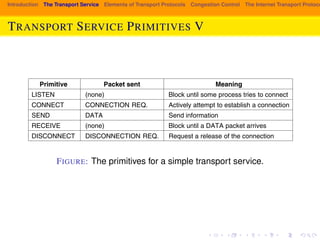

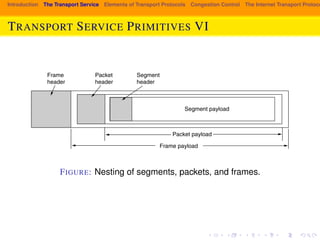

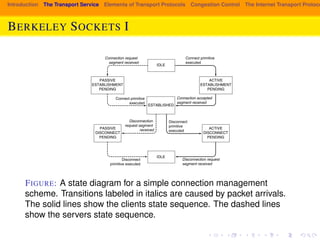

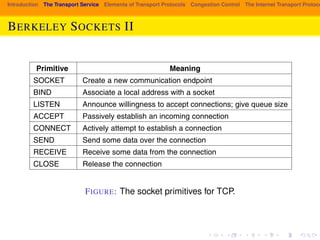

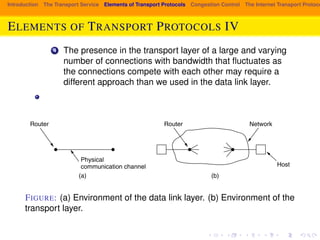

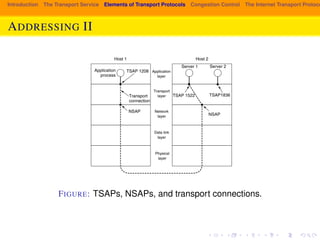

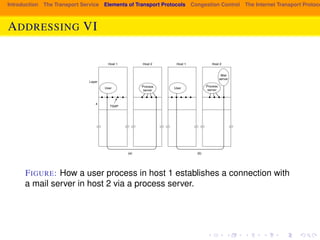

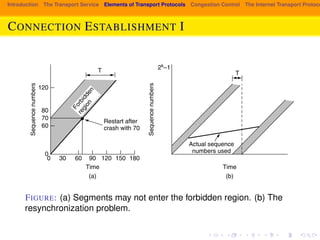

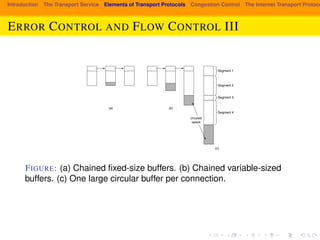

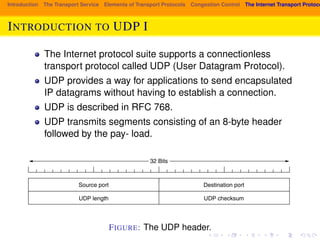

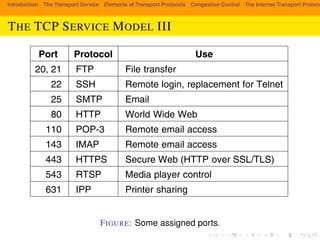

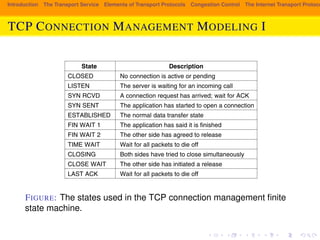

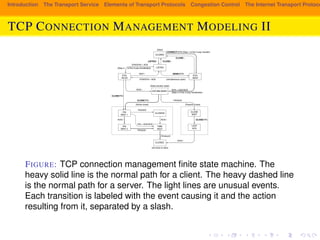

The document provides an overview of the transport layer in computer networks. It discusses the key services the transport layer provides, including reliable data transmission and isolating applications from the underlying network technology. It introduces two common transport layer protocols, TCP and UDP, and describes important transport layer concepts: connection-oriented versus connectionless services, transport service primitives, and the elements that transport protocols must address, such as error control and flow control.
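
The contrast between connection-oriented and connectionless service can be illustrated with a minimal sketch that uses Python's standard `socket` module on the loopback interface. The function names `tcp_echo_once` and `udp_echo_once`, the helper `_read_until_eof`, the loopback address, and the 5-second timeouts are assumptions made for illustration and do not come from the slides. The TCP version has to establish and release a connection around the data exchange, while the UDP version simply sends a datagram to an address.

```python
import socket
import threading


def _read_until_eof(sock: socket.socket) -> bytes:
    """Collect bytes until the peer closes its sending side."""
    chunks = []
    while True:
        chunk = sock.recv(4096)
        if not chunk:
            break
        chunks.append(chunk)
    return b"".join(chunks)


def tcp_echo_once(message: bytes) -> bytes:
    """Connection-oriented exchange: listen, connect, send, receive, disconnect."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)
    server.settimeout(5)  # illustrative timeout so accept() cannot hang forever
    port = server.getsockname()[1]

    def serve() -> None:
        conn, _addr = server.accept()  # connection is established here
        with conn:
            data = _read_until_eof(conn)
            conn.sendall(data)  # echo back over the same connection

    worker = threading.Thread(target=serve, daemon=True)
    worker.start()

    with socket.create_connection(("127.0.0.1", port), timeout=5) as client:
        client.sendall(message)
        client.shutdown(socket.SHUT_WR)  # signal that no more data will be sent
        reply = _read_until_eof(client)

    worker.join()
    server.close()
    return reply


def udp_echo_once(message: bytes) -> bytes:
    """Connectionless exchange: each datagram carries its destination address."""
    server = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    server.bind(("127.0.0.1", 0))
    server.settimeout(5)
    port = server.getsockname()[1]

    client = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    client.settimeout(5)
    try:
        # No handshake: the datagram is sent straight to the server address.
        client.sendto(message, ("127.0.0.1", port))
        data, client_addr = server.recvfrom(4096)
        server.sendto(data, client_addr)
        reply, _addr = client.recvfrom(4096)
    finally:
        client.close()
        server.close()
    return reply


if __name__ == "__main__":
    print(tcp_echo_once(b"hello over TCP"))
    print(udp_echo_once(b"hello over UDP"))
```

In this sketch, TCP itself handles retransmission and flow control beneath the `sendall` and `recv` calls. The UDP version has no such guarantees, so an application that needs reliability over UDP would have to add its own acknowledgements and timeouts.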