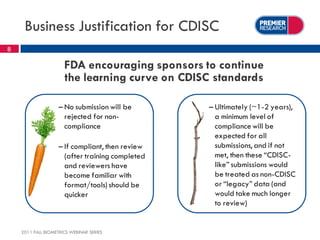

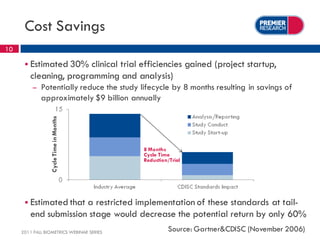

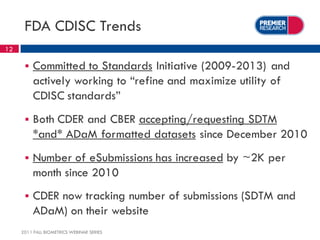

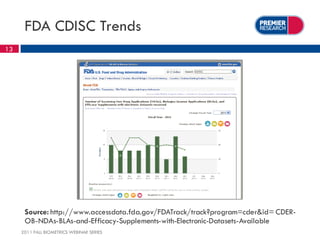

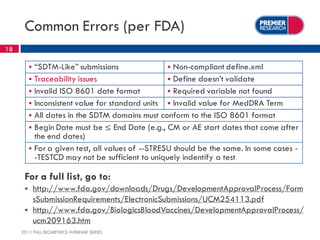

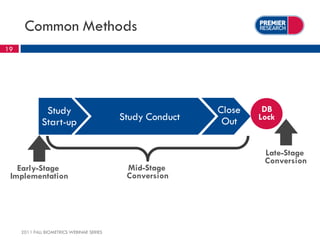

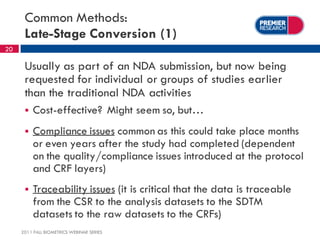

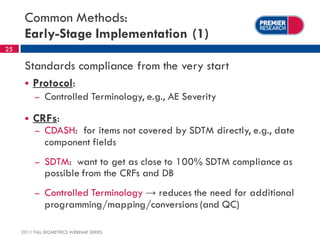

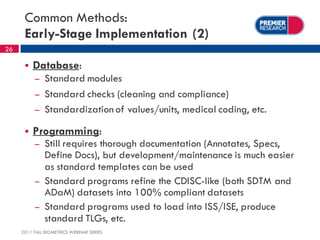

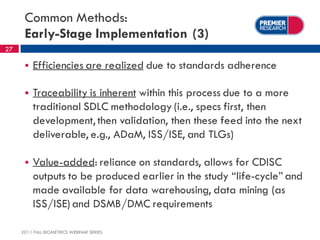

The webinar, presented by Thomas Kalfas, covers the implementation of CDISC (Clinical Data Interchange Standards Consortium) standards in clinical trials: the challenges involved, when to implement the standards, and the methods for delivering compliant datasets. It notes that the FDA encourages standardized submissions because they improve the efficiency and quality of the review process, and it argues that implementing the standards early in a study is preferable to converting data late in the process. The webinar also discusses common mistakes and best practices for producing CDISC-compliant deliverables, underscoring the importance of adhering to the standards throughout the research lifecycle.