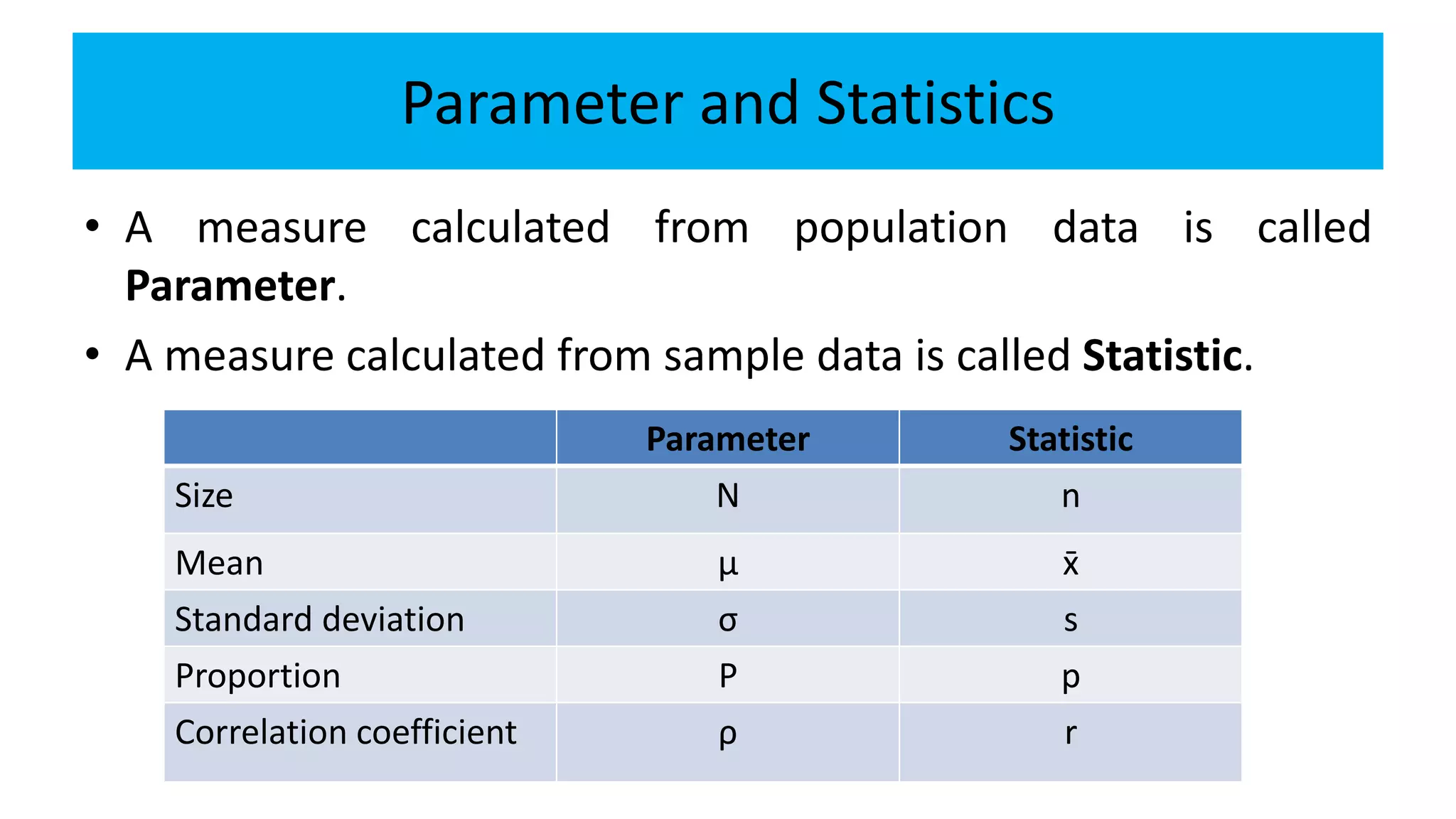

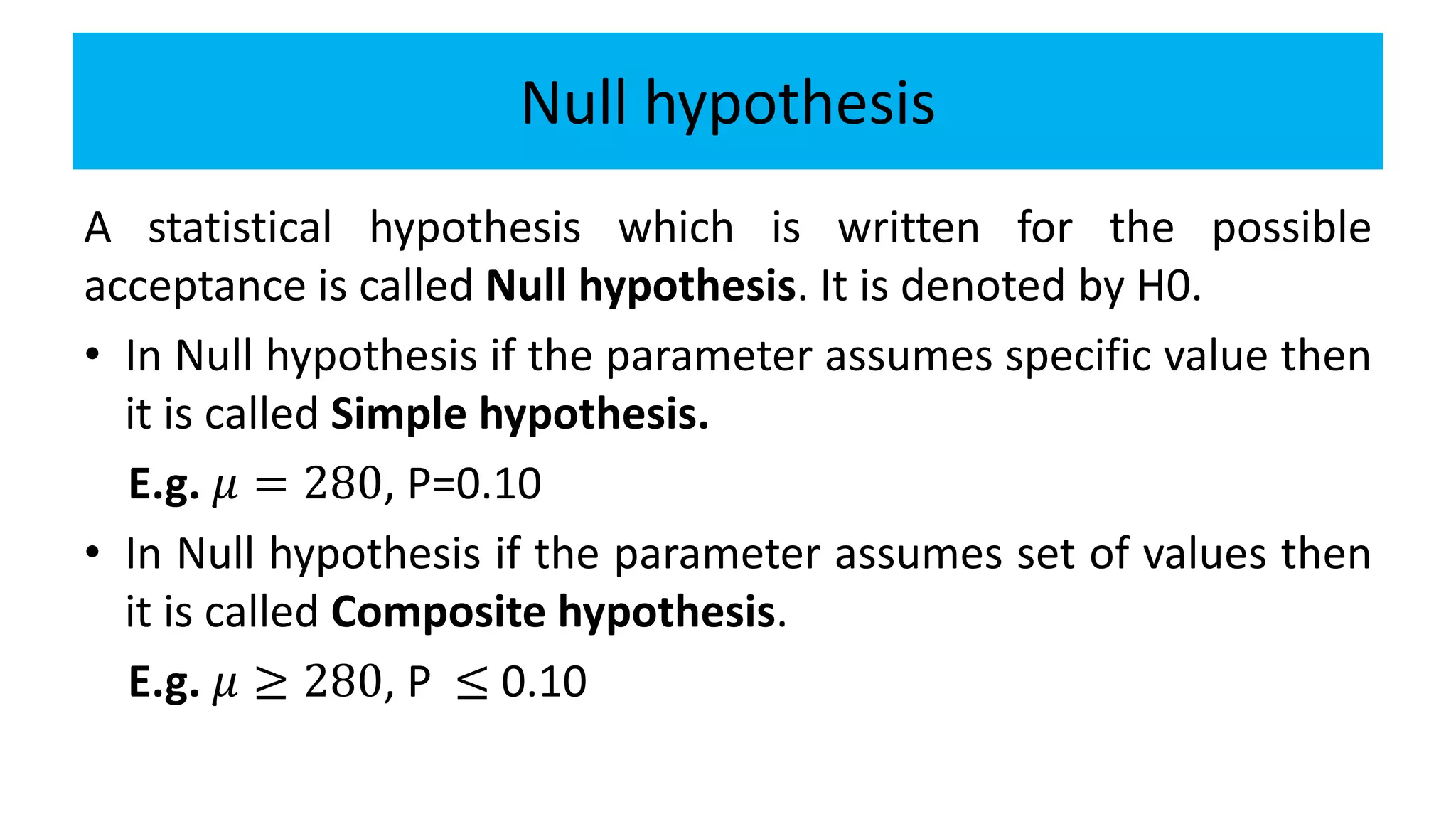

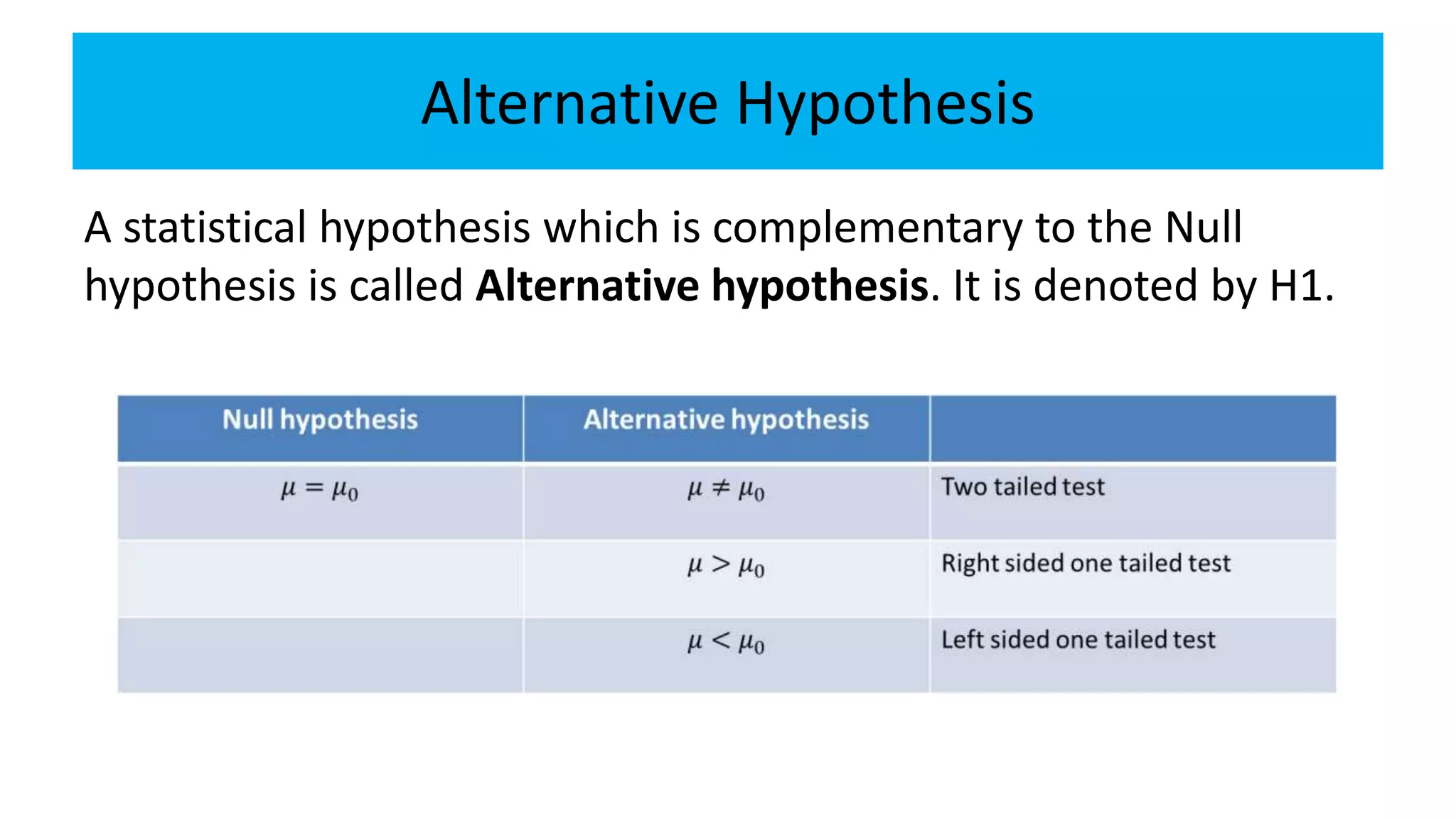

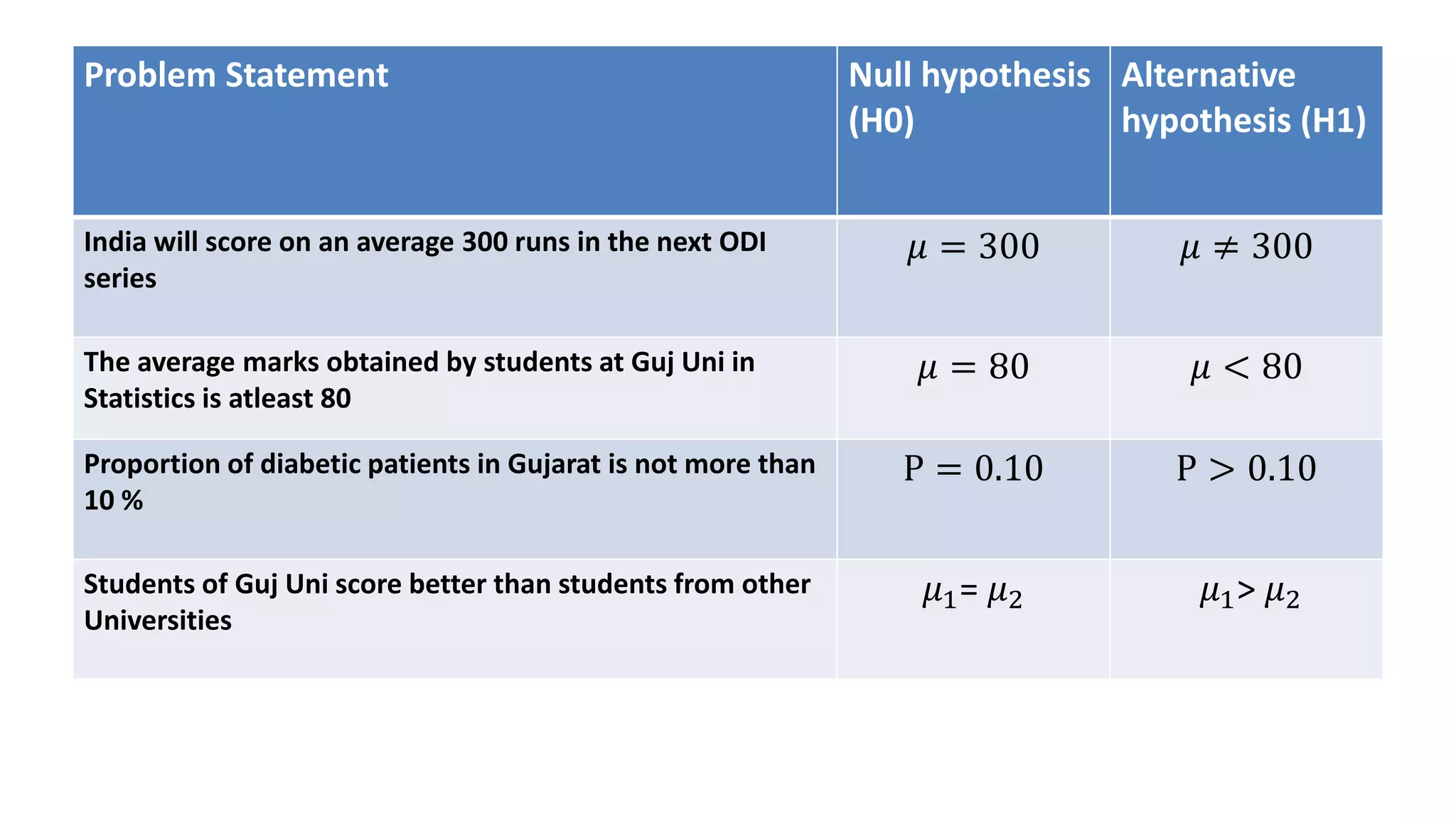

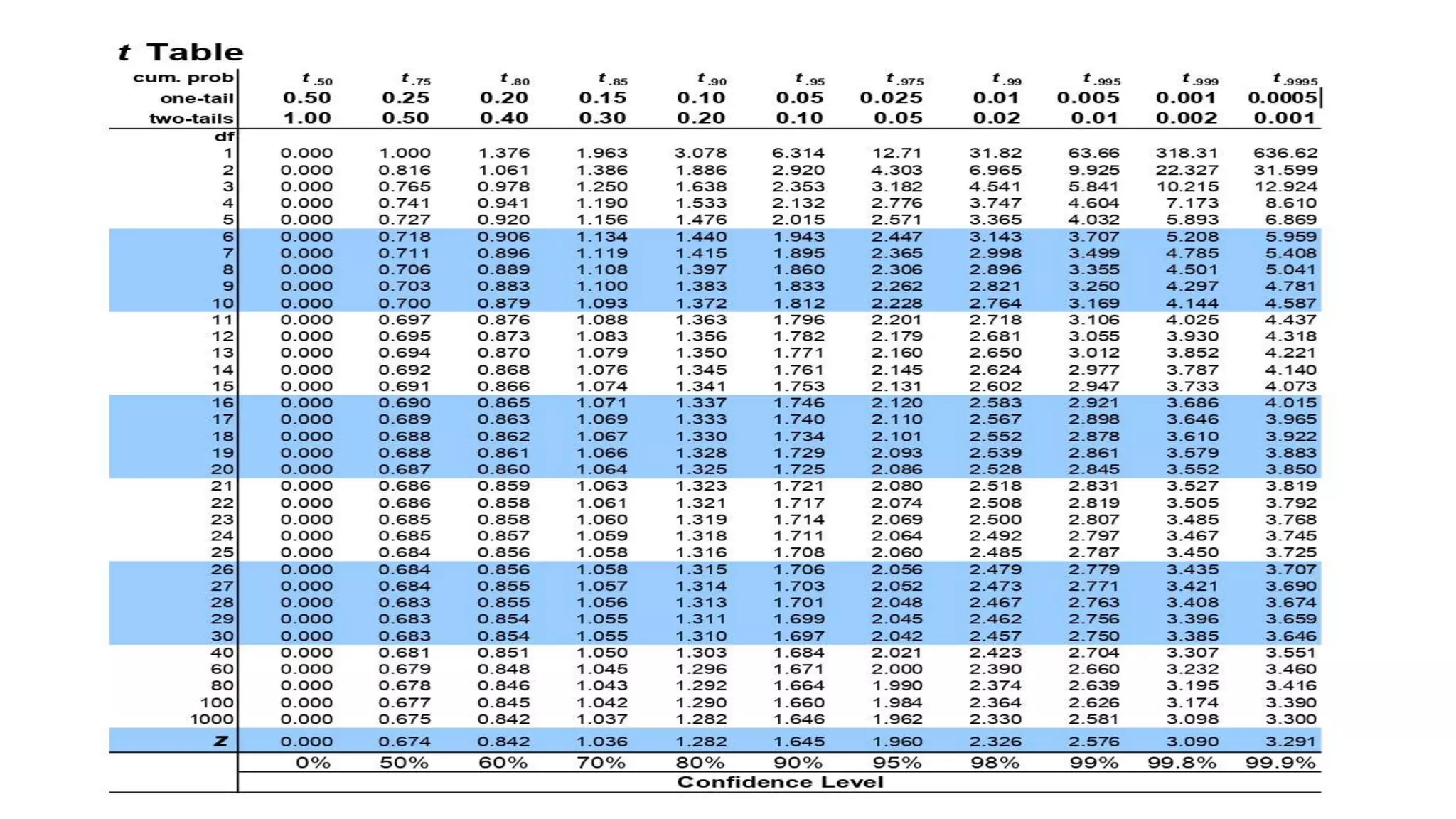

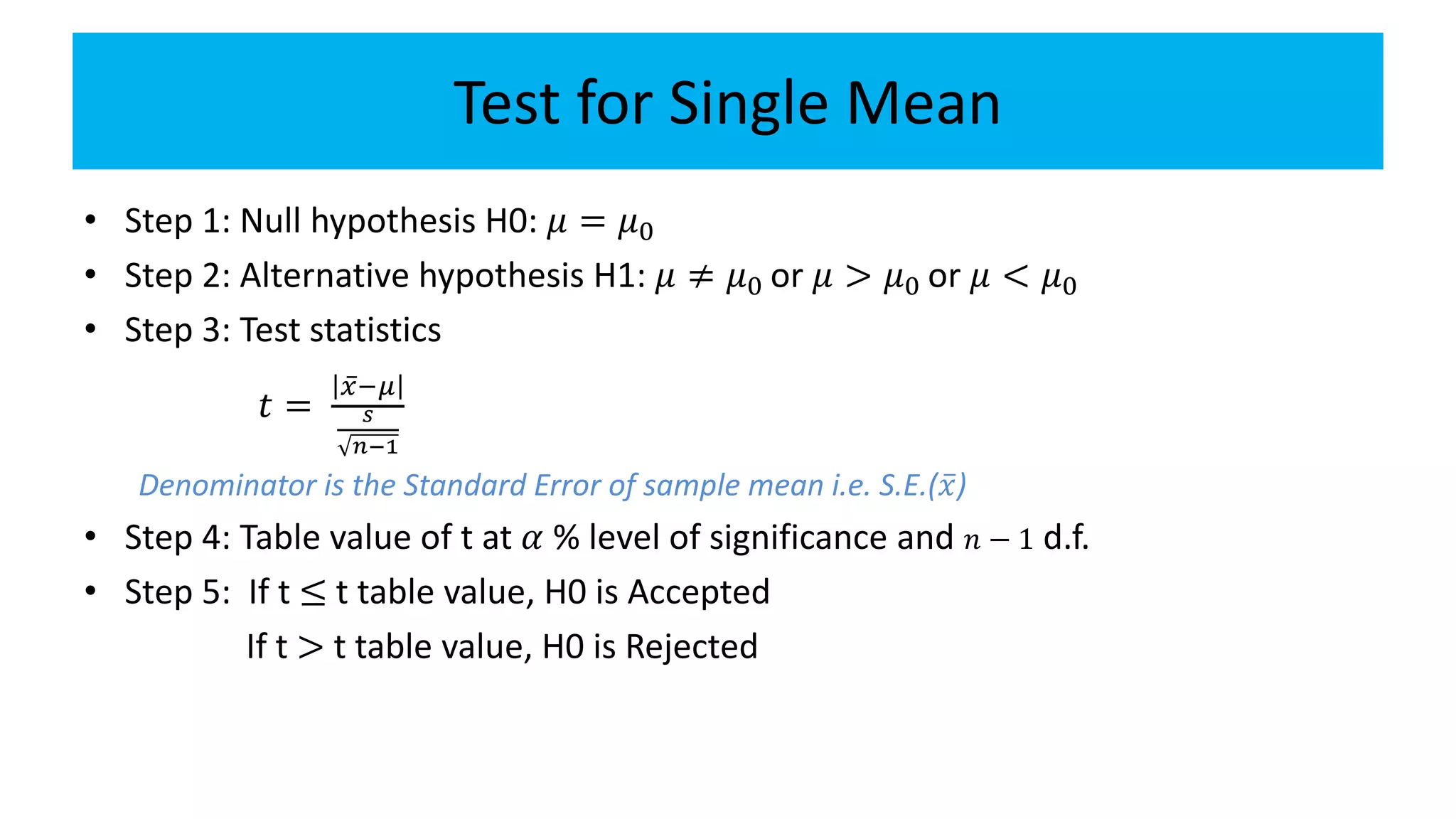

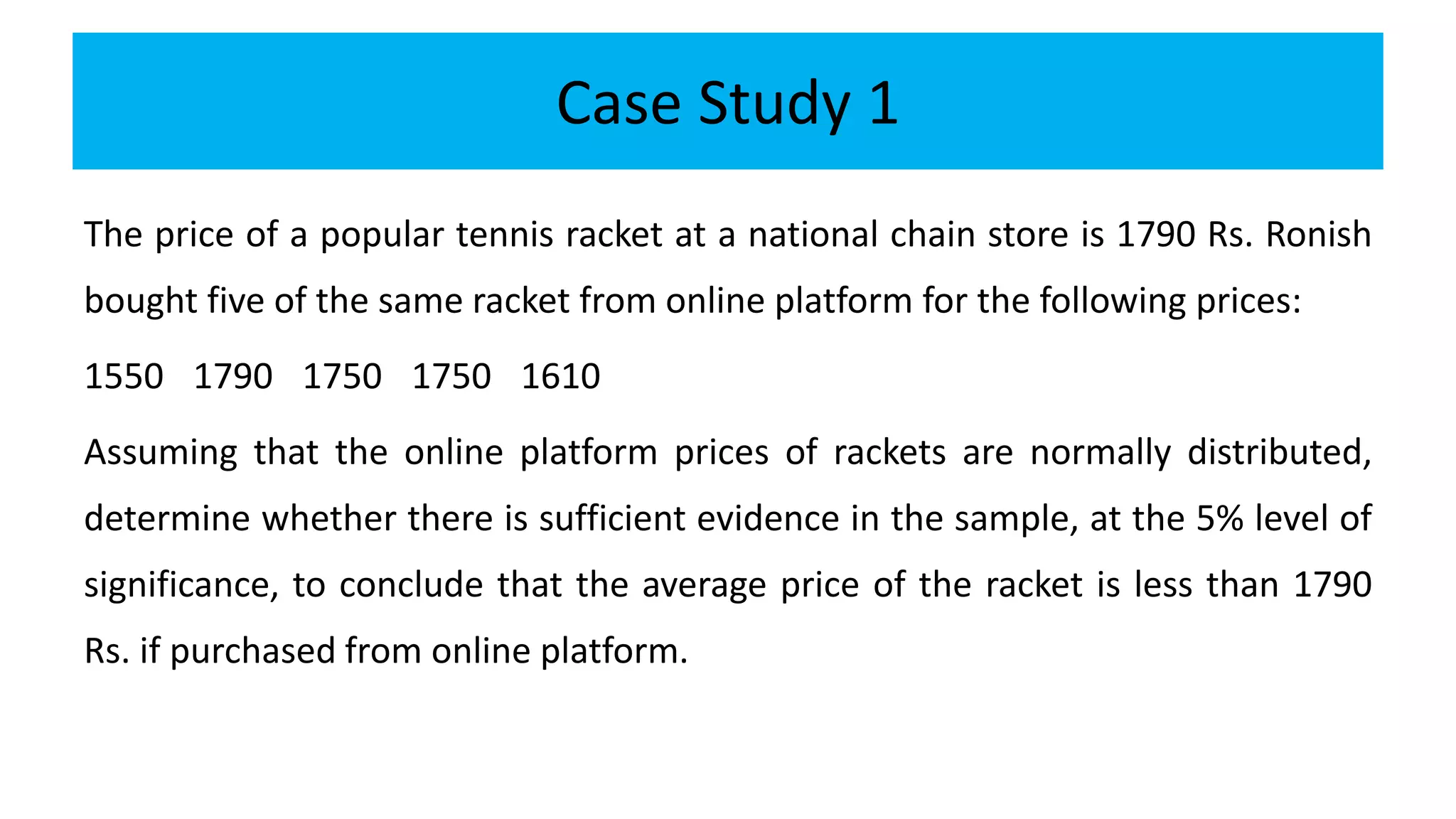

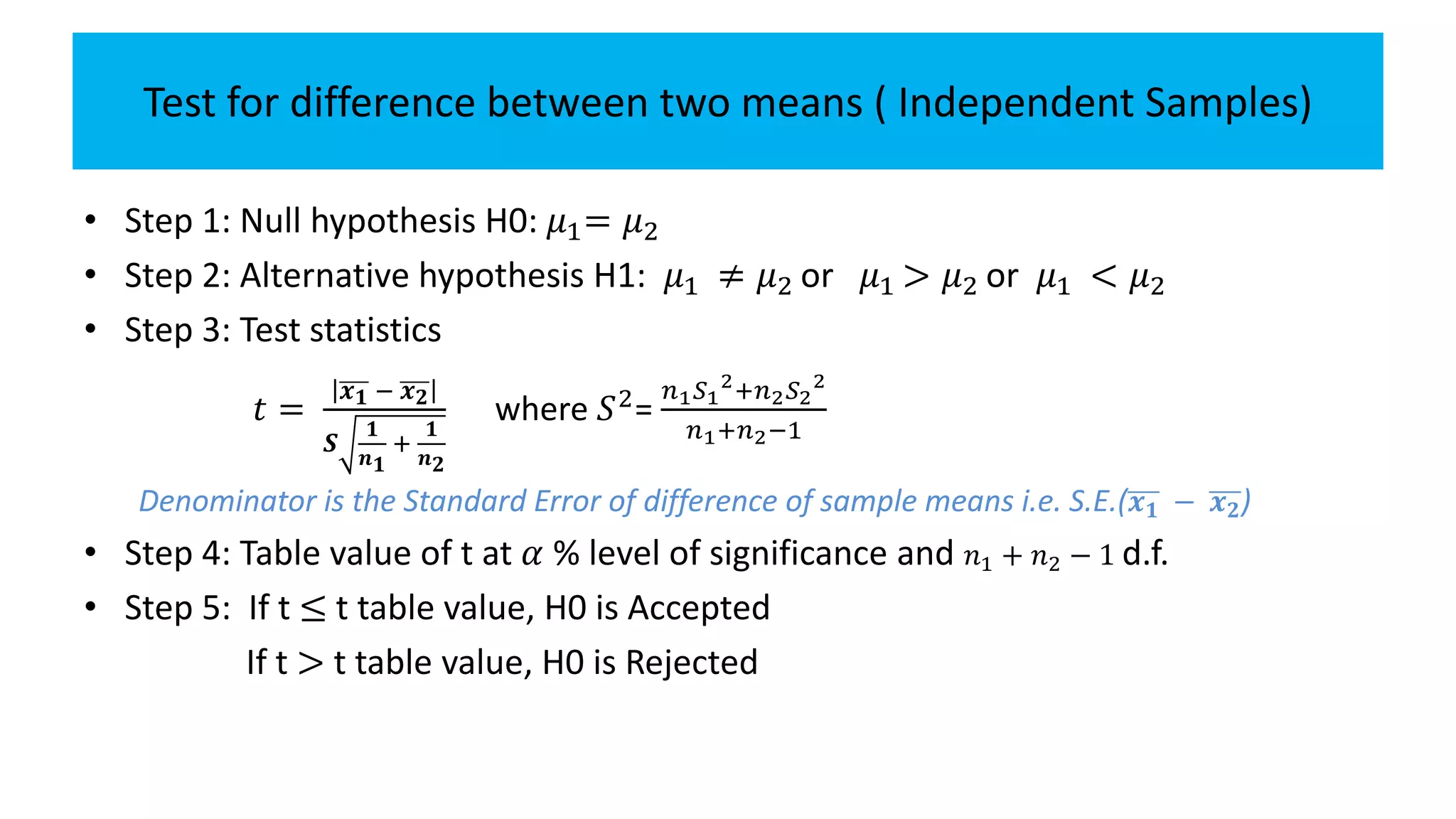

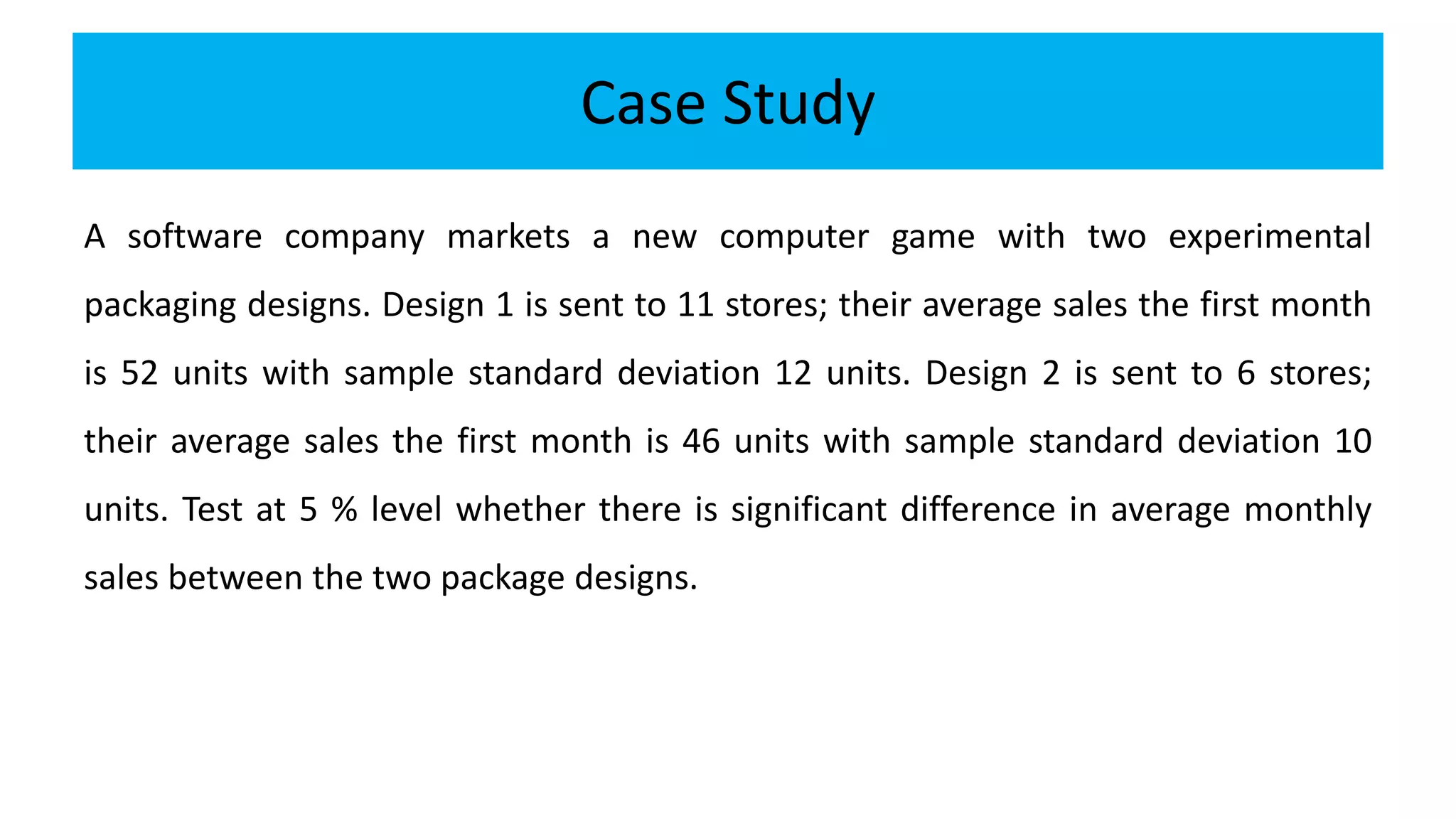

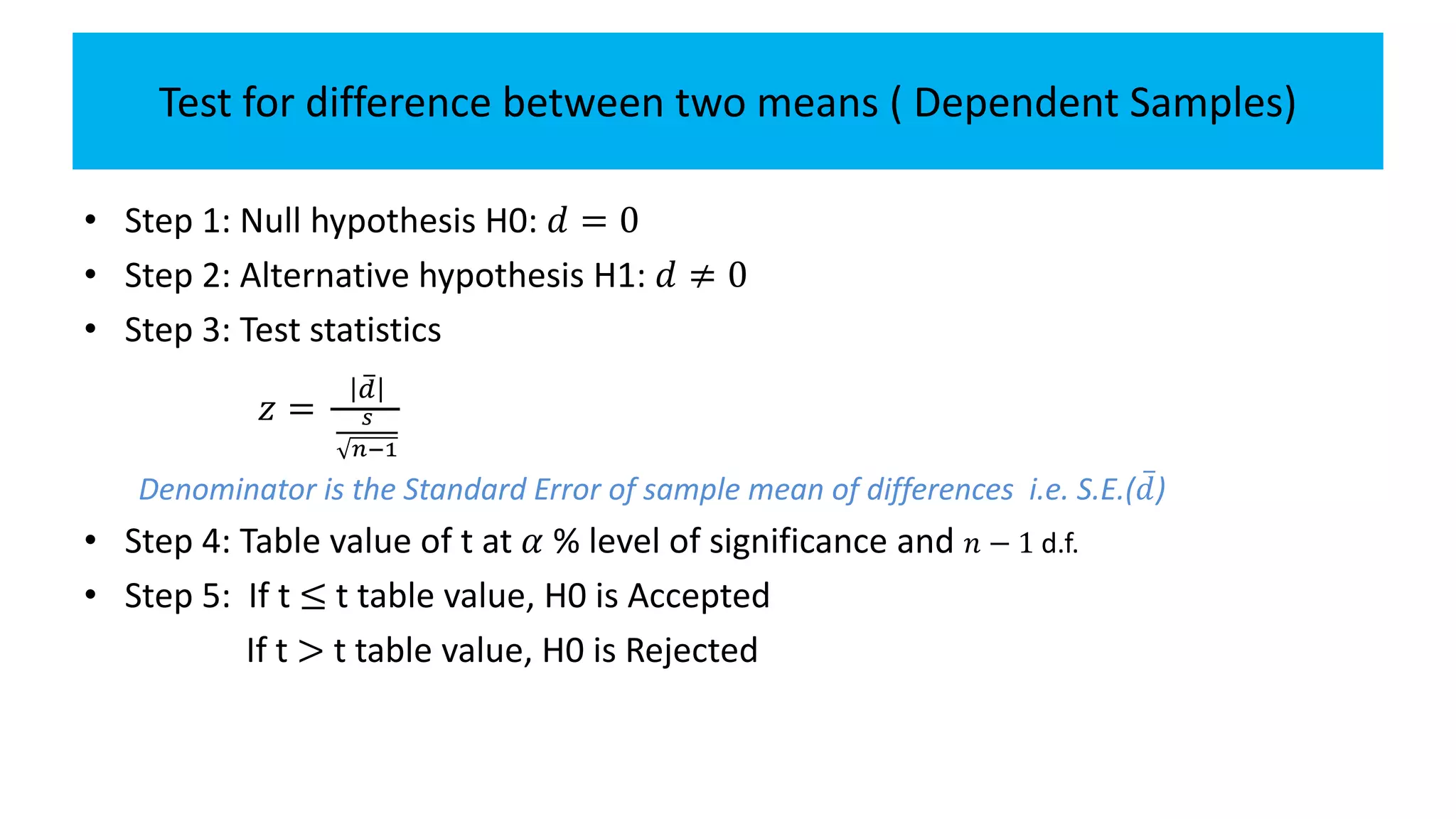

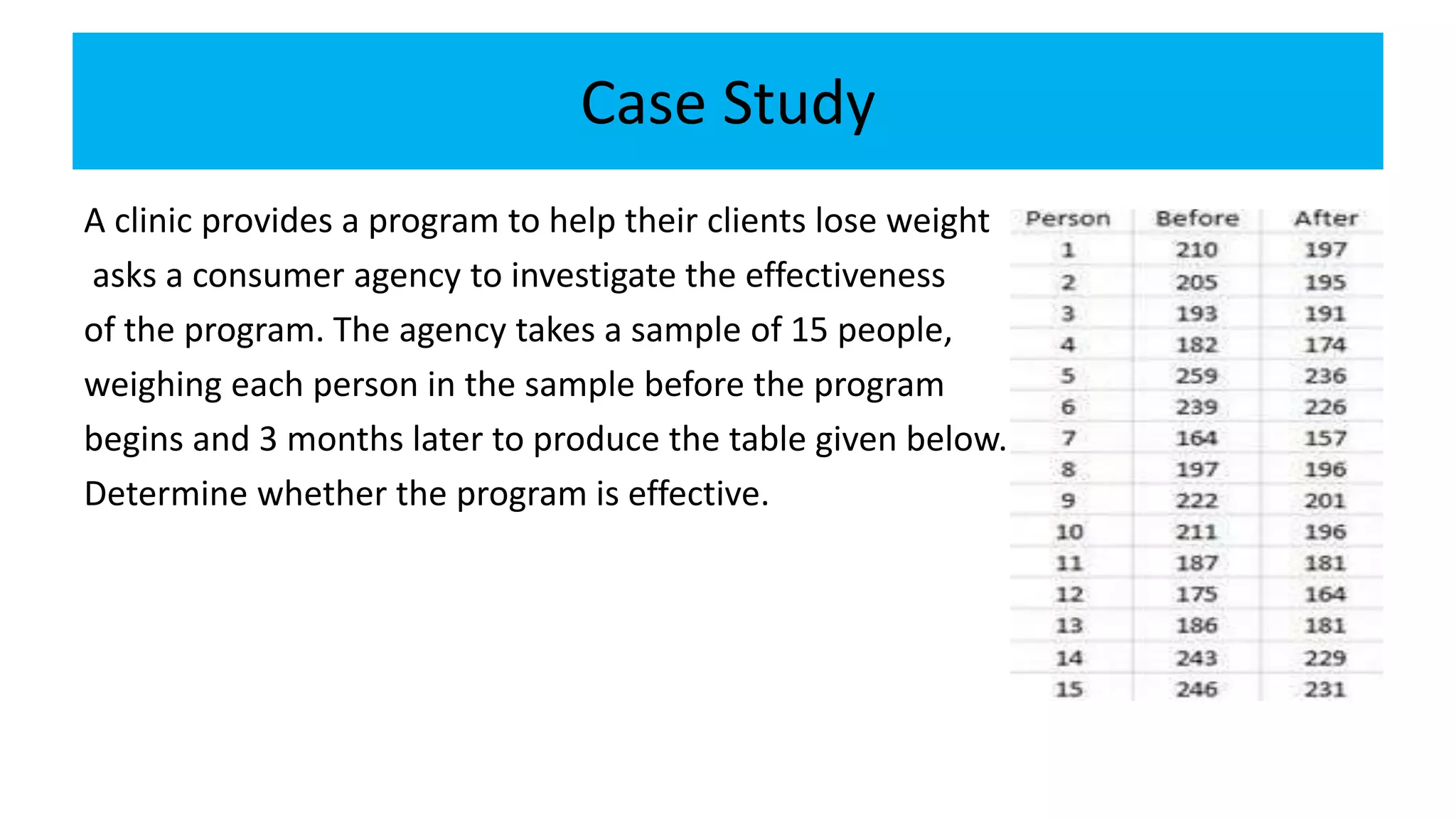

The document explains the concepts of hypothesis testing: the definitions of parameters and statistics, the two types of hypotheses (null and alternative), and the procedure for testing a hypothesis with sample data. It covers which test statistic to use depending on the sample size and outlines the steps for several tests, including tests for a single mean and for the difference between two means. Case studies illustrate how hypothesis testing is applied in real-world scenarios.
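The tests for a single mean and for a difference between means can be sketched in a few lines of Python. This is a minimal sketch, not the procedure from the slides. It assumes the common textbook convention that large samples (or a known population standard deviation) use a z statistic and small samples with unknown standard deviation use a t statistic. It also assumes a two-sided alternative and a significance level of 0.05. The function names (`one_sample_z`, `two_sample_z`, `one_sample_t`, `decide`) and the sample numbers are hypothetical. The standard library has no t distribution, so the t test returns only the statistic and its degrees of freedom, which you would compare with a critical value from a t table.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def one_sample_z(sample_mean, mu0, sigma, n):
    """Z statistic and two-sided p-value for H0: mu = mu0.

    Assumes a large sample (or known sigma), so the sampling
    distribution of the mean is approximately normal.
    """
    z = (sample_mean - mu0) / (sigma / sqrt(n))
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


def two_sample_z(mean1, mean2, sd1, sd2, n1, n2):
    """Z statistic and two-sided p-value for H0: mu1 = mu2.

    Assumes two large, independent samples; the standard error of the
    difference combines both sample variances.
    """
    se = sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    z = (mean1 - mean2) / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


def one_sample_t(data, mu0):
    """t statistic and degrees of freedom for H0: mu = mu0.

    For small samples with unknown sigma. Compare |t| with a critical
    value from a t table at n - 1 degrees of freedom.
    """
    n = len(data)
    t = (mean(data) - mu0) / (stdev(data) / sqrt(n))  # stdev uses n - 1
    return t, n - 1


def decide(p_value, alpha=0.05):
    """Decision rule: reject H0 when the p-value is below alpha."""
    return "reject H0" if p_value < alpha else "fail to reject H0"


# Hypothetical example: sample mean 52 from n = 100, sigma = 10, H0: mu = 50.
z, p = one_sample_z(52, 50, 10, 100)
print(f"z = {z:.2f}, p = {p:.4f}, decision: {decide(p)}")
```

With these made-up numbers the standard error is 1, so z = 2.00 and the two-sided p-value is about 0.0455, which leads to rejecting H0 at the 5% level. The same decision follows from the critical-value approach, since |z| = 2.00 exceeds 1.96.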