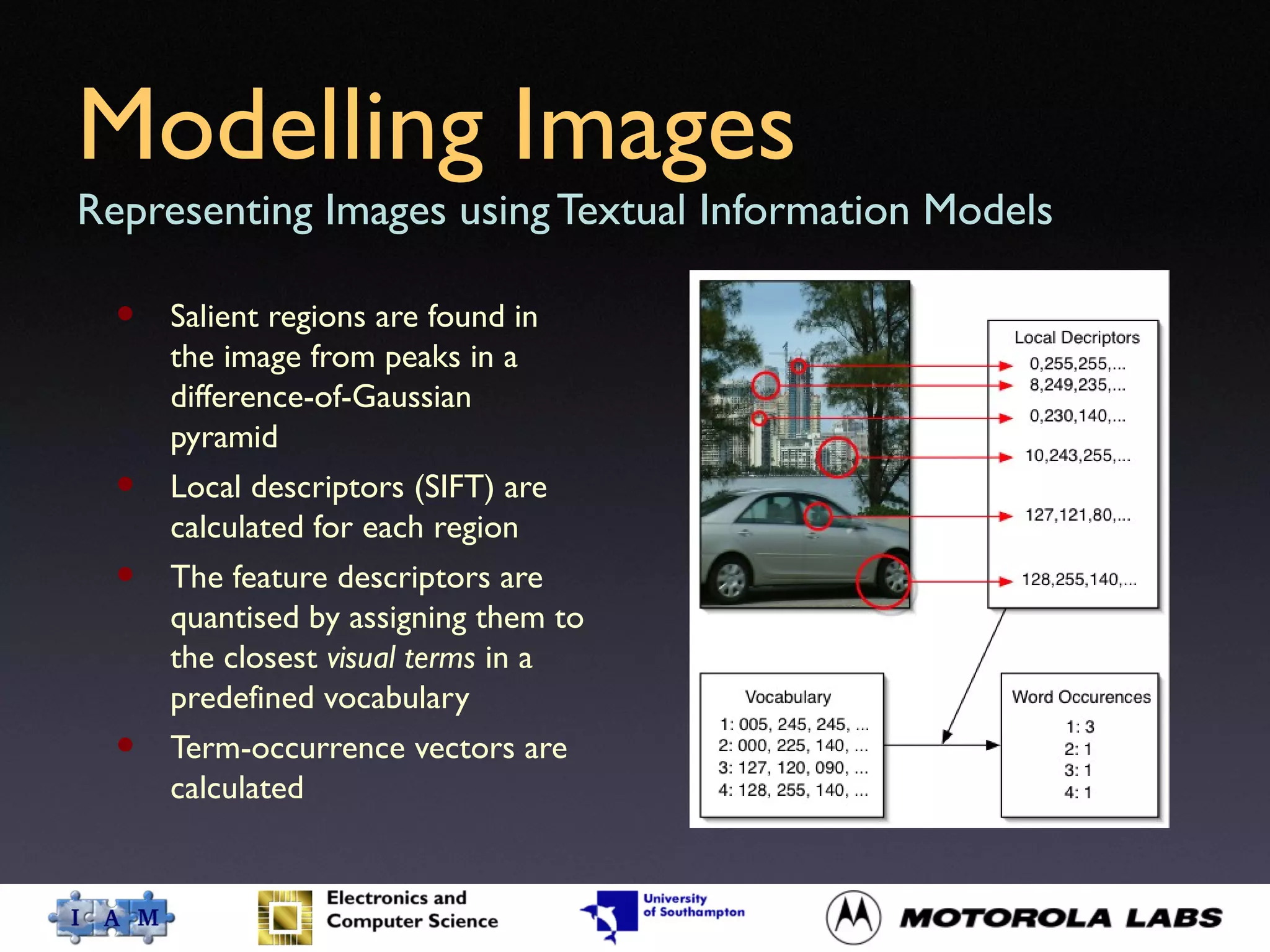

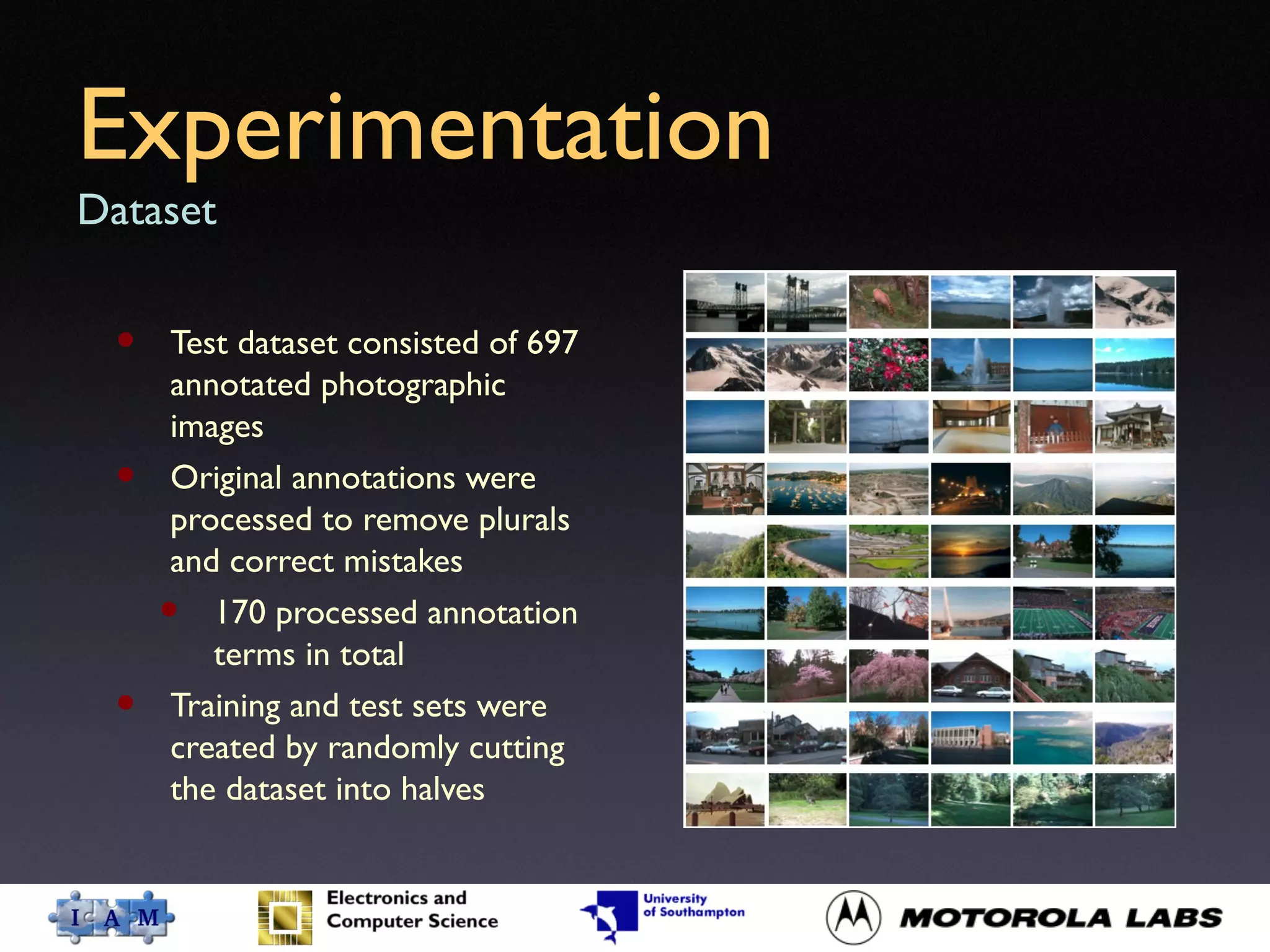

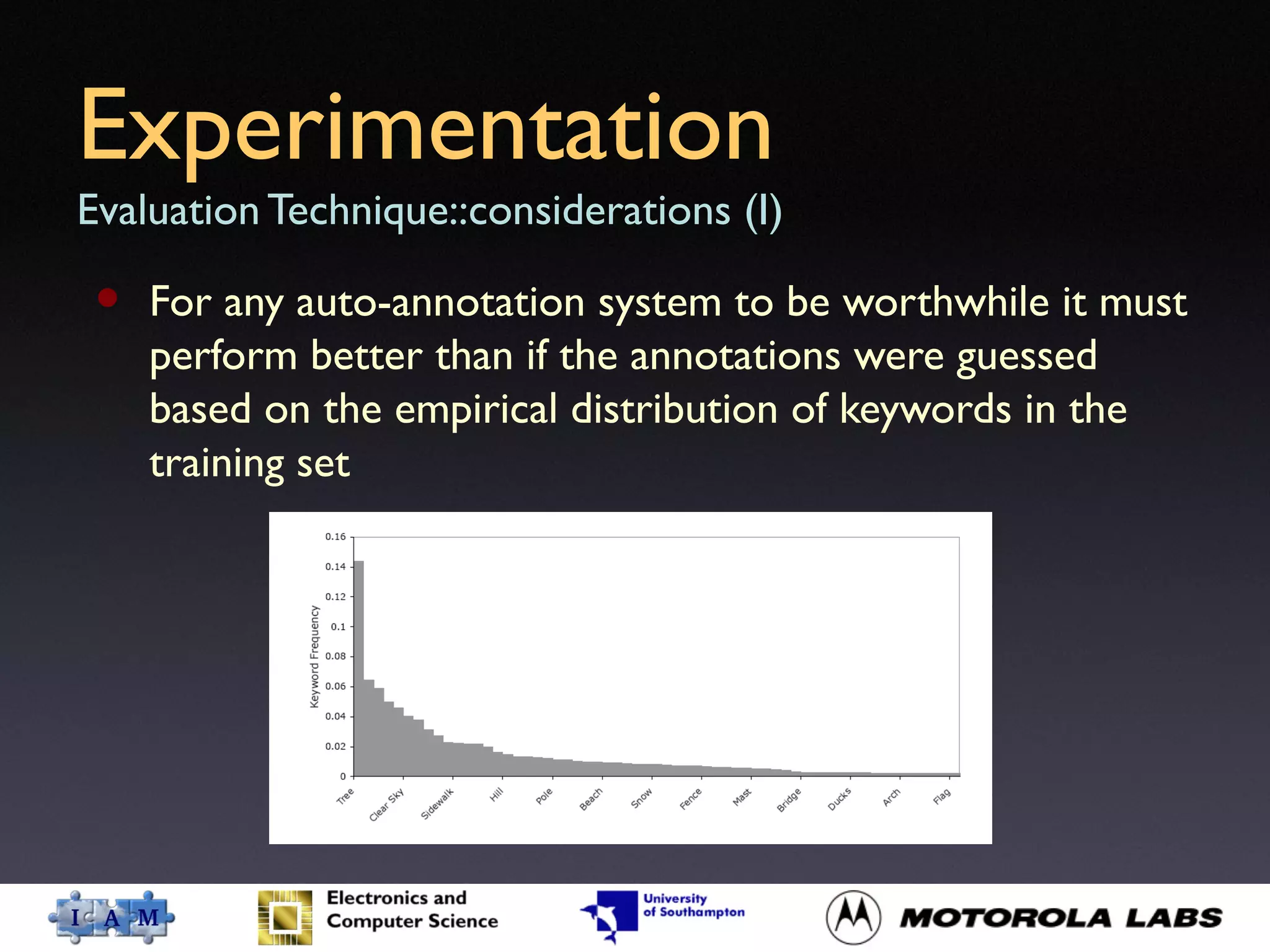

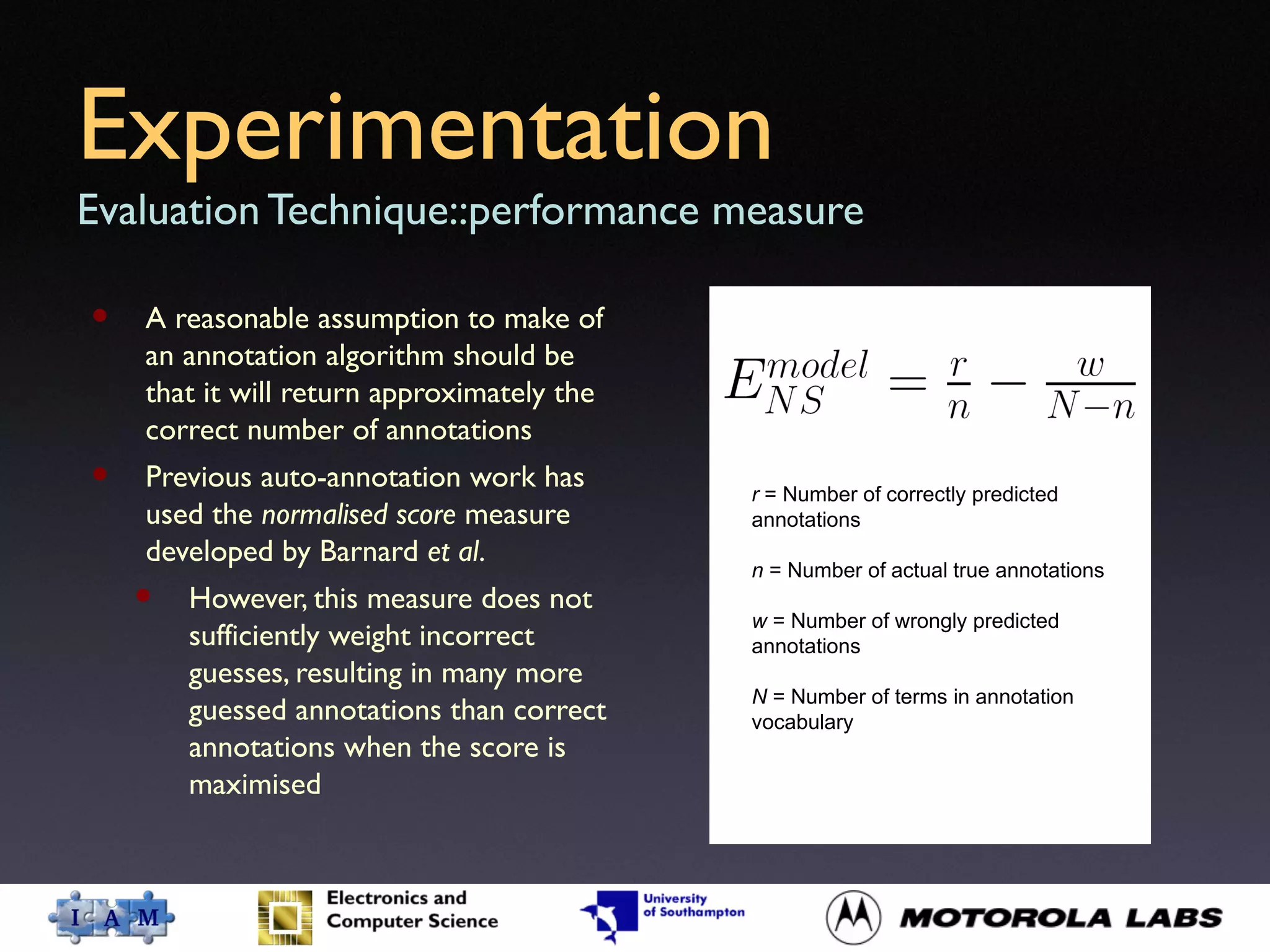

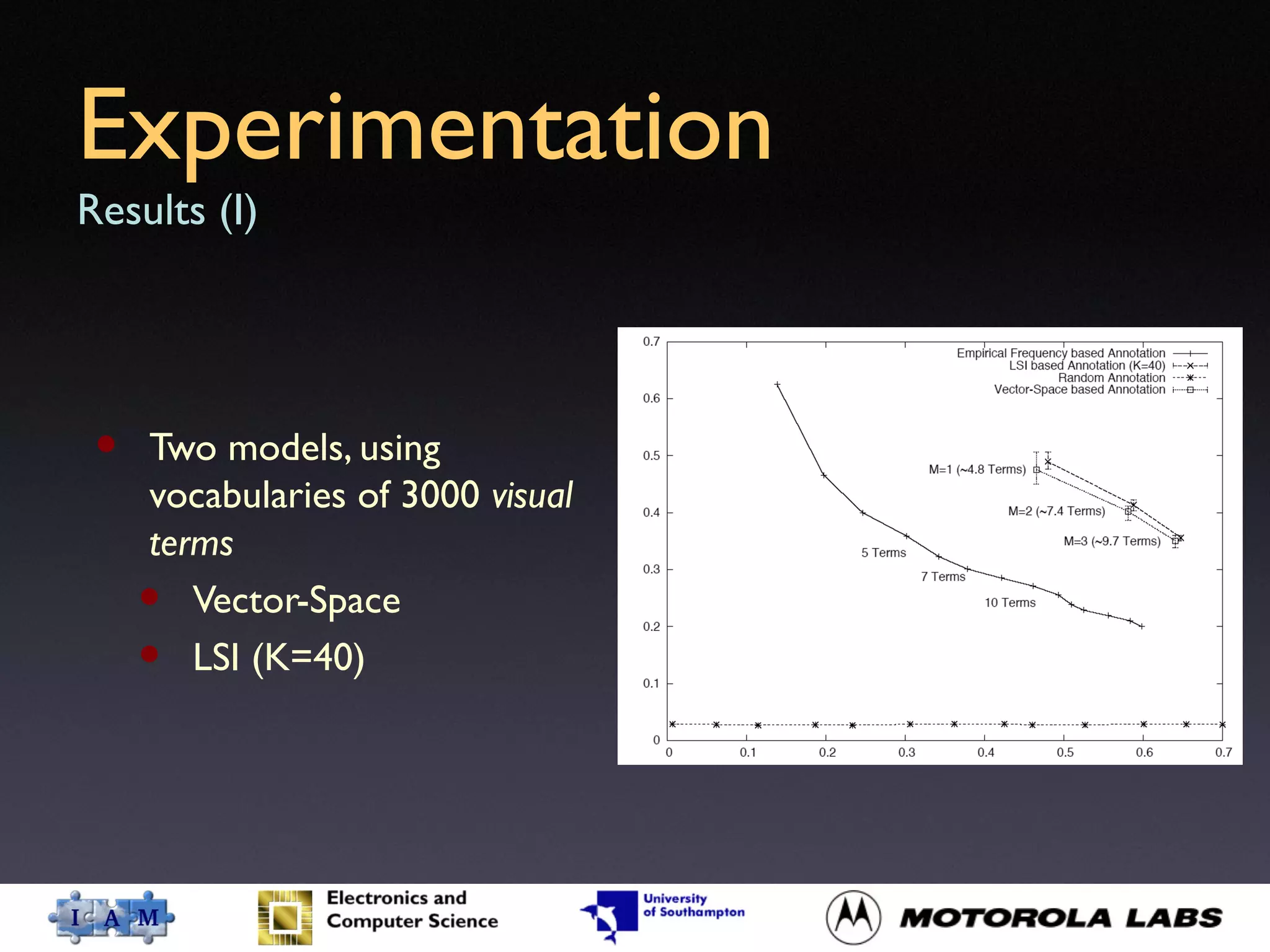

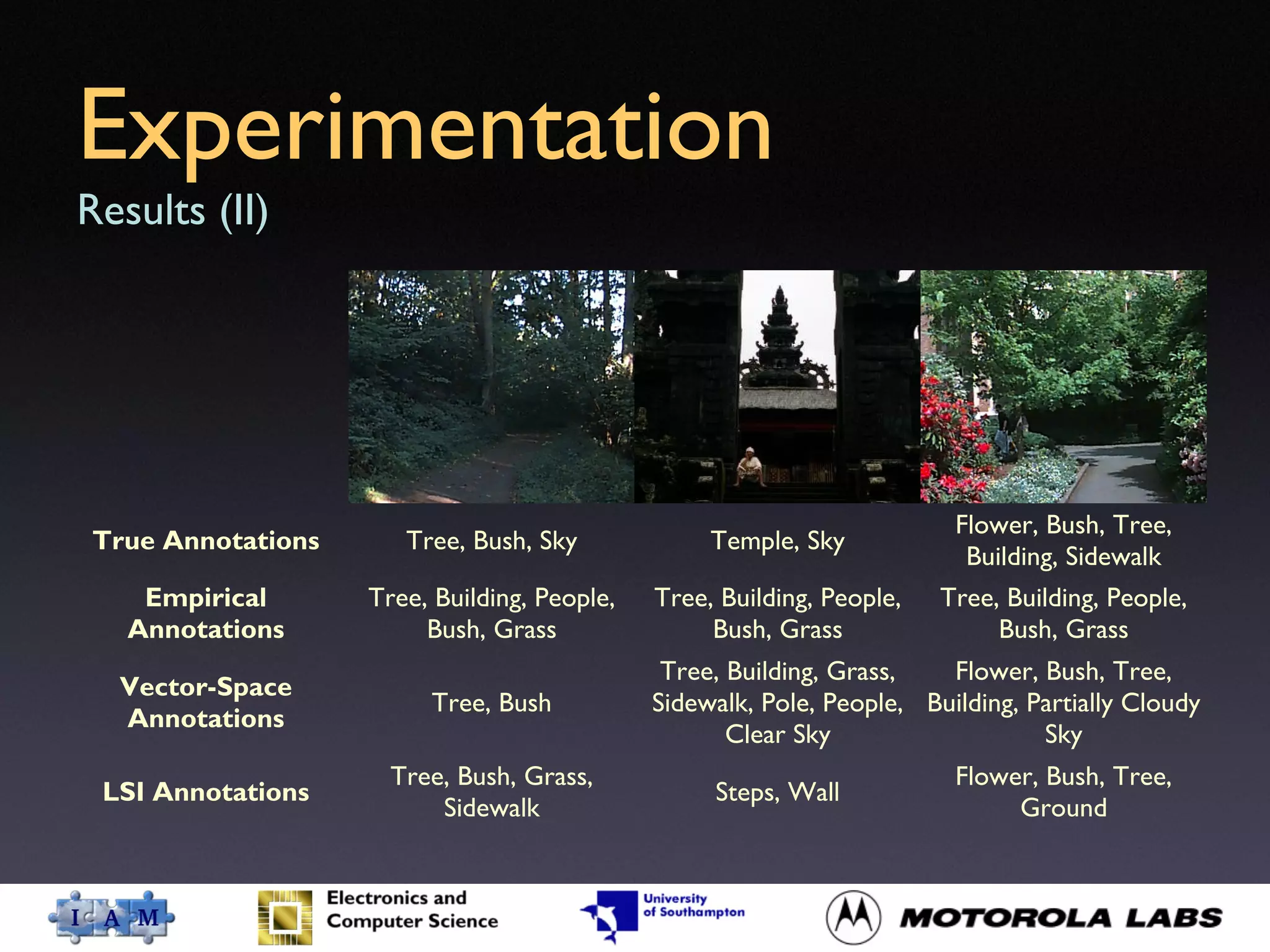

This paper presents a method for automatic image annotation that assesses visual similarity using local descriptors of salient regions. It compares two models for representing image content, the vector-space model and latent semantic indexing (LSI); LSI performed slightly better in the experiments. The paper concludes that the proposed auto-annotation technique shows promise, and that it could be improved by integrating additional local descriptors and by adapting the number of training images dynamically.
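
To make the difference between the two representations concrete, here is a minimal sketch in Python with NumPy. It is not the paper's implementation. It assumes each image has already been reduced to a vector of counts over a fixed vocabulary of "visual terms" (for example, quantized local descriptors), which this summary does not specify. The toy matrix, the keyword list, the nearest-neighbour keyword propagation, and all function names are hypothetical and chosen only for illustration.

```python
import numpy as np


def cosine_similarities(query, docs):
    """Cosine similarity between one query vector and each row of `docs`."""
    denom = np.linalg.norm(query) * np.linalg.norm(docs, axis=1)
    denom[denom == 0] = 1.0  # avoid division by zero for all-zero vectors
    return docs @ query / denom


def vector_space_similarities(query, term_doc):
    """Compare the query with each training image in the raw term space."""
    return cosine_similarities(query, term_doc.T)


def lsi_similarities(query, term_doc, k):
    """Compare the query with each training image in a rank-k LSI space."""
    # Truncated SVD of the term-by-image matrix (terms are rows).
    U, _, _ = np.linalg.svd(term_doc, full_matrices=False)
    U_k = U[:, :k]
    docs_k = (U_k.T @ term_doc).T  # one row per image, k coordinates each
    query_k = U_k.T @ query        # fold the query into the same space
    return cosine_similarities(query_k, docs_k)


def annotate(similarities, keywords):
    """Assumed rule: copy the keyword of the most similar training image."""
    return keywords[int(np.argmax(similarities))]


# Hypothetical toy data: 4 visual terms (rows) x 4 training images (columns).
TERM_DOC = np.array([
    [2.0, 1.0, 0.0, 0.0],
    [1.0, 2.0, 0.0, 0.0],
    [0.0, 0.0, 3.0, 1.0],
    [0.0, 0.0, 1.0, 3.0],
])
KEYWORDS = ["sky", "sky", "grass", "grass"]

# An unlabelled image that contains only visual term 0.
QUERY = np.array([1.0, 0.0, 0.0, 0.0])

vs_sims = vector_space_similarities(QUERY, TERM_DOC)
lsi_sims = lsi_similarities(QUERY, TERM_DOC, k=2)

print("vector-space:", np.round(vs_sims, 3), "->", annotate(vs_sims, KEYWORDS))
print("LSI (k=2):   ", np.round(lsi_sims, 3), "->", annotate(lsi_sims, KEYWORDS))
```

In this toy setting the vector-space model scores the two "sky" images differently because it compares raw term counts, whereas the rank-2 LSI space groups co-occurring terms and treats both "sky" images as equally close to the query. Whether such smoothing helps on real data depends on the descriptors and the chosen rank `k`; the sketch does not reproduce the paper's experiments.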