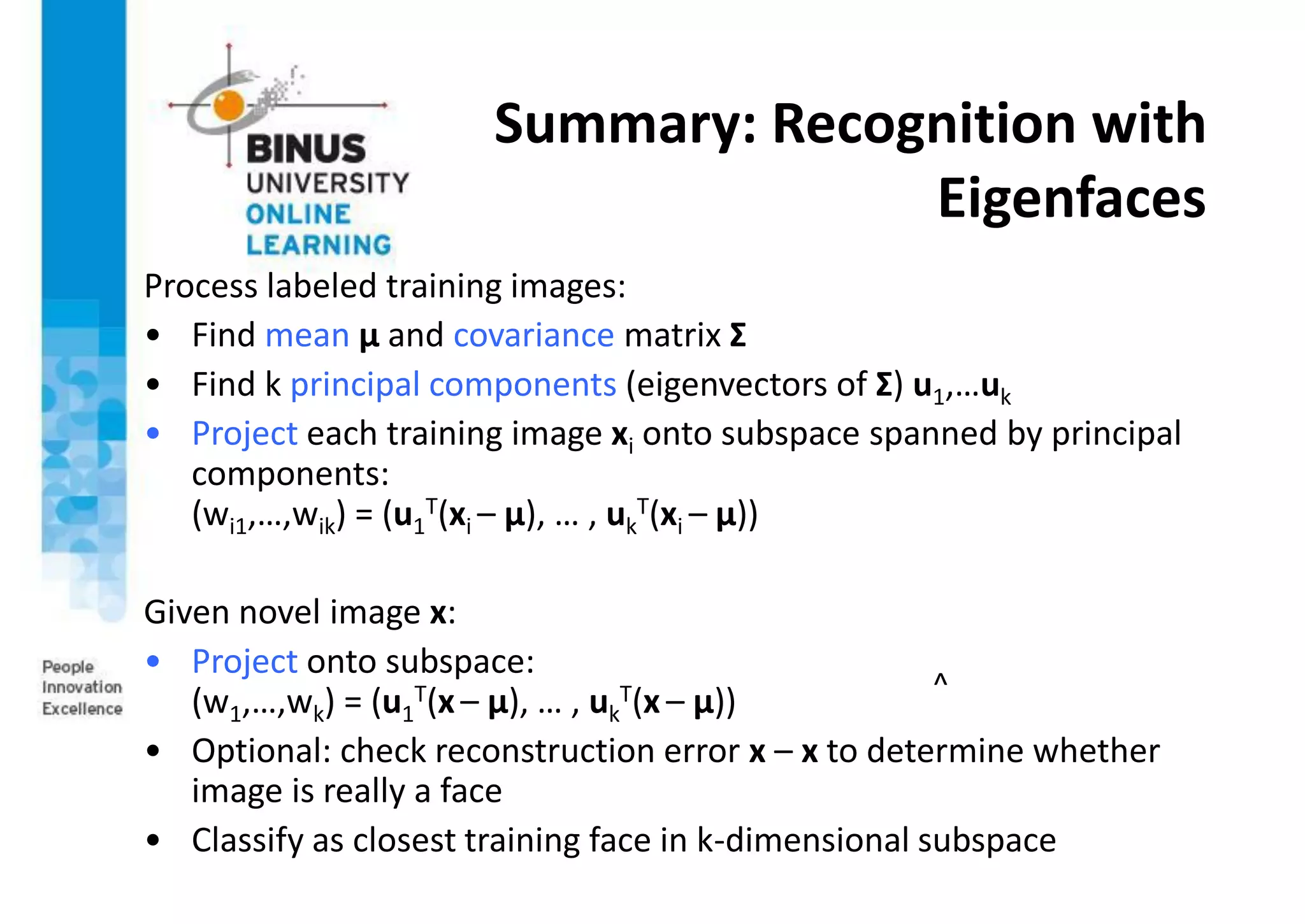

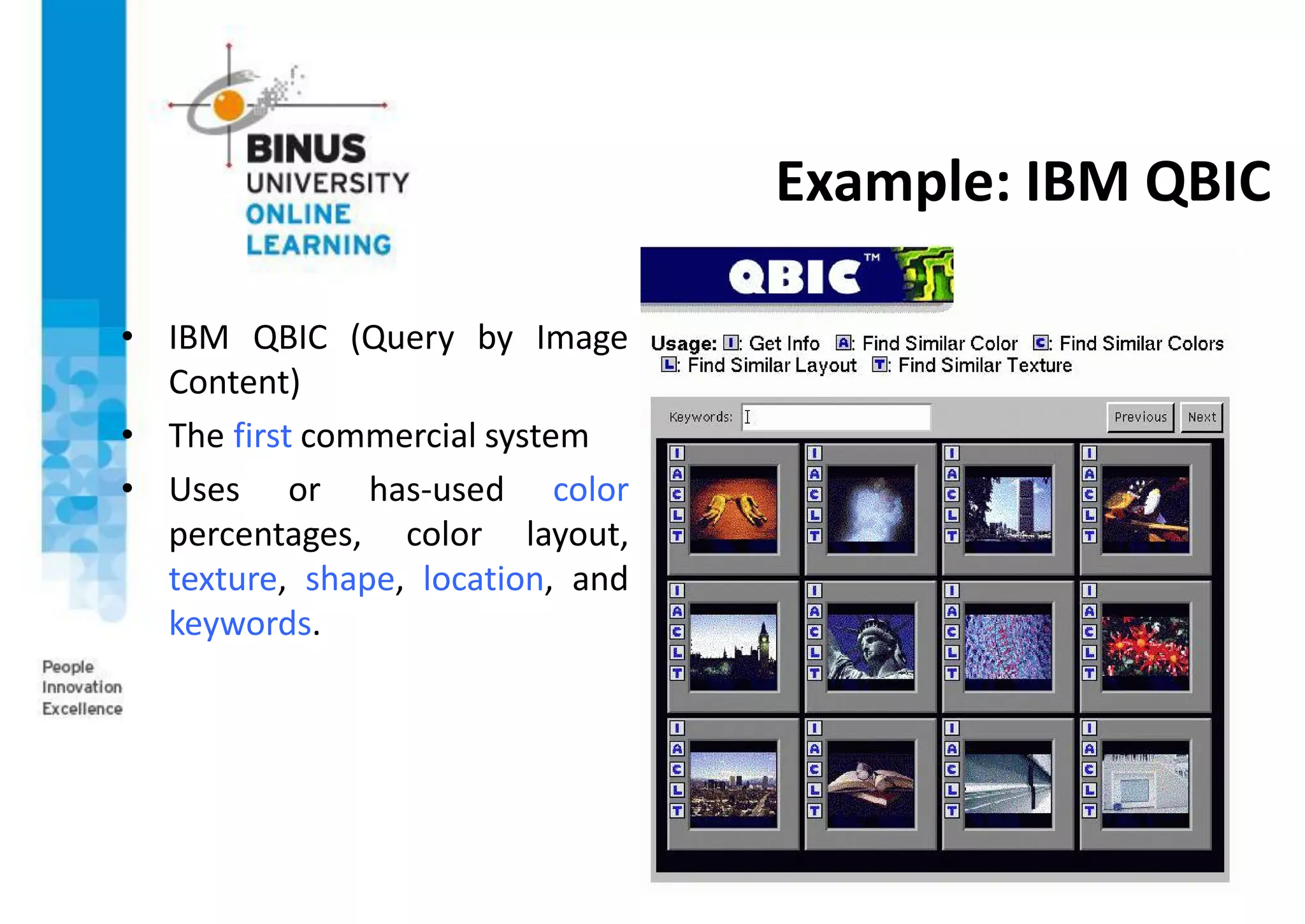

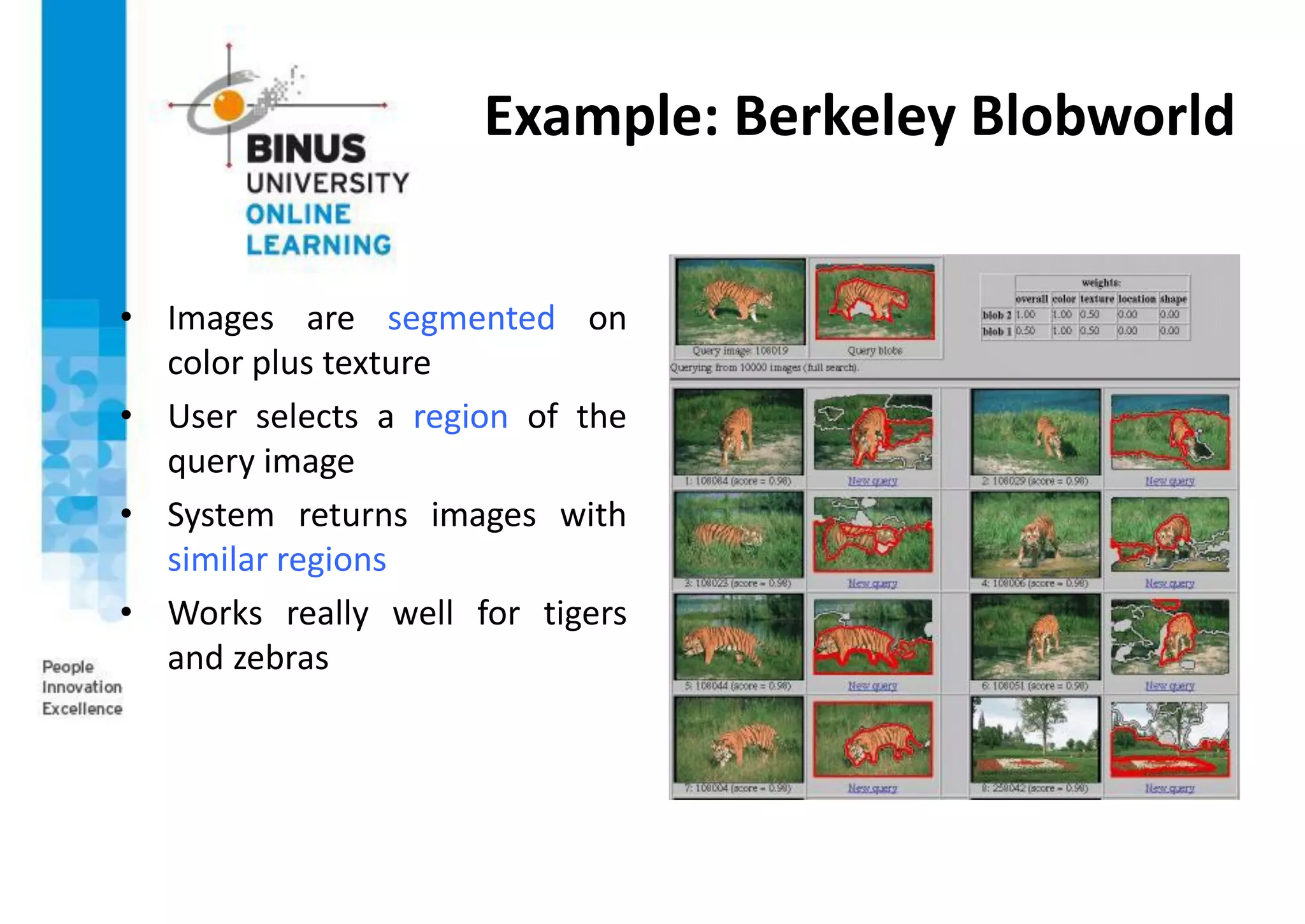

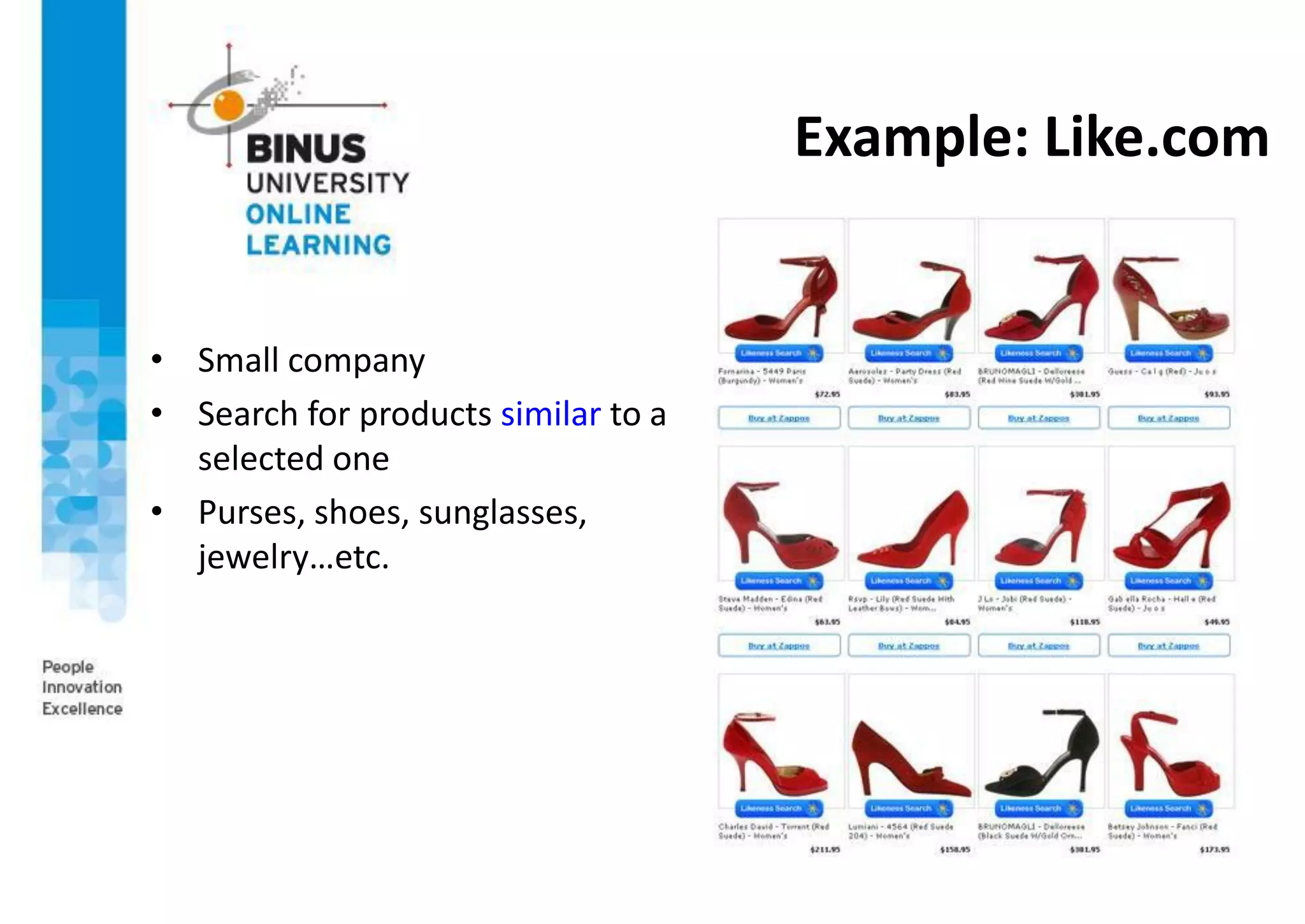

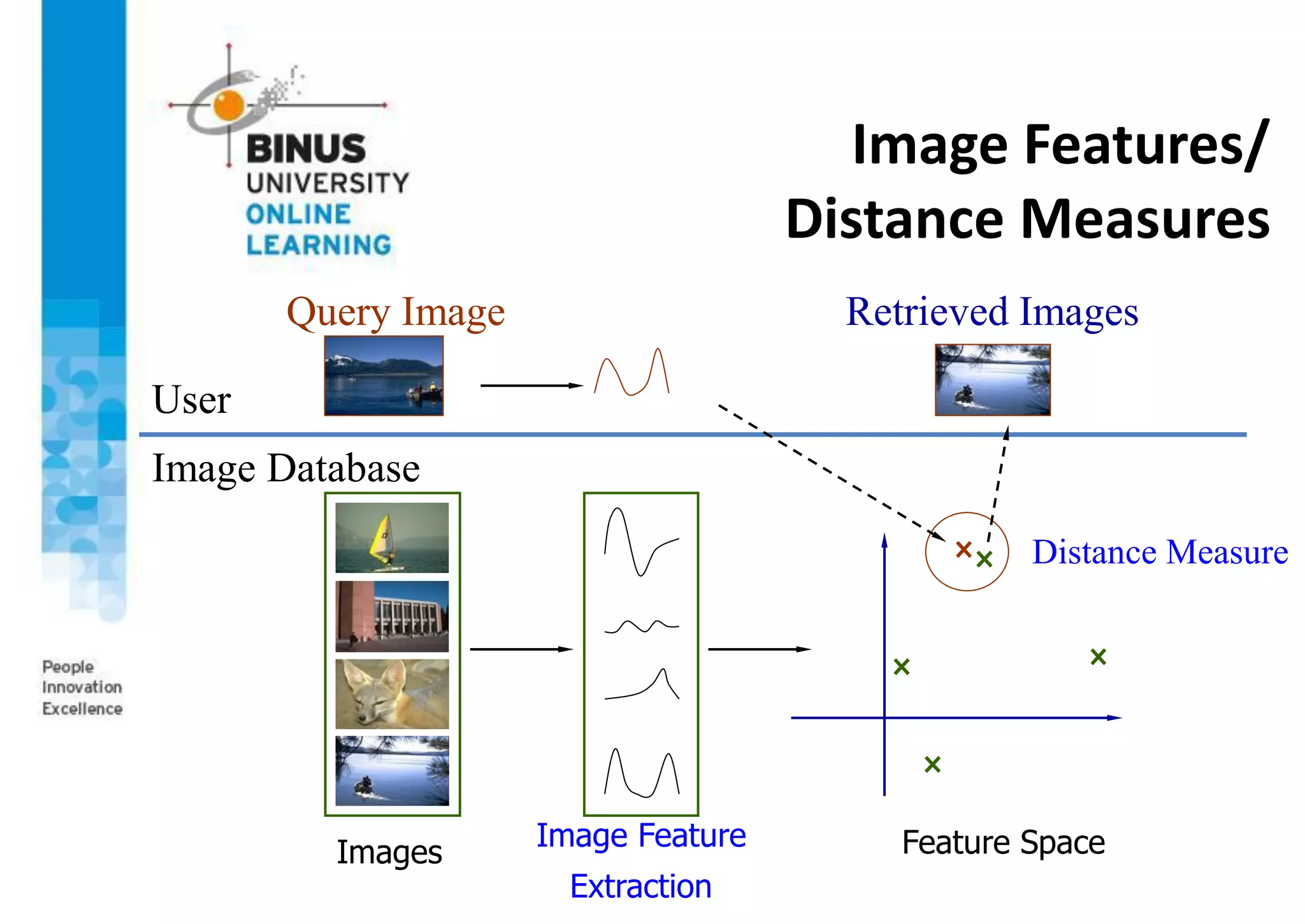

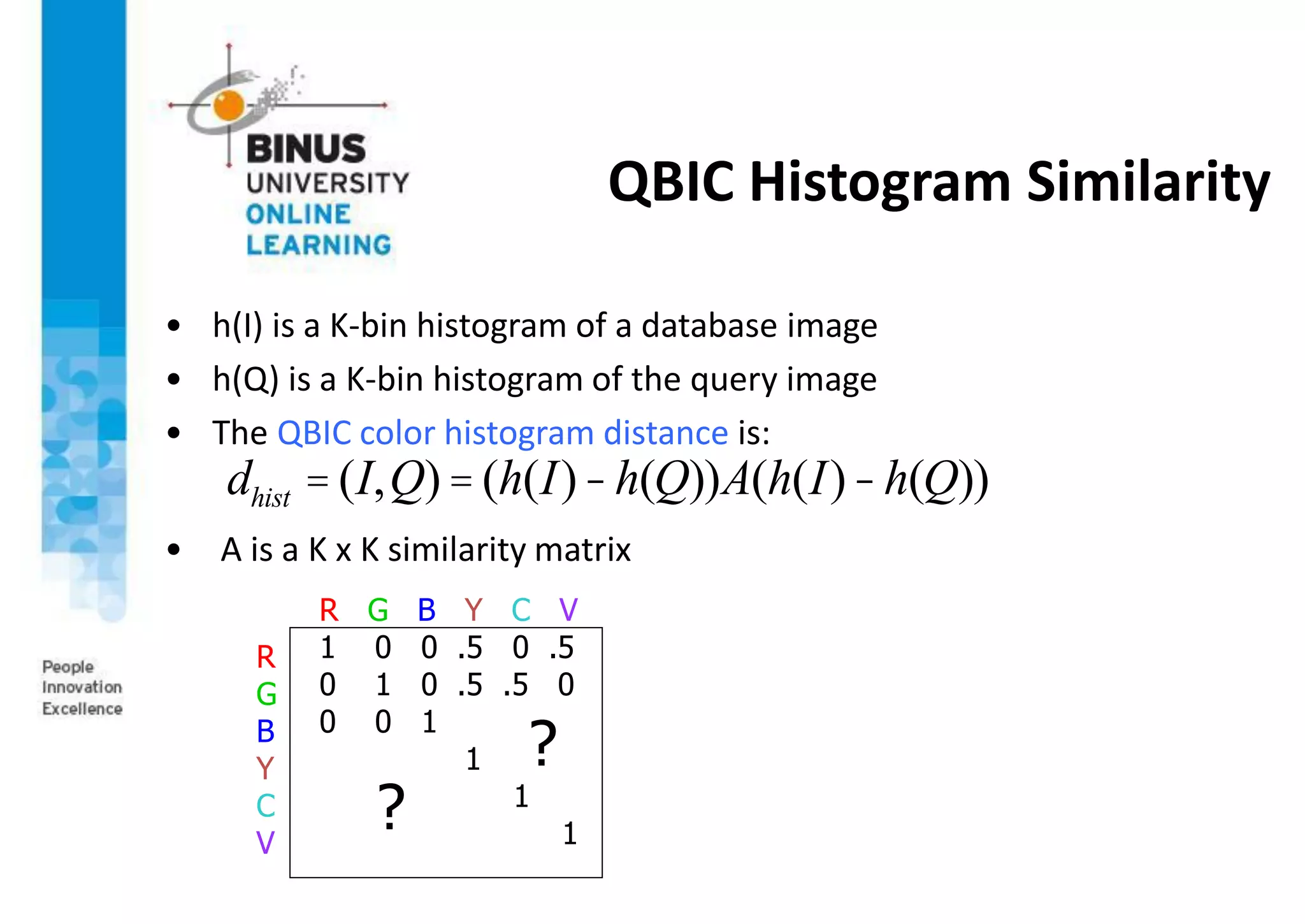

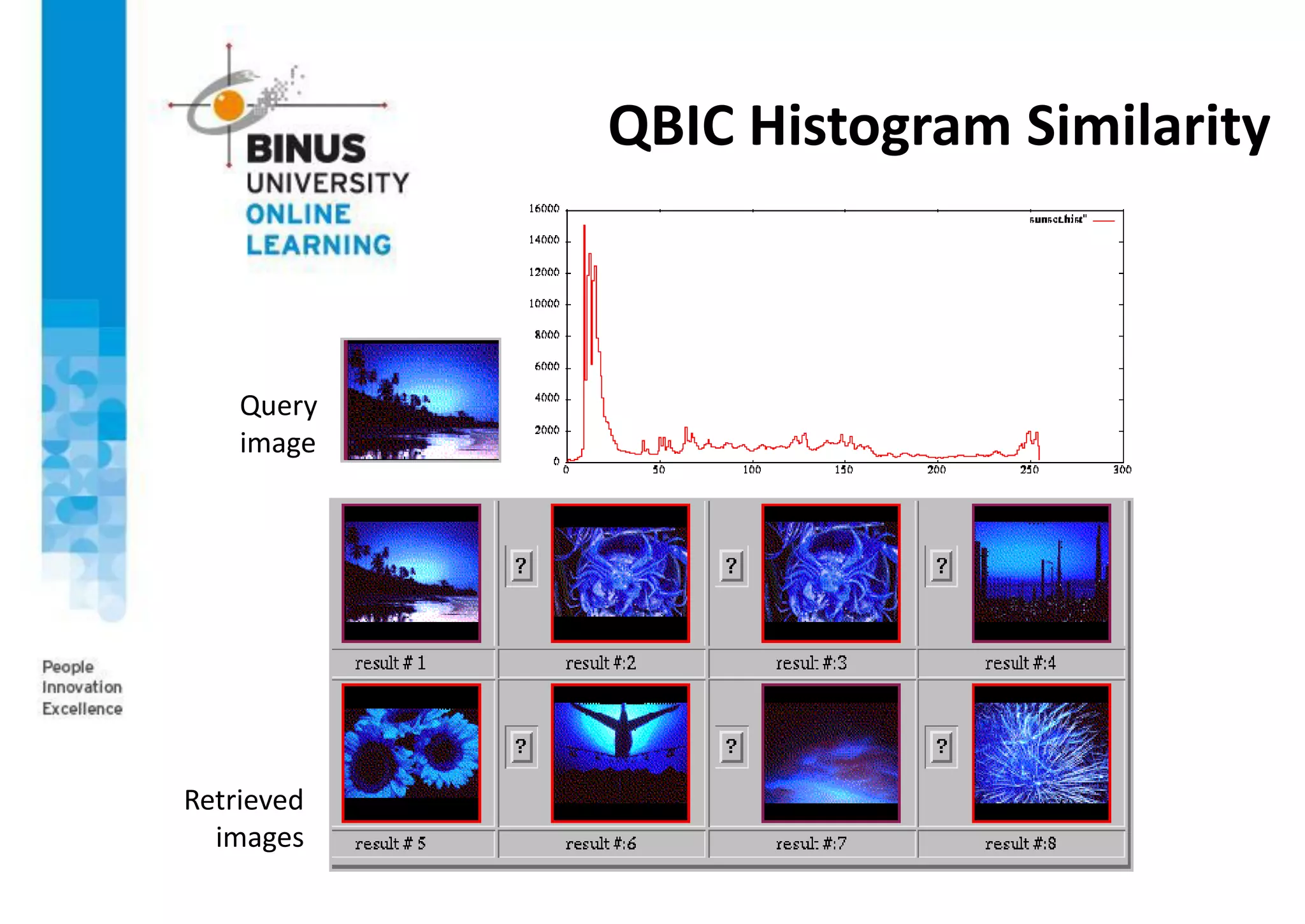

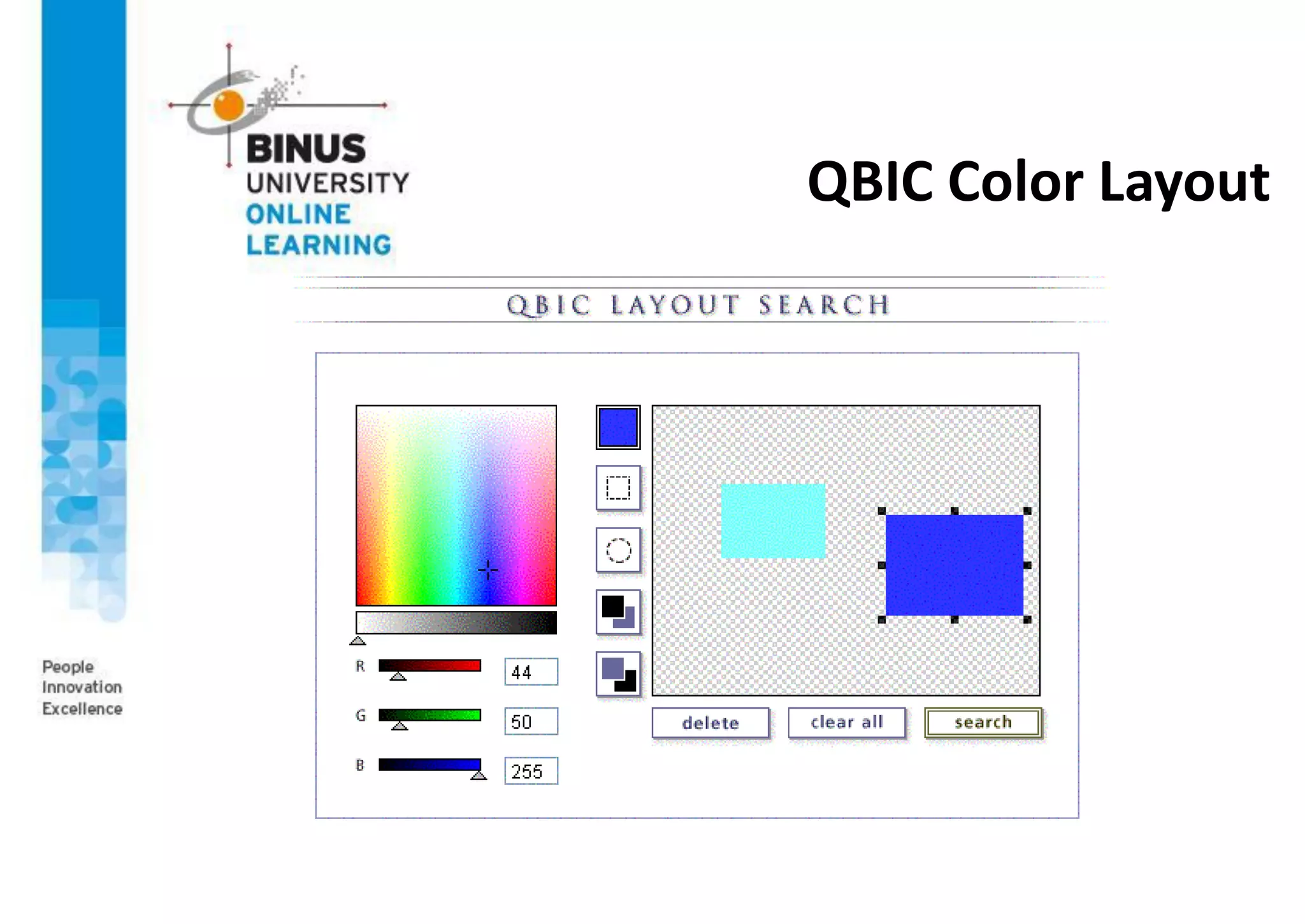

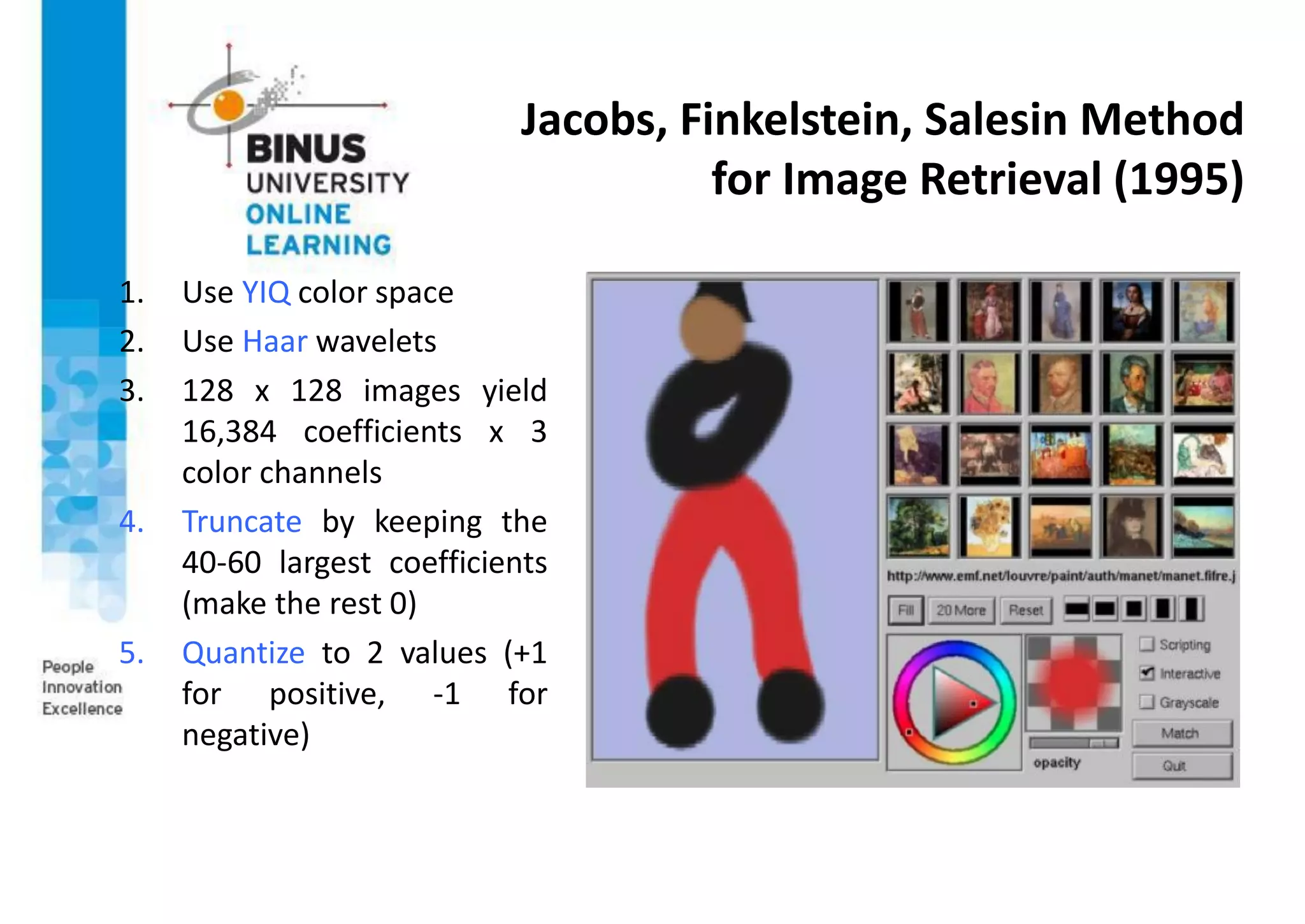

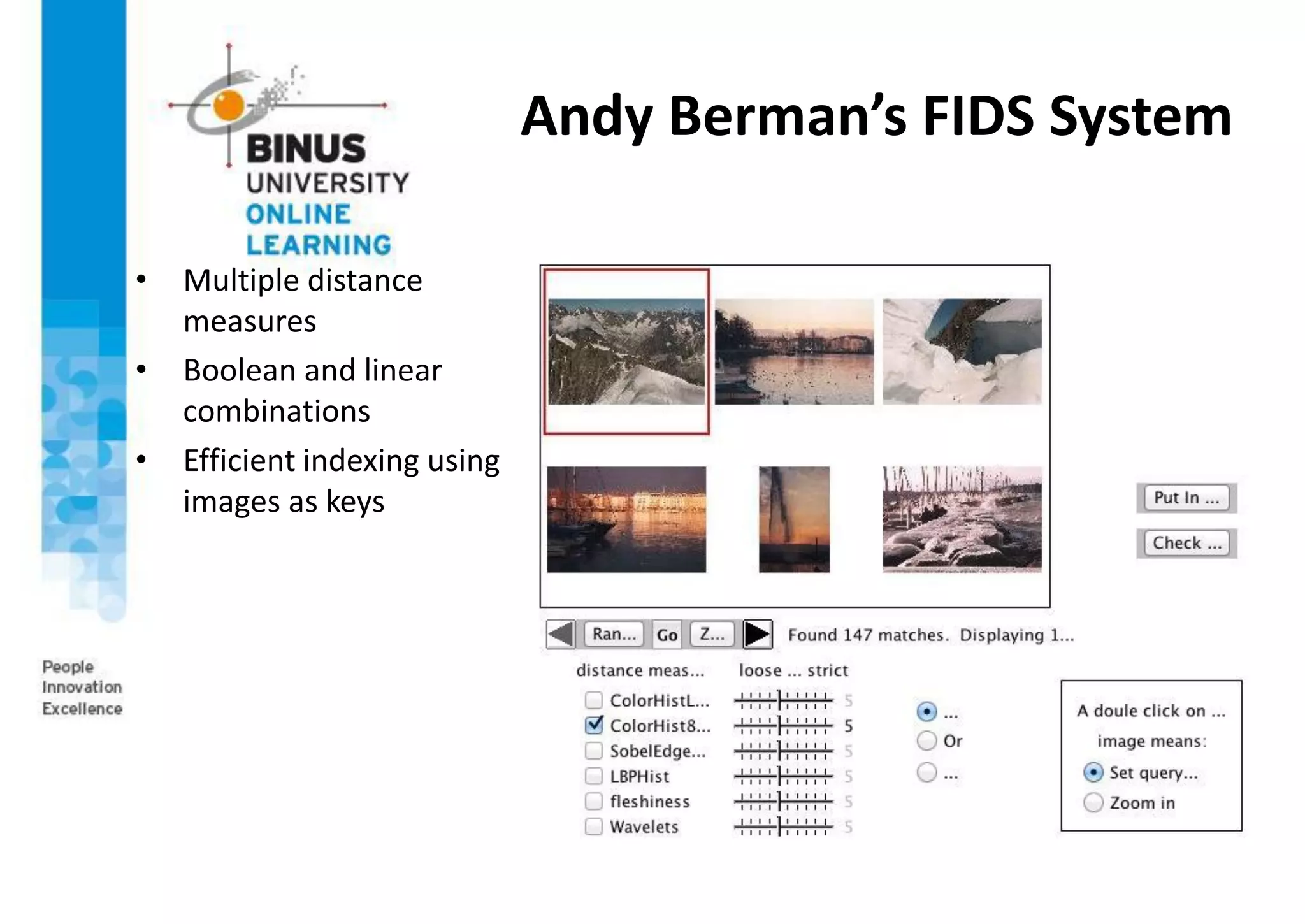

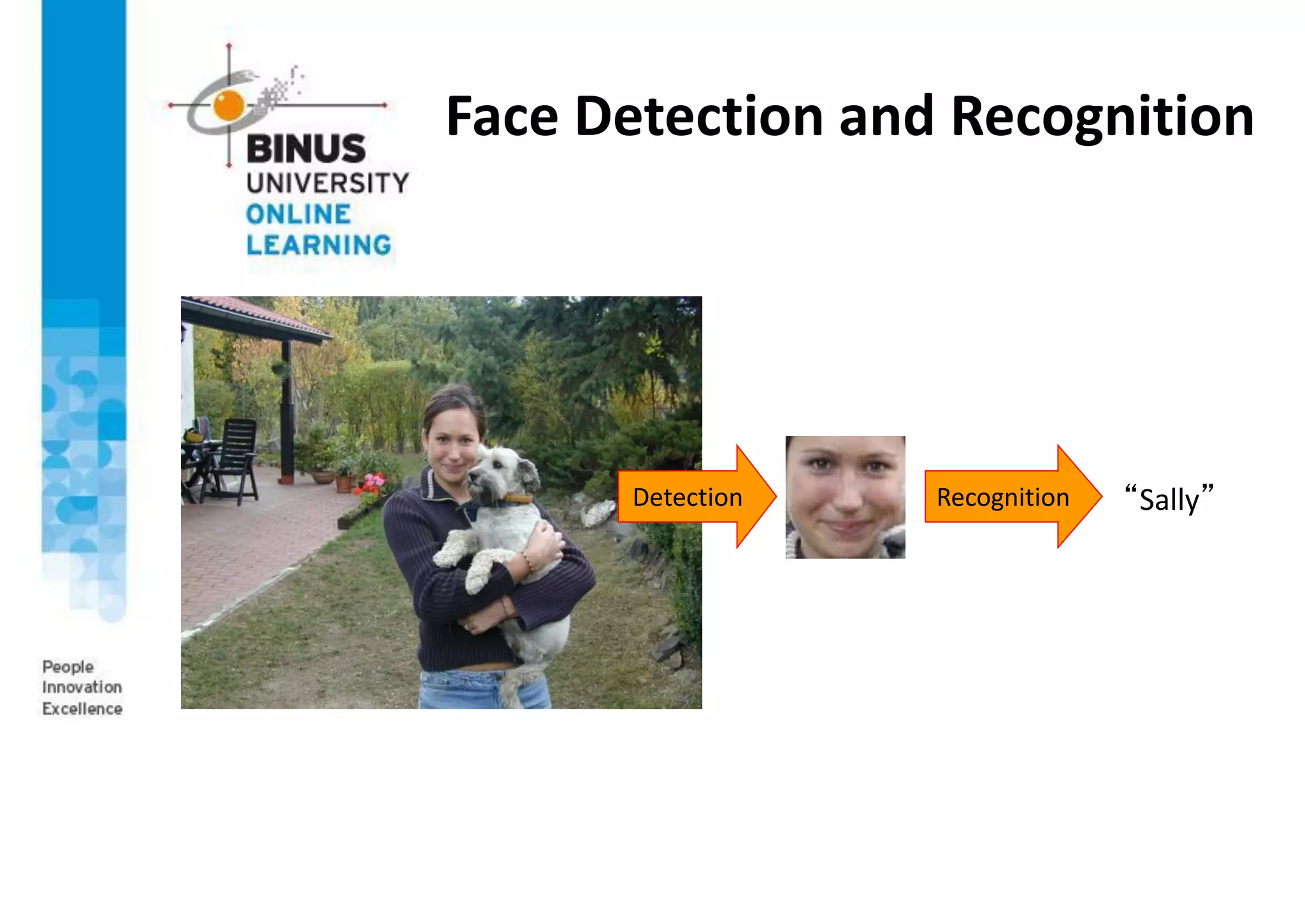

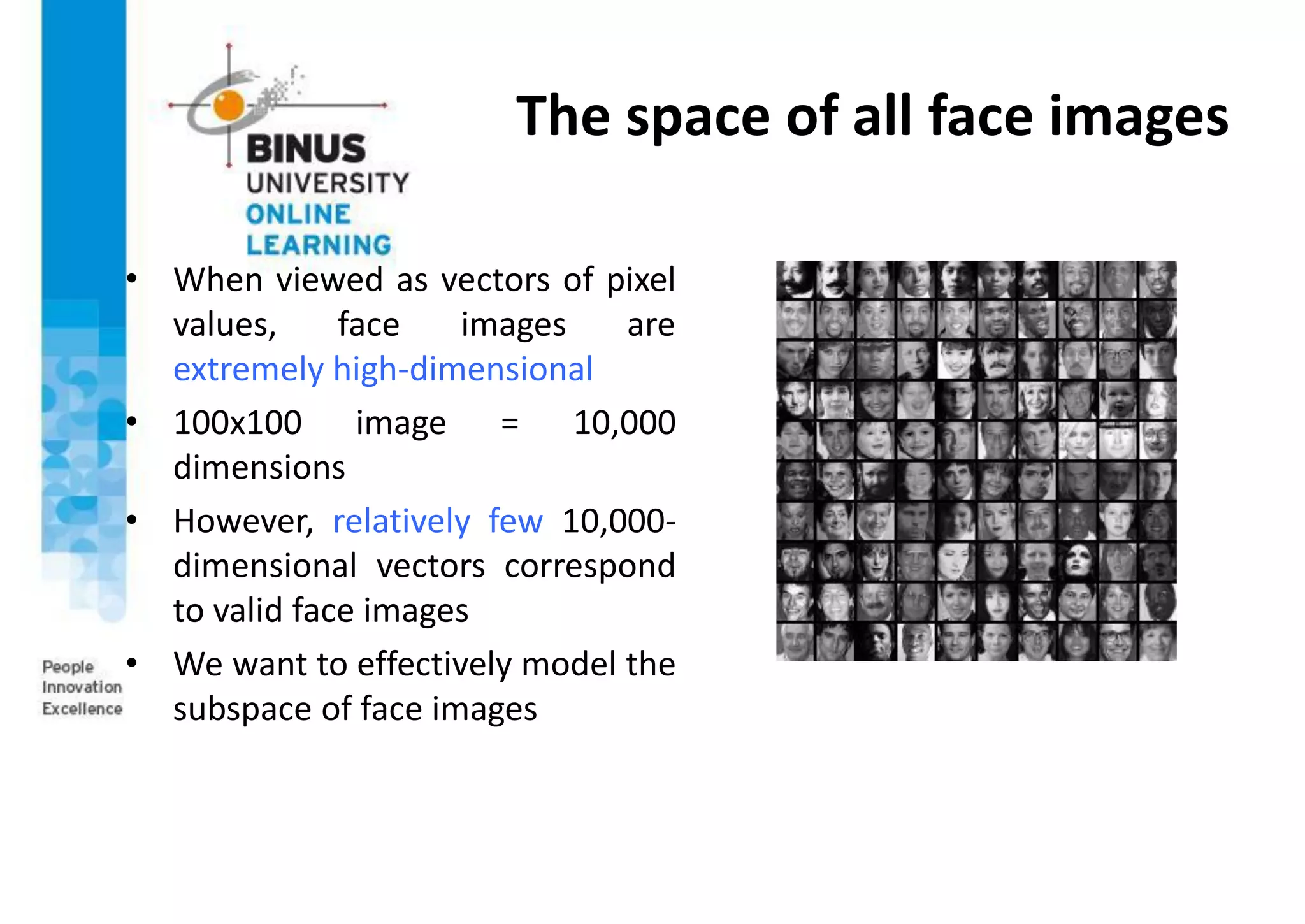

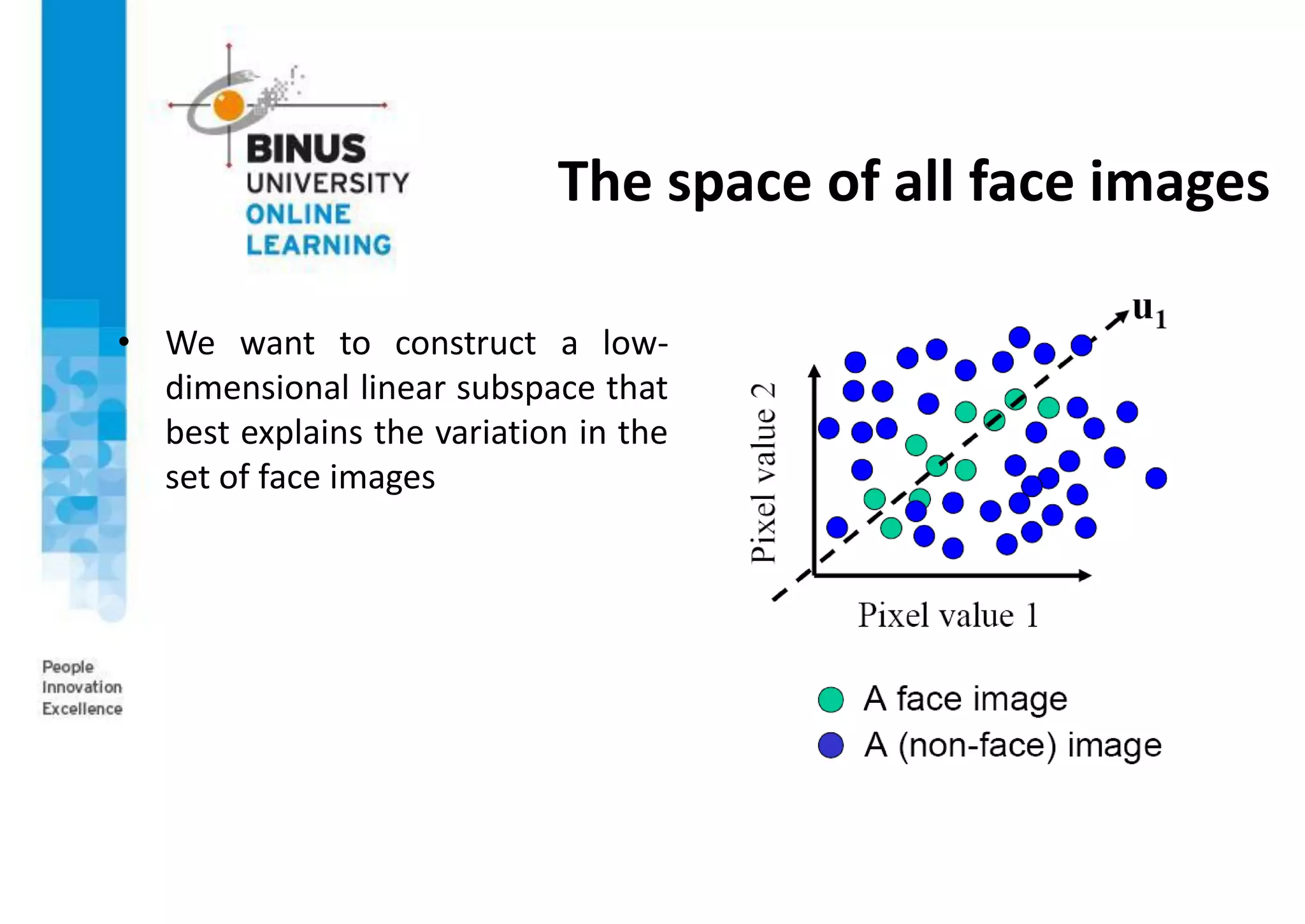

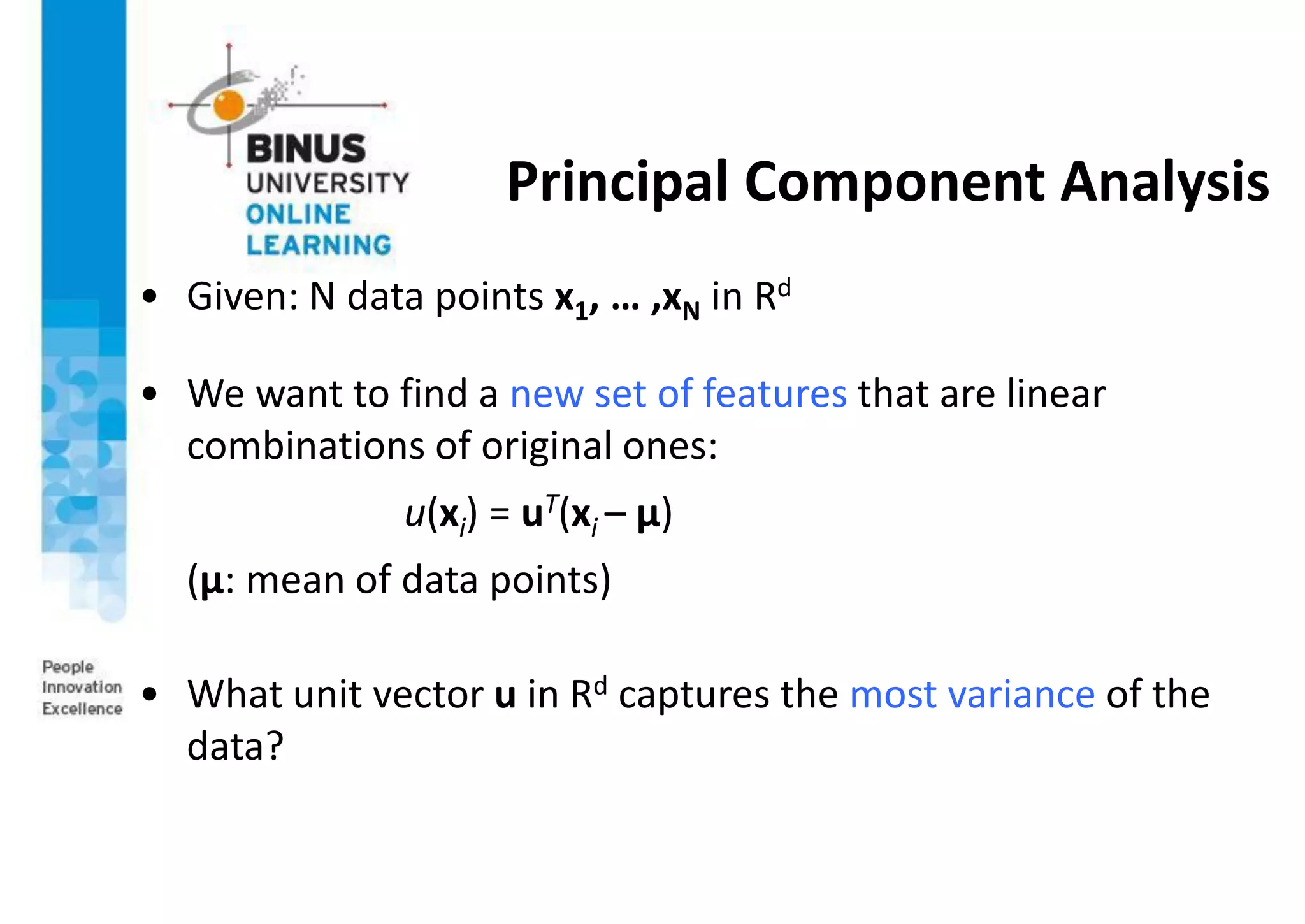

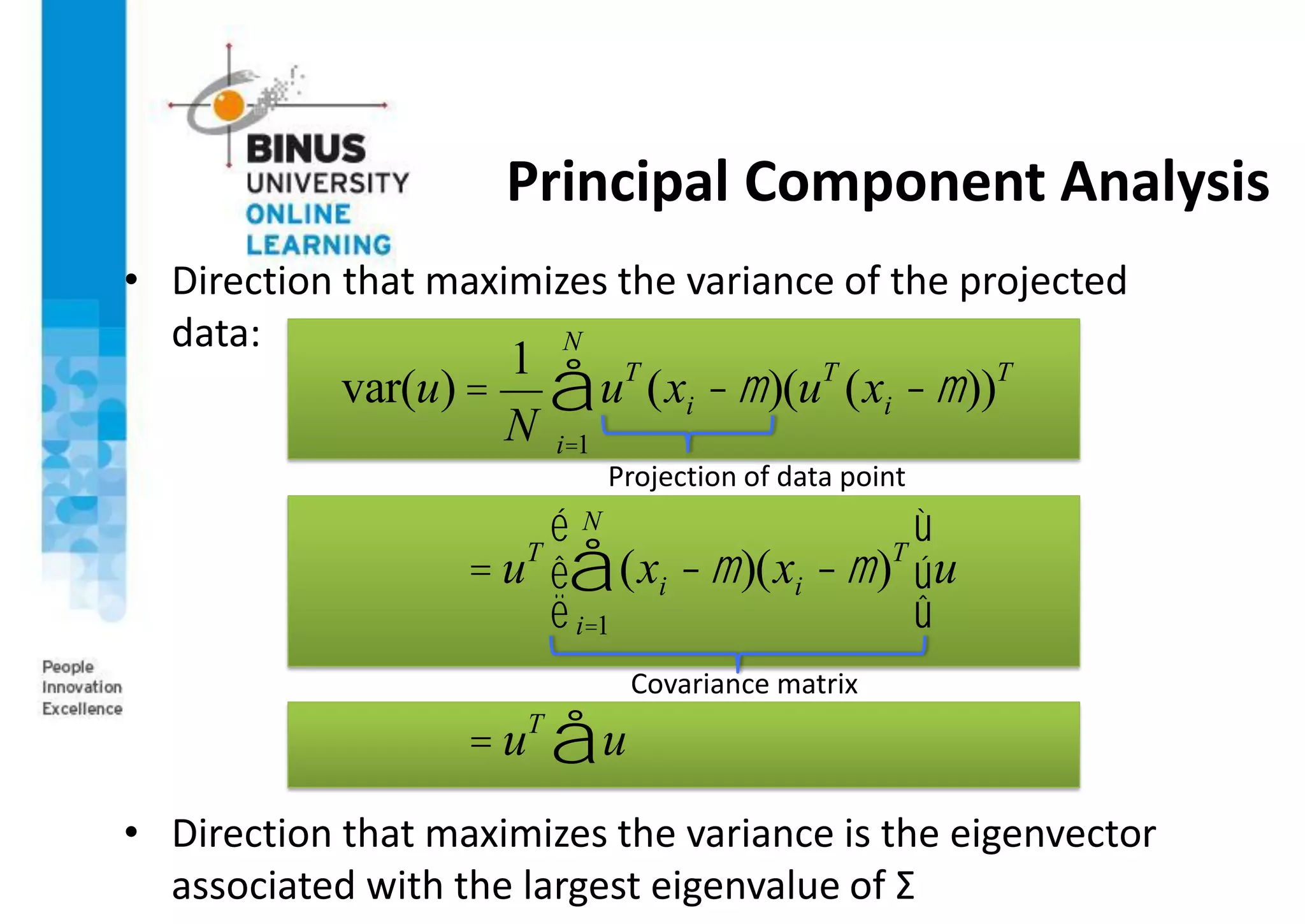

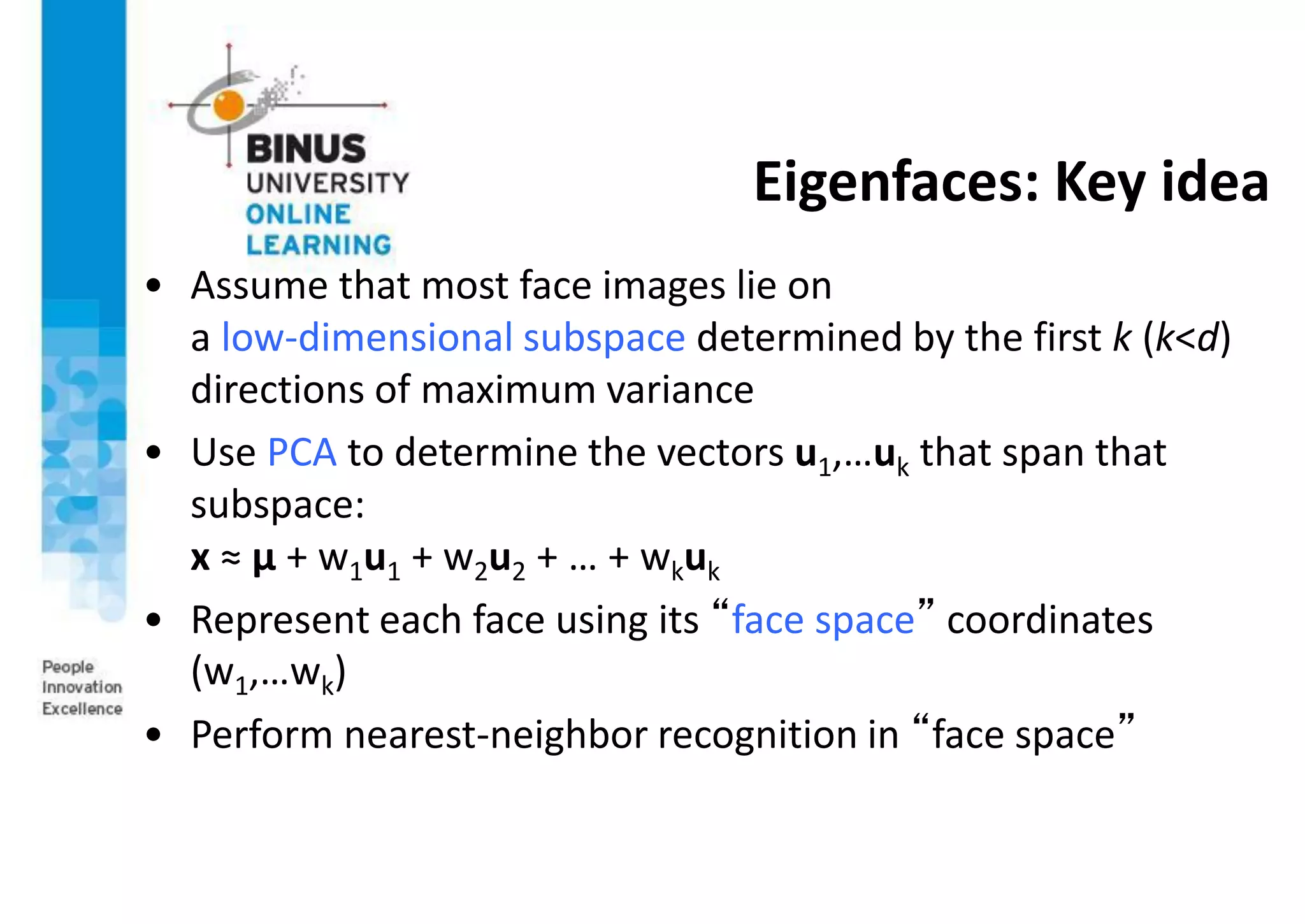

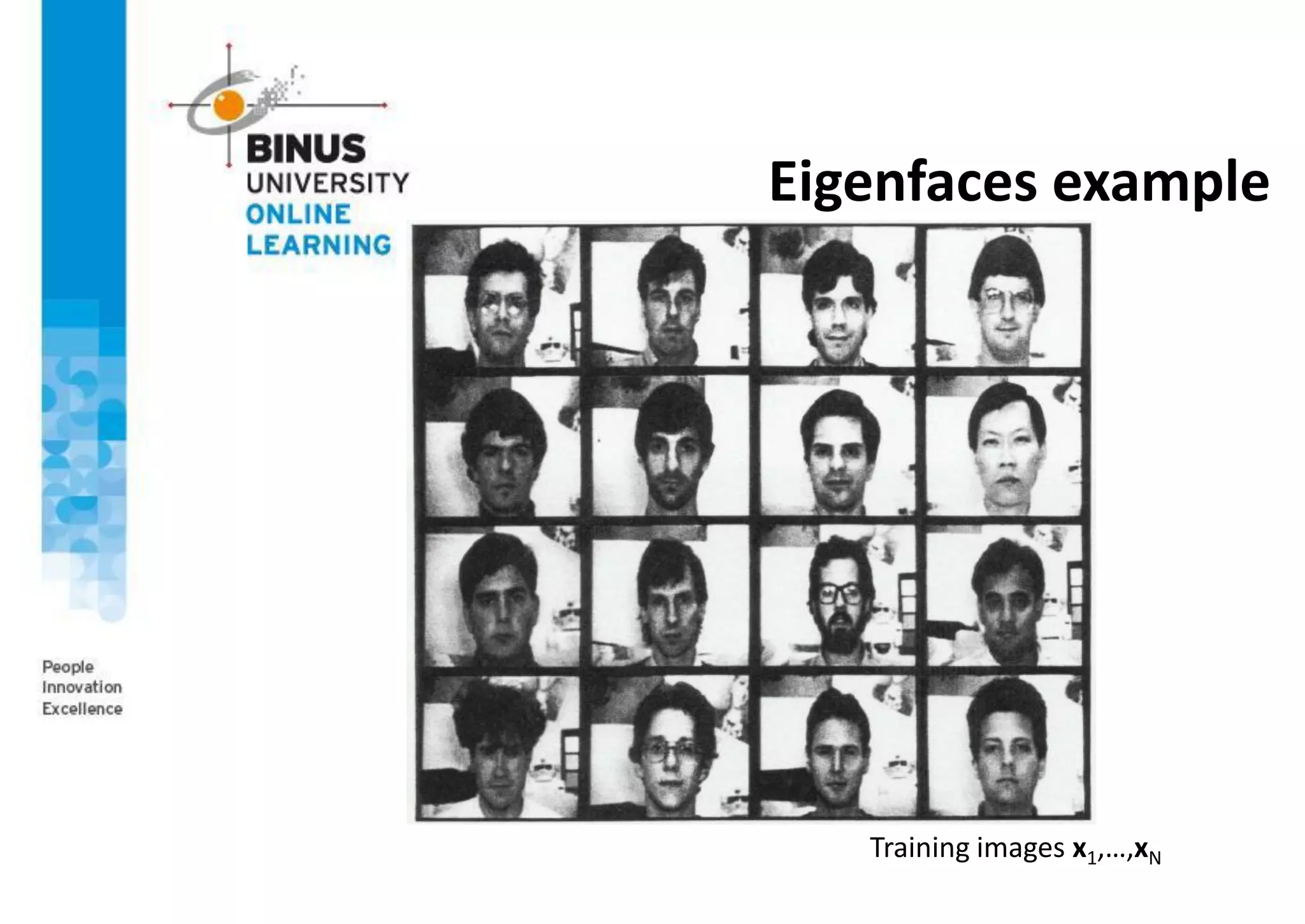

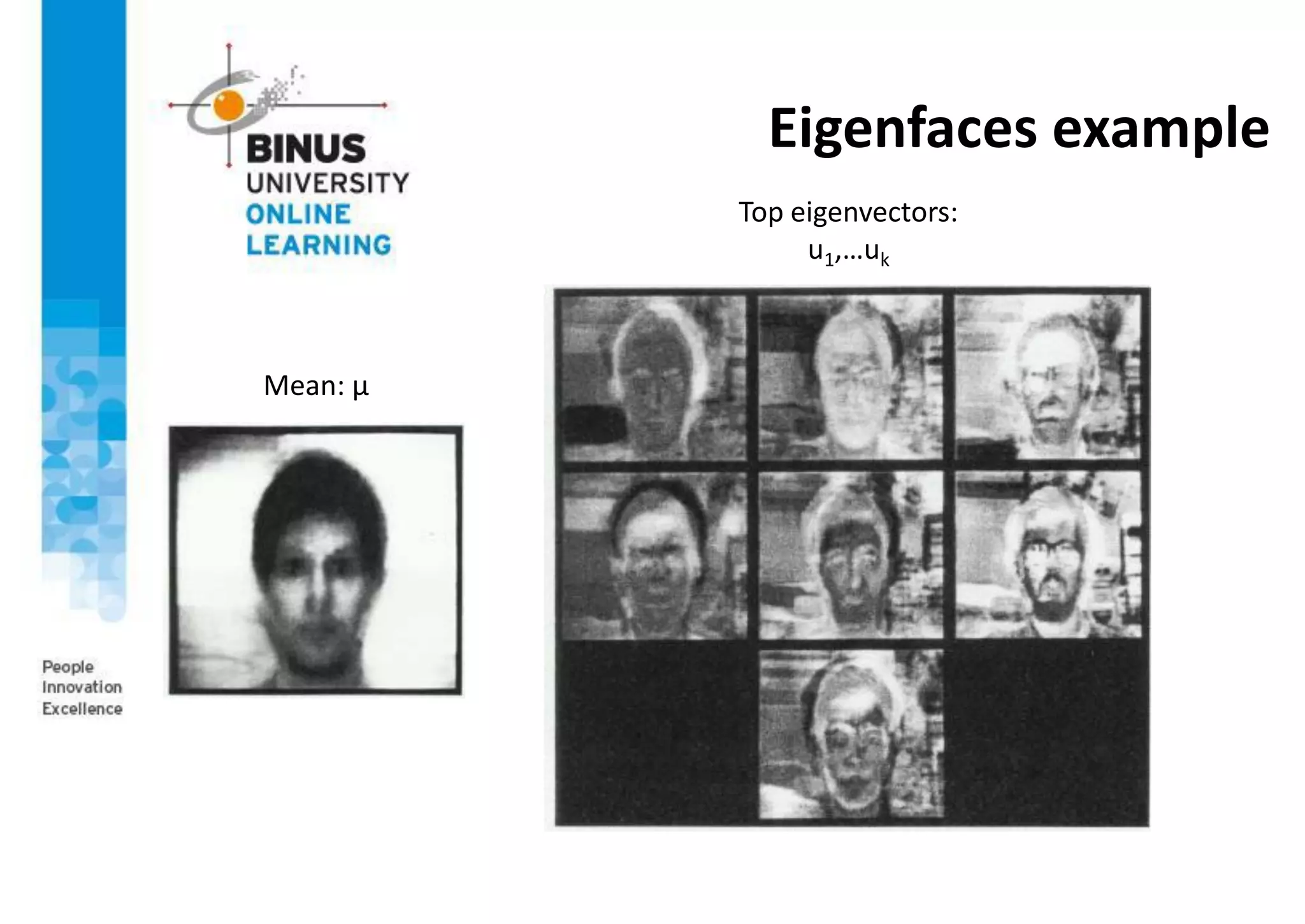

This document provides an overview of machine vision applications, including content-based image retrieval and face recognition. It explains how content-based image retrieval systems work: they extract features from each image, calculate distances between the query image and the images in a database, and return the most similar database images. Examples of content-based image retrieval systems and the features they use are described. The document also covers face detection and recognition techniques, including eigenfaces, in which each face is represented as a point in a lower-dimensional "face space".
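The retrieval pipeline described above (extract features, compute distances, return the closest images) can be sketched as follows. This is a minimal sketch under assumptions that are not taken from the slides: the feature is a normalized joint RGB color histogram, the distance is the L1 (city-block) distance, and the function names `color_histogram` and `retrieve` are hypothetical. Real systems may use texture, shape, or learned features and other distance measures.

```python
import numpy as np


def color_histogram(image: np.ndarray, bins: int = 8) -> np.ndarray:
    """Hypothetical feature: normalized joint RGB histogram of an H x W x 3 uint8 image."""
    pixels = image.reshape(-1, 3).astype(np.int64)
    # Quantize each channel from [0, 255] into `bins` levels.
    q = pixels * bins // 256
    # Combine the three per-channel levels into a single bin index.
    idx = q[:, 0] * bins * bins + q[:, 1] * bins + q[:, 2]
    hist = np.bincount(idx, minlength=bins ** 3).astype(np.float64)
    # Normalize so images of different sizes are comparable.
    return hist / hist.sum()


def retrieve(query: np.ndarray, database: list, k: int = 3, bins: int = 8):
    """Return (indices, distances) of the k database images closest to the query."""
    q_feat = color_histogram(query, bins)
    feats = np.stack([color_histogram(img, bins) for img in database])
    # L1 distance between the query histogram and every database histogram.
    dists = np.abs(feats - q_feat).sum(axis=1)
    # Rank by distance; a stable sort keeps database order for ties.
    order = np.argsort(dists, kind="stable")
    return order[:k], dists[order[:k]]
```

Ranking by sorting every distance is a brute-force choice that is fine for small collections; large databases typically use an index structure instead of a full scan.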

![Eigenfaces example](https://image.slidesharecdn.com/ppt-s12-machinevision-s2-210626032714/75/PPT-s12-machine-vision-s2-40-2048.jpg)

Face $x$ in “face space” coordinates:

$$
x \;\mapsto\; \left[u_1^\top (x - \mu), \ldots, u_k^\top (x - \mu)\right] = [w_1, \ldots, w_k]
$$

Reconstruction:

$$
\hat{x} = \mu + w_1 u_1 + w_2 u_2 + w_3 u_3 + w_4 u_4 + \cdots
$$
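The projection $w_i = u_i^\top (x - \mu)$ and the reconstruction $\hat{x} = \mu + \sum_i w_i u_i$ can be sketched in NumPy. This sketch assumes each training face is flattened into one row of an N × D matrix and obtains the eigenfaces $u_1, \ldots, u_k$ from an SVD of the mean-centered data, which gives the same directions as the top eigenvectors of the sample covariance. The function names `fit_eigenfaces`, `project`, and `reconstruct` are hypothetical, not from the slides.

```python
import numpy as np


def fit_eigenfaces(faces: np.ndarray, k: int):
    """faces: N x D matrix, one flattened face per row. Returns (mu, U) with U of shape D x k."""
    mu = faces.mean(axis=0)                 # mean face
    centered = faces - mu
    # Rows of vt are orthonormal principal directions, sorted by singular value.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    U = vt[:k].T                            # columns are the eigenfaces u_1..u_k
    return mu, U


def project(x: np.ndarray, mu: np.ndarray, U: np.ndarray) -> np.ndarray:
    """Face-space coordinates: w_i = u_i^T (x - mu)."""
    return U.T @ (x - mu)


def reconstruct(w: np.ndarray, mu: np.ndarray, U: np.ndarray) -> np.ndarray:
    """Approximate face: x_hat = mu + sum_i w_i u_i."""
    return mu + U @ w
```

With fewer eigenfaces than the data's rank, `reconstruct(project(x, mu, U), mu, U)` is the closest point to `x` in the face subspace, so recognition can compare the short vectors `w` instead of full images.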