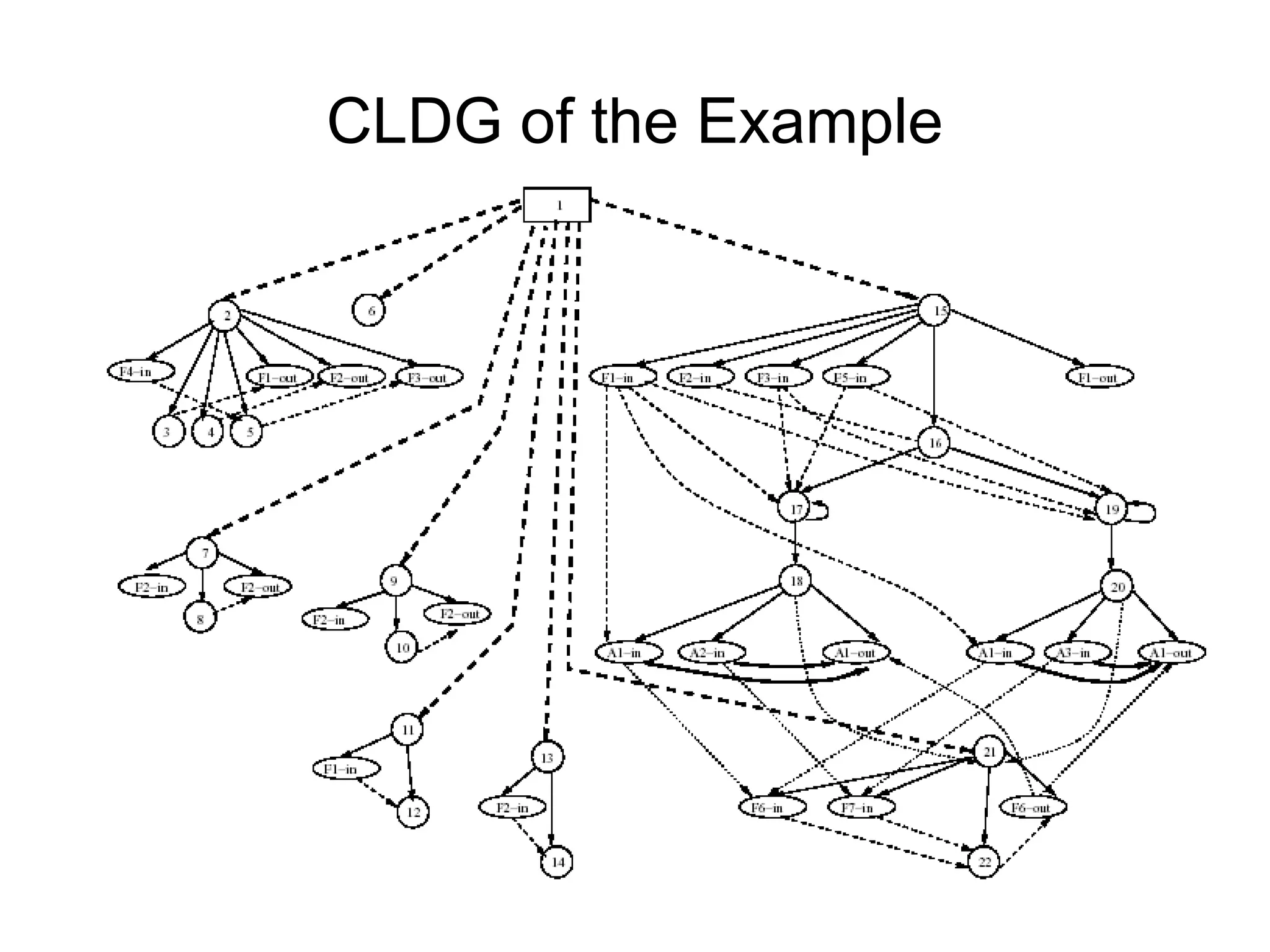

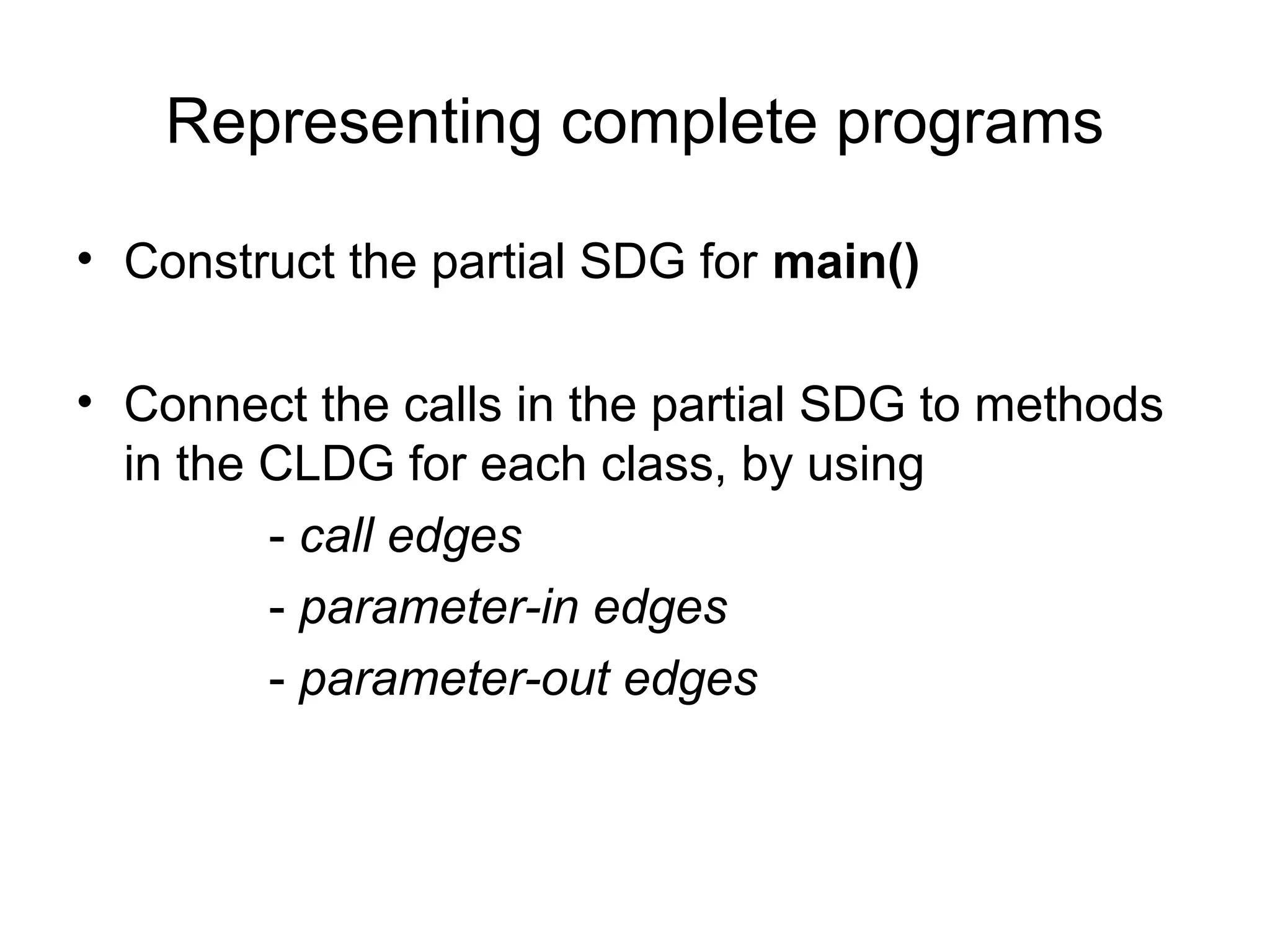

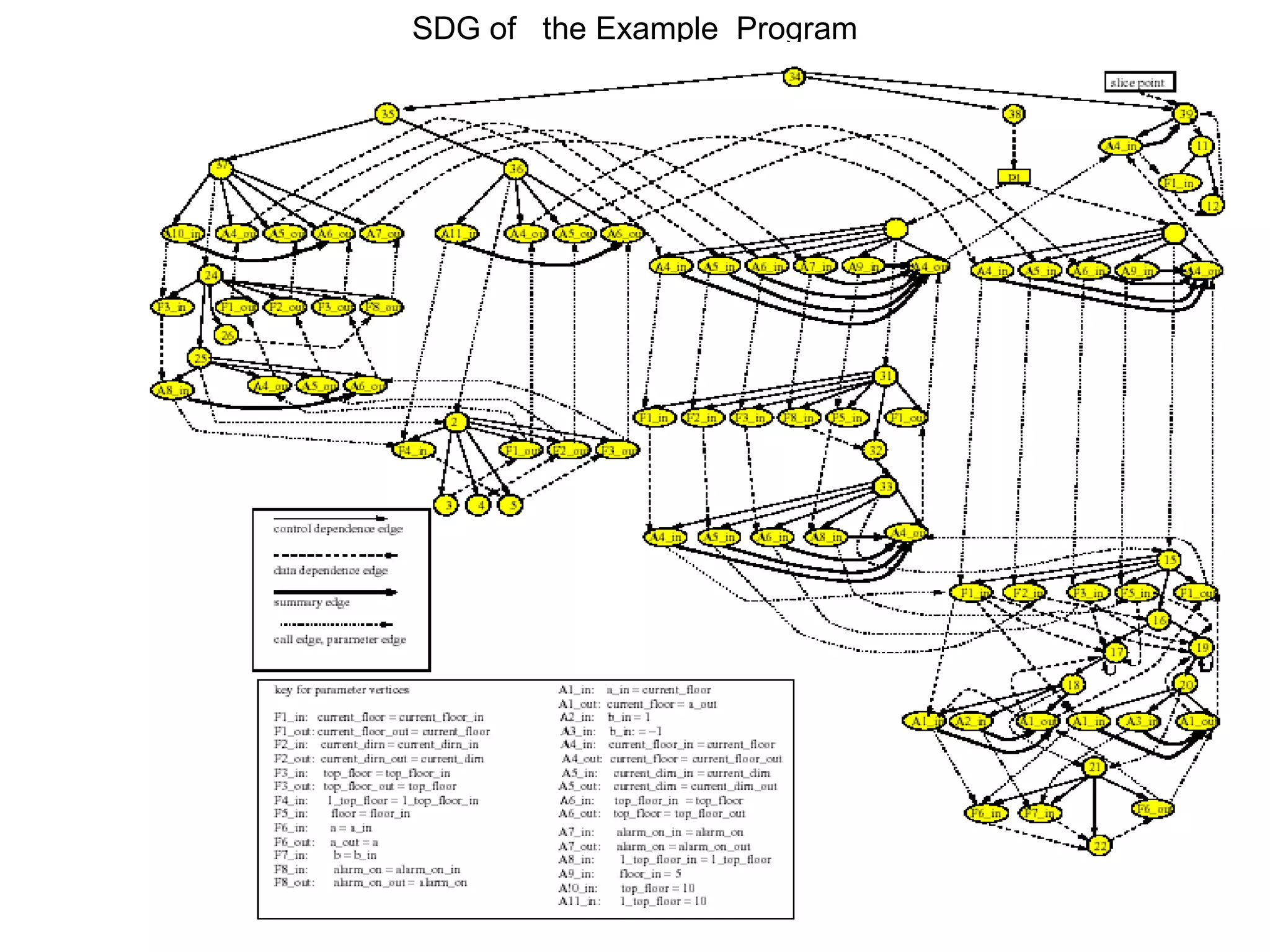

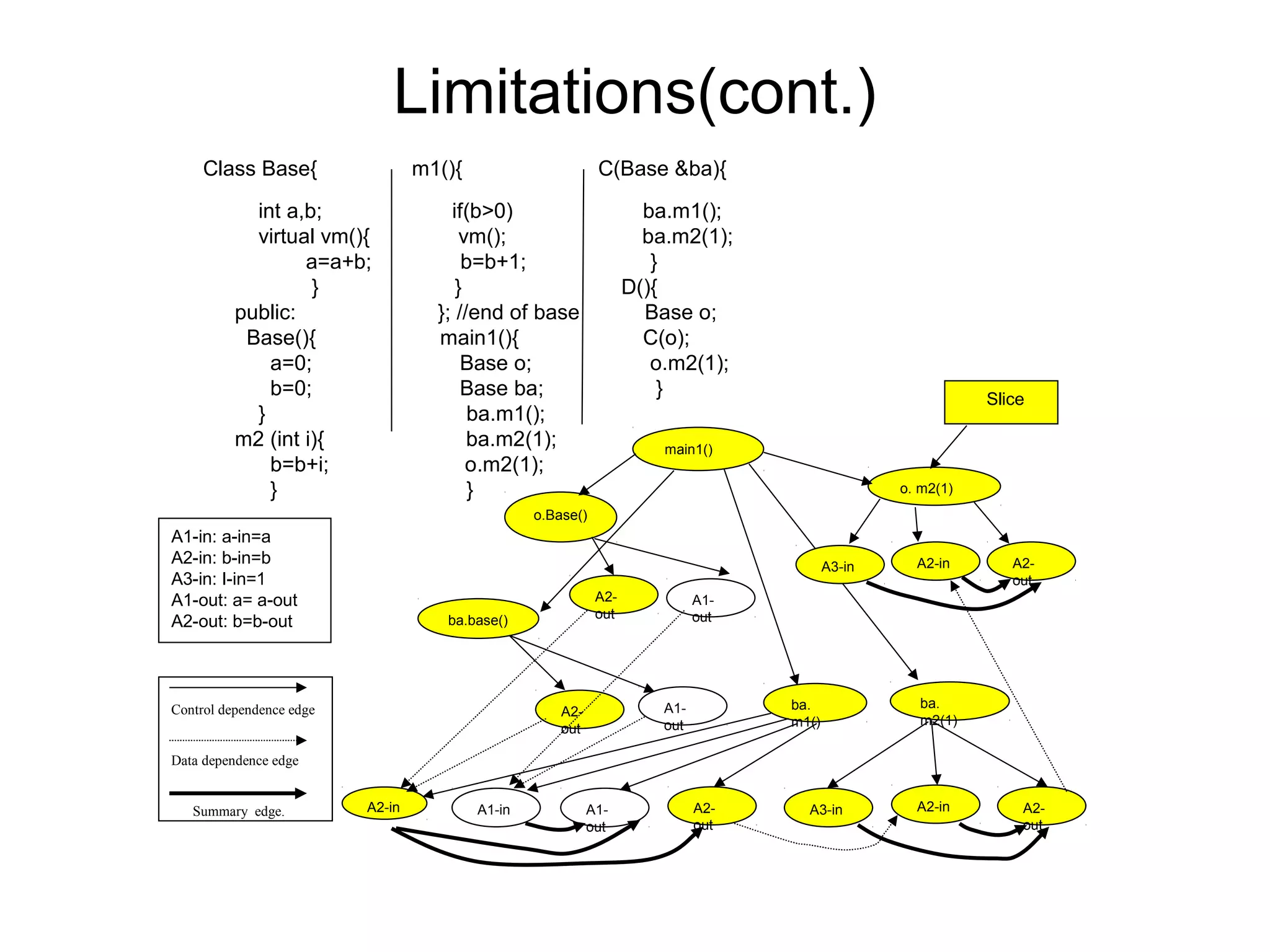

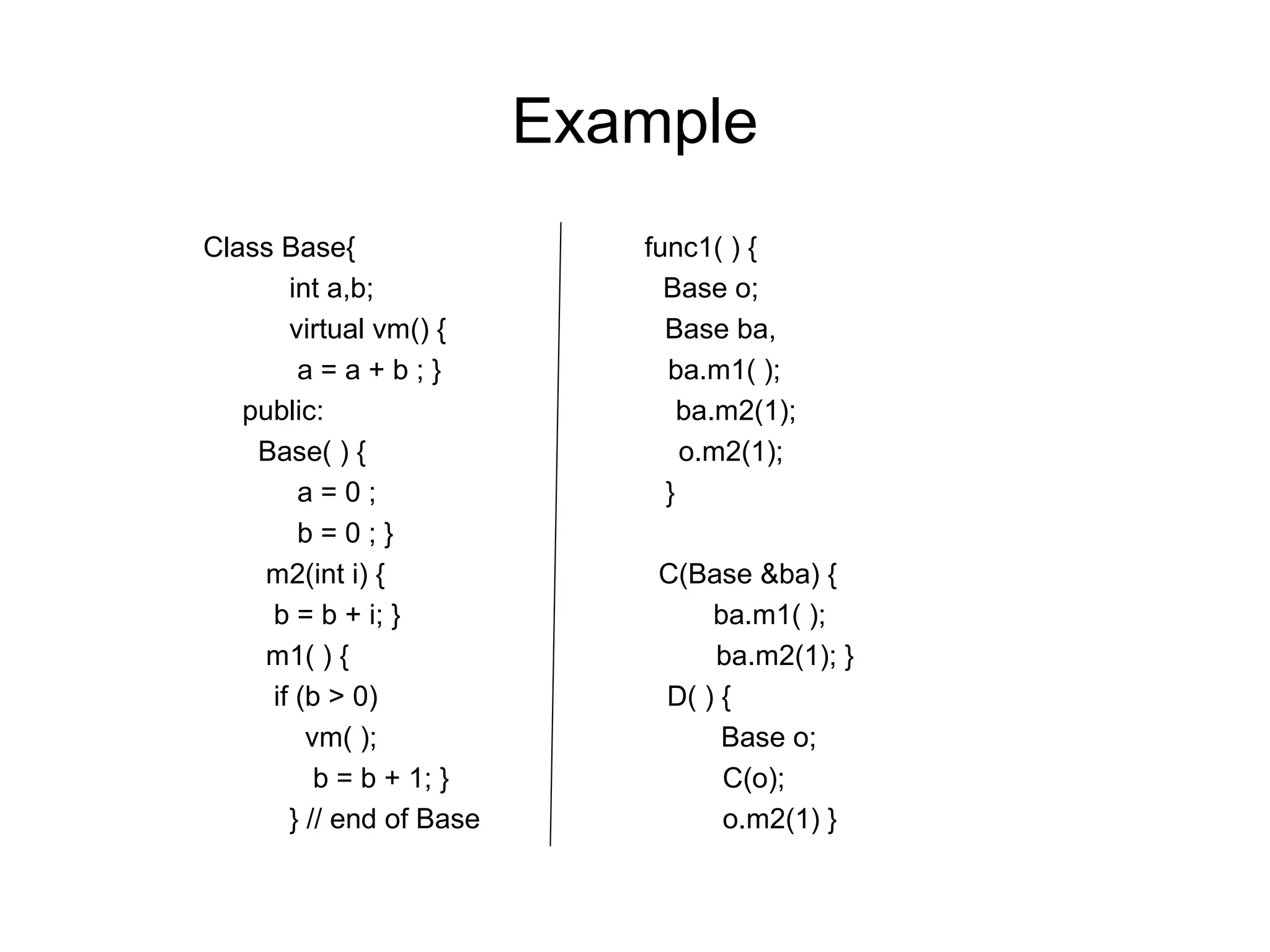

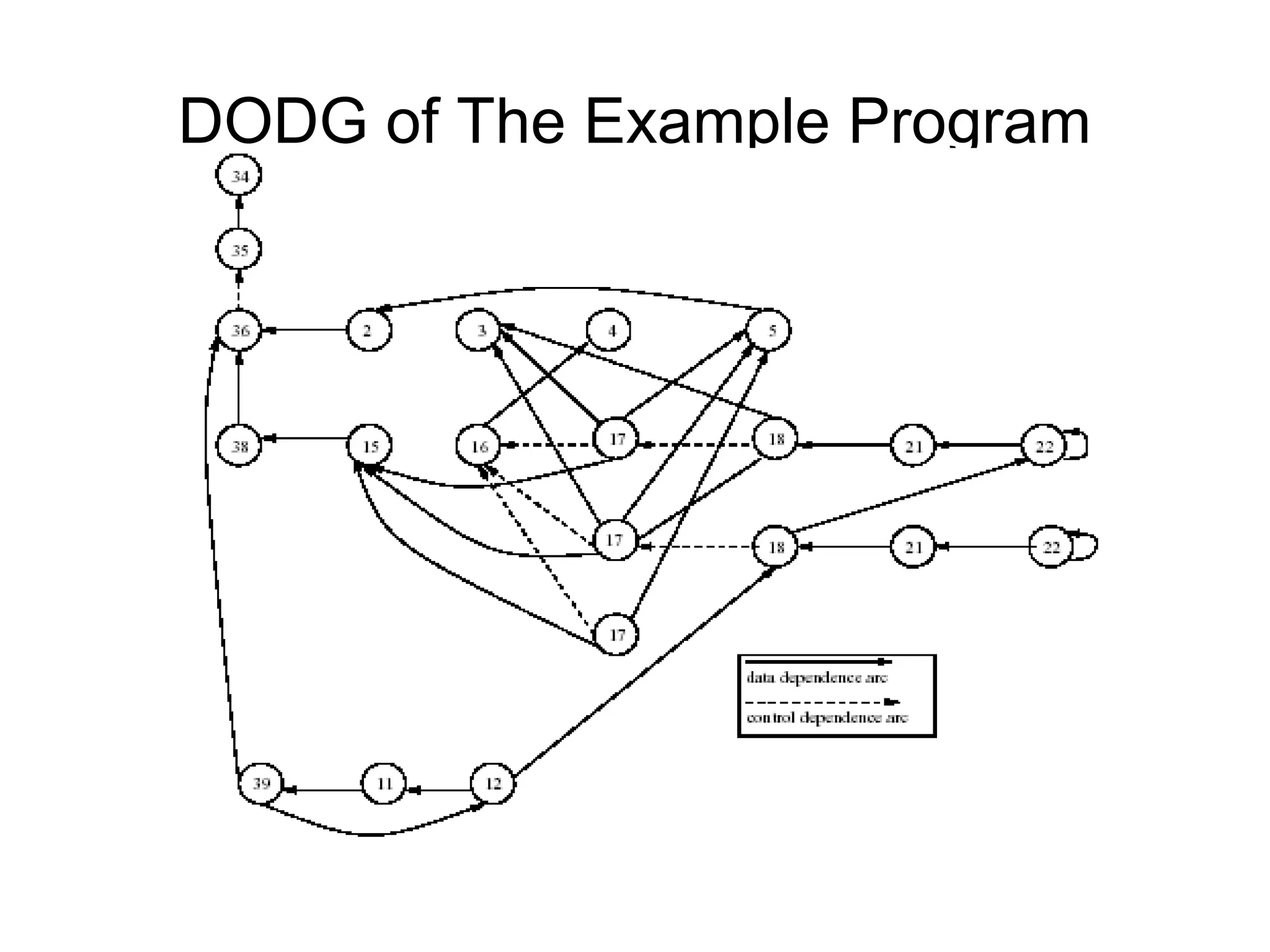

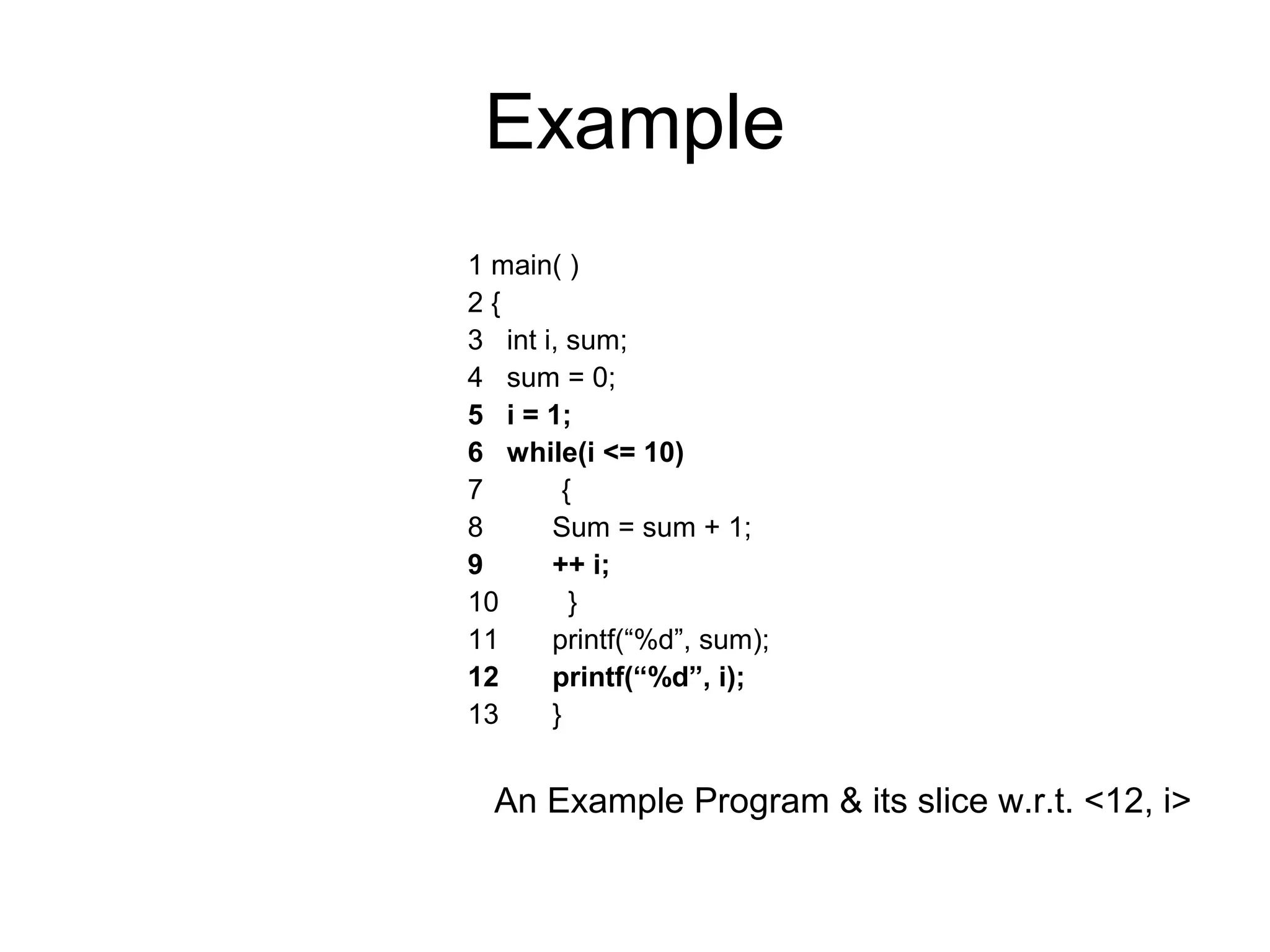

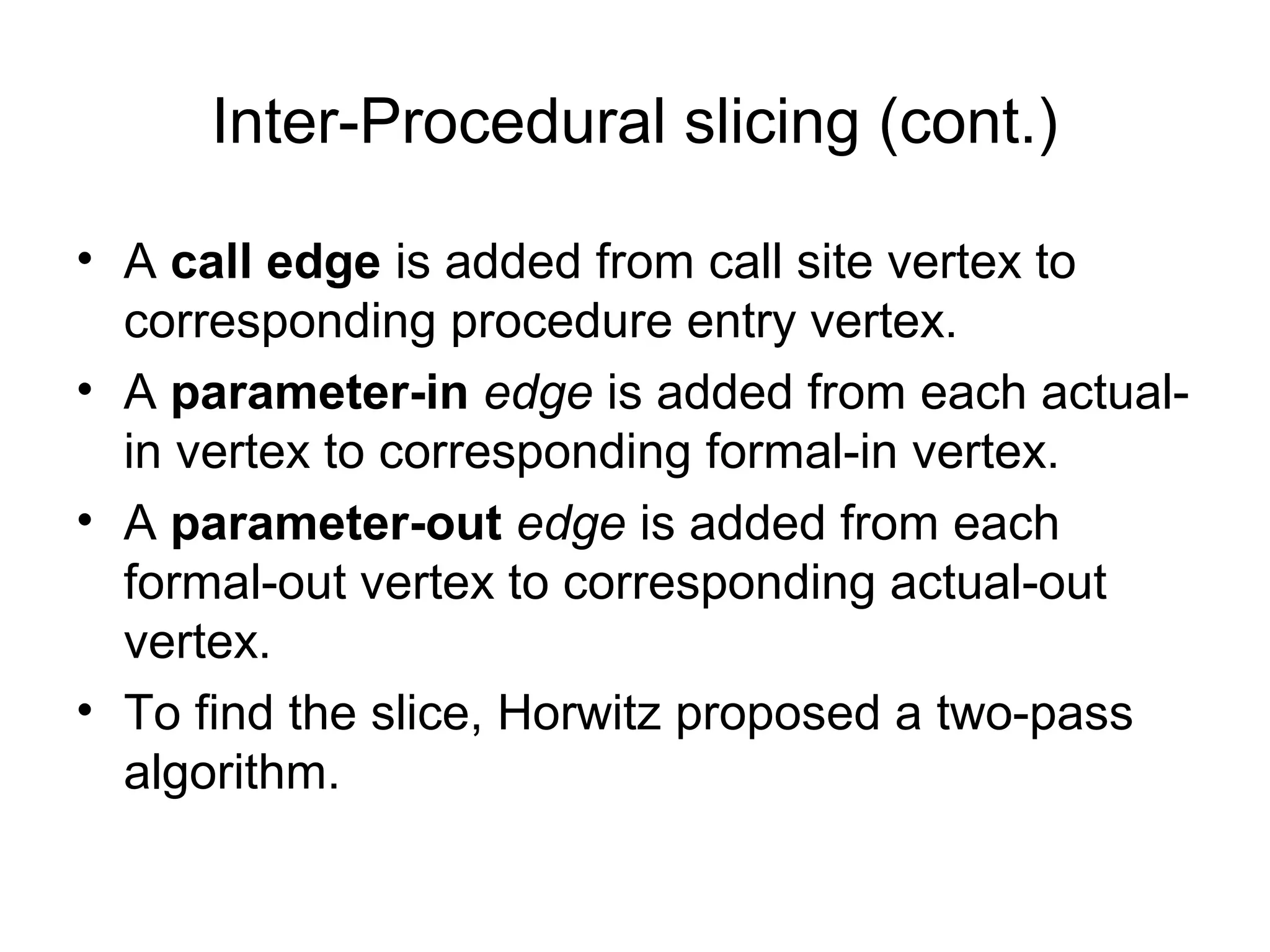

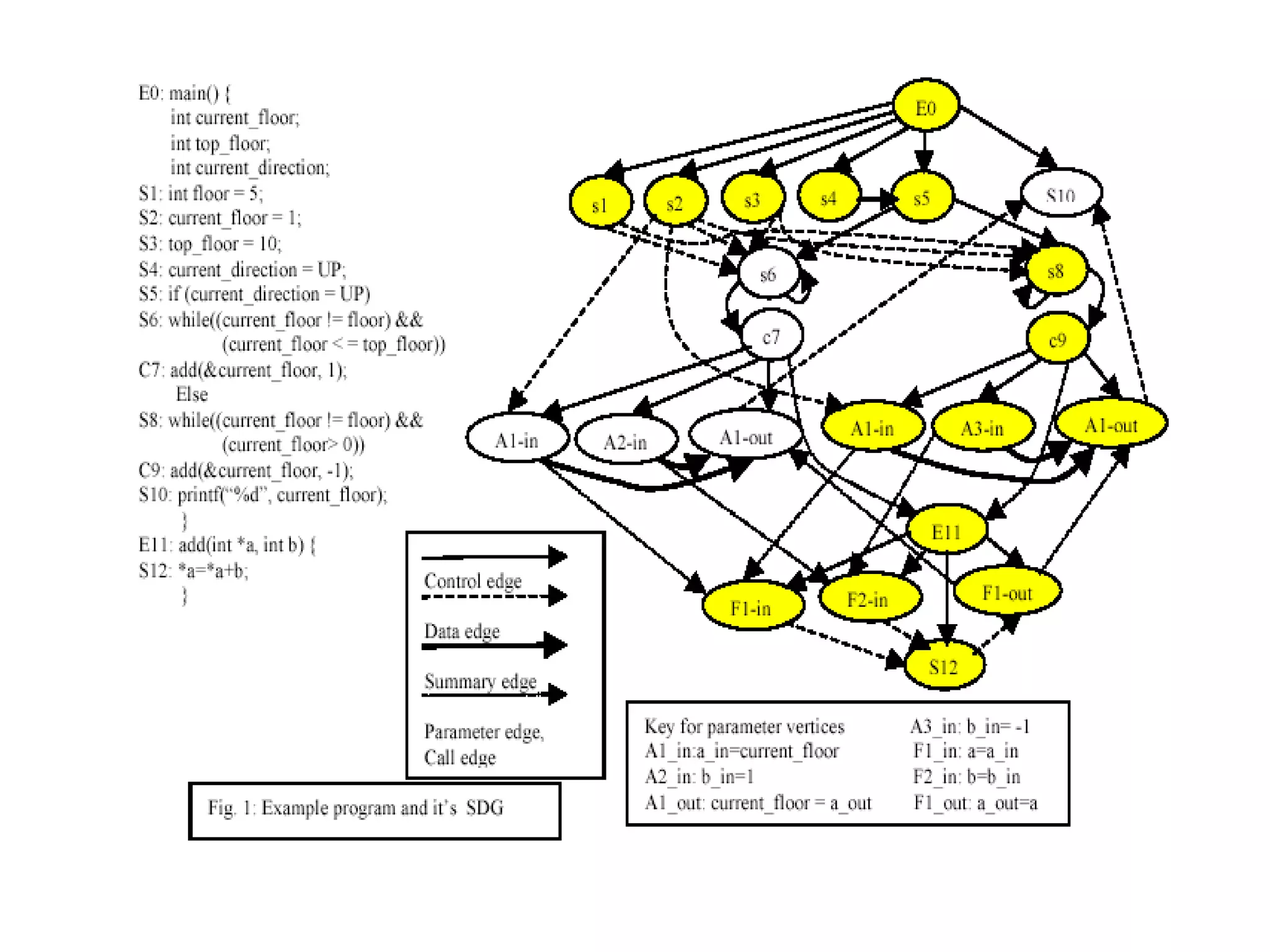

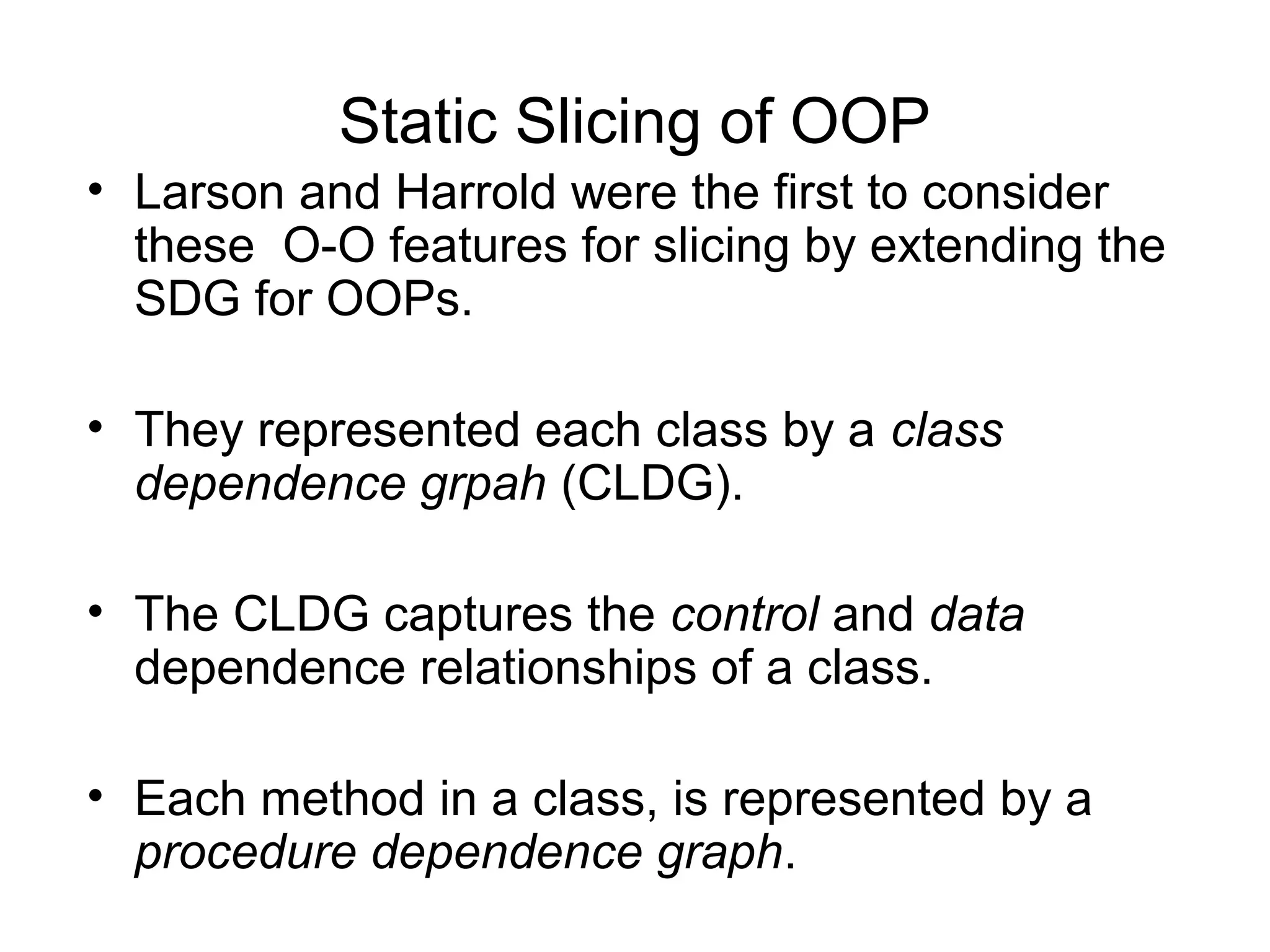

- The document summarizes techniques for slicing object-oriented programs. It discusses static and dynamic slicing, and limitations of previous approaches.

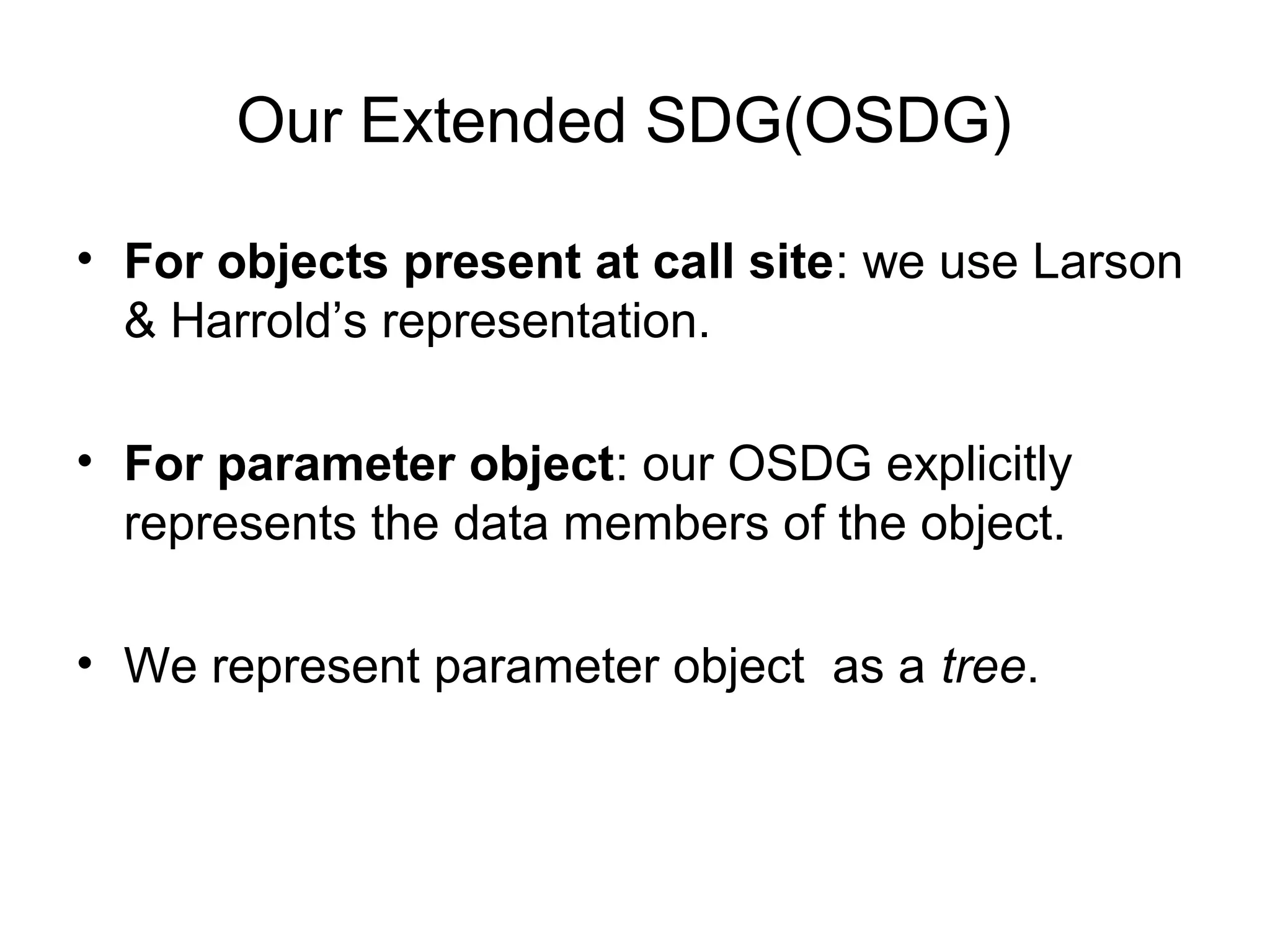

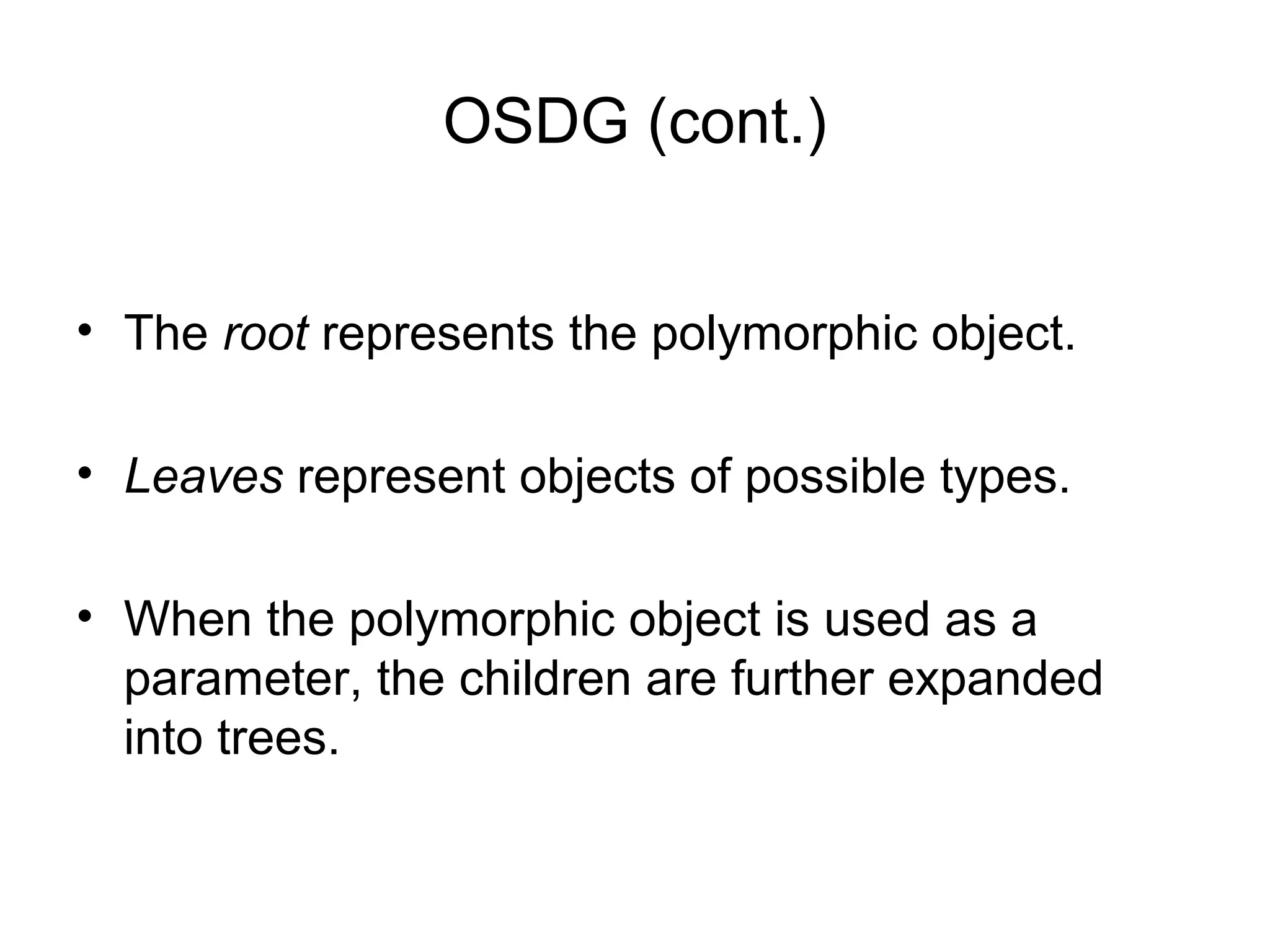

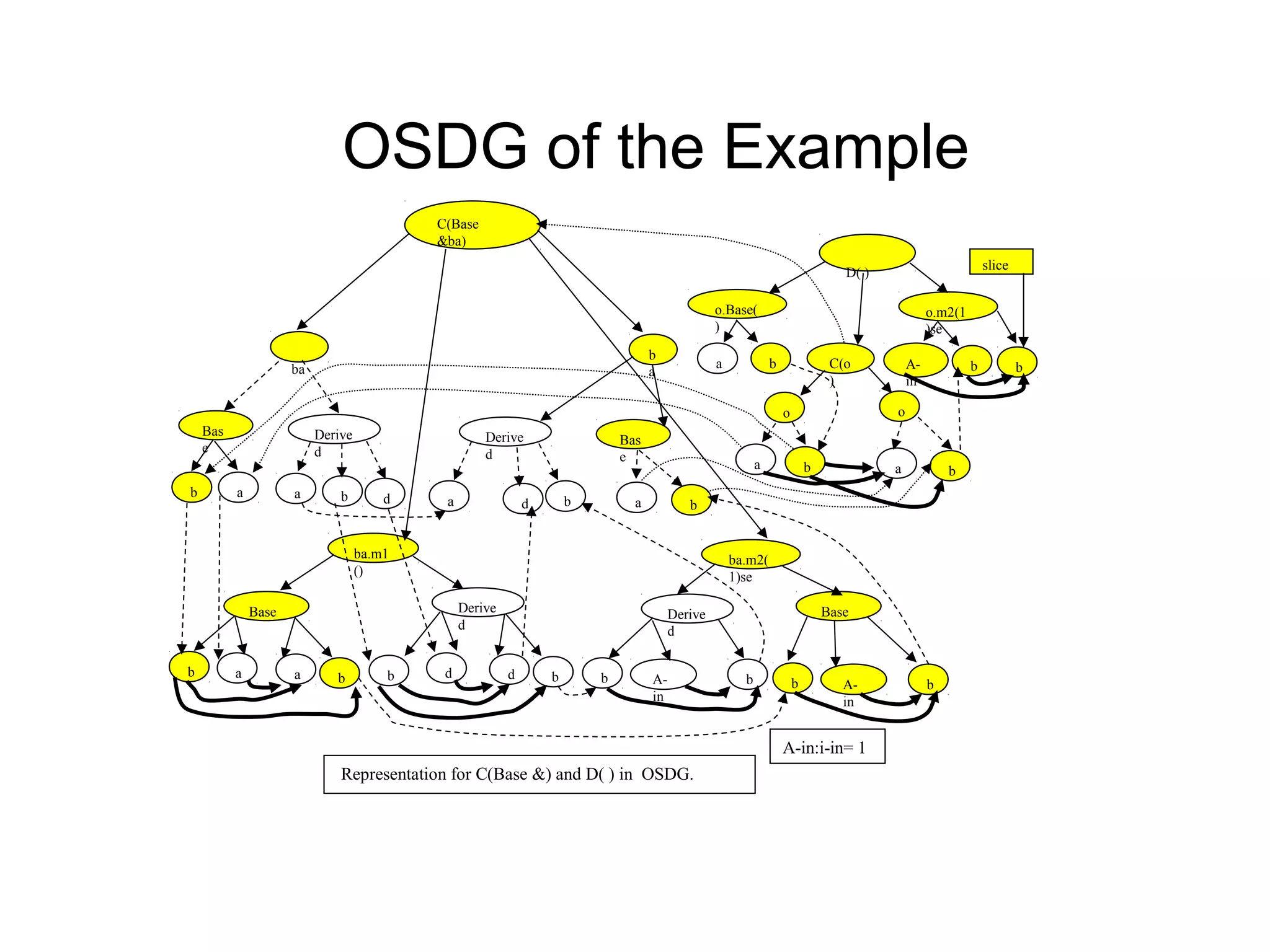

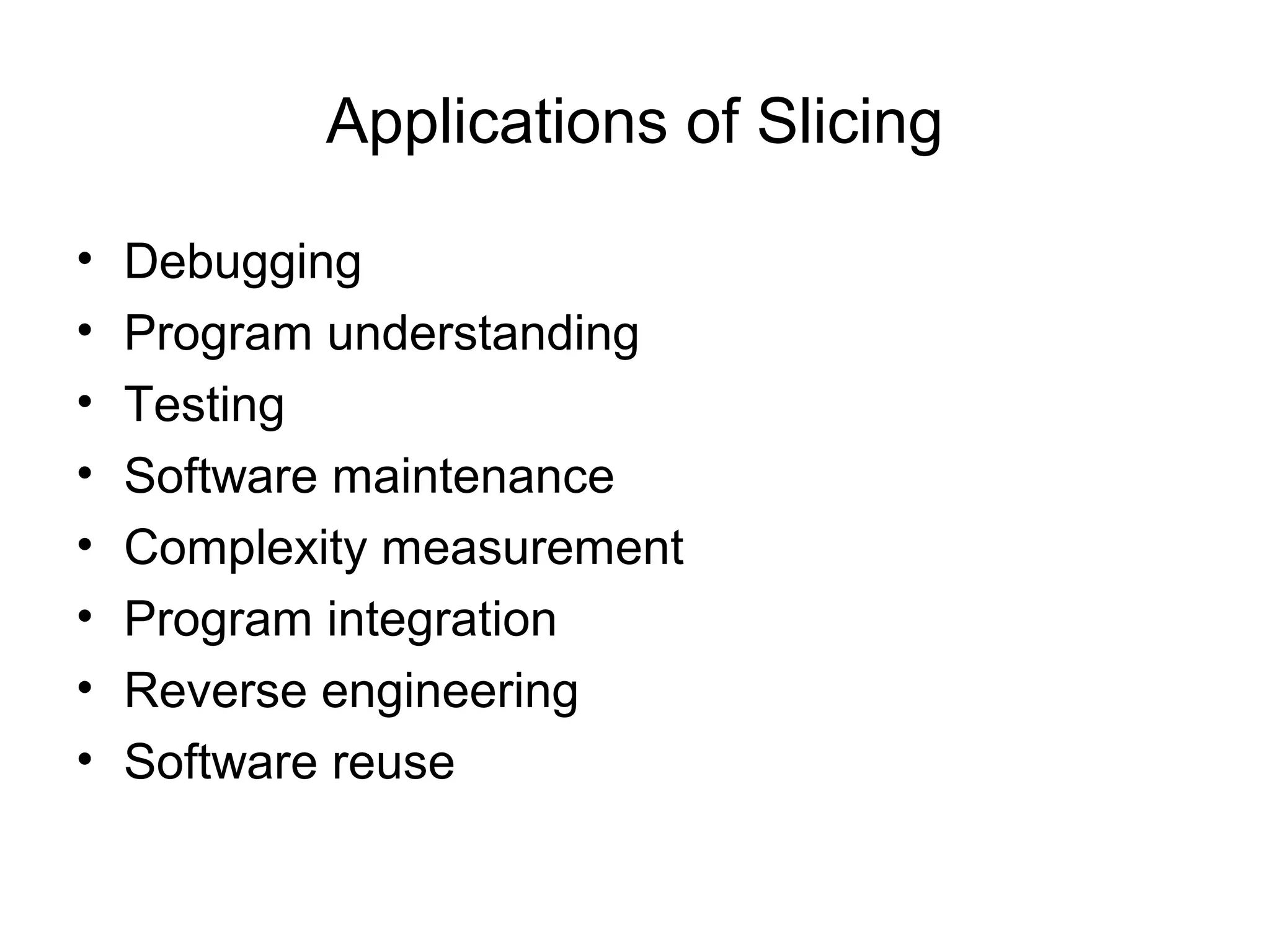

- It proposes a new intermediate representation called the Object-Oriented System Dependence Graph (OSDG) to more precisely capture dependencies in object-oriented programs. The OSDG explicitly represents data members of objects.

- An edge-marking algorithm is presented for efficiently performing dynamic slicing of object-oriented programs using the OSDG. This avoids recomputing the entire slice after each statement.
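The edge-marking idea in the last bullet can be illustrated with a minimal sketch. It is not the presenters' algorithm or the OSDG itself: the `DependenceGraph` type, its member names, and the use of plain integer statement numbers as nodes are all assumptions made for illustration. In an OSDG-style graph, the data members of objects would get nodes of their own; here they are folded into the statements that touch them. The sketch keeps one stored slice per node. When a statement executes, its outgoing edges are unmarked, the dependences that actually held are marked, and its slice is rebuilt from the stored slices of the nodes it now depends on, so nothing is recomputed from scratch.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <utility>

// Hypothetical, simplified dependence graph (not the OSDG from the slides).
// Nodes are statement numbers; an edge u -> v means "u may depend on v".
struct DependenceGraph {
    std::map<int, std::set<int>> edges;      // static (potential) dependences
    std::set<std::pair<int, int>> marked;    // dependences active in this run
    std::map<int, std::set<int>> slice;      // stored dynamic slice per node

    void add_edge(int from, int to) { edges[from].insert(to); }

    // Call when statement `node` executes. `actual` lists the statements
    // it really depended on during this particular execution.
    void on_execute(int node, const std::set<int>& actual) {
        // Unmark every outgoing edge left over from an earlier execution.
        for (int to : edges[node]) marked.erase(std::make_pair(node, to));

        std::set<int> updated;
        for (int to : actual) {
            // A dynamic dependence must already exist as a static edge.
            assert(edges[node].count(to) == 1);
            marked.insert(std::make_pair(node, to));
            updated.insert(to);
            // Reuse the slice already stored for `to`; no graph traversal.
            const std::set<int>& sub = slice[to];
            updated.insert(sub.begin(), sub.end());
        }
        slice[node] = std::move(updated);
    }
};

// Statements 35 to 39 of main() in the elevator example, for a run in which
// argv[1] is set, so statement 36 executes and statement 37 does not.
// The edges are an assumed, hand-derived approximation of the dependences.
std::set<int> elevator_demo_slice() {
    DependenceGraph g;
    g.add_edge(36, 35);  // 36 is control dependent on the test at 35
    g.add_edge(37, 35);  // 37 is control dependent on the test at 35
    g.add_edge(38, 36);  // the call uses e_ptr, defined at 36 ...
    g.add_edge(38, 37);  // ... or at 37
    g.add_edge(39, 38);  // which_floor() reads state changed by go()
    g.add_edge(39, 36);
    g.add_edge(39, 37);

    g.on_execute(35, {});
    g.on_execute(36, {35});
    g.on_execute(38, {36});
    g.on_execute(39, {38, 36});
    return g.slice[39];
}
```

In this sketch, `elevator_demo_slice()` yields the statements 35, 36 and 38 for the output statement 39. Statement 37 is left out because it never executed, whereas a static slice would have to keep both branches. The stored slice excludes the criterion statement itself, which is a design choice of this sketch.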

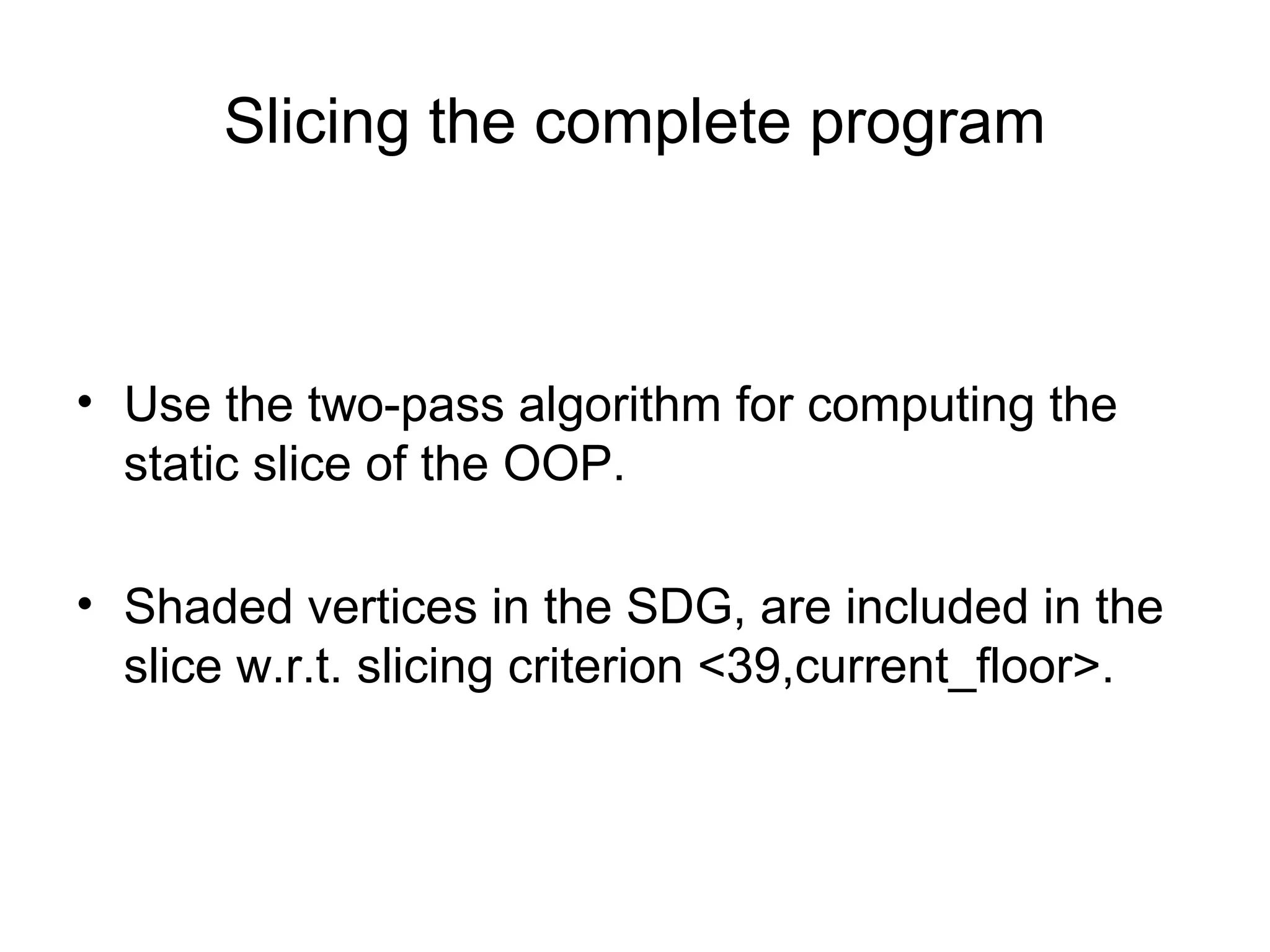

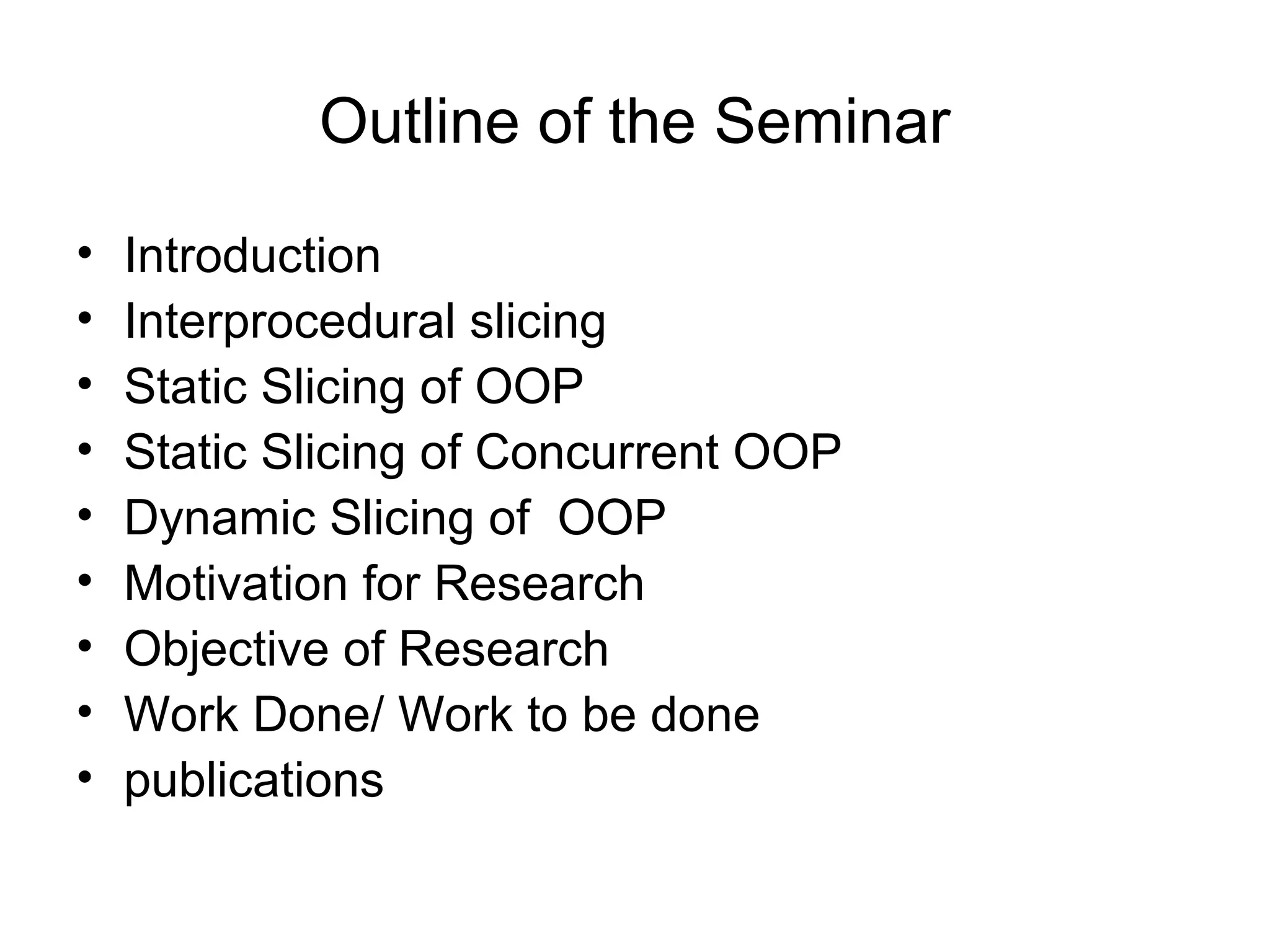

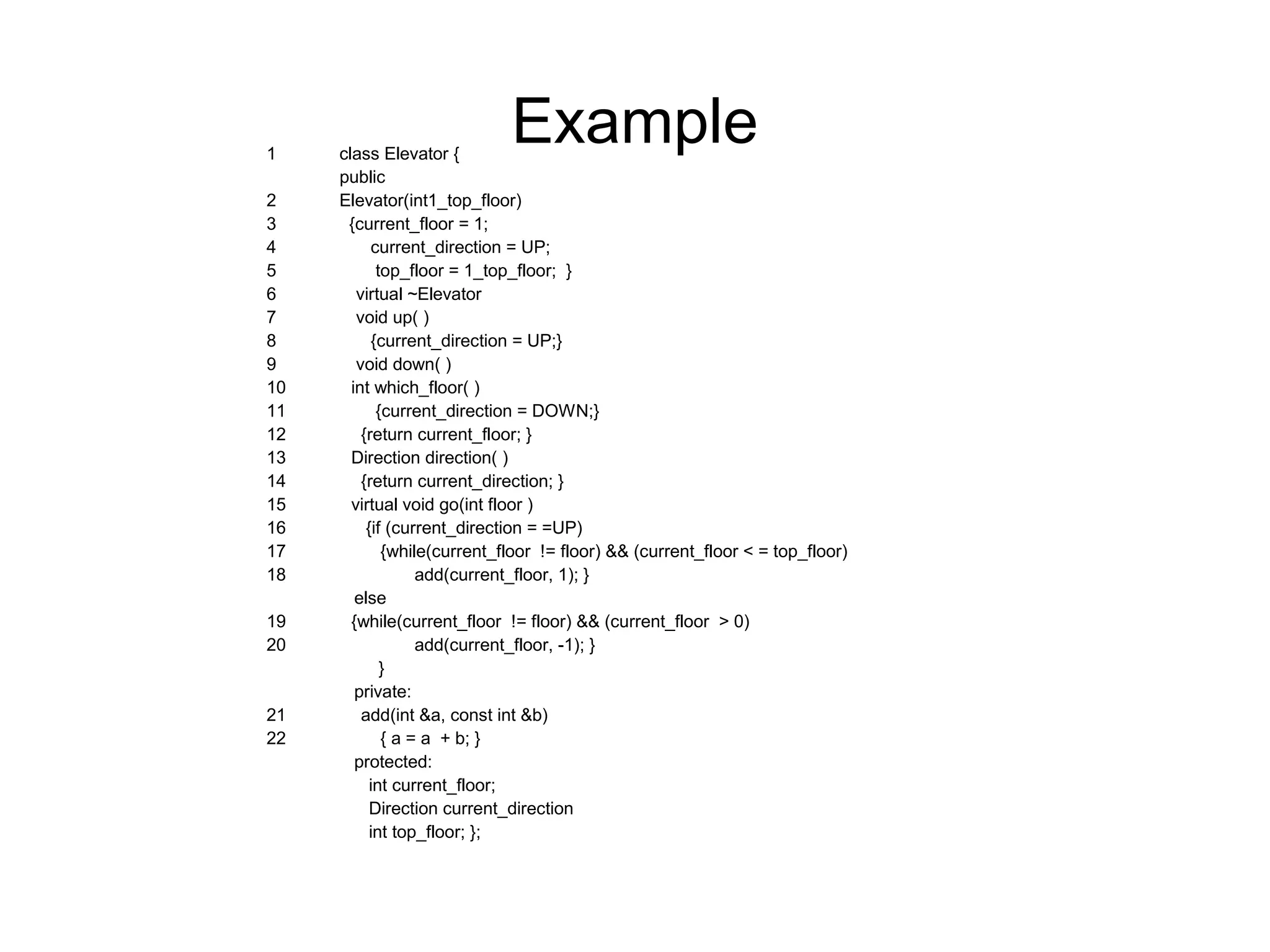

![AlarmElevator class and main function (code listing)](https://image.slidesharecdn.com/rseminarp-150301084852-conversion-gate01/75/Slicing-of-Object-Oriented-Programs-18-2048.jpg)

    class AlarmElevator : public Elevator {        // 23
    public:
        AlarmElevator(int top_floor)               // 24
            : Elevator(top_floor)                  // 25
        { alarm_on = 0; }                          // 26
        void set_alarm()                           // 27
        { alarm_on = 1; }                          // 28
        void reset_alarm()                         // 29
        { alarm_on = 0; }                          // 30
        void go(int floor)                         // 31
        { if (!alarm_on)                           // 32
              Elevator::go(floor);                 // 33
        }
    protected:
        int alarm_on;
    };

    int main(int argc, char **argv) {              // 34
        Elevator *e_ptr;
        if (argv[1])                               // 35
            e_ptr = new Elevator(10);              // 36
        else
            e_ptr = new AlarmElevator(10);         // 37
        e_ptr->go(5);                              // 38
        cout << "\n currently on floor :" << e_ptr->which_floor();  // 39
    }