Randomness conductors

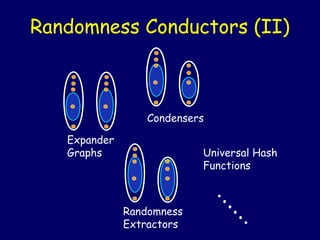

Randomness conductors are a general framework that unifies several combinatorial objects: expanders, extractors, condensers, and universal hash functions. A conductor transforms a probability distribution X with a given amount of "entropy" into another distribution X' with a specified amount of entropy. The document discusses how expanders, extractors, and the other objects arise as special cases of randomness conductors. It also describes how zig-zag graph products can be used to construct explicit constant-degree randomness conductors, and it discusses some open problems in further studying and constructing these objects.
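To make the idea of moving entropy from X to X' concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a construction from the slides: entropy is measured as min-entropy, the conductor is modelled as a function `E(x, r)` applied with a uniformly random seed `r`, and the toy `E` is one step of a walk on an 8-vertex cycle. The names `min_entropy`, `apply_conductor`, and `cycle_step` are hypothetical helpers introduced only for this example.

```python
from collections import defaultdict
from fractions import Fraction
from math import log2


def min_entropy(dist):
    """Min-entropy in bits: -log2 of the largest point probability."""
    return -log2(max(dist.values()))


def apply_conductor(dist, E, seeds):
    """Return the distribution of E(X, R) for X ~ dist and R uniform over seeds."""
    out = defaultdict(Fraction)
    seed_weight = Fraction(1, len(seeds))
    for x, p in dist.items():
        for r in seeds:
            out[E(x, r)] += p * seed_weight
    return dict(out)


def cycle_step(x, r):
    """Toy map (an assumption for illustration): move to a neighbour on an 8-cycle."""
    return (x + (1 if r else -1)) % 8


# X is uniform on two vertices, so it carries 1 bit of min-entropy.
X = {0: Fraction(1, 2), 1: Fraction(1, 2)}

# One uniformly random seed bit selects which neighbour to move to.
X_out = apply_conductor(X, cycle_step, seeds=[0, 1])

print(min_entropy(X))      # 1.0
print(min_entropy(X_out))  # 2.0
```

In this toy case the single seed bit is fully preserved: the output is uniform on four vertices, so the min-entropy rises from 1 bit to 2 bits. A cycle is a poor expander, so this only illustrates the bookkeeping; the objects described in the summary aim to guarantee such entropy behaviour for every input distribution with enough entropy, usually up to a small statistical error.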


QMC Program: Trends and Advances in Monte Carlo Sampling Algorithms Workshop,...

QMC Program: Trends and Advances in Monte Carlo Sampling Algorithms Workshop,...

QMC Program: Trends and Advances in Monte Carlo Sampling Algorithms Workshop,...

Program on Quasi-Monte Carlo and High-Dimensional Sampling Methods for Applie...

Program on Quasi-Monte Carlo and High-Dimensional Sampling Methods for Applie...

Randomness conductors

- 1. Randomness Conductors (II): Expander Graphs, Condensers, Universal Hash Functions, Randomness Extractors, …

- 2. Randomness Conductors – Motivation • Various relations between expanders, extractors, condensers & universal hash functions. • Unifying all of these as instances of a more general combinatorial object: – Useful in constructions. – Possible to study new phenomena not captured by any individual object.

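- 3. Randomness Conductors: Meta-Definition [Figure: bipartite graph with N left vertices, M right vertices and left-degree D; an input x drawn from a prob. dist. X on the left is mapped to x’ drawn from a prob. dist. X’ on the right.] An R-conductor if for every (k,k’) ∈ R: X has ≥ k bits of “entropy” ⇒ X’ has ≥ k’ bits of “entropy”.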

- 4. Measures of Entropy • A naïve measure: support size • Collision(X) = Pr[X^(1) = X^(2)] = ||X||_2^2 (X^(1), X^(2) independent copies of X) • Min-entropy(X) ≥ k if ∀x, Pr[x] ≤ 2^{-k} • X and Y are ε-close if max_T | Pr[X∈T] - Pr[Y∈T] | = ½ ||X-Y||_1 ≤ ε • X’ is ε-close to Y of min-entropy k ⇒ |Support(X’)| ≥ (1-ε) 2^k
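The measures on this slide can be computed directly for small distributions. The following is a minimal sketch, assuming a distribution is stored as a dict from outcomes to exact probabilities; the helper names and the two example distributions are illustrative and not from the slides.

```python
from fractions import Fraction
from math import log2

def collision(p):
    """Collision probability: Pr[two independent samples agree] = sum of p(x)^2."""
    return sum(q * q for q in p.values())

def min_entropy(p):
    """Largest k such that every outcome has probability at most 2^(-k)."""
    return -log2(max(p.values()))

def statistical_distance(p, q):
    """Half the L1 distance, which equals max_T |Pr[X in T] - Pr[Y in T]|."""
    keys = set(p) | set(q)
    return sum(abs(p.get(x, 0) - q.get(x, 0)) for x in keys) / 2

# Illustrative distributions (assumptions, not taken from the slides).
uniform4 = {x: Fraction(1, 4) for x in range(4)}
skewed = {0: Fraction(1, 2), 1: Fraction(1, 4), 2: Fraction(1, 4)}

print(collision(uniform4), collision(skewed))
print(min_entropy(uniform4), min_entropy(skewed))
print(statistical_distance(uniform4, skewed))
```

Exact fractions are used so that the collision probability and the distance come out without rounding error.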

- 5. Vertex Expansion [Figure: bipartite graph with N vertices on each side and degree D.] |Support(X)| ≤ K ⇒ |Support(X’)| ≥ A |Support(X)| (A > 1). Lossless expanders: A > (1-ε) D (for ε < ½)
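For a tiny graph the expansion factor A can be found by brute force over all small left-hand sets. This is only a sketch under stated assumptions: the graph is a dict from left vertices to lists of right neighbours, and the 4-vertex degree-2 example is made up for illustration.

```python
from itertools import combinations

def neighbors(graph, subset):
    """Γ(S): the set of right vertices adjacent to some vertex of S."""
    out = set()
    for v in subset:
        out.update(graph[v])
    return out

def expansion_factor(graph, max_size):
    """Minimum of |Γ(S)| / |S| over nonempty left sets S with |S| <= max_size."""
    best = None
    for size in range(1, max_size + 1):
        for subset in combinations(graph, size):
            ratio = len(neighbors(graph, subset)) / size
            best = ratio if best is None else min(best, ratio)
    return best

# Hypothetical example: left vertex v is joined to right vertices v and v+1 (mod 4).
graph = {v: [v, (v + 1) % 4] for v in range(4)}
print(expansion_factor(graph, 2))
```

With D = 2 and K = 2 the worst sets are pairs of adjacent vertices, giving A = 1.5 = (1 - ¼) D. The search is exponential in K, so it is usable only for toy sizes.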

- 6. 2nd Eigenvalue Expansion [Figure: graph with N vertices on each side and degree D, mapping X to X’.] λ < β < 1, collision(X’) - 1/N ≤ λ² (collision(X) - 1/N)
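The collision inequality can be checked numerically on a graph whose second eigenvalue is known in closed form. The sketch below assumes the complete graph on 4 vertices, whose normalized adjacency matrix has second-largest eigenvalue 1/(n-1) in absolute value; the graph and the starting distribution are illustrative choices.

```python
def step(dist, adjacency):
    """One random-walk step: each vertex spreads its mass evenly over its neighbours."""
    out = [0.0] * len(adjacency)
    for v, mass in enumerate(dist):
        for w in adjacency[v]:
            out[w] += mass / len(adjacency[v])
    return out

def collision(dist):
    """Collision probability of a distribution given as a list of probabilities."""
    return sum(p * p for p in dist)

n = 4
complete = [[w for w in range(n) if w != v] for v in range(n)]
lam = 1 / (n - 1)  # second eigenvalue (absolute value) of the normalized adjacency of K_n

x = [1.0, 0.0, 0.0, 0.0]          # X concentrated on a single vertex
x_next = step(x, complete)        # X' after one step
lhs = collision(x_next) - 1 / n
rhs = lam ** 2 * (collision(x) - 1 / n)
print(lhs, rhs)
```

For the complete graph every non-trivial eigenvalue has the same absolute value, so the bound holds with equality up to floating-point error.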

- 7. Unbalanced Expanders / Condensers [Figure: bipartite graph with N left vertices, M ≪ N right vertices and degree D, mapping X to X’.] • Farewell constant degree (for any non-trivial task |Support(X)| = N^{0.99}, |Support(X’)| ≥ 10D) • Requiring small collision(X’) is too strong (same for large min-entropy(X’)).

- 8. Dispersers and Extractors [Sipser 88, NZ 93] [Figure: bipartite graph with N left vertices, M ≪ N right vertices and degree D, mapping X to X’.] • (k,ε)-disperser if |Support(X)| ≥ 2^k ⇒ |Support(X’)| ≥ (1-ε) M • (k,ε)-extractor if Min-entropy(X) ≥ k ⇒ X’ ε-close to uniform
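The extractor condition can be verified exhaustively for a toy function. This sketch relies on the standard fact that, for integer k, it suffices to check sources that are uniform on sets of size 2^k, since every distribution of min-entropy k is a convex combination of such sources. The function `bit_select` and the parameters are hypothetical examples.

```python
from fractions import Fraction
from itertools import combinations

def output_distribution(f, source, D, M):
    """Distribution of f(X, U) for X uniform on `source` and U uniform on range(D)."""
    counts = [0] * M
    for x in source:
        for i in range(D):
            counts[f(x, i)] += 1
    total = len(source) * D
    return [Fraction(c, total) for c in counts]

def distance_from_uniform(dist):
    """Statistical distance between `dist` and the uniform distribution on its range."""
    M = len(dist)
    return sum(abs(p - Fraction(1, M)) for p in dist) / 2

def worst_error(f, N, D, M, k):
    """Smallest ε for which f is a (k, ε)-extractor, via all flat sources of size 2^k."""
    return max(
        distance_from_uniform(output_distribution(f, s, D, M))
        for s in combinations(range(N), 2 ** k)
    )

def bit_select(x, i):
    """Toy map [4] x [2] -> [2]: output bit i of x."""
    return (x >> i) & 1

print(worst_error(bit_select, N=4, D=2, M=2, k=1))
```

Here the toy map turns out to be a (1, ¼)-extractor: a source such as {0, 1} yields the output 0 with probability ¾.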

- 9. Randomness Conductors • Expanders, extractors, condensers & universal hash functions are all functions, f : [N] × [D] → [M], that transform: X “of entropy” k ⇒ X’ = f (X, Uniform) “of entropy” k’ • Many flavors: – Measure of entropy. – Balanced vs. unbalanced. – Lossless vs. lossy. – Lower vs. upper bound on k. – Is X’ close to uniform? – … • Randomness conductors (side callouts on the slide): “As in extractors.” “Allows the entire spectrum.”

- 10. Conductors: Broad Spectrum Approach [Figure: bipartite graph with N left vertices, M ≪ N right vertices and degree D, mapping X to X’.] • An ε-conductor, ε : [0, log N] × [0, log M] → [0,1], if: ∀ k, k’, min-entropy(X) ≥ k ⇒ X’ is ε(k,k’)-close to some Y of min-entropy k’

- 11. Constructions • Most applications need explicit expanders. Could mean: – Should be easy to build G (in time poly N). – When N is huge (e.g. 2^{60}) need: given vertex name x and edge label i, easy to find the i-th neighbor of x (in time poly log N).
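The second notion of explicitness means the graph is never written down; only a neighbour function is evaluated. As an illustration, the sketch below uses the hypercube on 2^60 vertices, chosen only because its neighbour function is trivial. It is not an expander from the slides and its degree (60) is not constant.

```python
def neighbor(x, i, n_bits=60):
    """Return the i-th neighbour of vertex x in the hypercube on 2**n_bits vertices.

    Runs in time polynomial in n_bits = log N, without ever building the graph.
    """
    if not 0 <= x < (1 << n_bits):
        raise ValueError("vertex name out of range")
    if not 0 <= i < n_bits:
        raise ValueError("edge label out of range")
    return x ^ (1 << i)  # flip bit i of the vertex name

print(neighbor(0, 59))
```

Following an edge and then the same label again returns to the starting vertex, which is the kind of local computation the slide asks for.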

- 12. [CRVW 02]: Const. Degree, Lossless Expanders … [Figure: bipartite graph with N vertices on each side and degree D.] ∀S with |S| ≤ K (K = Ω(N)): |Γ(S)| ≥ (1-ε) D |S|

- 13. … That Can Even Be Slightly Unbalanced [Figure: bipartite graph with N left vertices, M = δN right vertices and degree D.] ∀S with |S| ≤ K: |Γ(S)| ≥ (1-ε) D |S|. 0 < ε, δ ≤ 1 are constants ⇒ D is constant & K = Ω(N). For the curious: K = Ω(εM/D) & D = poly(1/ε, log(1/δ)) (fully explicit: D = quasipoly(1/ε, log(1/δ))).

- 14. History • Explicit construction of constant-degree expanders was difficult. • Celebrated sequence of algebraic constructions [Mar73, GG80, JM85, LPS86, AGM87, Mar88, Mor94]. • Achieved optimal 2nd eigenvalue (Ramanujan graphs), but this only implies expansion ≤ D/2 [Kah95]. • “Combinatorial” constructions: Ajtai [Ajt87], more explicit and very simple: [RVW00]. • “Lossless objects”: [Alo95, RR99, TUZ01]. • Unique neighbor, constant degree expanders [Cap01, AC02].

- 15. The Lossless Expanders • Starting point [RVW00]: A combinatorial construction of constant-degree expanders with simple analysis. • Heart of construction – New Zig-Zag Graph Product: Compose large graph w/ small graph to obtain a new graph which (roughly) inherits – Size of large graph. – Degree from the small graph. – Expansion from both.

- 16. The Zigzag Product ⓩ “Theorem”: Expansion (G1 ⓩ G2) ≈ min {Expansion (G1), Expansion (G2)}

- 17. Zigzag Intuition (Case I) Conditional distributions within “clouds” far from uniform – The first “small step” adds entropy. – Next two steps can’t lose entropy.

- 18. Zigzag Intuition (Case II) Conditional distributions within clouds uniform • First small step does nothing. • Step on big graph “scatters” among clouds (shifts entropy) • Second small step adds entropy.

- 19. Reducing to the Two Cases • Need to show: the transition prob. matrix M of G1 ⓩ G2 shrinks every vector π ∈ ℝ^{ND} that is perp. to uniform. • Write π as an N×D matrix, with one row per vertex u ∈ {1, …, N} and one column per label in {1, …, D}; e.g. row u might read (.4, -.3, …, 0). π ⊥ uniform ⇒ sum of entries is 0. – RowSums(x) = “distribution” on clouds themselves. • Can decompose π = π|| + π⊥, where π|| is constant on rows, and all rows of π⊥ are perp. to uniform. • Suffices to show M shrinks π|| and π⊥ individually!
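The decomposition π = π|| + π⊥ is simply "row average" plus "deviation from the row average". A minimal sketch, assuming π is stored as a list of N rows of length D; the 2×3 example matrix is made up and has total sum 0.

```python
def decompose(pi):
    """Split an N x D matrix into a part constant on each row and a part whose rows sum to 0."""
    parallel = [[sum(row) / len(row)] * len(row) for row in pi]
    perp = [
        [x - m for x, m in zip(row, par_row)]
        for row, par_row in zip(pi, parallel)
    ]
    return parallel, perp

# Illustrative vector perpendicular to uniform (all entries sum to 0).
pi = [[0.4, -0.3, 0.0],
      [-0.2, 0.1, 0.0]]
parallel, perp = decompose(pi)
print(parallel)
print(perp)
```

Each row of `parallel` is constant (uniform within the cloud, scaled by the cloud's weight), and each row of `perp` sums to zero, matching the two cases of the zigzag intuition.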

- 20. Results & Extensions [RVW00] • Simple analysis in terms of second eigenvalue mimics the intuition. • Can obtain degree 3 ! • Additional results (high min-entropy extractors and their applications). • Subsequent work [ALW01,MW01] relates to semidirect product of groups ⇒ new results on expanding Cayley graphs.

- 21. Closer Look: Rotation Maps • Expanders normally viewed as maps (vertex)×(edge label) → (vertex). • Here: (vertex)×(edge label) → (vertex)×(edge label): (X,i) → (Y,j) if (X,i) and (Y,j) correspond to the same edge of G1. • Permutation ⇒ the big step never loses. • Inspired by ideas from the setting of “extractors” [RR99].
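Rotation maps make the zigzag product easy to write down as three steps: small, big, small. The sketch below assumes the usual rotation-map form of the product, with the complete graph K5 as the large graph (degree 4) and the 4-cycle as the small graph (degree 2). Both graphs and all function names are illustrative choices, not taken from the slides.

```python
def rot_big(v, i, n=5):
    """Rotation map of the complete graph K_n: label i leads from v to v + i + 1 (mod n)."""
    return (v + i + 1) % n, (n - 2) - i  # second component: label of the same edge seen from the far end

def rot_small(a, i, d=4):
    """Rotation map of the cycle on d vertices: label 0 steps forward, label 1 steps back."""
    if i == 0:
        return (a + 1) % d, 1
    return (a - 1) % d, 0

def rot_zigzag(vertex, label):
    """Rotation map of the product: vertices are (v, a), edge labels are (i, j)."""
    (v, a), (i, j) = vertex, label
    a1, i_back = rot_small(a, i)    # first small step inside the cloud of v
    w, b1 = rot_big(v, a1)          # big step: a permutation, moves between clouds
    b, j_back = rot_small(b1, j)    # second small step inside the cloud of w
    return (w, b), (j_back, i_back)

print(rot_zigzag((0, 0), (0, 0)))
```

The resulting graph has 5 × 4 = 20 vertices and degree 2 × 2 = 4: the size comes from the large graph and the degree from the small one. Applying `rot_zigzag` twice returns the original (vertex, label) pair, so the map is a permutation.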

- 22. Inherent Entropy Loss – In each case, only one of two small steps “works” – But paid for both in degree.

- 23. Trying to improve ??? ???

- 24. Zigzag for Unbalanced Graphs • The zig-zag product for conductors can produce constant degree, lossless expanders. • Previous constructions and composition techniques from the extractor literature extend to (useful) explicit constructions of conductors.

- 25. Some Open Problems • Being lossless from both sides (the non-bipartite case). • Better expansion yet? • Further study of randomness conductors.