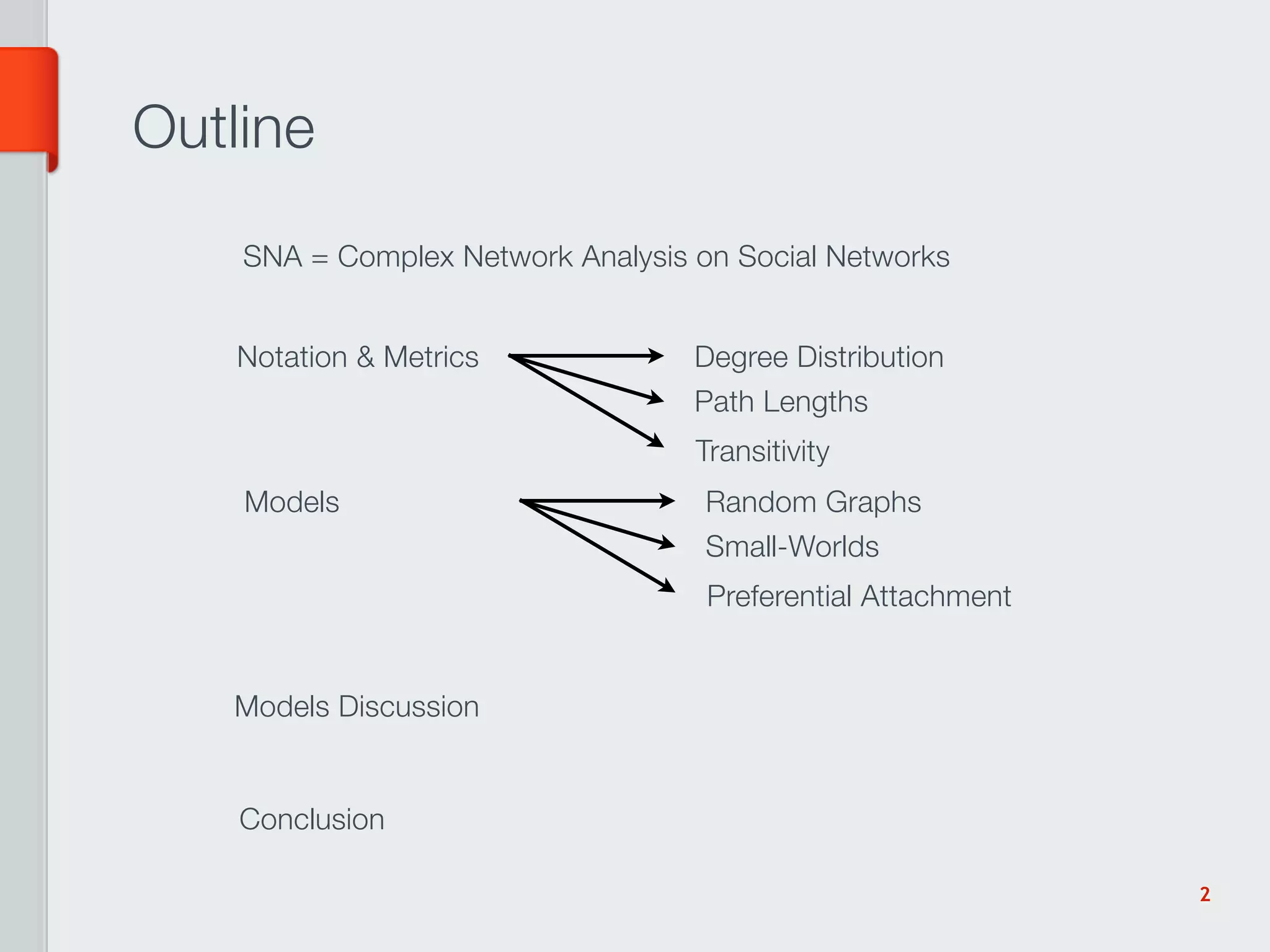

The document summarizes key concepts in social network analysis including metrics like degree distribution, path lengths, transitivity, and clustering coefficients. It also discusses models of network growth and structure like random graphs, small-world networks, and preferential attachment. Computational aspects of analyzing large networks like calculating shortest paths and the diameter are also covered.

![Shortest Path Length and Diameter

scalar operations

AB = A + .⋅ B The matrix product depends from

( A,+,⋅) [ AB]ij = ∑ A ik ⋅ Bkj

the operations of the semi-ring

k

Set of Adjacency Matrices

min

Other matrix products make sense: e.g., ( A,+,^ ) or ( A,^,+ )

We consider: (

Sk (M) = M + .^ M k ^ .+ M k )

Shortest path lengths matrix: L = ( Sn … S1 ) ( M )

Diameter: d = max L Average shortest path: = Lij

ij

5](https://image.slidesharecdn.com/sna-120125090150-phpapp02/75/Social-Network-Analysis-5-2048.jpg)

![Transitive Linking Model [Davidsen 02]

Transitive Linking

I At each step:

TL: a random node is chosen, and it introduces two other nodes that

are linked to it; if the node does not have 2 edges, it introduces

himself to a random node

RM: with probability p a node is chosen and removed along its edges

and replaced with a node with one random edge

I When p ⇤ 1 the TL dominates the process:

I the degree distribution is a power-law with cutoff

I 1 C = p(⌅k ⇧ 1), i.e., quite large in practice

I For larger values of p the two different process concur to form an

exponential degree distribution

I for p ⇥ 1 the degree distribution is essentially a Poisson

distribution

Instead of p it would make sense to have distinct p and r

Bergenti, Franchi, Poggi (Univ. Parma) Models for Agent-based Simulation of SN SNAMAS ’11 11 / 19

parameters for nodes leaving and entering the network

Few analytic results available.

20](https://image.slidesharecdn.com/sna-120125090150-phpapp02/75/Social-Network-Analysis-20-2048.jpg)

![[1]

Dorogovtsev, S. N. and Mendes, J. F. F. 2003 Evolution of Networks: From Biological Nets

to the Internet and WWW (Physics). Oxford University Press, USA.

[2]

Watts, D. J. 2003 Small Worlds: The Dynamics of Networks between Order and

Randomness (Princeton Studies in Complexity). Princeton University Press.

[3]

Jackson, M. O. 2010 Social and Economic Networks. Princeton University Press.

[4]

Newman, M. 2010 Networks: An Introduction. Oxford University Press, USA.

[5]

Wasserman, S. and Faust, K. 1994 Social Network Analysis: Methods and Applications

(Structural Analysis in the Social Sciences). Cambridge University Press.

[6]

Scott, J. P. 2000 Social Network Analysis: A Handbook. Sage Publications Ltd.

[7]

Kepner, J. and Gilbert, J. 2011 Graph Algorithms in the Language of Linear Algebra

(Software, Environments, and Tools). Society for Industrial & Applied Mathematics.

[8]

Cormen, T. H., Leiserson, C. E., Rivest, R. L., and Stein, C. 2009 Introduction to

Algorithms. The MIT Press.

[9]

Skiena, S. S. 2010 The Algorithm Design Manual. Springer.

[10]

Bollobas, B. 1998 Modern Graph Theory. Springer.

[11]

Watts, D. J. and Strogatz, S. H. 1998. Collective dynamics of ‘small-world’networks.

Nature. 393, 6684, 440-442.

[12]

Barabási, A. L. and Albert, R. 1999. Emergence of scaling in random networks. Science.

286, 5439, 509.

[13]

Kleinberg, J. 2000. The small-world phenomenon: an algorithm perspective. Proceedings of

the thirty-second annual ACM symposium on Theory of computing. 163-170.

[14]

Milgram, S. 1967. The small world problem. Psychology today. 2, 1, 60-67.

21](https://image.slidesharecdn.com/sna-120125090150-phpapp02/75/Social-Network-Analysis-21-2048.jpg)