The document provides an overview of quantum computing basics, including:

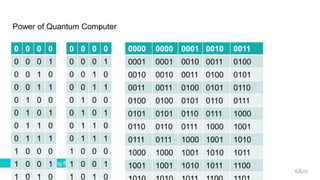

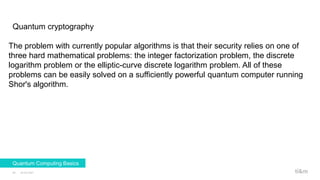

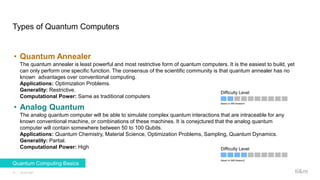

- Types of quantum computers such as quantum annealers, analog quantum computers, and universal quantum computers.

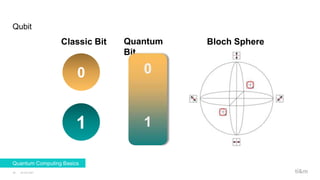

- Key concepts such as qubits (the smallest unit of quantum information, which can be in a superposition of states) and common physical implementations of qubits, such as ions and photons.
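
A qubit's superposition, and how measurement turns it into a definite outcome, can be sketched as a two-amplitude state vector. The following is a minimal classical simulation that assumes an ideal, noise-free qubit. The names `hadamard`, `probabilities`, and `measure` are illustrative helpers and do not come from the slides.

```python
import math
import random

# A single-qubit state alpha|0> + beta|1>, stored as its two complex amplitudes.
Qubit = tuple[complex, complex]

ZERO: Qubit = (1 + 0j, 0j)  # the computational basis state |0>


def hadamard(q: Qubit) -> Qubit:
    """Hadamard gate: maps |0> to (|0> + |1>)/sqrt(2) and |1> to (|0> - |1>)/sqrt(2)."""
    a, b = q
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def probabilities(q: Qubit) -> tuple[float, float]:
    """Born rule: P(0) = |alpha|^2, P(1) = |beta|^2."""
    a, b = q
    return (abs(a) ** 2, abs(b) ** 2)


def measure(q: Qubit, rng: random.Random) -> tuple[int, Qubit]:
    """Sample an outcome and return it together with the collapsed post-measurement state."""
    p0, _ = probabilities(q)
    if rng.random() < p0:
        return 0, (1 + 0j, 0j)
    return 1, (0j, 1 + 0j)


if __name__ == "__main__":
    plus = hadamard(ZERO)
    print(probabilities(plus))            # both approximately 0.5: an equal superposition
    print(probabilities(hadamard(plus)))  # interference restores |0>: approximately (1.0, 0.0)
    outcome, collapsed = measure(plus, random.Random(7))
    print(outcome, probabilities(collapsed))  # after measurement the result is definite
```

Applying the Hadamard gate twice returns the qubit to |0>. A classical coin flipped twice would still be random, so this shows that the amplitudes, not just the probabilities, carry the state.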

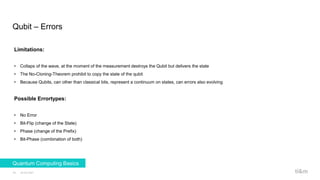

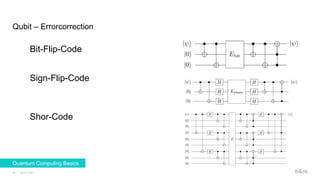

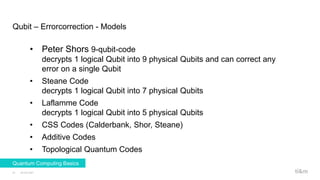

- Challenges such as the errors that can occur during computation, and approaches to quantum error correction such as Shor's code and topological quantum codes.

- The Schrödinger's cat thought experiment, which illustrates the strange nature of quantum superposition.
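
Shor's nine-qubit code is built by concatenating a three-qubit phase-flip code with three-qubit bit-flip codes. The sketch below simulates only the bit-flip ingredient on a classical state vector, and it assumes that at most one bit flip (Pauli X) error occurs. The helper names are hypothetical. One shortcut should be noted: the simulation reads the parities directly from the amplitudes, whereas real hardware extracts this syndrome by measuring ancilla qubits.

```python
# Three-qubit state as 8 complex amplitudes, indexed by the bit string q0 q1 q2
# (q0 is the most significant bit, so index 0b100 means q0=1, q1=0, q2=0).
State = list[complex]


def encode(alpha: complex, beta: complex) -> State:
    """Encode alpha|0> + beta|1> as the code state alpha|000> + beta|111>."""
    state: State = [0j] * 8
    state[0b000] = alpha
    state[0b111] = beta
    return state


def apply_x(state: State, qubit: int) -> State:
    """Apply a bit flip (Pauli X) to one qubit by permuting the basis amplitudes."""
    mask = 1 << (2 - qubit)
    flipped: State = [0j] * 8
    for index, amp in enumerate(state):
        flipped[index ^ mask] = amp
    return flipped


def syndrome(state: State) -> tuple[int, int]:
    """Return the parities (q0 xor q1, q1 xor q2).

    After at most one bit flip, every basis state with a nonzero amplitude shares the
    same parities, so reading them locates the error without revealing alpha or beta.
    Simulation shortcut: amplitudes are inspected directly instead of measuring ancillas.
    """
    for index, amp in enumerate(state):
        if abs(amp) > 1e-12:
            q0, q1, q2 = (index >> 2) & 1, (index >> 1) & 1, index & 1
            return (q0 ^ q1, q1 ^ q2)
    raise ValueError("state has no nonzero amplitude")


# Syndrome -> qubit to flip back (None: no error detected).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(state: State) -> State:
    """Look up the syndrome and undo the single bit flip it points to."""
    qubit = CORRECTION[syndrome(state)]
    return state if qubit is None else apply_x(state, qubit)


if __name__ == "__main__":
    logical = encode(0.6, 0.8j)  # |0.6|^2 + |0.8|^2 = 1
    for q in range(3):
        noisy = apply_x(logical, q)
        print(q, syndrome(noisy), correct(noisy) == logical)
```

Two simultaneous bit flips would be "corrected" toward the wrong code word, and phase errors are invisible to this code. Shor's code handles these cases with the additional phase-flip layer.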

![Quantum Computing Basics, slide 13 (23.02.2021)](https://image.slidesharecdn.com/ti8mquantumcomputingbasics-210223120224/85/Quantum-Computing-Basics-13-320.jpg)

- Photon: The quantum of the electromagnetic field, including electromagnetic radiation such as light and radio waves, and the force carrier for the electromagnetic force (even when static, via virtual particles). The invariant mass of the photon is zero; it always moves at the speed of light in a vacuum.
- Phonon: A collective excitation in a periodic, elastic arrangement of atoms or molecules in condensed matter, specifically in solids and some liquids. Often designated a quasiparticle,[1] it represents an excited state in the quantum-mechanical quantization of the vibrational modes of elastic structures of interacting particles.
- Plasmon: A quantum of plasma oscillation. Just as light (an optical oscillation) consists of photons, a plasma oscillation consists of plasmons. The plasmon can be considered a quasiparticle because it arises from the quantization of plasma oscillations, just as phonons are quantizations of mechanical vibrations.
- Magnon: A quasiparticle that is a collective excitation of the electrons' spin structure in a crystal lattice. In the equivalent wave picture of quantum mechanics, a magnon can be viewed as a quantized spin wave.
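
The photon entry states that the photon's invariant mass is zero. For a massless particle, the energy-momentum relation E² = (pc)² + (mc²)² reduces to E = pc. Combining that with E = hc/λ gives p = h/λ. The sketch below puts numbers on these relations using the exact SI values of h, c, and the elementary charge e. The function names are illustrative.

```python
# Exact SI values of the defining constants (2019 SI redefinition).
PLANCK_H = 6.62607015e-34            # J*s
SPEED_OF_LIGHT = 299_792_458.0       # m/s
ELEMENTARY_CHARGE = 1.602176634e-19  # C; 1 eV equals this many joules


def photon_energy_joules(wavelength_m: float) -> float:
    """E = h * c / lambda."""
    return PLANCK_H * SPEED_OF_LIGHT / wavelength_m


def photon_energy_ev(wavelength_m: float) -> float:
    """The same energy expressed in electronvolts."""
    return photon_energy_joules(wavelength_m) / ELEMENTARY_CHARGE


def photon_momentum(wavelength_m: float) -> float:
    """p = h / lambda, identical to E / c because the photon is massless."""
    return PLANCK_H / wavelength_m


if __name__ == "__main__":
    for label, wavelength in [("green light, 500 nm", 500e-9), ("radio wave, 3 m", 3.0)]:
        print(f"{label}: {photon_energy_ev(wavelength):.3e} eV")
```

A 500 nm photon carries about 2.48 eV. A 3 m radio photon carries roughly a million times less energy, which matches the slide's point that light and radio waves are both made of photons.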

![Quantum Computing Basics, slide 14](https://image.slidesharecdn.com/ti8mquantumcomputingbasics-210223120224/85/Quantum-Computing-Basics-14-320.jpg)

- Quantum of angular momentum: Angular momentum is the rotational equivalent of linear momentum. It is an important quantity in physics because it is conserved: the total angular momentum of a closed system remains constant. In quantum mechanics, its projection along any axis can take only integer or half-integer multiples of the reduced Planck constant ħ.
- Gluon: An elementary particle that acts as the exchange particle (or gauge boson) for the strong force between quarks, analogous to the exchange of photons in the electromagnetic force between two charged particles.[6] In layman's terms, gluons "glue" quarks together, forming hadrons such as protons and neutrons.
- Graviton (hypothetical): The quantum of gravity, an elementary particle that would mediate the force of gravity. There is no complete quantum field theory of gravitons because of an outstanding mathematical problem with renormalization in general relativity. In string theory, which some believe to be a consistent theory of quantum gravity, the graviton is a massless state of a fundamental string.
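
The angular-momentum entry describes the classical quantity, while its heading refers to its quantum. In quantum mechanics, the projection of angular momentum on a chosen axis is J_z = m·ħ, where m runs from -j to +j in integer steps, giving 2j + 1 allowed values. The following is a minimal sketch that uses a hypothetical helper, `allowed_projections`, with exact fractions so that half-integer spins stay exact.

```python
from fractions import Fraction


def allowed_projections(j: Fraction) -> list[Fraction]:
    """Allowed values of m, where the projection is J_z = m * hbar.

    j must be a non-negative integer or half-integer; m runs from -j to +j in
    integer steps, giving 2j + 1 values.
    """
    j = Fraction(j)
    if j < 0 or (2 * j).denominator != 1:
        raise ValueError("j must be a non-negative integer or half-integer")
    return [-j + k for k in range(int(2 * j) + 1)]


if __name__ == "__main__":
    # Spin 1/2 (e.g. an electron): two projections, -hbar/2 and +hbar/2.
    print([str(m) for m in allowed_projections(Fraction(1, 2))])  # ['-1/2', '1/2']
    # Orbital angular momentum l = 1: three projections, -hbar, 0, +hbar.
    print([str(m) for m in allowed_projections(Fraction(1))])     # ['-1', '0', '1']
```

The spin-1/2 case has exactly two projections, which is one reason spin-1/2 particles are a natural two-level system for implementing a qubit.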