This document discusses process migration and allocation in distributed systems. It covers:

1) Process allocation is easier in multiprocessor systems, where all processors share memory and resources, than in multicomputer systems, which have no shared memory.

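2) Processes can be either non-migratory, running on a single system for their whole lifetime, or migratory, moving between systems to improve resource utilization. Migratory processes need transparency: the move should not be visible to the process itself or to the parties it communicates with.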

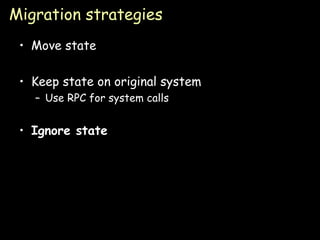

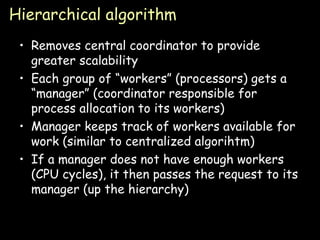

3) Strategies for handling a migrating process's state include moving the state with the process, keeping the state on the original system and accessing it through RPC, or ignoring the state. Centralized, hierarchical, and distributed algorithms can be used to decide where processes should run, aiming for either an optimal or a suboptimal placement.
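
To make the centralized case in point 3 more concrete, here is a minimal Python sketch. It is not taken from the presentation: the `Node` and `Coordinator` classes, the process-count load metric, and the `threshold` parameter are all hypothetical names chosen for illustration. It assumes a single coordinator knows every node's load and uses a simple heuristic (a suboptimal approach): move one process to the least-loaded node only when the load gap is large enough to justify the move.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Node:
    name: str
    load: int = 0  # assumed load metric: number of running processes


@dataclass
class Coordinator:
    """Hypothetical centralized allocator that tracks every node's load."""
    nodes: Dict[str, Node] = field(default_factory=dict)

    def add_node(self, name: str, load: int = 0) -> None:
        self.nodes[name] = Node(name, load)

    def pick_target(self, source: str, threshold: int = 2) -> Optional[str]:
        """Return the least-loaded other node if the load gap between the
        source and that node is at least `threshold`; otherwise None."""
        src = self.nodes[source]
        candidates = [n for n in self.nodes.values() if n.name != source]
        if not candidates:
            return None
        # Ties on load are broken by name so the choice is deterministic.
        best = min(candidates, key=lambda n: (n.load, n.name))
        if src.load - best.load >= threshold:
            return best.name
        return None

    def migrate(self, source: str, threshold: int = 2) -> Optional[str]:
        """Move one process off `source` if a suitable target exists.
        Only the bookkeeping is modeled; no process state is transferred."""
        target = self.pick_target(source, threshold)
        if target is None:
            return None
        self.nodes[source].load -= 1
        self.nodes[target].load += 1
        return target


if __name__ == "__main__":
    c = Coordinator()
    c.add_node("a", 5)
    c.add_node("b", 1)
    c.add_node("c", 3)
    print(c.migrate("a"))  # chosen target, or None if no move is worthwhile
```

The threshold keeps the coordinator from moving processes back and forth between nearly balanced nodes. A hierarchical or distributed variant would replace the single `Coordinator` with several cooperating decision makers, each holding only partial load information.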

![Process Migration & Allocation. Paul Krzyzanowski, Distributed Systems. Except as otherwise noted, the content of this presentation is licensed under the Creative Commons Attribution 2.5 License.](https://image.slidesharecdn.com/24-processorallocation-090902071147-phpapp01/85/Processor-Allocation-Distributed-computing-1-320.jpg)