1. There are two main approaches to distributed mutual exclusion: token-based and non-token-based. Token-based approaches circulate a single shared token, and only the process holding it may enter the critical section. Non-token-based approaches exchange messages to agree on the order in which processes gain access.

2. A common token-based algorithm uses a centralized coordinator process that grants access to one requesting process at a time. Ring-based algorithms pass a token around a logical ring, and the process currently holding the token may enter the critical section.

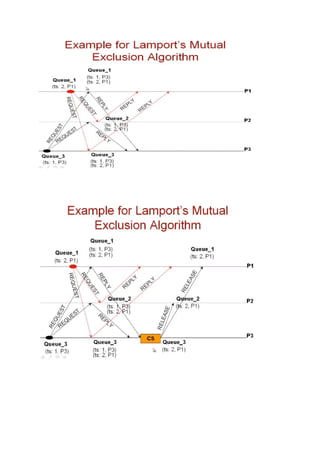

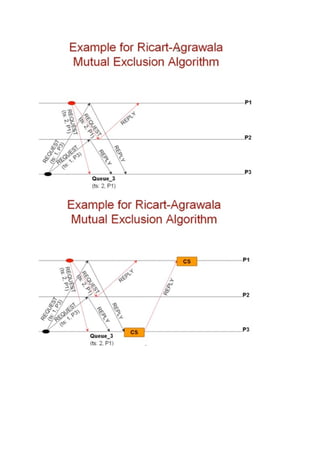
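
The ring-based scheme in item 2 can be sketched as a small single-machine simulation. This is only an illustration under stated assumptions: the names `RingNode`, `wants_cs`, and `circulate_token` are hypothetical, the ring is modeled as a Python list, and "passing the token" is an index increment rather than a network message. Failure handling, such as a lost token or a crashed node, is not modeled.

```python
from dataclasses import dataclass


@dataclass
class RingNode:
    """Hypothetical process in the logical ring."""
    node_id: int
    wants_cs: bool = False   # True while the node has a pending request
    entries: int = 0         # number of times it entered the critical section


def circulate_token(nodes, start, hops):
    """Pass a single token around the ring for `hops` steps.

    Returns the node ids in the order they entered the critical section.
    """
    order = []
    holder = start
    for _ in range(hops):
        node = nodes[holder]
        if node.wants_cs:
            # Only the current token holder may enter, so at most one
            # node is in the critical section at any time.
            node.entries += 1
            node.wants_cs = False
            order.append(node.node_id)
        # Forward the token to the next node in the logical ring.
        holder = (holder + 1) % len(nodes)
    return order


nodes = [RingNode(i) for i in range(4)]
nodes[2].wants_cs = True
nodes[0].wants_cs = True

# The token starts at node 1 and visits nodes 1, 2, 3, 0 in that order.
order = circulate_token(nodes, start=1, hops=4)
print(order)
```

In this sketch access is granted in ring order from the token's current position, not in the order requests were made: node 2 enters before node 0 because the token reaches it first.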

3. Lamport's non-token-based algorithm exchanges timestamped request messages so that every process builds an identical request queue ordered by timestamp. The process whose request is at the head of the queue may enter the critical section once it has received a later-timestamped message from every other process. The Ricart-Agrawala