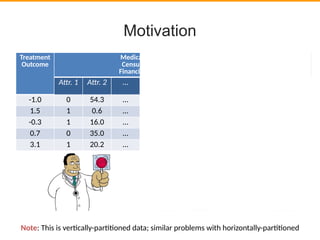

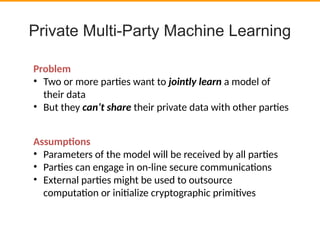

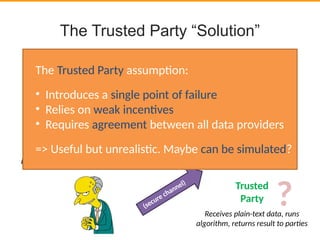

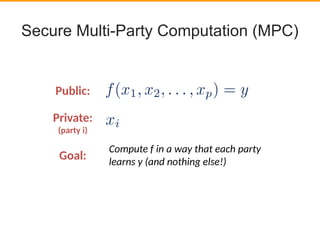

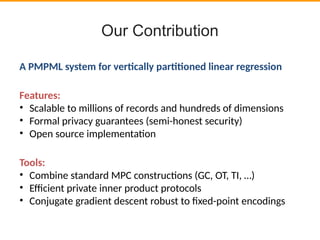

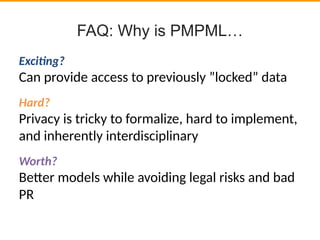

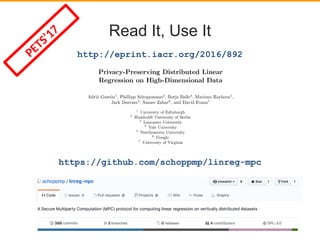

This document discusses privacy-preserving data analysis and multi-party machine learning. The central challenge is to learn a model jointly on private data held by several parties without any party sharing its raw data. Secure multi-party computation addresses this: the parties run an interactive protocol to compute a function of their inputs and learn only the output, nothing else. The document presents a system for privacy-preserving distributed linear regression on vertically partitioned data with formal privacy guarantees. It also discusses other applications, such as private document classification in federated databases and privacy-preserving distributed hypothesis testing.
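To make "learning only the output" concrete, here is a minimal sketch of one standard building block, additive secret sharing, used to compute a sum of private inputs. It is an illustration only and not the protocol from the talk: it simulates all parties in a single process, assumes they follow the protocol honestly, and the names `MODULUS`, `share`, and `secure_sum` are hypothetical.

```python
import secrets

# Hypothetical public modulus; it only needs to exceed the true sum.
MODULUS = 2**61 - 1


def share(value, n_parties, modulus=MODULUS):
    """Split `value` into additive shares that sum to `value` mod `modulus`.

    Any n_parties - 1 of the shares are uniformly random, so they reveal
    nothing about `value` on their own.
    """
    shares = [secrets.randbelow(modulus) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % modulus)
    return shares


def secure_sum(private_inputs, modulus=MODULUS):
    """Simulate a secure sum: each party only ever sees shares and partial sums."""
    n = len(private_inputs)
    # Row i holds the shares created by party i; entry j would be sent to party j.
    dealt = [share(x, n, modulus) for x in private_inputs]
    # Party j adds the shares it received and publishes only this partial sum.
    partial_sums = [sum(dealt[i][j] for i in range(n)) % modulus for j in range(n)]
    # Combining the published partial sums yields the total and nothing else.
    return sum(partial_sums) % modulus


total = secure_sum([12, 30, 7])
print(total)
```

In a real deployment each party would run on its own machine and exchange shares over secure channels; the arithmetic stays the same.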

![Privacy-Preserving Model Checking](https://image.slidesharecdn.com/privacy-preserving-data-analysis-talk-170921103751/85/Privacy-Preserving-Data-Analysis-Adria-Gascon-18-320.jpg)

Privacy-Preserving Model Checking

- Problem: check security properties on (private) source code.
- "Public" equivalent: MOPS [1], and some others.
  - The security property is expressed as a regular expression over sequences of instructions.
  - Find all paths in the control flow graph that match it.
- Application of Private Regular Path Queries.

[1] Hao Chen and David Wagner. 2002. MOPS: an infrastructure for examining security properties of software. In Proceedings of the 9th ACM Conference on Computer and Communications Security (CCS '02), Vijay Atluri (Ed.). ACM, New York, NY, USA, 235-244. DOI=http://dx.doi.org/10.1145/586110.586142
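The "public" version of this check can be sketched as a search over the product of the control flow graph and an automaton for the regular expression. The example below is a minimal sketch under stated assumptions, not MOPS itself and not the private protocol: the graph `CFG`, the instruction labels, and the toy pattern (an `exec` reached with no earlier `drop_priv`) are all hypothetical, and the automaton is written by hand instead of being compiled from a regular expression.

```python
from collections import deque

# Hypothetical control flow graph: node -> list of (instruction label, successor).
CFG = {
    "entry": [("check_flag", "a"), ("check_flag", "b")],
    "a": [("drop_priv", "c")],
    "b": [("printf", "c")],
    "c": [("exec", "exit")],
    "exit": [],
}


def step(state, label):
    """Hand-written DFA for the toy pattern: any labels except drop_priv, then exec."""
    if state == "unsafe":
        if label == "drop_priv":
            return "safe"
        if label == "exec":
            return "violation"
        return "unsafe"
    return state  # "safe" and "violation" are absorbing states


def find_matching_path(cfg, entry, start="unsafe", accepting=("violation",)):
    """Breadth-first search over (CFG node, DFA state) pairs.

    Returns the instruction labels of one path that matches the pattern,
    or None if no path from `entry` matches.
    """
    queue = deque([(entry, start, [])])
    seen = {(entry, start)}
    while queue:
        node, state, labels = queue.popleft()
        if state in accepting:
            return labels
        for label, succ in cfg[node]:
            nxt = step(state, label)
            if (succ, nxt) not in seen:
                seen.add((succ, nxt))
                queue.append((succ, nxt, labels + [label]))
    return None


witness = find_matching_path(CFG, "entry")
print(witness)
```

The search visits each (node, state) pair at most once, so it terminates even on graphs with loops. It reports a single witness path; enumerating all matching paths would need a different output representation, since a graph with loops can contain infinitely many.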

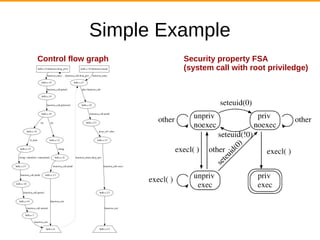

![Simple Example: hello.c](https://image.slidesharecdn.com/privacy-preserving-data-analysis-talk-170921103751/85/Privacy-Preserving-Data-Analysis-Adria-Gascon-20-320.jpg)

Simple Example (`hello.c`):

    #include <stdio.h>
    #include <sys/types.h>
    #include <unistd.h>
    #include <pwd.h>

    void drop_priv()
    {
        struct passwd *passwd;

        if ((passwd = getpwuid(getuid())) == NULL)
        {
            printf("getpwuid() failed");
            return;
        }
        printf("Drop user %s's privilege\n", passwd->pw_name);
        seteuid(getuid());
    }

    int main(int argc, char *argv[])
    {
        drop_priv();
        printf("About to exec\n");
        /* ... */

![Related Work](https://image.slidesharecdn.com/privacy-preserving-data-analysis-talk-170921103751/85/Privacy-Preserving-Data-Analysis-Adria-Gascon-23-320.jpg)

Related Work: Verification Across Intellectual Property Boundaries [2].

[2] Sagar Chaki, Christian Schallhart, and Helmut Veith. 2013. Verification across intellectual property boundaries. ACM Transactions on Software Engineering and Methodology (TOSEM) 22, 2 (2013), 15.

![Related Work](https://image.slidesharecdn.com/privacy-preserving-data-analysis-talk-170921103751/85/Privacy-Preserving-Data-Analysis-Adria-Gascon-24-320.jpg)

The authors of Verification Across Intellectual Property Boundaries [2] also say:

> “While we are aware of advanced methods such as secure multiparty computation [Goldreich 2002] and zero-knowledge proofs [Ben-Or et al. 1988], we believe that they are impracticable for our problem, as such methods cannot be easily wrapped over given validation tools. Finally, we believe that any advanced method without an intuitive proof for its secrecy will be heavily opposed by the supplier—and might therefore be hard to establish in practice.”