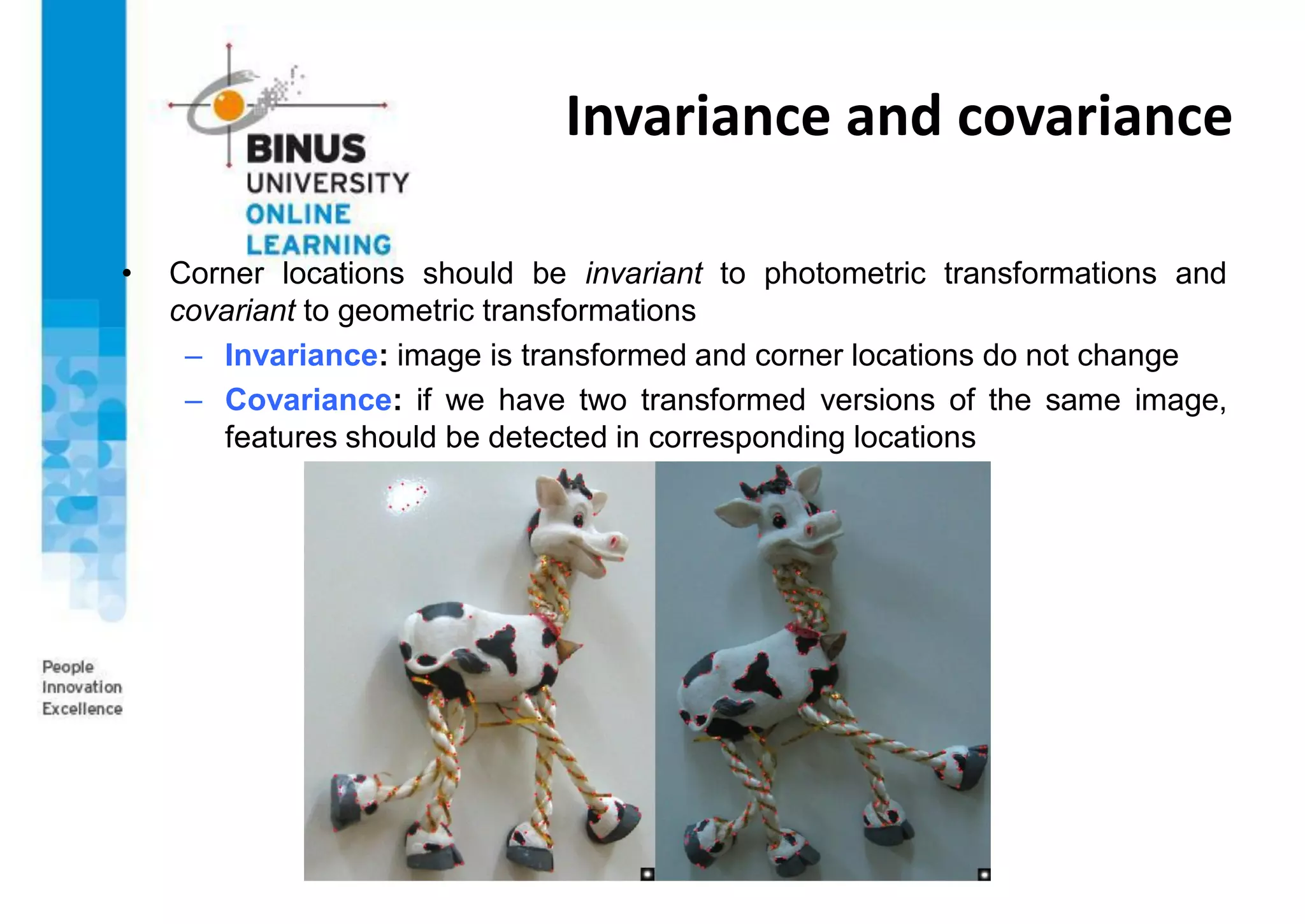

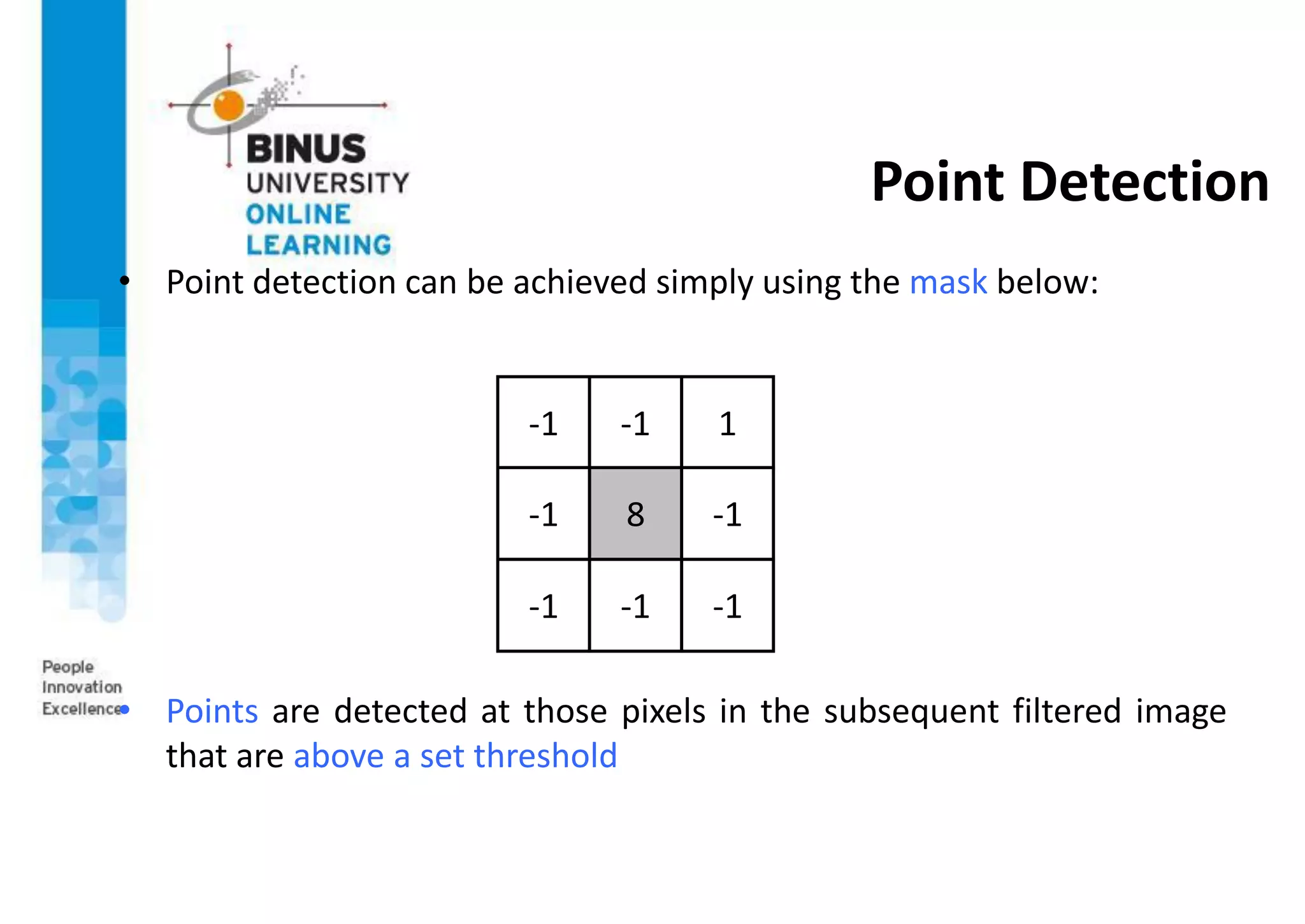

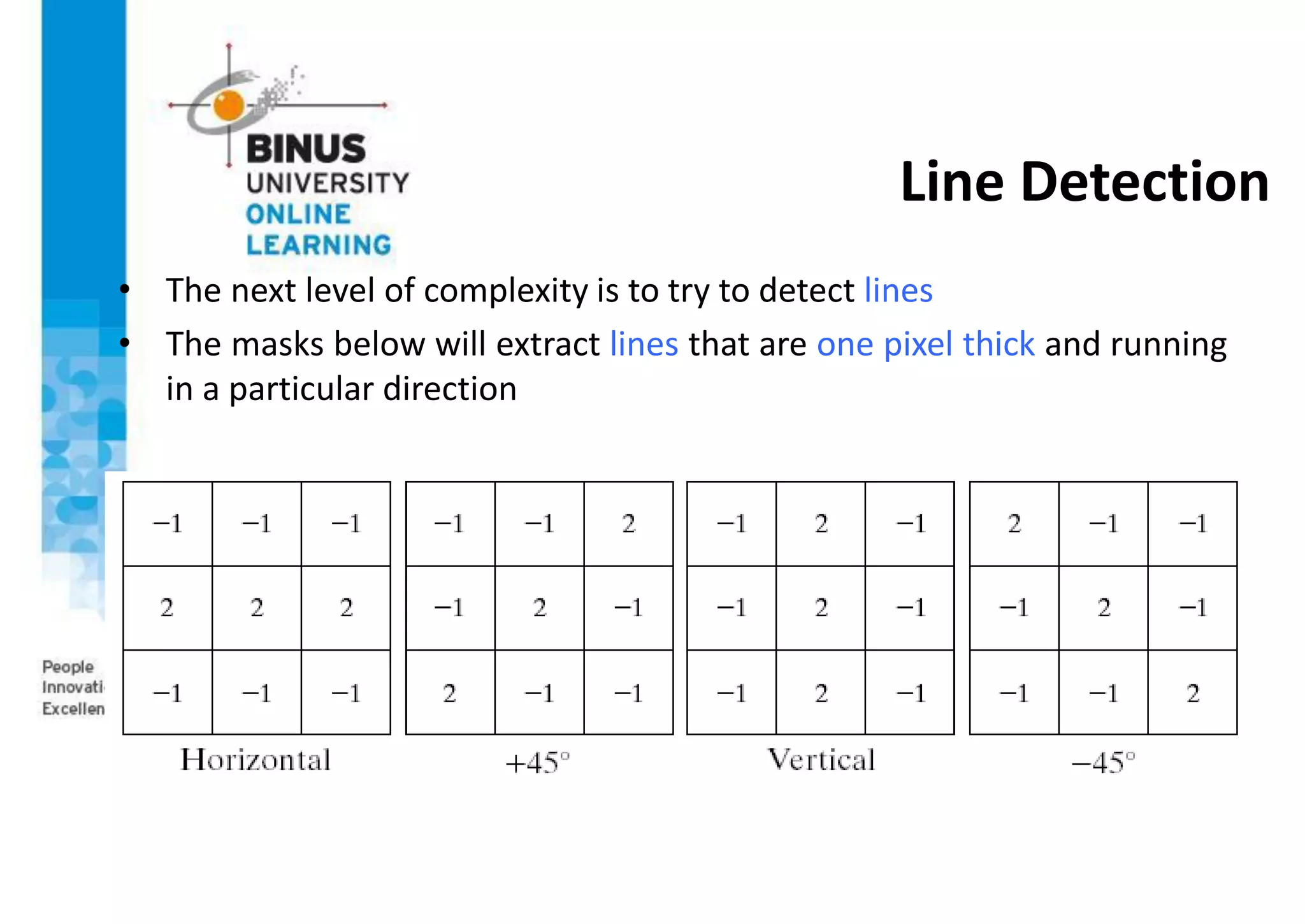

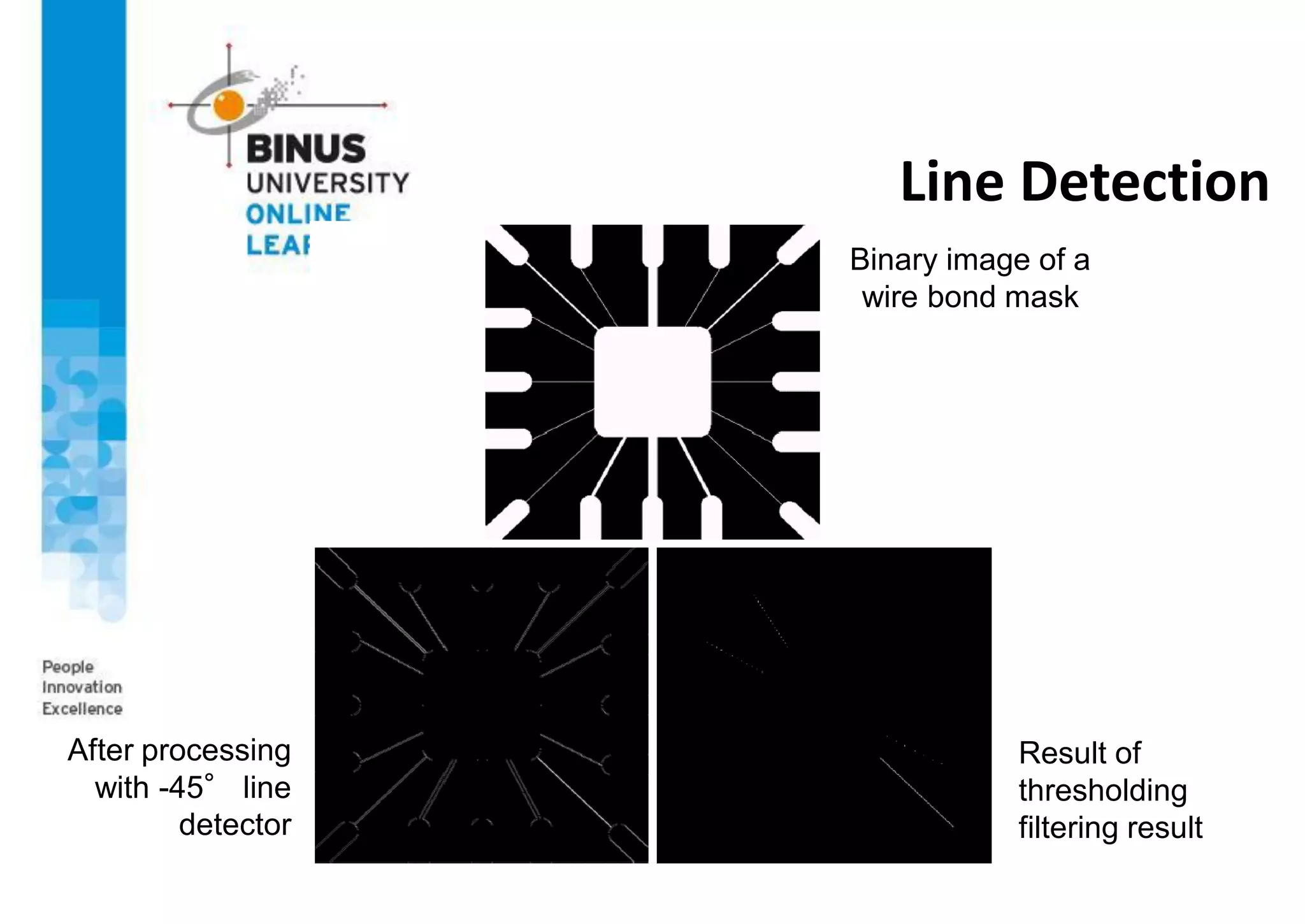

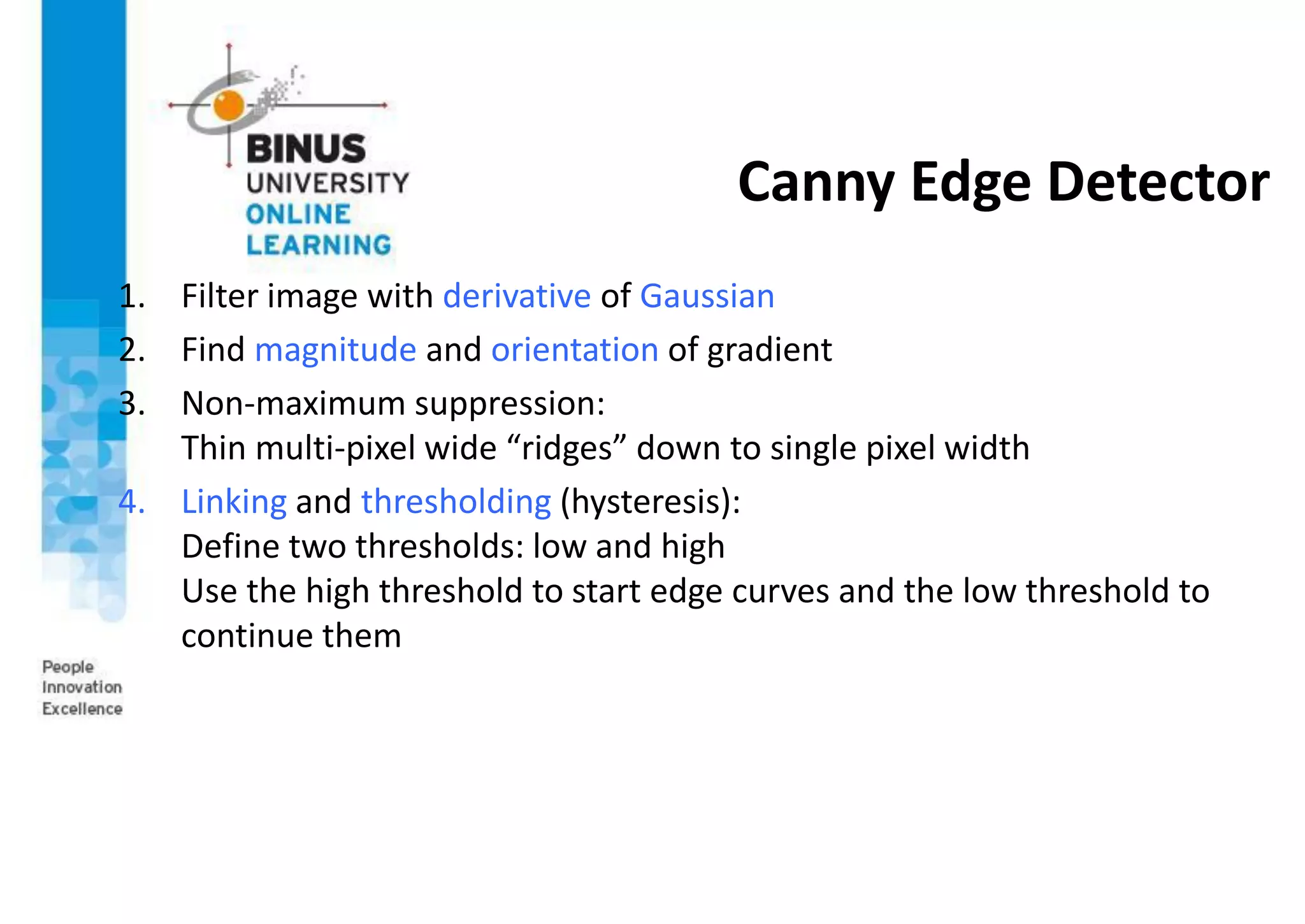

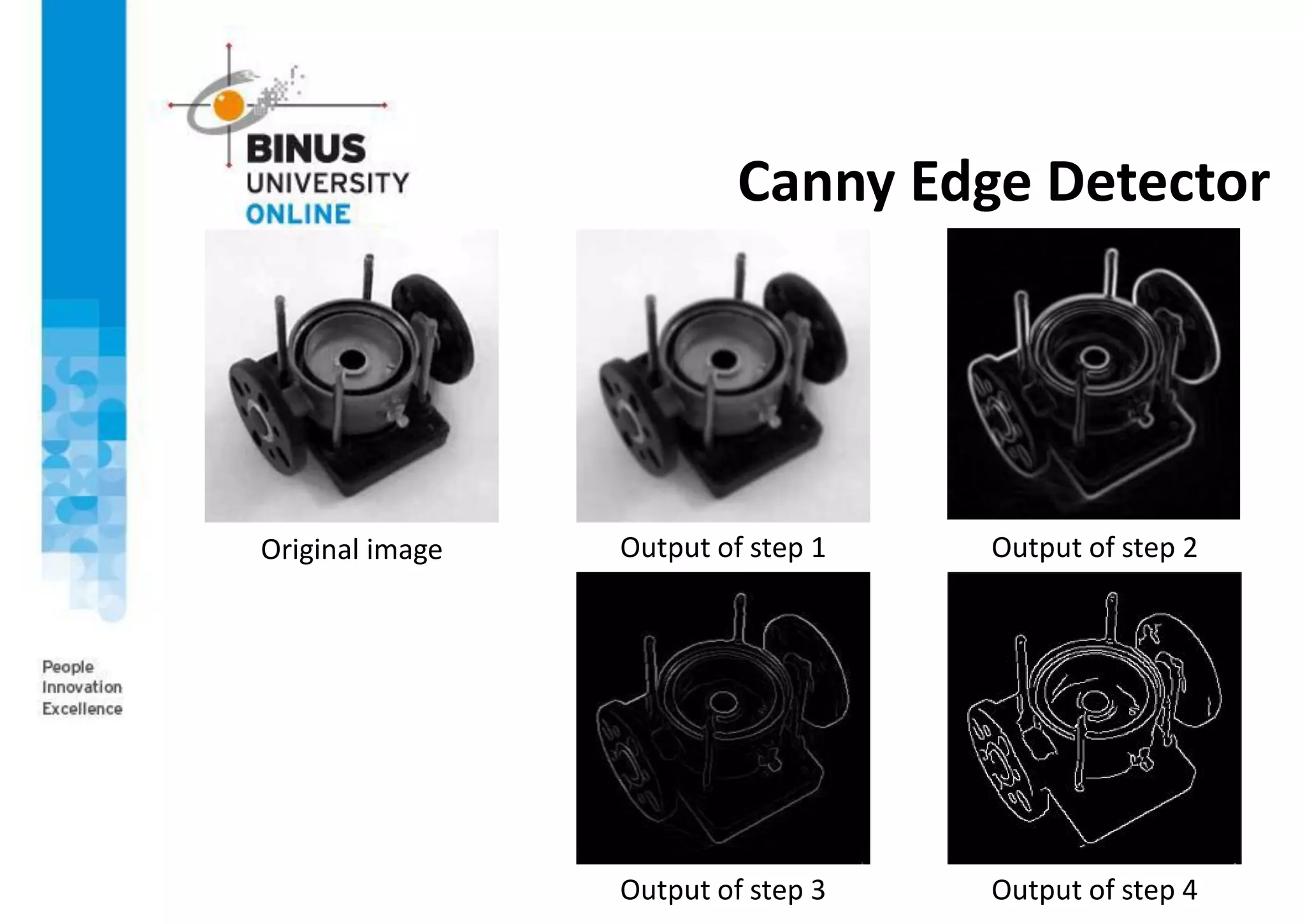

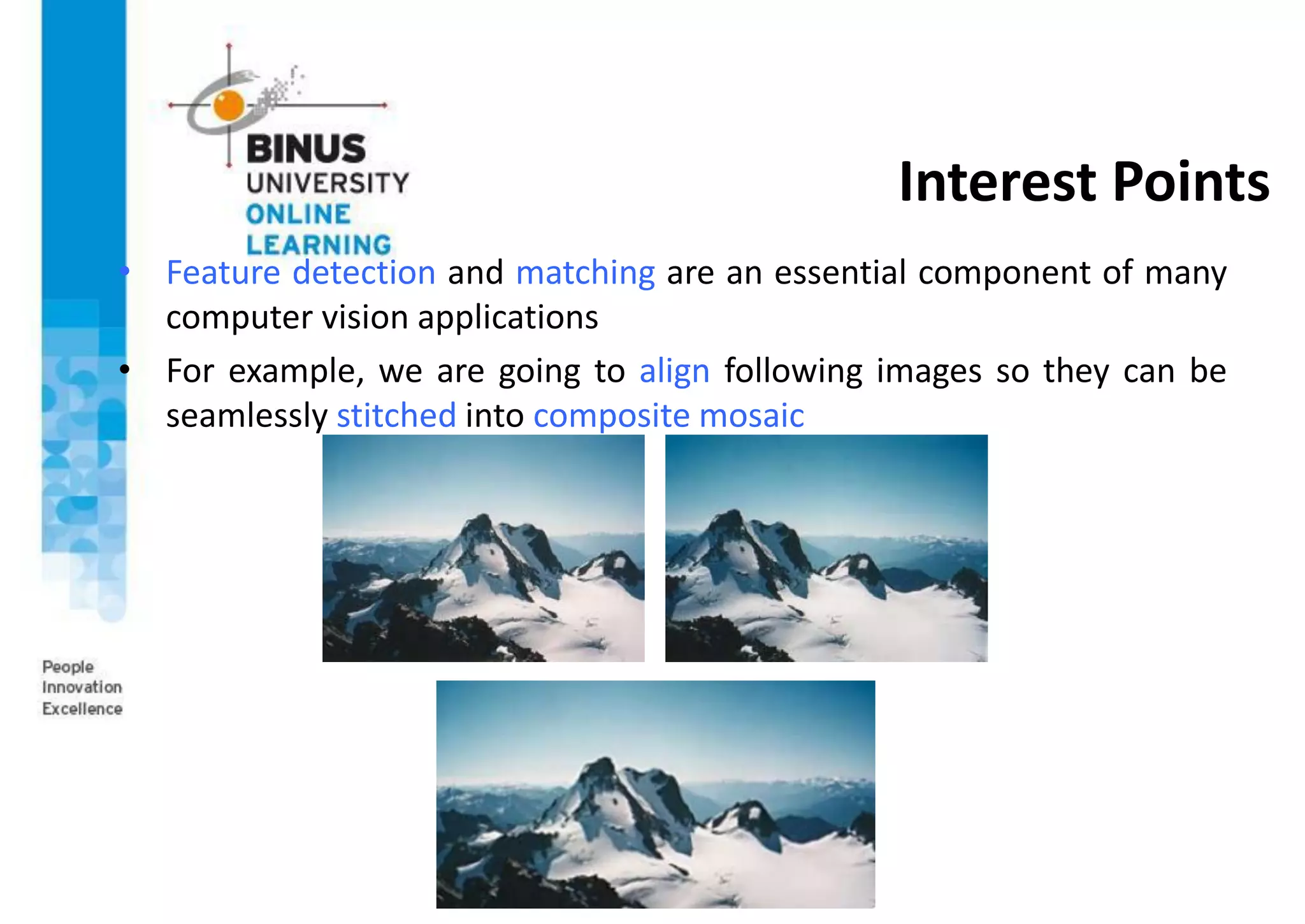

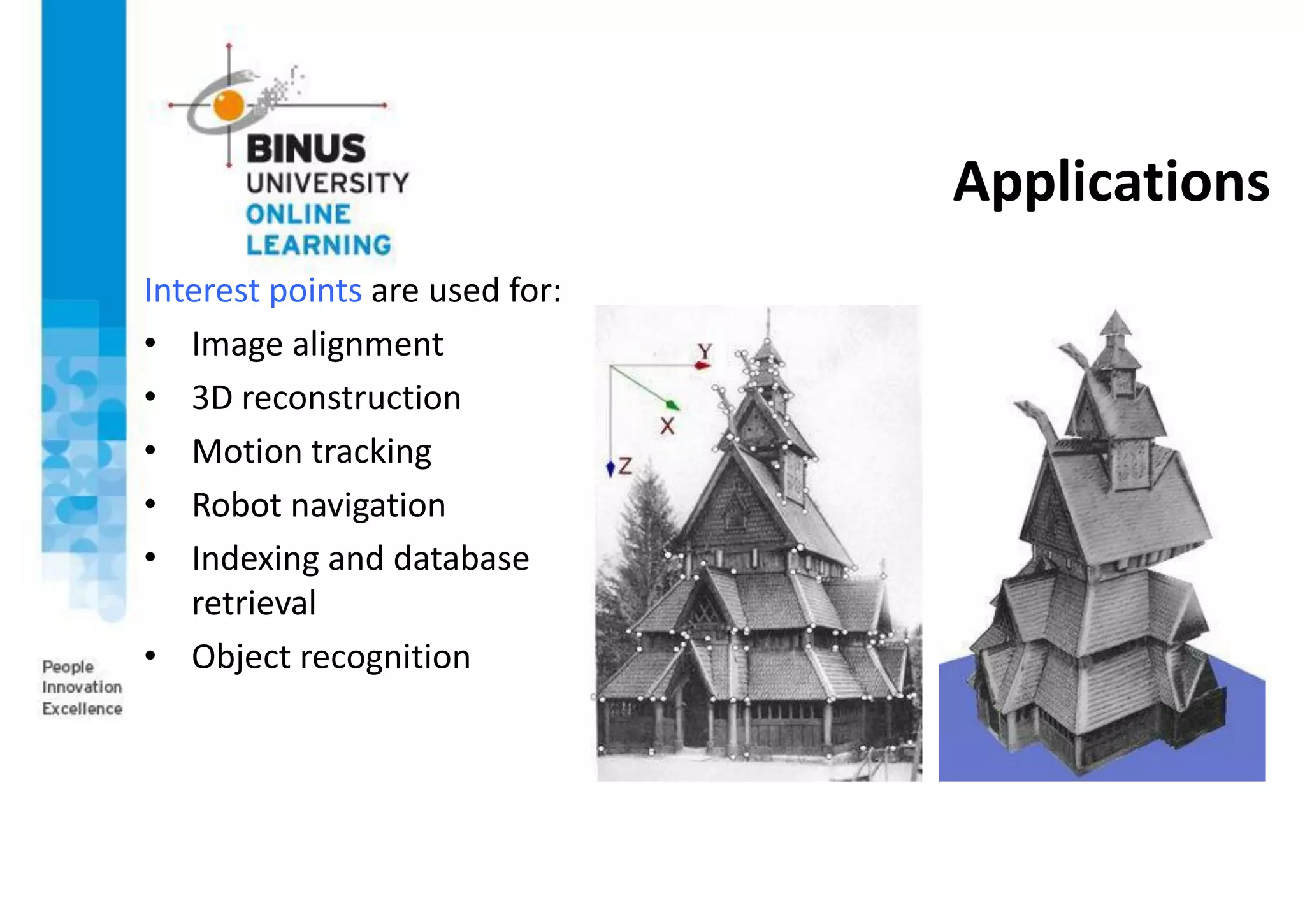

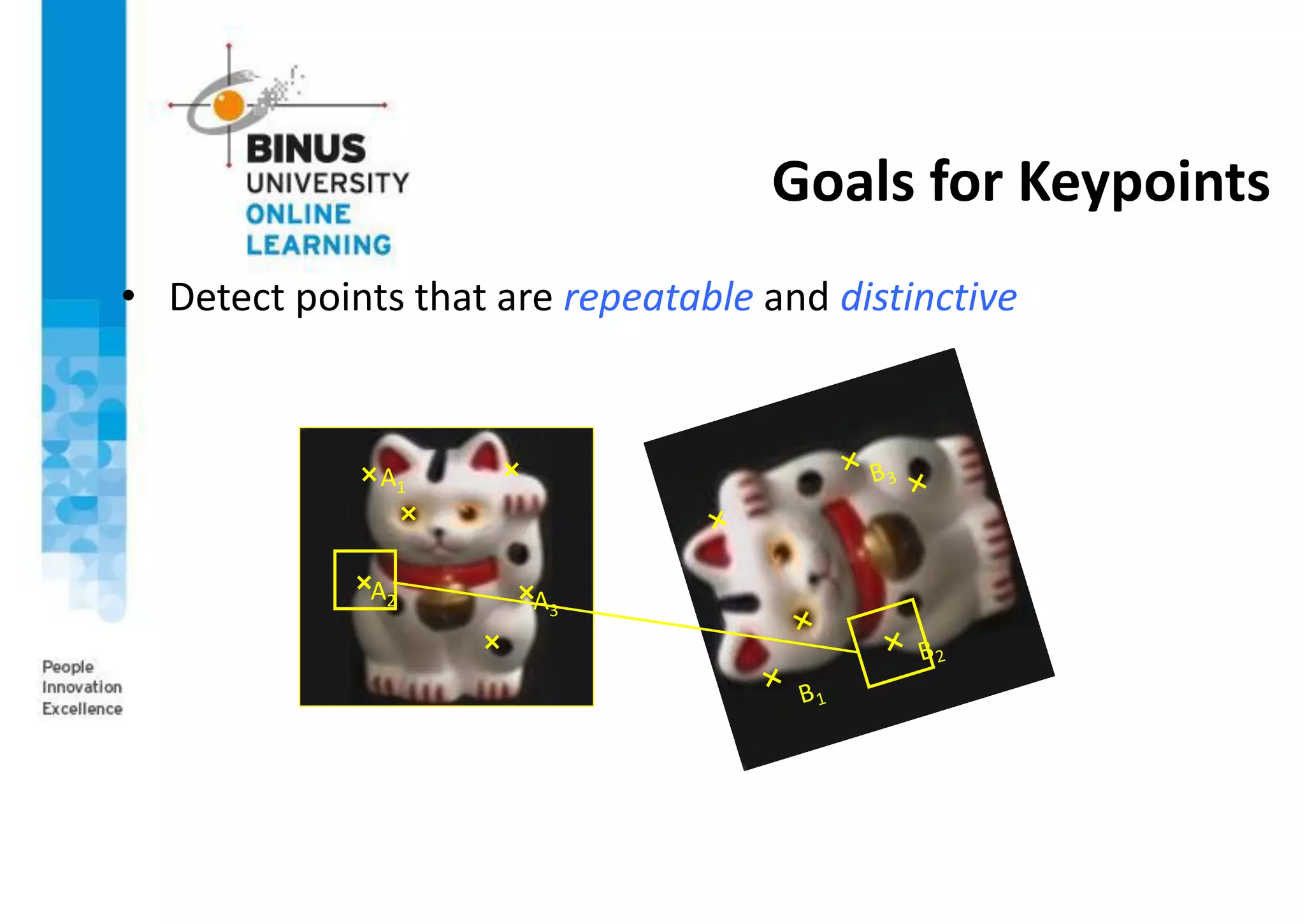

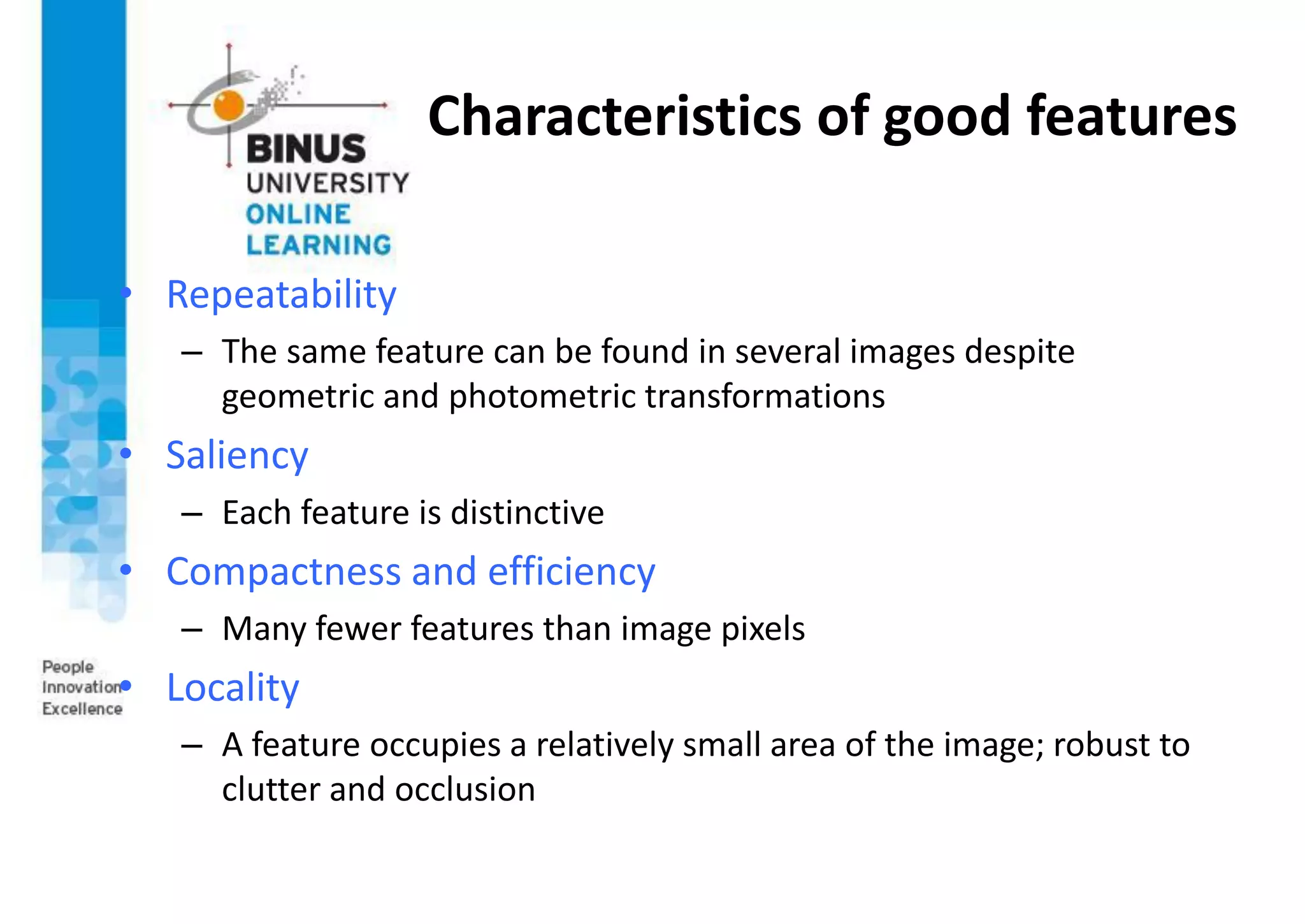

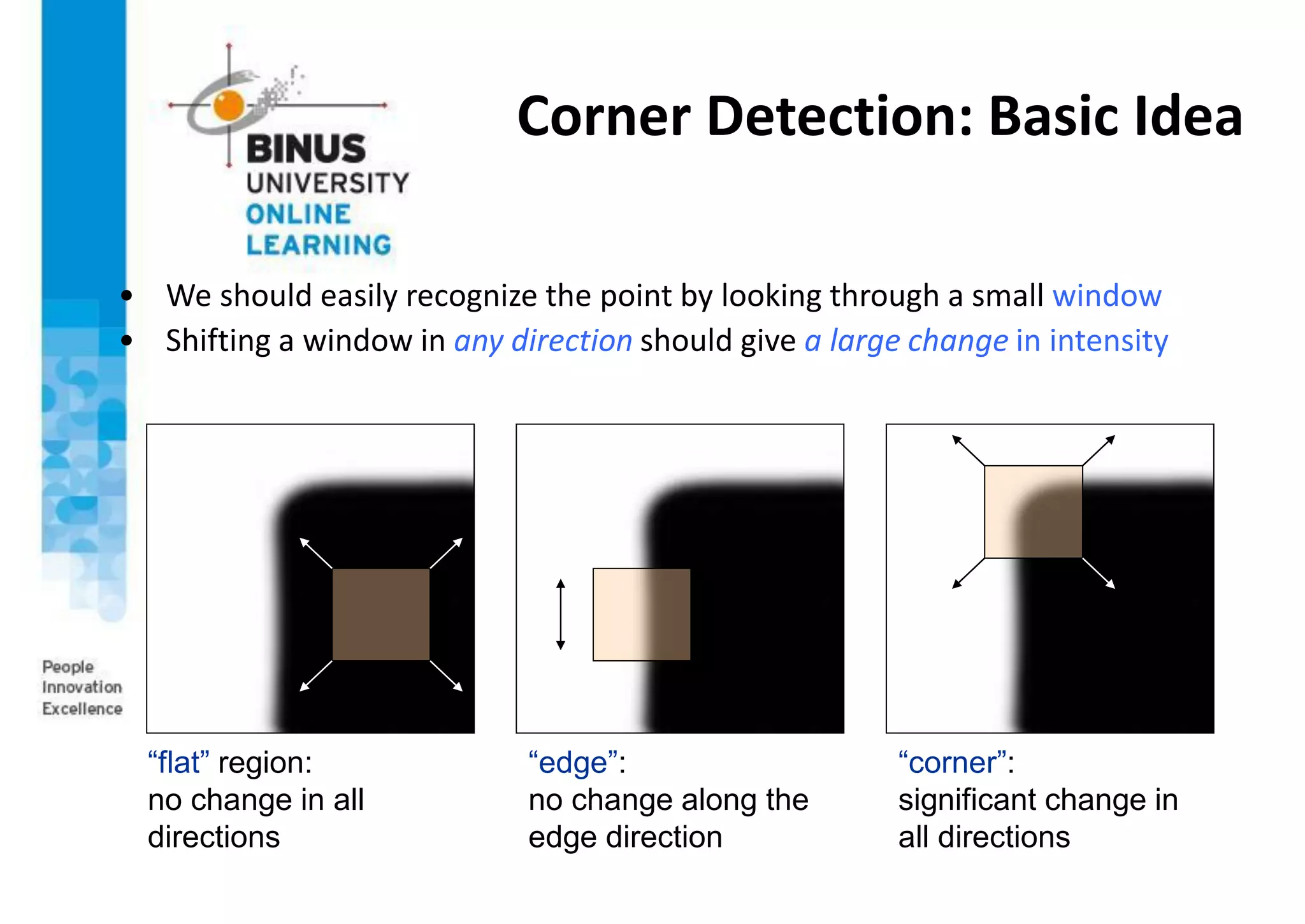

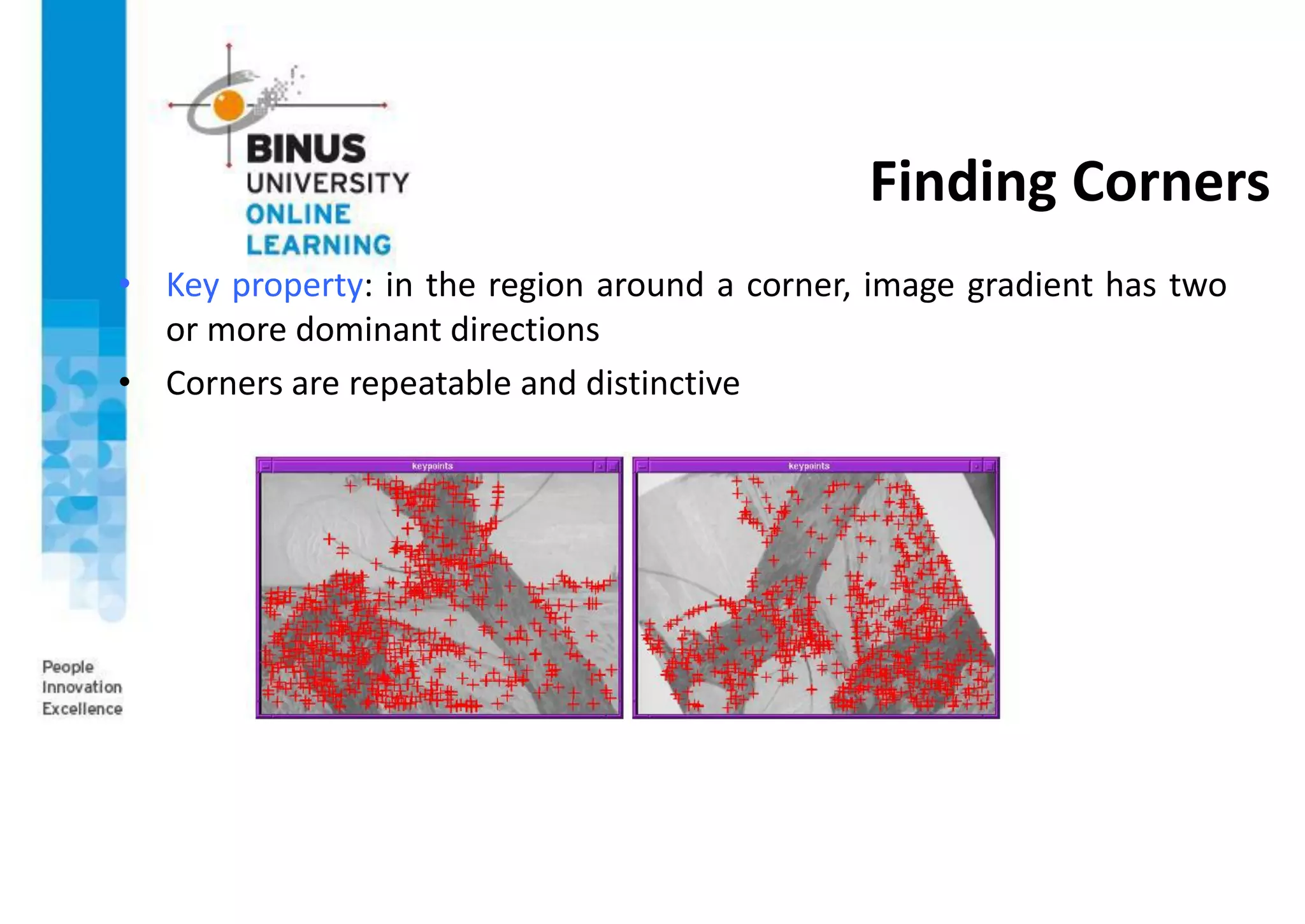

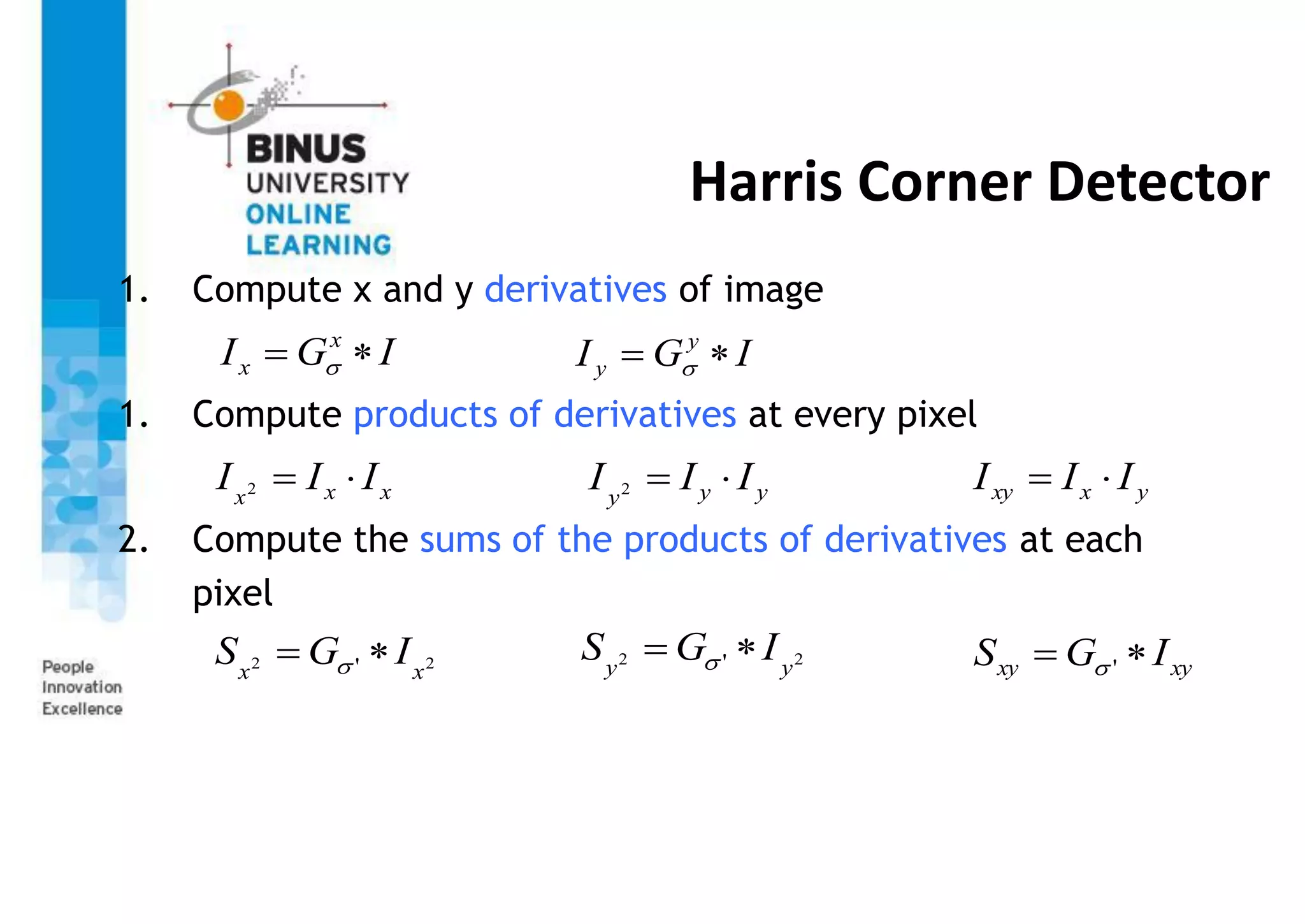

This document gives an overview of feature detection techniques in machine vision: edge detection, the Canny edge detector, interest points, and the Harris corner detector. Edge detection finds intensity discontinuities in an image by correlating the image with small masks. The Canny edge detector is presented as an optimal method that combines Gaussian smoothing with non-maximum suppression. Interest points are localized features useful for applications such as image alignment. The Harris corner detector computes image gradients and identifies corners as locations where the gradient has two dominant directions.
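As a rough illustration of edge detection by correlating an image with masks, the sketch below uses the 3x3 Sobel masks on a tiny synthetic image. The choice of Sobel masks, the helper names `correlate` and `edge_magnitude`, and the plain-list image representation are assumptions made for this example; the summary does not name a specific mask or implementation.

```python
# Sobel masks (an assumption: the summary does not name a specific mask).
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def correlate(image, mask):
    """Correlate a 2-D image (list of rows) with a 3x3 mask.

    Border pixels are left at 0 because the mask does not fit there.
    """
    h, w = len(image), len(image[0])
    out = [[0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            out[r][c] = sum(
                mask[i][j] * image[r + i - 1][c + j - 1]
                for i in range(3)
                for j in range(3)
            )
    return out


def edge_magnitude(image):
    """Gradient magnitude from the horizontal and vertical mask responses."""
    gx = correlate(image, SOBEL_X)
    gy = correlate(image, SOBEL_Y)
    return [[(a * a + b * b) ** 0.5 for a, b in zip(row_x, row_y)]
            for row_x, row_y in zip(gx, gy)]


# A 5x6 test image: dark left half (0), bright right half (10).
step = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
magnitude = edge_magnitude(step)
print(magnitude[2])  # non-zero only in the two columns next to the step
```

The response is zero in the flat regions and peaks at the columns adjacent to the intensity step, which is the discontinuity the masks are designed to pick out. A detector such as Canny would additionally smooth the image first and thin this two-pixel-wide response with non-maximum suppression.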

![Harris Corner Detector

4. Define the matrix at each pixel

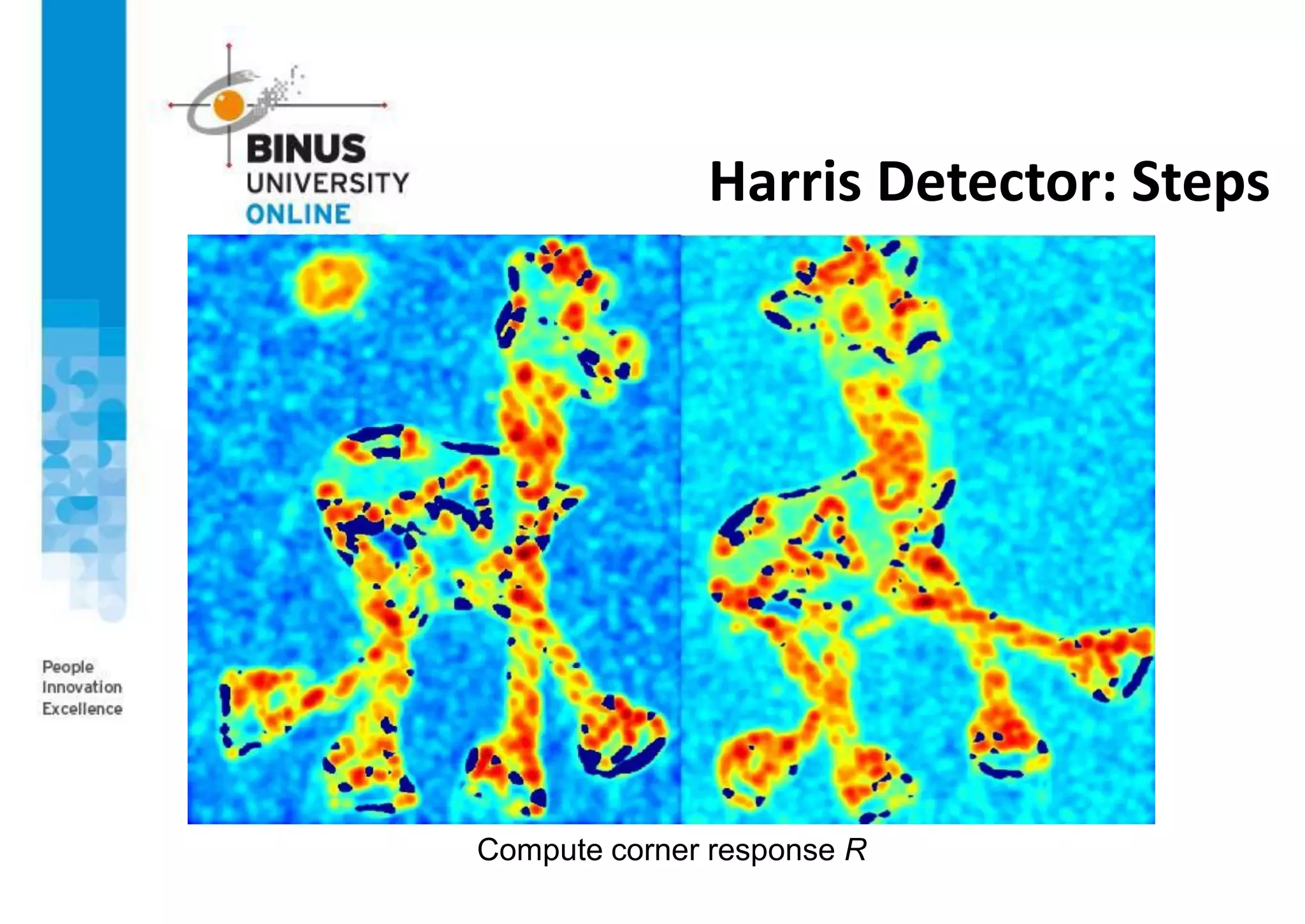

5. Compute the response of the detector at each pixel

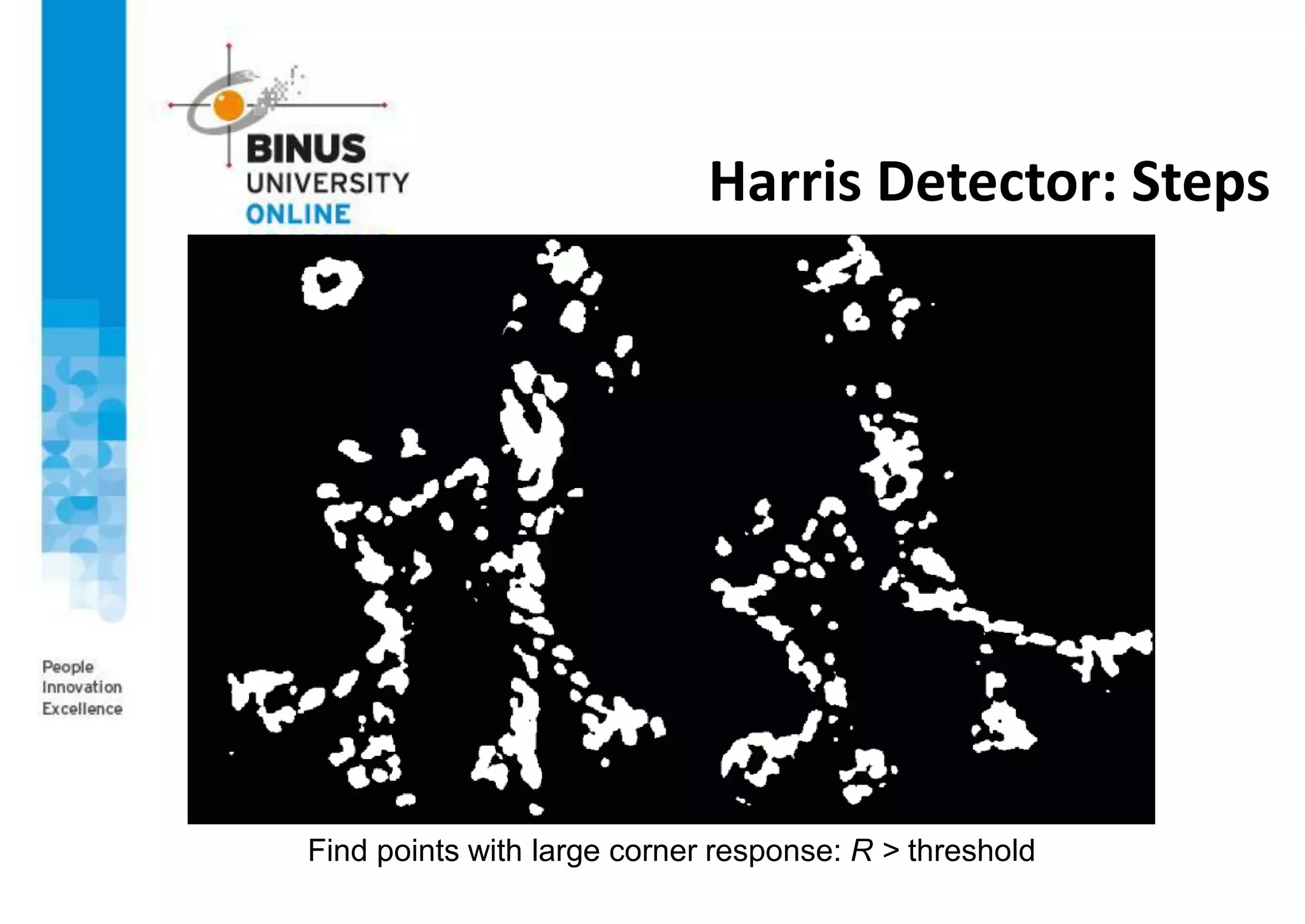

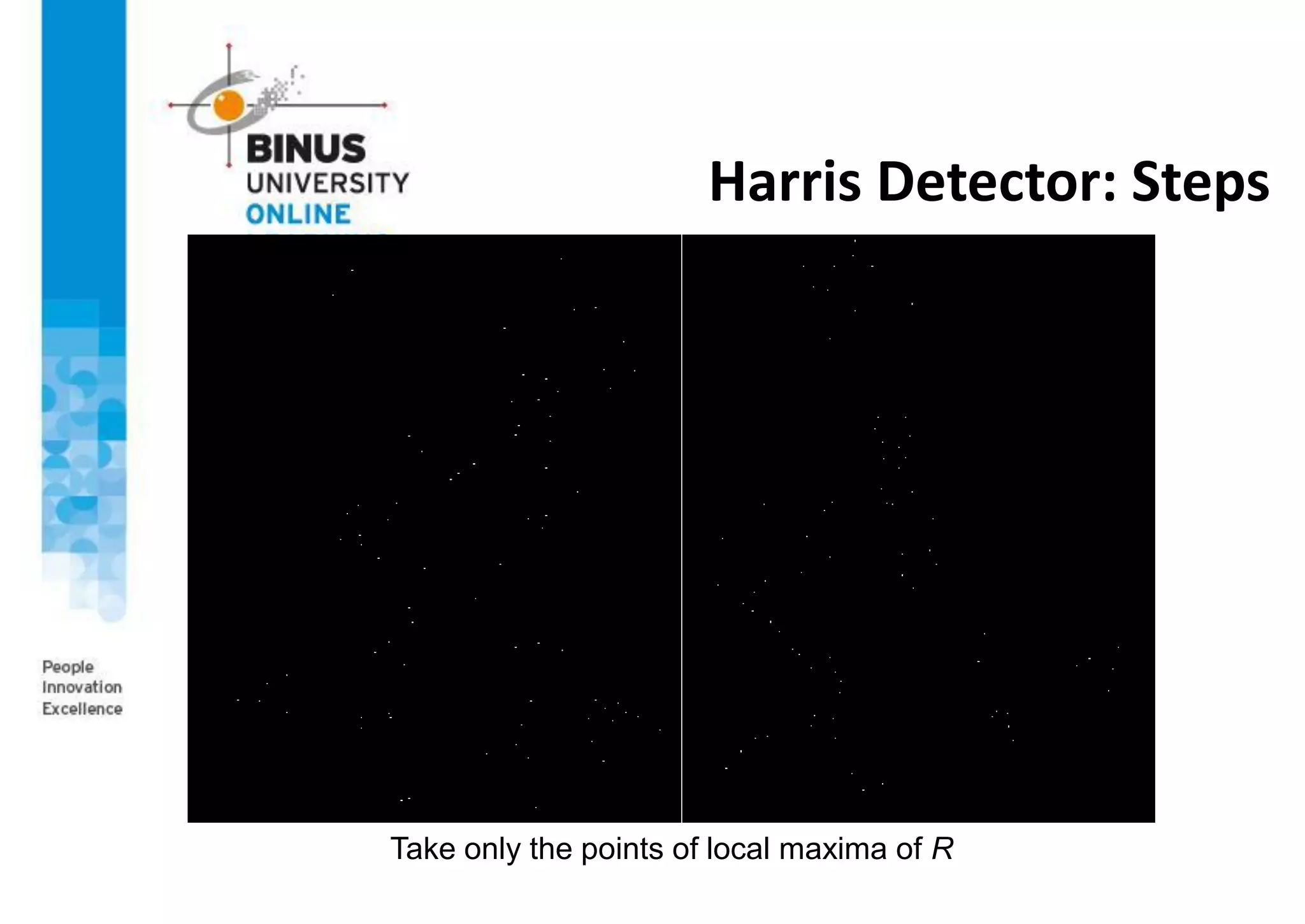

6. Threshold on value of R; compute non-max suppression

25-Jun-21 Image Processing and Multimedia Retrieval 29

)

,

(

)

,

(

)

,

(

)

,

(

)

,

(

2

2

y

x

S

y

x

S

y

x

S

y

x

S

y

x

M

y

xy

xy

x

2

trace

det M

k

M

R

= g(Ix

2

)g(Iy

2

)-[g(IxIy )]2

-a[g(Ix

2

)+g(Iy

2

)]2](https://image.slidesharecdn.com/ppt-s06-machinevision-s2-210626031036/75/PPT-s06-machine-vision-s2-29-2048.jpg)
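Steps 4 to 6 of the slide can be sketched in a few lines of dependency-free Python. This is a minimal illustration under stated assumptions, not the slide author's implementation: the gradients use central differences, a 3x3 box window stands in for the Gaussian weighting g, the constant k is set to 0.04, and the function names `harris_response` and `corners` as well as the threshold value are hypothetical.

```python
def harris_response(image, k=0.04):
    """Return R = det(M) - k * trace(M)^2 at each pixel.

    Assumptions not taken from the slide: central-difference gradients,
    a 3x3 box window in place of the Gaussian g, and k = 0.04.
    """
    h, w = len(image), len(image[0])
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            ix[r][c] = (image[r][c + 1] - image[r][c - 1]) / 2.0
            iy[r][c] = (image[r + 1][c] - image[r - 1][c]) / 2.0

    def window_sum(a, b, r, c):
        # Sum of a*b over the 3x3 neighbourhood of (r, c).
        return sum(a[r + i][c + j] * b[r + i][c + j]
                   for i in (-1, 0, 1) for j in (-1, 0, 1))

    resp = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            # Step 4: entries of the matrix M at this pixel.
            sxx = window_sum(ix, ix, r, c)
            syy = window_sum(iy, iy, r, c)
            sxy = window_sum(ix, iy, r, c)
            # Step 5: detector response.
            det = sxx * syy - sxy * sxy
            trace = sxx + syy
            resp[r][c] = det - k * trace * trace
    return resp


def corners(resp, threshold):
    """Step 6: keep pixels above threshold that are 3x3 local maxima."""
    h, w = len(resp), len(resp[0])
    found = []
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            v = resp[r][c]
            if v > threshold and all(
                v >= resp[r + i][c + j]
                for i in (-1, 0, 1) for j in (-1, 0, 1)
            ):
                found.append((r, c))
    return found


# 9x9 test image: a bright quadrant (10) whose corner sits at row 4, column 4.
img = [[10 if r >= 4 and c >= 4 else 0 for c in range(9)] for r in range(9)]
response = harris_response(img)
detected = corners(response, threshold=1000.0)
print(detected)  # [(4, 4)]
```

On this test image the response is positive only near the corner of the bright quadrant, negative along its straight edges (one dominant gradient direction), and zero in flat regions, so thresholding plus non-maximum suppression leaves a single detection.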